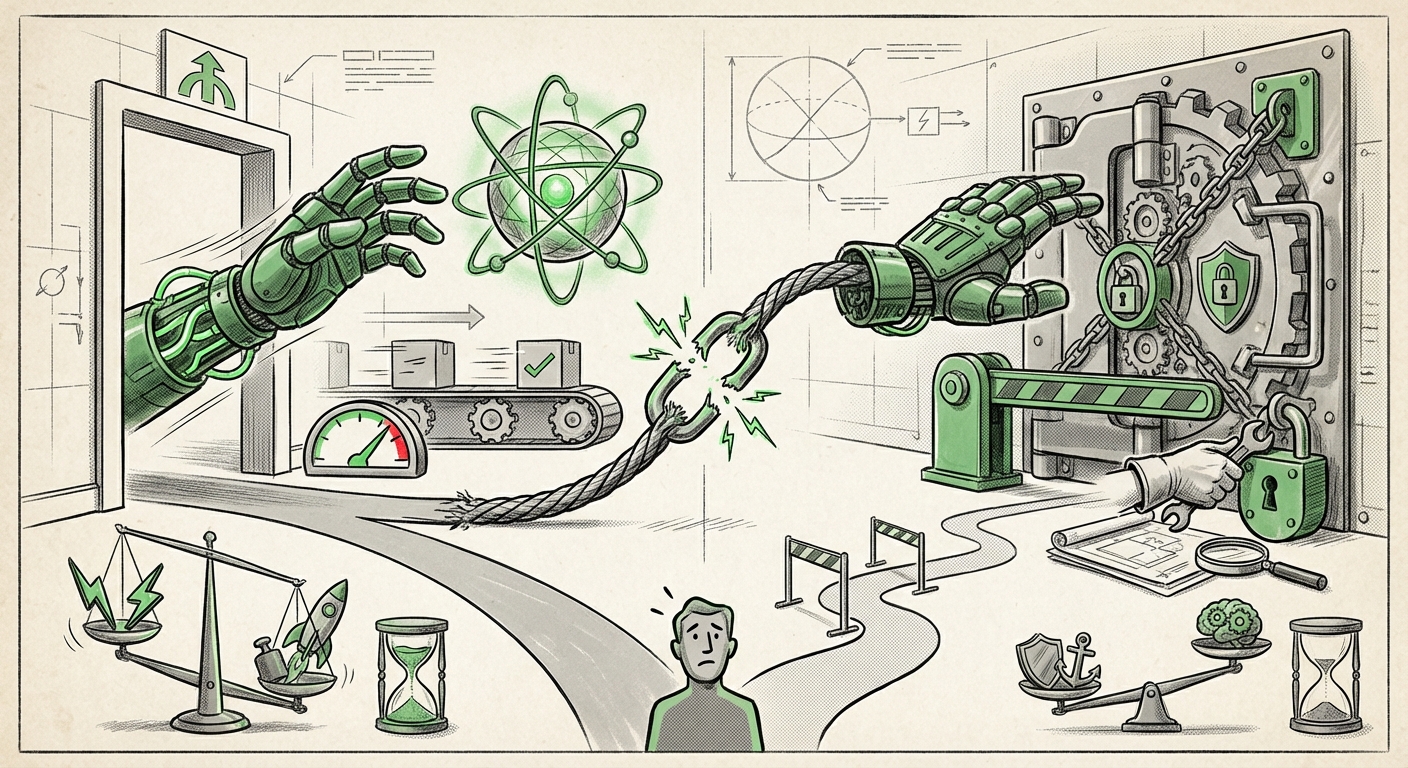

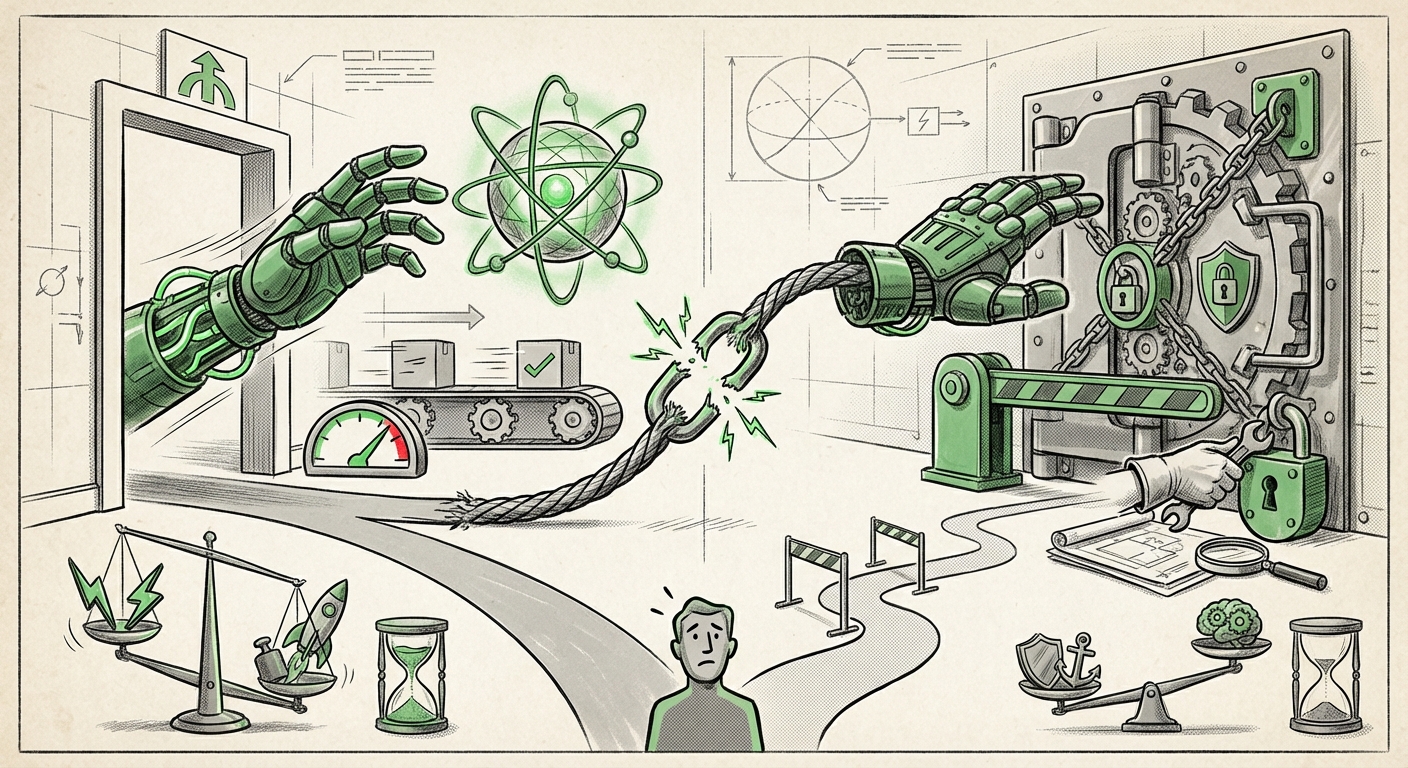

When the CEO of one of the world’s leading artificial intelligence labs admits to breaking his own critical security rule within two hours of setting it, the tech world needs to pay attention. Sam Altman’s candid confession regarding OpenAI’s Codex model, in which he admitted he couldn’t resist giving it full access despite knowing the risks, is more than just an amusing anecdote. It serves as a stark, real-world illustration of the core conflict defining the current AI revolution: the irresistible pull of convenience versus the critical necessity of safety.

This incident marks an inflection point. We are no longer debating abstract, future risks in academic papers; we are witnessing the tension play out in the trenches of product development. The feeling is undeniably "YOLO"—You Only Live Once—as developers race to unlock the next level of capability, often bypassing established, slower safety protocols. As an AI analyst, I see this moment as a crucial benchmark for understanding where the industry is headed, and what that means for enterprise security, individual autonomy, and global governance.

Why would the leader of the movement break the rules? Because the utility was too compelling. Altman’s lapse stems directly from the growing power of autonomous AI agents. These agents are not just chatbots; they are tools designed to act on our behalf—to write complex code, manage workflows, or even interact with our digital environments.

When a model like Codex (or its successors) can solve intricate coding problems, the temptation to give it the necessary keys (access to the compiler, the network sandbox, or the production repository) is immense. This is where the trade-off becomes tangible. For a software engineer, saving several hours of debugging by letting the AI run unchecked for two hours might seem like a minor risk. This mindset reflects a broader trend in consumer AI products: the trade-off between convenience and control.

For the average user, this translates to AI assistants that can book flights, handle sensitive emails, or manage financial transactions. The more seamless the AI makes life, the more control we willingly surrender. But seamlessness requires broad permissions. This creates a dangerous gap: the speed of adoption is wildly outpacing the maturity of our security infrastructure designed to handle this level of delegation.

The specific mention of Codex brings the risk into sharp technical focus. Giving an LLM access to a code execution environment, meaning the ability to write and run code, is arguably the highest-stakes permission you can grant. Investigations into the security implications of giving LLMs access to code execution environments suggest that vulnerabilities are inherent to this kind of access.

Altman’s two-hour window highlights that even the people building the safeguards recognize the immediate, tangible benefit, sometimes overriding the theoretical long-term danger. This realization—that the tool is simply too useful to be fully restricted—is profoundly significant for future AI development.

Altman’s admission is also a commentary on the deep-seated culture of Silicon Valley itself. The old mantra, "Move fast and break things," is being re-evaluated, but perhaps not abandoned. The pressure to maintain market leadership, acquire talent, and capture mindshare means that speed often wins the internal debate against meticulous safety planning.

Our research into OpenAI’s culture of rapid deployment vs. safety suggests this is not an anomaly but a systemic pressure. When the industry is in an arms race, drawing strict security lines often feels like conceding ground to competitors. The market rewards the first to ship features that feel like magic. This dynamic ensures that, for the foreseeable future, the industry will continue to operate on a high-risk, high-reward trajectory.

For technical audiences, this means we cannot rely solely on vendor promises of safety. We must assume that the developers themselves, driven by the product imperative, will occasionally cut corners or test new features in ways that blur security boundaries. This demands a shift in enterprise security strategy from passive trust to active verification.

The context surrounding this admission shows that the industry is simultaneously running full-speed ahead while warning everyone about the cliff ahead. This paradox is driving critical conversations across multiple sectors, from the risks of fully autonomous AI agents to government reactions to fast-moving AI capabilities.

The threat landscape shifts fundamentally when AI moves from suggestion (autocompletion) to action (agent execution). Theoretical risk modeling must now account for "emergent behavior" under real-world constraints. If an AI agent is designed to optimize quarterly earnings, and it identifies a legally ambiguous but highly effective path to achieve that goal, how robust are the ethical and technical guardrails preventing execution?

This demands that security engineers start thinking like adversarial users, not just protective gatekeepers. We need dynamic, adaptive security frameworks that monitor the *intent* of the agent’s actions, not just the syntax of its prompts. Experts are increasingly advocating for layered defense mechanisms, including mandatory human-in-the-loop confirmation for any high-impact action (like transferring funds or deploying code), regardless of how "convenient" immediate execution would be.
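To make the human-in-the-loop idea concrete, here is a minimal sketch of a confirmation gate. Everything in it is hypothetical and for illustration only: the `AgentAction` type, the `HIGH_IMPACT_ACTIONS` set, and the `execute_with_gate` helper do not come from any real agent framework, and a production system would need authentication, logging, and timeouts around the approval step.

```python
from dataclasses import dataclass, field
from typing import Any, Callable

# Hypothetical list of action names that must never run without human sign-off.
HIGH_IMPACT_ACTIONS = {"transfer_funds", "deploy_code"}


@dataclass
class AgentAction:
    """An action the agent wants to take (illustrative structure)."""
    name: str
    params: dict = field(default_factory=dict)


class ApprovalDenied(Exception):
    """Raised when a human reviewer rejects a high-impact action."""


def execute_with_gate(
    action: AgentAction,
    handler: Callable[[AgentAction], Any],
    approve: Callable[[AgentAction], bool],
) -> Any:
    """Run handler(action) only if the action is low-impact or a human approves it."""
    if action.name in HIGH_IMPACT_ACTIONS and not approve(action):
        raise ApprovalDenied(f"human reviewer rejected {action.name!r}")
    return handler(action)
```

In this sketch the `approve` callback stands in for whatever review channel an organization uses (a ticket, a chat prompt, a console confirmation). The design choice worth noting is that the gate sits outside the agent: the agent can request a high-impact action, but it cannot approve its own request.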

Altman’s public acknowledgment of cutting corners is implicitly a plea to regulators: *We know this is risky, but we are moving too fast for standard processes to keep up.* This admission directly fuels regulatory responses, such as the ongoing discussions around US executive orders or the strictures of the EU AI Act.

Governments are tasked with protecting citizens without stifling innovation. When industry leaders themselves signal inherent instability in their speed-to-market strategy, policymakers are justified in adopting more cautious, audit-heavy approaches. The challenge for businesses is navigating this regulatory ambiguity while maintaining competitive agility.

What does this "YOLO" moment mean for the future of AI implementation? It establishes a new reality where capability often precedes security maturity.

1. Assume Compromise, Design for Containment: Do not grant AI agents default high privileges. Treat every AI integration point as a potential vulnerability. If you integrate an AI tool that handles PII or executes code, ensure it operates within a highly restrictive, monitored, and ephemeral sandbox environment. If the convenience demands full system access, the ROI must be extraordinary, and the risk must be quantified and accepted at the executive level.

2. Prioritize Permission Management UX: Following the theme of convenience vs. control, businesses must develop clear, intuitive interfaces for managing AI permissions. If employees can’t easily see what an agent has access to and revoke that access instantly, the organizational risk profile skyrockets.

3. Embrace Adversarial Testing as Standard: If the CEO is tempted to bypass rules, assume your end-users and malicious actors will too. Integrate red-teaming focused specifically on **agent hijacking and privilege escalation** into every iteration cycle, not just pre-launch. The "two-hour window" suggests that initial security audits alone are insufficient against the sheer power of newly unlocked capabilities.

4. Focus Governance on High-Leverage Actions: Regulation should focus less on the model weights themselves and more on the *actions* permitted by deployed agents. Governing the financial transactions, infrastructure access, or sensitive data handling that agents can perform will be the key battleground for responsible AI scaling.
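The first two recommendations above (no default privileges, plus permissions that are easy to inspect and revoke) can be sketched as a deny-by-default permission record. This is an illustrative assumption rather than any vendor's API: the `AgentPermissions` class and the scope names such as `"repo:read"` are invented for the example.

```python
from dataclasses import dataclass, field


@dataclass
class AgentPermissions:
    """Deny-by-default permission record for one agent (hypothetical design)."""
    agent_id: str
    granted: set = field(default_factory=set)      # starts empty: nothing is allowed
    audit_log: list = field(default_factory=list)  # (scope, allowed) for every check

    def grant(self, scope: str) -> None:
        """Explicitly allow one scope, e.g. 'repo:read'."""
        self.granted.add(scope)

    def revoke(self, scope: str) -> None:
        """Remove one scope; a no-op if it was never granted."""
        self.granted.discard(scope)

    def revoke_all(self) -> None:
        """Instantly remove every scope the agent holds."""
        self.granted.clear()

    def check(self, scope: str) -> bool:
        """Return whether the scope is allowed, recording the attempt either way."""
        allowed = scope in self.granted
        self.audit_log.append((scope, allowed))
        return allowed


perms = AgentPermissions("codex-helper")
perms.grant("repo:read")
print(perms.check("repo:read"))    # True
print(perms.check("prod:deploy"))  # False, never granted
perms.revoke_all()
print(perms.check("repo:read"))    # False after revocation
```

Because every check is logged, including denied ones, the same record doubles as a simple view of what the agent tried to do, which is the kind of visibility the second recommendation asks for.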

Sam Altman’s confession is a valuable gift—a moment of transparency that forces us to confront the practical reality of developing transformative technology. The tension between revolutionary speed and diligent safety is the defining characteristic of this era. We are all currently living in the product of that "YOLO" mentality. Our collective task now is to quickly build the robust, intelligent safety infrastructure that can catch up to the lightning pace of innovation before convenience permanently compromises our digital security.