The artificial intelligence landscape is no longer defined just by chatbots or creative image generators. A quieter, yet arguably more transformative revolution is happening deep within the tools developers use every day. The recent launch of Mistral AI’s Vibe 2.0, a terminal-based coding agent powered by the specialized Devstral 2 model, is not just an incremental update; it is a definitive signal that AI is moving from the suggestion box to the command line—the nerve center of modern software creation.

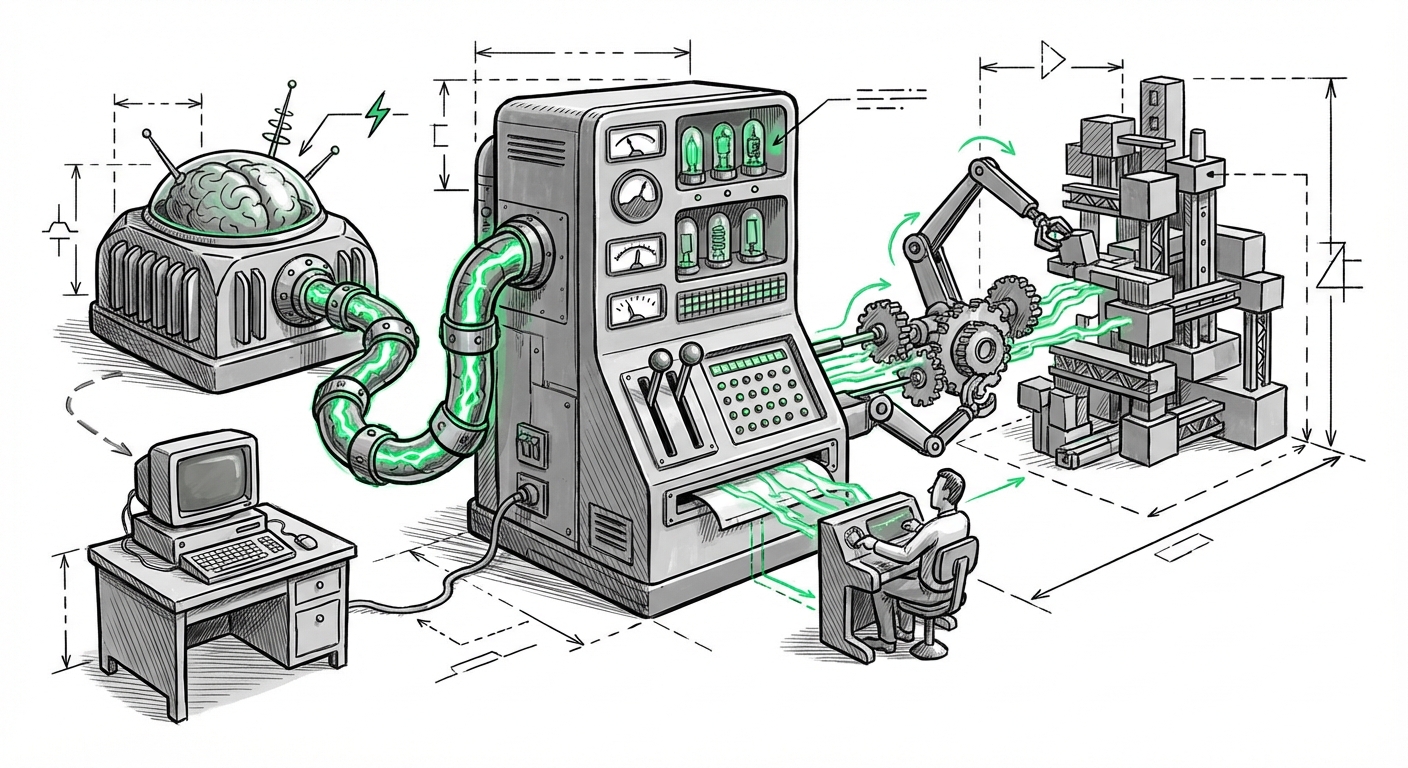

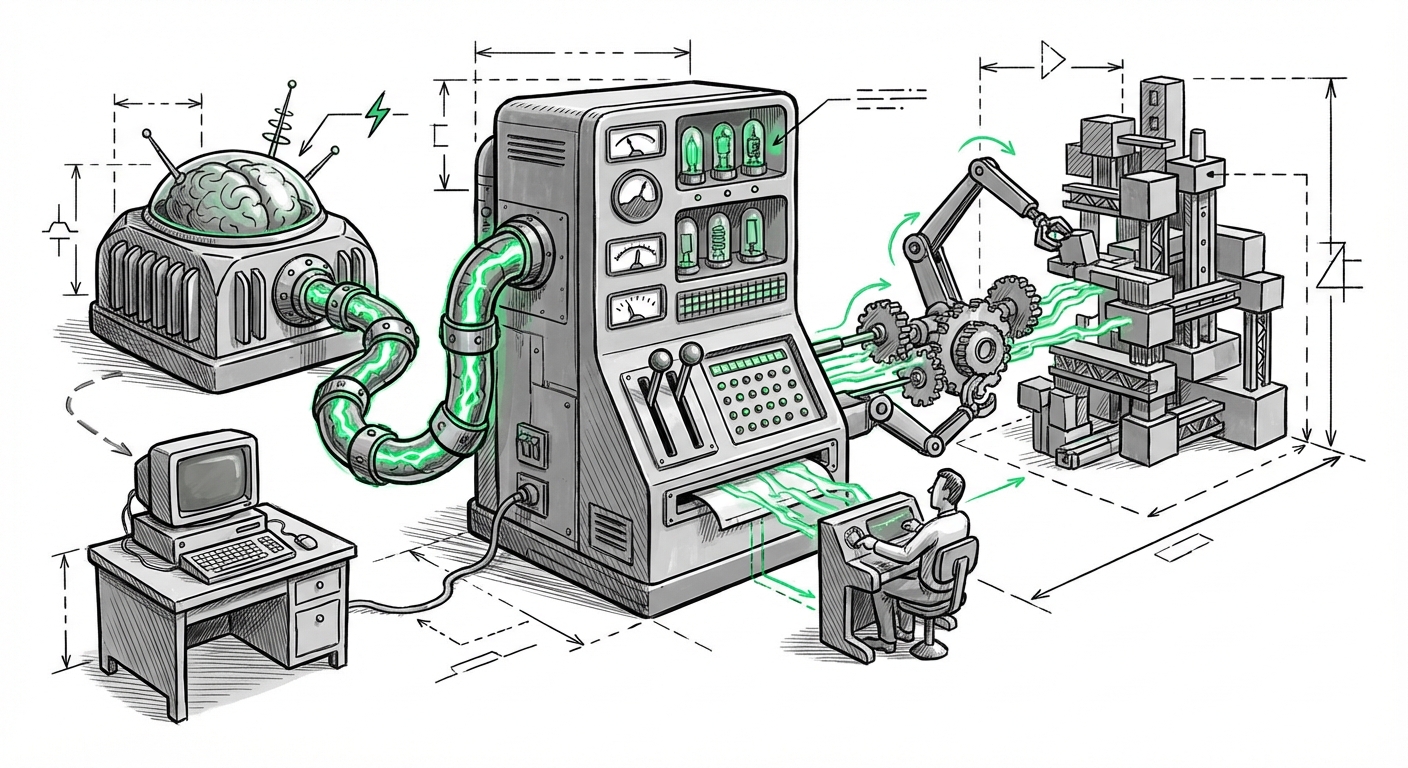

As an AI technology analyst, I see this development as a critical nexus point where large language models (LLMs) transition from being helpful plugins to essential, semi-autonomous collaborators. To fully grasp the impact of Vibe 2.0, we must analyze it through the lens of competitive trends, specialized model training, and the unique demands of the command-line interface (CLI).

For years, AI coding assistance was focused on the Integrated Development Environment (IDE)—autocomplete suggestions inside a code editor. While useful, this often required developers to switch context between coding and using external AI interfaces. Vibe 2.0 and its competitors represent a deliberate strategic pivot: embedding intelligence directly where developers execute operations, manage infrastructure, and debug live systems.

This shift is not accidental. Developers spend countless hours in the terminal—the text-based interface for running commands, managing versions, and deploying applications. Giving an AI direct, context-aware access to this environment means handing over the ability to execute complex, multi-step workflows. We are moving from AI that *writes* code to AI that *acts* on code.

Mistral is entering a space that is heating up rapidly. The success of Vibe 2.0 will depend on how it stacks up against existing tools focused on CLI productivity. Tools like **Warp** (with its AI features) and advanced integrations within established platforms are setting a high bar for seamless terminal interaction. If Vibe 2.0 can offer better command generation or error diagnosis than these established players, it validates the "terminal first" strategy. (See the analysis "How Warp, Fig, and Now Mistral are Reimagining the Command Line Experience" for market context.)

For Tech Strategists, this means understanding that owning the developer's core interface—whether IDE or terminal—is the new battlefield for platform dominance. The winning tools will be those that reduce context switching to near zero.

The power behind Vibe 2.0 is the Devstral 2 model. When we talk about specialized LLMs, we are moving away from generalists trained on the whole internet toward experts trained specifically on code, documentation, and system logs. This specialization is crucial for accuracy, especially in high-stakes environments like the terminal.

General models like GPT-4 are brilliant, but specialized models like Devstral 2 are tuned for different outcomes. We need to understand what Devstral 2 was trained on: was the data focused on security vulnerabilities, idiomatic Python, or Linux shell scripting? If Devstral 2 has been rigorously trained on cleaner, validated codebases, its output is likely to be safer and more reliable when it is asked to execute commands that modify system state.

For Deep Learning Engineers and Researchers, the key question is architectural: Does Devstral 2 use the Mixture-of-Experts (MoE) structures common in Mistral’s lineup, and how has that been optimized for code sequences versus natural language? Superior code-native reasoning, even if general intelligence scores remain similar to competitors, translates directly into higher developer satisfaction. (Research such as "Decoding Devstral: An Early Look at Mistral’s New Coding-Native Architecture" should reveal these technical nuances.)

Furthermore, the **Benchmarking Landscape** is vital. Are specialized models beginning to plateau, or is Devstral 2 showing gains on standardized tests like HumanEval that prove its specialized training pays off? If it outperforms general models in logical problem-solving specific to coding tasks, it justifies the investment in proprietary, specialized models.
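To make the benchmarking question concrete: HumanEval results are usually reported as pass@k, the probability that at least one of k sampled solutions passes the unit tests. The sketch below implements the standard unbiased estimator for a single problem. The function name and the sample numbers are illustrative assumptions, not figures published for Devstral 2.

```python
from math import comb


def pass_at_k(n: int, c: int, k: int) -> float:
    """Unbiased pass@k estimate for one problem.

    n: total samples generated for the problem
    c: number of those samples that passed the tests
    k: sample budget being evaluated (1 <= k <= n)
    """
    if not 0 <= c <= n:
        raise ValueError("c must be between 0 and n")
    if not 1 <= k <= n:
        raise ValueError("k must be between 1 and n")
    # 1 - (probability that k samples drawn without replacement all fail)
    return 1.0 - comb(n - c, k) / comb(n, k)


# Hypothetical numbers: 10 samples generated, 3 of them passed.
estimate = pass_at_k(n=10, c=3, k=1)
```

A benchmark score is the mean of this estimate across all problems, so comparing a specialized model against a generalist only makes sense when both are run with the same n, k, and sampling settings.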

The most profound implication of Vibe 2.0 lies in its nature as an *agent*. An agent is more than a sophisticated search engine; it can observe, plan, act, and iterate. When an AI operates within the CLI, these actions directly affect the machine.
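The observe, plan, act, iterate cycle can be sketched as a small loop. This is a toy illustration under stated assumptions, not how Vibe 2.0 is implemented: `run_agent`, `toy_plan`, and `toy_act` are hypothetical names, and the planner here is a fixed script standing in for a model call.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class AgentState:
    goal: str
    # Each entry is (action taken, observation returned by the environment).
    history: list = field(default_factory=list)


def run_agent(
    goal: str,
    plan: Callable[[AgentState, str], Optional[str]],
    act: Callable[[str], str],
    max_steps: int = 5,
) -> AgentState:
    """Loop: plan the next action from the latest observation, act, observe, repeat."""
    state = AgentState(goal)
    observation = ""
    for _ in range(max_steps):  # hard cap so the loop always terminates
        action = plan(state, observation)
        if action is None:  # the planner signals that the goal is reached
            break
        observation = act(action)
        state.history.append((action, observation))
    return state


# Hypothetical stand-ins: a scripted planner and an executor that only echoes.
def toy_plan(state: AgentState, observation: str) -> Optional[str]:
    steps = ["run tests", "fix failing test", "run tests"]
    done = len(state.history)
    return steps[done] if done < len(steps) else None


def toy_act(action: str) -> str:
    return f"done: {action}"


result = run_agent("make the test suite pass", toy_plan, toy_act)
```

The `max_steps` cap is the simplest form of control over an agent: whatever the planner decides, the loop cannot run indefinitely.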

Unlike editing a file in an IDE, issuing a wrong `rm -rf` command in the terminal can wipe out hours of work or compromise a production environment instantly. This interface demands near-perfect, low-latency performance. For DevOps Engineers and Security Analysts, the introduction of powerful agents in the CLI raises immediate red flags around autonomy and access.

This focus on the CLI drives the need for deep context understanding. The AI needs to know the current directory, the active Git branch, and the state of the running containers, which is context that general assistants often miss. The trend toward autonomous agents signifies that developers are ready to delegate tactical execution, provided the safety rails are robust. (Discussions such as "From Autocomplete to Autonomous Agents: The Next Frontier for Developer Productivity" highlight this critical tension.)
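As a rough illustration of both ideas, the sketch below gathers a small context snapshot (working directory and Git branch) and flags one obviously destructive command shape before it would run. Everything here is an assumption made for illustration: the function names are hypothetical, container state is left out, and the check is deliberately narrow. It does not describe how Vibe 2.0 handles either problem.

```python
import os
import shlex
import subprocess
from typing import Optional


def current_git_branch(cwd: str = ".") -> Optional[str]:
    """Return the active branch name, or None outside a repo or without git."""
    try:
        result = subprocess.run(
            ["git", "rev-parse", "--abbrev-ref", "HEAD"],
            cwd=cwd, capture_output=True, text=True, timeout=5,
        )
    except (OSError, subprocess.TimeoutExpired):
        return None
    return result.stdout.strip() if result.returncode == 0 else None


def collect_context() -> dict:
    """Snapshot the context an agent would need before proposing a command."""
    return {"cwd": os.getcwd(), "git_branch": current_git_branch()}


def needs_confirmation(command: str) -> bool:
    """Flag `rm` invocations that combine recursive and force flags.

    This catches one pattern only; a real safety rail would need an allowlist,
    sandboxing, or human review rather than a single check.
    """
    try:
        tokens = shlex.split(command)
    except ValueError:
        return True  # unparsable input is treated as risky
    if "rm" not in tokens:
        return False
    short_flags = "".join(
        t[1:] for t in tokens if t.startswith("-") and not t.startswith("--")
    )
    recursive = "r" in short_flags or "R" in short_flags or "--recursive" in tokens
    force = "f" in short_flags or "--force" in tokens
    return recursive and force
```

A caller would run `collect_context()` before planning and route any command for which `needs_confirmation()` returns true to a human instead of executing it directly.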

The arrival of highly capable, specialized agents in the developer workflow provides clear takeaways for organizations managing technical teams.

If Vibe 2.0 can expertly generate boilerplate, debug obscure errors, and craft complex shell scripts, the traditional pathway for junior developers—learning by trial-and-error in the terminal—is fundamentally altered. Businesses must shift training focus toward prompt engineering for complex task delegation, system architecture review, and, crucially, AI output verification. The new junior developer must be an excellent AI auditor.

For Enterprise Architects, the decision is not *if* to adopt these tools, but *which ones* and *how to secure them*. Relying on publicly available models for tasks involving proprietary code or cloud infrastructure management is a risk. The trend points toward enterprises either demanding private, secure deployments of models like Devstral 2 or heavily vetting specialized, audited solutions.

The ROI here is clear: massive acceleration in the tedious, often frustrating parts of development. For every hour saved debugging a deployment script or writing routine database migration code, developers gain time for creative problem-solving. Businesses need to track metrics beyond mere code output; they should track **time-to-deployment** and **bug density** in AI-assisted projects.
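The article does not define either metric, so the sketch below takes one common reading as an assumption: time-to-deployment as the mean interval between a commit and its deployment, and bug density as reported bugs per thousand lines of code. The function names and sample values are hypothetical.

```python
from datetime import datetime, timedelta


def mean_time_to_deployment(pairs: list) -> timedelta:
    """Average interval across (committed_at, deployed_at) datetime pairs."""
    if not pairs:
        raise ValueError("at least one (committed_at, deployed_at) pair is required")
    total = sum(
        (deployed - committed for committed, deployed in pairs), timedelta()
    )
    return total / len(pairs)


def bug_density(bug_count: int, lines_of_code: int) -> float:
    """Reported bugs per 1,000 lines of code."""
    if lines_of_code <= 0:
        raise ValueError("lines_of_code must be positive")
    return bug_count / (lines_of_code / 1000)


# Hypothetical sample data for one AI-assisted project.
start = datetime(2024, 1, 1, 9, 0)
deployments = [
    (start, start + timedelta(hours=2)),
    (start, start + timedelta(hours=4)),
]
average_lead_time = mean_time_to_deployment(deployments)
density = bug_density(bug_count=12, lines_of_code=8000)
```

Tracking both figures for AI-assisted and non-assisted projects over the same period gives a like-for-like comparison, which raw code output cannot provide.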

Mistral AI’s Vibe 2.0, powered by Devstral 2, is a loud declaration that the AI race is now entering its phase of hyper-specialization and deep workflow integration. We are moving past the "wow factor" of generative AI toward practical, high-leverage productivity tools built for specific, demanding contexts like the command line.

This development forces every technology organization to confront a dual reality: incredible productivity gains are within reach, but they come tethered to new vectors of risk related to autonomy and access. The future of software engineering won't be about coders vs. AI; it will be about the organizations that master collaboration with specialized, agentic tools like Vibe 2.0, leveraging them to solve bigger problems faster, while rigorously controlling the execution surface area they are granted.