The Great Shift: How AI is Freeing Leaders for High-Impact Strategy

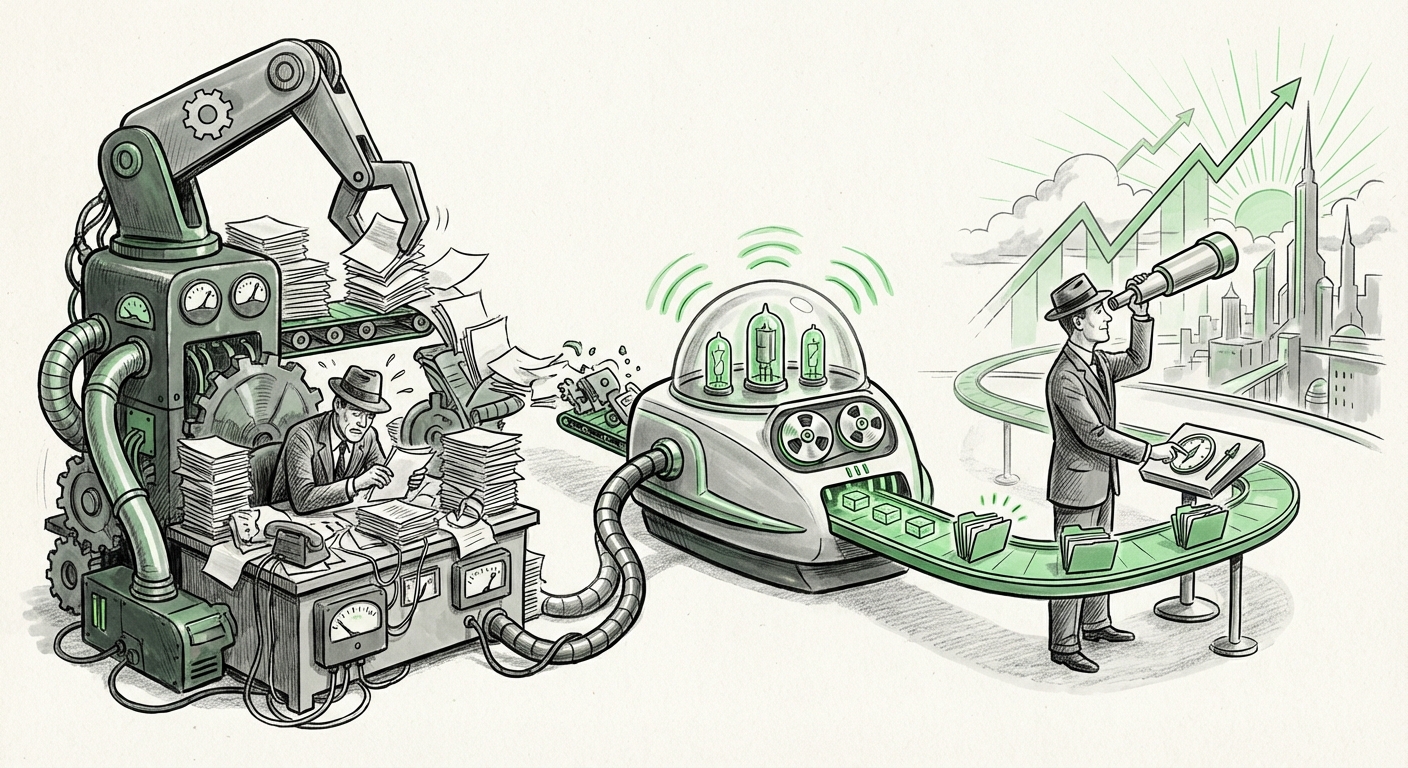

In the ongoing digital transformation of the enterprise, Artificial Intelligence (AI) is often framed in terms of disruption—job replacement, model drift, or data security threats. However, one of the most profound and immediate impacts of current AI technology, particularly Generative AI, is far more constructive: liberation. AI is systematically dismantling the administrative burden that has long bogged down executive decision-making, ushering in an era where leaders can finally focus on tasks only humans can truly master: vision, empathy, and high-stakes strategy.

As technology analysts, we see this transition—moving from the drudgery of routine tasks to the elevation of high-impact work—as the central theme of enterprise AI adoption today. This is not merely efficiency; it is a fundamental reshaping of the leadership role itself. To understand the scope of this shift, we must look beyond simple task automation and examine the required skill changes, the implementation realities, and the critical governance frameworks needed to manage AI-augmented strategy.

The Automation Dividend: From Paperwork to Possibility

The core promise of automation has always been time savings. In leadership contexts, this translates directly into an "automation dividend"—time reinvested into areas that generate disproportionate value. Before sophisticated AI, leaders spent hours summarizing quarterly reports, drafting preliminary board communications, compiling competitive research from disparate sources, and managing intricate scheduling logistics.

Now, tools leveraging Large Language Models (LLMs) and Robotic Process Automation (RPA) are handling the aggregation and synthesis of data. Imagine a scenario where a leader needs a summary of Q3 performance across three global regions, benchmarked against five top competitors, delivered in a concise, narrative format suitable for the next board meeting. Previously, this took days of analyst time and executive review. Today, AI can process the raw data feeds, draft the narrative structure, flag potential risks in the commentary, and even suggest alternative strategic narratives. This is the fundamental shift: AI acts as a powerful, tireless Chief of Staff, processing the data so the leader can focus on the *meaning* and the *next move*.

Corroboration: Quantifying the Decision Quality Boost

This move from task completion to strategy enhancement is a recurring theme in industry analysis of AI-augmented decision making. Consulting reports frequently detail how generative AI is being integrated into high-level functions like scenario planning and complex risk modeling. Instead of asking analysts to build three scenarios, a leader can prompt an AI to generate dozens of "what-if" scenarios based on shifting economic indicators and to flag non-obvious correlations. This deep, rapid analysis means that the eventual high-impact decision, such as entering a new market or structuring a major acquisition, can rest on a broader and deeper understanding than was previously possible.
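To make the "what-if" idea concrete, here is a minimal sketch in Python of enumerating scenarios across a few economic indicators and ranking them. Everything in it is an assumption for illustration: the indicator names, their levels, and the toy linear `projected_revenue` model are hypothetical and stand in for whatever forecasting logic an organization actually uses.

```python
from itertools import product

# Hypothetical indicator levels; real values would come from the
# organization's own forecasts.
INDICATORS = {
    "gdp_growth": [-0.01, 0.01, 0.03],
    "interest_rate": [0.03, 0.05],
    "fx_shift": [-0.05, 0.0, 0.05],
}


def generate_scenarios(indicators):
    """Return every combination of indicator levels as a list of dicts."""
    names = list(indicators)
    return [dict(zip(names, combo)) for combo in product(*indicators.values())]


def projected_revenue(scenario, baseline=100.0):
    """Toy linear model (an illustrative assumption, not a forecasting method)."""
    return baseline * (
        1
        + 2.0 * scenario["gdp_growth"]
        - 1.5 * scenario["interest_rate"]
        + 0.5 * scenario["fx_shift"]
    )


scenarios = generate_scenarios(INDICATORS)

# Rank from worst to best projected outcome so the extremes are easy to inspect.
ranked = sorted(scenarios, key=projected_revenue)
worst, best = ranked[0], ranked[-1]
```

The value for a leader is less in any single projection than in seeing the full spread of outcomes and asking which assumptions drive the extremes.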

The New Leadership Mandate: Skills for the Augmented Era

If AI handles the "what happened" and the "what if," the human leader must master the "why" and the "what should we do next." This radical reallocation of focus necessitates a significant evolution in core leadership competencies.

Skill Shift 1: AI Literacy and Interpretation

The modern executive cannot afford to be a passive consumer of AI output. They must possess AI literacy: an understanding of the capabilities, inherent limitations, and data sources of their AI tools. This doesn't mean learning Python; it means understanding concepts like model confidence scores and the sensitivity of outputs to prompt wording, and recognizing when an output might be based on flawed or outdated training data. As research on the future of leadership skills suggests, the future leader must be an effective *orchestrator* of human and machine intelligence.
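For readers who want to see what "confidence scores" and "outdated data" can look like in practice, the following Python sketch flags an AI output for closer human review. It is a sketch under stated assumptions: the `AIOutput` fields, the 0.8 confidence threshold, and the 90-day freshness window are all hypothetical, and real tools expose (or hide) these signals in their own ways.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class AIOutput:
    summary: str
    confidence: float   # 0.0 to 1.0, as reported by the (hypothetical) tool
    data_as_of: date    # most recent date covered by the underlying data


def review_flags(output, today, min_confidence=0.8, max_age_days=90):
    """Return the reasons, if any, that this output deserves closer human review."""
    flags = []
    if output.confidence < min_confidence:
        flags.append("low confidence")
    if (today - output.data_as_of).days > max_age_days:
        flags.append("stale data")
    return flags


# Example: a summary built on January data, reviewed in June.
q3_summary = AIOutput("Q3 regional summary", confidence=0.65, data_as_of=date(2024, 1, 15))
flags = review_flags(q3_summary, today=date(2024, 6, 1))
```

An empty list does not mean the output is right; it only means none of these simple checks fired.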

Skill Shift 2: Ethical Reasoning and Governance Oversight

When AI automates routine compliance checks or drafts key policy language, the potential for bias amplification or unintended legal consequences skyrockets. Therefore, ethical reasoning transforms from a soft skill into a critical governance function. Leaders must move from merely signing off on compliance reports to actively questioning the ethical scaffolding beneath the data used to generate those reports. This requires a profound focus on accountability.

Skill Shift 3: Cultivating Strategic Ambiguity

Routine tasks are typically defined by clear rules and predictable outcomes. High-impact decisions, conversely, are made amid ambiguity and require intuition, organizational context, and emotional intelligence. By removing the structure of routine work, AI pushes leaders into environments where clear answers are scarce. The most valuable skills will be leading effectively through uncertainty, fostering psychological safety so teams can innovate, and making judgment calls where the data is incomplete.

Implementation Realities: How Automation Moves from Theory to Practice

The theoretical benefit of freeing up executive time is compelling, but its practical realization depends on successful, targeted implementation across the organization. We need to look closely at the 'how'.

Beyond RPA: The Rise of Cognitive Automation

Early automation focused on Robotic Process Automation (RPA)—simple, rule-based actions like data entry. The current wave integrates cognitive capabilities. For example, in Finance departments, AI isn't just moving numbers; it's analyzing qualitative variance explanations in budgeting documents and drafting the initial commentary paragraphs for the CFO. This is known as Cognitive Automation, and it directly targets the tasks that eat up executive bandwidth.

Case studies reveal that successful early adopters focus automation on areas that are:

- High Volume, Low Cognitive Load: Initial wins are found in summarizing meeting transcripts, drafting standardized internal memos, or synthesizing employee feedback surveys.

- Data-Rich, Context-Heavy Synthesis: Preparing executive dashboards that integrate data from CRM, ERP, and HR systems into a unified, interpretive view, saving the leader from manually synthesizing silos of information.
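As a rough illustration of the second category, the Python sketch below merges records from three systems into one per-region view and reports which regions are missing required fields. The sample records, field names, and the choice of `region` as the join key are assumptions made for the example; real CRM, ERP, and HR exports will differ.

```python
from collections import defaultdict

# Hypothetical exports from three separate systems, keyed by region.
crm = [{"region": "EMEA", "open_deals": 42}, {"region": "APAC", "open_deals": 31}]
erp = [{"region": "EMEA", "revenue_musd": 12.4}, {"region": "APAC", "revenue_musd": 9.8}]
hr = [{"region": "EMEA", "attrition_pct": 6.1}]


def unify_by_region(*sources):
    """Merge rows from every source into one dict of fields per region."""
    unified = defaultdict(dict)
    for source in sources:
        for row in source:
            fields = {key: value for key, value in row.items() if key != "region"}
            unified[row["region"]].update(fields)
    return dict(unified)


def missing_fields(view, required):
    """Map each incomplete region to the sorted list of fields it lacks."""
    gaps = {}
    for region, fields in view.items():
        absent = sorted(set(required) - set(fields))
        if absent:
            gaps[region] = absent
    return gaps


view = unify_by_region(crm, erp, hr)
gaps = missing_fields(view, ["open_deals", "revenue_musd", "attrition_pct"])
```

Surfacing the gaps matters as much as the merge itself: a unified view that silently omits a silo can mislead the leader it was meant to help.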

The implication for IT Directors and Operations Managers is clear: the focus must shift from automating simple workflows to integrating sophisticated models that can handle nuanced, narrative-based information necessary for strategic input.

The Critical Guardrails: Governing AI in High-Stakes Decisions

The most compelling future use of AI in leadership involves its role as a decision-support system, yet this is where the greatest risks reside. When an AI moves from drafting an email to suggesting a multimillion-dollar capital allocation, the stakes change entirely. This is the domain of AI Governance.

The Explainability Imperative (XAI)

If an executive bases a critical business decision on an AI model's recommendation—say, predicting which regional division is most likely to fail its compliance audit next year—the executive must be able to explain *why* that decision was made, both to their board and to regulators. This demands Explainable AI (XAI). If the AI operates as a "black box," the leader is accountable for an opaque process. Effective governance requires mandated audit trails for all high-impact AI suggestions, ensuring there is a clear lineage from input data to final recommendation.
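One way to picture such an audit trail is an append-only log in which each entry records the model version, a digest of the input data, the recommendation, and the human reviewer, and is chained to the previous entry by hash so that later tampering is detectable. The Python sketch below is illustrative only: the `AuditTrail` class, its field names, and the sample values are hypothetical, and hash chaining is just one possible design rather than a mandated standard.

```python
import hashlib
import json
from datetime import datetime, timezone


def _digest(payload):
    """SHA-256 of a JSON-serializable payload, using a stable key order."""
    encoded = json.dumps(payload, sort_keys=True).encode("utf-8")
    return hashlib.sha256(encoded).hexdigest()


class AuditTrail:
    """Append-only log linking each AI recommendation to its inputs."""

    def __init__(self):
        self.entries = []

    def record(self, model_version, inputs, recommendation, reviewer):
        entry = {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "model_version": model_version,
            "input_digest": _digest(inputs),
            "recommendation": recommendation,
            "reviewer": reviewer,
            # Chain to the previous entry so edits to history are detectable.
            "prev_hash": self.entries[-1]["entry_hash"] if self.entries else None,
        }
        entry["entry_hash"] = _digest(entry)
        self.entries.append(entry)
        return entry

    def verify(self):
        """Return True if no entry has been altered or reordered."""
        prev = None
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "entry_hash"}
            if entry["prev_hash"] != prev or _digest(body) != entry["entry_hash"]:
                return False
            prev = entry["entry_hash"]
        return True


trail = AuditTrail()
trail.record("risk-model-v1", {"division": "North", "late_filings": 3},
             "Flag North division for audit preparation", reviewer="cfo")
trail.record("risk-model-v1", {"division": "South", "late_filings": 0},
             "No action", reviewer="cfo")
intact = trail.verify()
```

A log like this does not explain the model's reasoning by itself, but it gives the board and regulators a verifiable record of what was recommended, from which inputs, and who signed off.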

Accountability Frameworks

Who takes the blame when an AI-supported strategy fails? The designer? The executive who acted on the advice? The consensus emerging among risk officers and legal experts is that human oversight remains the final layer of accountability. The leader’s primary responsibility shifts to validating the AI’s logic, confirming the ethical inputs, and deciding whether to override the suggestion based on non-quantifiable context. Technology must inform, but it cannot absolve the leader of responsibility.

What This Means for the Future of AI and Its Use

The trajectory outlined—from routine task removal to strategic augmentation, coupled with skill and governance evolution—paints a clear picture for the next decade of enterprise AI.

- AI Becomes Ubiquitous Infrastructure: Just as electricity is assumed in a modern factory, AI will become the assumed layer beneath all digital interactions. For leaders, this means AI tools will be seamlessly embedded into every productivity suite, not bolted on as separate applications.

- The Rise of the 'AI Translator' Role: Organizations will urgently need individuals who bridge the gap between data science teams (who build the models) and executive teams (who use the outputs). These leaders must understand both the algorithmic limitations and the strategic goals.

- Focus on Human-Machine Teaming Metrics: Productivity measurement will pivot. Success will no longer be measured by how many emails a leader sends, but by the quality and speed of their strategic pivots, which will depend on how effectively they collaborate with their AI assistants.

Practical Implications and Actionable Insights

For organizations looking to capitalize on this shift now, the path forward requires deliberate action across three domains:

For the Executive (The User):

Actionable Insight: Dedicate 20% of your non-meeting time this quarter to stress-testing your current AI tools. Ask them deliberately difficult or ethically ambiguous questions. Learn their failure modes so you are prepared to intervene intelligently when high-stakes decisions arise. Do not wait for formal training; drive your own AI literacy.

For Technology Leadership (The Implementer):

Actionable Insight: Prioritize integration over isolated deployment. Instead of seeking niche AI tools for single tasks, invest in platforms that can ingest and interpret data across organizational silos. Focus implementation efforts on creating "Executive Summary Narratives" that synthesize multiple data sources, thereby maximizing the time saved for strategic review.

For HR and Talent Development (The Enabler):

Actionable Insight: Revamp leadership development programs immediately. Core modules must now include AI governance ethics, critical thinking regarding algorithmic outputs, and frameworks for leading hybrid human-AI teams. Hire for curiosity and comfort with complexity, as adherence to rigid, process-heavy roles will diminish.

The age of the overwhelmed executive drowning in data synthesis is rapidly ending. In its place, AI offers a powerful proposition: return the executive’s focus to the essential human elements of leadership—foresight, inspiration, and navigating the truly complex unknowns. The organizations that succeed in the next decade will be those whose leaders successfully transition from managers of tasks to masters of augmented strategy.