The $400 Revolution: How Allen AI’s SERA Democratizes Private Code Intelligence

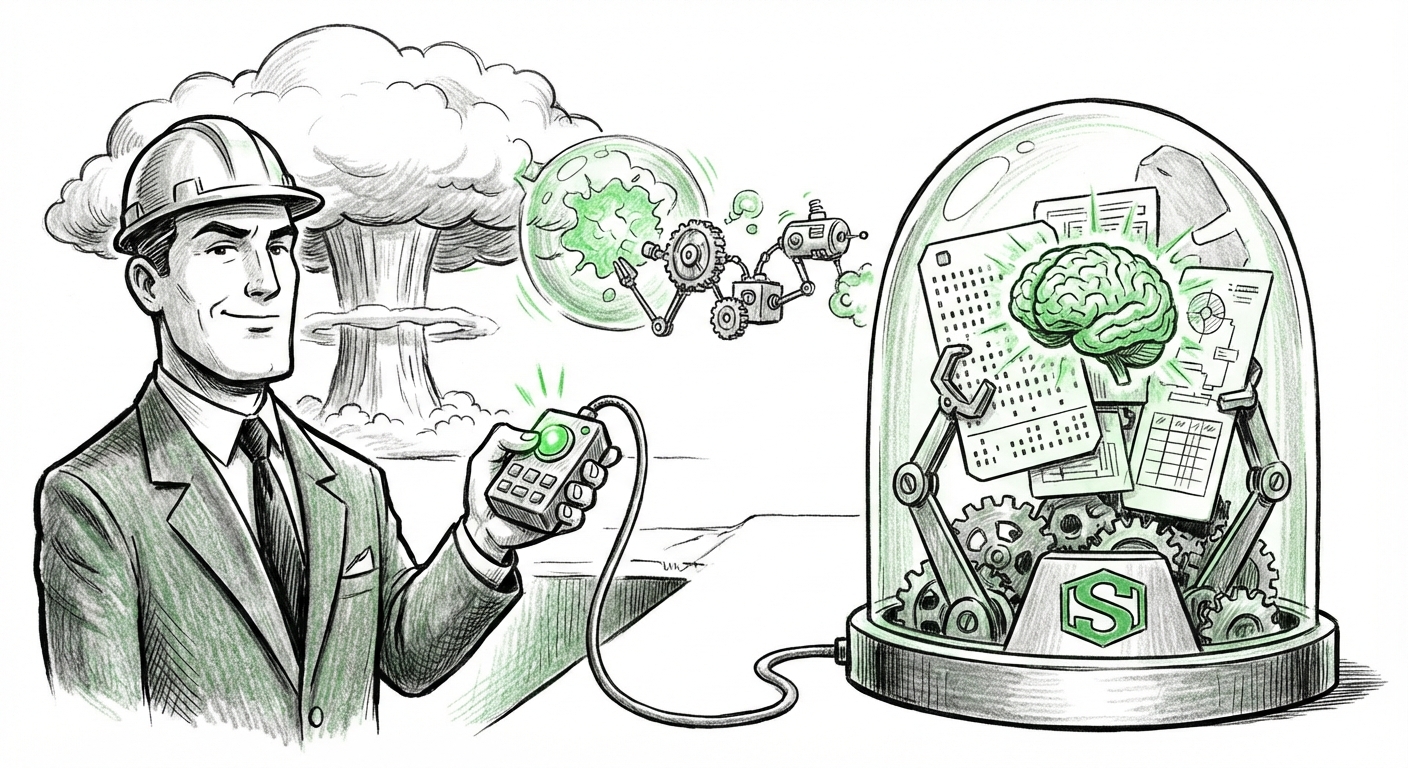

The artificial intelligence landscape is currently dominated by giants—massive models trained on unthinkable amounts of data, accessible primarily through expensive, centralized cloud APIs. This paradigm, while powerful, creates high barriers to entry, especially concerning data privacy and customization for specialized enterprise needs. However, a recent announcement from Allen AI regarding their **SERA (Self-Supervised, Environment-aware, Reasoning Agent)** signals a potent shift: the democratization of highly tailored AI agents capable of operating securely within private, proprietary environments.

The key takeaway is staggering: SERA agents can be trained to understand and work with a company's specific codebase for a training cost as low as $400. This isn't just an incremental improvement; it represents a fundamental realignment in how organizations access and deploy cutting-edge AI tools. It moves AI intelligence from being a costly, outsourced service to an affordable, owned asset.

The Shift from Cloud Giants to Localized Specialists

For the average business user, the difference between a general AI tool and a specialized one might seem small. But for a software engineering team, the difference is profound. General-purpose models such as GPT-4 perform well across a wide range of tasks, but they lack intimate knowledge of your unique, decades-old legacy systems or your specific internal coding standards. SERA is built to fill this gap.

This development aligns with the emerging industry trend toward **Small Language Models (SLMs)** and localized deployment, where efficiency trumps sheer scale for many business applications. While large models continue to push the boundaries of generalized reasoning, a growing segment of the tech world recognizes that if you only need the AI to debug Python or write SQL queries based on your company’s internal documentation, you don't need the processing power, or the associated expense, of a trillion-parameter model.

This focus on specialization is corroborated by the increasing viability of models designed for efficiency. When developers focus on creating smaller, highly efficient models (much like the advancements seen in models targeting mobile or edge devices), the computational requirements plummet. This means the "compute budget" for initial fine-tuning drops significantly, making the $400 entry point realistic. It suggests that specialized training techniques, potentially leveraging Parameter-Efficient Fine-Tuning (PEFT) methods, are maturing to a point where they are accessible even to smaller teams.
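To make the compute argument concrete, here is a minimal sketch of the parameter arithmetic behind LoRA, one widely used PEFT method. Nothing in the announcement as described here confirms that SERA uses LoRA; the layer size and rank below are hypothetical values chosen only for illustration.

```python
def full_finetune_params(d_in: int, d_out: int) -> int:
    """Trainable parameters when the whole weight matrix is updated."""
    return d_in * d_out


def lora_params(d_in: int, d_out: int, rank: int) -> int:
    """Trainable parameters for a LoRA adapter on the same matrix.

    LoRA freezes the original weights and learns two low-rank factors:
    A with shape (rank, d_in) and B with shape (d_out, rank).
    """
    return rank * (d_in + d_out)


# Hypothetical layer size and adapter rank (assumptions, not SERA's settings).
D_MODEL = 4096
RANK = 8

full = full_finetune_params(D_MODEL, D_MODEL)
adapter = lora_params(D_MODEL, D_MODEL, RANK)
ratio = adapter / full

print(f"full fine-tuning: {full:,} trainable parameters per matrix")
print(f"LoRA (rank {RANK}): {adapter:,} trainable parameters per matrix")
print(f"adapter share: {ratio:.2%}")
```

Under these assumed numbers the adapter trains well under one percent of the parameters in each matrix, which is the kind of reduction that makes a budget in the hundreds of dollars plausible.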

Implication 1: The Economics of Access

The low cost fundamentally changes the calculus for startups and mid-sized enterprises. Previously, creating a "private Copilot" (an AI assistant trained specifically on *your* codebase) required massive investment in data engineering and cloud GPU time. Now, if training costs are in the hundreds of dollars rather than the hundreds of thousands, the decision to deploy a custom agent shifts from a strategic, multi-year IT project to a simple operational expense. This **democratization of proprietary AI** means smaller, agile teams can quickly gain a competitive edge previously reserved for Big Tech.

This economic shift forces us to reconsider the Total Cost of Ownership (TCO). API calls to large models accrue continuous, per-request costs that grow with usage, whereas a fine-tuned, localized model like SERA carries a small one-time training cost plus whatever it costs to host and run the model. When the training bill is a few hundred dollars, the comparison comes down to ongoing hosting versus ongoing API spend, and for teams with steady usage the case for a dedicated model becomes hard to ignore.
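The TCO comparison can be sketched as a simple break-even calculation. Only the $400 training figure comes from the announcement discussed above; the monthly hosting and API spend figures are hypothetical placeholders that each team would replace with its own numbers.

```python
import math
from typing import Optional


def break_even_months(
    upfront_cost: float,
    monthly_hosting: float,
    monthly_api_spend: float,
) -> Optional[int]:
    """Months until a self-hosted model's cumulative cost catches up with API spend.

    Returns None when hosting costs at least as much per month as the API,
    because the upfront cost is then never recovered.
    """
    monthly_saving = monthly_api_spend - monthly_hosting
    if monthly_saving <= 0:
        return None
    return math.ceil(upfront_cost / monthly_saving)


TRAINING_COST = 400.0      # figure cited in the article
MONTHLY_HOSTING = 150.0    # hypothetical: cost to serve the private model
MONTHLY_API_SPEND = 250.0  # hypothetical: current spend on a hosted API

months = break_even_months(TRAINING_COST, MONTHLY_HOSTING, MONTHLY_API_SPEND)
print(f"break-even after {months} months")
```

With these assumed inputs the upfront cost is recovered in four months. If hosting costs more than the API bill, the function returns `None`, a reminder that the fixed training cost is only one part of the picture.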

Security: The New Competitive Moat for AI Adoption

Perhaps the most significant barrier to widespread, deep AI integration within established corporations is **data security and intellectual property (IP) protection**. Every line of proprietary code, every internal architecture diagram, is priceless IP. Sending this data to a third-party cloud provider for custom fine-tuning carries inherent risk—a risk many CISOs are unwilling to accept.

SERA’s promise to work within private repositories addresses this anxiety head-on. When a model is trained locally or within a private cloud environment dedicated solely to the client, the IP leakage concern is dramatically reduced. This satisfies the growing corporate demand for **AI governance and data residency**, where sensitive code never leaves the controlled environment.

Implication 2: AI Moves from Experiment to Infrastructure

If AI tools can be securely integrated into the development environment without violating strict corporate policies, they stop being pilot projects and start becoming core infrastructure. An agent that can analyze security vulnerabilities based on the company's specific historical exploits, or refactor code that still depends on deprecated internal libraries, offers value far beyond generic code completion. This level of customization transforms the agent from a helpful tool into a vital, proprietary asset.

Enterprise LLM governance efforts increasingly scrutinize this exact point. Companies are establishing clear boundaries about what code can be shared externally. SERA allows organizations to stay within those boundaries while still getting a customized agent, because it offers a secure, contained solution.

The Evolution of the Developer Workflow: From Assistant to Teammate

Coding assistants have already started to blur the line between human and machine work. GitHub Copilot handles suggestion and completion, while newer, more advanced agents (sometimes referred to as "AI software engineers") aim for autonomous task execution.

SERA, as an environment-aware reasoning agent, fits squarely into this advanced category. Because it understands the *environment* (i.e., the structure, dependencies, and historical context of the private repository), its reasoning capabilities move beyond simple syntax correction. It can reason about system architecture, deployment scripts, and integration points specific to that codebase.

Implication 3: Accelerated Development Velocity and Contextual Awareness

For developers, this means less time spent onboarding new team members to complex legacy systems and more time spent on novel problem-solving. If an agent can instantly understand the complex interdependencies of a sprawling 10-year-old application and suggest changes that respect those boundaries, developer velocity skyrockets.

This agent-centric approach suggests a future where the first review of any pull request might be performed by an agent as knowledgeable as a ten-year veteran of the codebase. Context switching and architectural discovery are widely recognized as major time sinks for developers; agents that reduce these hurdles become indispensable.

Actionable Insights: Preparing for the Agent Ecosystem

The SERA announcement is not just news for AI engineers; it is a call to action for every technology leader:

- Audit Your Data Readiness: Begin mapping out your most valuable, proprietary codebases. Identify which repositories hold the institutional knowledge that, if encoded into an agent, would provide the highest ROI.

- Re-Evaluate Compute Strategy: If the cost of custom training is plummeting, re-assess your cloud spending. Are you overpaying for generalized models when a fraction of that investment could yield a dedicated, private model via a technique like SERA?

- Establish Agent Governance Now: Even if you start with a highly secure, localized agent, you must develop governance frameworks. Who verifies the agent's output? What are the testing protocols for AI-generated changes? Prepare your QA pipeline for machine collaboration.

- Focus on Agent Orchestration: The future isn't about training one agent; it’s about deploying a fleet of specialized agents: one for security analysis, one for documentation updates, and one for refactoring. Start designing the workflows where these specialized agents interact with human developers and each other.
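The governance and orchestration points above can be sketched together as a small dispatcher that routes tasks to specialized agents and refuses to mark any output approved without a named human reviewer. This is a toy illustration under stated assumptions, not SERA's interface: the agent functions, the `Proposal` type, and the approval rule are all hypothetical stand-ins.

```python
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass
class Proposal:
    """An agent's suggested change, pending human sign-off."""
    agent: str
    task: str
    summary: str
    approved: bool = False
    reviewer: str = ""


# Hypothetical stand-ins for specialized agents; a real agent would call a model.
def security_agent(task: str) -> str:
    return f"security findings for: {task}"


def docs_agent(task: str) -> str:
    return f"documentation update for: {task}"


def refactor_agent(task: str) -> str:
    return f"refactoring plan for: {task}"


AGENTS: Dict[str, Callable[[str], str]] = {
    "security": security_agent,
    "docs": docs_agent,
    "refactor": refactor_agent,
}


def dispatch(kind: str, task: str) -> Proposal:
    """Route a task to the matching agent and wrap its output as a proposal."""
    if kind not in AGENTS:
        raise KeyError(f"no agent registered for task kind: {kind!r}")
    return Proposal(agent=kind, task=task, summary=AGENTS[kind](task))


def approve(proposal: Proposal, reviewer: str) -> Proposal:
    """Governance gate: a proposal is approved only with a named human reviewer."""
    if not reviewer.strip():
        raise ValueError("a human reviewer is required to approve a proposal")
    proposal.approved = True
    proposal.reviewer = reviewer
    return proposal


proposal = dispatch("security", "auth module")
print(proposal.summary, "| approved:", proposal.approved)
approve(proposal, reviewer="alice")
print(proposal.summary, "| approved:", proposal.approved, "by", proposal.reviewer)
```

Keeping the approval step separate from dispatch means every agent's output enters the same review path, whichever specialist produced it.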

Conclusion: The End of ‘One Size Fits All’ AI

Allen AI’s SERA, coupled with the industry’s trajectory toward efficient SLMs and security-first deployment, signals the closing chapter on the era where only the largest companies could afford true AI customization. The narrative is shifting from massive, general intelligence to highly capable, affordable, and secure specialization.

This democratization means innovation will no longer be bottlenecked by cloud compute budgets or data privacy fears. Instead, competitive advantage will pivot to an organization's ability to effectively curate its proprietary data, define specific tasks, and quickly train specialized agents. The $400 agent isn't just a cheap tool; it’s the harbinger of a far more agile, secure, and widely distributed AI-powered future.