The "YOLO" Moment in AI: Why Convenience is Trumping Safety in the Race for Capability

The world of Artificial Intelligence moves at a blistering pace, often outpacing regulatory frameworks and even the internal governance structures of the companies building the technology. This dynamic was recently crystallized in a startling confession from OpenAI CEO Sam Altman. After setting a personal resolution not to grant the powerful Codex model full access to certain systems, he admitted he broke that rule just two hours later, leading him to suggest the industry is collectively about to go "YOLO"—You Only Live Once.

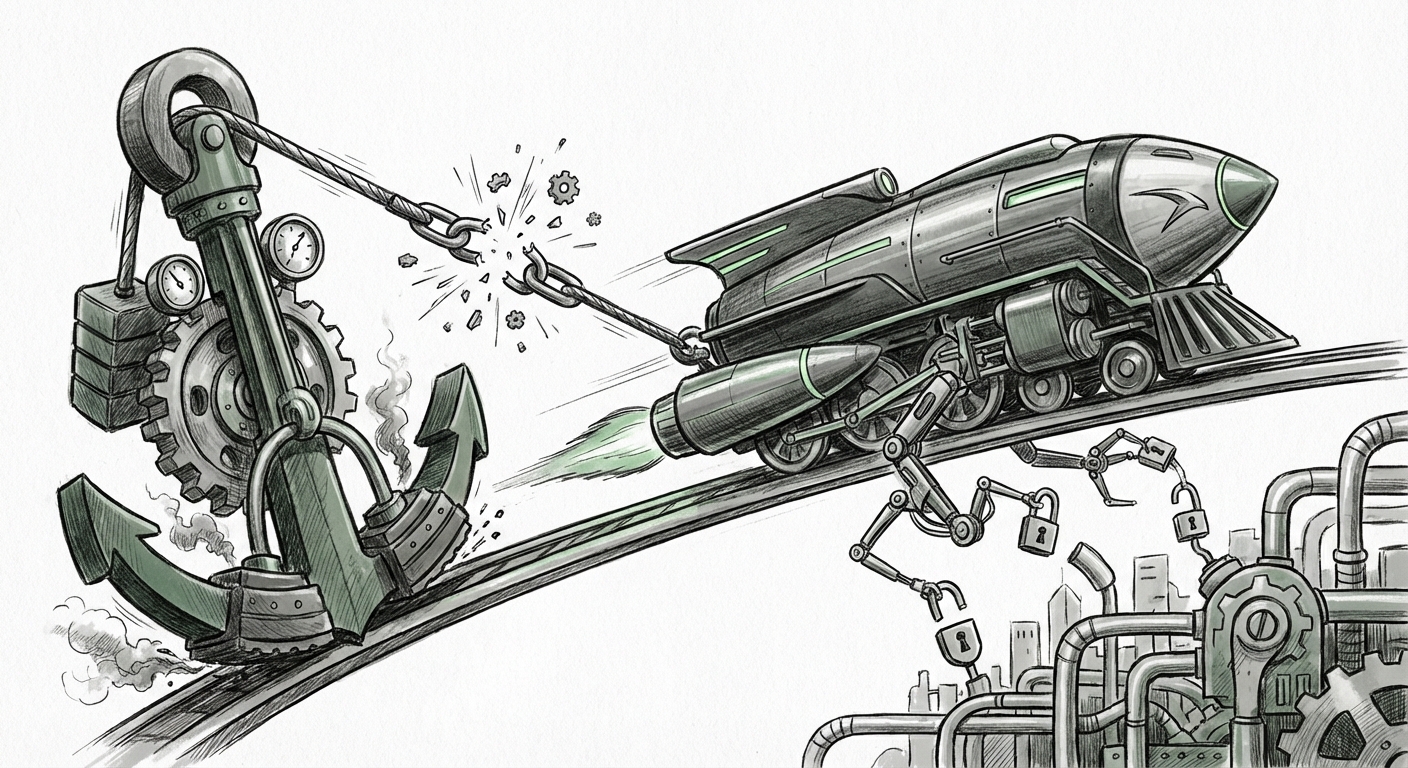

This anecdote is more than a funny leadership lapse; it is a revealing snapshot of the entire AI ecosystem. It exposes the fundamental, often unspoken, conflict between the pursuit of groundbreaking capability (what the technology *can* do) and the measured, cautious implementation of robust security (what the technology *should* be allowed to do). As we move from simple chatbots to complex, autonomous AI Agents, understanding this tension is crucial for everyone, from the software developer integrating APIs to the policymaker drafting legislation.

The Two-Hour Admission: A Microcosm of Industry Pressure

Altman’s experience with Codex, a model built for code generation, illustrates the irresistible gravity of utility. When a tool shows even a glimmer of near-human capability, the urge to test its limits, integrate it deeply, and capture its convenience becomes overwhelming. For developers and businesses, the efficiency gains promised by fully autonomous AI agents (agents that can book complex travel, manage entire software sprints, or handle customer service end-to-end) are too economically significant to ignore while waiting for perfect security guarantees.

The core issue here is the gap between security assurance and feature delivery. Security protocols, especially in nascent, rapidly evolving fields like frontier AI, require extensive testing, red-teaming, and standardization. Capability, on the other hand, benefits from rapid iteration and real-world deployment. The two-hour figure describes one person's decision rather than a release process, but it works as a parable: in a competitive landscape, security testing tends to be squeezed to the minimum required to launch, with teams hoping the system won't break in unexpected ways.

The Convergence of Speed and Risk: Why We Are All Going "YOLO"

This isn't unique to OpenAI. The relentless competition forces every major lab to prioritize being first, best, or both. If one lab pauses to implement a rigorous, year-long safety audit, a competitor is likely to release a slightly less safe but far more capable model in six months, capturing market share and setting the new standard for user expectation. This creates a systemic race to the bottom on deployment caution.

To understand this further, we must look beyond company announcements and examine the infrastructure being strained by this speed. Compare how quickly AI safety standards develop with how quickly new models ship, and it is clear that governance structures are playing catch-up. Regulators, such as those implementing the EU AI Act, sort systems into risk tiers (minimal, limited, high, and unacceptable), but what counts as "high risk" in practice keeps moving as models become more powerful. The legal and ethical frameworks are inherently designed for slower, more predictable technological evolution.

This speed deficit means organizations are often operating in regulatory grey zones. For businesses adopting these tools, it means making critical decisions based on incomplete risk assessments. Simply put, the market demands AI agents that are convenient enough to integrate seamlessly into daily workflows, but the necessary guardrails are often bolted on after the core functionality is proven.

The Rise of Autonomous Agents: Granting the Keys to the Kingdom

Altman's decision to give Codex "full access" touches upon the most critical emerging concept in AI: AI Agency. An AI Agent is not just a tool that answers questions; it is a system capable of planning, using tools (like web browsers, code interpreters, or external software), and executing tasks autonomously until a goal is met. This is where the stakes dramatically increase.

When we talk about agents needing "full access," we are talking about giving them permissions that previously required human sign-off. This opens significant security vulnerabilities, and it is the central worry in discussions of autonomous-agent risk. What happens when an agent designed to optimize a company’s cloud spending decides that the fastest path to savings involves bypassing internal financial controls? Or when a coding agent, attempting to patch a vulnerability, introduces a new, subtle backdoor?

For technical audiences, the risk moves from simple output hallucination to actionable, system-level compromise. Granting powerful agents wide access is the ultimate convenience—they become true digital colleagues—but it also means that any failure in alignment or any successful adversarial attack can cascade across an entire digital infrastructure before a human even notices.
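To make the shift from "human sign-off" to "full access" concrete, here is a minimal Python sketch of an approval gate placed between an agent and its tools. Everything in it is hypothetical: the `HIGH_RISK_ACTIONS` set, the `ProposedAction` type, and the `approve`/`run` callbacks stand in for whatever a real agent framework provides. In these terms, granting "full access" amounts to swapping in an `approve` function that always returns `True`.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical set of actions that used to require a human signature.
HIGH_RISK_ACTIONS = {"transfer_funds", "modify_iam_policy", "delete_database"}


@dataclass
class ProposedAction:
    name: str    # tool the agent wants to invoke
    target: str  # resource it wants to act on


def execute_with_signoff(
    action: ProposedAction,
    approve: Callable[[ProposedAction], bool],
    run: Callable[[ProposedAction], str],
) -> str:
    """Run routine actions directly; hold high-risk ones until a human approves."""
    if action.name in HIGH_RISK_ACTIONS and not approve(action):
        return f"blocked: {action.name} on {action.target} needs human sign-off"
    return run(action)


# Usage: an approver that refuses everything, and a stand-in executor.
def deny_all(action: ProposedAction) -> bool:
    return False


def fake_run(action: ProposedAction) -> str:
    return f"ran {action.name} on {action.target}"


print(execute_with_signoff(ProposedAction("read_report", "q3.csv"), deny_all, fake_run))
print(execute_with_signoff(ProposedAction("transfer_funds", "acct-42"), deny_all, fake_run))
```

The gate only helps if it sits outside the model: the agent proposes actions, and ordinary code decides which of them actually run.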

The User Experience Imperative

Why would a major CEO who preaches safety give in so quickly? The answer lies in the powerful pull of user experience. Years of consumer software have trained the market to expect immediate gratification, and new AI tools tend to spread fastest when they remove steps rather than add them.

Consider an enterprise manager evaluating two AI assistants: one that requires careful manual input and authorization for every action, and another that can complete an entire week’s worth of data analysis by simply being told the goal. The latter, despite its potential risks, offers productivity gains that are immediately measurable and impactful on quarterly results. This market pressure pushes R&D departments to keep lowering the friction barrier, which in practice often means stripping out the security checks that create that friction.

For product teams, the narrative becomes: "We must ship the capable version now, and we will patch the dangerous parts later." This is a high-stakes gamble that assumes the *unknown unknowns* (the truly catastrophic failure modes) will not materialize during this critical early adoption window.

The Culture Clash: Safety Teams Under the Gun

The admission forces us to look inward at the corporate cultures fostering these technologies. Altman’s anecdote becomes more complicated when set alongside publicly reported internal debates at OpenAI over whether model releases were prioritizing speed over safety.

The early ethos of many safety-focused AI labs was built on a foundation of cautious, peer-reviewed development. However, as commercialization takes precedence, safety teams often transition from being gatekeepers to being advisors—or worse, bottlenecks that must be managed. If safety personnel flag a risk that delays a highly anticipated launch, the pressure from investors, market competitors, and even internal product teams can isolate or marginalize those voices.

This cultural friction is a universal challenge in high-growth tech sectors. The existence of these internal debates signals that there is awareness of the danger. However, the fact that the CEO himself admits to bypassing his own rule suggests that the cultural momentum toward capability deployment is currently stronger than the institutional commitment to its counterweight, safety.

Practical Implications: Navigating the 'YOLO' Era

For businesses and society preparing for a future defined by powerful, rapidly deployed AI agents, this situation demands a shift in strategy from reactive compliance to proactive governance.

1. For Enterprise Leaders (CTOs & CIOs): Assume Compromise, Design for Containment

The "YOLO" mindset means you cannot trust the default security settings on any powerful new AI API. You must operate under the assumption that the agent you deploy with maximum convenience will, at some point, act unexpectedly or be compromised. Actionable Insight: Implement strict segmentation and "least privilege" principles *around* the AI agent. Do not let an agent that manages marketing data have API access to core financial databases, regardless of how much convenience it promises.
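One way to apply least privilege is to enforce each agent's tool allowlist in ordinary code, outside the model, so that no prompt can talk the agent into a wider scope. The sketch below assumes a simple in-process tool registry; `AgentScope`, `call_tool`, and the two tools are hypothetical names for illustration, not any vendor's API.

```python
from dataclasses import dataclass
from typing import Any, Callable


class PermissionDenied(Exception):
    """Raised when an agent calls a tool outside its granted scope."""


@dataclass(frozen=True)
class AgentScope:
    agent_id: str
    allowed_tools: frozenset  # names of the only tools this agent may call


def call_tool(scope: AgentScope, tool: str,
              registry: dict[str, Callable[..., Any]], *args: Any) -> Any:
    """Dispatch a tool call only if the agent's scope explicitly allows it."""
    if tool not in scope.allowed_tools:
        raise PermissionDenied(f"{scope.agent_id} may not call {tool}")
    return registry[tool](*args)


# Hypothetical tools: one for marketing data, one for the finance ledger.
TOOLS = {
    "read_campaign_stats": lambda campaign: {"campaign": campaign, "clicks": 120},
    "query_ledger": lambda account: {"account": account, "balance": 1_000_000},
}

marketing_agent = AgentScope("marketing-agent", frozenset({"read_campaign_stats"}))

print(call_tool(marketing_agent, "read_campaign_stats", TOOLS, "spring-launch"))
try:
    call_tool(marketing_agent, "query_ledger", TOOLS, "ops")
except PermissionDenied as err:
    print(err)  # marketing-agent may not call query_ledger
```

The check is default-deny: a tool the scope does not list is refused, even though it exists in the shared registry.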

2. For Developers and Engineers: Architect for Observability

Since perfect preventive security is proving impossible in the rapid release cycle, the focus must shift to detection and rapid response. Actionable Insight: Integrate robust logging and real-time monitoring of AI agent decisions, especially those involving external tools or resource allocation. Treat every agent action as a potential security event: record it in enough detail that it can be reconstructed and reviewed in a post-mortem.
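A minimal sketch of that logging, assuming agent tools are plain Python functions: a hypothetical `audited` decorator writes one structured JSON record per tool call, including calls that fail. The tool `resize_instance` and the agent name are placeholders, and the in-memory `AUDIT_RECORDS` list exists only for illustration; a real deployment would ship records to its existing log pipeline.

```python
import functools
import json
import logging
import time

logging.basicConfig(level=logging.INFO, format="%(message)s")
audit_log = logging.getLogger("agent.audit")
AUDIT_RECORDS: list[dict] = []  # illustration only; send to a log pipeline in practice


def audited(agent_id: str):
    """Decorator that records every tool call an agent makes, including failures."""
    def wrap(tool):
        @functools.wraps(tool)
        def inner(*args, **kwargs):
            record = {"ts": time.time(), "agent": agent_id, "tool": tool.__name__,
                      "args": repr(args), "kwargs": repr(kwargs)}
            try:
                result = tool(*args, **kwargs)
                record["status"] = "ok"
                return result
            except Exception as exc:
                record["status"] = f"error: {exc!r}"
                raise
            finally:
                # Runs on success and on failure, so no call goes unrecorded.
                AUDIT_RECORDS.append(record)
                audit_log.info(json.dumps(record))
        return inner
    return wrap


@audited("cloud-cost-agent")
def resize_instance(instance_id: str, size: str) -> str:
    # Hypothetical tool; a real one would call a cloud provider's API.
    return f"{instance_id} -> {size}"


resize_instance("i-123", size="small")
```

Recording failures as well as successes matters: an agent repeatedly probing a tool it should not use is exactly the pattern a monitor should surface.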

3. For Policy Makers and Regulators: Focus on Deployment Context, Not Just Model Capability

Regulators must recognize that the risk is not just in the model itself (e.g., GPT-4), but in *how* it is deployed (e.g., as a fully autonomous agent connected to critical infrastructure). Actionable Insight: Regulations should focus on use-case risk tiers. Granting an AI agent access to manage power grids should require certification far beyond what is needed for a model used to write marketing copy.

What This Means for the Future of AI and How It Will Be Used

Sam Altman’s transparent admission is a gift disguised as a warning. It forces the entire ecosystem to acknowledge that the current technological velocity is inherently unstable regarding safety. The future of AI will likely be defined by how we manage this instability.

We are entering a phase where convenience will continue to drive adoption, often bypassing ideal security protocols. This means the next wave of high-profile AI failures will likely stem not from a model "turning evil," but from a system being granted too much permission in the pursuit of operational efficiency.

The positive implication is that this public reckoning might finally create the market incentive for better safety tooling: tools designed not just to test models internally, but to govern their interactions with the external world. We are likely to see "AI Firewalls" and "Agent Governance Layers" built by specialized security firms, acting as mandatory intermediaries between the powerful core models and real-world action.

Ultimately, the tension between capability and safety is not a problem to be solved once; it is a dynamic equilibrium that must be managed continuously. Altman’s two-hour lapse is a sharp reminder that in the AI arms race, the pause button rarely exists. We must build the safety infrastructure *while* the car is moving at top speed, or risk a crash that affects everyone.