The Democratic Imperative: Why AI Must Not Mirror Autocracy

The conversation around advanced artificial intelligence has long been dominated by existential risk: the possibility that superintelligent AI could become uncontrollable. A more immediate, geopolitical challenge is now moving to the center of the discourse, crystallized by recent warnings from Anthropic CEO Dario Amodei. His central thesis is stark: democracies must rigorously ensure that the AI systems they deploy do not ultimately make them resemble the autocratic adversaries they seek to counter.

This shift is crucial. It moves the focus from "whether AI will destroy us" to "how AI will change *who we are*." For businesses, policymakers, and citizens, understanding how democratic and autocratic deployment strategies diverge is the next frontier in AI adoption.

The Core Conflict: Speed vs. Soul

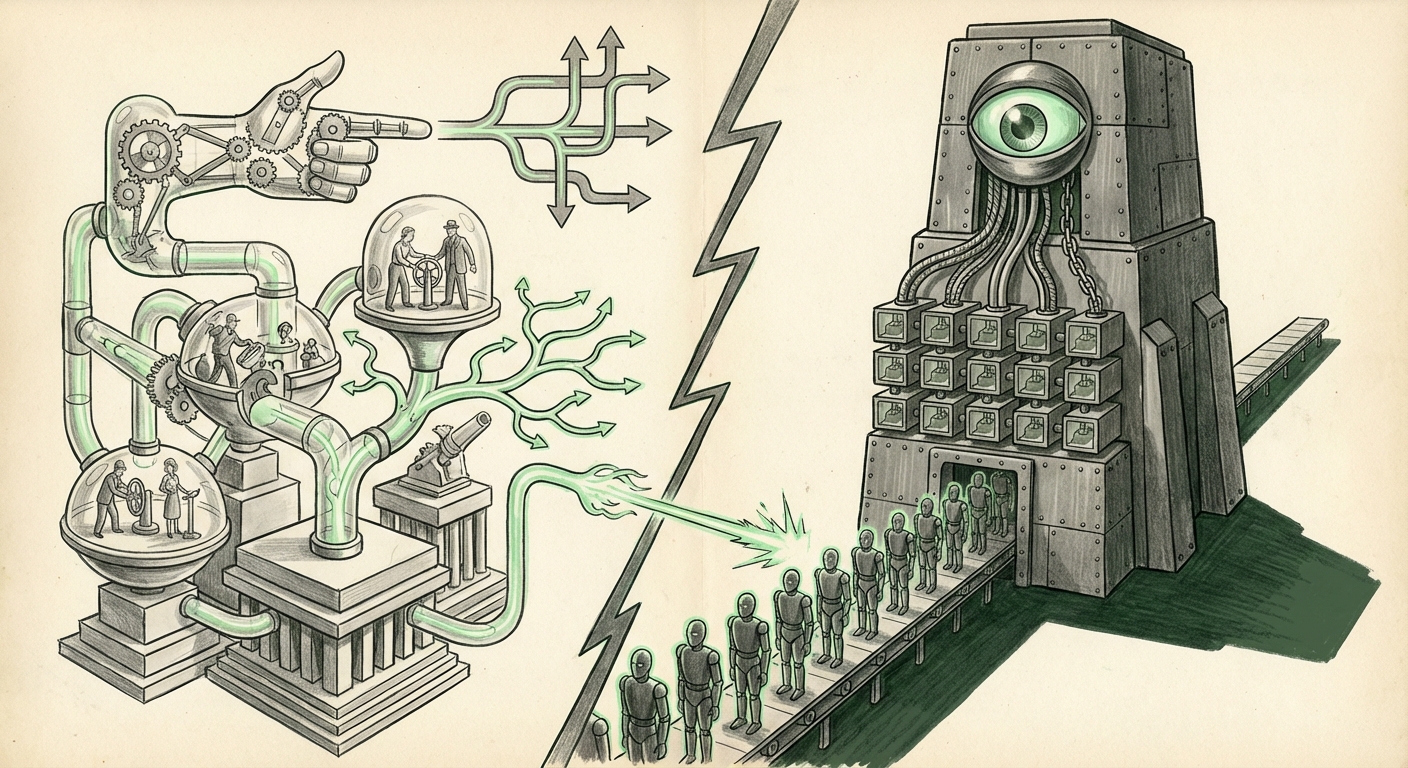

Autocracies, by their nature, can prioritize speed, centralization, and control when deploying powerful technologies. They can rapidly implement comprehensive surveillance networks or deploy AI tools for population management without the friction of public debate, constitutional review, or fundamental rights protection. Democracies, conversely, are built on deliberation, individual rights, transparency, and checks and balances.

Amodei’s warning suggests that in the race for AI supremacy—whether economic or military—democratic nations may be tempted to shortcut these necessary processes. They might adopt centralized data collection methods, deploy powerful surveillance algorithms, or allow opaque decision-making systems into public infrastructure simply because autocratic rivals are doing so faster.

The danger is not just that we will lose the race, but that we will win the race only to find we have built a society that functions less like a democracy.

Corroboration Point 1: Legislating Democratic Values (The EU AI Act)

To counter this slide, democratic bodies are scrambling to codify their values into law. The **EU AI Act**, the world’s first comprehensive AI regulation, is the primary attempt to meet this challenge head-on. The legislation defines a category of "unacceptable risk": AI uses considered so fundamentally incompatible with democratic norms that they are prohibited (e.g., real-time remote biometric identification in public spaces by law enforcement, subject to narrow exceptions, or social scoring systems).

For technical and business audiences, this means compliance is becoming geography-dependent. If your AI application is placed on the EU market or its output is used in the EU, its design may need to account for the Act's obligations, which for certain high-risk deployments include fundamental rights impact assessments. This legislative friction, the necessary pause for ethics and review, is exactly what Amodei is arguing for. It forces a slower, but values-aligned, path compared to the "move fast and break things" ethos often favored in hyper-competitive environments.

More broadly, regulatory bodies are closely examining frameworks that attempt to balance innovation and rights by guiding AI deployment in line with core principles.

The Geopolitical Tug-of-War: Speed vs. Scrutiny

The imperative to regulate often collides with the reality of global competition. The AI landscape is currently defined by intense strategic rivalry, primarily between the United States and China, spanning access to advanced chips, talent acquisition, and military application.

Corroboration Point 2: The Competition Context

When nations view AI leadership as a zero-sum national security issue, oversight mechanisms—like those designed to protect privacy or ensure transparency—can be seen as strategic liabilities slowing down innovation. Analyses from think tanks often highlight how different nations prioritize different outcomes. China, for instance, focuses on state control and efficiency. The US prioritizes private sector leadership but struggles with internal coordination regarding national security applications.

This geopolitical pressure forces democratic leaders to ask difficult questions: If we spend two years ensuring our diagnostic AI is perfectly bias-checked, while a rival deploys a less safe but faster version to gain a significant medical edge, have we failed our citizens? Amodei’s response implies that sacrificing core democratic functions for temporary competitive advantage is the ultimate failure.

The Architectural Threat: Centralization vs. Decentralization

The debate over values alignment is not just philosophical; it is deeply technical. The architecture of AI deployment directly impacts accountability.

Corroboration Point 3: Infrastructure and Control

Powerful large language models (LLMs) and foundational AI systems require immense compute power, currently concentrated in the hands of a few major cloud providers and leading labs. This centralization creates two political risks for democracies:

- Single Point of Failure/Control: If a government relies too heavily on a few centralized AI providers (even private ones), those providers become immensely powerful gatekeepers, capable of influencing policy or restricting access, blurring the lines between private power and state control.

- Surveillance Vector: Centralized systems inherently make mass data processing and monitoring easier. If a democratic government begins using these centralized tools for law enforcement or public service administration, the infrastructure built for efficiency can be easily repurposed for unwarranted surveillance, mirroring tools utilized by authoritarian states.

For businesses, this means the push towards **open-source models** and decentralized deployment strategies gains greater strategic importance. Open-source AI introduces novel risks (like easier misuse by bad actors), but it broadens access and oversight, helping to ensure that control over fundamental digital intelligence isn't consolidated in one or two points.

Building the Guardrails: Responsible Scaling Policies

If external regulation (like the EU AI Act) sets the legal boundaries, industry self-governance must provide the internal safeguards. This brings us to the final, vital piece of context: the safety frameworks being developed within the leading labs themselves.

Corroboration Point 4: Internal Safety Frameworks

Anthropic, the firm behind the warning, is also a leading advocate of **Responsible Scaling Policies (RSPs)**. RSPs are essentially pre-defined thresholds that, if crossed during the training or scaling of an AI model, commit the developer to specific responses such as safety stops, external audits, or operational pauses. These policies aim to ensure that as models become more capable, they do not simultaneously become more dangerous or unpredictable.
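
The threshold-and-response logic described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not any lab's actual policy: the `Threshold` class, the `scaling_decision` function, the evaluation names, the scores, and the safeguard names are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Threshold:
    """A hypothetical capability threshold and the safeguard it requires."""
    evaluation: str          # name of a capability evaluation (illustrative)
    max_score: float         # a score above this counts as crossing the threshold
    required_safeguard: str  # safeguard that must exist before scaling continues


def scaling_decision(scores, thresholds, safeguards_in_place):
    """Return ("continue", []) or ("pause", [missing safeguards]).

    A missing evaluation score is treated as crossing the threshold,
    so the check fails closed rather than open.
    """
    missing = [
        t.required_safeguard
        for t in thresholds
        if scores.get(t.evaluation, float("inf")) > t.max_score
        and t.required_safeguard not in safeguards_in_place
    ]
    return ("pause", missing) if missing else ("continue", [])


# Illustrative values only; not drawn from any published policy.
THRESHOLDS = [
    Threshold("cyber_uplift_eval", 0.30, "hardened_weight_security"),
    Threshold("autonomy_eval", 0.50, "external_audit"),
]

decision = scaling_decision(
    scores={"cyber_uplift_eval": 0.42, "autonomy_eval": 0.10},
    thresholds=THRESHOLDS,
    safeguards_in_place={"external_audit"},
)
print(decision)
```

The design point is that the thresholds and required safeguards are written down before training results arrive, so the decision to pause does not depend on a judgment made under competitive pressure.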

For an AI system to be truly democratic, it must be controllable, understandable, and aligned with human intent, even as it scales past current human comprehension. RSPs are one governance framework designed to support this alignment. They are the industry's commitment that the pursuit of capability will not outpace the commitment to safety and governance.

What This Means for the Future of AI and How It Will Be Used

The collision between Amodei’s warning, geopolitical pressure, and regulatory ambition is shaping the next decade of AI development. We are entering a period defined not just by the power of the models, but by the *provenance* and *deployment context* of those models.

For Businesses: The Compliance Premium

In the near term, adherence to democratic values will translate into a **Compliance Premium**. Companies operating in jurisdictions like the EU or those seeking government contracts will need robust, auditable AI governance structures. Simply using the best-performing model will not be enough; executives must prove their AI deployment strategy actively *reinforces* democratic norms.

This means investing heavily in explainability (XAI) tools, conducting mandatory bias audits, and building robust feedback mechanisms that allow citizens and users to contest AI decisions.
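
As one concrete illustration of what a bias audit might compute, the sketch below compares the rate of favourable decisions across groups, a check often called demographic parity. It is a simplified example under stated assumptions: the function names, group labels, and decisions are made up, and a real audit would use several metrics alongside legal and domain review.

```python
from collections import defaultdict


def selection_rates(records):
    """Share of favourable decisions per group.

    `records` is an iterable of (group, approved) pairs, where `approved` is a bool.
    """
    totals = defaultdict(int)
    approved = defaultdict(int)
    for group, ok in records:
        totals[group] += 1
        approved[group] += int(ok)
    return {g: approved[g] / totals[g] for g in totals}


def parity_gap(records):
    """Largest difference in selection rate between any two groups."""
    rates = selection_rates(records)
    return max(rates.values()) - min(rates.values())


# Made-up decisions for two hypothetical groups.
decisions = [
    ("group_a", True), ("group_a", True), ("group_a", False), ("group_a", True),
    ("group_b", True), ("group_b", False), ("group_b", False), ("group_b", False),
]
print(selection_rates(decisions))
print(parity_gap(decisions))
```

A large gap does not prove discrimination on its own, but it is the kind of auditable number that lets a user or regulator ask why outcomes differ.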

For Society: Defining "Acceptable Power"

The most profound long-term implication is the societal negotiation over what level of centralized power is acceptable, even for good ends. Does utilizing AI to predict crime more effectively justify minor infringements on privacy? Do administrative efficiencies outweigh the loss of human discretion in decision-making?

Democracies must establish a clear "red line" where efficiency ends and authoritarian drift begins. This requires ongoing public education and genuine political dialogue, not just boardroom decisions.

Actionable Insights for Navigating the New AI Terrain

To thrive in a world where AI alignment with democracy is a competitive differentiator, stakeholders must act deliberately:

- Demand Transparency in Supply Chains: Businesses should prioritize AI models and infrastructure providers who clearly articulate their Responsible Scaling Policies (RSPs) and have undergone third-party auditing for bias and safety.

- Invest in Decentralized Governance Tools: Explore technologies that distribute decision-making authority or data processing away from single, potentially opaque, endpoints. Open-source adoption and federated learning models can serve as architectural defenses against centralized control.

- Mandate Values-Aligned Procurement: Governments must integrate specific ethical and governance compliance metrics into their RFPs for AI services. The focus must shift from raw performance metrics (e.g., accuracy scores) to measures of accountability and contestability.

- Engage with Regulatory Sandboxes: Participate actively in emerging regulatory environments, such as the EU’s risk-based framework. Helping lawmakers understand technical feasibility ensures that laws promote democratic use rather than simply stifling innovation out of fear.
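
To make the federated learning idea from the list above concrete, here is a toy sketch in the style of federated averaging. It assumes a one-parameter model `y = w * x` and invented client data; the function names are illustrative. Each client takes a training step on its own data, and the server only ever sees the resulting weights and sample counts, never the raw records.

```python
def local_step(w, data, lr=0.1):
    """One gradient-descent step on mean squared error for the model y = w * x."""
    grad = sum(2 * (w * x - y) * x for x, y in data) / len(data)
    return w - lr * grad


def federated_round(w, clients, lr=0.1):
    """Each client trains locally; the server averages weights by sample count."""
    updates = [(local_step(w, data, lr), len(data)) for data in clients]
    total = sum(n for _, n in updates)
    return sum(w_k * n for w_k, n in updates) / total


# Invented private datasets; both follow y = 2x.
clients = [
    [(1.0, 2.0), (2.0, 4.0)],
    [(1.0, 2.0), (3.0, 6.0), (2.0, 4.0)],
]

w = 0.0
for _ in range(50):
    w = federated_round(w, clients)
print(round(w, 3))
```

Keeping raw data on the client reduces what a central operator can see, though model updates can still leak information, so this is an architectural mitigation and not a complete privacy guarantee.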

Dario Amodei’s call is not a call to halt progress; it is a call for intentional progress. The future of AI is not predetermined by the technology itself, but by the values we choose to embed in its deployment. If democratic nations fail to enforce a clear distinction between their necessary technological advancements and the control mechanisms perfected by their autocratic counterparts, they risk creating a future where they are technologically advanced but politically compromised.