The Great Chip Gambit: Why China’s 400,000 H200 Import Signals a New AI Reality

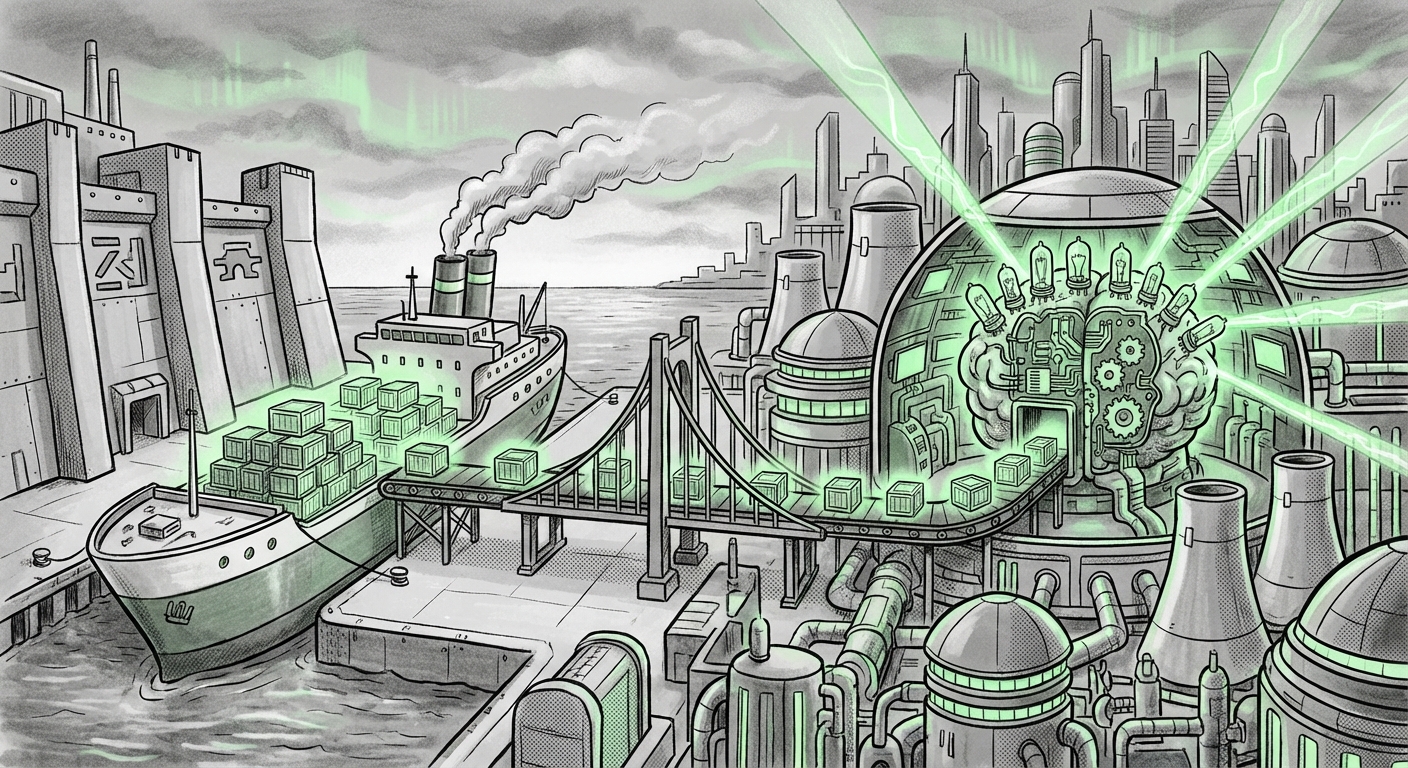

The digital world runs on computational power, and in the current era of Artificial Intelligence, that power is concentrated in specialized silicon, namely the GPUs designed by Nvidia. Recent reports that China has authorized the import of **400,000 Nvidia H200 AI chips** for its technology titans (ByteDance, Alibaba, and Tencent) describe far more than a large quarterly sales order. The approval is a signal of the current state of the global AI arms race, the pressure of geopolitical constraints, and the unyielding demand for cutting-edge processing capability.

For the casual observer, this is complex news involving trade policy and computer parts. But for analysts, engineers, and business leaders, this development reveals a fundamental truth: hardware access remains the choke point for global AI dominance. This article synthesizes the context surrounding this massive import, analyzes what the H200 specifically brings to the table, and explores the long-term implications for technological sovereignty.

The Geopolitical Tightrope: Navigating Export Controls

To understand the significance of receiving 400,000 H200s, one must first grasp the restrictive environment in which this deal was made. The United States, citing national security concerns, has implemented increasingly stringent export controls aimed at slowing China's advancement in frontier AI capabilities, particularly high-end model training. These rules, often updated by the U.S. Commerce Department's Bureau of Industry and Security (BIS), target chips that exceed specific performance thresholds—a direct hit at Nvidia’s top-tier accelerators.

The history of U.S. export controls on Nvidia AI chips bound for China, including the rules as they stood in December 2023, highlights how quickly this policy shifts. These restrictions typically focus on metrics like compute density and interconnect speeds. The approval for the H200 suggests one of two scenarios:

- The H200 is Just Below the Line: The chip may have been intentionally designed or configured by Nvidia to comply with the current U.S. regulatory limits, offering cutting-edge performance *without* crossing the specific metric that triggers an outright ban.

- A Strategic Bridge: Alternatively, the approval might be viewed by Washington as a necessary concession to prevent a total stall in crucial commercial sectors, provided that the chips are earmarked for specific corporate entities (like Alibaba Cloud) and not directly for sensitive military applications.

For policy analysts and executives, this approval is a momentary balancing act. It confirms that Beijing’s largest tech players cannot afford to be cut off entirely from the best available hardware, even as they are simultaneously pressured to develop domestic alternatives.

The Hardware Leap: Why the H200 Matters So Much

The H200 is not just an incremental update; it represents a significant boost in efficiency, especially for the most resource-intensive task in AI today: running massive Large Language Models (LLMs) for inference (generating responses).

A comparison of Nvidia's H200 and H100 specifications shows that the key difference lies in memory. The H100, the current workhorse, is powerful, but the H200 upgrades to faster, higher-capacity HBM3e memory, moving from 80GB to 141GB. That is roughly a 1.76x increase in memory capacity, and memory bandwidth also rises, from about 3.35 TB/s to about 4.8 TB/s (roughly 1.4x). Together, these gains are transformative for generative AI.
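The capacity and bandwidth ratios can be checked with a few lines of arithmetic. The sketch below assumes Nvidia's published headline figures for the SXM variants of each chip (80GB and about 3.35 TB/s for the H100, 141GB and about 4.8 TB/s for the H200). The dictionary and function names are illustrative only.

```python
# Headline memory specs for the SXM variants (assumed approximate figures).
H100 = {"memory_gb": 80, "bandwidth_tb_s": 3.35}
H200 = {"memory_gb": 141, "bandwidth_tb_s": 4.8}


def ratio(new: float, old: float) -> float:
    """Return how many times larger `new` is than `old`."""
    return new / old


capacity_ratio = ratio(H200["memory_gb"], H100["memory_gb"])
bandwidth_ratio = ratio(H200["bandwidth_tb_s"], H100["bandwidth_tb_s"])

print(f"capacity:  {capacity_ratio:.2f}x")   # about 1.76x
print(f"bandwidth: {bandwidth_ratio:.2f}x")  # about 1.43x
```

The two ratios differ, which is why it helps to state capacity and bandwidth separately rather than quoting a single multiplier.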

What does this mean in practical terms? Imagine that running a massive AI model is like consulting an entire library. The H100 can hold only so many shelves of books at a time, requiring frequent trips back to the main storage room (slower memory). The H200 can hold nearly double the shelves (more memory) right next to the reader, so the AI spends far less time waiting and much more time actually learning or responding. For companies like ByteDance, which run massive user-facing services like TikTok, faster inference translates directly to lower operational costs and snappier user experiences.
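The "time spent waiting" in the library analogy can be roughed out with a common back-of-envelope rule: when generating text one token at a time, the chip has to read every model weight from memory once per token, so memory bandwidth caps the token rate. The sketch below applies that rule under simplifying assumptions (a single request stream, weights only, no KV cache or batching). The 30-billion-parameter model is hypothetical, and the bandwidth figures are Nvidia's headline numbers.

```python
def max_decode_tokens_per_s(
    bandwidth_tb_s: float,
    params_billion: float,
    bytes_per_param: float = 2.0,  # 2 bytes per weight, i.e. 16-bit precision
) -> float:
    """Upper bound on single-stream decode rate if all weights are read once per token."""
    weight_bytes = params_billion * 1e9 * bytes_per_param
    bandwidth_bytes_per_s = bandwidth_tb_s * 1e12
    return bandwidth_bytes_per_s / weight_bytes


# Hypothetical 30B-parameter model; its 60 GB of weights fit on either chip.
h100_rate = max_decode_tokens_per_s(3.35, 30)
h200_rate = max_decode_tokens_per_s(4.8, 30)

print(f"H100 ceiling: {h100_rate:.1f} tokens/s")
print(f"H200 ceiling: {h200_rate:.1f} tokens/s")
print(f"speedup:      {h200_rate / h100_rate:.2f}x")
```

Real deployments batch many requests and will not hit these ceilings exactly, but the ratio between the two chips gives a feel for why memory bandwidth matters so much for inference cost.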

Securing 400,000 units—a substantial number for any single market outside of the US—is a direct investment in maintaining competitive parity on inference speed and capability against Western rivals.

The Domestic Imperative: The Race for Self-Sufficiency

While the H200 import represents a massive, immediate injection of capability, it simultaneously underscores a strategic vulnerability. If the world’s leading AI firms require foreign hardware to stay on the cutting edge, true technological autonomy remains out of reach.

This brings us to China's domestic semiconductor strategy and its push for AI self-sufficiency. China has poured immense resources into nurturing domestic champions, most notably Huawei with its Ascend AI chips (like the 910B). These domestic accelerators are rapidly improving and are highly effective within China’s controlled ecosystem.

However, comparative analyses reveal a persistent gap. While the Ascend 910B is closing the training performance gap with the older H100, it still lags behind the newer H200 and B200 parts in sheer scale and in ecosystem maturity (the software tools that make Nvidia chips easy to use).

The 400,000 H200 order is, therefore, a pragmatic admission: The domestic roadmap needs more time. These imports are a necessary "bridge" to ensure that China’s cloud providers and consumer internet giants do not fall critically behind in the race to deploy next-generation LLMs over the next 18-24 months.

Future Implications: What This Means for the AI Landscape

The greenlight for these imports creates distinct ripples across the global technology landscape:

1. For Chinese Tech Giants (ByteDance, Alibaba, Tencent)

Actionable Insight: Accelerated Deployment. These companies will immediately prioritize upgrading their data centers to maximize the H200's inference efficiency. This means faster rollout of sophisticated AI features across their entire product portfolio—from Alibaba's enterprise cloud solutions to Tencent's customer service bots and ByteDance's recommendation engines.

Future Trend: Hybrid Architecture. We will likely see these firms adopt a hybrid approach: using the imported H200s for the most demanding frontier models, while training less demanding or highly proprietary models on domestic Ascend chips to reduce overall dependency and hedge against future export shocks.

2. For U.S. Policymakers and Nvidia

Geopolitical Dilemma: Performance vs. Access. This sale validates that Nvidia can structure its product line to remain compliant with U.S. rules while maximizing global revenue. However, it also demonstrates the limits of export controls in completely stopping the flow of high-end performance. Policymakers must constantly recalibrate the "performance threshold" as chip design evolves.

Practical Implication: Market Capture. While competitors struggle with supply chain constraints, Nvidia capitalizes on this massive demand, solidifying its near-monopoly position. The ability of China’s giants to secure this volume means the competitive gap in *deployment* might shrink, even if the gap in *design* remains wide.

3. For the Global AI Ecosystem

The Infrastructure Arms Race Continues. The primary consequence is the ratcheting up of global competition. If China’s leading AI platforms are powered by the H200, any company or nation lagging in hardware acquisition risks falling behind in AI application sophistication. This emphasizes that AI success is no longer just about having smart engineers; it’s about controlling the supply of physical components.

Focus Shift to Software Optimization. As hardware becomes the primary bottleneck, software engineering efforts will increasingly focus on extreme optimization. Researchers will strive to build models that can run efficiently on older or less powerful hardware, mitigating the risk of being locked out of next-generation chips due to political or supply chain shocks.
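One widely used form of this optimization is quantization, which stores each model weight in fewer bits so a larger model fits on a smaller chip. The sketch below estimates the weight footprint of a hypothetical 70-billion-parameter model at three precisions and checks it against an 80GB card. The 80% headroom factor, which leaves room for activations and caches, is an assumption for illustration rather than a vendor figure.

```python
# Bytes needed to store one weight at each precision.
BYTES_PER_PARAM = {"fp16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_footprint_gb(params_billion: float, precision: str) -> float:
    """Approximate weight size in GB (1 GB = 1e9 bytes)."""
    return params_billion * BYTES_PER_PARAM[precision]


def fits(params_billion: float, precision: str, memory_gb: float,
         headroom: float = 0.8) -> bool:
    """True if the weights fit within `headroom` of the card's memory (assumed margin)."""
    return weight_footprint_gb(params_billion, precision) <= memory_gb * headroom


# Hypothetical 70B-parameter model on an 80GB accelerator.
for precision in BYTES_PER_PARAM:
    size = weight_footprint_gb(70, precision)
    print(f"{precision}: {size:.0f} GB, fits on 80GB card: {fits(70, precision, 80)}")
```

Under these assumptions only the 4-bit version fits on a single 80GB card, which illustrates how software choices can partly offset limited access to newer hardware. Lower precision can cost some model quality, so the trade-off has to be measured per model.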

Conclusion: A Necessary Bridge in a Divided World

The greenlight for 400,000 Nvidia H200 imports is a pragmatic, powerful snapshot of today’s technology landscape. It is a strategic maneuver by China to ensure its core tech industry remains competitive in the critical AI race, accepting foreign dependence in the short term for high-speed capability.

For the future of AI, this means the competition will be fought fiercely on two fronts: the geopolitical front, determining who controls the faucet of advanced chips, and the engineering front, determining who can best utilize the chips they possess. This massive order does not signal a thawing of tensions, but rather a recognition that for now, the shared global pursuit of advanced AI capability requires, ironically, collaboration mediated by geopolitical constraint.