The Hip of the Machine: How Figure AI’s Unified Network Redefines Embodied AI

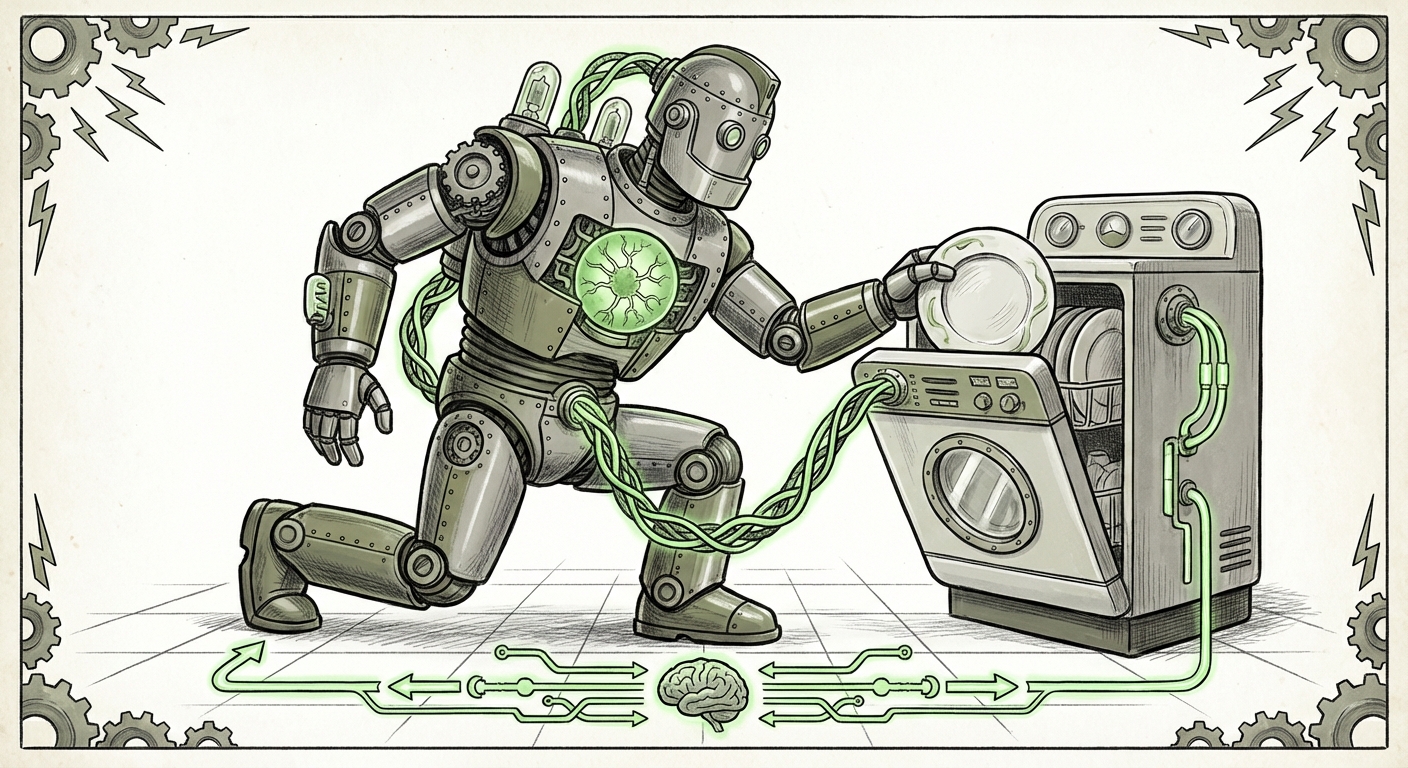

The world of robotics is rapidly moving from specialized automation to general-purpose intelligence that mimics human capability. The recent demonstration by Figure AI—where their humanoid robot, controlled by a single, cohesive neural network, efficiently loaded a dishwasher—is not just a neat parlor trick. It is a seismic indicator that the foundational barriers to truly *embodied AI* are collapsing. For too long, robots have been brilliant at specific, repetitive tasks. Now, they are learning to reason, adapt, and apply human-like dexterity in unpredictable environments.

As an AI technology analyst, I view this development as crossing a critical threshold. It signals that Large Language Models (LLMs) and Large Multimodal Models (LMMs) are being wired directly into physical motor control rather than sitting above it as a separate planning layer. This is what moves us beyond simple "pick-and-place" programming and into the realm of adaptable, general-purpose workers.

The Core Breakthrough: A Single Mind for Physical Action

What makes the Figure AI demonstration so compelling is the claim of control via a single neural network. Imagine teaching a child to clean up. You don't give them separate instructions for grasping, lifting, avoiding obstacles, and placing; they integrate all sensory data (sight, touch, balance) into one continuous decision loop. This is what Figure AI appears to be achieving.

This contrasts sharply with older robotics architectures, which relied on a long pipeline: a computer vision module outputs object coordinates → a motion planning module calculates a path → a low-level controller executes motor commands. If any step failed, the whole process broke. Integrating powerful generative AI, the kind that powers ChatGPT, into the control loop changes the game:

- Semantic Understanding: The robot doesn't just see pixels; it understands the concept of a "dirty plate," a "handle," and the "top rack." This high-level reasoning comes directly from the foundation model.

- Zero-Shot Generalization: Because the underlying model is pretrained on language, it can in principle carry that understanding over to novel objects or situations it has never physically encountered. If you told it, "Put the oddly shaped ceramic item there," it could plausibly work out how to grasp and place it.

- Dynamic Compensation: When the robot put its "hip into" the task, it was actively balancing and adjusting its grip force on the fly. This dynamic stability is far easier to manage when the planning and execution are unified within one powerful transformer architecture.
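The contrast between a staged pipeline and a single network can be made concrete with a toy sketch. Everything below is hypothetical and for illustration only: `Observation`, `detect_target`, `plan_waypoints`, `modular_pipeline`, and `unified_policy` are invented names, and the "network" is a single linear layer, not Figure AI's actual architecture. The point is structural: in the pipeline, a failure in the vision stage stops everything downstream, while the unified policy is one mapping from raw inputs to joint commands.

```python
from dataclasses import dataclass
from typing import List, Optional, Sequence


@dataclass
class Observation:
    pixels: Sequence[float]        # stand-in for a camera frame
    joint_angles: Sequence[float]  # current joint positions (radians)
    instruction: str               # natural-language command


def detect_target(obs: Observation) -> Optional[float]:
    """Vision stage (hypothetical): returns a target joint angle, or None on failure."""
    if not obs.pixels:
        return None
    return sum(obs.pixels) / len(obs.pixels)


def plan_waypoints(start: float, target: float, steps: int = 4) -> List[float]:
    """Planning stage (hypothetical): linear interpolation from start to target."""
    return [start + (target - start) * i / steps for i in range(1, steps + 1)]


def modular_pipeline(obs: Observation) -> List[float]:
    """Vision -> planning -> control, each stage depending on the previous one."""
    target = detect_target(obs)
    if target is None:
        raise RuntimeError("vision stage failed, so planning and control cannot run")
    waypoints = plan_waypoints(obs.joint_angles[0], target)
    # Control stage: command the first joint toward the first waypoint, hold the rest.
    return [waypoints[0]] + list(obs.joint_angles[1:])


def unified_policy(obs: Observation, weights: Sequence[Sequence[float]]) -> List[float]:
    """Single mapping from raw inputs to joint commands (toy linear layer)."""
    word_count = float(len(obs.instruction.split()))
    features = list(obs.pixels) + list(obs.joint_angles) + [word_count]
    return [sum(w * f for w, f in zip(row, features)) for row in weights]
```

In a real system the weights would be learned end to end from demonstrations rather than written by hand, which is what lets perception, planning, and control adapt to each other.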

This trend of fusing language intelligence with physical action is the heart of Embodied AI. We must look at corroborating trends in the AI research community to understand the scope of this shift. The emphasis is moving from *how smart the AI is* to *how effectively that intelligence can interact with the real world*.

The Competitive Crucible: Benchmarking Dexterity

No technological leap occurs in a vacuum. Figure AI’s showcase directly challenges and validates the current pace of the humanoid robotics race. When we look at the competitive landscape, we see a concerted, well-funded push across the industry:

Tesla’s Optimus, Agility Robotics’ Digit, and the continued evolution of Boston Dynamics’ platforms all represent parallel efforts to solve general-purpose manipulation. However, the Figure demonstration prioritizes fine dexterity and intuitive interaction—skills crucial for household or light industrial tasks (like putting away dishes)—over sheer speed or brute force.

This competition is forcing rapid iteration on both hardware efficiency and software capability. The question is no longer *if* a robot can perform a task, but *how robustly* it can perform it against variations in lighting, object placement, and unexpected movements. The progress shown by Figure AI suggests that leading teams are approaching parity on certain cognitive tasks, which shifts the focus to durability and cost-effectiveness.

Hardware Context: Powering Human-Level Motion

The sophistication of the AI model is only half the battle; the hardware must be able to faithfully execute the network's commands. A major point of analysis here is the actuation system. For a robot to apply force subtly, like setting down a thin plate without shattering it, it requires highly responsive actuators. The industry is currently weighing powerful hydraulics (which are complex and messy) against increasingly capable, battery-friendly electric servo motors. Achieving human-level dexterity, complete with the ability to use body momentum (the "hip" leverage), suggests significant advances in electric motor torque density and torque control, allowing for smoother, more energy-efficient movement than previous generations.
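To illustrate what "torque control" means in practice, here is a minimal sketch of a compliant joint controller: a spring-damper (PD) torque law with a hard torque limit, simulated on a single joint. This is a textbook technique, not a description of Figure AI's actuators, and every name and number (`compliant_torque`, `simulate`, the stiffness, damping, inertia, and torque limit) is an assumption chosen for illustration.

```python
def compliant_torque(q_des: float, q: float, q_dot: float,
                     stiffness: float = 20.0, damping: float = 2.0,
                     max_torque: float = 5.0) -> float:
    """Spring-damper torque toward q_des, clamped to +/- max_torque (N*m)."""
    tau = stiffness * (q_des - q) - damping * q_dot
    return max(-max_torque, min(max_torque, tau))


def simulate(q_des: float, steps: int = 2000, dt: float = 0.001,
             inertia: float = 0.05) -> tuple:
    """Drive a single frictionless joint from rest at 0 rad toward q_des.

    Returns the final angle and the peak absolute torque applied.
    """
    q, q_dot, peak = 0.0, 0.0, 0.0
    for _ in range(steps):
        tau = compliant_torque(q_des, q, q_dot)
        peak = max(peak, abs(tau))
        # Semi-implicit Euler: update velocity first, then position.
        q_dot += (tau / inertia) * dt
        q += q_dot * dt
    return q, peak


final_angle, peak_torque = simulate(q_des=0.5)
```

The clamp is the part that matters for delicate handling: however large the position error, the joint never pushes harder than the limit, so contact with a plate or a person stays bounded.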

The Road Ahead: From Kitchen to Factory Floor

While the dishwasher task is excellent for public relations and proof-of-concept, the real economic value lies in deploying these systems where labor shortages are acute and tasks are varied. This transition—from demonstration to deployment—introduces immediate practical challenges that analysts must monitor.

The Commercialization Hurdle

For Figure AI and its peers, the immediate future hinges on shifting focus from the lab environment to the messy reality of the warehouse or factory floor. A dishwasher demonstration occurs under controlled lighting with known objects. A real warehouse has dust, grease, shifting stacks, and varying temperatures.

Key considerations for commercial readiness include:

- Safety Certification: How does a general-purpose robot coexist safely with human workers? The ability to stop instantly and gently handle delicate items is paramount.

- Durability and Maintenance: How long can these complex electromechanical systems run 24/7 before requiring expensive repairs? The cost of ownership must rapidly decrease.

- Data Feedback Loops: Every successful deployment must feed data back into the centralized LMM to improve the baseline intelligence for all future robots. This network effect is where companies like Figure AI could gain a decisive advantage.
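The data feedback loop in the last point can be sketched as a shared episode log that every robot in a fleet writes to. This is a hypothetical illustration, not any vendor's actual pipeline: `Episode`, `FleetDataset`, and the 0.8 threshold are invented for the example. It records outcomes per task and flags the tasks where the fleet is weakest, which is where additional training data would be most useful.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class Episode:
    robot_id: str
    task: str
    success: bool


class FleetDataset:
    """Central log of episodes uploaded by every robot in the fleet."""

    def __init__(self) -> None:
        self._episodes: List[Episode] = []

    def upload(self, episode: Episode) -> None:
        self._episodes.append(episode)

    def success_rates(self) -> Dict[str, float]:
        """Fraction of successful episodes per task, pooled across robots."""
        totals: Dict[str, int] = defaultdict(int)
        wins: Dict[str, int] = defaultdict(int)
        for ep in self._episodes:
            totals[ep.task] += 1
            wins[ep.task] += int(ep.success)
        return {task: wins[task] / totals[task] for task in totals}

    def tasks_needing_data(self, threshold: float = 0.8) -> List[str]:
        """Tasks whose pooled success rate falls below the threshold."""
        rates = self.success_rates()
        return sorted(task for task, rate in rates.items() if rate < threshold)
```

Because the log is pooled, an improvement learned from one site's episodes benefits every robot that later receives the updated model, which is the network effect described above.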

For businesses, the initial investment in these general-purpose robots will be high, likely targeting complex logistics tasks first—sorting mixed pallets, handling irregular packaging, or performing maintenance in confined spaces—before moving into general assembly lines.

Implications for AI and the Future of Work

This leap in embodied intelligence signals several major shifts in the trajectory of AI development:

1. The Primacy of Multimodality

The era where LLMs only dealt with text is over. The ability to ingest video, process tactile feedback, and generate motor commands means that the next generation of AI is fundamentally *multimodal*. Understanding the world requires sight, touch, and language concurrently. Figure AI’s architecture is a tangible representation of this necessary evolution.

2. Democratization of Complex Robotics

Historically, automating a complex assembly line required months of specialized robotic programming. If foundation models can interpret high-level natural language commands, the barrier to entry for deploying advanced robotics plummets. A manager simply describes the new task, and the robot, leveraging its generalized understanding, adapts its behavior. This is the key to **general-purpose manipulation**.

3. Reshaping Labor Demand

The societal implication is profound. While early automation targeted repetitive manual labor (assembly line workers), general-purpose humanoid robots target tasks requiring cognitive flexibility, such as stocking shelves, sorting recycling, or even assisting the elderly. This necessitates a serious, proactive approach to workforce retraining. We are moving toward a future where human value is centered on creativity, complex problem-solving, strategic oversight, and managing the robotic workforce itself.

Actionable Insights for Leaders

For executives and strategists, ignoring the rapid pace of embodied AI is no longer an option. The following insights should guide immediate strategy:

- Identify "Cognitive Load" Tasks: Instead of looking for simple, repetitive tasks, analyze which current human jobs require high levels of on-the-fly decision-making regarding handling diverse objects. These are the first targets for successful humanoid deployment.

- Establish AI Partnerships Early: If your company relies on internal data or specialized environments, begin collaborating with leading robotics firms *now*. Integrating your proprietary operational data into their foundation models will give you a competitive moat when the technology matures.

- Develop a "Robot Management" Skillset: The new skill gap won't be in programming robots, but in training, auditing, and overseeing fleets of autonomous, general-purpose agents. Invest in internal talent capable of translating business needs into precise, safe AI prompts and performance standards.

Figure AI’s demonstration, with the robot putting its "hip into" the dishwasher, is a fitting metaphor for an industry putting its full weight (hardware, software, and funding) into solving problems in the physical world. We are witnessing the transition from specialized tools to versatile partners, and this moment will define the next decade of industrial and domestic technology.