The Inference Economy: Why vLLM and SGLang Are the Unsung Heroes of Enterprise AI

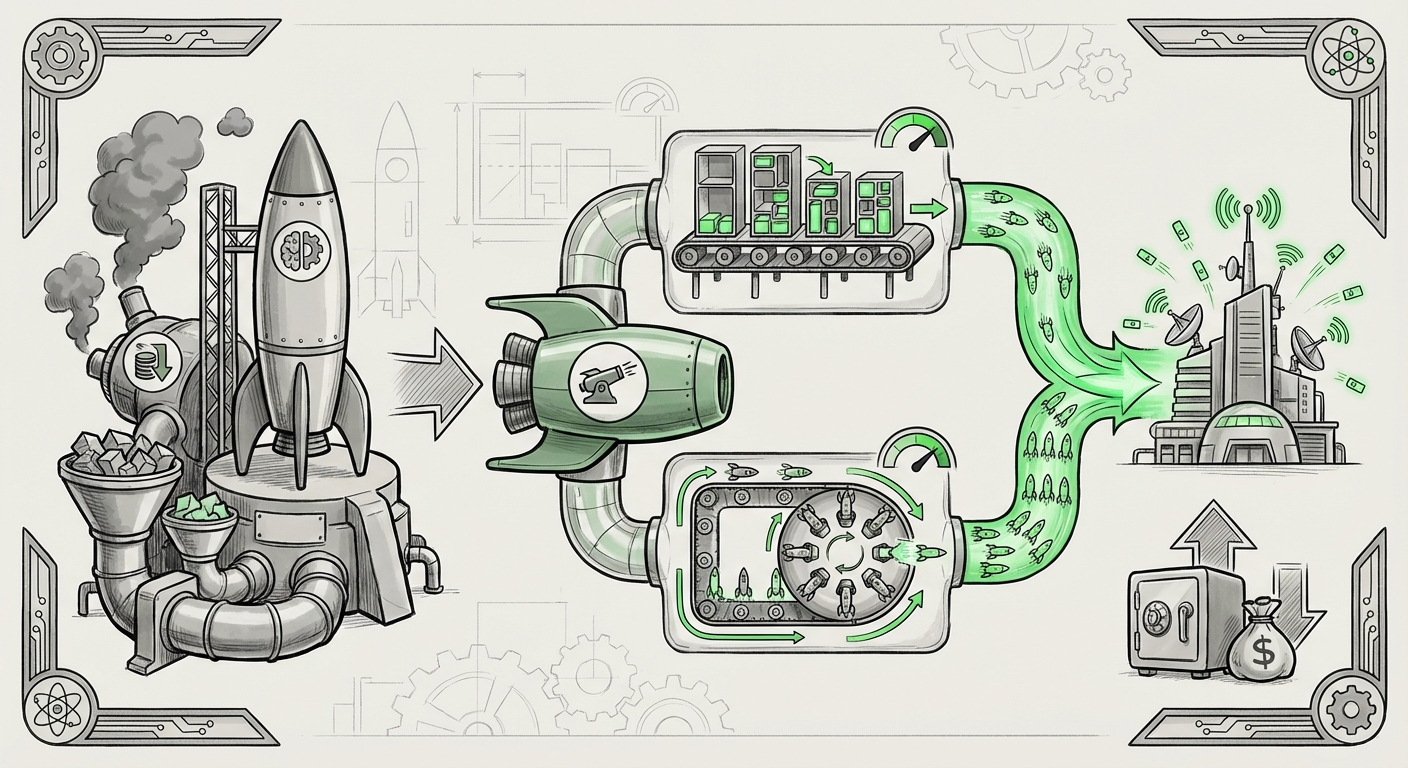

For the past few years, the narrative of Artificial Intelligence has been dominated by headlines about monumental model training runs. We’ve obsessed over parameters, training data size, and the astronomical costs of teaching foundation models like GPT-4 or Llama how to "think." This phase was necessary—it built the engines of the AI revolution.

However, the industry is now hitting a critical inflection point. The conversation is fundamentally shifting from building the rocket to keeping the rocket in flight. As enterprises flood the market with AI-powered products—chatbots, code assistants, document processors—the true bottleneck and cost center is no longer training; it is inference: the act of running the model to generate a response.

This shift explains the quiet but profound significance of recent developments centered around highly specialized inference frameworks, notably the open-source projects vLLM and SGLang, and their emerging commercial counterparts. These tools aren't just incremental improvements; they represent the next major battleground for achieving scalable, affordable, and high-quality enterprise AI.

The Inference Wall: Why Standard Serving Fails the Enterprise Test

To appreciate the value of vLLM and SGLang, we must first understand the challenge they solve. Running a Large Language Model (LLM) is computationally demanding and depends heavily on scarce, expensive GPU memory. In a naive deployment, requests are processed one at a time or in rigid batches, so the GPU often sits partly idle while it waits for the next request or for the slowest request in a batch to finish.

Imagine a busy highway where every car has to wait for the previous car to completely clear the road before it can even start driving. This is inefficient.

Traditional serving methods often suffer from two major inefficiencies when dealing with real-world, high-concurrency traffic:

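- Memory Fragmentation: Because models generate text token by token, the final length of a response is unknown when the request starts. Older serving systems therefore reserve one contiguous region per request, sized for the maximum possible sequence length, and most of that reservation goes unused whenever the actual output is shorter.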

- Low Throughput: When requests are served one at a time or in fixed batches, the GPU is underused. Short requests wait on long ones, latency rises for users, and operational costs (OpEx) per request climb for the business.
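
To put a rough number on the memory problem, here is a minimal sketch of how much KV cache a worst-case reservation can waste. The model shape (32 layers, 32 KV heads, head dimension 128, 16-bit values) and the request lengths are illustrative assumptions, not measurements from any particular system.

```python
def kv_bytes_per_token(num_layers, num_kv_heads, head_dim, bytes_per_elem=2):
    """Bytes of KV cache one token occupies (the factor 2 covers keys and values)."""
    return 2 * num_layers * num_kv_heads * head_dim * bytes_per_elem


def reservation_waste(actual_lengths, max_len, per_token_bytes):
    """Compare worst-case reservation against what the requests really used."""
    reserved = len(actual_lengths) * max_len * per_token_bytes
    used = sum(actual_lengths) * per_token_bytes
    return reserved, used, 1 - used / reserved


# Hypothetical model shape and request lengths, chosen only for illustration.
per_token = kv_bytes_per_token(num_layers=32, num_kv_heads=32, head_dim=128)
reserved, used, wasted = reservation_waste([200, 500, 1200, 100], 2048, per_token)

print(f"KV cache per token: {per_token / 2**20:.2f} MiB")
print(f"Reserved: {reserved / 2**30:.2f} GiB, used: {used / 2**30:.2f} GiB")
print(f"Share of reserved memory never used: {wasted:.1%}")
```

Under these assumed numbers, roughly three quarters of the reserved KV memory is never touched, which is the kind of gap block-based allocation is meant to close.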

This reality directly impacts business viability. If the cost to serve 1,000 daily users is prohibitive, your brilliant AI product remains a costly experiment rather than a mainstream solution. Training is largely a one-time expense, while serving costs grow with every user and every request, so for any application with significant adoption, cumulative serving costs can quickly outpace the initial training bill.

The Technical Revolution: Paged Attention and Continuous Batching

vLLM and SGLang tackle these inefficiencies head-on using sophisticated memory management techniques borrowed from operating systems, bringing order to the chaos of LLM generation.

The Magic of Paged Attention (vLLM's Foundation)

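The core breakthrough popularized by vLLM is Paged Attention (styled PagedAttention by the project). This technique rethinks how an inference server manages the Key-Value (KV) cache: the per-request memory that stores the attention keys and values for every token processed so far, prompt and generated output alike, so they do not have to be recomputed at each step.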

Instead of dedicating one large, contiguous block of memory for every request (like reserving a whole parking lot for one car), Paged Attention breaks the KV cache into smaller, fixed-size blocks, much like virtual memory in a computer OS. These blocks don't need to be next to each other in physical memory.

For the non-technical audience: This is like having storage bins for LEGO bricks. You don't need a single, giant table to hold all the pieces for one project; you can store pieces efficiently in many smaller, dedicated bins across your workspace, maximizing the use of space.

This allows inference servers to pack many more user requests onto a single GPU, leading to massive improvements in throughput—the number of tokens served per second.
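
The block-table idea can be sketched in a few lines. This is a toy allocator written for illustration, not vLLM's implementation: the class name `PagedKVAllocator`, its methods, and the block size of 4 are all hypothetical. It only tracks which physical block holds which token; a real engine would also store the key and value tensors in those blocks.

```python
class PagedKVAllocator:
    """Toy block-table allocator: maps each request's tokens to fixed-size blocks."""

    def __init__(self, num_blocks, block_size=16):
        self.block_size = block_size
        self.free_blocks = list(range(num_blocks))  # physical block ids
        self.block_tables = {}  # request id -> list of physical block ids
        self.lengths = {}       # request id -> number of tokens stored

    def append_token(self, req_id):
        """Reserve space for one more token, grabbing a new block only when needed."""
        table = self.block_tables.setdefault(req_id, [])
        length = self.lengths.get(req_id, 0)
        if length == len(table) * self.block_size:  # current blocks are full
            if not self.free_blocks:
                raise MemoryError("no free KV blocks")
            table.append(self.free_blocks.pop())
        self.lengths[req_id] = length + 1

    def locate(self, req_id, token_idx):
        """Translate a logical token position into (physical block, offset)."""
        block = self.block_tables[req_id][token_idx // self.block_size]
        return block, token_idx % self.block_size

    def free(self, req_id):
        """Return a finished request's blocks to the pool."""
        self.free_blocks.extend(self.block_tables.pop(req_id, []))
        self.lengths.pop(req_id, None)


alloc = PagedKVAllocator(num_blocks=4, block_size=4)
for _ in range(5):  # two requests grow in lockstep, one token at a time
    alloc.append_token("a")
    alloc.append_token("b")
print(alloc.block_tables)  # each request's blocks need not be adjacent
```

Because memory is claimed one block at a time, a request can waste at most one partially filled block, and blocks released by a finished request are immediately reusable by any other.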

The Power of Continuous Batching (and SGLang's Edge)

Both vLLM and SGLang pair this memory management with highly optimized continuous batching. Traditional (static) batching waits for every request in a fixed group to finish before starting the next group. Continuous batching instead schedules at the level of individual decoding steps, admitting a new request as soon as a running one completes. SGLang's distinctive addition is RadixAttention, which reuses the KV cache across requests that share a common prompt prefix.

When combined with Paged Attention, this creates a system in which GPU utilization can stay high under sustained load, cutting queueing delay and lowering the effective cost per query. When these optimized engines are compared against standard serving setups such as a default Hugging Face pipeline, published benchmarks have reported throughput gains of 10x or more, although the result depends heavily on batch size, model size, and workload. This technical advantage is the primary driver behind the commercialization of these frameworks.
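
The scheduling difference is easy to see in a small simulation. The sketch below counts decoding steps for both strategies under simplifying assumptions: every request is already queued, each step produces one token per active request, and all output lengths are positive. The function names and the workload are made up for illustration.

```python
from collections import deque


def static_batching_steps(output_lengths, batch_size):
    """Each fixed group runs until its longest request finishes."""
    steps = 0
    for i in range(0, len(output_lengths), batch_size):
        steps += max(output_lengths[i:i + batch_size])
    return steps


def continuous_batching_steps(output_lengths, batch_size):
    """Free slots are refilled from the queue before every decoding step."""
    queue = deque(output_lengths)
    active = []  # remaining tokens for each running request
    steps = 0
    while queue or active:
        while queue and len(active) < batch_size:
            active.append(queue.popleft())
        steps += 1
        # Requests that just produced their last token leave the batch.
        active = [remaining - 1 for remaining in active if remaining > 1]
    return steps


# Hypothetical workload: two long responses mixed with six short ones.
lengths = [10, 2, 2, 2, 10, 2, 2, 2]
print("static:", static_batching_steps(lengths, batch_size=4))
print("continuous:", continuous_batching_steps(lengths, batch_size=4))
```

For this workload the static scheduler needs 20 steps and the continuous one needs 12, because short requests no longer hold their slots hostage until the longest request in their group completes.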

Corroboration: The Market Demands Optimization

The emergence of commercial offerings around vLLM and SGLang is not arbitrary; it confirms several vital market trends:

1. The Enterprise Production Bottleneck

Industry analysts repeatedly point out that the primary hurdle for scaling AI adoption isn't talent or model availability; it is infrastructure management and cost control. Enterprises are moving beyond initial proofs of concept and trying to serve millions of inferences daily. This transition from hobby project to reliable utility exposes every inefficiency in the serving stack. The volume of discussion around enterprise LLM deployment challenges points to a market that wants stable, cost-effective MLOps solutions with guaranteed performance SLAs (Service Level Agreements).

2. The Inference Arms Race

The competitive landscape, visible in the frequent comparisons of vLLM, SGLang, and TGI (Hugging Face's Text Generation Inference), shows that the industry treats specialized inference servers as the superior option. Established platforms such as NVIDIA's Triton Inference Server are also evolving to incorporate techniques like continuous batching. However, frameworks born purely out of open-source optimization research (like vLLM) often lead the charge on novel memory efficiencies, forcing the entire ecosystem to adapt.

3. The Economic Pivot

If training a state-of-the-art (SOTA) model costs tens of millions of dollars, serving it to millions of users over three years can cost hundreds of millions. Long-term financial models that compare training and inference spending point the same way: inference dominates OpEx. Therefore, any tool that can squeeze 50% more requests out of the same $20,000 GPU becomes a mission-critical piece of software, justifying significant investment in its commercial support and integration.
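
The arithmetic behind that 50% figure is worth spelling out. The sketch below uses a hypothetical hourly GPU cost and hypothetical request rates; only the ratio between the two runs matters.

```python
def cost_per_million_requests(gpu_hourly_cost, requests_per_second):
    """Serving cost per one million requests on a single, fully loaded GPU."""
    requests_per_hour = requests_per_second * 3600
    return gpu_hourly_cost / requests_per_hour * 1_000_000


# Assumed figures for illustration: $2.00 per GPU-hour, 10 vs 15 requests/second.
baseline = cost_per_million_requests(gpu_hourly_cost=2.0, requests_per_second=10.0)
optimized = cost_per_million_requests(gpu_hourly_cost=2.0, requests_per_second=15.0)
savings = 1 - optimized / baseline

print(f"baseline:  ${baseline:.2f} per million requests")
print(f"optimized: ${optimized:.2f} per million requests")
print(f"savings:   {savings:.1%}")
```

Serving 50% more requests on the same hardware cuts the cost per request by one third (1 - 1/1.5), regardless of the absolute prices assumed.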

Implications: What This Means for the Future of AI

The dominance of efficient inference frameworks changes the calculus for AI development across the board:

For the Technical Architect: Abstraction and Specialization

ML Engineers will increasingly focus on two distinct phases: model specialization (fine-tuning/RAG integration) and deployment infrastructure. They will expect off-the-shelf inference solutions that handle the complexity of parallel processing, fault tolerance, and optimized kernel execution automatically. The need to manually tweak CUDA kernels for token generation will diminish, replaced by leveraging powerful, stable commercial inference APIs built on top of vLLM or SGLang.

For the Business Leader: Democratization of Scale

Cost is the ultimate gatekeeper to democratization. When inference becomes dramatically cheaper, smaller companies and niche applications can deploy LLMs that were previously exclusive to mega-corporations. This lowers the barrier to entry for creating specialized AI services, fostering a boom in domain-specific AI agents that can afford to run 24/7.

For Society: Faster, More Accessible AI

Faster inference means lower latency. Lower latency means AI feels more instantaneous, more like real-time conversation. This improvement in user experience is crucial for embedding AI into critical workflows—from real-time medical diagnosis assistants to instant customer support resolutions. Efficiency fuels availability, making powerful AI accessible to more people globally.

Actionable Insights for Navigating the Inference Economy

For organizations deploying AI today, understanding and integrating these frameworks is not optional—it is essential for survival in a competitive market.

- Audit Current Serving Costs: If you are using vanilla Hugging Face pipelines or basic deployment servers, calculate your current GPU utilization rate. If it’s below 70% during peak hours, you are almost certainly leaving significant money on the table.

- Prioritize Framework Integration: Whether you use the open-source vLLM directly or subscribe to a managed service that uses SGLang under the hood, ensure your MLOps pipeline is built around these new paradigms (Paged Attention/Continuous Batching).

- Factor OpEx into Model Selection: When choosing between two models of similar performance, the one that runs more efficiently on specialized inference servers is the *better* model for production deployment, regardless of its training cost. Efficiency is the new performance metric.

- Look for Commercial Stability: While open-source provides innovation, commercial offerings built on these technologies provide enterprise guarantees around uptime, security patching, and advanced features like distributed serving, which are non-negotiable for large deployments.
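
As a starting point for the audit suggested in the first item above, here is a minimal sketch that summarizes utilization samples against the 70% threshold. The function name and the sample values are hypothetical; in practice the samples would come from whatever monitoring you already run, and reported GPU utilization is a coarse signal that is best read alongside throughput and latency metrics.

```python
from statistics import mean


def audit_utilization(samples_pct, threshold_pct=70.0):
    """Summarize peak-hour GPU utilization samples against a target threshold."""
    if not samples_pct:
        raise ValueError("need at least one utilization sample")
    average = mean(samples_pct)
    below = sum(1 for s in samples_pct if s < threshold_pct) / len(samples_pct)
    return {
        "mean_pct": average,
        "share_below_threshold": below,
        "underutilized": average < threshold_pct,
    }


# Hypothetical peak-hour samples, in percent.
report = audit_utilization([35, 50, 80, 65])
print(report)
```

A result flagged as underutilized does not prove money is being wasted, but it is a reasonable trigger for benchmarking the same workload on an engine with paged KV memory and continuous batching.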

The era of simply throwing more GPUs at an inference problem is ending. The future belongs to the architects who can harness computational density through intelligent software. vLLM and SGLang are not just interesting side projects; they are the foundational technology driving the next phase of generative AI scaling.