The Agent Revolution: Why Karpathy’s Pivot Confirms the Future of AI-Driven Coding

In the fast-moving world of Artificial Intelligence, skepticism often precedes breakthroughs. Few figures command more attention regarding deep technical shifts than Andrej Karpathy, former head of AI at Tesla and a widely respected voice in deep learning. His recent, stark reversal on AI coding agents—from dismissing them just months ago to now using them for 80% of his personal coding workflow—is not a mere change of mind. It is a powerful bellwether indicating that the technology has crossed a crucial chasm between research novelty and indispensable utility.

For everyone from individual developers to Fortune 500 CTOs, understanding Karpathy’s pivot is key to grasping the next seismic shift in software creation. This isn't about better autocomplete; this is about the emergence of the true **AI Agent**.

The Skeptic's Conversion: Why Agents Now "Work"

Three months ago, when Karpathy stated that AI agents "just don't work," he was likely benchmarking them against the requirements of a senior engineer: solving ambiguous, multi-step problems that require context retention, error correction, and interaction with a live codebase environment. Early attempts often failed on the second or third step of a complex task, collapsing under the weight of planning.

The change since then is startling. Karpathy now describes agentic coding as the "biggest change to my basic coding workflow in ~2 decades," which suggests that the current generation of tools has made real progress on three core challenges:

- Robust Planning: The ability to break a high-level English instruction into sequential, manageable sub-tasks without human intervention.

- Execution and Feedback Loops: Agents can now run code, observe compilation errors, interpret tracebacks, and autonomously debug their own output—a crucial step toward genuine autonomy.

- Context Management: Maintaining awareness of the entire project structure and relevant documentation across long sessions.
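The execution-and-feedback loop described above can be sketched in a few lines. This is a minimal illustration, not how any particular agent product works: `agent_loop`, `run_candidate`, `scripted_propose`, and `check_add` are hypothetical names, and the scripted proposer stands in for a real model call so the example runs on its own.

```python
import traceback
from typing import Callable, List, Optional, Tuple


def run_candidate(source: str, check: Callable[[dict], None]) -> Optional[str]:
    """Execute candidate code, then run the check against its namespace.

    Returns None on success, or the traceback text on any failure.
    """
    namespace: dict = {}
    try:
        exec(source, namespace)  # define whatever the candidate declares
        check(namespace)         # raises if the behaviour is wrong
        return None
    except Exception:
        return traceback.format_exc()


def agent_loop(
    propose: Callable[[Optional[str]], str],
    check: Callable[[dict], None],
    max_attempts: int = 3,
) -> Tuple[Optional[str], List[Tuple[int, bool]]]:
    """Propose code, run it, and feed the traceback back until the check passes."""
    feedback: Optional[str] = None
    history: List[Tuple[int, bool]] = []
    for attempt in range(1, max_attempts + 1):
        source = propose(feedback)
        feedback = run_candidate(source, check)
        history.append((attempt, feedback is None))
        if feedback is None:
            return source, history
    return None, history


def scripted_propose(feedback: Optional[str]) -> str:
    """Hypothetical stand-in for a model: buggy first draft, fixed after feedback."""
    if feedback is None:
        return "def add(a, b):\n    return a - b\n"
    return "def add(a, b):\n    return a + b\n"


def check_add(namespace: dict) -> None:
    """The 'test suite' for this toy task."""
    assert namespace["add"](2, 3) == 5, "add(2, 3) should be 5"
```

Calling `agent_loop(scripted_propose, check_add)` fails on the first attempt, passes the traceback to the proposer, and succeeds on the second. A real agent would replace `scripted_propose` with a model call and `check_add` with the project's test suite, and would run untrusted code in a sandbox rather than with `exec`.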

Corroboration: The Technical Leap Confirmed

Karpathy’s experience is mirrored in performance metrics across the industry. Benchmarks such as SWE-bench, which measure how often an AI system resolves real issues reported on GitHub, have shown rapid score improvements. A sharp rise in the share of issues resolved supports the view that agents are becoming reliable enough to handle the multi-step requirements described above.
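The headline number on an issue-resolution benchmark is typically a resolve rate: the share of tasks where the agent's patch makes the task's tests pass. A small sketch of that calculation follows. The `TaskResult` type and the task IDs and outcomes used in the usage note are made-up placeholders, not real benchmark results.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass(frozen=True)
class TaskResult:
    """Outcome of one benchmark task (hypothetical record shape)."""
    task_id: str
    resolved: bool  # True if the agent's patch made the task's tests pass


def resolve_rate(results: Sequence[TaskResult]) -> float:
    """Fraction of tasks resolved; 0.0 for an empty run."""
    if not results:
        return 0.0
    return sum(1 for r in results if r.resolved) / len(results)
```

For example, a placeholder run of four tasks with one resolved gives `resolve_rate(...) == 0.25`, and a later run with three resolved gives `0.75`. Comparing the two runs on the same task set is what makes a jump in the score meaningful.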

This moves the discussion from *if* agents work to *how quickly* they will replace lower-level boilerplate coding, allowing senior engineers to focus purely on high-level architecture and problem definition—coding almost entirely in "English."

Industry Validation: Agents Move from Lab to Lifecycle

A single engineer's workflow shift is compelling, but industry adoption provides the necessary macro-context. The transition from AI *assistants* (like basic Copilot) to AI *agents* (tools that manage projects) is being formalized by major platform providers.

The Rise of Agentic Workspaces

The most significant industrial validation comes from the race to productize agentic workflows. A key area to watch is the development of platforms like GitHub Copilot Workspace. Unlike previous tools that only offered suggestions within the editor, Workspace is designed to take a high-level instruction (e.g., "Add a feature to authenticate users via OAuth") and manage the entire process: planning the files to change, writing the code, running tests, and preparing a pull request. This is likely the kind of end-to-end automation that Karpathy is describing.

The existence of these integrated, environment-aware agent tools confirms that the industry is no longer betting on incremental improvements; it is betting on comprehensive automation of the development lifecycle.

The "First AI Engineer" Debate

Further context comes from specialized startups, such as the excitement surrounding tools like Devin by Cognition Labs. While Devin’s real-world deployment is still being scrutinized, the intense focus on creating a truly autonomous "AI Software Engineer" shows that the market wants, and believes it is close to achieving, a fully independent coding agent.

This focus on the "AI Engineer" helps frame the philosophical shift: the modern developer is evolving into an **AI Supervisor** or **AI Orchestrator**.

Future Implications: What This Means for AI and Business

Karpathy's conversion isn't just about faster coding; it’s a preview of how general-purpose AI will integrate into complex knowledge work across all industries.

1. The Productivity Singularity for Software

For businesses, the implications are staggering. If a senior engineer can now accomplish in the same timeframe what previously took a team of three, that is roughly a threefold gain in output per engineer. Reports on the impact of generative AI on software engineer productivity suggest that while early adoption saw modest gains (10-30% speedups), the shift to agentic workflows promises order-of-magnitude improvements for certain classes of tasks.
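It helps to separate the speedup on individual tasks from the speedup on the whole job. A standard way to estimate the latter is Amdahl's law: if only a fraction of the work is accelerated, the untouched remainder limits the overall gain. The sketch below applies that formula. Treating the 80% figure as "fraction of work accelerated" and 10x as the per-task speedup are illustrative assumptions, not measurements from the article.

```python
def overall_speedup(accelerated_fraction: float, task_speedup: float) -> float:
    """Amdahl's law: end-to-end speedup when only part of the work gets faster.

    accelerated_fraction: share of total work the agent speeds up (0.0 to 1.0)
    task_speedup: how many times faster that share becomes (> 0)
    """
    if not 0.0 <= accelerated_fraction <= 1.0:
        raise ValueError("accelerated_fraction must be between 0 and 1")
    if task_speedup <= 0:
        raise ValueError("task_speedup must be positive")
    remaining = 1.0 - accelerated_fraction
    return 1.0 / (remaining + accelerated_fraction / task_speedup)
```

Under these assumptions, `overall_speedup(0.8, 10.0)` comes out to roughly 3.6x: a tenfold gain on 80% of the work yields an end-to-end gain close to the "team of three" figure, because the remaining 20% still runs at human speed.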

This compression of the development timeline means products can be iterated upon faster than ever before. Companies that successfully integrate these tools will gain an overwhelming competitive advantage in feature velocity.

2. Redefining the Value of Human Expertise

If an LLM can write functional, tested code for 80% of tasks, what is the remaining 20% worth? The premium shifts dramatically toward tasks that require profound non-standard reasoning, ethical judgment, novel scientific insight, or deep business intuition.

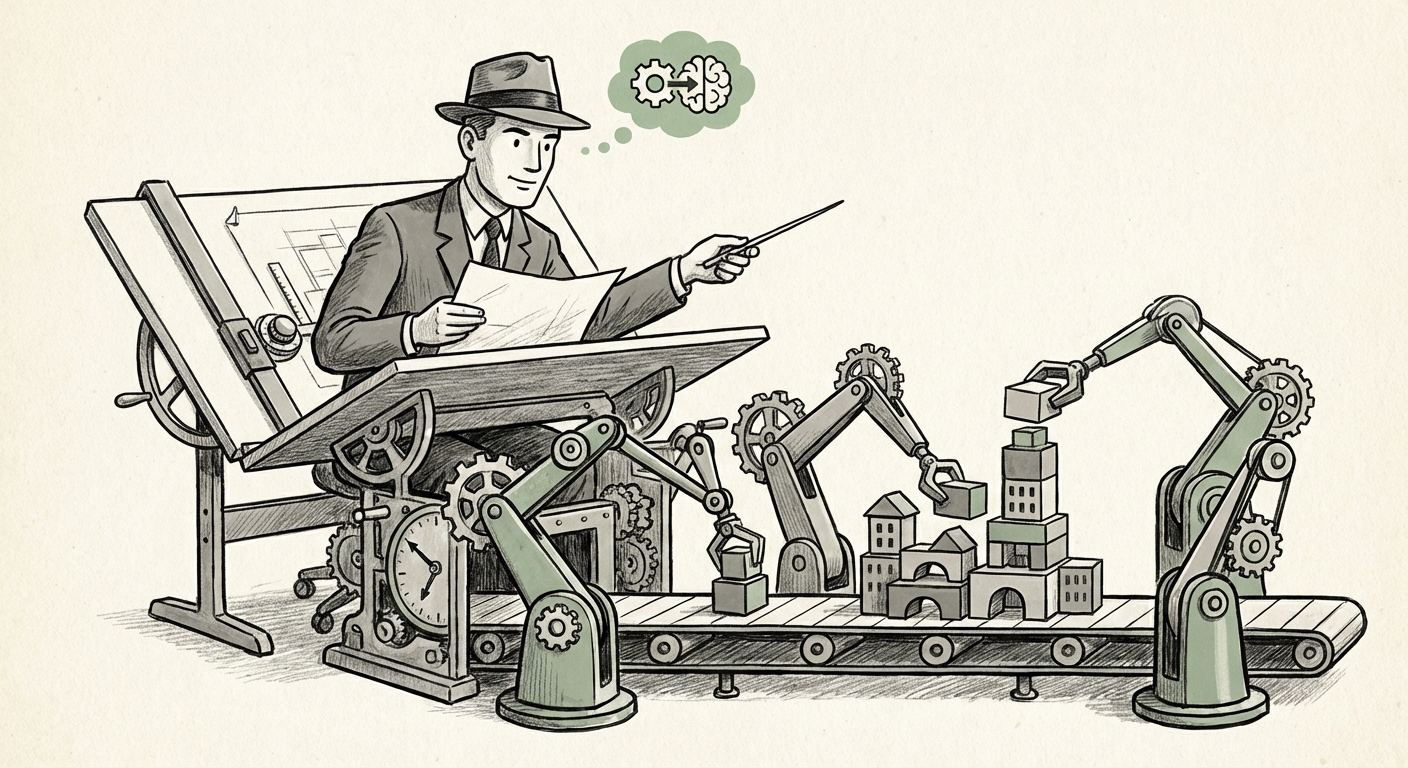

Engineers must move up the abstraction stack. The highest-value skill becomes writing a precise, unambiguous prompt that defines a complex, novel architecture, and knowing how to verify the agent’s output for security vulnerabilities and edge cases. The engineer is no longer the bricklayer; they are the architect who draws up precise blueprints and then inspects what the robotic construction crew has built.

3. The Democratization of Creation (With Caveats)

The ability to code using only natural language lowers the barrier to entry for creating software solutions. A small business owner or a scientist who understands their domain but lacks deep coding expertise can now instruct an agent to build a custom tool.

However, caution is critical here. As we learned from early AI image generators, output can look superficially correct yet be fundamentally flawed or biased. When an agent builds a complex system, the consequences of an underlying logical error are far greater than those of a simple visual mistake. This necessitates robust verification frameworks.

Actionable Insights for Today's Leaders and Developers

Karpathy’s pivot is a clear mandate: adaptation is no longer optional.

For Business Leaders and CTOs:

- Pilot Agentic Workflows Immediately: Do not wait for perfection. Begin formal pilot programs integrating tools like Copilot Workspace or specialized agent platforms into high-performing teams. Measure productivity gains against the cost of implementation and training.

- Rethink Talent Strategy: Stop hiring solely for fluency in language syntax. Start prioritizing engineers who excel at system design, decomposition, complex problem framing, and critical review of generated artifacts.

- Invest in Verification Infrastructure: Since agents generate code at speed, your testing, security scanning, and auditing pipelines must become substantially faster and more rigorous to catch emergent flaws before deployment.
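As one small illustration of what verification infrastructure can mean in practice, the sketch below is a pre-merge gate that rejects generated Python if it fails to parse or calls a banned builtin. It uses only the standard `ast` module. The `gate` and `find_banned_calls` names and the `BANNED_CALLS` policy are hypothetical, and a real pipeline would layer tests, dependency audits, and human review on top of a check like this.

```python
import ast
from typing import List, Tuple

# Illustrative policy only: direct calls an organisation might refuse to merge.
BANNED_CALLS = {"eval", "exec"}


def find_banned_calls(source: str) -> List[Tuple[int, str]]:
    """Return (line number, name) for each direct call to a banned builtin."""
    tree = ast.parse(source)  # raises SyntaxError on unparseable code
    hits = []
    for node in ast.walk(tree):
        if (
            isinstance(node, ast.Call)
            and isinstance(node.func, ast.Name)
            and node.func.id in BANNED_CALLS
        ):
            hits.append((node.lineno, node.func.id))
    return sorted(hits)


def gate(source: str) -> Tuple[bool, List[str]]:
    """Decide whether generated code may proceed, with reasons if it may not."""
    try:
        hits = find_banned_calls(source)
    except SyntaxError as err:
        return False, [f"syntax error: {err.msg}"]
    reasons = [f"line {line}: call to {name}()" for line, name in hits]
    return (not reasons), reasons
```

Here `gate("x = 1 + 1\n")` passes, while `gate("x = eval('1+1')\n")` is rejected with the reason `line 1: call to eval()`. Because the check is static and fast, it can run on every agent-generated change before slower test suites start.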

For Developers:

- Master the Art of Specification: Your value is increasingly tied to your ability to articulate complex requirements clearly. Practice writing detailed, context-rich instructions (prompts) that anticipate potential ambiguities.

- Embrace the Debugging Role: Treat the agent as a junior partner. Be prepared to spend less time writing lines of code and more time debugging the agent's intermediate assumptions and failures.

- Understand System Context: Deep knowledge of the entire system architecture, data flow, and business logic is what allows you to guide the agent effectively and spot large-scale integration errors the LLM might miss.

Conclusion: The New Definition of Coding

Andrej Karpathy’s journey from skepticism to near-total reliance on AI coding agents over a mere three months is the strongest evidence yet that the **AI Agent Revolution** is here, not as a distant promise, but as a present-day reality redefining the craft of software engineering. The period of AI tools serving as mere assistants is over. We have entered the era of the AI collaborator.

The challenge for the next few years will not be building the code; it will be defining the correct problems, verifying the agent's solutions with rigor, and managing the staggering acceleration of technological output. The "coding" of the future is less about typing syntax and more about directing intelligent, autonomous systems. Those who master this new dialect of human-AI collaboration will define the technological frontier.