The Command Line Revolution: Why Mistral's Vibe 2.0 Signals the Next Wave in AI Developer Tools

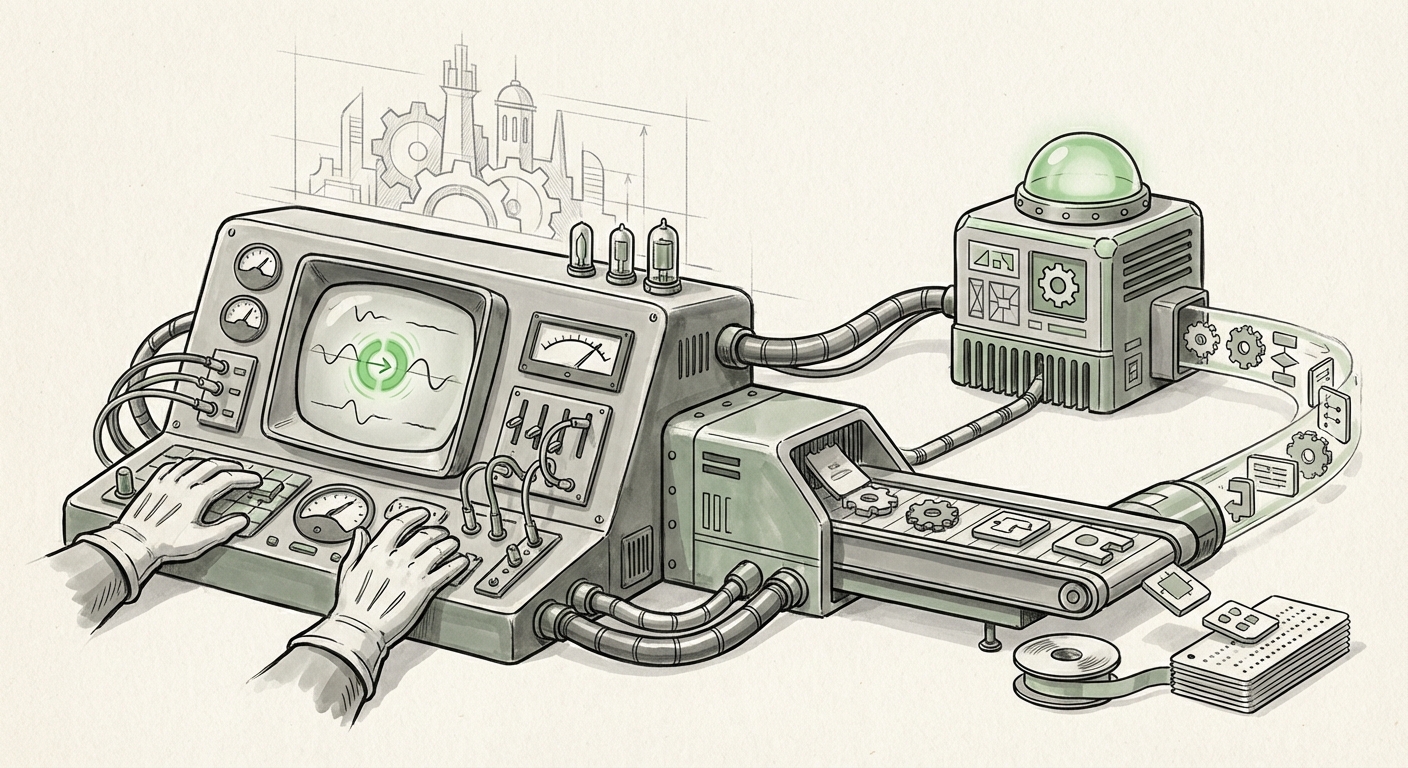

The artificial intelligence landscape is no longer defined solely by massive public chatbots. The real contest for adoption and productivity is shifting to the specialized tools engineers use all day. Mistral AI’s recent launch of Vibe 2.0, a terminal-based coding agent powered by the company’s own Devstral 2 model, is more than a routine software update. It is a clear statement about where AI integration is heading: deep, fast, and specialized, inside the engineer’s native environment.

This development crystallizes three major trends we are tracking: the swift commoditization of generalist LLMs, the growing demand for narrowly focused tools, and the migration of AI into latency-sensitive environments where friction is costly, such as the Command Line Interface (CLI).

Trend 1: The Specialization Imperative—From Chatbots to Dev-Bots

When large language models (LLMs) first broke into the mainstream, the focus was on generality—a model that could write an email, summarize a book, and debug simple code. Today, the market is maturing. The barrier to creating a "good enough" general LLM has lowered, leading to a wave of commoditization. This forces companies like Mistral to seek differentiation through hyper-specialization.

Vibe 2.0 is built on **Devstral 2**. This suggests a deliberate decision to train or fine-tune a model specifically for the syntax, logic, and context of software development. For developers, a specialized tool beats a generalist one when its performance holds up on real work, which is why head-to-head comparisons between Devstral 2 and incumbents such as GitHub Copilot matter. Developers are weary of AI-generated code that looks plausible but fails critical tests. Specialization aims to solve this by concentrating training and model capacity on APIs, idiomatic language usage, and complex algorithmic structures.

For the Business Audience: This specialization can mean higher ROI on AI investment. Instead of using a general-purpose model for everything (which might be slow or inaccurate for specific tasks), enterprises can deploy models optimized for niche tasks like SQL query generation, infrastructure-as-code scaffolding, or legacy code migration. Done well, this can reduce engineering overhead significantly.

Trend 2: The Terminal Takes Center Stage

Why the terminal? The Command Line Interface (CLI) is the bedrock of infrastructure management, DevOps pipelines, scripting, and many developers’ daily routines. Integrated Development Environments (IDEs) like VS Code have excellent AI extensions, but work in the terminal often still means context-switching: jumping to a browser or chat window to ask an AI a question, copying the answer, and pasting it back. This friction kills productivity.

The move toward **AI coding agents integrated directly into the terminal** reflects a recognition that the fastest path for an engineer is often the most direct one. Vibe 2.0 aims to sit right alongside the shell prompt, allowing developers to ask "How do I use this obscure library function?" or "Generate a secure Bash script to handle these logs," and receive instant, actionable output without leaving their primary workflow.

This is critical for DevOps and infrastructure professionals who live in the terminal. It’s about reducing cognitive load. When AI operates seamlessly within the command line, it becomes an immediate extension of the user’s intent, rather than an external dependency.

Trend 3: Enterprise Trust and the Security Equation

Mistral’s positioning often emphasizes European data sovereignty and enterprise privacy. Cloud-hosted AI assistants generally require sending proprietary code snippets to external servers for processing. For highly regulated industries such as finance, defense, and healthcare, this data leakage risk is a non-starter.

This is where the **impact of local and specialized LLMs on code security and auditing** becomes paramount. A tool designed to run efficiently within the developer environment, potentially with an on-premise or private cloud deployment option, immediately gains an edge in earning enterprise trust. If Vibe 2.0’s architecture prioritizes minimal data egress, it directly supports Mistral’s **enterprise adoption strategy**. Enterprises are willing to pay a premium for specialized tools that do not compromise their intellectual property or compliance standing.

For the security analyst, a specialized, perhaps smaller, model like Devstral 2 running locally might even be easier to audit for backdoors or unexpected behaviors than querying a gigantic, opaque frontier model.

Deeper Analysis: Practical Implications for Workflow

The Death of Context Switching

The primary implication for the average software engineer is a significant reduction in the "context-switching tax." Every time a developer has to stop typing code to open a web browser, search for documentation, or formulate a prompt in a separate chat window, time and mental energy are lost. CLI integration removes much of this. The AI becomes an efficient pair programmer available instantly, whether for generating boilerplate code, understanding complex error messages, or refactoring small chunks of logic.

Shifting Developer Focus to Architecture

When AI handles the routine, repetitive, and often frustrating tasks, such as remembering exact command syntax or writing unit tests for simple functions, engineers are freed up to focus on higher-level problems: system architecture, complex state management, user experience, and security design. The AI moves from being a helpful assistant to an essential productivity multiplier, raising the bar for what one engineer can accomplish in a day.

The Rise of Model Diversity

The arrival of Devstral 2 reinforces the idea that one model will not rule them all. We are moving into an ecosystem where developers will likely use several specialized models concurrently:

- A powerful frontier model (like GPT-4 or Claude Opus) for complex architectural brainstorming.

- A fast, specialized model (like Devstral 2) for in-line coding completion and CLI tasks.

- A security-focused model for vulnerability scanning in pre-commit hooks.

Mistral’s strategic positioning—offering high-quality, efficient models that can run well on varied hardware—makes them a strong contender in this multi-model future.
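The three-way split described above can be sketched as a small routing table. This is only an illustration, not anything Mistral ships: the task categories, the model labels, and the `pick_route` helper are hypothetical names, and the rule that sensitive code stays on locally deployable models is an assumption drawn from the security discussion earlier.

```python
from dataclasses import dataclass
from enum import Enum


class TaskKind(Enum):
    """Hypothetical task categories mirroring the three bullets above."""
    ARCHITECTURE = "architecture"
    CLI_OR_COMPLETION = "cli_or_completion"
    SECURITY_SCAN = "security_scan"


@dataclass(frozen=True)
class ModelRoute:
    model_label: str    # illustrative label, not a real API model ID
    runs_locally: bool  # assumption: whether the model can run on-prem or on a laptop


# Assumed mapping from task category to model class.
ROUTES = {
    TaskKind.ARCHITECTURE: ModelRoute("frontier-generalist", runs_locally=False),
    TaskKind.CLI_OR_COMPLETION: ModelRoute("specialized-coding-model", runs_locally=True),
    TaskKind.SECURITY_SCAN: ModelRoute("security-scanner-model", runs_locally=True),
}


def pick_route(kind: TaskKind, contains_sensitive_code: bool) -> ModelRoute:
    """Return the preferred route, keeping sensitive code off remote models."""
    route = ROUTES[kind]
    if contains_sensitive_code and not route.runs_locally:
        # Fall back to the local coding model rather than sending code off-site.
        return ROUTES[TaskKind.CLI_OR_COMPLETION]
    return route
```

For example, `pick_route(TaskKind.ARCHITECTURE, contains_sensitive_code=True)` falls back to the local coding model under these assumptions. A real deployment would replace the labels with whatever models and endpoints the team has actually approved.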

Actionable Insights for Technology Leaders

For CTOs and engineering managers looking to harness this wave, three steps are necessary:

- Audit the Workflow Friction Points: Identify where your engineers spend the most time context-switching (documentation lookups, repetitive script writing). These are the immediate targets for CLI-native agents.

- Evaluate Specialization Over Generality: Do not default to the largest available model. Test specialized coding models against your specific tech stack (e.g., Ruby on Rails, Kubernetes configuration). The specialized model often wins on latency and accuracy for the target domain.

- Prioritize Local and Private Deployment: If your teams handle sensitive code or proprietary algorithms, mandate an AI strategy that emphasizes models deployable within your private cloud or on local developer machines. Security and compliance are the gatekeepers to mass adoption in the enterprise.
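The second recommendation, testing models against your own stack rather than defaulting to the largest one, can be made concrete with a small harness. The sketch below is an assumption-laden illustration: the two "models" are stub functions standing in for real model calls, and the test cases and checks are invented examples. It measures the two things the recommendation names, accuracy on the target domain (pass rate) and latency.

```python
import statistics
import time
from typing import Callable, Dict, List, Tuple

Model = Callable[[str], str]               # takes a prompt, returns generated text
Case = Tuple[str, Callable[[str], bool]]   # (prompt, check applied to the output)


def evaluate(model: Model, cases: List[Case]) -> Dict[str, float]:
    """Run every case through the model; report pass rate and median latency."""
    if not cases:
        raise ValueError("at least one test case is required")
    latencies = []
    passed = 0
    for prompt, check in cases:
        start = time.perf_counter()
        output = model(prompt)
        latencies.append(time.perf_counter() - start)
        if check(output):
            passed += 1
    return {
        "pass_rate": passed / len(cases),
        "median_latency_s": statistics.median(latencies),
    }


# Stub models (hypothetical): replace with calls to the systems under test.
def stub_specialized(prompt: str) -> str:
    return "kubectl get pods --namespace staging"


def stub_generalist(prompt: str) -> str:
    return "You can list pods with the Kubernetes dashboard."


# Invented cases for a Kubernetes-heavy team; write your own per tech stack.
CASES: List[Case] = [
    ("List pods in the staging namespace",
     lambda out: out.startswith("kubectl get pods")),
    ("Same task, and the answer must name the namespace",
     lambda out: "staging" in out),
]

if __name__ == "__main__":
    for name, model in [("specialized", stub_specialized),
                        ("generalist", stub_generalist)]:
        print(name, evaluate(model, CASES))
```

The stubs make the comparison trivially one-sided. The point is the shape of the harness: the same prompts, the same checks, and the same timing for every candidate, so that the decision rests on measurements from your own tech stack.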

The Future: Beyond Code Completion

Mistral Vibe 2.0 is not just about writing code faster; it’s about redefining the developer interface itself. If AI can operate effectively in the raw, text-based environment of the terminal, what’s next?

We can anticipate AI agents becoming fluent in managing entire operational environments. Imagine Vibe 2.0 evolving to debug infrastructure failures reported by monitoring tools, auto-generating patches, staging the deployment, and then presenting the rollback plan—all via conversational commands in the shell.

This push into the CLI demystifies AI tools for infrastructure experts, making them feel less like external web applications and more like native operating system enhancements. The long-term implication is a shift in developer identity—moving from being the person who *writes* the code to the person who *directs* the AI systems that write, deploy, and maintain the code. The terminal remains the control panel for that direction, making agents like Vibe 2.0 the essential steering mechanism for the next generation of software creation.