The Quiet Revolution: Why Deterministic AI's Rise Signals a More Accountable Future

For years, the conversation around Artificial Intelligence has been dominated by the thrilling, yet often opaque, power of deep learning and massive Large Language Models (LLMs). These systems, fueled by vast amounts of data, are brilliant pattern-matchers. However, they often operate as "black boxes"—we know *what* they output, but not always *why*.

This is changing. The recent launch of community forums dedicated to Deterministic AI, such as the one announced by Rainbird Technologies, is more than a minor industry update; it points to a real shift in enterprise priorities. It suggests that the future of mission-critical AI hinges less on sheer predictive power and more on verifiable, auditable, and logical certainty.

As an AI analyst, I see this trend as the maturation of the industry. We are moving from the era of "AI magic" to the era of "AI engineering," where reliability and trust are paramount. To understand this shift, we must look at the forces pushing this deterministic wave forward.

The Black Box Problem: Why Enterprises Can’t Wait for Answers

Imagine a bank denying a crucial loan based on an AI recommendation, or an automated medical diagnostic tool flagging a rare condition. If the AI cannot clearly explain the chain of reasoning that led to its conclusion, the institution faces massive risks—legal, financial, and reputational. This is the core challenge driving the demand for Explainable AI (XAI).

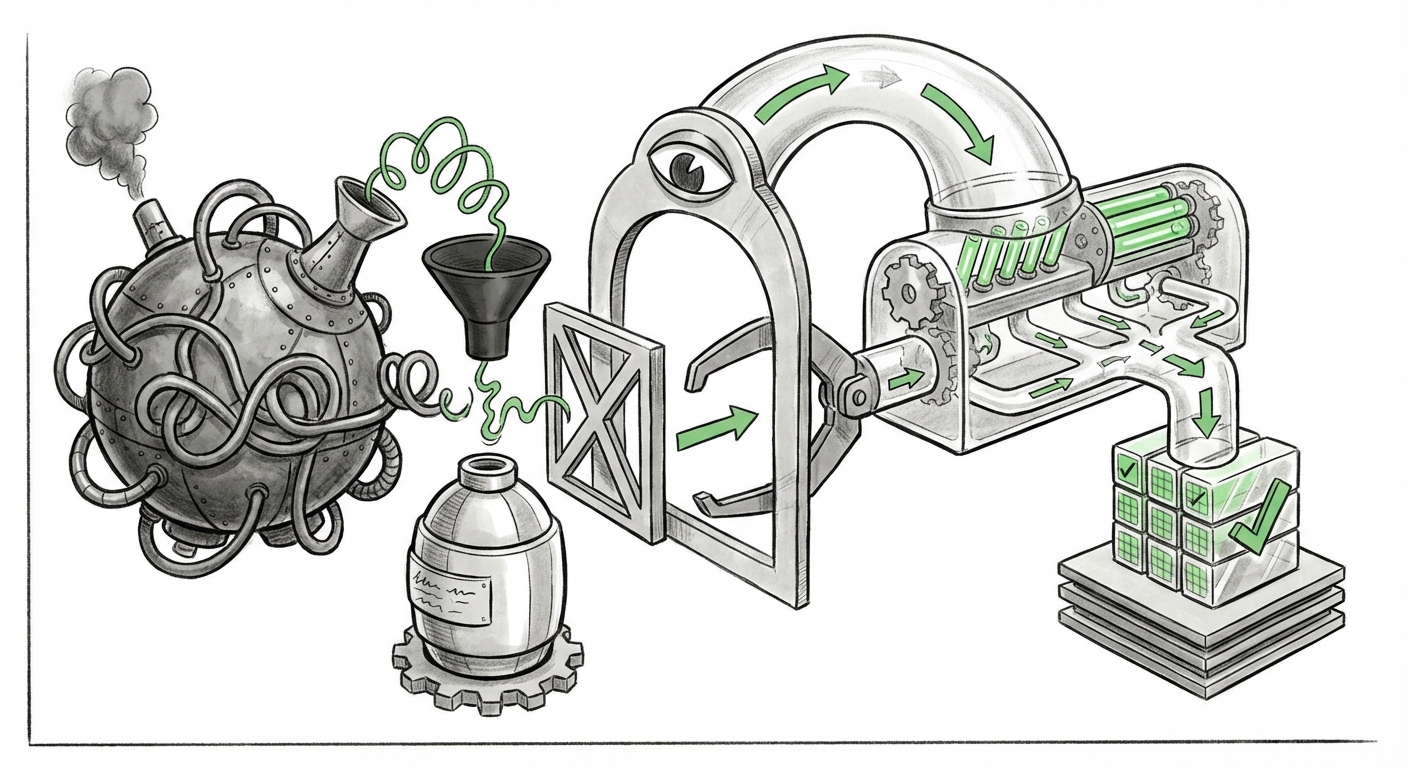

While probabilistic models excel at tasks like image recognition, they struggle when the output must be traceable to specific rules or data points. Deterministic AI, conversely, operates on logic, rules, and explicit knowledge structures. If the AI concludes "X," it can show the exact logical steps that prove X is true based on the system's established knowledge base.
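
To make "show the exact logical steps" concrete, here is a minimal sketch of forward chaining, a classic rule-based inference technique. It is an illustration only, not how any particular product works: the rule names (`R1`, `R2`) and the facts (`income_verified`, `low_debt`, `collateral_ok`) are invented for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rule:
    name: str
    premises: frozenset
    conclusion: str


def forward_chain(facts, rules):
    """Derive new facts until nothing changes, recording every step taken."""
    known = set(facts)
    trace = []  # ordered list of (rule name, premises used, conclusion)
    changed = True
    while changed:
        changed = False
        for rule in rules:
            # A rule fires only when all of its premises are already known.
            if rule.conclusion not in known and rule.premises <= known:
                known.add(rule.conclusion)
                trace.append((rule.name, sorted(rule.premises), rule.conclusion))
                changed = True
    return known, trace


# Hypothetical knowledge base, invented for illustration only.
RULES = [
    Rule("R1", frozenset({"income_verified", "low_debt"}), "creditworthy"),
    Rule("R2", frozenset({"creditworthy", "collateral_ok"}), "approve_loan"),
]

known, trace = forward_chain(
    {"income_verified", "low_debt", "collateral_ok"}, RULES
)
for name, premises, conclusion in trace:
    print(f"{name}: {' AND '.join(premises)} => {conclusion}")
```

Running this prints one line per rule that fired, in order, so the conclusion `approve_loan` comes with the chain of rules that produced it. The same inputs always yield the same trace, which is the property the article calls deterministic.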

This demand for clarity validates the market strategy of companies focusing on non-statistical approaches. Enterprise adoption of AI tends to stall when trust is absent. Businesses are no longer satisfied with high accuracy scores alone; they need auditable certainty before they integrate AI into core operations.

The Resurgence of Logic: Symbolic AI’s Second Act

Deterministic systems often draw heavily from the principles of Symbolic AI—the branch of AI that deals with representations of knowledge using symbols, rules, and logic, rather than just numerical weights in a neural network. For a time, deep learning seemed to have relegated symbolic methods to the history books. Now, they are making a powerful comeback, often integrated with neural approaches in what is called Neuro-Symbolic AI.

If a neural network identifies a pattern (e.g., "This image looks like a tumor"), the symbolic layer can then apply known medical logic (e.g., "If Pattern A is present AND Rule B is violated, the diagnosis must be C, and the recommended action is D"). This integration offers the best of both worlds: the pattern recognition power of deep learning, combined with the logical rigor of deterministic reasoning.
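
A rough sketch of that hand-off might look like the following. Everything here is an assumption made for illustration: `pattern_score` is a stub standing in for a trained network, and the two thresholds are placeholder numbers, not clinical values. The labels A, B, C, and D mirror the example in the text.

```python
PATTERN_THRESHOLD = 0.8  # assumed cut-off for "Pattern A is present"
MARKER_LIMIT = 5.0       # assumed limit; exceeding it violates "Rule B"


def pattern_score(image_features):
    """Stand-in for a neural network (hypothetical).

    A real system would run model inference here; this stub averages
    its inputs so the example stays self-contained.
    """
    return sum(image_features) / len(image_features)


def symbolic_layer(score, marker_level):
    """Apply explicit rules to the network's score and say why."""
    pattern_a = score >= PATTERN_THRESHOLD
    rule_b_violated = marker_level > MARKER_LIMIT
    if pattern_a and rule_b_violated:
        return {
            "diagnosis": "C",
            "action": "D",
            "because": ["Pattern A present", "Rule B violated"],
        }
    # No rule fired, so the system defers rather than guessing.
    return {
        "diagnosis": None,
        "action": "refer to clinician",
        "because": [
            f"Pattern A present: {pattern_a}",
            f"Rule B violated: {rule_b_violated}",
        ],
    }


result = symbolic_layer(pattern_score([0.9, 0.85, 0.95]), marker_level=6.2)
print(result)
```

The statistical part only produces a score. The decision itself, and the reasons attached to it, come from rules a reviewer can read and change.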

The focus on building deterministic communities suggests a practical acknowledgment that for complex reasoning, humans still think logically. By mirroring this logic in our machines, we create systems that are inherently easier to debug, update, and trust.

The Iron Fist of Regulation: Compliance is Now Non-Negotiable

Perhaps the single biggest external driver forcing the shift toward auditable AI is global regulation. The most significant example shaping the global compliance landscape is the EU AI Act. This landmark legislation categorizes AI systems based on the risk they pose to fundamental rights and safety.

Systems deemed "High-Risk", such as those used in critical infrastructure, employment decisions, credit scoring, or law enforcement, face stringent requirements. They must be transparent, traceable, and subject to human oversight. For a probabilistic model, proving traceability is exceptionally difficult: it is hard to show a regulator precisely how billions of learned weights combined to reach one specific conclusion.

Deterministic systems, built on explicit knowledge and verifiable logic trees, map far more directly onto these requirements. When a regulator asks, "Why did the AI reject this application?" a deterministic system can point directly to the rule that was violated (e.g., "The debt-to-income ratio exceeded the threshold defined in Policy 4.2"). This capability moves deterministic AI from a niche preference to a compliance necessity for regulated sectors.
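
As a sketch of what such an answer could look like in code, the check below returns the decision together with the policy it cites. The 0.40 limit is an assumed figure chosen for the example, and `Decision` and `review_application` are hypothetical names; only the "Policy 4.2" label is taken from the text above.

```python
from dataclasses import dataclass

DTI_LIMIT = 0.40  # assumed threshold; a real policy would define its own


@dataclass(frozen=True)
class Decision:
    approved: bool
    rule_id: str
    explanation: str


def review_application(monthly_debt, monthly_income):
    """Check the debt-to-income rule and cite the policy in the result."""
    if monthly_income <= 0:
        raise ValueError("monthly_income must be positive")
    dti = monthly_debt / monthly_income
    if dti > DTI_LIMIT:
        return Decision(
            approved=False,
            rule_id="Policy 4.2",
            explanation=(
                f"Debt-to-income ratio {dti:.2f} exceeded "
                f"the threshold {DTI_LIMIT:.2f}."
            ),
        )
    return Decision(
        approved=True,
        rule_id="Policy 4.2",
        explanation=(
            f"Debt-to-income ratio {dti:.2f} is within "
            f"the threshold {DTI_LIMIT:.2f}."
        ),
    )


decision = review_application(monthly_debt=2500, monthly_income=5000)
print(decision.rule_id, "-", decision.explanation)
```

Because the explanation is generated by the same branch that makes the decision, it cannot drift from what the system actually did, which is harder to promise for an explanation fitted to a model after the fact.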

Beyond the Hype Cycle: Specialized Platforms vs. General LLMs

The current AI landscape is overwhelmingly focused on generalized models—LLMs that can write poetry, code software, and answer trivia. While impressive, these generalists often suffer from "hallucinations" (making up facts) because their core function is next-word prediction, not truth verification.

The rise of the deterministic community forum signals a market realization: general-purpose AI is not always the right tool for critical tasks. Businesses are recognizing the need for vertical, specialized platforms. These platforms do one thing, such as complex diagnostics, supply chain optimization, or regulatory mapping, and they do it with repeatable, verifiable results.

This represents a healthy diversification of the AI ecosystem. While LLMs will continue to revolutionize creative and informational tasks, deterministic engines will secure the foundations of business operations, much like reliable, traditional enterprise software did before the deep learning explosion. Investors and product managers are noting this shift, favoring solutions that mitigate risk over those that promise infinite versatility.

What This Means for the Future of AI and Business Operations

The emphasis on deterministic AI, community building, and transparency is not a temporary trend; it’s a fundamental realignment of where the industry places its value.

For the Engineer and Developer: A Call for Hybrid Skills

Future AI development will increasingly require engineers who are comfortable bridging statistical modeling (Deep Learning) with symbolic manipulation (Logic Programming/Knowledge Graphs). The ability to architect a system where high-level reasoning is explicit and auditable will become a premium skill. A community forum gives these engineers a place to work through complex hybrid architectures together.

For the Business Leader: De-Risking AI Investment

Business leaders should see deterministic platforms as the path to scaling AI into core, high-stakes processes. Instead of fearing AI failures, leaders can mandate deterministic systems for areas like compliance checking, safety procedures, and core financial modeling. This drastically lowers the risk profile associated with AI adoption, making AI investments safer and more predictable.

For Society: The Trust Firewall

Ultimately, the push for explainability is a push for societal trust. If AI is going to manage our power grids, approve our mortgages, and assist in legal judgments, we must have a firewall of accountability. Deterministic reasoning provides that firewall. It ensures that AI remains a tool serving human logic and legal frameworks, rather than an inscrutable oracle dictating outcomes.

Actionable Insights: How to Engage with the Deterministic Shift

The rise of dedicated deterministic communities suggests that the technology is moving out of pure research labs and into mainstream application development. Here is how you can position yourself:

- Audit Your Current AI Landscape: Identify every "high-risk" decision-making process currently using a probabilistic model. Determine the regulatory exposure tied to those decisions.

- Prioritize Hybrid Training: If you are building an internal AI team, ensure training includes knowledge representation, formal logic, and reasoning systems alongside standard neural network training.

- Engage the Community: Platforms like the new Rainbird forum are crucial. They are hubs for sharing implementation patterns, regulatory interpretations, and best practices for deploying verifiable AI architectures. Participation now secures a seat at the table for shaping future standards.

- Budget for Logic Tools: As regulations tighten, invest in tooling that naturally enforces consistency and auditability, rather than trying to wrap brittle post-hoc explanation layers onto models that were never designed for transparency.

The excitement surrounding generative AI is understandable, but the true structural change in the technology industry is happening quietly, driven by the need for reliability. Deterministic AI is not meant to write the next great novel; it’s meant to ensure the train runs on time, the loan is legally sound, and the diagnostic tool is accountable. This quiet revolution signals that AI is finally growing up, prioritizing wisdom and certainty over raw, unverified power.