The Real-Time Revolution: Decart’s Lucy 2.0 and the Future of Prompt-Driven Video

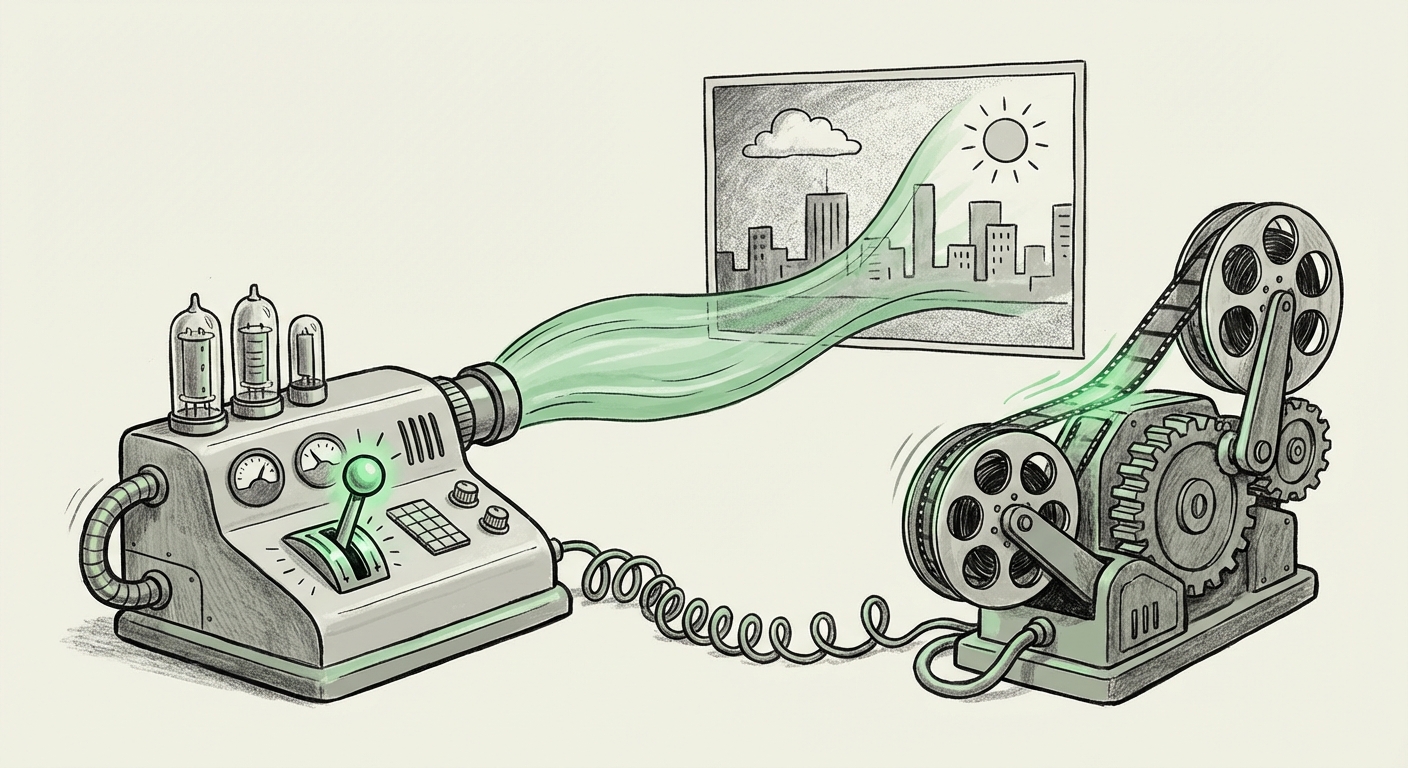

The world of generative Artificial Intelligence has been sprinting, moving rapidly from generating static images to creating short, high-fidelity video clips. However, a major bottleneck has always been speed. Live broadcasting, video calls, and interactive media demand results in real time, and even film and advertising workflows are held back by long render times. The recent unveiling of Decart's Lucy 2.0, a model that transforms live video in real time from simple text prompts, signals a fundamental shift in this paradigm. This is not just an iterative improvement; it marks the crossing of a significant technological threshold.

As an AI technology analyst, I see Lucy 2.0 as a pivot point. We are moving from AI as a post-production tool to AI as a co-pilot operating at the moment of creation. To fully grasp the implications, we must look beyond the demo reel and analyze the underlying technological context, the competitive environment, and the sweeping societal ramifications.

The Leap: From Frames to Fluidity

For most of their short history, generative video models have operated offline. A user enters a prompt, the model generates a sequence of frames, and the finished clip arrives seconds or minutes later. This process is computationally heavy, requiring significant time and powerful hardware for every second of output, which made these tools unsuitable for anything that needs to happen instantly, such as a live TV segment or a video call.

Lucy 2.0 appears to have cracked low-latency inference for complex visual models. When an AI system can take a command such as "Turn the scene into a rainy cyberpunk cityscape" and apply that transformation to a live video stream with barely perceptible delay, it democratizes high-level visual effects. For a non-technical audience, imagine asking your computer to repaint a live video feed as a Picasso painting and watching the change happen while the feed keeps playing.

Contextualizing the Breakthrough: The Race Against Latency

The primary technical challenge Decart has addressed is one shared across the AI research community. To understand the magnitude of Lucy 2.0, we must benchmark it against the industry's broader pursuit of speed. Researchers working on **latency benchmarks for real-time video diffusion models** are battling constraints imposed by the sheer size of neural networks. GPUs process the pixels of a frame in parallel, but each frame must still pass through many network layers in sequence, and diffusion models typically repeat that pass over several denoising steps per frame.

If Decart has achieved near-real-time performance, it implies groundbreaking efficiency. Real-time video has two requirements: the system must keep pace with the frame rate (about 33 milliseconds per frame at 30 frames per second), and end-to-end delay should stay under roughly 100 milliseconds, a commonly cited threshold below which lag feels instantaneous. This efficiency often stems from innovations in model architecture (making the model smaller or more efficient) or from optimization techniques applied during deployment, such as leveraging specialized hardware libraries (like those often detailed on the NVIDIA Developer Blog for TensorRT optimization). A key takeaway for AI engineers is that mastering deployment optimization is now as critical as mastering the initial model training.
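To make the real-time constraint concrete: at a given frame rate each frame has a fixed time budget (about 33 ms at 30 fps), which is separate from the end-to-end latency a viewer perceives. The Python sketch below checks a pipeline against both. The stage names and millisecond figures are hypothetical placeholders, not measurements of Lucy 2.0 or any real system; the model stage is written as a step count times a per-step cost to reflect the repeated denoising passes of a diffusion model. It assumes latency is the sum of the stages, and that if stages run concurrently on successive frames (pipelining), throughput is limited only by the slowest stage.

```python
def analyze_pipeline(stage_ms: dict, fps: float, latency_target_ms: float = 100.0) -> dict:
    """Check a per-frame processing pipeline against real-time targets.

    Assumes each frame passes through every stage in order, so end-to-end
    latency is the sum of stage times. If stages are pipelined (each one
    working on a different frame at the same time), the sustainable frame
    interval is set by the slowest stage instead.
    """
    frame_budget = 1000.0 / fps          # ms available per frame at this rate
    latency = sum(stage_ms.values())     # delay from capture to display
    bottleneck = max(stage_ms.values())  # slowest single stage
    return {
        "frame_budget_ms": frame_budget,
        "latency_ms": latency,
        "keeps_up_unpipelined": latency <= frame_budget,
        "keeps_up_pipelined": bottleneck <= frame_budget,
        "meets_latency_target": latency <= latency_target_ms,
    }


# Hypothetical timings in milliseconds (illustrative only).
DENOISE_STEPS = 4   # assumed few-step diffusion model
MS_PER_STEP = 8.0   # assumed cost of one denoising pass
stages = {
    "capture": 5.0,
    "preprocess": 2.0,
    "denoise": DENOISE_STEPS * MS_PER_STEP,
    "decode": 6.0,
    "display": 8.0,
}

report = analyze_pipeline(stages, fps=30)
print(report)
# latency_ms is 53.0: under the 100 ms target but above the ~33.3 ms frame budget,
# so 30 fps is sustainable only if the stages are pipelined (slowest stage: 32 ms).
```

In this toy model, the levers that matter are exactly the ones the paragraph names: fewer or cheaper denoising steps (architecture) and faster kernels per step (deployment optimization).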

Disruption in the Media Pipeline: Business Implications

The ability to manipulate video streams via text prompts could create seismic shifts across multiple industries, particularly those reliant on high-volume, high-speed content creation. It directly challenges established workflows and promises substantial cost and time savings.

The End of Slow Post-Production?

For professionals in film and advertising, the contrast between **generative AI and traditional video processing pipelines** is stark. Traditionally, achieving a specific stylistic change, such as shifting the entire color palette of a feature film to mimic vintage stock footage, requires specialized colorists spending days or weeks in software like DaVinci Resolve. Lucy 2.0 suggests this process could be reduced to typing: "Apply a warm, saturated Kodachrome look to the entire sequence."
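For contrast, a traditional grade ultimately comes down to explicit per-pixel math that an artist tunes by hand. The Python sketch below is a crude, hypothetical "warm and saturated" adjustment; it is not a reproduction of Kodachrome or of how DaVinci Resolve works, and the warmth and saturation values are arbitrary assumptions. Pixels are RGB tuples of floats in [0, 1], and a frame is a list of rows.

```python
def warm_grade(pixel, warmth=0.08, saturation=1.25):
    """Apply a simple warm, saturated look to one RGB pixel with channels in [0, 1]."""
    r, g, b = pixel
    # Warm shift: lift red and pull blue down by the same amount.
    r, b = r + warmth, b - warmth
    # Saturation: scale each channel's distance from the pixel's luma
    # (Rec. 709 luma weights, applied here to the values as given).
    luma = 0.2126 * r + 0.7152 * g + 0.0722 * b
    r, g, b = [luma + saturation * (c - luma) for c in (r, g, b)]
    # Clamp back into the valid range.
    return tuple(min(1.0, max(0.0, c)) for c in (r, g, b))


def grade_frame(frame, **params):
    """Apply warm_grade to every pixel of a frame (a list of rows of RGB tuples)."""
    return [[warm_grade(px, **params) for px in row] for row in frame]


frame = [[(0.5, 0.5, 0.5), (0.2, 0.4, 0.8)]]
print(grade_frame(frame))
```

A colorist adjusts many such parameters shot by shot; the promise of a prompt-driven system is to reach a comparable look from a single sentence instead.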

This level of iterative, prompt-based editing empowers creative directors and even front-line editors to experiment rapidly. The bottleneck shifts from rendering time to the quality and creativity of the prompt engineer.

Live Content Transformation

The most potent application lies in live media. Trade coverage of **text-to-video editing applications**, such as that found in journals like *Broadcast Engineering*, highlights the industry's need for dynamic visual solutions. Consider:

- Live Broadcasting: Instantly swapping sponsor logos on digital hoardings during a live sports event based on a text command, or changing a commentator's background environment during a remote interview.

- Virtual Production: On film sets using LED walls, directors could describe a change to the environment ("Make the distant mountains glow purple") and see it reflected instantly, rather than waiting for artists to update and re-render the 3D scene.

- E-commerce and AR: Enabling users to virtually "try on" clothing or see furniture in their homes, with the view updating immediately as they edit a text description.

This transition means that media companies may soon look less like rendering farms and more like creative prompt laboratories.

The Necessary Shadow: Ethics, Control, and Misinformation

No analysis of powerful, real-time visual generation technology is complete without addressing the ethical chasm it opens. When the barrier to creating highly convincing, manipulated video drops to the speed of typing, the implications for trust and security are profound.

The concerns raised in debates over the **controversies and ethics of real-time deepfake generation** move from theoretical future worries to immediate operational threats. If Lucy 2.0 can transform a live feed convincingly, malicious actors could theoretically inject falsehoods as events unfold, such as a politician appearing to make a false statement during a live press conference, or fraudulent product endorsements.

This escalating risk places significant pressure on developers and regulators:

- Provenance and Watermarking: Technology companies must aggressively deploy standards like C2PA (Coalition for Content Provenance and Authenticity), which attach cryptographically signed provenance records to media stating how it was captured and whether it was edited or generated by AI, alongside watermarks embedded in the content itself.

- Detection: Research into detection tools must advance at the same pace as generation tools. Real-time detection systems will become essential infrastructure in broadcast and social media verification.

- Usage Policy: Companies deploying models like Lucy 2.0 must implement strict usage policies, perhaps blocking prompts targeting specific political figures or installing internal guardrails to prevent known forms of harmful manipulation.
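The core idea behind signed provenance can be sketched in a few lines of Python: bind a hash of the media to a claim about its history, then sign the claim so that any later change to either is detectable. This is a deliberately simplified stand-in, not the C2PA format. Real C2PA manifests use certificate-based public-key signatures (so anyone can verify without holding a secret) and a standardized manifest structure, whereas this sketch uses a shared-secret HMAC from the standard library. The field names and action labels are hypothetical.

```python
import hashlib
import hmac
import json


def _claim_bytes(claim: dict) -> bytes:
    # Canonical JSON so the same claim always produces the same bytes.
    return json.dumps(claim, sort_keys=True, separators=(",", ":")).encode("utf-8")


def make_manifest(media: bytes, actions: list, key: bytes) -> dict:
    """Create a signed provenance record for `media` (hypothetical format)."""
    claim = {
        "content_sha256": hashlib.sha256(media).hexdigest(),
        "actions": actions,  # hypothetical labels, e.g. ["captured", "ai_style_transfer"]
    }
    signature = hmac.new(key, _claim_bytes(claim), hashlib.sha256).hexdigest()
    return {"claim": claim, "signature": signature}


def verify_manifest(media: bytes, manifest: dict, key: bytes) -> bool:
    """True only if the claim is untampered AND it describes exactly these bytes."""
    expected = hmac.new(key, _claim_bytes(manifest["claim"]), hashlib.sha256).hexdigest()
    if not hmac.compare_digest(expected, manifest["signature"]):
        return False  # claim edited, or signed with a different key
    return manifest["claim"]["content_sha256"] == hashlib.sha256(media).hexdigest()


key = b"demo-secret"  # placeholder; never hard-code real keys
frame = b"example frame bytes"
manifest = make_manifest(frame, ["captured", "ai_style_transfer"], key)
print(verify_manifest(frame, manifest, key))            # True
print(verify_manifest(frame + b"edit", manifest, key))  # False: media changed
```

Note what this does and does not prove: a valid record shows the declared history has not been altered since signing, but media that simply carries no record proves nothing either way, which is why detection remains necessary alongside provenance.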

For business leaders, adopting this technology requires an accompanying commitment to responsible AI governance. The competitive advantage gained by speed must be balanced against the reputational risk of being associated with unverified synthetic content.

Actionable Insights for the Future-Forward Enterprise

Where does this leave businesses today? Adoption of such transformative technology is likely to be rapid. Here are concrete steps derived from analyzing this trend:

For Media and Entertainment Executives:

Audit your pipeline now. Identify the 20% of your video tasks that consume 80% of your rendering budget or time (e.g., B-roll adjustments, specific color grades, minor visual effects). These are the first targets for disruption by prompt-driven systems. Begin training key creative staff not just as editors, but as AI Directors, fluent in prompt engineering.
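One way to run that audit is a simple Pareto pass over logged hours per task type: sort tasks by cost and keep adding them until they cover roughly 80% of the total. The Python sketch below assumes you can export such totals from your tracking system; the task names and hours are hypothetical, and real data will rarely split exactly 80/20.

```python
def pareto_targets(task_hours: dict, share: float = 0.8) -> list:
    """Return the costliest tasks that together account for at least `share` of total hours."""
    total = sum(task_hours.values())
    selected, covered = [], 0.0
    for task, hours in sorted(task_hours.items(), key=lambda kv: kv[1], reverse=True):
        if covered >= share * total:
            break  # target share already reached
        selected.append(task)
        covered += hours
    return selected


# Hypothetical monthly hours per task type.
hours = {
    "color_grading": 120,
    "b_roll_adjustments": 60,
    "minor_vfx": 40,
    "titles": 10,
    "audio_sync": 5,
    "exports": 5,
}
print(pareto_targets(hours))  # ['color_grading', 'b_roll_adjustments', 'minor_vfx']
```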

For AI and Engineering Teams:

Focus on Deployment Optimization. The next frontier isn't bigger models; it’s faster deployment. Invest heavily in research around inference optimization, quantization, and hardware-specific acceleration for diffusion models. The ability to serve complex models with low latency is the key differentiator for the next generation of generative AI products.
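Quantization is one of the most accessible of these levers: storing weights as 8-bit integers instead of 32-bit floats cuts weight memory roughly fourfold, and int8 arithmetic is widely supported on modern accelerators. The Python sketch below shows only the core arithmetic of symmetric, per-tensor int8 quantization on a plain list. Production toolchains such as TensorRT add calibration, per-channel scales, and fused kernels, none of which is modeled here.

```python
def quantize_int8(weights: list) -> tuple:
    """Symmetric per-tensor int8 quantization: w ≈ q * scale, with q in [-127, 127]."""
    max_abs = max((abs(w) for w in weights), default=0.0)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0  # avoid divide-by-zero for all-zero tensors
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize_int8(q: list, scale: float) -> list:
    """Map int8 codes back to approximate float weights."""
    return [v * scale for v in q]


weights = [0.5, -1.0, 0.25, 0.003]
q, scale = quantize_int8(weights)
restored = dequantize_int8(q, scale)
# Each restored weight is within half a quantization step (scale / 2) of the original.
print(q, max(abs(a - b) for a, b in zip(weights, restored)))
```

The trade-off is visible in the last line: small weights like 0.003 lose most of their precision, which is why real deployments measure accuracy after quantizing rather than assuming it is preserved.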

For Legal and Risk Officers:

Develop a Synthetic Media Policy. Before your marketing team demands real-time style changes for an upcoming campaign, establish clear, enforceable rules regarding what types of modifications are permissible and what detection methods your organization will employ to verify the authenticity of incoming visual data. Proactive governance mitigates reactive crisis management.
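Such rules are easiest to enforce when they are written down as code that every generation request must pass. The Python sketch below is a hypothetical, minimal policy gate: it allows only approved categories of modification and rejects prompts that name blocked people or terms. The category names and the example blocked term are placeholders, and keyword matching is easy to evade, so a gate like this would complement, not replace, human review and detection tooling.

```python
import re
from dataclasses import dataclass, field


@dataclass
class SyntheticMediaPolicy:
    """Hypothetical policy gate for prompt-driven video modifications."""
    allowed_categories: set
    blocked_terms: set = field(default_factory=set)

    def review(self, category: str, prompt: str) -> tuple:
        """Return (approved, reason) for a requested modification."""
        if category not in self.allowed_categories:
            return False, f"category '{category}' is not permitted"
        text = prompt.lower()
        for term in sorted(self.blocked_terms):
            # Whole-word match so a short term does not fire inside longer words.
            if re.search(r"\b" + re.escape(term.lower()) + r"\b", text):
                return False, f"prompt references blocked term '{term}'"
        return True, "approved; output must carry a synthetic-media disclosure"


policy = SyntheticMediaPolicy(
    allowed_categories={"color_grade", "background_replace"},
    blocked_terms={"Jane Doe"},  # placeholder for a protected public figure
)
print(policy.review("color_grade", "Apply a warm, saturated Kodachrome look"))
print(policy.review("face_swap", "Replace the presenter's face"))
print(policy.review("background_replace", "Put Jane Doe at a campaign rally"))
```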

Conclusion: The Visual Interface is Now Conversational

Decart’s Lucy 2.0 is a powerful demonstration that the long-held dream of conversational visual editing is achievable. The interface for manipulating reality—or at least, our recorded perception of it—is shifting from complex graphical user interfaces (GUIs) to simple, natural language prompts.

This revolution promises unprecedented creative velocity. It will lower the technical barrier to entry for high-quality visual production, fostering an explosion of new content formats we cannot yet fully imagine. The parallel responsibility is twofold: solving the deployment challenge of latency, and building the ethical and technological safeguards needed to ensure this powerful new tool serves creation, not deception. The future of video is here, and it listens to what you type.