From Hours to Seconds: How Ultra-Fast AI Indexing Is Redefining Software Development

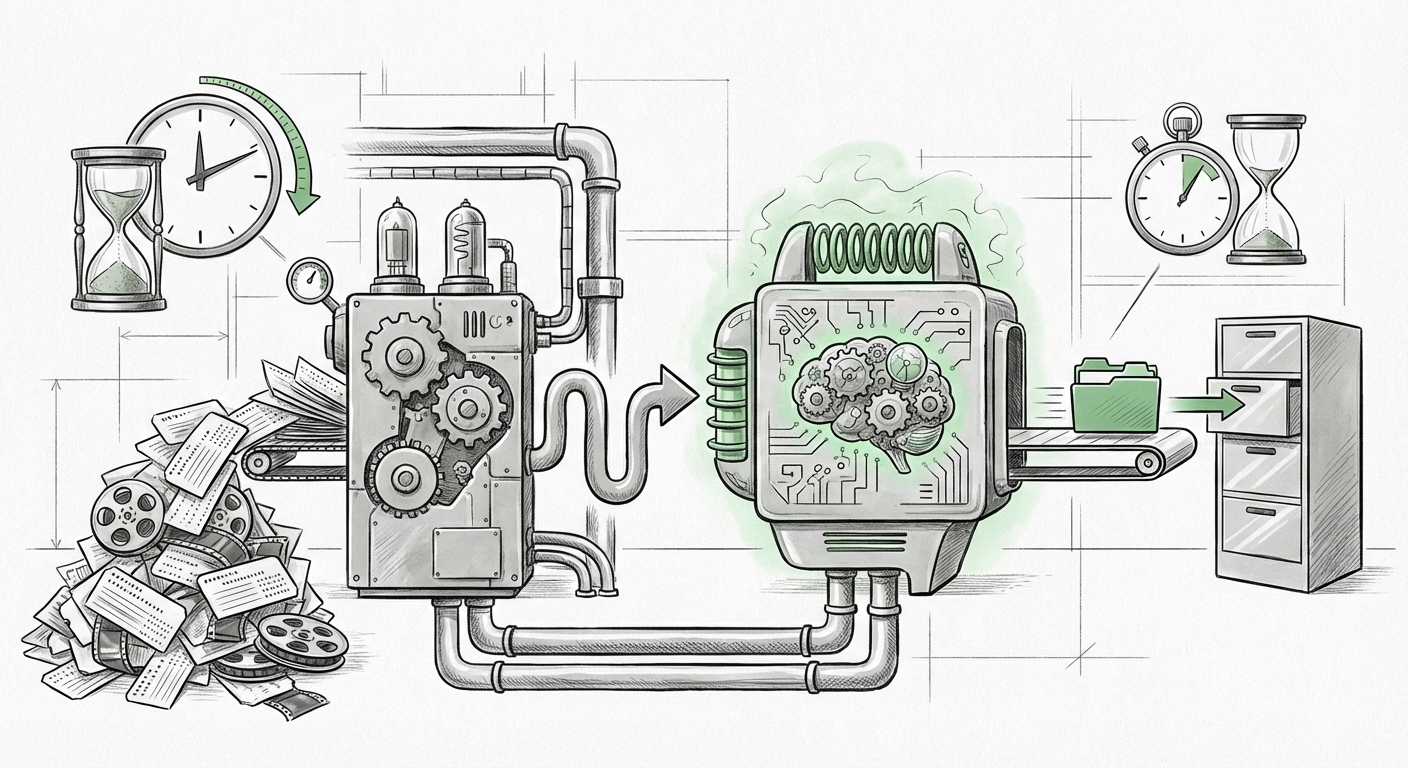

The narrative around Artificial Intelligence in software development often focuses on the sophistication of the Large Language Models (LLMs) themselves: their reasoning, creativity, and code generation prowess. However, the true utility of an AI assistant hinges on its ability to access and use your specific context. When dealing with vast, proprietary codebases, context is everything, and the speed of access determines whether the tool is a novelty or a necessity.

Cursor, the AI coding assistant, recently announced that it had cut codebase indexing time from over four hours to just 21 seconds. That is more than a footnote in a software update log. It signals a fundamental shift in the viability of context-aware AI tools: an inflection point where infrastructure engineering has caught up with model capability, paving the way for enterprise-scale adoption.

The Bottleneck Shattered: Why Speed Matters

For context, AI assistants that claim to "understand" your entire project typically work by converting chunks of source code into numerical vectors called embeddings. These embeddings are stored in a specialized database (a vector database) that supports very fast similarity search. Retrieving the most relevant chunks this way and passing them to the model alongside the developer's question is known as Retrieval-Augmented Generation (RAG).
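To make embeddings and similarity search concrete, here is a minimal sketch. It assumes a toy hashing function (`toy_embed`) in place of a learned embedding model and a plain dictionary in place of a vector database. The file names and snippets are invented for illustration, and none of this reflects how Cursor or any particular product is implemented.

```python
import hashlib
import math
import re

DIM = 1024  # illustrative vector size; real embedding models define their own


def toy_embed(text: str) -> list[float]:
    """Hash each word into a fixed-size vector, then L2-normalize it.

    A stand-in for a learned embedding model, used only for illustration.
    """
    vec = [0.0] * DIM
    for token in re.findall(r"[a-z]+", text.lower()):
        bucket = int(hashlib.md5(token.encode()).hexdigest(), 16) % DIM
        vec[bucket] += 1.0
    norm = math.sqrt(sum(v * v for v in vec))
    return [v / norm for v in vec] if norm else vec


def cosine(a: list[float], b: list[float]) -> float:
    """For unit-length vectors, the dot product is the cosine similarity."""
    return sum(x * y for x, y in zip(a, b))


def search(index: dict[str, list[float]], query: str, k: int = 2) -> list[str]:
    """Return the names of the k snippets most similar to the query."""
    q = toy_embed(query)
    ranked = sorted(index, key=lambda name: cosine(index[name], q), reverse=True)
    return ranked[:k]


# Hypothetical snippets standing in for files in a repository.
snippets = {
    "auth.py": "def login(user, password): verify password hash and create session",
    "billing.py": "def charge(card, amount): create invoice and charge card",
    "search.py": "def find(query): tokenize query and rank documents",
}
index = {name: toy_embed(code) for name, code in snippets.items()}
print(search(index, "where do we verify the user password", k=1))
```

A real system would replace the linear scan in `search` with an approximate nearest-neighbor index, which is what lets a vector database stay fast across millions of chunks.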

Previously, processing a massive codebase (hundreds of thousands or millions of lines of code) into these embeddings and indexing them for retrieval was agonizingly slow. Waiting four hours meant developers could only get insights on stale code, or worse, they had to segment their work, defeating the purpose of holistic understanding. As highlighted by Cursor’s breakthrough, this delay was the primary friction point for practical application.

The move to 21 seconds changes the equation entirely. Four hours is 14,400 seconds, so this is a speedup of more than 680x. It transforms indexing from a rare, overnight maintenance task into a dynamic, on-demand feature. A reduction this large suggests advances in two core areas:

- Efficient Embedding Pipelines: The speed at which the raw code is processed into embeddings has been optimized, likely through better parallelization and perhaps smarter chunking strategies.

- Vector Database Performance: The underlying vector database must be able to ingest and index new embeddings quickly, and still return relevant code snippets almost instantaneously when a developer asks a question.
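The two ideas above, chunking and parallel embedding, can be sketched in a few lines. This is an illustration under stated assumptions, not Cursor's pipeline: `fake_embed` is a hypothetical placeholder for a call to an embedding model, and the chunk size, overlap, and worker count are arbitrary.

```python
from concurrent.futures import ThreadPoolExecutor


def chunk_lines(source: str, max_lines: int = 40, overlap: int = 5) -> list[str]:
    """Split source text into overlapping windows of lines."""
    lines = source.splitlines()
    step = max_lines - overlap
    chunks = []
    for start in range(0, len(lines), step):
        chunks.append("\n".join(lines[start:start + max_lines]))
        if start + max_lines >= len(lines):
            break  # this window already reached the end of the file
    return chunks


def fake_embed(chunk: str) -> list[float]:
    """Hypothetical placeholder for an embedding model call."""
    return [float(len(chunk)), float(chunk.count("\n") + 1)]


def index_files(files: dict[str, str], workers: int = 8) -> dict[tuple[str, int], list[float]]:
    """Chunk every file, then embed all chunks concurrently."""
    jobs = [
        (path, i, chunk)
        for path, source in files.items()
        for i, chunk in enumerate(chunk_lines(source))
    ]
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # pool.map preserves input order, so vectors line up with jobs.
        vectors = list(pool.map(fake_embed, (chunk for _, _, chunk in jobs)))
    return {(path, i): vec for (path, i, _), vec in zip(jobs, vectors)}


# Hypothetical two-file repository.
files = {
    "a.py": "\n".join(f"line {n}" for n in range(100)),
    "b.py": "x = 1\ny = 2",
}
index = index_files(files)
print(len(index), "chunks indexed")
```

Threads suit the case where embedding is a network call to a model service. If the embedding work were CPU-bound and ran locally, a process pool or batched inference would be the more natural choice.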

For the engineering manager or CTO, this speed means that when a new developer joins, or a major refactoring occurs, the AI context is updated almost instantly. This capability directly influences the expected return on investment (ROI) for AI tooling, moving it from theoretical productivity gains to tangible, real-time benefits.

The Architecture Battle: RAG Optimization vs. Context Window Expansion

To give an LLM context about a large project, developers have historically faced a design choice, which we can simplify for clarity:

Option A: The Mammoth Context Window (Brute Force)

Modern LLMs boast massive context windows (the amount of text the model can consider at one time). The idea here is to dump huge amounts of potentially relevant code directly into the prompt. While powerful, this is expensive and slow (the model has to process every token on every request), and a large enough codebase eventually exceeds the model's token limit.

Option B: Smart Retrieval (RAG)

RAG systems index the code first, then select only the 5-10 most relevant files or functions for the user’s query and send just those snippets to the LLM. Because the model reads far less text per request, this is typically faster and cheaper.
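The selection step can be sketched as follows. This is a simplified illustration: `score` is a crude keyword-overlap stand-in for vector similarity, the character budget approximates a token budget, and the snippet names are hypothetical.

```python
def score(query: str, snippet: str) -> int:
    """Stand-in relevance score: how many query words occur in the snippet."""
    text = snippet.lower()
    return sum(1 for word in set(query.lower().split()) if word in text)


def build_prompt(query: str, snippets: dict[str, str], k: int = 5,
                 budget_chars: int = 2000) -> str:
    """Take the k best-scoring snippets that fit the budget and build a prompt."""
    ranked = sorted(snippets.items(), key=lambda item: score(query, item[1]),
                    reverse=True)
    parts, used = [], 0
    for name, code in ranked[:k]:
        if used + len(code) > budget_chars:
            continue  # skip a snippet that would overflow the budget
        parts.append(f"# {name}\n{code}")
        used += len(code)
    context = "\n\n".join(parts)
    return f"Context:\n{context}\n\nQuestion: {query}"


# Hypothetical indexed snippets.
snippets = {
    "cache.py": "def get_cache(key): return store.get(key)",
    "db.py": "def connect(url): return Database(url)",
    "http.py": "def fetch(url): return requests.get(url)",
}
prompt = build_prompt("how does cache key lookup work", snippets, k=1)
print(prompt)
```

Only the selected snippets reach the model, so the prompt size is bounded by `k` and the budget rather than by the size of the repository.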

Cursor’s result suggests that smart retrieval is winning the race for large-scale context. While context window expansion is crucial for nuanced reasoning on small, immediate tasks, effective enterprise AI requires indexing and rapid retrieval across millions of lines of code. When optimized for speed (as 21-second indexing implies), RAG systems look like the stronger architecture for deep, codebase-wide understanding.

For the AI researcher, this signals that the real innovation isn't just building bigger models, but building smarter retrieval layers around them. The speed of the underlying vector infrastructure is now a key competitive differentiator.

The Impact on Developer Workflow: From Interruption to Flow State

Imagine a developer debugging an obscure error buried three layers deep in legacy code they haven't touched in years. Traditionally, this involves hours of searching documentation, trawling Git blame history, and manually cross-referencing definitions.

With near-instant indexing, the developer can ask the AI assistant: "Why is function X throwing an error when called from module Y, given the dependency injection pattern defined in file Z?" Because the index is current, the assistant can retrieve the relevant logic, the dependency injection setup, and the exact error context from across the repository and deliver an answer in seconds.

This shift drives productivity in ways that are hard to quantify on a timesheet but deeply felt by engineers:

- Reduced Context Switching: Developers stay "in the zone." Four hours of indexing was a guaranteed workflow interruption; 21 seconds is faster than grabbing a cup of coffee.

- Accelerated Onboarding: New team members can achieve functional fluency in complex codebases dramatically faster because the AI acts as an instant, comprehensive guide.

- Effective Refactoring: Large-scale code changes become less risky when the AI can instantly map out all dependencies and potential breaking points across the entire system.

This productivity boost is what companies are looking for. It’s about turning a four-hour investigation into a four-minute conversation.

The Next Frontier: Enterprise Adoption and Security

The ability to index massive, complex, and often messy proprietary code in under a minute is the key that unlocks mass enterprise adoption. Many companies were hesitant to adopt early AI coding tools because the tools couldn't reliably analyze the internal logic unique to their business, or because indexing took so long that it had to be scheduled like a long maintenance window.

The 21-second benchmark goes a long way toward alleviating these concerns.

Actionable Insights for Business Leaders

- Audit Your Infrastructure Readiness: If your current AI tooling provider still quotes multi-hour indexing times, your architecture is outdated. Prioritize vendors who have invested heavily in RAG optimization and high-speed vector processing.

- Measure Context Latency, Not Just Model Quality: The best LLM is useless if it can't access the right data fast enough. Treat the speed of context retrieval as a critical Service Level Objective (SLO) for your development tools.

- Embrace Continuous Context: Since indexing is nearly instantaneous, mandate continuous indexing or scheduled, ultra-fast updates. This keeps the AI assistant perpetually synchronized with the latest commits, ensuring that AI suggestions are based on the absolute current state of the codebase.
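One common way to keep an index continuously in sync is to re-embed only the files whose content has changed since the last run. The sketch below assumes a simple path-to-hash map as the index state. The function names are hypothetical, and nothing in the announcement confirms that any specific tool works this way.

```python
import hashlib


def digest(source: str) -> str:
    """Content hash used to detect whether a file changed."""
    return hashlib.sha256(source.encode("utf-8")).hexdigest()


def refresh(index: dict[str, str], files: dict[str, str]) -> list[str]:
    """Update `index` (path -> content hash) in place.

    Returns the sorted paths that are new or changed and need re-embedding.
    """
    stale = []
    for path, source in files.items():
        current = digest(source)
        if index.get(path) != current:
            index[path] = current
            stale.append(path)
    for path in set(index) - set(files):
        del index[path]  # file was deleted, so drop it from the index
    return sorted(stale)


# Hypothetical sequence of commits.
index: dict[str, str] = {}
first = refresh(index, {"a.py": "x = 1", "b.py": "y = 2"})
second = refresh(index, {"a.py": "x = 1", "b.py": "y = 3"})
third = refresh(index, {"a.py": "x = 1"})
print(first, second, third)
```

With this approach the cost of each update scales with the size of the change rather than the size of the repository, which is what makes per-commit refreshes practical.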

Future Implications: Beyond Code Generation

This infrastructure leap signals that AI is moving past simple code completion and toward genuine, high-level system comprehension. If indexing that once took four hours now takes 21 seconds, what does that mean for the future?

1. Architectural Compliance as a Service: Future tools won't just write code; they will instantly verify that code against complex internal architectural standards (e.g., "Does this new module adhere to our security mandate laid out in the 2022 Governance Document?").

2. Autonomous Debugging Pipelines: Errors reported from production can be immediately fed into a system that indexes the latest production code, traces the issue back through deployment history, and suggests the exact fix, all within minutes rather than days.

3. Codebase Evolution Management: Imagine performing an entire language migration or dependency upgrade. Instead of months of manual triage, the AI can assess the entire scope of necessary changes across the organization's repositories instantly, prioritizing the highest-risk updates first.

The speed of indexing is democratizing powerful, custom AI reasoning. It removes the friction associated with "teaching" an AI system about a company’s intellectual property, making deep, customized AI interaction as accessible as a standard search engine query.