The Foundation Shift: Why $1B+ Is Being Bet on AI Architectures Beyond Deep Learning

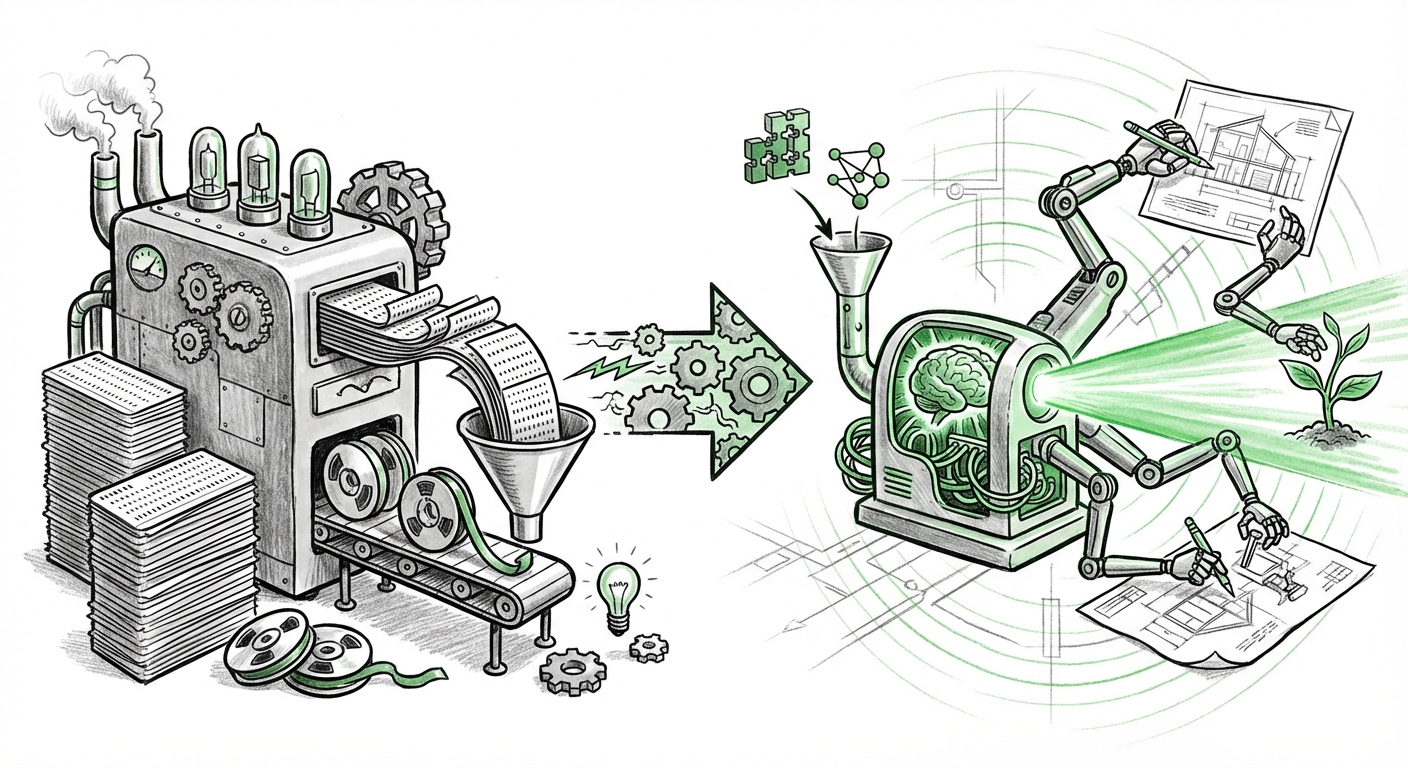

The world of Artificial Intelligence is currently dominated by large language models (LLMs) built on the Transformer architecture. These models, which power everything from advanced chatbots to complex code generation, rely on a simple but costly premise: more data and more computing power yield better results. But what if the core engine itself is reaching its limit?

Recent funding news suggests that a major fissure is opening in AI development. Two ambitious startups, Flapping Airplanes (raising $180 million) and Core Automation (seeking up to $1 billion), are not focused on building bigger LLMs. Instead, they are betting that capital on *reinventing how AI learns entirely*. This isn't iteration; it's revolution. As an AI technology analyst, I see this movement as the most significant indicator so far that the industry is preparing for a fundamental paradigm shift, away from brute-force correlation and toward smarter, more efficient reasoning.

The Cracks in the Current AI Edifice: Why Foundations Must Change

For the last decade, deep learning has been the undisputed champion. However, as we push these models to solve increasingly complex, real-world problems, their inherent weaknesses become glaringly apparent. These issues justify the aggressive venture funding seen by these new labs:

1. The Insatiable Appetite for Data (The Scaling Wall)

Current models are data gluttons. Each further performance gain requires multiplicatively more training data, and frontier models already train on much of the publicly accessible internet. As analyses of LLM limitations suggest, we are approaching the "Scaling Wall": the supply of novel, high-quality data is running out, and the cost (both financial and environmental) of processing what remains is becoming unsustainable.

For the Practitioner: This means that niche industries requiring proprietary, sensitive, or rare data cannot benefit as much as general applications. New methods must be data-efficient.
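To make the diminishing returns concrete, here is a minimal sketch that assumes loss follows a simple power law in dataset size, `loss ∝ D ** (-alpha)`. Both the functional form and the exponent `alpha = 0.1` are illustrative assumptions chosen for round numbers, not figures from the startups or from any specific model.

```python
def data_multiplier(loss_ratio: float, alpha: float) -> float:
    """Factor by which the dataset must grow to scale loss by `loss_ratio`.

    Assumes loss is proportional to D ** (-alpha), so
    new_D / old_D = loss_ratio ** (-1 / alpha).
    """
    if not 0.0 < loss_ratio <= 1.0:
        raise ValueError("loss_ratio must be in (0, 1]")
    if alpha <= 0.0:
        raise ValueError("alpha must be positive")
    return loss_ratio ** (-1.0 / alpha)


ALPHA = 0.1  # hypothetical exponent, for illustration only

ten_percent_better = data_multiplier(0.9, ALPHA)  # loss reduced by 10%
half_the_loss = data_multiplier(0.5, ALPHA)       # loss cut in half

print(f"10% lower loss needs {ten_percent_better:.2f}x the data")
print(f"50% lower loss needs {half_the_loss:.0f}x the data")
```

Under these assumptions, a 10% improvement needs nearly three times the data, and halving the loss needs about a thousand times as much. A smaller exponent makes the wall steeper still.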

2. Lack of True Reasoning and Causality

Deep learning models are masters of pattern recognition and correlation. They can tell you *what* usually follows *what*, but they often fail spectacularly at *why*. They lack true causal understanding—the ability to understand cause and effect like a human does. When faced with scenarios outside their training distribution, they become brittle and hallucinate confidently.

This gap is precisely why investors are shifting focus, as evidenced by growing interest in causal inference as an alternative to pure deep learning. VCs are realizing that building systems that can truly reason, adapt, and explain their decisions (not just predict the next word) requires different mathematical foundations.
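The difference between correlation and causation can be shown with a small simulation. In this sketch, a hidden variable `z` drives both `x` and `y`, while `x` has no effect on `y` at all. A pure pattern-matcher sees a strong correlation. Setting `x` independently (an intervention) reveals that the link is not causal. The variable names, noise levels, and sample size are all assumptions made for illustration.

```python
import random


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    vx = sum((x - mx) ** 2 for x in xs)
    vy = sum((y - my) ** 2 for y in ys)
    return cov / (vx * vy) ** 0.5


def simulate(n, intervene, seed=0):
    """Return corr(x, y) when a hidden confounder z drives y (and x, unless we intervene)."""
    rng = random.Random(seed)
    xs, ys = [], []
    for _ in range(n):
        z = rng.gauss(0, 1)  # hidden common cause
        if intervene:
            x = rng.gauss(0, 1)  # x is set from outside, independent of z
        else:
            x = z + 0.5 * rng.gauss(0, 1)  # x merely reflects z
        y = z + 0.5 * rng.gauss(0, 1)  # y depends on z only, never on x
        xs.append(x)
        ys.append(y)
    return pearson(xs, ys)


observed = simulate(5000, intervene=False)
intervened = simulate(5000, intervene=True)
print(f"observational correlation:  {observed:.2f}")
print(f"interventional correlation: {intervened:.2f}")
```

A model trained only on the observational data would happily predict `y` from `x`, and would be wrong the moment someone changed `x` directly. Causal methods aim to distinguish these two situations.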

3. The Brittleness Problem and Catastrophic Forgetting

When you teach a deep learning model a new skill, it often forgets an old one—a problem known as catastrophic forgetting. Humans learn continuously; AI struggles to integrate new knowledge without erasing old expertise. This severely limits AI deployment in dynamic, long-term environments like robotics or complex scientific research.
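Catastrophic forgetting shows up even in a toy model. The sketch below trains a two-weight linear model with plain gradient descent, first on a hypothetical task A and then on a hypothetical task B. Both tasks can be solved at once (by `w = [1, 2]`), yet training them one after the other overwrites the shared weight that task A depended on. The tasks, learning rate, and epoch count are invented for illustration.

```python
def predict(w, x):
    """Linear model: dot product of weights and inputs."""
    return sum(wi * xi for wi, xi in zip(w, x))


def train(w, data, lr=0.1, epochs=500):
    """Plain SGD on squared error; returns a new weight list."""
    w = list(w)
    for _ in range(epochs):
        for x, y in data:
            err = predict(w, x) - y
            w = [wi - lr * 2 * err * xi for wi, xi in zip(w, x)]
    return w


def mse(w, data):
    """Mean squared error of the model on a dataset."""
    return sum((predict(w, x) - y) ** 2 for x, y in data) / len(data)


# Two toy tasks that share the first weight.
task_a = [((1.0, 0.0), 1.0)]  # requires w[0] == 1
task_b = [((1.0, 1.0), 3.0)]  # requires w[0] + w[1] == 3

w_after_a = train([0.0, 0.0], task_a)
w_after_b = train(w_after_a, task_b)              # sequential: A, then B
w_joint = train([0.0, 0.0], task_a + task_b)      # both tasks together

print("task A error after learning A:     ", round(mse(w_after_a, task_a), 6))
print("task A error after then learning B:", round(mse(w_after_b, task_a), 6))
print("task A error with joint training:  ", round(mse(w_joint, task_a), 6))
```

Sequential training drives the task A error from near zero back up to about 1.0, while joint training keeps both tasks solved. Continual-learning research looks for ways to get the joint result without having to revisit old data.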

The New Frontiers: Architectures Beyond the Transformer

The bets placed on companies like Flapping Airplanes and Core Automation, which together could exceed $1 billion, signal a strong belief in alternatives. These efforts are typically chasing architectures that address the inherent weaknesses of standard neural networks. We are seeing a tangible push toward AI architectures beyond transformers, focusing on efficiency and robustness.

Neuro-Symbolic Integration

One major contender involves combining the pattern-matching strength of deep learning (neural networks) with the logical structure of classical AI (symbolic reasoning). Imagine a system that can read and understand vast text (the neural part) but then use structured, logical rules to solve math problems or prove theorems reliably (the symbolic part). This hybrid approach offers the potential for AI that is both flexible and rigorously logical.
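The division of labor in such a hybrid can be sketched in a few lines. Here the "neural" half is only a stand-in: `neural_extract` is a hypothetical stub that uses a regular expression where a real system would use a learned model to pull a formal expression out of free text. The symbolic half, `symbolic_eval`, then evaluates that expression with exact rational arithmetic. None of this reflects how either startup actually works.

```python
import ast
import operator
import re
from fractions import Fraction

# Arithmetic operators the symbolic evaluator accepts.
_OPS = {
    ast.Add: operator.add,
    ast.Sub: operator.sub,
    ast.Mult: operator.mul,
    ast.Div: operator.truediv,
}


def neural_extract(text: str) -> str:
    """Hypothetical stand-in for a neural parser: find an arithmetic expression in text."""
    match = re.search(r"[\d(][\d\s+\-*/()]*[\d)]", text)
    if match is None:
        raise ValueError("no arithmetic expression found")
    return match.group(0).strip()


def symbolic_eval(expr: str) -> Fraction:
    """Evaluate +, -, *, / over integers exactly, by walking the parsed syntax tree."""

    def walk(node):
        if isinstance(node, ast.Expression):
            return walk(node.body)
        if isinstance(node, ast.Constant) and isinstance(node.value, int):
            return Fraction(node.value)
        if isinstance(node, ast.BinOp) and type(node.op) in _OPS:
            return _OPS[type(node.op)](walk(node.left), walk(node.right))
        if isinstance(node, ast.UnaryOp) and isinstance(node.op, ast.USub):
            return -walk(node.operand)
        raise ValueError("unsupported expression")

    return walk(ast.parse(expr, mode="eval"))


def answer(question: str) -> Fraction:
    """Pipeline: flexible extraction first, rigorous rule-based evaluation second."""
    return symbolic_eval(neural_extract(question))


print(answer("What is 12 * (3 + 4)?"))         # 84
print(answer("How much is 1 / 3 + 1 / 6 ?"))   # 1/2
```

The design choice worth noticing is that the answer never comes from the pattern-matcher itself. It only proposes a structure, and the rule-based component is responsible for correctness, which is what makes the result checkable.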

Biologically Plausible Learning

Other emerging fields, often inspired by neuroscience, aim for biologically plausible AI models. This includes exploring how the brain learns sparsely, continuously, and with very little energy. Research into neuromorphic computing—hardware designed to mimic the brain’s structure—and algorithms that focus on sparse connections rather than massive density are key areas.

If these startups are successful, the resulting AI won't just be slightly better at summarizing text; it could be fundamentally smarter, capable of learning from fewer examples, understanding context more deeply, and perhaps reaching general intelligence sooner. This is the argument for the scale of the funding: changing the physics of AI requires immense resources.

Investor Sentiment: A Shift from Optimization to Foundational Rebuilds

The sheer size of the capital being raised—especially the potential billion-dollar raise—is not just about paying engineers; it is about buying time to conduct high-risk, high-reward fundamental research. This investment climate is crucial to track:

- DeepTech Maturation: The venture landscape has matured enough to back "DeepTech." Investors are now more comfortable supporting ventures whose payoff is five to ten years away, provided the potential upside is truly disruptive.

- The "One More Bet": After pouring hundreds of billions of dollars into optimizing the current stack (better GPUs, bigger LLMs), the market seems to be saying, "We need one more foundational bet to unlock true AGI." The $180M and potential $1B raises position these companies as leading candidates for that next leap.

- The Risk of the Next AI Winter: As analysts who track the history of AI hype cycles know, massive speculative funding can lead to collapse if promised breakthroughs fail to materialize. However, the need for better data efficiency is so pressing that this funding feels less like hype and more like essential infrastructure investment for the *next* decade of AI.

Implications for Business and Society: What This Means for Tomorrow

The success or failure of these foundational shifts will dictate the trajectory of technology adoption globally. For executives and strategists, understanding this dynamic is paramount.

For Businesses: Moving Beyond API Dependency

Currently, most enterprises rely on external API access to powerful LLMs. If Flapping Airplanes or Core Automation succeed, the future enterprise might move toward bespoke, data-efficient models that require minimal fine-tuning and can operate reliably on local, domain-specific knowledge.

Actionable Insight: Businesses should closely monitor advancements in *data efficiency* and *explainability* coming from these foundational labs. Investing now in internal teams that explore causal methods or neuro-symbolic frameworks positions a company to adopt the next generation of AI ahead of competitors that rely solely on monolithic models.

For Society: Efficiency and Trust

The societal implications hinge on data efficiency. If AI can learn complex tasks from 1,000 examples instead of 100 billion, the environmental footprint shrinks dramatically, and access to powerful AI tools spreads beyond the few entities that can afford massive cloud compute bills.

Furthermore, if these new systems can deliver genuine causal understanding and explainable logic (a hallmark of symbolic systems), the trust deficit currently plaguing AI deployment in critical sectors like medicine, law, and finance could begin to close. We would move from systems that are compelling black boxes to systems that are verifiable partners.

Conclusion: Preparing for the Architectural Reboot

The news that two distinct AI labs are aggressively pursuing fundamental overhauls in learning mechanisms is the clearest signal yet that the era of scaling existing deep learning paradigms is nearing its natural, economically constrained end. The $180 million and the pursuit of $1 billion are not just monetary figures; they are votes of no confidence in the status quo and massive endorsements for innovation at the mathematical core of artificial intelligence.

We are witnessing the very early stages of the next great AI transition. Whether these specific startups succeed or fail, the technological pressure driving them—the need for efficiency, reasoning, and robustness—is irreversible. The future of AI won't just be bigger; it will be fundamentally smarter, more architecturally diverse, and hopefully, far more reliable.