The AI Scaling Crucible: Why Compute, Data, and Cost Are Defining the Next Wave of Innovation

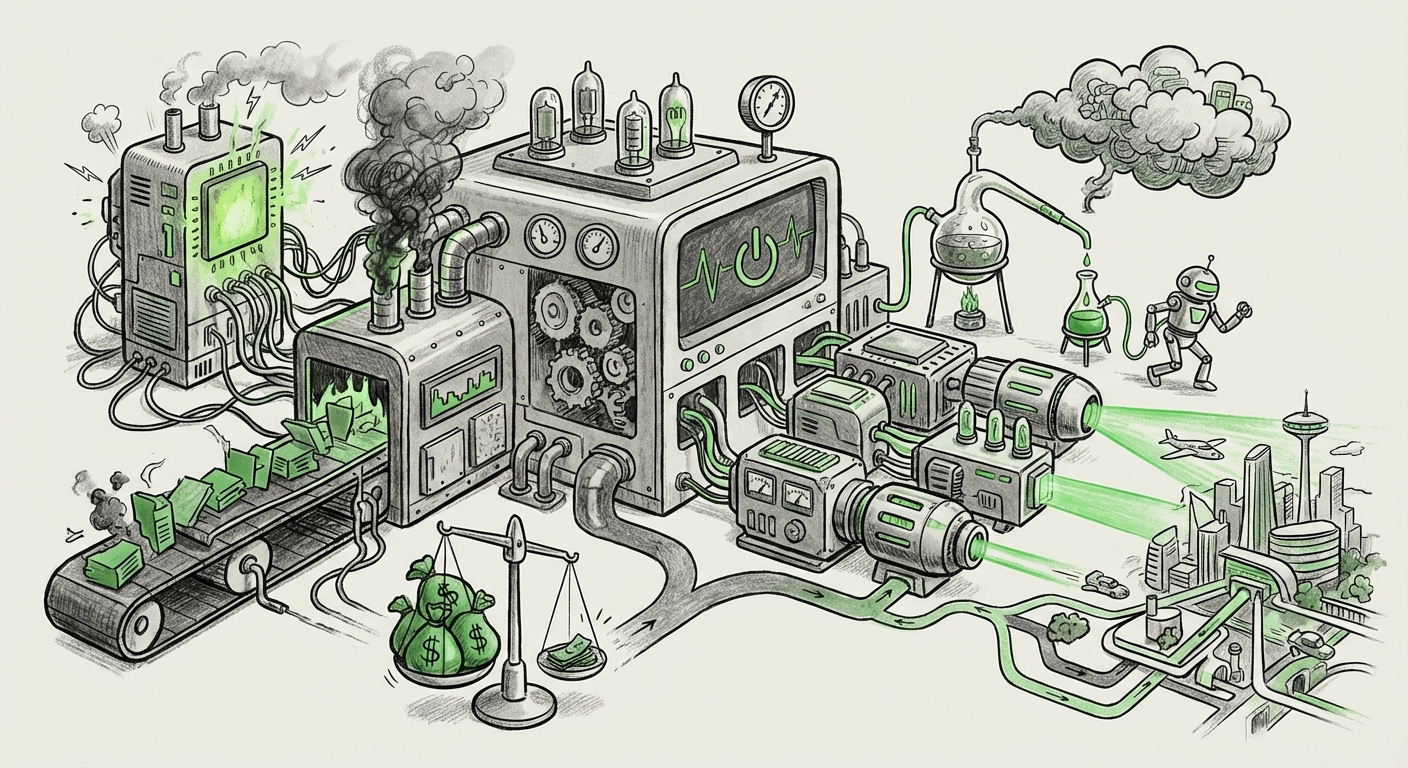

The current era of Artificial Intelligence feels defined by breakthroughs—models that write poetry, design proteins, and code software. Yet, beneath the glittering surface of these achievements lies a harsh reality for the innovators trying to build the next generation of AI companies: scaling is lethal. The ambition to build bigger, better models often runs headfirst into the hard limits of physics, finance, and logistics.

Recent analysis highlights that many AI-native startups are collapsing not due to a flawed idea, but due to fundamental mistakes in managing their core resources: Data, Compute, and Memory. This isn't just a theoretical problem; it’s an existential crisis playing out on the balance sheets of Silicon Valley. To understand the future of AI, we must look beyond the model weights and examine the infrastructure battle being waged.

The Hardware Tightrope: Beyond the GPU Monopoly

For years, AI training has been dominated by a single vendor's GPUs. However, as demand for massive training runs skyrockets, especially for Large Language Models (LLMs), reliance on one supplier becomes a critical vulnerability. Teams are now seriously considering alternatives such as the AMD MI355X, an accelerator that promises high performance for both inference and training.

This signals a vital technological pivot. Startups are realizing that simply buying the latest, most powerful chips isn't enough; they must optimize for the entire lifecycle, from initial LLM training to cost-effective deployment (inference). Mistakes here—such as miscalculating memory scaling requirements or accepting poor performance trade-offs—lead directly to crippling operational costs.
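To make the memory-scaling point concrete, here is a rough back-of-the-envelope sketch. It assumes mixed-precision training with the Adam optimizer and a commonly used accounting of about 16 bytes per parameter for model state; the 7B model size and the 80 GiB device capacity are hypothetical, and activations, buffers, and the inference KV cache are deliberately left out.

```python
import math

# Assumed accounting for mixed-precision Adam training (a rule of thumb, not a measurement):
#   fp16 weights (2) + fp16 gradients (2)
#   + fp32 master weights (4) + Adam momentum (4) + Adam variance (4) = 16 bytes
TRAIN_BYTES_PER_PARAM = 16
INFER_BYTES_PER_PARAM_FP16 = 2
GIB = 1024 ** 3


def training_memory_gib(num_params: int) -> float:
    """Model-state memory for training, excluding activations and buffers."""
    return num_params * TRAIN_BYTES_PER_PARAM / GIB


def inference_memory_gib(num_params: int,
                         bytes_per_param: float = INFER_BYTES_PER_PARAM_FP16) -> float:
    """Weight memory for inference, excluding the KV cache and activations."""
    return num_params * bytes_per_param / GIB


def min_devices(required_gib: float, device_gib: float) -> int:
    """Smallest device count whose combined memory covers the requirement."""
    return math.ceil(required_gib / device_gib)


if __name__ == "__main__":
    params = 7_000_000_000   # hypothetical 7B-parameter model
    device_gib = 80.0        # hypothetical accelerator memory capacity
    train = training_memory_gib(params)
    infer = inference_memory_gib(params)
    print(f"training state:  {train:.1f} GiB -> {min_devices(train, device_gib)} device(s)")
    print(f"fp16 inference:  {infer:.1f} GiB -> {min_devices(infer, device_gib)} device(s)")
```

Under these assumptions the same model needs roughly eight times more memory to train than to serve, which is why a chip that is comfortable for deployment can still be too small for training.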

The Financial Moat: Compute as the New Capital Requirement

When discussing scaling failures, the financial impact is immediate and severe. Training foundational models requires staggering computational power. For a startup burning investor cash, a single failed training run costing millions can wipe out its entire runway. As a result, the cost of training large language models is becoming a primary barrier to entry for startups, effectively establishing a massive financial moat around incumbents who already possess data centers. The future of AI innovation hinges on whether this moat can be breached by efficiency, rather than sheer spending power.
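A rough way to size a training run before committing money to it is the widely used approximation that a dense transformer needs about 6 × parameters × tokens floating-point operations. The sketch below applies that rule; the peak throughput, utilization, and hourly price are hypothetical placeholders, not figures for any real chip or cloud.

```python
def training_flops(num_params: float, num_tokens: float) -> float:
    """Approximate training compute using the 6 * N * D rule of thumb."""
    return 6.0 * num_params * num_tokens


def accelerator_hours(total_flops: float, peak_flops_per_s: float,
                      utilization: float) -> float:
    """Device-hours needed at a given fraction of peak throughput."""
    if not 0.0 < utilization <= 1.0:
        raise ValueError("utilization must be in (0, 1]")
    return total_flops / (peak_flops_per_s * utilization) / 3600.0


def run_cost_usd(hours: float, price_per_hour: float) -> float:
    """Cost of one complete run; a failed run that restarts pays this again."""
    return hours * price_per_hour


if __name__ == "__main__":
    flops = training_flops(7e9, 1e12)             # hypothetical: 7B params, 1T tokens
    hours = accelerator_hours(flops, 1e15, 0.4)   # hypothetical: 1 PFLOP/s peak, 40% utilization
    print(f"{flops:.2e} FLOPs, {hours:,.0f} device-hours, "
          f"${run_cost_usd(hours, 2.0):,.0f} at a hypothetical $2 per device-hour")
```

The estimate is only as good as the utilization figure, which in practice is often the least certain input.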

What this means for the future: We will see a bifurcation. A few large players will continue to build the behemoth, frontier models. For everyone else, success will depend on *efficiency* and *optimization*, not just size.

The Ecosystem War: Diversification and Portability

The choice of accelerator hardware is no longer just a performance decision; it's an ecosystem commitment. While one vendor may offer superior peak performance, relying solely on their proprietary software stack locks a company into their platform. This vendor lock-in prevents agility.

This is part of a broader industry trend toward chip diversification beyond NVIDIA. Companies are actively seeking solutions that run effectively on alternative silicon, such as Google’s TPUs or Amazon’s Inferentia chips, or even exploring open-source hardware concepts. A startup that engineers its software to be portable across different hardware architectures (a complex task that depends on well-designed hardware abstraction layers) gains a significant strategic advantage.

If a startup fails because it chose hardware that couldn't scale or was too expensive to operate in the long run, the lesson is clear: software portability beats peak performance on any single platform. The ability to switch or scale smoothly across varied compute environments will become a key indicator of long-term technological resilience.
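One way to picture a hardware abstraction layer is a small registry that application code queries instead of naming a vendor directly. The sketch below is purely illustrative: the `Backend` type, the `accel_a` name, and the availability probes are all hypothetical, and a real system would delegate to an actual ML framework rather than a pure-Python dot product.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Sequence

Vector = List[float]


@dataclass(frozen=True)
class Backend:
    name: str
    is_available: Callable[[], bool]       # probe for the hardware or driver
    dot: Callable[[Vector, Vector], float]  # one operation, as a stand-in for a kernel library


def _cpu_dot(a: Vector, b: Vector) -> float:
    return sum(x * y for x, y in zip(a, b))


REGISTRY: Dict[str, Backend] = {}


def register(backend: Backend) -> None:
    REGISTRY[backend.name] = backend


# Hypothetical backends: the accelerator is registered but reports itself as absent.
register(Backend("cpu", lambda: True, _cpu_dot))
register(Backend("accel_a", lambda: False, _cpu_dot))


def select_backend(preferences: Sequence[str]) -> Backend:
    """Return the first registered and available backend in preference order."""
    for name in preferences:
        backend = REGISTRY.get(name)
        if backend is not None and backend.is_available():
            return backend
    raise RuntimeError(f"no available backend among {list(preferences)}")


if __name__ == "__main__":
    backend = select_backend(["accel_a", "cpu"])
    print(backend.name, backend.dot([1.0, 2.0, 3.0], [4.0, 5.0, 6.0]))
```

Because callers only depend on `select_backend`, adding or dropping a vendor becomes a registry change rather than an application rewrite.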

The Data Imperative: The Unforgiving Foundation

Hardware is merely the engine; data is the fuel. A recurring theme in AI scaling failures is the underestimation of the data challenge. You can deploy the most advanced AMD chips, but if the data used for training is noisy, biased, or insufficient, the resultant model will perform poorly. This failure mode is arguably more insidious than compute failure because it can take longer to diagnose.

Iterative, high-quality data curation, sometimes described as building a 'data flywheel', often yields better ROI than blindly pouring compute cycles onto mediocre datasets. For many businesses, the future success of their proprietary AI won't come from training a GPT-5 competitor, but from perfecting the unique, clean data sets that feed a smaller, specialized model.
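As a minimal illustration of what a curation pass can look like, the sketch below normalizes text, drops very short or mostly non-alphabetic records, and removes exact duplicates by hash. The thresholds and the `curate` helper are assumptions chosen for the example; real pipelines typically add near-duplicate detection and task-specific quality checks.

```python
import hashlib
import re
from typing import Iterable, List

_WHITESPACE = re.compile(r"\s+")


def normalize(text: str) -> str:
    """Collapse whitespace and lowercase so trivial variants compare equal."""
    return _WHITESPACE.sub(" ", text).strip().lower()


def alpha_ratio(text: str) -> float:
    """Fraction of characters that are letters or spaces."""
    if not text:
        return 0.0
    return sum(ch.isalpha() or ch.isspace() for ch in text) / len(text)


def curate(records: Iterable[str], min_chars: int = 20,
           min_alpha_ratio: float = 0.8) -> List[str]:
    """Keep records that pass the length and noise filters, dropping exact duplicates."""
    seen = set()
    kept: List[str] = []
    for raw in records:
        norm = normalize(raw)
        if len(norm) < min_chars or alpha_ratio(norm) < min_alpha_ratio:
            continue
        digest = hashlib.sha256(norm.encode("utf-8")).hexdigest()
        if digest in seen:
            continue
        seen.add(digest)
        kept.append(raw.strip())
    return kept


if __name__ == "__main__":
    sample = [
        "The contract terminates after thirty days.",
        "the  contract terminates after   thirty days.",  # duplicate after normalization
        "ok",                                             # too short
        "#### 1234 $$$$ 5678 %%%% 9012 ####",             # mostly symbols and digits
    ]
    print(curate(sample))
```

Running filters like these on every new batch, and feeding model errors back into the rules, is one simple form the flywheel can take.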

Implications for Business: Chief Data Officers (CDOs) and Data Science leads must pivot from viewing data collection as a precursor to modeling, to viewing data curation as a continuous, mission-critical product development cycle that deserves equal or greater investment than the GPU cluster.

The Counter-Revolution: Efficiency and Democratization

If the road to success requires billions in capital and access to vast server farms, AI innovation risks becoming centralized among a few tech giants. Fortunately, the industry is pushing back with a powerful counter-trend: efficiency.

This movement is driven by the rise of efficient small language models (SLMs). Techniques like model distillation (training a small model to mimic a larger one) and quantization (storing weights at lower numeric precision, which shrinks the model with little loss of accuracy) are changing how models are deployed. Models that can run effectively on a single local server, or even a high-end laptop, unlock vast new application spaces.
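To show the idea behind quantization, here is a minimal sketch of symmetric, per-tensor int8 quantization in plain Python. It is one simple scheme among many and is not tied to any particular library; the weights are made-up values. Each weight is stored as an integer in [-127, 127] plus one shared scale, so storage drops from 4 bytes per 32-bit float to 1 byte per value.

```python
from typing import List, Tuple


def quantize_int8(weights: List[float]) -> Tuple[List[int], float]:
    """Map floats to integers in [-127, 127] using a single shared scale."""
    max_abs = max((abs(w) for w in weights), default=0.0)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized: List[int], scale: float) -> List[float]:
    """Recover approximate float values from the integers and the scale."""
    return [q * scale for q in quantized]


if __name__ == "__main__":
    weights = [0.5, -1.27, 0.0, 1.0]   # made-up example weights
    q, scale = quantize_int8(weights)
    restored = dequantize(q, scale)
    worst = max(abs(a - b) for a, b in zip(weights, restored))
    print(q, scale, worst)
```

The rounding error per weight is at most half the scale, which is why accuracy usually degrades only slightly when the weight distribution has no extreme outliers.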

This democratization of infrastructure means startups no longer need to chase the frontier models. Instead, they can target niche, high-value problems where a specialized, efficient SLM outperforms a general, bloated LLM. This offers a crucial lifeline for smaller players, validating that an alternative success path exists outside the centralized compute arms race.

What This Means for the Future of AI and How It Will Be Used

The convergence of these scaling pressures—financial constraints, hardware diversification needs, data quality demands, and the efficiency counter-movement—paints a clear picture of the next few years in AI:

- Specialization Over Generalization: We will see fewer attempts to create one "do-everything" AI. The successful startups will master specific domains (e.g., legal review, specific manufacturing QA) using highly optimized, smaller models trained on curated private data.

- Inference Economics Reign Supreme: Training costs are massive, but inference costs (the cost to *use* the model daily) compound over millions of queries. Future architecture decisions—whether using an MI355X for faster throughput or a highly compressed SLM for lower latency—will be dominated by inference cost analysis.

- The Rise of the Infrastructure Agnostic Developer: Developers who can write code that abstracts away the underlying hardware (be it specialized AMD, standard NVIDIA, or custom silicon) will be invaluable. Portability becomes a core technical skill.

- Data Governance as Strategic IP: Proprietary, clean, high-quality data pipelines will become more valuable than the model architecture itself. Companies that fail to invest heavily in data governance will find their expensive compute investments producing poor returns.
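The inference-economics point can be sketched with simple arithmetic: divide the hourly price of an accelerator by the tokens it serves per hour, then multiply by traffic. All figures below are hypothetical, and the calculation assumes the device is fully utilized, which real deployments rarely achieve.

```python
def cost_per_million_tokens(price_per_hour: float, tokens_per_second: float) -> float:
    """Serving cost per one million tokens on a fully utilized device."""
    tokens_per_hour = tokens_per_second * 3600.0
    return price_per_hour / tokens_per_hour * 1_000_000


def monthly_inference_cost(queries_per_day: float, tokens_per_query: float,
                           usd_per_million_tokens: float, days: int = 30) -> float:
    """Total serving cost over a month of steady traffic."""
    total_tokens = queries_per_day * tokens_per_query * days
    return total_tokens / 1_000_000 * usd_per_million_tokens


if __name__ == "__main__":
    # Hypothetical: $2 per device-hour, 1,000 tokens/s, 1M queries/day, 500 tokens each.
    cpm = cost_per_million_tokens(2.0, 1000.0)
    print(f"${cpm:.4f} per million tokens")
    print(f"${monthly_inference_cost(1_000_000, 500, cpm):,.2f} per month")
```

Doubling throughput through compression or a faster chip halves the per-token cost, and that saving recurs every month the model is in service.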

Actionable Insights for Tech Leaders

For founders and CTOs navigating this complex terrain, the path forward requires disciplined focus:

- Audit Your Compute Strategy: Don't just buy the fastest chip. Analyze the total cost of ownership (TCO) for training *and* long-term inference. Explore cloud providers offering competitive pricing on alternative accelerators if your workload allows for it.

- Institute a Data-First Mandate: Allocate significant engineering resources not just to prompt engineering, but to data validation, cleaning, and feedback loops. Your data moat is your ultimate protection against better-funded competitors.

- Embrace Model Minimalism: Before starting a massive training project, test whether a 7B or 13B parameter model, heavily fine-tuned on your specific task, can deliver most of the performance at a small fraction of the cost (for example, 90% of the quality at 1% of the cost).

- Architect for Flexibility: Build software layers using established, portable ML frameworks. Avoid deep dependencies on proprietary, vendor-specific optimization libraries unless absolutely necessary for survival.
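The TCO comparison in the first point above can be sketched as a break-even calculation between two options: one with no upfront cost but expensive to run, and one that requires an upfront investment but is cheaper each month. The `Option` type and every dollar figure here are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Option:
    name: str
    upfront_usd: float   # one-time cost, such as training or fine-tuning
    monthly_usd: float   # recurring cost, such as inference and hosting

    def tco(self, months: float) -> float:
        """Total cost of ownership after the given number of months."""
        return self.upfront_usd + self.monthly_usd * months


def break_even_months(a: Option, b: Option) -> Optional[float]:
    """Months until both options cost the same, or None if that never happens."""
    monthly_gap = a.monthly_usd - b.monthly_usd
    if monthly_gap == 0:
        return None
    months = (b.upfront_usd - a.upfront_usd) / monthly_gap
    return months if months >= 0 else None


if __name__ == "__main__":
    general = Option("large general model", upfront_usd=0.0, monthly_usd=20_000.0)
    small = Option("fine-tuned small model", upfront_usd=60_000.0, monthly_usd=5_000.0)
    print("break-even (months):", break_even_months(general, small))
    for option in (general, small):
        print(f"{option.name}: ${option.tco(12):,.0f} over 12 months")
```

If the expected lifetime of the product is shorter than the break-even point, the cheaper-to-start option wins despite its higher running cost.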

The journey of AI development is shifting from a brute-force marathon to a nuanced tactical operation. The "why AI-native startups fail" narrative is a powerful warning: success now belongs to those who master efficiency, not just scale. The future AI landscape will be defined not by who has the biggest model, but by who can deploy the right model, at the *right* cost, powered by the *right* data.