The Positioning Paradox: Why the ChatGPT Agent Stumble Signals the Next Frontier in AI Adoption

The promise of Artificial Intelligence has always been about delegation: handing off complex, multi-step tasks to a tireless digital assistant. We call these assistants AI Agents. They are supposed to be the next big leap beyond simple chatbots, capable of planning, executing, and verifying work autonomously.

However, recent reports surrounding OpenAI’s dedicated ChatGPT Agent feature suggest a harsh reality check: capability does not automatically equal adoption. With user numbers reportedly plummeting from four million to under one million, the issue appears to have been not the *power* of the technology but its *presentation*. The central culprit looks like a classic product management failure: users did not know what the feature was actually for, or how it differed from what they already had.

This stumble is far more than just a footnote in OpenAI’s quarterly review; it is a critical data point shaping the future of how we interact with sophisticated AI. This article analyzes what this failure means for the path toward true AI integration, looking at product confusion, consumer readiness, and the necessary evolution of the "Agent" category.

The Identity Crisis: When Features Overlap the Foundation

The core problem, as detailed in reports, was one of branding and interface design. ChatGPT, even in its baseline mode, has always possessed elements of agentic behavior—it can browse the web, analyze data, and write code if prompted correctly. When OpenAI introduced a separate "Agent" mode, it created immediate friction:

- Redundancy Perception: If the standard chat box could already act somewhat like an agent, why was a dedicated, separate mode necessary? Users defaulted to the familiar, proven interface.

- Unclear Value Gap: The new feature lacked a compelling "killer application" that immediately differentiated itself. Users couldn't quickly articulate, "I use the Agent for X, and the standard chat for Y."

- Discoverability Failure: A significant portion of users simply didn't know the new mode existed, suggesting marketing and in-app guidance were insufficient for such a fundamental shift in interaction.

This situation mirrors broader industry challenges in differentiating between the *model* (like GPT-4) and the *product* (like ChatGPT Plus). As these foundational models become more powerful, companies often bolt on new functionalities as distinct product layers. For the average user, this creates confusion. They are paying for a service, and when a new button appears, they struggle to integrate it into their mental model of the tool.

Corroboration: A Systemic Naming Problem

This issue isn't unique to OpenAI. Competitors also struggle with layering features, and users end up frustrated. Across major AI releases, clear differentiation between product names remains a persistent weak spot: if the naming suggests overlap, users default to the path of least resistance.

For instance, as external analysis of AI feature naming has noted, constant rebranding and re-layering (moving from Bing Chat to Copilot, for example) often obscures the underlying capability for the end user, reinforcing the idea that one mode is merely a slight tweak on another.

For a relevant analysis on this topic, see The Verge, "Why naming AI features is so hard for Microsoft and OpenAI."

Consumer Readiness: The Leap to True Autonomy

Beyond poor positioning, the usage drop suggests a deeper psychological hurdle: consumer comfort levels with autonomous AI.

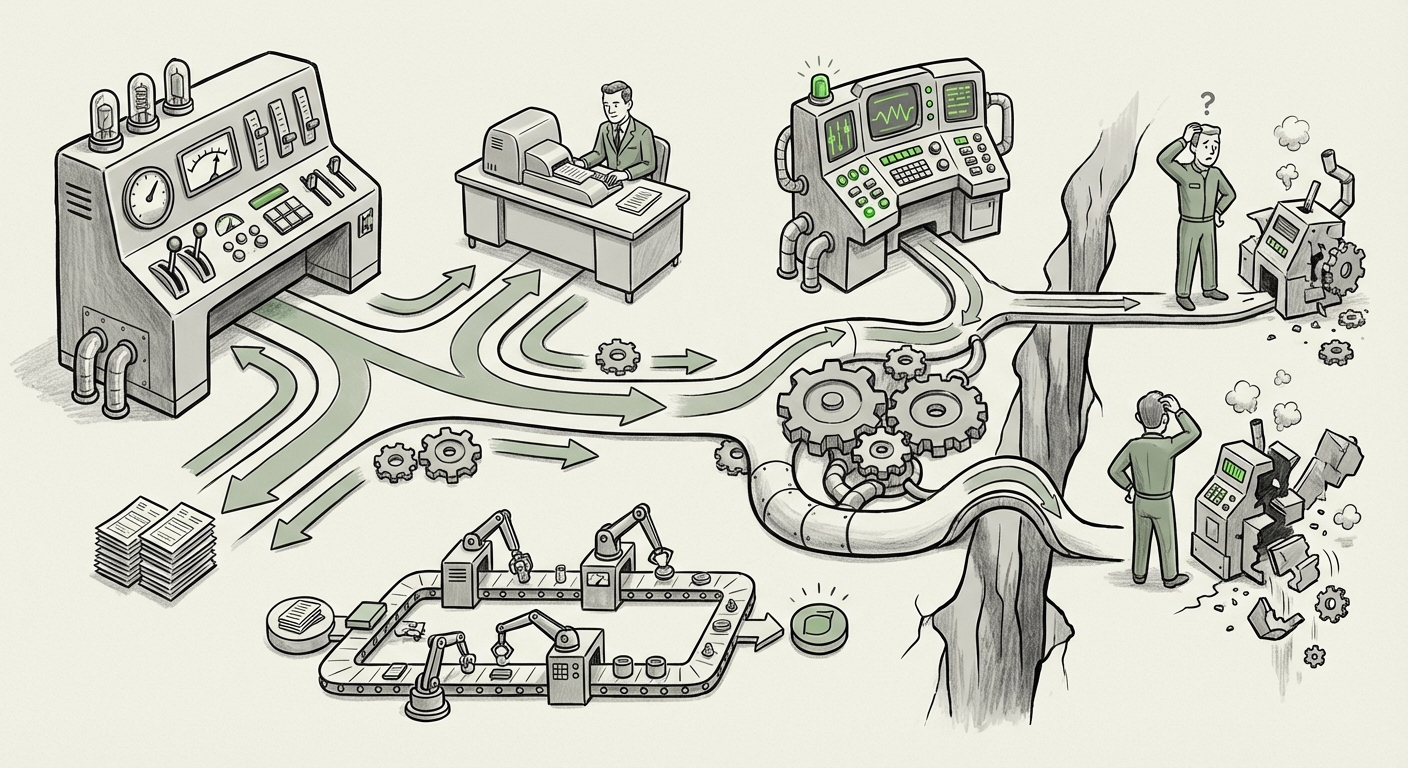

When a user interacts with the standard ChatGPT, they operate in a comfortable, familiar paradigm: Input Prompt -> Receive Output. It is a powerful conversation, but the user remains firmly in control of the pacing and the sequence of actions.

An "Agent," by definition, implies delegation of *intent*. It suggests the AI will plan multiple steps, navigate potential roadblocks, and execute tasks without constant human micromanagement. For many everyday users, this level of autonomy feels like a significant cognitive jump.

The "Agent" Cognitive Load

For a consumer wondering how to quickly summarize a document or draft an email, introducing an "Agent" mode—which might require a different setup or a more complex initial instruction—increases the cognitive load. Users ask themselves: *“Is this going to do what I want? Will it mess up? Do I have to teach it a new way to work?”*

If the value proposition isn't instantly obvious (e.g., "This saves you five hours of spreadsheet work"), users revert to the known quantity. This market research theme—the gap between technological possibility and consumer behavioral readiness—is crucial for anyone planning consumer AI rollouts. People are highly comfortable with AI as an advanced tool, but less so as an autonomous partner.

The Enterprise Contrast: Where Agents Are Thriving

To understand the future, we must contrast the consumer confusion with the enterprise adoption of agents. While consumers rejected the abstract "Agent" button, businesses are rapidly adopting highly specific, task-oriented agents.

This divergence is key: Enterprise adoption prioritizes utility within a closed, high-stakes workflow, while consumer adoption demands broad, intuitive accessibility.

Task-Specific Utility Wins

In the business world, success is being found where the agent’s purpose is narrow and its impact is measurable. Consider tools like GitHub Copilot, which acts as a coding agent. Developers readily adopt it because its mandate is clear: write code faster. It isn't asked to manage their calendar or book travel; it solves one massive pain point.

The lesson here is that for AI agents to succeed widely, they must either become an invisible, integrated layer in existing software (like Copilot in VS Code) or solve a problem so profoundly difficult that the initial confusion is easily outweighed by the relief of delegation. The general-purpose ChatGPT Agent failed to deliver on this decisive utility.

Implications for the Future of AI Development

The ChatGPT Agent episode provides four major takeaways for every company developing AI tools:

1. Integrate, Don't Isolate, Agentic Capabilities

The future likely lies in the seamless integration of agentic workflows into the default interface. Instead of forcing users into a separate "Agent Mode," the base ChatGPT (or Copilot, or Claude) should gradually gain more autonomous abilities based on the complexity of the prompt. If a user asks, "Plan my two-week trip to Japan, including flights and budget," the system should respond by saying, "I will perform this in three steps: (1) research flights, (2) find hotel pricing, (3) compile itinerary." The user observes the steps but doesn't need to click a new button to initiate the process.
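The idea of escalating inside the default interface can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not how any vendor implements it: the cue-word heuristic, the `Response` type, and `stub_planner` are all hypothetical, and a real system would more plausibly ask the model itself whether a prompt needs several steps.

```python
from dataclasses import dataclass, field
from typing import Callable

# Hypothetical cue words; a production system would more likely ask the
# model itself whether a request needs several steps.
MULTI_STEP_CUES = ("plan", "including", "compare", "book", "research")


@dataclass
class Response:
    mode: str                 # "chat" or "agentic"
    announcement: str         # what the user sees before any work starts
    steps: list[str] = field(default_factory=list)


def looks_multi_step(prompt: str) -> bool:
    """Crude heuristic: two or more cue words suggest a multi-step task."""
    text = prompt.lower()
    return sum(cue in text for cue in MULTI_STEP_CUES) >= 2


def respond(prompt: str, planner: Callable[[str], list[str]]) -> Response:
    """Answer directly, or announce a visible plan, in the same interface."""
    if not looks_multi_step(prompt):
        return Response(mode="chat", announcement="Answering directly.")
    steps = planner(prompt)
    numbered = ", ".join(f"({i}) {step}" for i, step in enumerate(steps, 1))
    return Response(
        mode="agentic",
        announcement=f"I will perform this in {len(steps)} steps: {numbered}.",
        steps=steps,
    )


def stub_planner(prompt: str) -> list[str]:
    """Stand-in for a model call; returns the steps from the trip example."""
    return ["research flights", "find hotel pricing", "compile itinerary"]


reply = respond(
    "Plan my two-week trip to Japan, including flights and budget",
    stub_planner,
)
print(reply.announcement)
```

The point of the design is that the user never picks a mode: the same `respond` entry point handles both a one-line question and a multi-step request, and the plan is announced before any work begins.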

2. Clarity Over Hype: The Need for Actionable Naming

The word "Agent" is exciting for researchers but potentially intimidating for general users. Future products must adopt naming conventions that describe the *outcome*, not the *mechanism*. Instead of "AI Agent," consider "Automated Project Planner" or "Deep Research Assistant." The market needs to know what it gets, not just what it is.

3. The B2B Vector is the Leading Edge

For the next 18-24 months, investment and focus should remain heavily on B2B applications, where employees are paid to use the tool and the ROI of task automation is clear. Enterprises have the mandate, the workflow scaffolding, and the willingness to train employees on the complex new interfaces that multi-step automation requires.

4. Managing User Expectations for Autonomy

Companies must become experts in managing the "handoff" from human control to AI autonomy. This involves robust transparency layers—showing the user *what* the agent is doing, *why* it chose a certain action, and providing easy "undo" or "override" buttons. If users feel they have lost control, they will abandon the feature, regardless of its eventual success rate.
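One way to picture such a transparency layer is a session object that records what each action does and why, asks for approval before running it, and can reverse the most recent step. The sketch below is illustrative only; `Action`, `TransparentSession`, and the calendar example are hypothetical names, not part of any real product.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Action:
    what: str                     # shown to the user: what the agent is doing
    why: str                      # shown to the user: why it chose this action
    apply: Callable[[], None]     # performs the action
    undo: Callable[[], None]      # reverses it


class TransparentSession:
    """Records every applied action so it can be explained or undone."""

    def __init__(self) -> None:
        self.history: list[Action] = []

    def perform(self, action: Action, approve: Callable[[Action], bool]) -> bool:
        """Run the action only if the user approves; a veto is the override."""
        if not approve(action):
            return False
        action.apply()
        self.history.append(action)
        return True

    def undo_last(self) -> Optional[Action]:
        """Reverse the most recent action, if there is one."""
        if not self.history:
            return None
        action = self.history.pop()
        action.undo()
        return action

    def explain(self) -> list[str]:
        """Human-readable trail of what was done and why."""
        return [f"{a.what} (reason: {a.why})" for a in self.history]


calendar: list[str] = []
add_meeting = Action(
    what="Add 'Budget review' to calendar",
    why="the email asked for a meeting this week",
    apply=lambda: calendar.append("Budget review"),
    undo=lambda: calendar.remove("Budget review"),
)

session = TransparentSession()
session.perform(add_meeting, approve=lambda action: True)
print(session.explain())
session.undo_last()
print(calendar)
```

Pairing every action with its own `undo` keeps the override cheap for the user, at the cost of requiring each action to be reversible by design.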

Practical Steps for Businesses Navigating the Agent Frontier

For product leaders, developers, and strategists, the roadmap forward must account for this positioning paradox.

For Product Managers and UX Designers:

Stop creating modes; start building intelligent pathways. Analyze user behavior: at what point does a simple query evolve into a multi-step task? That inflection point is where the agentic functionality should be offered, not as a toggle switch, but as a suggested next step. Design for "graceful failure" within agentic tasks, ensuring that if the AI gets stuck, it asks the user a highly specific clarifying question rather than simply crashing or giving a vague error.
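The "graceful failure" idea might look like the following sketch, in which a step that lacks a required detail raises a dedicated exception and the runner converts it into a specific question for the user. All names here (`MissingDetail`, `StepResult`, `search_flights`) are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass
from typing import Callable


class MissingDetail(Exception):
    """Raised by a step when it cannot proceed without user input."""

    def __init__(self, question: str) -> None:
        super().__init__(question)
        self.question = question


@dataclass
class StepResult:
    status: str    # "done" or "needs_input"
    message: str   # the result, or the specific question for the user


def run_step(step: Callable[[dict], str], context: dict) -> StepResult:
    """Run one agent step; turn a known gap into a question, not a crash."""
    try:
        return StepResult("done", step(context))
    except MissingDetail as gap:
        return StepResult("needs_input", gap.question)


def search_flights(context: dict) -> str:
    """Hypothetical step that needs a departure city to do anything useful."""
    if "departure_city" not in context:
        raise MissingDetail("Which city will you be flying from?")
    return (
        f"Searching flights from {context['departure_city']} "
        f"to {context['destination']}"
    )


first = run_step(search_flights, {"destination": "Tokyo"})
print(first.status, "-", first.message)

second = run_step(
    search_flights, {"destination": "Tokyo", "departure_city": "Lisbon"}
)
print(second.status, "-", second.message)
```

Only the anticipated gap is caught; unexpected errors still surface, which keeps genuine bugs visible instead of hiding them behind a polite question.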

For Marketing and Sales Teams:

Shift messaging from focusing on *intelligence* to focusing on *time saved* or *risk reduced*. If your agent can process compliance documents 10 times faster, lead with "10x Compliance Review Speed," not "New Agent Architecture Launched." Tie the feature directly to existing business metrics.

For Developers and Engineers:

Focus on agent specialization. Building the "one agent to rule them all" is too complex for initial rollout. Build specialized agents designed to interface only with specific APIs or datasets (e.g., "The CRM Agent" or "The Inventory Agent"). This limits the potential scope of failure while maximizing utility in a specific business function.
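A simple way to enforce that kind of specialization is an explicit tool allowlist per agent. The sketch below assumes hypothetical stand-ins for the CRM and inventory APIs (`lookup_customer`, `check_stock`); the class names are likewise illustrative.

```python
from typing import Any, Callable


class ToolNotAllowed(Exception):
    """Raised when an agent tries to use a tool outside its allowlist."""


class SpecializedAgent:
    def __init__(self, name: str, tools: dict[str, Callable[..., Any]]) -> None:
        self.name = name
        self._tools = dict(tools)   # copy so later edits do not widen scope

    def call(self, tool_name: str, *args: Any, **kwargs: Any) -> Any:
        """Invoke a tool only if it is on this agent's allowlist."""
        if tool_name not in self._tools:
            raise ToolNotAllowed(f"{self.name} may not call {tool_name!r}")
        return self._tools[tool_name](*args, **kwargs)


# Hypothetical stand-ins for real CRM and inventory API clients.
def lookup_customer(customer_id: int) -> dict:
    return {"id": customer_id, "name": "Example Customer"}


def check_stock(sku: str) -> int:
    return 12


crm_agent = SpecializedAgent("The CRM Agent", {"lookup_customer": lookup_customer})
inventory_agent = SpecializedAgent("The Inventory Agent", {"check_stock": check_stock})

print(crm_agent.call("lookup_customer", 42))

try:
    crm_agent.call("check_stock", "SKU-1")
except ToolNotAllowed as error:
    print(error)
```

Because each agent can reach only the tools it was constructed with, a planning mistake in the CRM agent cannot touch inventory data, which is the scope-limiting property the paragraph above describes.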

Conclusion: The Future is Agentic, But the Journey Must Be Intuitive

The struggle of the dedicated ChatGPT Agent reflects a classic pattern in technology adoption: the technology races ahead of users’ ability to integrate it. The shift toward intelligent, autonomous agents capable of planning and executing complex goals still appears inevitable, and this is where productivity gains will truly materialize.

However, this powerful future will not be unlocked by forcing users to switch modes or learn complex new terminology. It will be unlocked when the agentic capability feels like a natural extension of the core interaction, when the system simply does more for you without requiring a separate introduction. The lesson from this user exodus is clear: until AI solutions are positioned with crystal clarity and integrated without friction, even the most advanced technology risks fading into digital obscurity.