The Great Compute Race: How AI Shortages Are Forcing a Hardware Renaissance

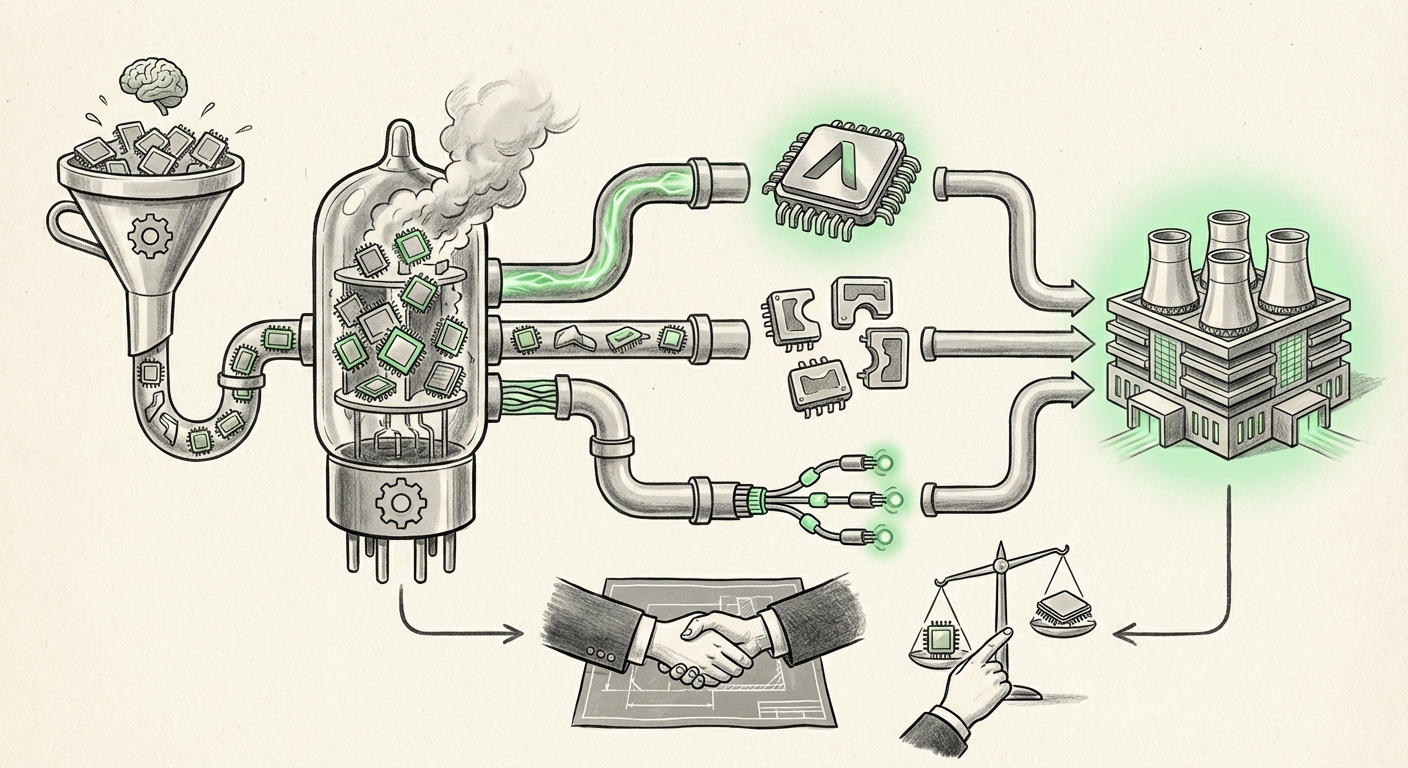

The Artificial Intelligence revolution is often characterized by breakthroughs in algorithms, like new Large Language Models (LLMs). However, the engine driving this revolution—the physical hardware—is sputtering under the strain of unprecedented demand. We are living through the AI Compute Crunch, a period defined by scarcity, massive capital expenditure, and an urgent search for technological alternatives.

Recent developments, particularly the focus on enterprise-ready accelerators like the AMD MI355X, signal a pivotal shift. The industry is moving away from reliance on a single supplier toward a more competitive, diversified hardware landscape. This isn't just about sourcing faster chips; it’s about reshaping the infrastructure on which the next decade of computing will be built.

The Pain Point: Validating the Scarcity and Cost Overload

For a time, the narrative was simple: if you needed to train a massive AI model, you needed an NVIDIA GPU. The result of this dominance is a bottleneck that spans the entire supply chain. Reports tracking the lead times for flagship accelerators like the NVIDIA H100 and H200 paint a clear picture: demand far outstrips supply, leading to months-long waiting lists even for major cloud providers and deep-pocketed enterprises.

This scarcity translates directly into inflated costs and delayed innovation. For a business looking to deploy cutting-edge AI—whether for customer service, complex logistics planning, or scientific research—the inability to secure necessary compute power quickly becomes a competitive disadvantage. This environment validates the industry’s intense interest in alternatives. When the primary path is blocked or prohibitively expensive, diversification becomes an imperative, not an option.

Actionable Insight for Procurement:

IT procurement managers must stop treating AI hardware as a standard commodity purchase. Expect long lead times and budget volatility. Strategic contracts and early engagement with secondary suppliers (like AMD or specialized ASIC providers) are now critical risk mitigation strategies.

The Counter-Attack: The Rise of Hardware Alternatives

The desperation created by the compute crunch is, ironically, fueling the most exciting hardware race in years. The interest in guides to accelerators like the AMD MI355X, covering memory scaling and performance trade-offs, shows that enterprises are actively engineering solutions around suppliers other than the dominant one.

AMD’s Strategic Play

AMD is positioned to capitalize directly on the enterprise need for choice. While NVIDIA dominates the training segment, AMD's push into high-memory, high-throughput accelerators tailored for both training and efficient inference (running the finished models) offers a credible path forward. For businesses, having a second viable ecosystem means more negotiating power on pricing and better assurance of delivery schedules.

The Hyperscaler Effect: Custom Silicon Takes Center Stage

The competition extends beyond the traditional GPU vendors. The largest consumers of AI compute—Google, Amazon, and Microsoft—are increasingly designing their own custom chips, known as ASICs (Application-Specific Integrated Circuits). Think of Google’s Tensor Processing Units (TPUs) or Amazon’s Trainium and Inferentia lines.

Why build when you can buy? Because custom silicon can be far more efficient for specific tasks. While an NVIDIA GPU is designed to perform well across a wide range of workloads, a custom TPU is tuned for the particular workloads Google runs, such as its own version of LLM training. This trend signifies a fundamental shift: AI infrastructure is becoming highly specialized, moving away from a one-size-fits-all approach.

What this means for the future: We will see a layered compute infrastructure. General-purpose research might stick to merchant silicon, but high-volume, stable production inference workloads will increasingly run on specialized, highly efficient custom chips tailored by the cloud provider hosting the service.

Beyond the Chip: The Next Bottleneck—Interconnects

A single, powerful AI chip is impressive, but modern LLMs require thousands of these chips working in unison, often spread across multiple server racks. A faster chip delivers little benefit if it spends most of its time waiting for data from its neighbors.

This brings us to the "next frontier" of the compute crunch: **interconnect technology**. Technologies like NVIDIA’s NVLink have been crucial for fast communication between GPUs inside a server. But as clusters grow to tens or even hundreds of thousands of accelerators, the challenge shifts to moving data *between* servers efficiently. This requires major gains in network bandwidth and latency.

Emerging technologies like **Co-Packaged Optics (CPO)**, which integrate optical transceivers directly into the chip package, are essential. CPO aims to break the I/O bottleneck that slows down massive training jobs. If hardware makers solve the chip shortage but fail to solve the problem of moving data between chips, we will simply swap one crunch for another.
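To make the I/O bottleneck concrete, here is a rough back-of-the-envelope sketch in Python. It uses the standard bandwidth-only cost model for a ring all-reduce, in which each device transfers about 2(N-1)/N times the gradient payload per synchronization step. The function name, the payload size, and both link speeds are illustrative assumptions rather than measurements of any real product, and the model ignores latency, congestion, and overlap with compute.

```python
def ring_allreduce_seconds(num_devices: int, payload_bytes: float,
                           link_bytes_per_s: float) -> float:
    """Bandwidth-only estimate of one ring all-reduce.

    Each device transfers 2 * (N - 1) / N * payload bytes over its link.
    Latency, congestion and compute/communication overlap are ignored.
    """
    if num_devices < 2:
        return 0.0  # nothing to synchronize with a single device
    traffic = 2 * (num_devices - 1) / num_devices * payload_bytes
    return traffic / link_bytes_per_s


# Hypothetical inputs, chosen only for illustration.
GRADIENT_BYTES = 20e9   # e.g. 10 billion parameters at 2 bytes each (assumed)
SLOW_LINK = 50e9        # 50 GB/s per device (assumed)
FAST_LINK = 400e9       # 400 GB/s per device (assumed)

for name, bandwidth in [("slow link", SLOW_LINK), ("fast link", FAST_LINK)]:
    seconds = ring_allreduce_seconds(1024, GRADIENT_BYTES, bandwidth)
    print(f"{name}: {seconds:.3f} s per gradient sync")
```

Under this simplified model the synchronization time scales inversely with link bandwidth and barely changes with cluster size, which is why per-link speed, not chip count, becomes the limiting factor.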

Future Implication for Data Centers:

Data centers are transforming into unified, high-speed communication fabrics. Network engineers and infrastructure planners must look beyond traditional Ethernet/InfiniBand roadmaps and focus on optical solutions that can carry terabits per second across entire campuses, not just within single server boxes.

The Gatekeeper: Software Portability and Vendor Lock-In

The most subtle, yet perhaps most impactful, hurdle to hardware diversification is software. For years, NVIDIA's CUDA programming platform has been the de facto standard for deep learning development. This creates immense **vendor lock-in**. A company that invests heavily in CUDA-specific optimization for its models finds it difficult, time-consuming, and expensive to port and re-tune that code for, say, an AMD architecture.

This is where the maturity of alternative software stacks, like AMD’s **ROCm**, becomes a deciding factor. If ROCm offers near-parity with CUDA in terms of stability, developer tooling, and library support (through frameworks like PyTorch or TensorFlow), the risk of switching hardware plummets.

Recent efforts across the industry are focused on abstraction layers and open standards, encouraging developers to write code that can run *wherever* the hardware is available. Initiatives that focus on model portability allow researchers to write a model once and deploy it across NVIDIA, AMD, or custom ASICs with minimal recompilation.

Actionable Insight for Developers & MLOps:

MLOps teams must prioritize flexibility. When developing new AI pipelines, evaluate the investment needed to support ROCm or other open standards *alongside* CUDA. Building infrastructure that abstracts the underlying silicon ensures that your AI strategy can pivot quickly when a better hardware price point or availability schedule emerges.
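One way to picture "abstracting the underlying silicon" is a thin selection layer that pipeline code calls instead of hard-coding a vendor. The sketch below is a minimal, framework-free illustration in Python. The `Backend` class, the backend labels, and the `probe` callables are all hypothetical placeholders; a real pipeline would replace the probes with whatever availability checks its framework or runtime provides.

```python
from dataclasses import dataclass
from typing import Callable, Sequence


@dataclass(frozen=True)
class Backend:
    name: str                   # label used in configs, e.g. "rocm"
    probe: Callable[[], bool]   # returns True if usable on this host


def select_backend(candidates: Sequence[Backend]) -> Backend:
    """Return the first usable backend, in the caller's preference order."""
    for backend in candidates:
        if backend.probe():
            return backend
    raise RuntimeError("no usable compute backend found")


# Hypothetical probes: stand-ins for real runtime checks.
PREFERENCE = [
    Backend("cuda", probe=lambda: False),  # pretend no NVIDIA device is present
    Backend("rocm", probe=lambda: True),   # pretend an AMD device is present
    Backend("cpu", probe=lambda: True),    # CPU fallback is always usable
]

chosen = select_backend(PREFERENCE)
print(f"running on: {chosen.name}")
```

Because the preference order is data rather than code, switching vendors in this sketch is a configuration change instead of a rewrite.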

Practical Implications for Businesses: Building Resilience in AI

The current compute crunch is more than a temporary supply issue; it is signaling the end of an era dominated by a single hardware provider for bleeding-edge AI. For businesses, navigating this new landscape requires a strategic pivot toward infrastructure resilience and architectural diversity.

1. Strategic Splitting of Workloads

Enterprises should segment their AI workloads:

- Exploration/Research (High Fluctuation): Use the highest-end, most readily available hardware, even if premium-priced, for rapid proofs of concept.

- Production/Inference (Cost-Sensitive): Dedicate optimized, potentially custom or AMD-based infrastructure here, where efficiency and stable supply chains yield the best Return on Investment (ROI).

2. The Rise of "FinOps for Compute"

Financial Operations (FinOps) teams, typically focused on cloud spending, must now become intimately familiar with hardware performance metrics. Understanding the dollar-per-training-hour metric, and how much work each of those hours actually delivers, across different hardware generations (NVIDIA H100 vs. AMD MI355X vs. Cloud TPU) is no longer optional; it is a core competency for managing AI expenditure.
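As a sketch of what such a comparison could look like, the Python snippet below converts an hourly price and a measured throughput into a cost per unit of work, which is easier to compare across hardware than the hourly rate alone. Every number and accelerator label here is a made-up placeholder, not a real price or benchmark; teams would substitute their own quotes and measurements.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Quote:
    label: str
    usd_per_hour: float        # price of one accelerator-hour
    tokens_per_second: float   # measured training throughput per accelerator


def usd_per_billion_tokens(q: Quote) -> float:
    """Cost to process one billion training tokens on this accelerator."""
    seconds = 1e9 / q.tokens_per_second
    return q.usd_per_hour * seconds / 3600


# Placeholder figures for illustration only; not vendor data.
quotes = [
    Quote("accelerator-A", usd_per_hour=4.00, tokens_per_second=10_000),
    Quote("accelerator-B", usd_per_hour=3.00, tokens_per_second=6_000),
]

# List the options from cheapest to most expensive per unit of work.
for q in sorted(quotes, key=usd_per_billion_tokens):
    print(f"{q.label}: ${usd_per_billion_tokens(q):,.2f} per billion tokens")
```

In this invented example the accelerator with the higher hourly price turns out cheaper per token, which is exactly the kind of result an hourly-rate comparison hides.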

3. Preparing for a Multi-Vendor Future

The future data center will look more like a balanced investment portfolio than a single-vendor stack. This means training teams on multiple frameworks, securing procurement pathways with secondary suppliers, and designing systems capable of load-balancing across heterogeneous hardware.
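Load-balancing across heterogeneous hardware can be sketched as splitting traffic in proportion to each pool's capacity. The Python example below is a minimal illustration under that assumption; the pool names and relative capacity weights are hypothetical, and a production scheduler would also account for queue depth, memory limits, and model compatibility.

```python
def split_requests(total: int, capacity: dict[str, float]) -> dict[str, int]:
    """Split `total` requests across pools in proportion to capacity.

    Uses largest-remainder rounding so the shares always sum to `total`.
    """
    if total < 0 or not capacity or any(c < 0 for c in capacity.values()):
        raise ValueError("need a non-negative total and non-negative capacities")
    weight_sum = sum(capacity.values())
    if weight_sum == 0:
        raise ValueError("at least one pool must have capacity")
    exact = {name: total * c / weight_sum for name, c in capacity.items()}
    shares = {name: int(x) for name, x in exact.items()}
    leftover = total - sum(shares.values())
    # Hand remaining requests to the pools with the largest fractional parts.
    by_remainder = sorted(exact, key=lambda n: exact[n] - shares[n], reverse=True)
    for name in by_remainder[:leftover]:
        shares[name] += 1
    return shares


# Hypothetical pools with relative throughput weights.
pools = {"nvidia-pool": 5.0, "amd-pool": 3.0, "custom-asic-pool": 2.0}
print(split_requests(1000, pools))
```

Keeping the weights in a plain mapping means a new pool, or a pool whose measured throughput changes, only requires updating data rather than routing logic.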

Conclusion: The Road Ahead is Diversified

The AI compute crunch has ripped the veil off the underlying infrastructure requirements of modern intelligence. It is forcing a rapid, necessary evolution in how compute is procured, designed, and deployed. The era of the undisputed GPU king is giving way to an exciting, if complex, ecosystem comprising merchant silicon challengers, deep-pocketed custom chip designers, and an intense focus on the physical pipes connecting them all.

For the enterprise, this means opportunity. The path to next-generation AI is no longer gated by a single supplier; it is gated by architectural flexibility and the willingness to embrace software portability. The winners in the next wave of AI deployment won't just be those who have the most cutting-edge models, but those who have built the most resilient, adaptable, and diverse hardware foundations to run them on.