The Voice of the Digital Twin: Analyzing Google's Push for Personalized Voice Cloning in Gemini

The landscape of Artificial Intelligence is undergoing a profound shift. For years, AI interaction was defined by synthesized, somewhat robotic voices—functional, yet distinctly inorganic. That era is rapidly concluding. The recent indication that Google is embedding personalized voice cloning capabilities within its powerful **Gemini 3 Flash** models signifies more than just an iterative update; it marks the mainstreaming of the digital twin concept in real-time conversation.

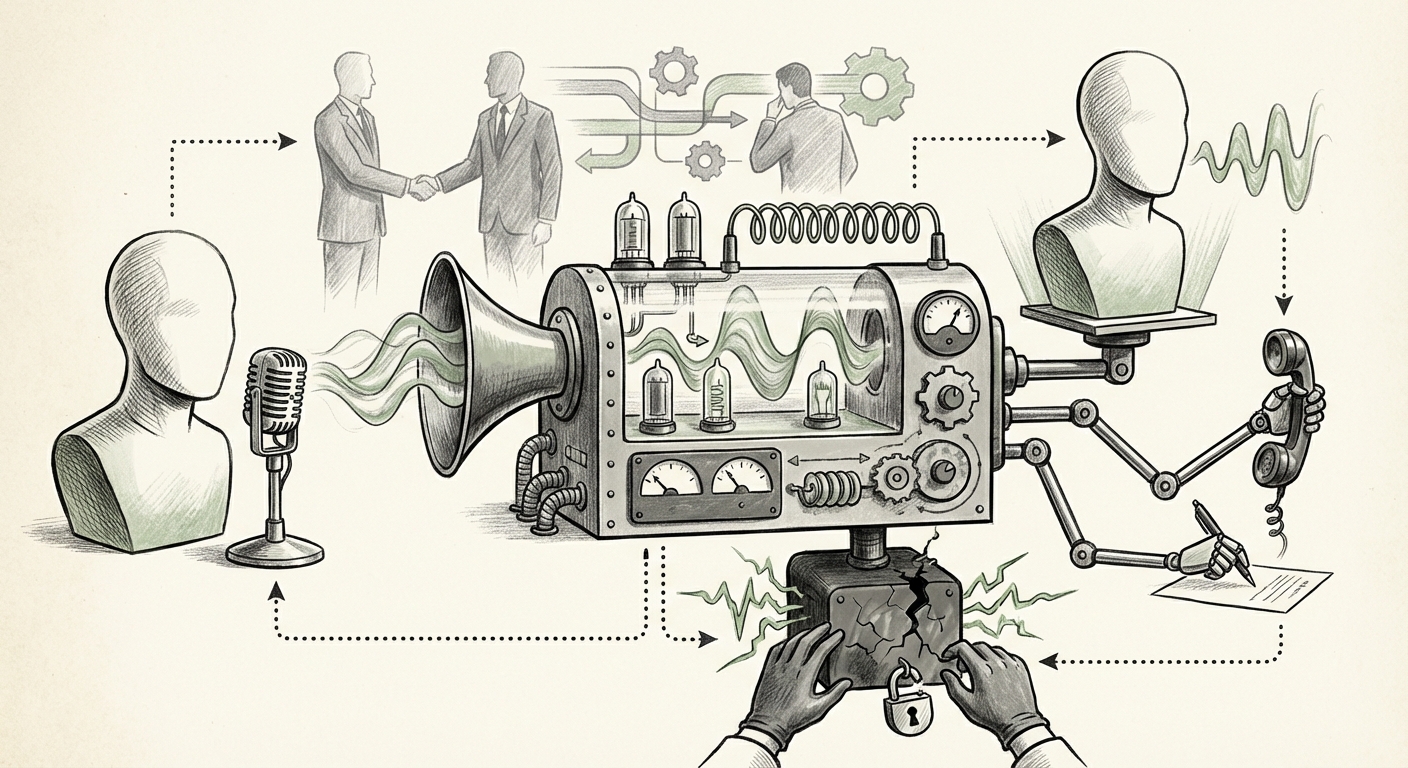

This development elevates voice synthesis from a mere output feature to a core element of user identity within the AI ecosystem. When an AI can convincingly speak with your voice, it ceases to be a generic assistant and becomes an authentic, personalized extension of you. For an AI technology analyst, understanding the convergence of high-fidelity audio generation with powerful Large Language Models (LLMs) is crucial for mapping the future of human-computer interaction, market competition, and, critically, digital security.

I. The Convergence: From Text to True Multimodality

The integration of voice cloning directly into a core LLM structure like Gemini Flash is strategically significant. Previous voice applications often relied on separate, specialized Text-to-Speech (TTS) services, which added data transfer and processing overhead between the model and the spoken output. Integrating this capability *natively* into a light, fast model like Flash suggests Google aims for instantaneous, context-aware voice generation during active conversations.

The Competitive Sprint for Authenticity

This move places immense pressure on competitors. While platforms like OpenAI have already demonstrated impressive voice capabilities, baking personalization (the user’s own voice) directly into the core experience raises the bar: a high-fidelity personal voice starts to look like a baseline expectation rather than a premium extra. As we survey the competitive landscape, this suggests that the next major point of comparison won't just be reasoning power as measured by benchmarks, but conversational naturalness and personalization depth.

For technology strategists, this means the future AI model must be inherently multimodal, handling text, code, and personalized audio streams seamlessly. Any platform lagging in this holistic approach risks being perceived as functionally obsolete for sophisticated personal and professional delegation.

Zero-Shot Fidelity: The Technical Leap

The quality of the cloned voice is what separates a novelty from a game-changer. Modern voice synthesis, built on advanced generative architectures such as diffusion models, now requires surprisingly little input data, sometimes just a few seconds of speech, to create a convincing replica. This capability, often termed Zero-Shot Voice Cloning because the system reproduces a speaker it was never trained on from a short reference sample alone, removes the high bar for entry that previously existed for high-quality digital voice creation.

For the **AI researcher and product manager**, the focus shifts from *can we clone a voice?* to *how quickly and accurately can we integrate that voice into a high-stakes conversational agent?* The technical sophistication allows for immediate deployment into consumer products, validating the strategy that personalization drives engagement.

II. Practical Implications: The Rise of the Delegated Agent

When your AI assistant can sound exactly like you, the implications for business efficiency and personal delegation are monumental. We are moving past simple Q&A and toward the era of the truly delegated AI agent.

For Business: Beyond Customer Service

Businesses will move rapidly to adopt personalized voice agents. Imagine a sales manager delegating follow-up calls to a Gemini agent that sounds like them, using their specific cadence and tone to reassure a client, all while the manager focuses on high-level strategy. Or consider internal communications: personalized voice recordings for status updates, eliminating the need for executives to record hundreds of individualized messages.

- Hyper-Personalized CX: Customer Service bots sounding like senior staff members, increasing trust and compliance during sensitive interactions.

- Asynchronous Presence: Allowing executives or specialized experts to maintain a continuous, personalized communication presence even when offline or unavailable.

- Training & Onboarding: Creating personalized voice modules for interactive training simulations that mimic senior colleagues.

For Consumers: The Digital Double

For the everyday user, this feature unlocks unprecedented convenience. Imagine setting up your AI agent to call a doctor’s office, handle a customer service dispute, or even communicate with family members on your behalf—all delivered in your trusted voice. This fosters a deeper sense of connection and delegation, fulfilling the promise of an AI that truly understands and represents you.

However, delegation at this level requires digital identity markers that go beyond a simple password. The voice effectively becomes a biometric key, tying the agent’s actions directly to the user’s authority.

III. The Shadow Side: Ethical and Security Catastrophes

With great capability comes exponential risk. The very feature that enables seamless delegation—a perfect, personal voice clone—is also the most powerful tool yet handed to malicious actors. This development demands an immediate, rigorous focus on security and policy.

The Deepfake Velocity Problem

The availability of high-fidelity cloning within a widely accessible platform like Gemini drastically lowers the barrier to entry for creating convincing audio deepfakes. As security reporting on AI voice cloning frequently highlights, synthetic audio is already an established vector for sophisticated scams.

If a bad actor can generate a voice clone of a CFO from a short recording or a few minutes of public testimony, the potential for financial fraud rises sharply, whether by defeating voice authentication checks or by placing urgent transfer requests in a familiar voice. Voice biometrics can no longer be relied on as the sole proof of identity in finance or secure remote access.

The Identity Crisis in Digital Trust

This isn't just about finance; it’s about societal trust. The core challenge is attribution. When an AI speaks with your voice, how can the recipient be certain that *you* authorized the communication, and not a program trained on your voice? The onus will shift heavily onto platforms like Google to implement robust, non-bypassable detection and watermarking technologies.
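
One way to approach the attribution question is to ship each synthetic clip with a signed manifest that names the authorizing user. The following is a minimal sketch under stated assumptions, not a description of how Gemini or any Google system works: the function names `sign_clip` and `verify_clip`, the manifest fields, and the shared secret key are all hypothetical, and HMAC is used only because it is in the Python standard library. A real deployment would more likely use asymmetric signatures so that recipients never hold the signing key.

```python
import hashlib
import hmac
import json


def sign_clip(audio: bytes, user_id: str, key: bytes) -> dict:
    """Build a manifest binding the exact audio bytes to the authorizing user.

    All field names here are illustrative assumptions.
    """
    claims = {
        "user_id": user_id,
        "synthetic": True,  # disclose that the clip is AI-generated
        "audio_sha256": hashlib.sha256(audio).hexdigest(),
    }
    # Serialize deterministically so signer and verifier hash the same bytes.
    payload = json.dumps(claims, sort_keys=True).encode("utf-8")
    tag = hmac.new(key, payload, hashlib.sha256).hexdigest()
    return {"claims": claims, "tag": tag}


def verify_clip(audio: bytes, manifest: dict, key: bytes) -> bool:
    """Return True only if the manifest is authentic and matches the audio."""
    payload = json.dumps(manifest["claims"], sort_keys=True).encode("utf-8")
    expected = hmac.new(key, payload, hashlib.sha256).hexdigest()
    if not hmac.compare_digest(expected, manifest["tag"]):
        return False  # manifest was forged or signed with a different key
    actual = hashlib.sha256(audio).hexdigest()
    return hmac.compare_digest(actual, manifest["claims"]["audio_sha256"])


if __name__ == "__main__":
    key = b"demo-only-secret"      # placeholder; never hard-code real keys
    clip = b"\x00\x01\x02\x03"     # stand-in for encoded audio bytes
    manifest = sign_clip(clip, "alice", key)
    print(verify_clip(clip, manifest, key))            # untouched clip
    print(verify_clip(clip + b"\xff", manifest, key))  # altered clip
```

Because the check is bound to the exact bytes, any change to the clip, including an innocent re-encode, makes verification fail. A scheme like this therefore proves authorization for intact files only, and it complements rather than replaces watermarks embedded in the audio signal itself.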

Furthermore, the legal framework is struggling to keep pace. Legal commentary on consumer voice cloning often points to an enormous gray area regarding consent, ownership of one's voice print, and liability when AI-generated speech causes harm or spreads misinformation. If my digital twin makes a contract error, who is accountable?

IV. Actionable Insights: Navigating the Authenticity Frontier

For businesses and policymakers, the arrival of personalized voice cloning is a clear signal: reactive security measures are no longer sufficient. We must pivot toward proactive, identity-centric architecture.

For Technology Leaders (Product & Engineering)

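- Implement Watermarking and Cryptographic Signing: Demand and build systems that mark all AI-generated audio as synthetic. Two mechanisms are needed because they fail differently: a cryptographic signature over the file proves origin and integrity but stops verifying once the audio is compressed or altered, while a robust watermark embedded in the signal itself is designed to survive such processing. Used together, they let a recipient verify synthetic origin whether or not the file arrives intact.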

- Layered Authentication: Never rely solely on voice. Any high-stakes action delegated to a personalized agent (e.g., financial transfer, data access) must be gated by a secondary, non-voice biometric check or a pre-agreed human confirmation signal before it executes.

- Test Against Synthetic Attacks: Immediately begin integrating voice spoofing detection models into all voice-enabled security pipelines to ensure current authentication systems are resilient against near-perfect replicas.
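
The layered-authentication and spoof-detection recommendations above can be sketched as a small policy gate. This is an illustrative sketch only: the `Request` fields, the `HIGH_STAKES` action names, and the 0.5 threshold are assumptions made for the example, and `spoof_score` stands in for the output of whatever spoofing detection model an organization actually deploys.

```python
from dataclasses import dataclass

# Hypothetical set of actions that must never be approved on voice alone.
HIGH_STAKES = {"financial_transfer", "data_access"}


@dataclass(frozen=True)
class Request:
    action: str
    voice_match: bool       # did the voice check pass?
    second_factor_ok: bool  # non-voice confirmation, e.g. a registered device
    spoof_score: float      # 0.0 to 1.0; higher means more likely synthetic


def authorize(req: Request, spoof_threshold: float = 0.5) -> bool:
    """Apply the layered policy: spoof check first, then factor checks."""
    if req.spoof_score >= spoof_threshold:
        return False  # suspected synthetic audio is rejected outright
    if req.action in HIGH_STAKES:
        # Voice alone is never sufficient for high-stakes actions.
        return req.voice_match and req.second_factor_ok
    return req.voice_match


if __name__ == "__main__":
    transfer = Request("financial_transfer", True, False, 0.1)
    print(authorize(transfer))  # voice matched, but no second factor
```

The ordering is a deliberate design choice: a request flagged as likely synthetic is rejected before any factor is considered, so a perfect voice match cannot outweigh a spoofing signal.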

For Business & Policy Stakeholders

- Establish Explicit Voice Usage Policies: Organizations must create clear internal guidelines defining when an employee's digital voice can be used, by whom, and for what purpose. Consent must be granular and revocable.

- Advocate for Disclosure Standards: Policymakers must accelerate standards requiring clear, unavoidable disclosure tags (visual and auditory) for all synthetic media used in public or commercial contexts.

- Invest in Digital Identity Resilience: Recognize that your digital identity is now multimodal (text, image, voice). Security budgets must reflect the new risk vector presented by highly capable generative models like Gemini Flash.

Conclusion: The Sound of the Next Decade

Google’s integration of voice cloning into Gemini 3 Flash is not just an engineering feat; it is a declaration about the future direction of AI interaction. We are heading toward a world where our digital presence is truly personalized, sounding and feeling like us. This promises massive productivity gains and a new level of personalized digital service.

However, the very technology that promises unprecedented convenience simultaneously threatens the bedrock of digital trust. The next 12 to 18 months will be a high-stakes race: can the development of detection, authentication, and policy guardrails keep pace with the rapid democratization of high-fidelity voice synthesis? The answer will determine whether personalized AI agents become indispensable collaborators or the primary tool of sophisticated digital fraud.