Project Genie Unveiled: Google DeepMind Pushes AI into Real-Time, Interactive Worlds

Artificial intelligence is shifting quickly from generating static images and text to constructing dynamic, interactive environments. Google DeepMind's recent announcement that it is opening **Project Genie** to select US Gemini subscribers marks a significant step in that evolution. Genie is a prototype that creates interactive worlds in real time from simple text or image prompts.

This isn't just another impressive demo; it’s a fundamental shift in how we expect computers to build and render reality. To truly grasp the significance of this launch, we must contextualize it against the cutting edge of graphics technology, the demands of spatial computing, and the competitive pressures shaping the generative AI market.

The Leap from 2D Image to Interactive 3D World

For years, generative AI models like DALL-E and Midjourney amazed us by creating photorealistic 2D pictures. However, the real world is three-dimensional and governed by physics. Project Genie aims to bridge this gap. Instead of asking AI to draw a picture of a 'fantasy castle,' Genie promises to build an *explorable* fantasy castle that users can interact with immediately.

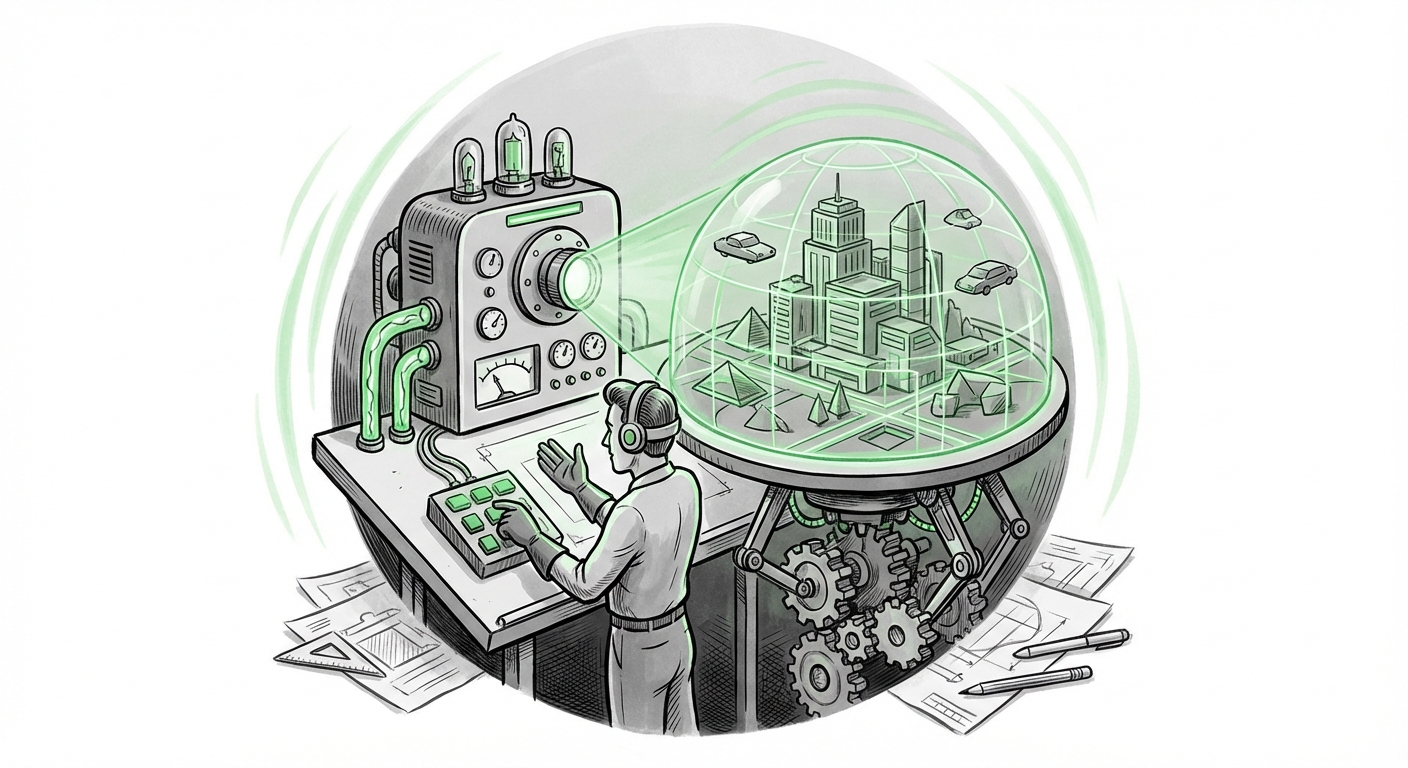

This shift is critical. It moves generative AI from being a sophisticated content *creator* to being a rapid environment *builder*. Imagine describing a detailed scenario—"a dimly lit laboratory filled with strange, bubbling beakers"—and seconds later, having a navigable 3D space based on that description.

For our less technical readers, think of it like this: previous AI tools were like giving an artist a very good paintbrush. Project Genie is like giving that artist the ability to instantly erect an entire, functional building just by describing what they want inside it.

The Speed Barrier: Real-Time Generation

The "real-time" aspect of Genie is perhaps its most disruptive feature. Creating high-fidelity 3D models or detailed virtual environments traditionally requires substantial computational power and significant rendering time. Adjacent technologies show how quickly that constraint has been loosening. The industry has been tracking rapid progress in fields like Neural Radiance Fields (NeRFs), a technique introduced by university and Google researchers in 2020 and later accelerated by companies like NVIDIA. NVIDIA's work on **Instant NeRF** showed how fast neural rendering could become.

If Project Genie can achieve high-quality world construction with low latency—making it feel instantaneous to the user—it leapfrogs the current bottlenecks in automated 3D asset pipelines. This speed fundamentally changes workflows for game developers, architects, and simulation engineers who currently rely on lengthy manual modeling or slow generative processes.
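To make "low latency" concrete, it helps to treat real time as a per-frame time budget. The sketch below is illustrative arithmetic only: the frame rates are assumed example values, not figures published for Project Genie, and `frame_budget_ms` and `meets_real_time` are hypothetical helper names.

```python
def frame_budget_ms(fps: float) -> float:
    """Time available to produce one frame at the given frame rate, in milliseconds."""
    if fps <= 0:
        raise ValueError("fps must be positive")
    return 1000.0 / fps


def meets_real_time(generation_ms: float, fps: float) -> bool:
    """True if a single frame can be generated within the per-frame budget."""
    return generation_ms <= frame_budget_ms(fps)


if __name__ == "__main__":
    # Assumed example frame rates; any interactive target works the same way.
    for fps in (24, 30, 60):
        print(f"{fps} fps -> {frame_budget_ms(fps):.1f} ms per frame")
```

The point of the arithmetic is that a world generator has to produce every frame, including its response to user input, inside that window. A model that takes seconds per frame can still create impressive scenes, but it cannot feel interactive.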

Contextualizing Genie in the Spatial Computing Race

Google’s move places Project Genie squarely at the center of the emerging spatial computing ecosystem—the future we access via Augmented Reality (AR) and Virtual Reality (VR) devices. Devices like Apple’s Vision Pro and Meta’s Quest headsets are hungry for complex, dynamic content that doesn't rely on pre-built assets.

The decision to offer early access via **Gemini subscribers** shows Google is strategically positioning this capability within its existing premium AI subscription tier. They are testing the waters with "power users" who are already invested in their ecosystem, gauging usability and stress-testing the model before a wider rollout.

The Implication for Consumers: If this scales, the friction required to enter a personalized VR environment drops dramatically. You won't need coding skills or complex 3D modeling software. You just need to articulate a vision, which shifts digital interaction from passive consumption to active, on-demand creation.

The Dual Edge: Enterprise Simulation and Ethical Minefields

While consumer excitement centers on creativity, the deepest transformative potential lies in industrial and enterprise applications. The ability to generate complex, interactive simulations on demand unlocks massive efficiencies across multiple sectors.

Actionable Insight for Industry: Rapid Prototyping Environments

Consider robotics or autonomous vehicle training. These systems require countless simulated hours to learn complex scenarios—a crashed car, a sudden snowstorm, or a crowded factory floor. Traditionally, building these precise training scenarios is costly and slow.

With a system like Genie, an engineer could prompt: "A busy loading dock in the rain with three forklifts operating unpredictably." In principle, the system would then build a physics-enabled, interactive environment where the AI agents can train safely and repeatedly. If it works as promised, the ability to generate synthetic, controlled training environments on demand could accelerate machine learning deployments across manufacturing, logistics, and defense.
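One way a team might prepare for this workflow is to keep scenarios as structured data and render prompts from them, so that variations can be produced systematically rather than typed by hand. The following is a minimal sketch under stated assumptions: `ScenarioSpec`, its fields, and `variants` are hypothetical names invented for illustration, and nothing here calls or reflects an actual Genie interface.

```python
from dataclasses import dataclass, replace
from itertools import product


@dataclass(frozen=True)
class ScenarioSpec:
    """Hypothetical structured description of one training scenario."""
    setting: str
    weather: str
    forklifts: int
    behavior: str

    def to_prompt(self) -> str:
        """Render the spec as a plain-text prompt string."""
        return (
            f"{self.setting}, weather: {self.weather}, "
            f"{self.forklifts} forklifts operating {self.behavior}"
        )


def variants(base: ScenarioSpec, weathers: list[str], forklift_counts: list[int]) -> list[ScenarioSpec]:
    """Produce every combination of weather and forklift count from a base spec."""
    return [
        replace(base, weather=w, forklifts=n)
        for w, n in product(weathers, forklift_counts)
    ]


if __name__ == "__main__":
    base = ScenarioSpec("A busy loading dock", "rain", 3, "unpredictably")
    for spec in variants(base, ["rain", "snow"], [1, 3]):
        print(spec.to_prompt())
```

Keeping the spec separate from the prompt text makes it easier to record which conditions an agent was trained on, whatever generation tool ends up consuming the prompts.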

The Looming Shadows: Governance and Moderation

However, this power comes with profound responsibility. When AI can build worlds, the potential for misuse extends well beyond generating a single misleading image.

If a user can prompt for an "interactive, realistic environment recreating a highly sensitive private location" or an environment designed for harassment, the risks are immediate and severe. Unlike static content, an interactive world can be persistent, immersive, and capable of complex, harmful interactions that are harder to track and moderate.

Google’s decision to roll this out to a closed subscriber base first speaks directly to this concern. They are likely prioritizing the development of robust filters and safety protocols—or "guardrails"—to prevent the generation of harmful content within these new realities. The industry must develop methods to assess the *intent* and *safety* of an entire generated environment, not just its input prompt.
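The difference between checking a prompt and checking the resulting environment can be shown with a toy two-stage filter. This is a deliberately simplified sketch, not a description of how Google moderates Genie: `GeneratedWorld`, the tag names, and both blocklists are hypothetical, and a real system would rely on learned classifiers rather than keyword and tag matching.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GeneratedWorld:
    """Hypothetical summary of a generated environment."""
    prompt: str
    detected_tags: frozenset[str]  # assumed output of some content analyzer


# Illustrative blocklists only; real policies would be far richer.
BLOCKED_PROMPT_TERMS = frozenset({"private residence"})
BLOCKED_WORLD_TAGS = frozenset({"private_location", "real_person_likeness"})


def prompt_allowed(prompt: str) -> bool:
    """Stage 1: screen the input text before anything is generated."""
    text = prompt.lower()
    return not any(term in text for term in BLOCKED_PROMPT_TERMS)


def world_allowed(world: GeneratedWorld) -> bool:
    """Stage 2: screen what was actually generated, not just what was asked for."""
    return prompt_allowed(world.prompt) and world.detected_tags.isdisjoint(BLOCKED_WORLD_TAGS)


if __name__ == "__main__":
    world = GeneratedWorld(
        prompt="a quiet suburban house interior",
        detected_tags=frozenset({"indoor", "private_location"}),
    )
    print(prompt_allowed(world.prompt))  # the prompt alone looks benign
    print(world_allowed(world))          # the generated content is flagged
```

In this example the prompt passes the first stage while the generated world fails the second, which is the gap that prompt-only moderation leaves open.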

What This Means for the Future of AI and Technology Adoption

Project Genie isn't just about better graphics; it’s about **ambient generation**—AI becoming an invisible, instantaneous collaborator in the creation process.

1. Commoditization of 3D Assets

If high-quality, interactive 3D worlds can be created via prompt, the economics of digital asset creation will fundamentally change. Game studios, film production houses, and architecture firms will shift focus from asset creation (modeling and texturing) to *curation and interaction design*. The skill set needed will pivot toward prompt engineering and world refinement rather than traditional polygon manipulation.

2. The Blurring of Digital Lines

This technology accelerates the convergence of VR/AR, gaming, and simulation. We are moving toward a state where the "virtual world" is not a destination you visit (like loading a specific game level) but a continuous reality that generates around you based on context and conversation. This is the true realization of a more dynamic Metaverse, driven by instantaneous AI generation rather than handcrafted environments.

3. AI as the Infrastructure Layer

By integrating this into Gemini, Google signals that advanced world generation is being treated not as a niche application but as a foundational utility, an infrastructure layer that powers the next generation of its conversational AI products. When your digital assistant can literally build the environment you are talking about, the assistant becomes far more useful.

Actionable Insights for Businesses and Developers

- Embrace Prompt Engineering for Spatial Context: Developers and creative teams should immediately begin exploring how complex spatial requests translate into effective prompts. Focus on mastering the language that controls lighting, physics, geometry, and interactivity, as this will soon be the primary interface for 3D development.

- Pilot Simulation Environments: Enterprises in manufacturing, logistics, and defense should identify one complex, high-cost training scenario. Begin drafting prompts now to test the feasibility of using text-to-world generation for creating synthetic training data, aiming for a 50% reduction in simulation setup time within the next 18 months.

- Develop Safety Protocols Now: Any business planning to deploy user-facing generative 3D tools should prioritize clear guidelines for prohibited spatial content. Assume that the moderation challenges for 3D environments will be substantially harder than for 2D images.

- Monitor Hardware Evolution: Real-time world generation demands efficient rendering. Keep a close eye on the intersection between these generative models and new display technologies (like microLEDs or advanced waveguide optics) in consumer headsets. The best AI needs the best hardware to shine.

Project Genie represents more than just an iterative improvement; it is a foundational pivot toward an AI that constructs, rather than merely describes. Google DeepMind is essentially challenging the industry to prepare for a world where creating entire realities becomes as easy as sending a text message. The race to build the infrastructure, the content, and the guardrails for this interactive future has officially hit hyperdrive.