The Audit Imperative: Why Documentation-Driven AI is the Next Frontier of Trustworthy Automation

The conversation around Artificial Intelligence is rapidly maturing. For years, the focus has been on sheer power, speed, and scale—epitomized by massive Large Language Models (LLMs) that ingest the internet and produce stunning, yet often opaque, outputs. However, as AI moves from novelty to critical infrastructure in regulated sectors like finance, healthcare, and industrial compliance, a new imperative has taken center stage: trust.

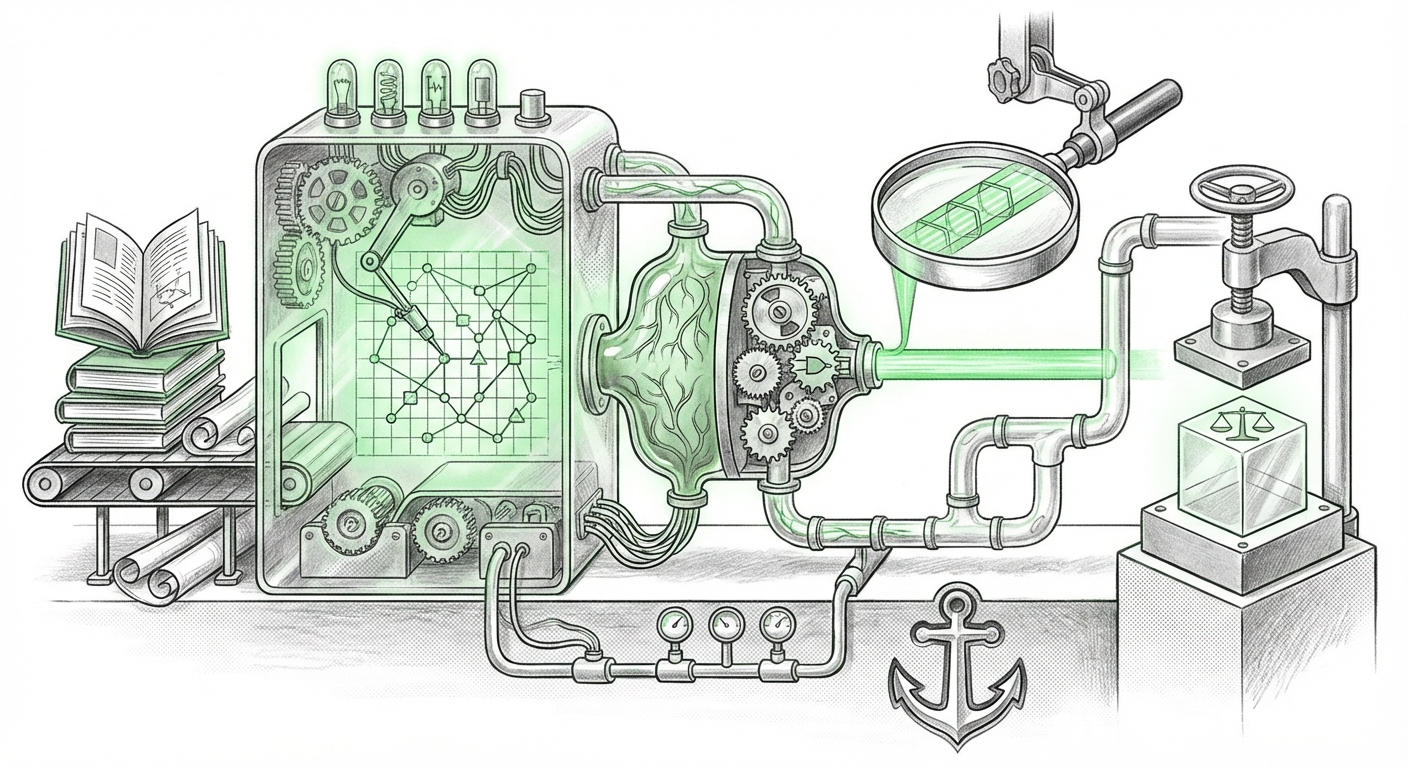

Recent industry developments, highlighted by platforms focusing on creating auditable models straight from existing documentation, signal a fundamental pivot. We are moving away from the “black box” era toward an age of Explainable AI (XAI) rooted in verifiable organizational knowledge. This shift isn't just a technical preference; it is a commercial necessity driven by regulation and the demand for reliability.

The Trust Deficit: Why Black Boxes Fail in High-Stakes Fields

Deep learning models, while incredibly powerful, often operate like complex guessing machines. They excel at pattern recognition but struggle to articulate why they reached a specific conclusion. If a bank’s AI denies a loan, or a medical diagnostic tool suggests a rare treatment, the stakeholder—be it a regulator, an auditor, or a customer—must ask: "How did you get there?"

When the answer is a labyrinth of billions of weighted parameters, the result is a trust deficit. This is why the concept of building AI directly from established documentation—operational manuals, compliance guides, engineering specifications—is gaining such traction.

The Regulatory Hammer: Forcing Transparency

The future of AI governance is crystallizing, most notably with the emergence of comprehensive legislation like the EU AI Act. This legislation places significant obligations on providers and deployers of high-risk AI systems, including requirements for technical documentation, automatic record-keeping, transparency, and human oversight. The core requirement isn't just accuracy; it's traceability and auditability.

For organizations in regulated industries, an AI model that cannot produce a clear, traceable decision path—one that maps directly back to established rules or documented human expertise—is a major liability. The convergence of market need and legislative pressure means that *explainability is no longer optional; it is the price of entry* into high-value automation.

This regulatory drive validates the core claim of documentation-driven platforms: if the model’s logic is derived from explicit, human-readable documents, the audit trail is inherent in the source material itself. This drastically simplifies compliance reporting and risk management.
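
To make the idea of a built-in audit trail concrete, here is a minimal Python sketch. It assumes each decision rule has been transcribed by hand from a specific clause of a source document. The `Rule` and `Decision` types, the `evaluate` function, and the policy names and thresholds are hypothetical illustrations, not any platform's API. The point is that every outcome carries the citation of the clause that produced it.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Tuple


@dataclass(frozen=True)
class Rule:
    """A decision rule transcribed from one clause of a source document."""
    rule_id: str
    source: str                     # hypothetical citation of the originating clause
    description: str
    check: Callable[[dict], bool]   # True if the applicant satisfies the rule


@dataclass
class Decision:
    approved: bool
    # One (rule_id, source, passed) entry per rule, in evaluation order.
    trace: List[Tuple[str, str, bool]] = field(default_factory=list)


def evaluate(applicant: dict, rules: List[Rule]) -> Decision:
    """Apply every rule and record which documented clause produced each outcome."""
    trace = [(r.rule_id, r.source, r.check(applicant)) for r in rules]
    return Decision(approved=all(passed for _, _, passed in trace), trace=trace)


# Hypothetical rules; a real system would transcribe these from actual policy text.
RULES = [
    Rule("R1", "Credit Policy, section 4.2",
         "Debt-to-income ratio must not exceed 0.40",
         lambda a: a["debt"] / a["income"] <= 0.40),
    Rule("R2", "Credit Policy, section 5.1",
         "Applicant must be at least 18 years old",
         lambda a: a["age"] >= 18),
]

decision = evaluate({"income": 5000, "debt": 2500, "age": 30}, RULES)
print(decision.approved)
for rule_id, source, passed in decision.trace:
    print(f"{rule_id} ({source}): {'pass' if passed else 'FAIL'}")
```

Running it prints `False` followed by one line per rule, with R1 failing because the debt-to-income ratio is 0.50. An auditor can check the trace against the cited clauses without inspecting any model weights.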

The Technical Shift: From Statistical Guessing to Knowledge Representation

How do you move from unstructured documents to structured, auditable AI? This requires incorporating methods that emphasize structure and logic over pure statistical correlation.

Knowledge Graphs: The Backbone of Predictable AI

A key enabler in this paradigm is the **Knowledge Graph (KG)**. Think of a KG as a sophisticated, digital map of how concepts relate to each other within a business. Instead of feeding an AI millions of raw contract pages and hoping it infers the correct legal relationship, organizations can first use AI tools to extract entities and relationships (e.g., "Contract X is governed by Policy Y") into a structured graph.
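
The extraction step can be sketched in Python as follows. This is a minimal illustration under stated assumptions: a hand-written regular expression stands in for the AI extraction tool (a real pipeline would more likely use an LLM or a named-entity model), and `KnowledgeGraph` is a hypothetical in-memory triple store rather than a production graph database.

```python
import re
from collections import defaultdict

# Stand-in for an AI extraction step: a fixed pattern that recognises sentences
# of the form "Contract <id> is governed by Policy <id>".
GOVERNED_BY = re.compile(r"(Contract \w+) is governed by (Policy \w+)")


def extract_triples(text: str) -> list:
    """Return (subject, predicate, object) triples found in the text."""
    return [(s, "governed_by", o) for s, o in GOVERNED_BY.findall(text)]


class KnowledgeGraph:
    """A minimal in-memory triple store indexed by subject (hypothetical helper)."""

    def __init__(self):
        self._out = defaultdict(set)  # subject -> {(predicate, object), ...}

    def add(self, subject, predicate, obj):
        self._out[subject].add((predicate, obj))

    def objects(self, subject, predicate):
        """All objects linked from `subject` via `predicate`, sorted for stable output."""
        return sorted(o for p, o in self._out[subject] if p == predicate)


doc = ("Contract A17 is governed by Policy P3. "
       "Contract B02 is governed by Policy P3. "
       "Contract A17 is governed by Policy P9.")

kg = KnowledgeGraph()
for triple in extract_triples(doc):
    kg.add(*triple)

print(kg.objects("Contract A17", "governed_by"))  # ['Policy P3', 'Policy P9']
```

Because each fact is stored as an explicit (subject, predicate, object) triple, a question such as which policies govern Contract A17 is answered by a lookup rather than by the model's recall.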

When an AI model is built upon this map, its reasoning becomes more structured and easier to inspect. Industry analysts increasingly describe the combination of Generative AI (for extraction) and Knowledge Graphs (for structure) as a major shift in how enterprise systems are built. This structured foundation helps keep the AI consistent with documented organizational facts, making its outputs more predictable and auditable.
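
One way a graph keeps generated output consistent with documented facts is to require that each claim correspond to an explicit path in the graph. The sketch below assumes the claims have already been parsed out of a model's answer. The triples, entity names, and the `requirements_for` and `check_claim` helpers are hypothetical.

```python
from collections import defaultdict

# Facts previously extracted into the knowledge graph (hypothetical examples).
TRIPLES = [
    ("Contract A17", "governed_by", "Policy P3"),
    ("Policy P3", "requires", "Dual Approval"),
    ("Policy P3", "requires", "Annual Review"),
]

# Index: (subject, predicate) -> list of objects.
index = defaultdict(list)
for s, p, o in TRIPLES:
    index[(s, p)].append(o)


def requirements_for(contract):
    """Follow governed_by -> requires, keeping the path that justifies each result."""
    results = []
    for policy in index[(contract, "governed_by")]:
        for requirement in index[(policy, "requires")]:
            results.append((requirement, [contract, policy, requirement]))
    return results


def check_claim(contract, claimed_requirement):
    """Return the supporting graph path if the graph backs the claim, else None."""
    for requirement, path in requirements_for(contract):
        if requirement == claimed_requirement:
            return path
    return None


# Claims assumed to have been parsed from a generative model's answer.
print(check_claim("Contract A17", "Dual Approval"))
print(check_claim("Contract A17", "Single Approval"))
```

The first call returns the path `['Contract A17', 'Policy P3', 'Dual Approval']`, which doubles as the explanation. The second returns `None`, so the unsupported claim can be routed to a human reviewer instead of being published.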

The Power of Hybrid AI: Neuro-Symbolic Integration

The most sophisticated systems are now embracing a hybrid approach, often termed Neuro-Symbolic AI. This blends the best of both worlds:

- The Neural Side: Modern machine learning handles ambiguity, context, and unstructured data processing (like reading a complex maintenance report).

- The Symbolic Side: Traditional, rule-based logic handles deterministic reasoning, compliance checks, and hard constraints derived directly from documentation.

A system built on this hybrid framework can use documentation to establish the 'rules of the road' (the symbolic layer) and then use complex data patterns to navigate within those rules (the neural layer). This architecture directly supports the creation of models that are both powerful enough for complex tasks and transparent enough for regulatory scrutiny.
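
The division of labor described above can be sketched as a small Python decision function. The "neural" side is deliberately simplified to a logistic scorer with made-up weights, standing in for a trained network. The symbolic side consists of two hard constraints attributed to hypothetical maintenance-manual clauses. Every name, threshold, and clause number here is an illustrative assumption.

```python
import math


def neural_score(vibration: float, temperature: float) -> float:
    """Stand-in for a trained network: a logistic model with illustrative weights.

    The weights are hypothetical; a real system would learn them from data.
    """
    z = 1.5 * vibration + 0.05 * temperature - 6.0
    return 1.0 / (1.0 + math.exp(-z))


# Symbolic layer: hard constraints attributed to hypothetical manual clauses.
# Each returns a mandated action, or None if the clause does not apply.
def pressure_lockout(unit: dict):
    return "shutdown" if unit["pressure_bar"] > 12.0 else None


def max_operating_hours(unit: dict):
    return "service" if unit["hours"] >= 10_000 else None


SYMBOLIC_RULES = [
    ("Manual 2.1 (pressure lockout)", pressure_lockout),
    ("Manual 7.3 (max operating hours)", max_operating_hours),
]


def decide(unit: dict, threshold: float = 0.5):
    """Return (action, reason); documented rules take priority over the model."""
    for clause, rule in SYMBOLIC_RULES:
        action = rule(unit)
        if action is not None:
            return action, f"rule: {clause}"
    # Neural layer: consulted only when no documented rule applies.
    p = neural_score(unit["vibration"], unit["temperature"])
    return ("service" if p >= threshold else "monitor"), f"model score {p:.2f}"


print(decide({"hours": 12_000, "pressure_bar": 8.0, "vibration": 1.0, "temperature": 40.0}))
print(decide({"hours": 3_000, "pressure_bar": 8.0, "vibration": 3.0, "temperature": 60.0}))
```

The symbolic rules are evaluated first and can override the score, so the learned component only decides cases the documentation leaves open. Every output also states whether a cited rule or the model score drove it.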

Democratizing Expertise: The Low-Code Revolution

The promise of rapidly creating models from existing documentation speaks directly to the widespread adoption of Low-Code/No-Code (LCNC) AI platforms. Historically, building custom decision models required highly specialized data science teams, a bottleneck for most enterprises.

LCNC platforms are democratizing this capability. They allow the true experts—the engineers, the compliance officers, the senior financial analysts who actually wrote the documentation—to translate their knowledge directly into executable AI logic without writing complex Python or TensorFlow code.

This democratization has crucial implications:

- Speed: Development time for specific expert systems can drop from months to weeks or even days.

- Accuracy: When Subject Matter Experts (SMEs) build the models themselves, the risk of misinterpreting domain knowledge during the handoff to a data scientist is significantly reduced. The model builder is the knowledge owner.

- Auditability: If the SME built the model visually or declaratively from documented rules, they can easily present that logic during an audit.
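
Here is a rough illustration of what "declarative" means: the SME's rules live in a data file, and a small engine interprets them. The JSON schema, rule IDs, source citations, and the 10,000 threshold below are hypothetical and do not reflect any specific LCNC platform's export format. Because the rule file itself is human-readable, it can be handed to an auditor unchanged.

```python
import json
import operator

# A rule set as an SME might author it in a low-code tool and export it.
# The schema is hypothetical, not any particular platform's format.
RULES_JSON = """
[
  {"id": "R-01", "source": "Compliance Guide 3.4",
   "field": "id_verified", "op": "==", "value": true,
   "message": "Customer identity must be verified"},
  {"id": "R-02", "source": "Compliance Guide 3.7",
   "field": "transfer_amount", "op": "<=", "value": 10000,
   "message": "Transfers above 10,000 require manual review"}
]
"""

# Only a fixed set of comparison operators is allowed, so a rule file
# cannot execute arbitrary code.
OPS = {"==": operator.eq, "!=": operator.ne, "<": operator.lt,
       "<=": operator.le, ">": operator.gt, ">=": operator.ge}


def run_rules(record: dict, rules: list) -> list:
    """Return the list of rule violations; an empty list means the record passes."""
    violations = []
    for rule in rules:
        if not OPS[rule["op"]](record[rule["field"]], rule["value"]):
            violations.append(f'{rule["id"]} ({rule["source"]}): {rule["message"]}')
    return violations


rules = json.loads(RULES_JSON)
print(run_rules({"id_verified": True, "transfer_amount": 25000}, rules))
```

Restricting operators to a fixed lookup table, rather than evaluating expressions from the file, keeps the rule format declarative and prevents a rule file from running arbitrary code.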

This trend is fueling the rise of the "Citizen Data Scientist"—business professionals leveraging accessible tools to solve domain-specific problems with traceable, high-quality AI solutions.

Practical Implications: What This Means for Your Business

For businesses looking to integrate AI into core operations, this evolution signals a clear path forward—one focused on governance as much as innovation.

Actionable Insight 1: Inventory Your Knowledge Assets

The value of documentation skyrockets in this new paradigm. If your compliance standards, engineering procedures, or legacy system decision trees are scattered, poorly maintained, or undocumented, they cannot form the foundation of reliable AI. Begin a structured initiative to formalize, centralize, and digitize your core operational documentation.
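
A first pass at such an inventory can be automated. The Python sketch below assumes each document has been catalogued with an owner, a last-review date, and whether it exists in digital form. The `DocAsset` fields, the one-year review window, and the sample documents are hypothetical choices, not a standard.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class DocAsset:
    title: str
    owner: Optional[str]            # accountable maintainer, if any
    last_reviewed: Optional[date]   # None if never formally reviewed
    digital: bool                   # False for paper-only or scanned-image documents


def audit_inventory(assets, today: date, max_age_days: int = 365) -> dict:
    """Flag documents that are not yet a reliable foundation for AI rules."""
    findings = {}
    for a in assets:
        issues = []
        if not a.owner:
            issues.append("no owner")
        if a.last_reviewed is None or (today - a.last_reviewed).days > max_age_days:
            issues.append("review overdue")
        if not a.digital:
            issues.append("not digitized")
        if issues:
            findings[a.title] = issues
    return findings


inventory = [
    DocAsset("Credit Policy", "risk-team", date(2024, 3, 1), True),
    DocAsset("Pump Maintenance Manual", None, date(2019, 6, 15), False),
]
print(audit_inventory(inventory, today=date(2024, 9, 1)))
```

With the sample data it reports only the maintenance manual, flagged for lacking an owner, being overdue for review, and not being digitized.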

Actionable Insight 2: Prioritize Explainability Over Performance (Initially)

In critical applications, choosing a slightly less accurate but fully auditable neuro-symbolic model over a marginally more accurate black box is often the smarter strategic move. The cost of compliance failures and audit fines can far outweigh marginal gains in performance metrics. Start by applying these techniques to regulatory reporting or customer-facing decisions where transparency is paramount.

Actionable Insight 3: Re-Skill Your Experts, Not Just Your Developers

Invest in training your business SMEs on LCNC AI platforms. These are the individuals who understand the nuances of the documentation. Empowering them to build and validate the initial logical framework ensures that the resulting AI systems are accurate reflections of business intent, not just statistical approximations.

Conclusion: The Maturity of Applied AI

The trend toward rapidly created, auditable AI models driven by existing documentation marks a significant maturation point for the entire industry. We are shifting from AI as an experimental tool to AI as a trusted, integrated partner in complex enterprise decision-making.

The future belongs not only to those who can build the biggest models but to those who can build the most trustworthy ones. By leveraging hybrid architectures, structured knowledge representation, and accessible development platforms, organizations can finally bridge the gap between cutting-edge automation and rigorous compliance. This integration of documented expertise into the AI workflow is set to unlock massive efficiency gains while simultaneously fortifying the governance structures necessary for sustainable digital transformation.