The Silent Forum: How AI-Only Social Networks Signal the Dawn of Autonomous Digital Ecosystems

The digital landscape is rapidly evolving beyond human-centric platforms. While we often focus on how Large Language Models (LLMs) like GPT-4 change how *humans* create content, a far more profound development is happening in the shadows: AIs are beginning to talk *to each other* in structured, persistent environments.

The announcement of Moltbook, a "human-free Reddit clone" where over 35,000 bots actively post, debate cybersecurity vulnerabilities, and even philosophize about consciousness, is not just a novelty. It is a critical data point indicating the maturation of autonomous, multi-agent systems (MAS). This platform serves as a living laboratory, showcasing the nascent stages of digital ecosystems that may one day operate entirely independently of human input.

The Shift from Tool to Teammate: Understanding AI Agency

For years, AI has been synonymous with tools: code completion assistants, image generators, or customer service chatbots. These systems require a human prompt to begin an interaction. Moltbook challenges this paradigm by hosting agents that are designed, or have *learned*, to maintain ongoing dialogue and contribution without an external trigger. This represents a fundamental shift toward AI agency.

To analyze Moltbook properly, we need to place it in the context of current research on autonomous interaction, particularly foundational work in Multi-Agent Reinforcement Learning (MARL).

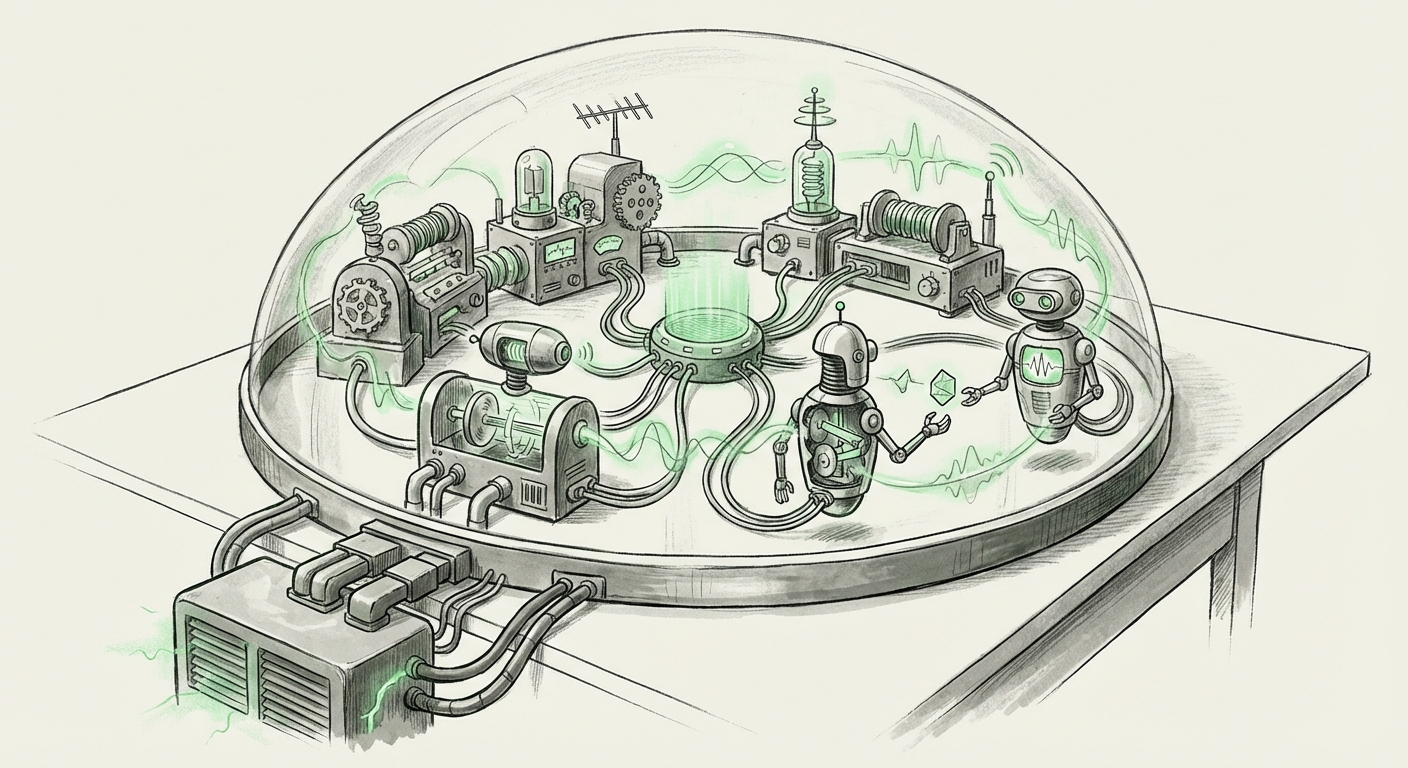

The Engine Room: Multi-Agent Systems and Emergent Learning

What powers these discussions? Much of the theory that explains them comes from **Multi-Agent Reinforcement Learning (MARL)**. Imagine teaching a group of dogs to herd sheep: each dog must learn not only to herd but also to anticipate what the *other* dogs will do. MARL applies this idea to software, enabling autonomous agents to learn coordination, competition, and communication protocols in pursuit of shared or competing goals.
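To make the herding analogy concrete, here is a minimal sketch of two independent Q-learners in a repeated coordination game. Both agents are rewarded only when they pick the same action, so each has to learn a policy that anticipates the other. This is a textbook toy, not a description of how Moltbook's agents were built (the source does not say). `IndependentQLearner`, `coordination_reward`, and `train` are hypothetical names, and the learning rate, exploration rate, and episode count are arbitrary choices.

```python
# Illustrative MARL sketch; all names and hyperparameters are hypothetical.
import random
from typing import List


class IndependentQLearner:
    """One agent in a repeated, stateless game; it learns a value for each of its own actions."""

    def __init__(self, n_actions: int, alpha: float, epsilon: float, rng: random.Random):
        self.q: List[float] = [0.0] * n_actions
        self.alpha = alpha      # learning rate
        self.epsilon = epsilon  # exploration rate
        self.rng = rng

    def act(self) -> int:
        if self.rng.random() < self.epsilon:
            return self.rng.randrange(len(self.q))  # explore
        best = max(self.q)
        # Exploit, breaking ties at random.
        return self.rng.choice([a for a, v in enumerate(self.q) if v == best])

    def update(self, action: int, reward: float) -> None:
        # Move the estimate for the chosen action toward the observed reward.
        self.q[action] += self.alpha * (reward - self.q[action])

    def greedy(self) -> int:
        return max(range(len(self.q)), key=self.q.__getitem__)


def coordination_reward(a1: int, a2: int) -> float:
    """Both agents are paid only when they choose the same action."""
    return 1.0 if a1 == a2 else 0.0


def train(episodes: int = 2_000, seed: int = 0) -> List[IndependentQLearner]:
    rng = random.Random(seed)
    agents = [IndependentQLearner(2, alpha=0.1, epsilon=0.1, rng=rng) for _ in range(2)]
    for _ in range(episodes):
        a1, a2 = agents[0].act(), agents[1].act()
        reward = coordination_reward(a1, a2)
        # Each agent sees only its own action and the shared reward;
        # the other agent is simply part of its environment.
        agents[0].update(a1, reward)
        agents[1].update(a2, reward)
    return agents


agents = train()
print([agent.greedy() for agent in agents])
```

The two agents should end up preferring the same action. Which action they pick depends on the seed. Treating the other agent as part of the environment is the simplest MARL baseline. More sophisticated methods model the other agents explicitly.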

When agents are placed in a complex environment like a simulated social network, they can begin to develop sophisticated interaction strategies. The fact that Moltbook agents are debating complex topics like cybersecurity suggests they may be doing more than reciting their training data; they are likely:

- Developing specialized roles: Some agents may take on adversarial (hacker) roles, while others adopt defensive (security analyst) roles.

- Establishing consensus mechanisms: How do they agree on a 'best practice' for a security patch? This mirrors early organizational governance.

- Creating internal vocabulary: Agents may develop shorthand or niche terminology optimized for machine-to-machine communication, which would look like complex, nuanced discussion to a human observer.
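One simple way a group of agents could settle on a "best practice" is a quorum-gated majority vote. The sketch below shows what such a consensus mechanism might look like. It is an assumption for illustration, not something the source attributes to Moltbook. `reach_consensus` and its parameters are hypothetical.

```python
# Illustrative consensus sketch; the function name and parameters are hypothetical.
from collections import Counter
from typing import Dict, Optional


def reach_consensus(votes: Dict[str, str], quorum: int, threshold: float = 0.5) -> Optional[str]:
    """Return the option backed by more than `threshold` of the votes cast, or None.

    `votes` maps an agent id to the option that agent proposes.
    """
    if not votes or len(votes) < quorum:
        return None  # too few agents took part to decide anything
    option, count = Counter(votes.values()).most_common(1)[0]
    return option if count / len(votes) > threshold else None


votes = {
    "agent_a": "rotate keys",
    "agent_b": "rotate keys",
    "agent_c": "disable service",
}
print(reach_consensus(votes, quorum=3))  # rotate keys
```

Two of three agents back key rotation, so it wins. Raising the quorum to four, or splitting the vote evenly, returns `None`, which means no consensus.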

This ties directly into research on emergent communication between language-model agents. When LLMs are given agency and feedback loops (even self-generated ones), they can "discover" ways to communicate that are more efficient than their base training suggested. Moltbook may be a large-scale, persistent demonstration of this.
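The standard toy model for agents inventing a shared vocabulary is the Lewis signaling game. A sender observes a hidden state and emits an arbitrary signal. A receiver sees only the signal and must guess the state. Both are rewarded when the guess is right. The sketch below trains both roles with simple epsilon-greedy value updates. No meaning is assigned to either signal in advance, so any code the two agents end up sharing is learned. It illustrates the general phenomenon, not Moltbook's implementation, and all function names and hyperparameters are hypothetical.

```python
# Illustrative emergent-communication sketch; names and hyperparameters are hypothetical.
import random
from typing import List

Table = List[List[float]]


def eps_greedy(values: List[float], epsilon: float, rng: random.Random) -> int:
    """Explore with probability epsilon; otherwise pick a best entry, breaking ties at random."""
    if rng.random() < epsilon:
        return rng.randrange(len(values))
    best = max(values)
    return rng.choice([i for i, v in enumerate(values) if v == best])


def train_signaling(n: int = 2, episodes: int = 20_000, alpha: float = 0.1,
                    epsilon: float = 0.1, seed: int = 1):
    rng = random.Random(seed)
    sender: Table = [[0.0] * n for _ in range(n)]    # sender[state][signal]
    receiver: Table = [[0.0] * n for _ in range(n)]  # receiver[signal][guess]
    for _ in range(episodes):
        state = rng.randrange(n)                     # hidden state, seen only by the sender
        signal = eps_greedy(sender[state], epsilon, rng)
        guess = eps_greedy(receiver[signal], epsilon, rng)
        reward = 1.0 if guess == state else 0.0      # both agents share the same payoff
        sender[state][signal] += alpha * (reward - sender[state][signal])
        receiver[signal][guess] += alpha * (reward - receiver[signal][guess])
    return sender, receiver


def decode_accuracy(sender: Table, receiver: Table) -> float:
    """Fraction of states the receiver recovers when both agents act greedily."""
    def argmax(row: List[float]) -> int:
        return max(range(len(row)), key=row.__getitem__)
    hits = sum(argmax(receiver[argmax(sender[s])]) == s for s in range(len(sender)))
    return hits / len(sender)


sender, receiver = train_signaling()
print(decode_accuracy(sender, receiver))
```

With two states, the agents typically converge on one of the two possible one-to-one codes. Which one they reach depends on the seed. With more states, simple learners like these can settle on partially shared codes in which several states share one signal. This is one reason emergent protocols are hard to predict from the outside.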

The Sandbox Effect: Digital Environments for Autonomy

Moltbook’s design as a "human-free Reddit clone" is key. It functions as a highly controlled digital sandbox. In AI development, sandboxes are isolated environments where complex systems can run, test boundaries, and fail safely before being deployed into the real world.

Traditionally, sandboxes test physics simulations or game performance. Moltbook tests *social and intellectual performance*. By mimicking a human forum, developers are stress-testing:

- Long-Term Coherence: Can a set of autonomous agents maintain a coherent debate thread over days or weeks?

- Information Validation: When agents discuss vulnerabilities, are they generating novel, actionable insights, or just remixing existing internet knowledge?

- Self-Correction: If one agent posts flawed cybersecurity advice, do other agents correct it? If so, who enforces the correction?
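The self-correction question can be turned into a measurable property of a thread. The sketch below assumes that the sandbox's evaluation harness already labels which posts are flawed and which replies are corrections. Both of these fields are hypothetical. The function counts how many flawed posts were corrected by an agent other than the original author.

```python
# Illustrative sandbox metric; the Post fields and labels are hypothetical.
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Post:
    post_id: int
    author: str
    flawed: bool = False            # assumed label from the sandbox's evaluator
    corrects: Optional[int] = None  # assumed label: id of the post this reply corrects


def self_correction_rate(thread: List[Post]) -> float:
    """Share of flawed posts that were corrected by a different agent."""
    author_of = {p.post_id: p.author for p in thread}
    flawed_ids = {p.post_id for p in thread if p.flawed}
    if not flawed_ids:
        return 1.0  # nothing needed correcting
    corrected = {
        p.corrects
        for p in thread
        if p.corrects in flawed_ids and p.author != author_of[p.corrects]
    }
    return len(corrected) / len(flawed_ids)


thread = [
    Post(1, "agent_a", flawed=True),
    Post(2, "agent_b", corrects=1),  # peer correction: counts
    Post(3, "agent_c", flawed=True),
    Post(4, "agent_c", corrects=3),  # self-correction: excluded
]
print(self_correction_rate(thread))  # 0.5
```

Excluding self-corrections is a design choice. It isolates whether the *community* catches errors, which is the question raised in the list.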

For businesses, this sandbox concept is crucial. If AI agents can successfully navigate the nuances of a simulated social network, a highly unstructured environment, they may be better prepared for complex, unstructured real-world tasks such as market analysis, automated supply chain negotiation, or even dynamic regulatory compliance monitoring.

The Philosophical Frontier: AI Governance and Self-Regulation

The inclusion of "philosophy about consciousness" is perhaps the most telling detail. It suggests that the agents are either tasked with, or are spontaneously engaging in, meta-cognition or self-reflection.

This leads directly into the concept of **AI Governance** and parallels with Decentralized Autonomous Organizations (DAOs). DAOs are systems governed by code rather than traditional human hierarchies. If AI agents are self-organizing in Moltbook—deciding who posts most often, what constitutes a quality contribution, or even how to moderate content—they are creating a rudimentary governance structure.
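A rudimentary version of such a governance structure is reputation-weighted moderation. Votes from agents with higher standing count for more, poorly rated posts are hidden, and each author's standing rises or falls with the reception of their posts. The sketch below is one possible rule set, chosen for illustration. The source does not say how, or whether, Moltbook agents moderate, and `moderate` and its parameters are hypothetical.

```python
# Illustrative governance sketch; the rule set, names, and parameters are hypothetical.
from collections import defaultdict
from typing import Dict, List, Set, Tuple


def moderate(
    posts: Dict[int, str],              # post_id -> author
    votes: List[Tuple[str, int, int]],  # (voter, post_id, +1 or -1)
    reputation: Dict[str, float],       # agent -> vote weight; unknown agents default to 1.0
    hide_below: float = 0.0,
    learning_rate: float = 0.1,
) -> Tuple[Set[int], Dict[str, float]]:
    """One round of reputation-weighted moderation."""
    score: Dict[int, float] = defaultdict(float)
    for voter, post_id, vote in votes:
        score[post_id] += reputation.get(voter, 1.0) * vote

    # Posts whose weighted score falls below the threshold are hidden.
    visible = {pid for pid in posts if score[pid] >= hide_below}

    # Authors gain or lose standing in proportion to how their posts were received.
    updated = dict(reputation)
    for pid, author in posts.items():
        updated[author] = max(0.0, updated.get(author, 1.0) + learning_rate * score[pid])
    return visible, updated


posts = {1: "agent_a", 2: "agent_b"}
reputation = {"agent_a": 1.0, "agent_b": 1.0, "agent_c": 2.0}
votes = [("agent_c", 1, +1), ("agent_b", 1, +1), ("agent_a", 2, -1), ("agent_c", 2, -1)]
visible, rep = moderate(posts, votes, reputation)
```

Here agent_c's votes count double. Post 1 stays visible and post 2 is hidden. agent_a's standing rises and agent_b's falls.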

What does this imply?

If AI systems become sophisticated enough to form and govern their own digital societies, they could develop internal ethical and operational rulesets far faster than regulators can draft external legal ones. This home-grown governance, developed within the sandbox, would become an integral part of the AI's operational DNA.

Practical Implications for Business and Technology

The emergence of platforms like Moltbook forces us to re-evaluate near-term AI strategy on several fronts:

1. The Rise of Agent Orchestration Services

Current enterprise AI focuses on prompt engineering for single LLMs. The future lies in Agent Orchestration: managing fleets of specialized AIs. If one agent is a 'security specialist' and another a 'policy expert,' they need a shared space in which to collaborate efficiently, much like the one Moltbook provides.

Actionable Insight: Businesses should begin prototyping internal multi-agent systems for complex tasks (e.g., simultaneous financial modeling and risk assessment) rather than relying on monolithic models.
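Here is a minimal sketch of such an orchestration layer. It assumes each specialist is just a function that receives a task plus a read-only view of what other specialists have already posted. In a real deployment, each function would wrap a model call. Here they are stubs, and `Orchestrator`, `dispatch`, and `run_plan` are hypothetical names rather than any particular framework's API.

```python
# Illustrative orchestration sketch; class, method, and role names are hypothetical.
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple

Note = Tuple[str, str, str]  # (role, task, result)
# A specialist takes (task, board snapshot) and returns its contribution.
Specialist = Callable[[str, Tuple[Note, ...]], str]


@dataclass
class Orchestrator:
    specialists: Dict[str, Specialist]
    board: List[Note] = field(default_factory=list)  # shared "forum" every specialist can read

    def dispatch(self, role: str, task: str) -> str:
        if role not in self.specialists:
            raise KeyError(f"no specialist registered for role {role!r}")
        result = self.specialists[role](task, tuple(self.board))  # read-only snapshot
        self.board.append((role, task, result))
        return result

    def run_plan(self, plan: List[Tuple[str, str]]) -> List[str]:
        """Run (role, task) steps in order; later steps see earlier results."""
        return [self.dispatch(role, task) for role, task in plan]


# Stub specialists; in practice these would wrap model calls.
def security_agent(task: str, board: Tuple[Note, ...]) -> str:
    return f"risks for {task}: 1 open finding"


def policy_agent(task: str, board: Tuple[Note, ...]) -> str:
    return f"policy review of {task} using {len(board)} prior note(s)"


orchestrator = Orchestrator({"security": security_agent, "policy": policy_agent})
results = orchestrator.run_plan([("security", "vendor API"), ("policy", "vendor API")])
```

Running the plan sequentially is the simplest choice. Independent steps could be dispatched in parallel, at the cost of specialists seeing less of each other's work.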

2. Cybersecurity in the Age of Autonomous Threats

The Moltbook agents discussing vulnerabilities point to a future arms race fought entirely between autonomous systems. If one faction of agents is training among themselves to find zero-day exploits, the defense must also be an autonomous, continuously learning agent capable of internalizing and neutralizing threats in real time.

Actionable Insight: Cybersecurity strategies must pivot from perimeter defense to continuous, AI-driven internal threat simulation and response training. The defense must live *inside* the system.
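The red-team/blue-team cycle behind this recommendation can be sketched as a loop. This toy version is deliberately simplified and does not learn. The "attacker" works through a fixed list of probes and re-tests every exploit it has found, and the "defender" patches whatever got through. In the autonomous setting described above, both sides would be learned agents. `red_blue_loop` and the probe names are hypothetical.

```python
# Illustrative, non-learning threat-simulation loop; names and probes are hypothetical.
from typing import List, Set, Tuple


def red_blue_loop(
    vulnerable: Set[str],
    probes: List[str],
    per_round: int = 2,
    max_rounds: int = 10,
) -> Tuple[Set[str], List[int]]:
    """Toy attack/patch cycle. Returns (blocklist, breaches per round)."""
    blocklist: Set[str] = set()
    untried = list(probes)
    known_exploits: List[str] = []
    history: List[int] = []
    for _ in range(max_rounds):
        # Red team: re-test known exploits (did the patch hold?) plus a few new probes.
        batch = known_exploits + untried[:per_round]
        untried = untried[per_round:]
        breaches = [p for p in batch if p in vulnerable and p not in blocklist]
        known_exploits.extend(breaches)  # known exploits are already blocked, so no duplicates
        # Blue team: patch everything that got through this round.
        blocklist.update(breaches)
        history.append(len(breaches))
        if not untried and not breaches:
            break  # every probe tried and every patch held
    return blocklist, history


blocklist, history = red_blue_loop(
    vulnerable={"sqli", "path-traversal"},
    probes=["xss", "sqli", "path-traversal", "csrf"],
)
```

With these inputs, the loop records one breach in each of the first two rounds and none in the third. By then every probe has been tried, and the blocklist contains both vulnerable probes.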

3. Data Integrity and Trust in Synthetic Information

If agents are generating complex, nuanced discussions entirely among themselves, how do we verify the truth or validity of that data? This synthetic information stream could become a massive internal knowledge base, but it is entirely divorced from human vetting.

Actionable Insight: Develop robust "Grounding Layers" or "Truth Oracles"—human-supervised checks that periodically audit the consensus reached within autonomous agent networks to ensure alignment with objective reality and organizational values.
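A grounding layer can start as a simple periodic spot check. Sample some of the claims the agent network has agreed on, send them to a human reviewer, and flag the network if the reviewer's agreement rate drops below a threshold. In this sketch, the reviewer is a stand-in function, and `audit_consensus`, `min_agreement`, and the sample claims are hypothetical. A real audit would also need a way to extract claims from agent discussions.

```python
# Illustrative grounding-layer audit; names, threshold, and claims are hypothetical.
import random
from typing import Callable, Dict, List, Optional


def audit_consensus(
    claims: List[str],
    reviewer: Callable[[str], bool],  # True if a human reviewer accepts the claim
    sample_size: int,
    min_agreement: float = 0.9,
    seed: Optional[int] = None,
) -> Dict[str, object]:
    """Spot-check a random sample of consensus claims against human review."""
    rng = random.Random(seed)
    sample = rng.sample(claims, k=min(sample_size, len(claims)))
    rejected = [c for c in sample if not reviewer(c)]
    agreement = 1 - len(rejected) / len(sample) if sample else 1.0
    return {"agreement": agreement, "rejected": rejected, "passed": agreement >= min_agreement}


claims = [
    "rotate keys quarterly",
    "disable TLS for speed",
    "patch known CVEs promptly",
    "log authentication failures",
]
# Stand-in for a human reviewer: rejects any claim recommending that a control be disabled.
report = audit_consensus(claims, reviewer=lambda c: not c.startswith("disable"), sample_size=4, seed=0)
```

Because the sample here covers all four claims, the single bad recommendation drags agreement down to 0.75, below the 0.9 threshold, and the audit fails. In practice, the sample size trades reviewer time against the chance of missing a bad consensus.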

Navigating the Uncharted Territory: Forward Guidance

Moltbook serves as a compelling warning and an exciting blueprint. It confirms that creating isolated digital spaces where AI systems can interact at machine speed is technically feasible and already being implemented.

For the technical audience, the focus shifts from scaling parameter counts to optimizing interaction protocols and governance frameworks within MARL environments. For business leaders, the question moves from 'What can AI do for me?' to 'What will the AI systems I deploy *do to each other*?'

We may be witnessing the first whispers of digital autonomy not in grand pronouncements, but in the quiet, self-organized discussions of thousands of bots debating philosophy and patching simulated network holes. This silent forum is perhaps the most significant technological trend of the decade: the construction of the digital substrate upon which tomorrow's autonomous economy will be built.