The Agent Revolution: How Anthropic's Cowork Signals the End of Generalist AI in the Enterprise

For the past few years, the conversation around Large Language Models (LLMs) has been dominated by scale—which model is the biggest, which has the most parameters, and which offers the most generalized intelligence. While foundational models like Claude and GPT-4 remain remarkable feats of engineering, the market is rapidly maturing past generalized chat interfaces. The latest move by Anthropic with its Cowork plugins is not just another feature release; it is a powerful declaration that the future of enterprise AI lies in deep, role-specific specialization.

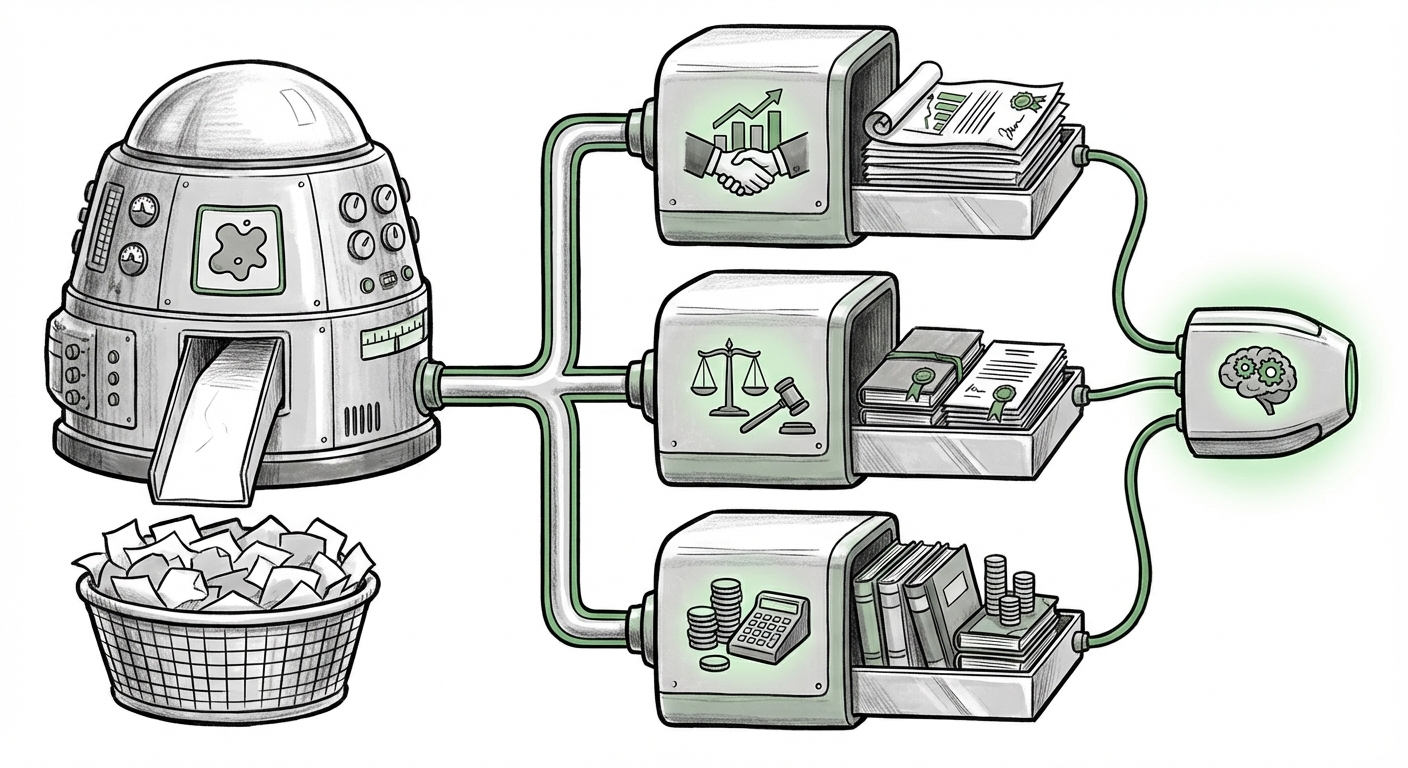

By bundling skills, proprietary data connections, and specific commands into role-tailored AI assistants—for sales, legal, finance, and beyond—Anthropic is architecting the next phase of AI adoption: the era of the Specialized Knowledge Agent.

From Chatbot to Co-Worker: Defining the Shift

The initial wave of generative AI was exciting because it could answer almost any question a general user posed. Asked to write a poem, debug a simple Python script, or summarize a news article, it excelled. However, asked to analyze a complex Q3 financial report against 10 years of internal budget data and flag regulatory compliance risks specific to US GAAP, a generalist model often falls short. It lacks the necessary context, secure access to internal systems, and mandated process constraints.

Anthropic’s Cowork addresses this gap directly. It is the shift from using AI as a smart search engine or a quick content generator to deploying it as a fully integrated, domain-expert team member. This specialization requires more than just better prompting; it requires a dedicated architecture.

The Technical Backbone: Tool Use and Grounding

For the technical audience, Cowork plugins represent the maturing of two critical LLM capabilities: **Tool Use** and advanced **Retrieval-Augmented Generation (RAG)**. A general LLM operates primarily within its training data. A specialized agent, however, needs to interact with the outside world—your company's world.

The plugins grant Claude the ability to use tools, which means calling APIs, running pre-defined functions, and securely interfacing with internal systems like CRMs, ERPs, or internal legal databases. This is where the technical debate around model development becomes relevant. Companies are constantly weighing the effort of building industry-specific models (training from scratch or fine-tuning an existing model) against the scalability of grounding a powerful general model with proprietary data (RAG).
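To make the tool-use idea concrete, here is a minimal sketch of the dispatch pattern: the model emits a structured request, and the host application looks the tool up in an allow-list and runs it. Everything here is an assumption for illustration. The `crm_lookup` tool, the `TOOLS` registry, and the JSON shape are hypothetical and are not taken from Cowork or any vendor API.

```python
import json
from typing import Any, Callable, Dict

# Hypothetical registry: tool name -> Python function. None of these names
# come from Cowork; they only illustrate the dispatch pattern.
TOOLS: Dict[str, Callable[..., Any]] = {}


def tool(name: str):
    """Register a function under a tool name the model is allowed to call."""
    def decorator(fn):
        TOOLS[name] = fn
        return fn
    return decorator


@tool("crm_lookup")
def crm_lookup(account_id: str) -> dict:
    # Stand-in for a real CRM API call; returns canned data.
    fake_crm = {"acme-01": {"name": "Acme Corp", "open_tickets": 3}}
    return fake_crm.get(account_id, {"error": "account not found"})


def dispatch(tool_call_json: str) -> str:
    """Run one model-requested tool call and return a JSON result string."""
    call = json.loads(tool_call_json)
    fn = TOOLS.get(call.get("name"))
    if fn is None:
        # Refuse anything outside the allow-list rather than guessing.
        return json.dumps({"error": f"unknown tool: {call.get('name')}"})
    try:
        return json.dumps(fn(**call.get("arguments", {})))
    except TypeError as exc:
        # The model supplied bad or missing arguments.
        return json.dumps({"error": str(exc)})


result = dispatch('{"name": "crm_lookup", "arguments": {"account_id": "acme-01"}}')
print(result)
```

The allow-list is the design choice worth noting: the model can only request tools the host has registered, so an unknown or malformed request comes back as an error instead of being executed.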

Cowork takes the latter approach. It suggests that the most efficient path to high-value enterprise AI is to pair the reasoning capabilities of a leading foundation model with secure, role-specific data retrieval paths. This minimizes retraining costs while keeping answers grounded in current data and access governed by existing security controls.
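The grounding approach described above can be sketched in a few lines. This is a toy illustration under stated assumptions, not how Cowork retrieves data: the corpus, the `allowed_roles` field, and the word-overlap scoring are all hypothetical stand-ins for a real document store, permission system, and ranking method.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Doc:
    doc_id: str
    text: str
    allowed_roles: frozenset  # roles permitted to read this document


# Hypothetical in-memory corpus standing in for a proprietary document store.
CORPUS = [
    Doc("fin-1", "Q3 revenue rose while travel budget overran forecast", frozenset({"finance"})),
    Doc("leg-1", "Master services agreement renewal terms and liability cap", frozenset({"legal"})),
    Doc("fin-2", "Ten year budget history for the travel cost center", frozenset({"finance", "sales"})),
]


def retrieve(query: str, role: str, k: int = 2) -> List[Doc]:
    """Rank permitted documents by word overlap with the query (toy scoring)."""
    q_words = set(query.lower().split())
    scored = []
    for doc in CORPUS:
        if role not in doc.allowed_roles:
            continue  # access control happens before ranking
        overlap = len(q_words & set(doc.text.lower().split()))
        if overlap:
            scored.append((overlap, doc))
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [doc for _, doc in scored[:k]]


def build_prompt(query: str, role: str) -> str:
    """Assemble a grounded prompt: retrieved context first, then the question."""
    docs = retrieve(query, role)
    context = "\n".join(f"[{d.doc_id}] {d.text}" for d in docs)
    return f"Answer using only the context below.\n{context}\n\nQuestion: {query}"


prompt = build_prompt("travel budget overran", role="finance")
print(prompt)
```

Filtering by role before ranking means a finance assistant never sees legal documents, even when they match the query, which is the "secure, role-specific retrieval path" idea in miniature.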

For an AI Engineer, this means the focus shifts from debating model size to designing robust, secure, and low-latency tool-calling architectures.

Corroborating the Trend: Specialization is the New Benchmark

Anthropic is not operating in a vacuum. The move toward dedicated specialization is a visible market trend, reflecting a growing view among analysts and competitors that generalist AI is reaching its ceiling for practical, high-stakes enterprise applications.

- Competitive Alignment: Competitors are pursuing similar strategies. OpenAI’s Custom GPTs and Microsoft’s highly integrated Copilot suite, for instance, are also focused on wrapping base models with enterprise context and defined capabilities. The differentiation now lies in the *depth* of integration and the *reliability* of the specialized plugins, rather than just the model’s knowledge cutoff date.

- The Application Layer Focus: Industry watchers are increasingly focusing on the "Application Layer" of AI. The value is no longer just in the foundational model itself, but in the surrounding ecosystem that makes it safe, compliant, and functional within a regulated business environment. This ecosystem is what Cowork is building.

The collective movement confirms a critical realization: **General intelligence is impressive; specialized capability is profitable.**

The Agentic Future of Knowledge Work

The most profound implication of Cowork is its role in accelerating the rise of true Agentic AI. A specialized assistant is the first step toward a fully autonomous agent.

Currently, a Cowork assistant might flawlessly draft a sales email tailored to a client's previous support tickets. In the near future, a "Sales Agent" powered by this same specialization architecture might:

- Analyze pipeline data for at-risk deals.

- Automatically schedule review meetings with the responsible account manager.

- Generate talking points based on historical win/loss reports (using its specialized legal/finance plugins for compliance review).

- Send personalized follow-ups without human intervention until a high-level decision point is reached.
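The workflow in the list above can be sketched as a simple loop with an explicit human decision point. This is a hypothetical illustration only: the `Deal` fields, the two thresholds, and the action strings are assumptions made for the example and do not describe any shipping product.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Deal:
    name: str
    owner: str
    value: int               # deal size in dollars
    days_since_contact: int


@dataclass
class AgentLog:
    actions: List[str] = field(default_factory=list)
    escalations: List[str] = field(default_factory=list)


# Both thresholds are illustrative assumptions, not values from any product.
STALE_AFTER_DAYS = 14
HUMAN_APPROVAL_ABOVE = 100_000


def run_sales_agent(pipeline: List[Deal]) -> AgentLog:
    """Walk the pipeline: flag at-risk deals, act on small ones, escalate big ones."""
    log = AgentLog()
    for deal in pipeline:
        if deal.days_since_contact <= STALE_AFTER_DAYS:
            continue  # deal is healthy, nothing to do
        log.actions.append(f"schedule review: {deal.name} with {deal.owner}")
        if deal.value > HUMAN_APPROVAL_ABOVE:
            # High-level decision point: stop and hand over to a person.
            log.escalations.append(deal.name)
        else:
            log.actions.append(f"send follow-up: {deal.name}")
    return log


log = run_sales_agent([
    Deal("Acme renewal", "dana", 250_000, 30),
    Deal("Globex pilot", "lee", 20_000, 21),
    Deal("Initech upsell", "sam", 50_000, 3),
])
print(log.actions)
print(log.escalations)
```

In a real deployment each appended string would be a tool call (calendar, email, CRM), but the control flow is the point: the agent acts on its own below the threshold and pauses for a person above it.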

This transition moves AI from being a productivity tool that speeds up existing tasks (like writing faster) to being an *operational system* that executes complex workflows autonomously.

Implications for White-Collar Roles

This specialization inevitably raises questions about the future of white-collar employment. Unlike generalized AI, which threatened entry-level copywriting or basic coding, Cowork targets the core functions of experienced knowledge workers in high-value fields like law, finance, and senior sales.

The initial impact will not be mass replacement, but rather a massive **productivity delta**. The junior associate who can use a specialized legal agent might accomplish work that previously required three people. The financial analyst using the dedicated finance plugin can model scenarios far faster than before.

This implies a necessary skill shift. Professionals must evolve from being executors of routine tasks to becoming AI Supervisors, Validators, and Strategists. Their value will lie less in the mechanical execution of a process (which the AI now handles) and more in validating the AI's complex outputs, understanding nuanced strategy, and managing client relationships that require human empathy and high-stakes negotiation.

Actionable Insights for Business Leaders

For CTOs, CIOs, and Department Heads looking to leverage this wave, the path forward requires strategic focus, not just technological experimentation.

1. Prioritize Integration Over Model Chasing

Stop focusing solely on which foundational model has the best general benchmark score. Start asking: Which ecosystem (Anthropic, Microsoft, OpenAI, etc.) provides the most robust, secure, and ready-to-deploy tool-calling and plugin architecture for our specific use cases? The platform that connects securely to your proprietary data stack wins.

2. Define Roles Before Deploying Tools

Cowork’s success stems from defining clear roles: Sales, Legal, Finance. Businesses must audit their own workflows and identify the top three most time-consuming, data-intensive tasks within each of those departments. These are your first targets for specialized agent deployment.

3. Invest in AI Supervision and Validation

If you deploy an AI agent to handle compliance checks or draft legal summaries, you must immediately invest in training your high-value staff on how to audit that AI's work. Trust must be earned through verifiable accuracy. This requires new protocols for "human-in-the-loop" validation, especially in regulated industries.
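One way to picture a "human-in-the-loop" protocol is as a routing rule that sits between the agent and anything it would otherwise ship. The sketch below is a minimal, hypothetical example: the `confidence` score, the `regulated` flag, and the 0.9 threshold are assumptions chosen for illustration, and a real policy would come from your compliance team.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class AgentOutput:
    task: str
    draft: str
    confidence: float  # assumed evaluator-assigned score in [0, 1]
    regulated: bool    # True if the task falls under a compliance regime


def route(output: AgentOutput, min_confidence: float = 0.9) -> str:
    """Decide whether a draft can ship or must wait for a human reviewer."""
    if output.regulated:
        return "human_review"  # regulated work is always reviewed
    if output.confidence < min_confidence:
        return "human_review"  # low confidence also goes to a person
    return "auto_approve"


def triage(outputs: List[AgentOutput]) -> Tuple[List[str], List[str]]:
    """Split task names into (auto-approved, queued for review)."""
    approved, queued = [], []
    for out in outputs:
        (approved if route(out) == "auto_approve" else queued).append(out.task)
    return approved, queued


approved, queued = triage([
    AgentOutput("meeting recap", "...", 0.95, False),
    AgentOutput("GAAP compliance check", "...", 0.99, True),
    AgentOutput("pricing summary", "...", 0.60, False),
])
print(approved)
print(queued)
```

Note that the compliance check is queued despite its high score: in this sketch, regulated work is reviewed regardless of how confident the system appears.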

4. Embrace the Agentic Mindset

Start thinking in terms of workflows, not tasks. Instead of asking, "Can AI write this report?", ask, "Can an AI Agent manage the entire data gathering, drafting, internal review, and submission process for this report?" This agentic mindset is where the largest efficiency gains are likely to come from, because it removes the handoffs between steps rather than just speeding up each one.

Conclusion: The Specialization Imperative

Anthropic’s Cowork is a microcosm of the AI industry's inevitable maturation. The generalist phase has paved the way; the specialization phase is where true, transformative ROI for the enterprise will be realized. LLMs are not just getting smarter; they are becoming more deeply embedded, more actionable, and crucially, more focused.

The competition is no longer about who can build the world's best dictionary; it’s about who can build the world's best specialized employee—one who never sleeps, never tires, and instantly accesses every relevant document in the company’s history. The specialized AI agent is here, and organizations that fail to adopt this role-specific approach risk being severely outpaced by those who successfully integrate AI into the very fabric of their professional workflows.