The Context Crucible: Why OpenAI's 6-Layer Data Agent Marks the Real Enterprise AI Revolution

The public discussion around Large Language Models (LLMs) often centers on headline-grabbing capabilities: generating poetry, writing basic code, or passing standardized tests. But behind the scenes, the real battle for AI supremacy is being waged over data access. A recent development from OpenAI—the creation of an internal AI data agent capable of navigating a staggering 600 petabytes of proprietary information using a sophisticated, six-layer context system—is not just an internal productivity boost; it is a massive signal flare indicating the direction of the next industrial wave of artificial intelligence.

This shift moves AI from being a general knowledge tool to becoming a deeply specialized, context-aware corporate brain. For both technical leaders and business executives, understanding this transition is paramount. This analysis explores the technical underpinnings, the competitive necessity, and the vast implications of mastering proprietary data at petabyte scale.

The Core Innovation: Beyond Simple Prompting to Deep Context

When you ask ChatGPT a question, it relies primarily on its pre-trained knowledge, a largely static snapshot of public data up to its training cut-off. When an OpenAI employee asks their internal agent about a complex, unpublished feature, the agent must do something far more advanced: it must *understand* the company's living, breathing codebase, database structures, and internal documents.

The key technique mentioned is "Codex Enrichment," which actively crawls the codebase to determine what database tables actually contain—a monumental task requiring deep semantic understanding of structure and meaning. This is where the six-layer context system comes into play.

Layered Context: An Analogy for Understanding

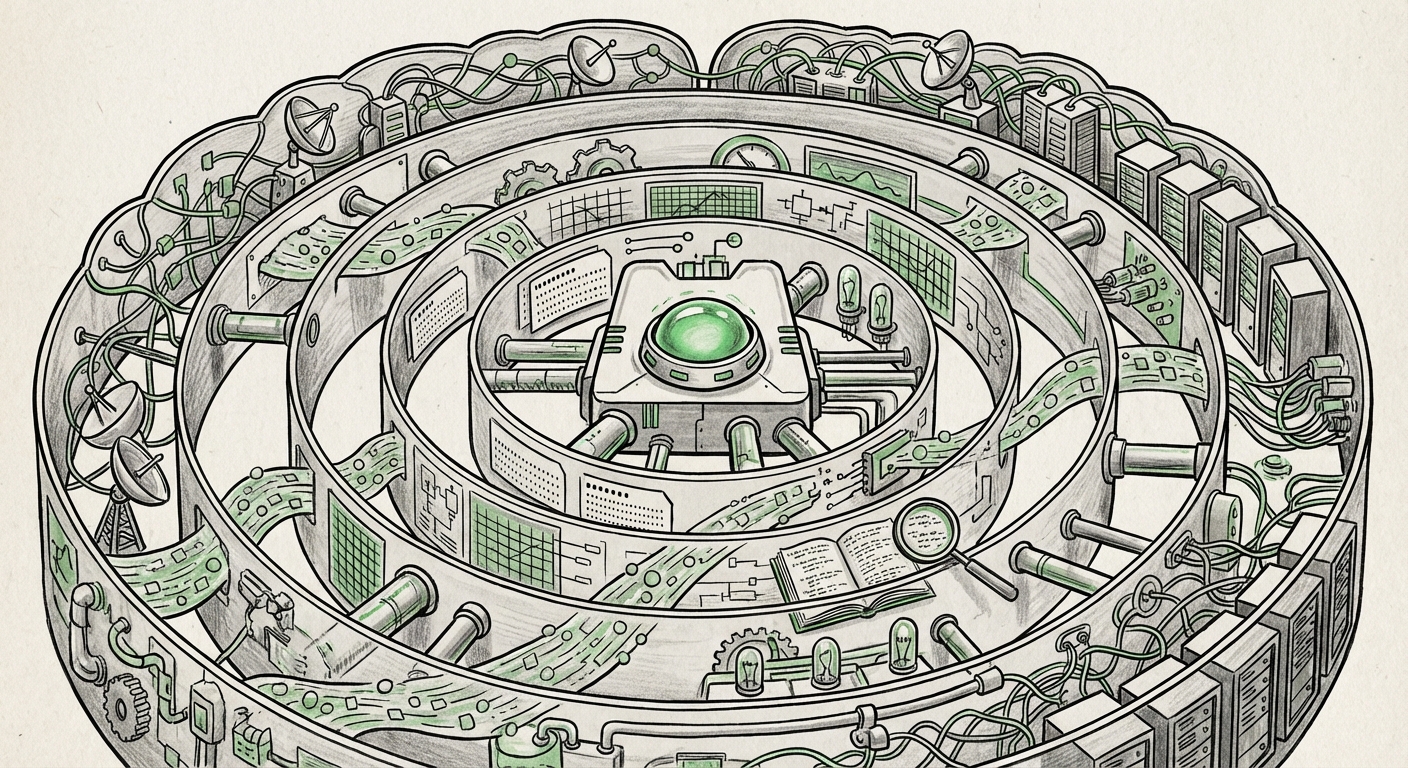

Imagine trying to find a specific tool in a massive, disorganized warehouse (the 600 petabytes). A basic keyword search (simple prompting) would point you to thousands of boxes labeled "hammers" without telling you which one holds the exact size you need.

OpenAI's system acts like an expert foreman with multiple layers of understanding:

- Layer 1 (Surface Search): Identifies keywords related to the query.

- Layer 2 (Schema Mapping): Matches keywords to database or code structure names.

- Layer 3 (Codex Enrichment): Analyzes what that code or table actually contains to confirm its purpose (e.g., "This table isn't about users; it's about billing history").

- Layer 4+ (Hierarchical Indexing): Navigates relationships between different data sets, looking across services or repositories.

This layered approach ensures the AI retrieves not just related *words*, but contextually accurate *knowledge* relevant to the specific inquiry. This technical sophistication directly addresses the primary challenge of enterprise AI: the context gap between what the model knows generally and what the business needs specifically.
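The layers listed above can be sketched as a small pipeline. This is a minimal illustration under stated assumptions, not OpenAI's implementation: the catalog, table names, descriptions, and every function name here are hypothetical, and the "enriched" descriptions are simply hard-coded where a real system would derive them from the codebase.

```python
import re
from dataclasses import dataclass, field
from typing import Dict, List, Set

STOPWORDS = {"a", "an", "the", "for", "of", "what", "is", "show", "me"}


@dataclass
class TableInfo:
    name: str
    columns: List[str]
    description: str  # hypothetical output of an enrichment step
    related: List[str] = field(default_factory=list)


# Toy catalog; every entry is invented for illustration.
CATALOG: Dict[str, TableInfo] = {
    "user_events": TableInfo(
        "user_events", ["user_id", "amount", "invoice_id"],
        "billing history per user, one row per invoice", ["invoices"]),
    "users": TableInfo(
        "users", ["user_id", "email"], "account profile records"),
    "invoices": TableInfo(
        "invoices", ["invoice_id", "total"], "issued invoices and totals"),
}


def tokens(text: str) -> Set[str]:
    return set(re.findall(r"[a-z]+", text.lower()))


def surface_search(query: str) -> Set[str]:
    """Layer 1: pull keywords out of the query."""
    return tokens(query) - STOPWORDS


def schema_mapping(keywords: Set[str], catalog: Dict[str, TableInfo]) -> List[str]:
    """Layer 2: match keywords against table and column names."""
    hits = []
    for table in catalog.values():
        names = [table.name] + table.columns
        if any(kw in name for kw in keywords for name in names):
            hits.append(table.name)
    return hits


def enrichment_filter(keywords: Set[str], candidates: List[str],
                      catalog: Dict[str, TableInfo]) -> List[str]:
    """Layer 3: keep candidates whose description matches the query's intent."""
    scored = []
    for name in candidates:
        overlap = len(keywords & tokens(catalog[name].description))
        if overlap:
            scored.append((overlap, name))
    return [name for _, name in sorted(scored, reverse=True)]


def expand_related(tables: List[str], catalog: Dict[str, TableInfo]) -> List[str]:
    """Layer 4+: follow relationships to neighbouring data sets."""
    result = list(tables)
    for name in tables:
        for rel in catalog[name].related:
            if rel not in result:
                result.append(rel)
    return result


def retrieve(query: str, catalog: Dict[str, TableInfo] = CATALOG) -> List[str]:
    keywords = surface_search(query)
    candidates = schema_mapping(keywords, catalog)
    confirmed = enrichment_filter(keywords, candidates, catalog)
    return expand_related(confirmed, catalog)


print(retrieve("billing history for a user"))  # -> ['user_events', 'invoices']
```

Note how the name-based match in Layer 2 also returns `users`, and only the description check in Layer 3 discards it. That is the "this table isn't about users" correction from the list above, in miniature.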

Corroboration: The Industry Embraces Advanced Retrieval

OpenAI’s move is an internal demonstration of a technology rapidly evolving across the industry: Retrieval-Augmented Generation (RAG). RAG architectures are necessary because continually retraining massive models on fresh, proprietary data is prohibitively expensive and slow.

The RAG Scalability Imperative

Technical discussion of RAG scalability for proprietary enterprise data makes one point clear: production RAG requires far more than vector search. It needs sophisticated indexing, chunking, and retrieval strategies to handle enterprise reality: messy, deeply interconnected, and massive data.

Industry analysis suggests that multi-layered or hierarchical indexing systems are emerging as essential solutions. Why? Because a single vector search often fails when answering requires combining information from multiple disparate sources (e.g., "What was the Q3 sales performance for Product X, *and* what infrastructure teams supported its deployment?"). OpenAI’s six-layer system appears to be an extreme, proprietary optimization of these very techniques, pushing the boundaries of what is possible with real-time contextual grounding.
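To see why the Product X question resists a single lookup, here is a minimal sketch of a multi-hop answer. All three data sources, their contents, and the function name are invented for illustration. The point is only that the "teams" half of the answer is reachable solely by hopping from product to services to owners.

```python
from typing import Dict, List, Optional, Tuple

# Toy stand-ins for three separate systems; all values are made up.
SALES: Dict[Tuple[str, str], int] = {("Product X", "Q3"): 1_200_000}
DEPLOYMENTS: Dict[str, List[str]] = {"Product X": ["checkout-api", "pricing-svc"]}
SERVICE_OWNERS: Dict[str, str] = {
    "checkout-api": "Platform Infra",
    "pricing-svc": "Data Infra",
}


def answer_compound_question(product: str, quarter: str) -> dict:
    # Sub-query 1: a direct lookup in the sales source.
    sales: Optional[int] = SALES.get((product, quarter))

    # Sub-query 2, hop 1: which services deployed this product?
    services = DEPLOYMENTS.get(product, [])

    # Sub-query 2, hop 2: which teams own those services?
    teams = sorted({SERVICE_OWNERS[s] for s in services if s in SERVICE_OWNERS})

    return {"sales": sales, "teams": teams}


print(answer_compound_question("Product X", "Q3"))
# -> {'sales': 1200000, 'teams': ['Data Infra', 'Platform Infra']}
```

No single record in this toy setup mentions both "Product X" and a team name, so a retriever that returns only the nearest chunk to the question cannot produce the second half of the answer.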

The Competitive Race for the 'Internal Oracle'

This breakthrough is also a strategic necessity in the high-stakes competition among AI superpowers. If OpenAI can rapidly empower its engineers with deep, queryable knowledge, it creates a force multiplier that rivals must match.

Big Tech's Internal Data Wars

Competitive developments point to the same race. Microsoft, heavily integrated into the enterprise via Azure and GitHub, is aggressively pushing Copilot across its development lifecycle. Google similarly leverages Gemini to synthesize knowledge from its vast internal repositories.

What differentiates OpenAI’s reported system is the sheer scale (600 petabytes is immense) and the focus on **code and schema enrichment**. In the context of an AI company, the code *is* the definitive knowledge base. For Microsoft or Google, their internal tools must master their unique mixtures of code, design documents, and internal policies.

The implication for competitors is stark: simply applying off-the-shelf RAG is insufficient. Every major player must develop bespoke, multi-layered context engines tailored to the complexity and sensitivity of their proprietary assets to maintain an edge in research velocity and operational efficiency.

The Petabyte Problem: Engineering at the Edge of Scale

Six hundred petabytes is not just a large number; it represents an infrastructural Everest. A single petabyte is 1,000 terabytes. Storing and searching this volume efficiently for complex natural language queries presents unique engineering hurdles that shift the focus from model training to data pipeline reliability.
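A quick back-of-the-envelope calculation makes the scale concrete. The conversion uses the decimal definition given above. The scan throughput is purely an assumed figure chosen for illustration, not a reported number.

```python
PETABYTE = 10**15  # bytes, decimal (SI) definition
TERABYTE = 10**12  # bytes

corpus_bytes = 600 * PETABYTE
corpus_terabytes = corpus_bytes // TERABYTE  # 600,000 TB


def full_scan_days(total_bytes: int, throughput_bytes_per_s: int) -> float:
    """Time to read every byte once at a given aggregate throughput."""
    seconds = total_bytes / throughput_bytes_per_s
    return seconds / 86_400  # seconds per day


# Assumption: an aggregate read rate of 1 TB/s across the whole cluster.
ASSUMED_THROUGHPUT = 1 * TERABYTE
days = full_scan_days(corpus_bytes, ASSUMED_THROUGHPUT)

print(f"{corpus_terabytes:,} TB; full scan at 1 TB/s takes about {days:.1f} days")
```

Even at that generous assumed rate, reading everything once takes roughly a week, which is why answering a question must depend on indexes and metadata rather than on scanning the data itself.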

Infrastructure: The Unsung Hero of Enterprise AI

The engineering challenges of indexing petabytes of data for AI show the bottleneck moving away from raw compute power toward efficient indexing and retrieval latency. Traditional relational databases alone struggle to deliver the semantic, low-latency lookups that conversational AI requires. This pushes organizations toward modern architectures, often involving high-performance vector databases and graph databases designed specifically to map relationships across vast, unstructured data stores.

For the CIO or infrastructure lead, OpenAI’s internal success suggests that the *governance and indexing* of data are as critical as the model itself. If an AI agent takes 30 seconds to return an answer because it is traversing petabytes of data layers, its utility plummets. The six-layer system must therefore be optimized for near-instantaneous, multi-hop traversal, a significant feat in data engineering.

Future Implications: From Productivity to Democratization

What do these trends mean for the broader AI landscape? They point toward the democratization of specialized expertise, driven by contextual mastery.

1. The Death of the "Data Silo"

The fundamental purpose of this agent is to dissolve data silos. In large corporations, crucial knowledge is often trapped in undocumented spreadsheets, legacy code comments, or highly technical reports accessible only to specific departments (e.g., Finance, Legal, Core Engineering). An agent that can natively query these silos using natural language means that a new hire in marketing can, in theory, ask complex questions that previously required a database administrator with a decade of institutional knowledge.

2. The Rise of the 'AI Analyst' Role

This technology enables a new class of employee: the AI Analyst. These individuals are not necessarily expert coders or data scientists, but they are masterful at framing complex, multi-step queries that leverage the AI agent's deep context. Their value lies not in executing the analysis, but in *asking the right questions* of the newly accessible corporate intelligence.

3. Security and Contextual Sandboxing

With access to 600 petabytes comes unprecedented risk. The six-layer system must inherently incorporate robust security checks at every layer. If the system can find data, it must also know *who* is allowed to see it. Future enterprise deployments will necessitate context-aware security protocols—the AI agent must dynamically apply user permissions to the retrieval process, ensuring sensitive project data is only surfaced to authorized personnel.
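One way to picture permission-aware retrieval is to filter by access rights before any matching happens, so restricted documents never enter the candidate set. The sketch below is an assumption about how such a check could look, with a hypothetical `Document` type, made-up group names, and naive keyword matching standing in for a real retriever.

```python
from dataclasses import dataclass
from typing import FrozenSet, List, Set


@dataclass(frozen=True)
class Document:
    doc_id: str
    text: str
    allowed_groups: FrozenSet[str]  # groups permitted to read this document


def retrieve_for_user(query: str, user_groups: Set[str],
                      corpus: List[Document]) -> List[str]:
    query_terms = set(query.lower().split())

    # Apply permissions first: documents the user cannot read are dropped
    # before matching, so they cannot influence or leak into results.
    visible = [d for d in corpus if d.allowed_groups & user_groups]

    # Naive keyword match stands in for the real retrieval layers.
    return [d.doc_id for d in visible
            if query_terms & set(d.text.lower().split())]


# Toy corpus; contents and group names are invented.
corpus = [
    Document("d1", "launch plan for project atlas", frozenset({"marketing", "eng"})),
    Document("d2", "project atlas unreleased pricing", frozenset({"finance"})),
]

print(retrieve_for_user("project atlas", {"marketing"}, corpus))  # -> ['d1']
print(retrieve_for_user("project atlas", {"finance"}, corpus))    # -> ['d2']
```

Filtering before retrieval, rather than redacting afterwards, is a deliberate choice in this sketch: a document that is never retrieved cannot end up summarized in an answer by accident.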

Actionable Insights for Business Leaders

For organizations looking to harness the power demonstrated by OpenAI, three immediate actions are required:

- Audit Your Context Gap: Identify your most critical, yet inaccessible, proprietary data stores (codebases, specialized research logs, complex financial models). These are your highest-value targets for future AI integration.

- Invest in Metadata and Schema: The "Codex Enrichment" success suggests that raw data isn't enough; its structure must be rigorously understood. Prioritize projects that clean, document, and map your data schemas, as this metadata is the fuel for advanced RAG layers.

- Pilot Hybrid Retrieval Systems: Do not wait for the next generation of foundational models. Start piloting advanced RAG systems now, focusing on multi-hop retrieval that forces the AI to synthesize answers from multiple document chunks, mimicking the layered approach.
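For teams piloting hybrid retrieval, one common way to combine a keyword ranking with a vector ranking is reciprocal rank fusion (RRF). The source does not say which fusion method OpenAI uses, if any. This is a swapped-in, widely used technique shown as a minimal sketch, with hypothetical document IDs and the conventional constant `k = 60`.

```python
from typing import Dict, List


def reciprocal_rank_fusion(rankings: List[List[str]], k: int = 60) -> List[str]:
    """Fuse several ranked lists: each document scores sum(1 / (k + rank))."""
    scores: Dict[str, float] = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    # Highest fused score first; ties broken alphabetically for determinism.
    return sorted(scores, key=lambda d: (-scores[d], d))


# Hypothetical outputs of two retrievers for the same query.
keyword_ranking = ["a", "b", "c"]
vector_ranking = ["b", "c", "a"]

print(reciprocal_rank_fusion([keyword_ranking, vector_ranking]))
# -> ['b', 'a', 'c']
```

Because RRF works on ranks rather than raw scores, it needs no calibration between the two retrievers, which makes it a low-effort starting point for a pilot.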

The race for AI dominance is no longer about who has the biggest model; it is about who can build the most effective interface between that massive model and the massive, messy reality of proprietary business data. OpenAI’s internal agent, with its complex context layering, is setting the engineering benchmark for this new, data-centric era of artificial intelligence.