The Learning Cliff: Why AI Coding Tools Demand a New Pedagogy to Prevent Skill Erosion

The promise of generative AI in the workplace is intoxicating: instant answers, rapid prototyping, and a massive boost to developer productivity. Yet a recent finding from Anthropic casts a necessary shadow over this optimism. Their study found that developers who learned new programming skills with AI assistance scored significantly worse on subsequent knowledge tests than those who learned without it, unless, critically, they stopped to ask the AI why the code worked.

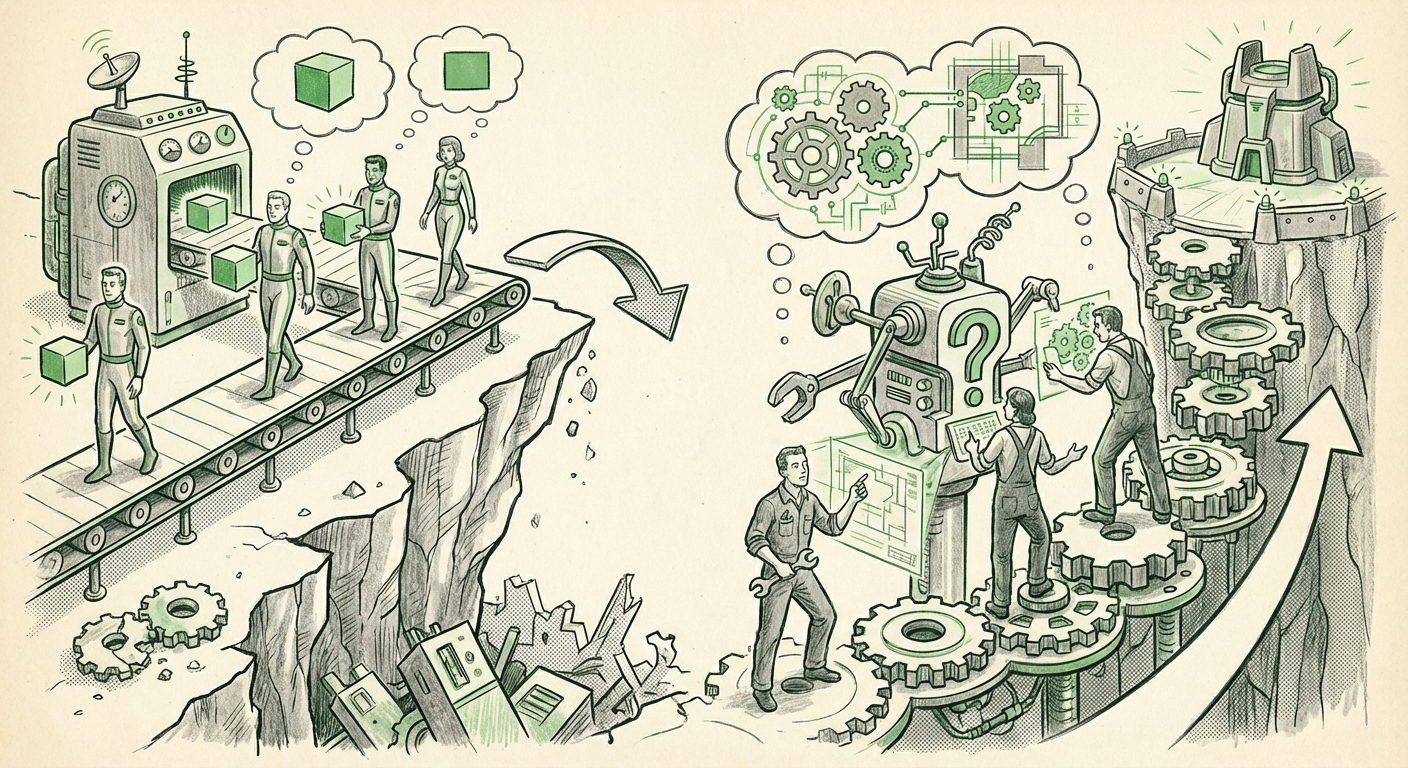

This isn't a failure of the tool; it’s a revelation about human cognition in the age of automation. We are standing at a critical inflection point where the drive for immediate productivity clashes directly with the need for deep, durable skill acquisition. As an AI technology analyst, I see this as the first major, empirical signal of what I term the "Learning Cliff"—the point where easy access to an answer causes the brain to skip the hard work necessary for true understanding.

The Psychology of Offloading: Why Asking 'Why' Matters

When a developer asks an AI tool (like GitHub Copilot or ChatGPT) to write a complex function, the immediate result is often clean, working code. This feels like success. However, if the developer merely copies, pastes, and moves on, they have bypassed the crucial cognitive struggle required to map the problem space, choose the right algorithms, and debug the logic themselves. Handing that mental work to an external tool is known as cognitive offloading.

We have seen this before with technology. When GPS became ubiquitous, many drivers lost the habit of reading maps or navigating without constant verbal instruction. The Anthropic finding suggests that LLMs represent a far more potent form of cognitive offloading: we are offloading not just rote calculation, but reasoning. Without that mental friction, the new skill doesn't embed in long-term memory.

Related writing on cognitive offloading and large language models points the same way: convenience trades off against mastery. The AI becomes a highly effective, but ultimately superficial, crutch. The key takeaway from the Anthropic work is the intervention: the moment the user is forced to engage with the *rationale* (asking "why"), the learning process snaps back into focus. The AI transitions from being an answer generator to a Socratic partner.

The Corporate Training Gap: Productivity vs. Mastery

For engineering leaders and CTOs, the Anthropic study is a flashing warning sign. Many organizations have rushed to deploy AI coding assistants across the board, hoping for an immediate 30-50% productivity bump. While that surface-level boost might appear on metrics like lines of code written, this research suggests we are simultaneously creating a workforce potentially less capable of handling novel, complex, or broken systems where the AI cannot help.

We must look at how this affects the development pipeline. Discussion of AI coding assistants and corporate training gaps keeps returning to one real fear: how do we effectively onboard junior developers? A new hire relying entirely on AI for their first year might become proficient at implementing known patterns but utterly incapable of architectural design or deep system debugging.

This leads directly to the **Developer Productivity Paradox**: You can increase the speed at which people produce *code*, but if you degrade the foundational *competence* of the people producing it, you introduce systemic fragility. When the system inevitably fails in a way the LLM hasn't been trained on, you need engineers who know the fundamentals deeply enough to take over. If everyone is trained via prompt-and-paste, who fills the gap?

The Future Roadmap: Engineering AI for Pedagogy, Not Just Production

The analysis must pivot from problem identification to prescriptive action. If the harm is done by passive consumption, the cure lies in structured, active engagement. This brings us to the vital area of AI as a **Socratic Tutor**.

The most forward-thinking developers and educators are already exploring this countermeasure through advanced **prompt engineering for learning**. Instead of simply asking, "Write function X," the learning-focused prompt structure is: "I need function X. Before you write it, outline the three primary data structures you might use and explain the trade-offs. Then, write the code using the structure you recommend, and finally, generate three edge cases I should test for."

This approach shifts the AI's role from a magical answer box to an interactive teacher. It forces the user to consciously engage with the problem space, mirroring the active learning that happens when a human mentor challenges a junior colleague. For businesses, this means the next wave of AI integration won't be about deploying the tool widely, but about deploying specific training protocols built around the tool.
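As a rough illustration of the learning-focused prompt structure described above, the sketch below wraps a plain request in the three steps quoted earlier (outline options and trade-offs, write the code, list edge cases). The function name `build_socratic_prompt`, its parameters, and the exact wording are hypothetical and chosen only for this example; it builds a string and does not call any particular AI tool.

```python
def build_socratic_prompt(task: str, n_options: int = 3, n_edge_cases: int = 3) -> str:
    """Wrap a plain coding request in a learning-focused prompt.

    Hypothetical helper: the name, parameters, and wording are illustrative,
    loosely following the example prompt quoted in the article.
    """
    task = task.strip()
    if not task:
        raise ValueError("task must be a non-empty description")

    # The three steps mirror the article's example: reason first, code second,
    # testing last.
    steps = [
        f"Before you write any code, outline the {n_options} primary data "
        "structures you might use and explain the trade-offs.",
        "Then write the code using the structure you recommend.",
        f"Finally, generate {n_edge_cases} edge cases I should test for.",
    ]
    numbered = "\n".join(f"{i}. {step}" for i, step in enumerate(steps, start=1))
    return f"I need {task}.\n{numbered}"


if __name__ == "__main__":
    # Example request; any task description could be substituted here.
    print(build_socratic_prompt("a function that deduplicates a list of user records"))
```

Keeping the template in code rather than retyping it is one possible way to make the "ask why first" habit the default; teams would likely adapt the steps to their own review practices.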

Contextualizing the Shift: The Broader Automation Complacency Trend

This phenomenon is not exclusive to coding. In fields from medicine to logistics, increasing automation can lead to **automation complacency**, where human operators stop monitoring the system actively because it usually works flawlessly. When the rare failure occurs, the operator misses the critical cues because their vigilance has been dulled by months of routine success.

In software, the failure might not be a catastrophic outage, but a subtle security vulnerability introduced by boilerplate AI code, or an inefficient architectural decision that costs millions in cloud resources years down the line. Industry analysis of software engineering trends will need to track whether we are preparing for an era of faster, yet shallower, software development.

The core implication for the future of AI is this: The next generation of successful AI tools will not simply be the best coders; they will be the best **coaches**. The value proposition will shift from "AI that writes code for you" to "AI that makes you a better, deeper engineer faster."

Practical Implications and Actionable Insights

Based on this synthesis of findings—from cognitive science to practical training—here are actionable steps for individuals and organizations:

For Developers (Individual Growth):

- The 80/20 Rule of AI: Use AI for the 80% that is repetitive (boilerplate, syntax checks, simple transformations). Spend the crucial 20% of your time wrestling with the novel, complex, or core architectural problems yourself.

- Mandate the "Why": Never accept generated code without demanding an explanation of the underlying logic. If the AI can't explain it clearly, or you can't follow the explanation, you don't understand the code well enough to commit it.

- Use AI as a Reverse Tutor: After writing a piece of code yourself, ask the AI to critique it, suggest improvements, or point out potential hidden bugs. This tests your understanding against an expert model.

For Engineering Leadership (Organizational Strategy):

- Revamp Onboarding: Prohibit the heavy use of AI coding assistants for new hires (especially entry-level) during their initial 3-6 months. Force them to build foundational muscle memory manually.

- Train on Prompt Pedagogy: Implement mandatory internal training on "Socratic Prompting" for all developers. Treat the prompt itself as a design artifact that maximizes learning, not just output.

- Measure Deeper Metrics: Supplement productivity scores with skills assessment tests designed to probe conceptual understanding, not just task completion speed. If your performance review favors speed over substance, the Learning Cliff will claim your best talent.

Conclusion: Architecting a Smarter Partnership

The Anthropic study serves as a vital corrective lens. It reminds us that technology is only as powerful as the human intelligence that directs it. We are not replacing engineers with AI; we are building a new partnership structure. If we allow AI to become a shortcut around effort, we risk fostering a generation of highly productive, yet fundamentally fragile, technical professionals.

The future of AI integration is not about minimizing human effort; it is about maximizing human comprehension. Success will belong to the organizations and individuals who treat these powerful LLMs not as servants that deliver final answers, but as demanding, interactive mentors that require rigorous questioning to unlock true, deep learning. Mastering the art of the thoughtful question—the "why"—is the essential new skill for the AI era.