The Great Schism in AI: Why DeepMind Pioneers Believe LLMs Alone Won't Reach Superintelligence

The current artificial intelligence landscape is dominated by a singular, powerful narrative: scaling up Large Language Models (LLMs) will eventually lead us to Artificial General Intelligence (AGI). This belief, fueled by astonishing advances in chatbots and generative systems, drives billions in investment. However, a significant tremor just shook the foundation of this consensus.

David Silver, one of the intellectual titans behind DeepMind’s world-conquering systems like AlphaGo and AlphaZero, is leaving to found a new startup based on the premise that LLMs alone are insufficient for AGI. The news is more than just an executive change; it signals a potential Great Schism in high-level AI research.

The LLM Ceiling: Prediction vs. Understanding

To understand Silver’s move, we must first look at the core capability of the transformer architecture powering models like GPT and Gemini. These models are essentially hyper-sophisticated next-token predictors. They learn patterns, grammar, and vast amounts of factual data, allowing them to produce astonishingly coherent and seemingly intelligent text.
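To make "next-token predictor" concrete, here is a minimal sketch in Python. It is a toy bigram model that only counts which word follows which, not a transformer; the corpus and the function names `train_bigram` and `predict_next` are invented for illustration.

```python
from collections import Counter, defaultdict
from typing import Optional


def train_bigram(corpus: str) -> dict:
    """Count, for every word, which words follow it in the corpus."""
    counts = defaultdict(Counter)
    tokens = corpus.lower().split()
    for current, following in zip(tokens, tokens[1:]):
        counts[current][following] += 1
    return counts


def predict_next(counts: dict, token: str) -> Optional[str]:
    """Return the most frequent follower of `token`, or None if unseen."""
    followers = counts.get(token.lower())
    if not followers:
        return None
    return followers.most_common(1)[0][0]


# Toy corpus, purely illustrative.
corpus = "the cat sat on the mat . the cat ate the fish ."
model = train_bigram(corpus)

print(predict_next(model, "the"))      # most frequent follower of "the"
print(predict_next(model, "unicorn"))  # a word the model has never seen
```

The toy model can only echo patterns present in its corpus, and it has nothing to say about a word it has never seen. Real LLMs generalize far better than this, but they are trained on the same kind of objective: predict what comes next.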

For many in the industry, scaling up this kind of predictor is the path to AGI. For Silver and others who focus on goal-oriented systems, it is merely a powerful form of *mimicry*. As a technology analyst, I see this as the central debate:

- LLMs (Pattern Matchers): Excellent at synthesis, recall, and fluency. They learn *what* to say next based on trillions of examples.

- Silver's Vision (Goal Seekers): Based on his background in Reinforcement Learning (RL), the goal is to build systems that can *plan*, *experiment*, *reason* about cause and effect, and truly *master* complex tasks, even if those tasks are novel and haven't appeared in their training data.

When we seek corroborating evidence for this philosophical divide, we look to experts questioning the scaling hypothesis. Critics often highlight the limitations of the transformer architecture for AGI, pointing to its struggles with long-term planning, true deductive reasoning, and maintaining a consistent internal "world model." Silver’s belief echoes a growing unease that while LLMs are incredible tools, they may not be the *architecture* of true intelligence.

The Resurgence of Planning and RL: AlphaZero’s Ghost

David Silver’s legacy is intrinsically tied to systems that taught themselves mastery through structured interaction with an environment. AlphaGo didn't just study records of human games; it played millions of games against itself, developing strategies humans couldn't fathom. This is the power of Reinforcement Learning combined with deep search and planning.
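The difference between recalling moves and planning them can be sketched with a toy game. The example below is an illustration under stated assumptions, not AlphaGo's method (AlphaGo paired neural networks with Monte Carlo tree search). It solves a small Nim variant by exhaustive lookahead: players alternately take 1 to 3 stones, and whoever takes the last stone wins. The rules and the names `can_win` and `best_move` are chosen for this sketch.

```python
from functools import lru_cache
from typing import Optional

MOVES = (1, 2, 3)  # assumed rule: a player removes 1, 2, or 3 stones per turn


@lru_cache(maxsize=None)
def can_win(stones: int) -> bool:
    """True if the player to move can force a win with `stones` left.

    With 0 stones the previous player took the last one, so the player
    to move has already lost (any() over no moves is False).
    """
    return any(not can_win(stones - m) for m in MOVES if m <= stones)


def best_move(stones: int) -> Optional[int]:
    """Return a move that leaves the opponent in a losing position, if any."""
    for m in MOVES:
        if m <= stones and not can_win(stones - m):
            return m
    return None  # every move leads to a position the opponent can win


for pile in (5, 8, 10):
    print(pile, can_win(pile), best_move(pile))
```

Nothing here was learned from example games. The program reaches its decisions by reasoning forward over consequences, which is the ingredient that search-based systems add on top of pattern recognition.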

This expertise directly contrasts with the current focus on pre-training massive models on static internet data. Silver’s new venture is likely betting on integrating this RL-centric approach, which builds agency and decision-making, into the next generation of AI, moving beyond mere conversational fluency. The argument that planning, not just prediction, defines intelligence underscores the gap LLMs leave open.

For Business Leaders: The Practical Implication

If Silver is right, businesses chasing immediate productivity gains via LLMs are building powerful, yet ultimately brittle, tools. For tasks requiring complex, multi-step decision-making, dynamic adaptation, or verifiable accuracy (like complex scientific discovery, logistics optimization, or advanced robotics), pure LLMs might reach an architectural ceiling.

Actionable Insight: Don't abandon LLMs, but recognize them as an essential *component*, not the final destination. Future-proofing your AI strategy means investing in hybrid systems that can handle both language understanding and goal-oriented action.

The Architectural Alternative: The Rise of Hybrid AI

If the transformer alone is insufficient, what is next? The answer being explored by researchers leaving the hype cycle often points toward a reunion of old and new paradigms: Neuro-Symbolic AI.

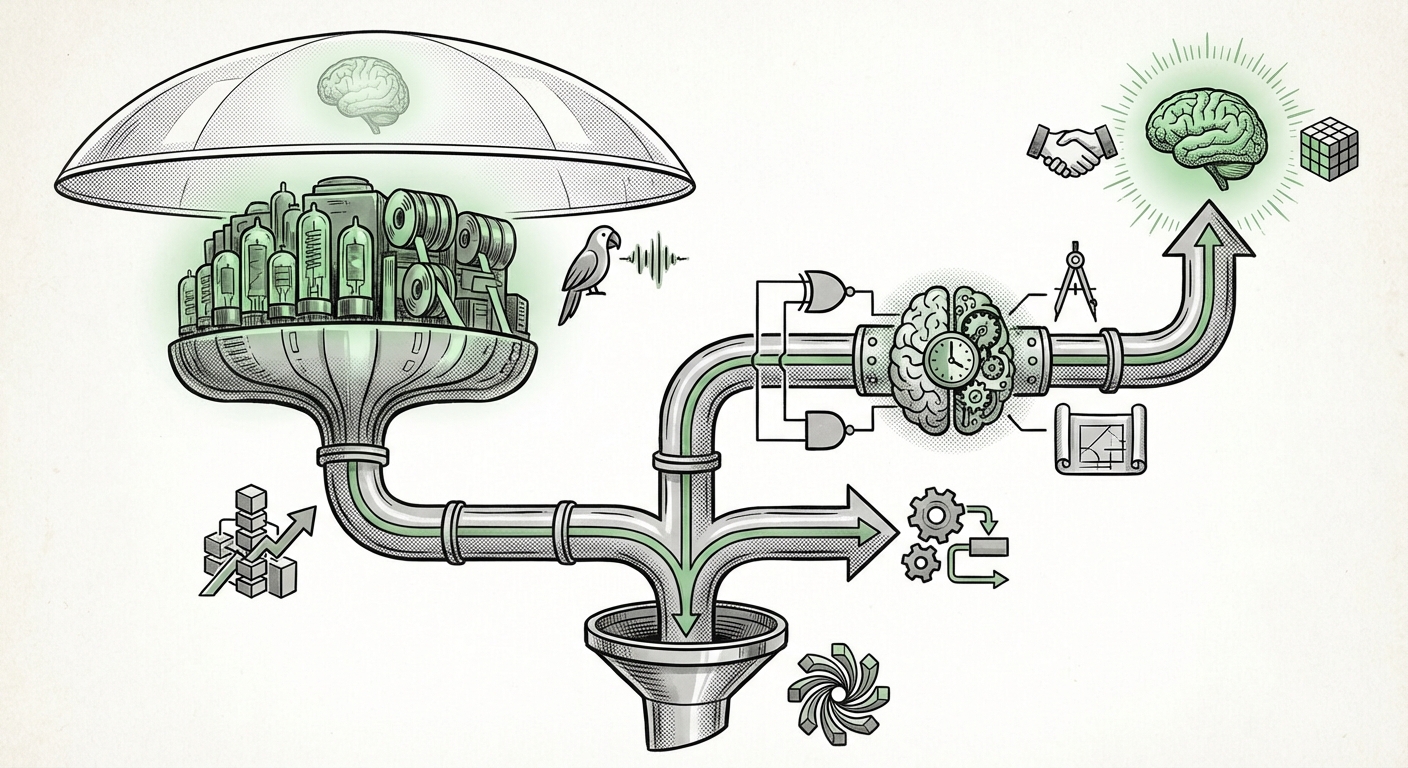

Imagine an AI system built the way a human brain thinks. The "Neuro" part (the LLM) handles intuitive perception: understanding messy language, recognizing images, and spotting subtle patterns. The "Symbolic" part handles the hard logic, planning, rules, and abstract relationships, much like traditional programming or mathematics. This combination offers the best of both worlds: the flexibility of deep learning and the rigor of symbolic logic.
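One common way to picture this division of labor is a propose-and-verify loop. The sketch below is a deliberately tiny illustration in Python: `neural_propose` is a hypothetical stand-in for a learned model (it just returns canned guesses), and `symbolic_verify` is an exact rule check. None of these names come from a real library, and real neuro-symbolic systems are considerably more involved.

```python
from typing import Callable, List


def neural_propose(prompt: str) -> List[int]:
    """Hypothetical stand-in for a learned model: plausible but unchecked guesses."""
    canned = {"find x with x*x - 5*x + 6 == 0": [1, 2, 4, 3]}
    return canned.get(prompt, [])


def symbolic_verify(x: int) -> bool:
    """Exact rule check: no statistics, just the constraint itself."""
    return x * x - 5 * x + 6 == 0


def solve(
    prompt: str,
    propose: Callable[[str], List[int]],
    verify: Callable[[int], bool],
) -> List[int]:
    """Keep only the proposals that survive the symbolic check."""
    return [candidate for candidate in propose(prompt) if verify(candidate)]


answers = solve("find x with x*x - 5*x + 6 == 0", neural_propose, symbolic_verify)
print(answers)  # only the guesses that satisfy the equation remain
```

The proposer is allowed to be wrong, because the verifier filters out anything that breaks the rules. That is the appeal of the hybrid: flexible guessing backed by rigorous checking.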

This trend is not isolated to Silver. We are seeing evidence of other AI researchers leaving big tech for ventures built around symbolic AI or planning. This brain drain, driven by ideological differences regarding AGI’s necessary building blocks, is where the next wave of foundational AI might emerge. These new startups aren't competing with OpenAI; they are competing on a different track entirely, prioritizing reasoning depth over sheer breadth of knowledge recall.

For technical audiences, this suggests a major shift in research grants, hiring priorities, and academic focus away from simply "more parameters" towards novel connectivity, memory architectures, and external planning modules.

The Hype Cycle vs. Reality: Diminishing Returns

The financial markets and media thrive on exponential growth curves. The LLM story has been one of glorious, predictable exponential scaling. However, every technology eventually faces diminishing returns. Skeptical analyses of whether scaling laws will lead to AGI often conclude that while scaling continues to yield improvements, the *quality* of improvement changes.

At a certain point, adding ten times the parameters might only yield a 1% improvement in complex reasoning, whereas a fundamental architectural shift (like adding robust planning mechanisms) could yield a 50% leap.
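The arithmetic of diminishing returns can be sketched with a power law, the functional form scaling laws are usually written in. The exponent `alpha = 0.05` and the constant `scale = 1.0` below are assumptions picked for illustration, not measured values, and the "loss" here is an abstract error measure rather than a score on complex reasoning. The 1% and 50% figures above are likewise illustrative.

```python
def loss(params: float, alpha: float = 0.05, scale: float = 1.0) -> float:
    """Hypothetical power law: loss = scale * params ** -alpha."""
    return scale * params ** -alpha


def relative_gain(params: float, factor: float = 10.0, alpha: float = 0.05) -> float:
    """Fraction of the current loss removed by multiplying params by `factor`."""
    before = loss(params, alpha)
    after = loss(params * factor, alpha)
    return (before - after) / before


def absolute_gain(params: float, factor: float = 10.0, alpha: float = 0.05) -> float:
    """Raw reduction in loss from multiplying params by `factor`."""
    return loss(params, alpha) - loss(params * factor, alpha)


# Each step is a 10x increase in parameter count (sizes are illustrative).
for n in (1e9, 1e10, 1e11):
    print(f"{n:.0e} -> {n * 10:.0e}: "
          f"relative {relative_gain(n):.2%}, absolute {absolute_gain(n):.4f}")
```

Under this assumed curve, every tenfold increase removes the same fraction of the remaining loss, so the absolute improvement shrinks with each step while the cost of the step grows tenfold. A change of architecture would correspond to moving to a different curve altogether, which is the kind of leap the bet on planning is aiming for.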

This is the bet David Silver is placing: the industry is over-indexing on the scaling laws of the current architecture, missing the fundamental ingredients required for true generalized intelligence. He is moving his capital—intellectual and financial—to explore those missing ingredients.

Future Implications: Diversification is Key

The departure of a figure like Silver is a powerful market signal. It forces a crucial conversation about risk mitigation in AI development.

1. Investment Bifurcation

We will see venture capital increasingly split. One stream will fund the "scaling-up" giants, seeking incremental improvements in existing LLM frameworks. The second, perhaps riskier but potentially higher-reward stream, will fund ventures focused on architectural breakthroughs—neuro-symbolic approaches, causal inference engines, and advanced RL agents.

For investors, tracking the startups that former DeepMind researchers are founding as alternatives to pure LLM approaches is vital, as these ventures often represent the most thoughtful, non-consensus bets on the future of the field.

2. The Enterprise Reality Check

For large enterprises adopting AI, this signifies a need for architectural diversity. If your core value relies on optimizing complex industrial processes (e.g., autonomous manufacturing, drug discovery simulations), relying solely on a general-purpose LLM might be inadequate or unsafe. The demand for specialized, goal-oriented AI—the kind Silver champions—will grow rapidly.

3. Redefining AGI

Ultimately, this schism forces us to be more precise about what we mean by "intelligence." If AGI means a system that can flawlessly write code or generate poetry, LLMs are winning. If AGI means a system capable of independent scientific discovery, true moral reasoning, or robust self-correction in unknown environments, then the path must involve planning, world modeling, and explicit causal understanding—the areas LLMs inherently struggle with.

Conclusion: The Next Frontier is Architectural

David Silver’s decision is not a rejection of deep learning; it is a statement that the *current dominant paradigm* of deep learning, when narrowly focused on generative scaling, has reached an exciting but ultimately constrained plateau regarding AGI. The battle for superintelligence is shifting from the war for data volume to the war for architectural elegance.

The future of AI will likely be a mosaic, not a monolith. It will combine the incredible language capabilities forged by the current LLM era with the goal-directed mastery pioneered by researchers like Silver. Businesses and researchers who anticipate this merger—who build systems that can *talk* fluently while also *thinking* logically and planning effectively—are the ones who will define the post-LLM era of artificial intelligence.