The Great Leap: Why AI Just Moved Beyond Chatbots to Become Digital World Builders

The past few weeks in Artificial Intelligence have felt less like incremental progress and more like a genuine technological acceleration. Reports consolidating recent major announcements, from seismic shifts at Google and OpenAI to significant forward movement from Chinese tech giants, paint a clear picture: the era of the simple conversational chatbot is drawing to a close. We are entering the age of Agentic AI, systems we might call 'World Builders.'

If you’ve been using LLMs only to draft emails or summarize articles, you’ve been using a powerful sports car as a grocery-getter. The latest innovations signal that these models are gaining the memory, foresight, and tooling capabilities necessary to execute complex, multi-step missions. This transformation has profound implications for every industry, demanding that businesses rethink workflows, not just input methods.

The Fundamental Shift: From Response to Action

For years, AI has excelled at *pattern matching* and *generating* text based on a prompt. This is the chatbot paradigm. You ask a question, you get an answer. It's reactive.

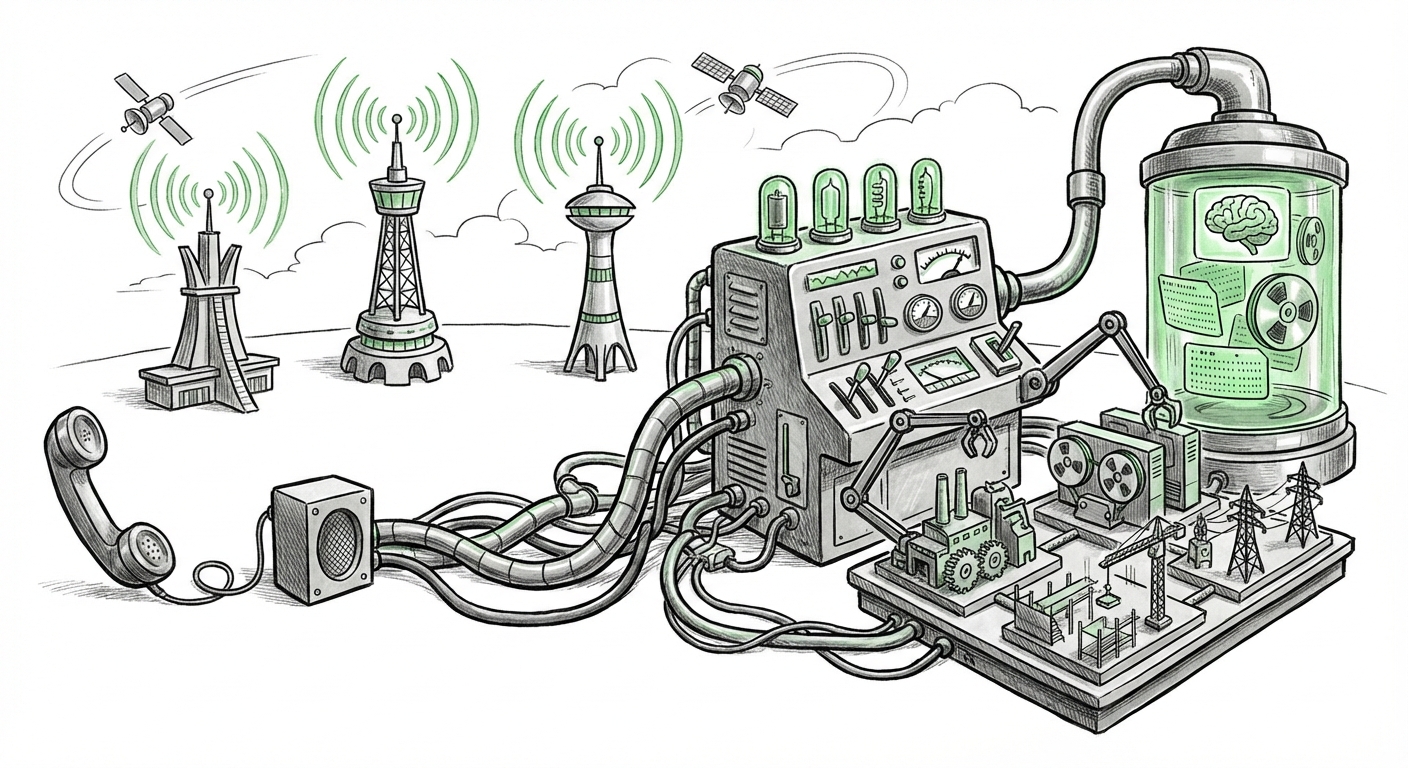

The new paradigm, fueled by competitive pressure and engineering breakthroughs, is about *agency*. An AI agent doesn't just answer; it plans, executes sub-tasks, checks its own work, retrieves external data, and iterates until a defined goal is met. It builds a temporary, functional "world" or environment to solve the problem.
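The plan, execute, check, and iterate cycle described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not how any particular vendor implements agents: the plan is hard-coded where a real agent would ask an LLM to produce it, and `run_agent`, `broken_api`, `backup_api`, and `summarizer` are hypothetical names invented for the example.

```python
from typing import Callable, Optional

# A tool receives the goal plus results gathered so far; it returns None on failure.
Tool = Callable[[str, dict[str, str]], Optional[str]]

def run_agent(goal: str, plan: list[tuple[str, list[Tool]]],
              check: Callable[[str], bool]) -> dict[str, str]:
    """Run each planned sub-task, verify its output, and fall back to alternatives."""
    results: dict[str, str] = {}
    for step_name, candidates in plan:
        for tool in candidates:
            output = tool(goal, results)
            if output is not None and check(output):  # the agent checks its own work
                results[step_name] = output
                break
        else:  # no candidate tool produced an acceptable result
            raise RuntimeError(f"step {step_name!r} failed; re-planning needed")
    return results

# Hypothetical tools for illustration only.
def broken_api(goal, results):
    return None  # simulates an outage

def backup_api(goal, results):
    return f"raw data about {goal}"

def summarizer(goal, results):
    return f"summary of: {results['retrieve']}"

# Hard-coded plan (assumption): each step lists a primary tool and any fallbacks.
plan = [("retrieve", [broken_api, backup_api]), ("summarize", [summarizer])]
print(run_agent("Q3 churn", plan, check=lambda out: bool(out.strip())))
```

When the primary retrieval tool fails, the loop moves to the fallback instead of reporting an error, and the summary step builds on the stored result from the previous step.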

The Context Revolution: Understanding the Bigger Picture

One of the quietest yet most consequential enablers of this shift is a fundamental upgrade to model memory. Recent developments, most visibly Google’s advances in Gemini’s context window, represent a sea change for enterprise adoption. Imagine trying to debug a complex software issue while seeing only a few pages of code at a time: that, roughly, was the constraint earlier LLMs worked under.

The massive expansion of context windows—allowing models to ingest and analyze hundreds of thousands, or even millions, of tokens at once—means the AI can now hold the entire context of a massive project, regulatory document, or codebase in its "working memory."
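To make these token counts concrete, here is a rough budget check. It assumes the common rule of thumb of about four characters per token for English text; real counts depend on each model's tokenizer, and the 128,000- and 1,000,000-token window sizes below are illustrative rather than the limits of any specific product.

```python
# Rough rule of thumb (an assumption, not a tokenizer): ~4 characters per token.
CHARS_PER_TOKEN = 4

def estimate_tokens(text: str) -> int:
    """Approximate token count using ceiling division on character length."""
    return -(-len(text) // CHARS_PER_TOKEN)

def fits_in_context(documents: list[str], context_tokens: int,
                    reserved_for_output: int = 8_000) -> bool:
    """True if all documents plus a reserve for the model's answer fit the window."""
    total = sum(estimate_tokens(d) for d in documents)
    return total + reserved_for_output <= context_tokens

codebase = ["x" * 2_000_000]  # stand-in for ~2 MB of source text
print(estimate_tokens(codebase[0]))          # 500000
print(fits_in_context(codebase, 128_000))    # False
print(fits_in_context(codebase, 1_000_000))  # True
```

At this rate, about 2 MB of source text is roughly 500,000 tokens: far too large for the smaller window, comfortably inside the larger one.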

What this means in practice:

- Software Development: An agent can review an entire application's source code, identify security vulnerabilities across disparate files, and propose a comprehensive patch, rather than just fixing one function at a time.

- Legal & Finance: Instead of summarizing one contract, an agent can compare 50 contracts across a merger, flagging every variation in liability clauses in a single pass.
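The flagging step in the legal example does not itself require a model. Once each contract's liability clause has been extracted (the part an agent would actually do), the comparison can be as simple as a line diff against a baseline. The sketch below assumes that extraction has already happened and uses Python's standard `difflib`; the contract names and clause text are invented.

```python
import difflib

def flag_liability_variations(baseline: str, contracts: dict[str, str]) -> dict[str, list[str]]:
    """Map each contract to the liability-clause lines that differ from the baseline."""
    flagged: dict[str, list[str]] = {}
    for name, clause in contracts.items():
        # ndiff prefixes removed lines with "- " and added lines with "+ ";
        # "? " hint lines and unchanged "  " lines are skipped.
        changes = [
            line for line in difflib.ndiff(baseline.splitlines(), clause.splitlines())
            if line.startswith(("- ", "+ "))
        ]
        if changes:
            flagged[name] = changes
    return flagged

# Hypothetical extracted clauses.
baseline = "Liability is capped at 12 months of fees.\nExcludes indirect damages."
contracts = {
    "acme.txt": "Liability is capped at 12 months of fees.\nExcludes indirect damages.",
    "globex.txt": "Liability is capped at 24 months of fees.\nExcludes indirect damages.",
}
print(flag_liability_variations(baseline, contracts))
```

Only `globex.txt` is flagged, with the baseline line and its changed counterpart listed side by side.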

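This shifts RAG (Retrieval-Augmented Generation) pipelines from simple lookups toward deep comprehension: rather than retrieving a few short snippets and hoping the answer is among them, a pipeline can hand the model whole documents, or much larger retrieved sets, and let it reason across them.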

This view aligns with technical analyses of the engineering behind very large context windows and their impact on RAG pipelines, which are core to enterprise AI integration.
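One way to picture the change in RAG design is a router that sends the whole corpus when it fits the context budget and falls back to retrieval only when it does not. This is a minimal sketch, assuming a four-characters-per-token heuristic in place of a real tokenizer and a naive word-overlap score in place of the embedding similarity a production pipeline would use; `build_context` is a hypothetical helper.

```python
import re

CHARS_PER_TOKEN = 4  # rough heuristic, not a real tokenizer

def tokens(text: str) -> int:
    return -(-len(text) // CHARS_PER_TOKEN)

def terms(text: str) -> set[str]:
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def build_context(query: str, chunks: list[str], budget_tokens: int, k: int = 3) -> list[str]:
    """Long-context path if everything fits; otherwise fall back to top-k retrieval."""
    if sum(tokens(c) for c in chunks) <= budget_tokens:
        return chunks  # pass the whole corpus and let the model reason across it
    q = terms(query)
    # Naive lexical overlap stands in for embedding similarity (an assumption).
    ranked = sorted(chunks, key=lambda c: len(q & terms(c)), reverse=True)
    return ranked[:k]

chunks = [
    "Liability cap is 12 months of fees.",
    "Payment terms are net 30.",
    "Governing law is Delaware.",
]
print(build_context("liability cap", chunks, budget_tokens=1000))   # all three chunks
print(build_context("liability cap", chunks, budget_tokens=5, k=1)) # only the best match
```

The design choice is that retrieval becomes a fallback for corpora that exceed the window rather than the default path.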

The Leaders’ Race: OpenAI’s Strategy Meets Google’s Scale

The recent activity from the two established titans illustrates two slightly different paths toward agentic dominance.

OpenAI’s roadmap is increasingly focused on defining the *interface* for these powerful agents. Discussions around its future models suggest a strategic move away from requiring users to write perfect prompts and toward complex, tool-using entities that can manage workflows autonomously. The focus is shifting from a model's benchmark scores to its *reliability* on complex, multi-step assignments. The end goal is a capable, customizable personal assistant that can manage digital work.

Google, leveraging its vast computational resources, appears focused on democratizing this massive contextual capability across its ecosystem. By offering ultra-long context windows, they are betting that access to comprehensive, high-fidelity background data will be the key differentiator for enterprise users who need reliability over raw novelty.

The fierce competition between these two is accelerating the timeline for real-world deployment. It forces both camps to rapidly bridge the gap between impressive demos and stable, production-ready AI assistants capable of complex planning.

These strategies are tracked by analysts reviewing roadmaps and public statements about agentic systems and next-generation model releases (such as anticipated GPT-5 features).

The Third Vector: The Rise of Global AI Competitors

No analysis of this pivotal period would be complete without acknowledging the rapid ascent of Chinese AI development. The release of new, highly capable models from Chinese firms signals a maturing ecosystem that is competitive on the global stage, even amid geopolitical headwinds.

While Western conversations often center on AGI safety and scaling, Chinese releases are frequently optimized for unique market demands, regulatory environments, and specific application stacks. These models are not just catching up; they are developing distinct strengths, often focusing heavily on multimodal integration (combining text, image, and voice seamlessly) or achieving exceptional performance within massive, domestic user bases.

This competition is vital for the future of AI. It prevents stagnation by ensuring that progress isn't confined to a single cultural or technological playbook. For global technology businesses, this means that AI infrastructure decisions must account for a multi-polar AI world. Vendor lock-in becomes a greater risk when powerful, localized alternatives can be deployed efficiently.

Monitoring releases and benchmarking reports on emerging models from key Chinese technology players provides the necessary context for understanding this geopolitical technological race.

What is an Agent, Really? Deconstructing the "World Builder"

To understand the future, we must define what the "World Builder" means beyond marketing speak. This is where the shift from chatbot to agent becomes concrete:

- Tool Use and Interactivity: Old bots could generate code; new agents can *run* that code, check the output in a sandbox environment, and fix compilation errors. They interact with APIs, databases, and software tools—they use the digital world to achieve their goals.

- Long-Term Memory & State Management: A true agent remembers the context of a week-long project, not just the last five messages. It maintains a persistent state about the task it is currently managing, allowing for true delegation.

- Self-Correction and Planning: If a step fails, the agent doesn't simply report an error; it utilizes its reasoning capacity to backtrack, reassess its plan, and attempt an alternative strategy—a hallmark of rudimentary autonomy.
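The tool-use and self-correction behaviors above can be sketched as a generate, run, and retry loop. Two loud assumptions: `fake_generate` is a hypothetical stand-in for an LLM (a real one would use the error text in `feedback` to revise its code), and running code in a separate process with a timeout is only a placeholder for a real sandbox such as a container or VM. It offers no security isolation.

```python
import subprocess
import sys
from typing import Callable

def run_in_subprocess(code: str, timeout: float = 5.0) -> tuple[bool, str]:
    """Run code in a separate Python process. NOT a real security sandbox."""
    try:
        proc = subprocess.run([sys.executable, "-c", code],
                              capture_output=True, text=True, timeout=timeout)
    except subprocess.TimeoutExpired:
        return False, "timed out"
    ok = proc.returncode == 0
    return ok, proc.stdout if ok else proc.stderr

def solve_with_retries(generate: Callable[[int, str], str], max_attempts: int = 3) -> str:
    """Generate code, execute it, and feed any error back into the next attempt."""
    feedback = ""
    for attempt in range(max_attempts):
        code = generate(attempt, feedback)
        ok, output = run_in_subprocess(code)
        if ok:
            return output.strip()
        feedback = output  # the error message becomes input to the next attempt
    raise RuntimeError(f"gave up after {max_attempts} attempts: {feedback}")

# Hypothetical stand-in for an LLM: the first draft has a syntax error, the second is fixed.
DRAFTS = ["print(sum(range(10))", "print(sum(range(10)))"]

def fake_generate(attempt: int, feedback: str) -> str:
    return DRAFTS[min(attempt, len(DRAFTS) - 1)]

print(solve_with_retries(fake_generate))  # 45
```

The first draft fails with a `SyntaxError`, the traceback is captured as feedback, and the second draft runs successfully.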

This transition requires robust frameworks for AI autonomy. Experts emphasize that we are moving into an era where the architecture supporting the LLM (how it manages its memory, how securely it interacts with external systems through tool use, and how well it can reason about future steps) is becoming more critical than the size of the language model itself.

This understanding is built upon current research focusing on the necessary engineering advancements, such as improved reasoning loops and secure tool-use frameworks, required to transition LLMs into reliable autonomous software agents.
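Persistent state, the long-term memory described in the list above, can be as plain as a checkpoint the agent reloads at the start of each session. The sketch below assumes a hypothetical `TaskState` schema and a local JSON file. Production systems would more likely use a database, but the resume-from-checkpoint pattern is the same.

```python
import json
import tempfile
from dataclasses import asdict, dataclass, field
from pathlib import Path

@dataclass
class TaskState:
    """Hypothetical schema for what an agent remembers about a long-running task."""
    goal: str
    completed_steps: list[str] = field(default_factory=list)
    notes: dict[str, str] = field(default_factory=dict)

def save_state(state: TaskState, path: Path) -> None:
    path.write_text(json.dumps(asdict(state), indent=2))

def load_state(path: Path, goal: str) -> TaskState:
    """Resume from disk if a checkpoint exists; otherwise start fresh."""
    if path.exists():
        return TaskState(**json.loads(path.read_text()))
    return TaskState(goal=goal)

checkpoint = Path(tempfile.mkdtemp()) / "migration_task.json"
state = load_state(checkpoint, goal="Migrate billing service to new API")
state.completed_steps.append("inventory endpoints")
state.notes["blocker"] = "auth tokens expire after 1h"
save_state(state, checkpoint)

# Later, a new session picks up where the last one stopped.
resumed = load_state(checkpoint, goal="Migrate billing service to new API")
print(resumed.completed_steps)  # ['inventory endpoints']
```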

Implications for Business Strategy: Actionable Insights

For CIOs, developers, and strategists, these developments demand a pivot from curiosity to implementation. The question is no longer, "Should we use AI?" but rather, "How quickly can we redesign our core processes around AI agents?"

1. Process Redesign Over Feature Addition

Do not attempt to simply bolt agent capabilities onto existing, linear workflows. The true value comes from identifying tasks that currently require human coordination across disparate systems (e.g., sales onboarding, complex compliance checks, iterative product design) and designating an AI agent to *own* that entire process end-to-end.

2. Focus on Data Contracts, Not Just Prompts

When building with long-context models, the quality and structure of the data you feed it become paramount. Businesses must invest heavily in clean, structured internal knowledge bases. The LLM is the brain, but your structured data is its reliable, high-speed nervous system. Think of this as establishing "data contracts" that govern how the agent can access and rely upon proprietary information.
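A data contract can start as a typed record plus validation that runs before any document reaches the agent. The sketch below is illustrative: the `PolicyDocument` fields and the 365-day freshness rule are assumptions chosen for the example, not a standard.

```python
from dataclasses import dataclass
from datetime import date

@dataclass(frozen=True)
class PolicyDocument:
    """Hypothetical data contract for documents an agent may rely on."""
    doc_id: str
    owner: str
    last_reviewed: date
    body: str

REQUIRED = ("doc_id", "owner", "last_reviewed", "body")

def validate(raw: dict, today: date, max_age_days: int = 365) -> PolicyDocument:
    """Reject records that break the contract before the agent ever sees them."""
    missing = [k for k in REQUIRED if not raw.get(k)]
    if missing:
        raise ValueError(f"missing fields: {missing}")
    reviewed = date.fromisoformat(raw["last_reviewed"])
    if (today - reviewed).days > max_age_days:
        raise ValueError(f"{raw['doc_id']} is stale (last reviewed {reviewed})")
    return PolicyDocument(raw["doc_id"], raw["owner"], reviewed, raw["body"])

records = [
    {"doc_id": "HR-7", "owner": "hr", "last_reviewed": "2024-03-01", "body": "Remote work policy..."},
    {"doc_id": "FIN-2", "owner": "", "last_reviewed": "2021-01-01", "body": "Expense policy..."},
]
accepted, rejected = [], []
for r in records:
    try:
        accepted.append(validate(r, today=date(2024, 6, 1)))
    except ValueError as err:
        rejected.append(str(err))
print([d.doc_id for d in accepted])  # ['HR-7']
print(rejected)                      # ["missing fields: ['owner']"]
```

Rejected records never enter the agent's context, so a stale or ownerless document cannot silently become the basis for an answer.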

3. The Security Imperative of Delegation

As agents gain the ability to use tools—sending emails, executing database queries, managing cloud resources—the attack surface expands dramatically. Implementing secure sandboxing, strict permission boundaries (least privilege access), and rigorous monitoring for unintended actions are non-negotiable prerequisites for deploying agentic systems into production environments.
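Least-privilege access for agents can be enforced in a thin gateway that every tool call passes through: deny by default, allow only what is listed for that agent, and log every decision. This is a minimal sketch with hypothetical tool and agent names; a production deployment would also need authentication, rate limits, and tamper-resistant audit storage.

```python
import logging
from typing import Callable

logging.basicConfig(level=logging.INFO)
audit = logging.getLogger("agent.audit")

class PermissionDenied(Exception):
    pass

class ToolGateway:
    """Deny-by-default gateway: an agent may call only tools on its allowlist."""

    def __init__(self, tools: dict[str, Callable[..., str]], allowlist: dict[str, set[str]]):
        self._tools = tools
        self._allowlist = allowlist  # agent name -> permitted tool names

    def call(self, agent: str, tool: str, **kwargs) -> str:
        if tool not in self._allowlist.get(agent, set()):
            audit.warning("DENIED %s -> %s %s", agent, tool, kwargs)
            raise PermissionDenied(f"{agent} may not call {tool}")
        audit.info("ALLOWED %s -> %s %s", agent, tool, kwargs)
        return self._tools[tool](**kwargs)

# Hypothetical tools; real ones would hit email, database, or cloud APIs.
tools = {
    "read_ticket": lambda ticket_id: f"ticket {ticket_id}: printer jammed",
    "drop_table": lambda name: f"dropped {name}",
}
gateway = ToolGateway(tools, allowlist={"support_agent": {"read_ticket"}})
print(gateway.call("support_agent", "read_ticket", ticket_id=42))
try:
    gateway.call("support_agent", "drop_table", name="customers")
except PermissionDenied as err:
    print("blocked:", err)
```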

4. Global Sourcing and Deployment Flexibility

The rise of strong, competitive Chinese models underscores the need for a flexible AI procurement strategy. Enterprises operating globally must develop architectures that allow for swapping foundational models based on performance, cost, and regional regulatory needs, rather than committing solely to one vendor stack.
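Model swapping is easier when application code depends on a small interface instead of a vendor SDK. The sketch below assumes a hypothetical `ChatModel` protocol, placeholder vendor names, and invented per-token costs. It routes only on region and cost, whereas a real router would also weigh benchmark results on the organization's own tasks.

```python
from dataclasses import dataclass
from typing import Protocol

class ChatModel(Protocol):
    def complete(self, prompt: str) -> str: ...

@dataclass
class ModelOption:
    name: str
    client: ChatModel
    cost_per_1k_tokens: float  # illustrative figures, not real prices
    allowed_regions: frozenset[str]

def pick_model(options: list[ModelOption], region: str) -> ModelOption:
    """Choose the cheapest model permitted in the caller's region."""
    eligible = [o for o in options if region in o.allowed_regions]
    if not eligible:
        raise LookupError(f"no model approved for region {region!r}")
    return min(eligible, key=lambda o: o.cost_per_1k_tokens)

class EchoModel:
    """Hypothetical stand-in for a vendor SDK wrapper."""
    def __init__(self, label: str):
        self.label = label

    def complete(self, prompt: str) -> str:
        return f"[{self.label}] {prompt}"

options = [
    ModelOption("vendor-a", EchoModel("A"), 0.50, frozenset({"us", "eu"})),
    ModelOption("vendor-b", EchoModel("B"), 0.20, frozenset({"cn"})),
    ModelOption("vendor-c", EchoModel("C"), 0.30, frozenset({"eu", "cn"})),
]
choice = pick_model(options, region="eu")
print(choice.name, choice.client.complete("Summarize Q3"))  # vendor-c [C] Summarize Q3
```

Because callers only ever see `ChatModel.complete`, replacing a vendor means adding one `ModelOption` rather than rewriting application code.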

Looking Ahead: Navigating the Next Horizon

The recent flurry of activity solidifies one central truth: AI is maturing rapidly from a specialized tool into general-purpose digital infrastructure. The leap from generating text to building functional, digital environments is the inflection point we have been anticipating.

This acceleration means that the gap between a breakthrough being published and its becoming a competitive necessity is shrinking. Those businesses that proactively map their complex workflows onto agentic frameworks, focusing on secure tool usage, high-fidelity data access via long context, and clear goal setting, will define the next era of productivity. Those that wait for the definitive "GPT-5" announcement might find they are already behind, as the groundwork for world-building is being laid today, across multiple continents.