The Token Revolution: Deepseek OCR 2 and the Future of Efficient Visual AI

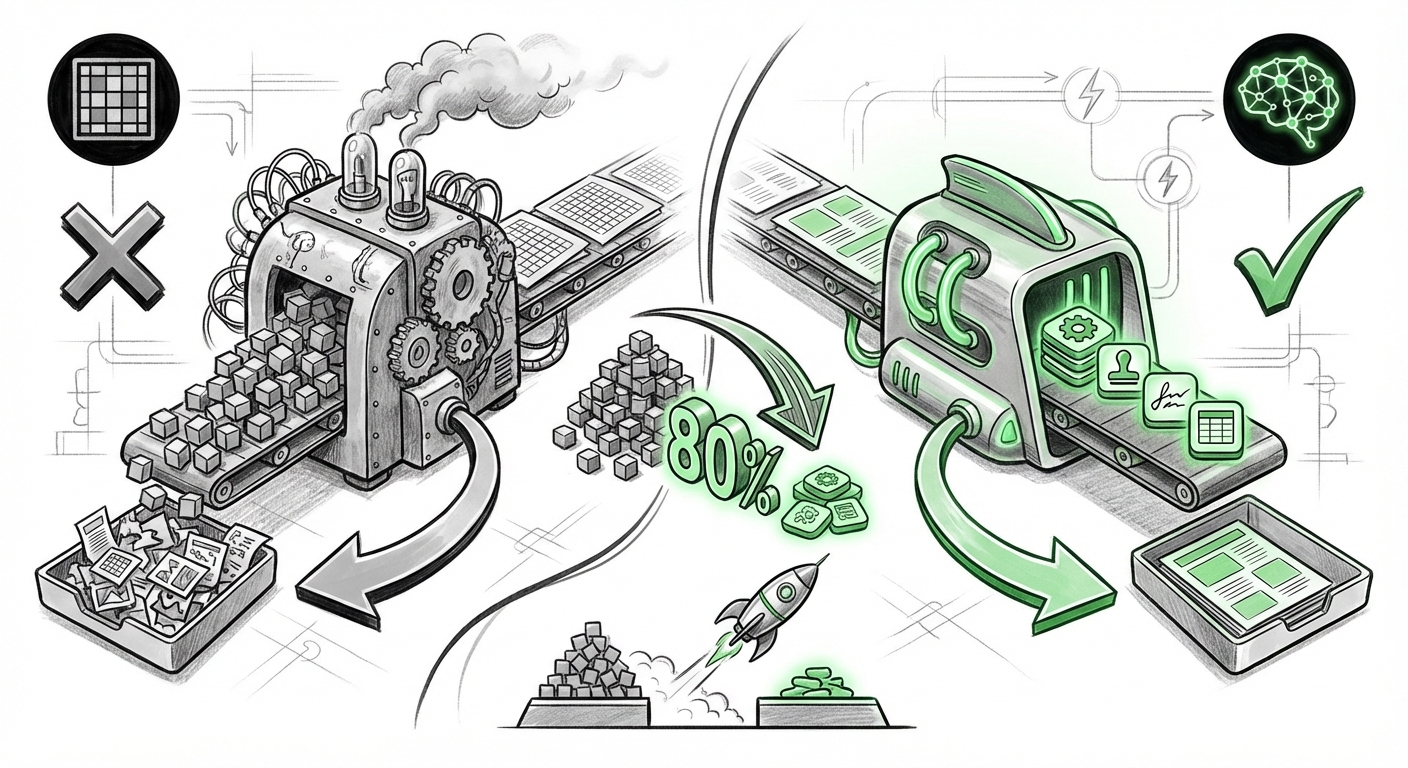

The field of Artificial Intelligence is often defined by massive increases in model size, data volume, and computational power. Sometimes, however, the most significant leaps come not from scaling up but from scaling smarter. The recent unveiling of Deepseek OCR 2, which reportedly cuts visual tokens by 80% while outperforming competitors such as Gemini 3 Pro on document parsing tasks, is one of these moments. If the reported results hold, this development is more than a win for one company; it signals a fundamental re-architecting of how AI models "see" and understand the world.

For an analyst focused on the core technologies driving AI, this news flags a crucial shift: the transition from brute-force visual processing to semantic visual encoding. To understand the magnitude of this shift, we must dissect the token economy, the competitive landscape it disrupts, and the architectural philosophy behind this efficiency.

The Economics of Seeing: Why Visual Token Reduction Matters

In Large Language Models (LLMs), text is broken down into "tokens": small chunks of words or characters that the model consumes as a sequence. When we introduce images, the process becomes far more demanding. Traditional Vision Transformers (ViTs) chop an image into a grid of small, fixed-size patches (like tiny squares on a checkerboard), and each patch must be converted into a token. At the resolutions needed to read a dense document page, that grid can run to thousands of patches.
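To make the patch arithmetic concrete, here is a minimal sketch of how the token count of a plain ViT-style encoder grows with image resolution. The 16-pixel patch size and the example resolutions are illustrative assumptions, not figures published for any particular model.

```python
import math


def patch_token_count(width_px: int, height_px: int, patch_size: int = 16) -> int:
    """Tokens produced by a plain ViT-style encoder: one per fixed-size patch.

    The patch size of 16 is an assumed, commonly used default.
    Partial patches at the edges are counted as full patches (padding).
    """
    cols = math.ceil(width_px / patch_size)
    rows = math.ceil(height_px / patch_size)
    return cols * rows


if __name__ == "__main__":
    # Illustrative square resolutions, from a thumbnail to a dense page scan.
    for side in (224, 1024, 2048):
        print(f"{side}x{side} px -> {patch_token_count(side, side)} tokens")
    # 224x224 px -> 196 tokens
    # 1024x1024 px -> 4096 tokens
    # 2048x2048 px -> 16384 tokens
```

Doubling the side length quadruples the token count, which is why high-resolution document scans are so expensive under fixed-grid tokenization.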

Imagine trying to describe a complex map. The old method would describe every square inch of the map, whether it shows a dense city or empty ocean. The result is an explosion of tokens. Deepseek OCR 2, by contrast, reportedly uses a vision encoder that processes information based on meaning rather than position.

To grasp this, consider two target audiences. For AI Researchers and Engineers, an 80% reduction in visual tokens means the complexity bottleneck for long-context multimodal reasoning shrinks considerably. This efficiency directly addresses one of the main limitations of current multimodal models: the difficulty of maintaining detailed context over very large visual inputs (such as entire scanned books or complex blueprints). **Token efficiency in multimodal LLMs** is a recognized industry goal, which is why Deepseek’s reported result matters for making models more tractable and scalable.
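One way to see why the reduction matters for long-context reasoning is to look at self-attention, whose pairwise score computation grows with the square of the sequence length. The sketch below assumes standard full self-attention over a combined sequence of visual and text tokens; the token counts are hypothetical and chosen only for illustration.

```python
def attention_pairs(num_tokens: int) -> int:
    """Number of query-key pairs scored by full self-attention."""
    return num_tokens * num_tokens


def relative_attention_cost(visual_tokens: int, text_tokens: int,
                            visual_reduction: float) -> float:
    """Attention cost after reducing visual tokens, relative to the baseline.

    Only the visual part of the sequence shrinks; text tokens are unchanged.
    """
    before = visual_tokens + text_tokens
    after = round(visual_tokens * (1 - visual_reduction)) + text_tokens
    return attention_pairs(after) / attention_pairs(before)


if __name__ == "__main__":
    # Hypothetical request: 4,000 visual tokens plus a 1,000-token prompt.
    ratio = relative_attention_cost(4_000, 1_000, visual_reduction=0.80)
    print(f"Attention cost after reduction: {ratio:.1%} of baseline")
    # Attention cost after reduction: 13.0% of baseline
```

Under these assumptions an 80% cut in visual tokens removes roughly 87% of the attention score computation, because the saving compounds quadratically. Other costs, such as feed-forward layers, scale closer to linearly, so the end-to-end saving would be smaller.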

For Business Leaders, this translates directly into operational cost savings. Processing tokens requires significant GPU time. Cutting the input requirement by 80% lowers inference costs dramatically, making sophisticated visual AI tasks economically feasible for large-scale deployment across industries like legal tech, insurance claims, and logistics.
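As a back-of-the-envelope illustration of the cost argument, the following sketch compares the visual input bill before and after an 80% token reduction. The page volume, tokens per page, and price per million tokens are all hypothetical placeholders, not quoted prices from any provider.

```python
def visual_input_cost_usd(pages: int, visual_tokens_per_page: int,
                          usd_per_million_tokens: float) -> float:
    """Cost of the visual input tokens only (output tokens are not included)."""
    return pages * visual_tokens_per_page * usd_per_million_tokens / 1_000_000


# All values below are assumptions for illustration.
PAGES_PER_MONTH = 1_000_000
BASELINE_TOKENS_PER_PAGE = 4_000
USD_PER_MILLION_INPUT_TOKENS = 1.00
REDUCTION = 0.80

reduced_tokens_per_page = round(BASELINE_TOKENS_PER_PAGE * (1 - REDUCTION))

baseline = visual_input_cost_usd(
    PAGES_PER_MONTH, BASELINE_TOKENS_PER_PAGE, USD_PER_MILLION_INPUT_TOKENS)
reduced = visual_input_cost_usd(
    PAGES_PER_MONTH, reduced_tokens_per_page, USD_PER_MILLION_INPUT_TOKENS)

if __name__ == "__main__":
    print(f"Baseline: ${baseline:,.2f} per month")   # Baseline: $4,000.00 per month
    print(f"Reduced:  ${reduced:,.2f} per month")    # Reduced:  $800.00 per month
    print(f"Saving:   ${baseline - reduced:,.2f}")   # Saving:   $3,200.00
```

The 80% saving applies to the visual input side only. A document parsing job also pays for prompt text and generated output tokens, so the reduction in the total bill would be smaller than 80%.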

The Paradigm Shift: Meaning Over Position

The most profound aspect of Deepseek's approach lies in abandoning rigid positional encoding in favor of semantic understanding right at the encoding stage. Traditional vision models often spend significant computational effort figuring out *where* things are, even if the "thing" itself (a signature, a specific table row, a header) has already been identified.

The broader discussion around **visual tokenization based on meaning vs position** points to a trend toward concept-grounded models. When an AI tokenizes based on meaning, it’s not just encoding a patch of pixels; it’s encoding the *concept* of "invoice number field" or "customer signature block."

Resilience and Reasoning

- Robustness: A meaning-based encoder should, in principle, be more robust to noise, minor rotations, or slight cropping, because it identifies the core information rather than relying on perfect spatial alignment.

- Deeper Reasoning: By abstracting the visual data into fewer, richer tokens, the downstream LLM component can spend more of its limited attention budget on reasoning, logic, and synthesis, rather than simply parsing the visual layout. This is critical for complex tasks like summarizing medical charts or auditing financial reports.

This technological direction suggests that future foundational models will integrate vision and language much more seamlessly, moving away from treating vision as a separate, high-bandwidth input that must be compressed, toward treating it as a continuous stream of conceptual data, much like text.

Disrupting the Document AI Landscape

Document understanding, which combines Optical Character Recognition (OCR) with layout understanding of the kind popularized by models such as LayoutLM, has long been a specialized, fiercely competitive arena. While general-purpose models like Google’s Gemini and Anthropic’s Claude are incredibly powerful, niche tasks often require specialized optimization.

Deepseek’s claim of **outperforming Gemini 3 Pro on document parsing** is a significant challenge to the established giants. To judge the current state of play, industry analysts look closely at comprehensive **document understanding benchmarks**. If Deepseek’s efficiency gains come with state-of-the-art (SOTA) accuracy on those benchmarks, it forces a strategic re-evaluation:

- For Incumbents (e.g., Google, OpenAI): They must prove that their massive general-purpose models can match or exceed specialized efficiency leaders without sacrificing general flexibility.

- For Enterprises: Companies specializing in Business Process Automation (BPA) now have a powerful, potentially more cost-effective tool to consider, especially if Deepseek offers wider availability or superior fine-tuning capabilities.

This competition fuels innovation. It suggests that architectural breakthroughs such as semantic tokenization can beat raw scale on specific tasks. We anticipate that major players will integrate similar token-efficient encoders into their next generations of multimodal releases to remain competitive in the document intelligence sector.

What This Means for the Future of AI Implementation

The ripple effect of Deepseek OCR 2 extends far beyond better PDF readers. It impacts the entire architecture of deployed AI systems. We are looking at a future where multimodal AI is lighter, faster, and significantly cheaper to run at scale.

For Enterprise Adoption: The Cost Curve Flattens

The financial barrier to entry for complex visual reasoning drops significantly. Imagine a logistics company using AI to process thousands of photographs of damaged freight every day. Previously, the token cost of sending high-resolution images to a central cloud API might have been prohibitive. With an 80% reduction in visual input tokens, the same workload becomes far easier to scale and afford.

This has direct implications for **AI infrastructure architects** concerned with GPU utilization. Reducing the memory footprint and computational load per image means more simultaneous queries can be handled by existing hardware, postponing expensive capital expenditure on new server clusters.
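The link between token count and hardware headroom can be sketched through the key-value (KV) cache, which in typical transformer serving stores two vectors per token per layer. The model dimensions and memory budget below are hypothetical and do not describe Deepseek OCR 2 or any specific deployment.

```python
def kv_cache_bytes_per_token(num_layers: int, num_kv_heads: int,
                             head_dim: int, bytes_per_value: int = 2) -> int:
    """Bytes of KV cache per token: one key and one value vector per layer."""
    return 2 * num_layers * num_kv_heads * head_dim * bytes_per_value


def max_concurrent_requests(memory_budget_bytes: int, tokens_per_request: int,
                            bytes_per_token: int) -> int:
    """How many requests fit in the cache budget at the same time."""
    return memory_budget_bytes // (tokens_per_request * bytes_per_token)


if __name__ == "__main__":
    # Hypothetical decoder: 32 layers, 8 KV heads of dimension 128, fp16 values.
    per_token = kv_cache_bytes_per_token(32, 8, 128)   # 131072 bytes (128 KiB)
    budget = 40 * 1024**3                              # assumed 40 GiB for KV cache

    # Assumed request shape: 1,000 text tokens plus visual tokens.
    before = max_concurrent_requests(budget, 4_000 + 1_000, per_token)
    after = max_concurrent_requests(budget, 800 + 1_000, per_token)
    print(f"Concurrent requests before: {before}, after: {after}")
    # Concurrent requests before: 65, after: 182
```

Under these assumptions the same memory budget serves nearly three times as many simultaneous requests, which is the mechanism behind postponing new hardware purchases.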

For Scientific and Creative Fields: Context Without Overload

The implications for long-context tasks are massive. Think about analyzing historical archives, geological surveys, or complex biological imagery. If a model can process an entire high-resolution scan of an artifact or a geological cross-section using vastly fewer tokens, it gains the ability to reason about context across the entire visual field—a capability previously limited by context window exhaustion.
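The context window argument reduces to simple division: the fewer tokens each scan consumes, the more scans fit into one request. In this minimal sketch the window size, the tokens reserved for text, and the per-page token counts are illustrative assumptions.

```python
def pages_per_context(context_window: int, reserved_text_tokens: int,
                      visual_tokens_per_page: int) -> int:
    """Whole pages that fit in the context after reserving room for text."""
    available = context_window - reserved_text_tokens
    if available <= 0:
        return 0
    return available // visual_tokens_per_page


if __name__ == "__main__":
    # Assumed 128k-token window with 8k tokens reserved for prompt and answer.
    window, reserved = 128_000, 8_000
    print(pages_per_context(window, reserved, 4_000))  # 30
    print(pages_per_context(window, reserved, 800))    # 150
```

With these numbers a single request goes from holding a 30-page excerpt to holding a 150-page document, so the model can reason across material that previously had to be split into separate calls.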

Actionable Insights for Leaders

Technology leaders should take immediate note of this development:

- Evaluate Specialized vs. General Models: Do not assume the largest general model is the best for document processing. Actively benchmark specialized, efficiency-focused models like Deepseek OCR 2 against multimodal titans for specific tasks.

- Demand Token Transparency: When evaluating new multimodal APIs, inquire specifically about their visual encoding strategy. The future demands transparency regarding how input images are tokenized, as this directly impacts latency and cost.

- Invest in Data Strategy: Semantic encoding is likely to benefit from well-labeled conceptual data. Ensure your training and evaluation data reflect clear semantic relationships (for example, which region is a header, a table row, or a signature block) so that models can learn what truly matters visually, rather than relying on pixel patterns.
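The benchmarking advice above can be turned into a small, repeatable check. The sketch below scores OCR output with character error rate (CER), computed from Levenshtein edit distance, and reports it alongside the visual tokens each model consumed. The model names, outputs, and token counts are made-up placeholders; a real evaluation would use your own documents and the usage figures reported by each API.

```python
def edit_distance(a: str, b: str) -> int:
    """Levenshtein distance between two strings (row-by-row dynamic programming)."""
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        curr = [i]
        for j, cb in enumerate(b, start=1):
            cost = 0 if ca == cb else 1
            curr.append(min(prev[j] + 1,          # deletion
                            curr[j - 1] + 1,      # insertion
                            prev[j - 1] + cost))  # substitution or match
        prev = curr
    return prev[-1]


def character_error_rate(reference: str, hypothesis: str) -> float:
    """Edit distance normalized by the length of the reference text."""
    if not reference:
        return 0.0 if not hypothesis else 1.0
    return edit_distance(reference, hypothesis) / len(reference)


if __name__ == "__main__":
    reference = "Invoice 1042"
    # Hypothetical outputs and token usage for two candidate models.
    candidates = {
        "specialized-model": {"text": "Invoice 1042", "visual_tokens": 800},
        "general-model": {"text": "Invoice 1O42", "visual_tokens": 4_000},
    }
    for name, result in candidates.items():
        cer = character_error_rate(reference, result["text"])
        print(f"{name}: CER={cer:.3f}, visual tokens={result['visual_tokens']}")
```

Tracking accuracy and token usage side by side keeps the comparison honest: a model that uses fewer tokens is only the better choice if its error rate on your documents is also acceptable.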

Looking Ahead: The Convergence of Vision and Language

The story of Deepseek OCR 2 is not just about beating a benchmark on one specific task. It’s a clear signal that the era of computationally expensive, unrefined visual processing is waning. The future of multimodal AI lies in achieving human-like abstraction:

Humans rarely look at a photograph and mentally process the RGB value of every pixel. We see concepts: "a contract," "a stamp," "a signature." Deepseek’s success suggests that AI is rapidly catching up to this conceptual level of efficiency. As these semantic encoders become standard, we will see multimodal AI move faster from analyzing raw data to providing genuine, contextualized insight across sight, language, and logic.

This technological elegance—achieving more with significantly less input—is the true definition of progress in the age of massive foundational models. It promises a future where AI assistance is not just powerful, but also economically sustainable and universally accessible.