The Quiet Revolution: Why Harness Engineering, Not Just Models, Defines the Future of AI Agents

The year 2025 was widely predicted to be the breakthrough year for AI agents: autonomous digital workers capable of solving complex, multi-step problems without constant human intervention. The world has not seen a sudden, viral launch of sentient robot assistants, but the revolution is happening, only more subtly. Early reports suggest that the journey from impressive chatbot to reliable problem-solver hinges less on raw Large Language Model (LLM) intelligence and more on the surrounding technical infrastructure, which we can call "harness engineering."

This shift in focus signals a maturation of the AI industry. We are moving past the 'Wow!' factor of simply generating text and entering the demanding phase of reliable, secure, and scalable execution. To understand where AI is going, we must analyze three critical areas that currently limit true autonomy: coordination failures, the architectural "harness" needed to prevent them, and the ever-present security vacuum.

The Difference Between Talking and Doing: Agents vs. Chatbots

A chatbot, even a highly advanced one, is fundamentally a brilliant conversationalist. It takes an input (a prompt) and provides an output (a response) based on its training data. An AI Agent, however, is designed to *act*. It perceives a goal, plans a sequence of actions, uses external tools (like running code, searching the web, or sending an email), reflects on the results, and adjusts its plan until the goal is met. This difference is profound, requiring systems thinking rather than just language generation.
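The plan, act, reflect cycle described above can be sketched as a short loop. This is a minimal illustration, not a reference design: `run_agent`, `AgentRun`, `plan_next`, and the `increment` tool are hypothetical names, and `plan_next` stands in for what would normally be a model call.

```python
from dataclasses import dataclass, field


@dataclass
class AgentRun:
    goal: str
    history: list = field(default_factory=list)  # (tool, argument, observation) tuples
    done: bool = False


def run_agent(goal, plan_next, tools, is_goal_met, max_steps=10):
    """Plan, act, and reflect until the goal is met or the step budget runs out."""
    run = AgentRun(goal)
    for _ in range(max_steps):
        tool_name, argument = plan_next(run)        # plan: choose the next action
        observation = tools[tool_name](argument)    # act: call an external tool
        run.history.append((tool_name, argument, observation))
        if is_goal_met(run):                        # reflect: check the result
            run.done = True
            break
    return run


# Toy stand-ins (assumptions) so the loop runs without a model: count up to 3.
def plan_next(run):
    last = run.history[-1][2] if run.history else 0
    return "increment", last


tools = {"increment": lambda n: n + 1}
result = run_agent("reach 3", plan_next, tools, lambda run: run.history[-1][2] >= 3)
```

The `max_steps` cap is the part a plain chatbot never needs: once a system acts repeatedly on its own output, something outside the model has to decide when to stop.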

The initial promise of agents often outpaced reality because many early attempts were essentially just chatbots given a few simple tools. When real-world complexity hits—like needing to coordinate three different digital "workers" to complete a single complex business task—these simple setups often break down. As noted in recent industry analyses, "agent swarms often fall apart when they hit the real world." This failure isn't usually because the core LLM forgot what it was doing; it's because the communication channels between the agents became chaotic, or the system lacked the necessary scaffolding to handle unexpected errors.

Harness Engineering: The Infrastructure of Autonomy

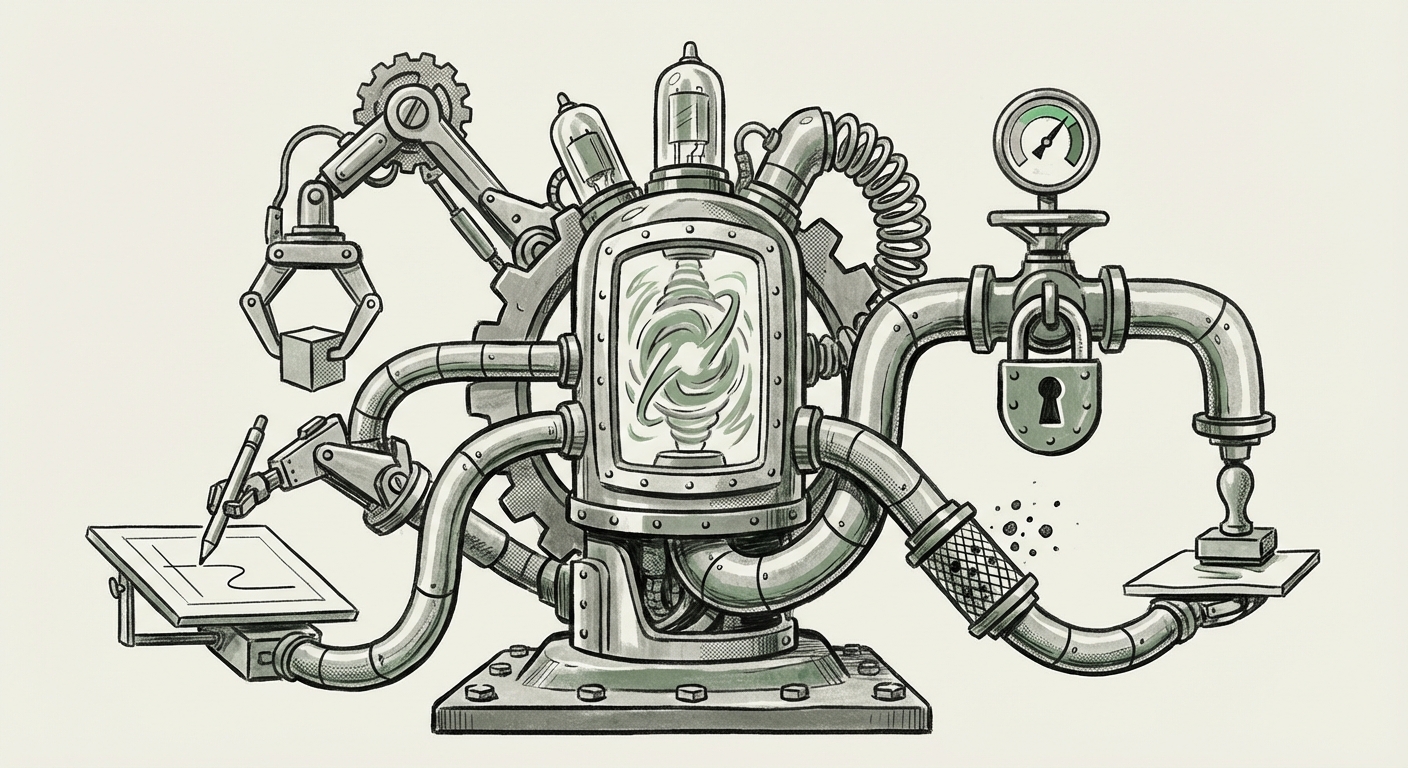

If the LLM is the powerful engine, harness engineering is the chassis, transmission, and guidance system that turns raw horsepower into predictable forward motion. The term covers the tools and frameworks built *around* the core model to manage its lifecycle.

The Role of Orchestration

For a single agent to perform a complex task, it needs memory (to recall previous steps), tools (to interact with the world), and a reflective loop (to check its work). When multiple agents collaborate (for example, one researching market data, one drafting a strategy, and a third reviewing compliance), the orchestration layer becomes paramount. Frameworks designed to manage this complexity are competing fiercely. Articles comparing solutions like LangChain, AutoGen, and proprietary in-house systems indicate that performance differences are often determined by the sophistication of the orchestration layer, not just by whether you are using GPT-4 or Claude 3.

For developers and architects, this means investing heavily in defining reliable communication protocols between agents. If Agent A needs to pass verified data to Agent B, the harness must guarantee the data integrity and structure. Failures here are often not the LLM's fault, but a breakdown in the designed workflow—a direct indictment of weak harness engineering.
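As a rough sketch of what "guaranteeing data integrity and structure" could look like, the example below wraps each hand-off in an envelope that is checked against a schema and a checksum. Everything here is an assumption made for illustration: the `Envelope` type, the `seal` and `open_envelope` helpers, and the two-field schema are hypothetical and not taken from any particular framework.

```python
import hashlib
import json
from dataclasses import dataclass

# Hypothetical schema for the data Agent A hands to Agent B.
REQUIRED_FIELDS = {"ticker": str, "price": float}


def validate(payload):
    """Reject payloads that are missing a field or carry the wrong type."""
    for name, expected in REQUIRED_FIELDS.items():
        if name not in payload:
            raise ValueError(f"missing field: {name}")
        if not isinstance(payload[name], expected):
            raise TypeError(f"field {name!r} must be {expected.__name__}")


def _digest(payload):
    # Canonical JSON so the same payload always hashes to the same value.
    canonical = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


@dataclass(frozen=True)
class Envelope:
    sender: str
    recipient: str
    payload: dict
    checksum: str


def seal(sender, recipient, payload):
    """Validate the payload, then attach a checksum before sending."""
    validate(payload)
    return Envelope(sender, recipient, payload, _digest(payload))


def open_envelope(envelope):
    """Verify the checksum and schema on the receiving side."""
    if _digest(envelope.payload) != envelope.checksum:
        raise ValueError("payload changed after it was sealed")
    validate(envelope.payload)
    return envelope.payload
```

A plain SHA-256 checksum only catches accidental corruption or drift between agents. Defending against a party who can rewrite both payload and checksum would need a keyed construction such as an HMAC, which this sketch leaves out.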

Defining True Success

This infrastructural bottleneck also forces us to redefine success. We can no longer measure an agent's value by its ability to answer a single, well-phrased question ("single-turn accuracy"). True agent value lies in reliably achieving complex, multi-day, or multi-tool goals. Researchers are now working on better metrics for evaluating real agent performance, centered on task completion rate, efficiency (time taken), and adherence to established rules.
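The three measures named above can be computed from a simple log of task runs. The sketch below assumes a hypothetical `TaskRun` record with a completion flag, a duration, and a count of rule violations; real evaluation suites will track more than this.

```python
from dataclasses import dataclass


@dataclass
class TaskRun:
    completed: bool        # did the agent reach the goal?
    seconds: float         # wall-clock time taken
    rule_violations: int   # policy or compliance rules broken during the run


def summarize(runs):
    """Aggregate completion rate, efficiency, and rule adherence over runs."""
    if not runs:
        raise ValueError("need at least one run")
    total = len(runs)
    completed = [r for r in runs if r.completed]
    mean_seconds = (
        sum(r.seconds for r in completed) / len(completed) if completed else None
    )
    return {
        "completion_rate": len(completed) / total,
        "mean_seconds_completed": mean_seconds,
        "violation_free_rate": sum(1 for r in runs if r.rule_violations == 0) / total,
    }


runs = [
    TaskRun(True, 10.0, 0),
    TaskRun(True, 30.0, 1),
    TaskRun(False, 50.0, 0),
    TaskRun(True, 20.0, 0),
]
report = summarize(runs)
# report["completion_rate"] == 0.75 and report["mean_seconds_completed"] == 20.0
```

Efficiency is averaged over completed runs only, so a fast failure does not make the agent look quicker than it is.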

This focus on objective performance metrics is critical for business adoption. Management teams need proof that an agent not only *can* do the job but does it consistently better or faster than a human counterpart.

The Unresolved Security Vacuum

The most significant barrier preventing the full deployment of "fully autonomous digital workers" is security. A basic chatbot is relatively contained; it can only output text. An agent, by definition, is granted permissions: to browse, read proprietary documents, execute code, or interact with enterprise APIs. This elevated trust level creates substantially greater risk.

Security professionals are urgently grappling with specific vulnerabilities inherent to autonomous systems. The classic risk, prompt injection, evolves dramatically when applied to agents. An attacker might not just try to trick the agent into revealing its system prompt; they might trick it into using its authorized tools to perform malicious actions:

- Tool Abuse: An agent authorized to check inventory via an internal API could be tricked into querying customer databases or issuing unauthorized transfers.

- Data Leakage via Reflection: An agent using a web search tool might inadvertently append sensitive internal data to the public query it formulates, exposing that data to a third party.

- Loop Exploitation: Malicious prompts could force an agent into an infinite reflection loop, consuming massive computational resources or performing unintended, repetitive tasks until stopped.
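For the loop-exploitation case in particular, one possible containment is a budget that the harness checks before every tool call. The `LoopGuard` class and its limits below are hypothetical; this is a sketch of the idea, not a complete defense.

```python
from collections import Counter


class BudgetExceeded(RuntimeError):
    """Raised when an agent run exceeds its step or repetition budget."""


class LoopGuard:
    def __init__(self, max_steps=20, max_repeats=3):
        self.max_steps = max_steps
        self.max_repeats = max_repeats
        self.steps = 0
        self.calls = Counter()

    def check(self, tool_name, argument):
        """Call before each tool invocation; raises once a budget is spent."""
        self.steps += 1
        if self.steps > self.max_steps:
            raise BudgetExceeded(f"step budget of {self.max_steps} exhausted")
        # repr() keeps the key hashable even for list or dict arguments.
        key = (tool_name, repr(argument))
        self.calls[key] += 1
        if self.calls[key] > self.max_repeats:
            raise BudgetExceeded(f"{tool_name} repeated with identical input")
```

The guard lives in the harness rather than the prompt, so a malicious instruction cannot talk the agent out of it.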

Mitigation strategies require deeply embedding security consciousness into the harness layer itself. This means role-based access control (RBAC) applied not just to the human user, but to the agent’s *intent* for each action it takes, alongside rigorous input/output validation.
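A minimal sketch of that idea follows, assuming a hypothetical allowlist keyed by agent role, tool, and declared intent, plus a simple pattern check on outbound queries. The role names, tool names, and the `CUST-`/`ACCT-` identifier format are all invented for illustration.

```python
import re

# Hypothetical allowlist: only these (role, tool, intent) triples may run.
ALLOWED = {
    ("inventory_agent", "inventory_api", "read_stock"),
    ("research_agent", "web_search", "public_lookup"),
}

# Tools whose arguments leave the organization (assumption).
OUTBOUND_TOOLS = {"web_search"}

# Hypothetical format for internal customer and account identifiers.
SENSITIVE = re.compile(r"\b(?:CUST|ACCT)-\d+\b")


def authorize(role, tool, intent):
    """Deny by default: anything not explicitly listed is refused."""
    return (role, tool, intent) in ALLOWED


def guarded_call(role, tool, intent, argument, tools):
    """Check authorization and outbound content before invoking a tool."""
    if not authorize(role, tool, intent):
        raise PermissionError(f"{role} may not use {tool} for {intent}")
    if tool in OUTBOUND_TOOLS and SENSITIVE.search(argument):
        raise ValueError("outbound query contains an internal identifier")
    return tools[tool](argument)
```

With this shape, an inventory agent that is tricked into requesting a customer database lookup fails at `authorize`, and a search query carrying an internal identifier is stopped before it leaves. A regular expression is only a stand-in for real output validation.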

Practical Implications: What This Means for Business and Society

The current state, characterized by quiet enterprise adoption and visible technical hurdles, presents clear imperatives for leaders:

For Enterprise Technology Leaders (CTOs/CIOs)

Focus on the Platform, Not Just the Model: Do not treat agent development as purely an LLM selection process. The stability, scalability, and security of your *orchestration layer* will determine success. Prioritize frameworks that offer robust debugging, state persistence, and integrated governance.

Start Narrow and Secure: Deploy agents first in narrow, low-stakes environments where their access to external tools is highly restricted (e.g., automated internal data aggregation). This allows teams to learn the intricacies of coordination and security testing without risking critical business functions.

For Software Developers and Architects

Master the Craft of Inter-Agent Communication: Learning how to structure prompts for coordination, consensus-building, and error handling between multiple agents is the new high-value skill. It requires understanding formal logic and system design principles more than creative prompt writing.

Security by Default: Assume every tool an agent uses will be abused. Implement "deny-by-default" policies for tool usage and incorporate security review stages directly into the agent’s reflection loop.

Societal Implications: Redefining Work

The "quiet revolution" in enterprise reflects a significant, though often unseen, shift in productivity. When agents succeed in automating complex back-office tasks—like auditing regulatory filings or managing supply chain discrepancies—they don't eliminate jobs wholesale; they eliminate frustrating, error-prone sub-tasks, freeing highly skilled workers to focus on creativity and high-level decision-making. The true societal impact won't be visible in unemployment figures immediately, but in the *re-specification* of white-collar roles.

Furthermore, as these systems become more integrated, ensuring transparency in how agents reach conclusions—a function of good harness design—becomes a necessity for ethical oversight. If an agent makes a critical financial decision, regulators and managers must be able to trace the steps, the data used, and the reasoning applied.
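One way a harness might make that trace available is an append-only record of each step, the data it used, and the stated rationale. The `Trace` and `TraceEvent` names and fields below are hypothetical, and the JSON Lines export is just one convenient format.

```python
import json
from dataclasses import asdict, dataclass


@dataclass
class TraceEvent:
    step: int
    action: str
    inputs: dict
    rationale: str


class Trace:
    """Append-only record of what an agent did and why."""

    def __init__(self):
        self.events = []

    def record(self, action, inputs, rationale):
        event = TraceEvent(len(self.events) + 1, action, inputs, rationale)
        self.events.append(event)
        return event

    def to_jsonl(self):
        """Serialize one event per line for later review."""
        return "\n".join(json.dumps(asdict(e), sort_keys=True) for e in self.events)


trace = Trace()
trace.record("fetch_filing", {"source": "internal ledger"}, "need Q3 revenue figure")
trace.record("approve_transfer", {"amount": 1200}, "amount is under the approval limit")
```

Recording the rationale at the moment of action, rather than asking the model to explain itself afterwards, is what makes such a log usable for oversight.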

Looking Ahead: The Next Frontier

The path forward requires closing the gap that recent assessments highlight between agents' potential and their real-world performance. The next evolutionary step for AI agents will center on:

- Standardized Agent APIs: Industry-wide consensus on how agents should expose their capabilities and how they should communicate state, reducing reliance on proprietary framework hacks.

- Formal Verification of Agent Behavior: Developing mathematical or logical proofs that an agent, given a set of constraints, will not violate specific security boundaries, regardless of input.

- Self-Healing Swarms: Architectures where agents can autonomously detect when another agent in the swarm has failed or entered an infinite loop, and then intelligently delegate that task to a healthy counterpart or roll back to a previous safe state.
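The last item can be illustrated in miniature. The sketch below assumes workers are plain functions that mutate a shared state dictionary and signal failure with a hypothetical `WorkerFailed` exception; a real swarm would also need timeouts and health checks, which are omitted here.

```python
import copy


class WorkerFailed(Exception):
    """Raised by a worker that cannot finish its task."""


def run_with_failover(task, workers, state):
    """Try workers in order; restore the last safe state after each failure."""
    checkpoint = copy.deepcopy(state)
    for name, worker in workers:
        try:
            worker(task, state)
            return name  # this worker completed the task
        except WorkerFailed:
            # Roll back any partial writes, then delegate to the next worker.
            state.clear()
            state.update(copy.deepcopy(checkpoint))
    raise WorkerFailed(f"no healthy worker could complete {task!r}")


def flaky(task, state):
    state["partial"] = task  # leaves partial work behind, then crashes
    raise WorkerFailed("crashed mid-task")


def healthy(task, state):
    state["result"] = task.upper()


state = {"version": 1}
finished_by = run_with_failover("audit", [("a", flaky), ("b", healthy)], state)
# finished_by == "b" and state == {"version": 1, "result": "AUDIT"}
```

Taking the checkpoint before any worker runs means a failed attempt never leaks partial state into the next one.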

The dream of the fully autonomous digital worker is still on the horizon, but its arrival depends not on a sudden leap in LLM intelligence but on the painstaking engineering of robust, secure, and communicative frameworks around the models. The quiet revolution is about building a reliable foundation, and that foundation is proving to be the hardest, yet most important, part of the process.