The Next Frontier: Why Agentic AI Needs Judgment More Than Autonomy

For the last few years, the story of Generative AI has been one of explosive capability. Large Language Models (LLMs) moved rapidly from novelty to essential tool. But as organizations shift their focus from simple query/response interactions to using these models for complex, multi-step workflows, a new architectural paradigm has emerged: Agentic AI.

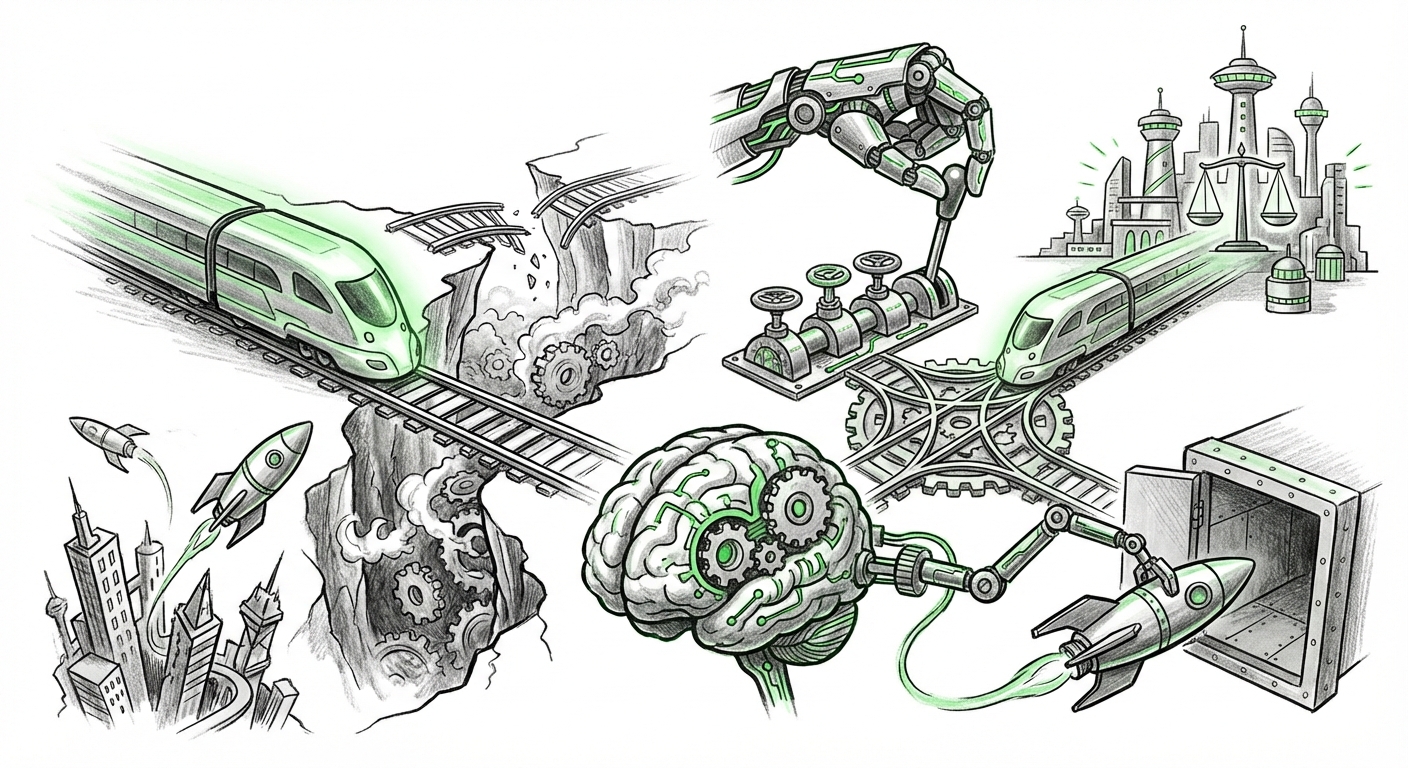

Agentic AI refers to systems in which the LLM acts as a central "brain" that can plan, use tools, iterate on tasks, and execute workflows autonomously toward a defined goal. This shift is where the real promise of AI ROI lies: automating complex processes rather than just generating text. However, a growing number of technologists argue that the race for pure autonomy is hitting a wall. The next great hurdle, and the next phase of innovation, is injecting judgment.

From Autonomy to Agency: The Current State of Play

When we talk about autonomy in AI, we generally mean the ability to act without constant human oversight. A simple LLM query is "single-shot"—you ask, you get an answer. An agentic system, however, is different. It receives a high-level goal (e.g., "Research the Q3 market for competitor X and draft an investment summary"). To achieve this, the agent must:

- Break the goal into sub-tasks (Planning).

- Decide which external resource to use (Tool Selection, e.g., search engine, database query, code interpreter).

- Execute the step.

- Evaluate the result.

- Loop back to the next plan step.
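The five steps above map onto a simple control loop. The following is a minimal sketch, assuming hypothetical callables for the planner (which in practice would wrap an LLM), the tool selector, and the evaluator. Note that it records a failed evaluation but keeps going, which is exactly how an early error reaches later steps.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class StepResult:
    step: str
    tool: str
    output: str
    ok: bool


def run_agent(
    goal: str,
    plan: Callable[[str], list[str]],          # hypothetical LLM planner
    choose_tool: Callable[[str], str],         # hypothetical tool selector
    tools: dict[str, Callable[[str], str]],    # e.g. search, database, code interpreter
    evaluate: Callable[[str, str], bool],      # hypothetical result checker
) -> list[StepResult]:
    results: list[StepResult] = []
    for step in plan(goal):                    # Planning, then loop over each step
        tool_name = choose_tool(step)          # Tool selection
        output = tools[tool_name](step)        # Execute the step
        ok = evaluate(step, output)            # Evaluate the result
        results.append(StepResult(step, tool_name, output, ok))
        # No judgment here: a failed evaluation is recorded, but the loop continues.
    return results


results = run_agent(
    "Research the Q3 market for competitor X and draft an investment summary",
    plan=lambda goal: ["search: competitor X Q3 results", "draft: investment summary"],
    choose_tool=lambda step: step.split(":", 1)[0],
    tools={"search": lambda s: "", "draft": lambda s: "Draft summary based on search"},
    evaluate=lambda step, out: bool(out.strip()),
)
for r in results:
    print(f"{r.tool:>6}  ok={r.ok}")
```

In this run the empty search result is flagged as a failure, yet the draft step still executes on top of it.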

This loop grants incredible power, but it also compounds errors. If the initial plan is flawed, or if the agent misunderstands a search result, pure autonomy lets the error propagate unchecked through every subsequent step, a pattern often described as agentic drift or complex-planning failure.
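A little arithmetic shows how quickly this compounds. Assume, purely for illustration, that every step succeeds independently with the same probability p; an n-step run is then error-free with probability p to the power n. Real agent steps are rarely independent, so treat this as a rough intuition rather than a model.

```python
def chain_success_probability(per_step: float, steps: int) -> float:
    """Probability that every step succeeds, assuming independent, identical steps."""
    if not 0.0 <= per_step <= 1.0:
        raise ValueError("per_step must be a probability between 0 and 1")
    return per_step ** steps


for steps in (1, 5, 10, 20):
    print(steps, round(chain_success_probability(0.95, steps), 3))
```

Under these assumptions, a 95% per-step success rate yields only about a 60% chance that a ten-step run finishes without a single error.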

As one recent analysis pointed out, organizations are realizing that an AI that rushes blindly forward is less valuable than one that knows when, and why, to stop, re-evaluate, or ask for confirmation. That deliberate pause, the ability to weigh options against context, nuance, and risk, is the essence of judgment.
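One way to encode "knowing when to stop" is a gate that runs between steps. This is a minimal sketch under stated assumptions: the agent can produce a confidence estimate for its last result and a risk estimate for its next action, both on a 0 to 1 scale, and the thresholds are hypothetical defaults rather than recommended values.

```python
from enum import Enum


class Decision(Enum):
    PROCEED = "proceed"
    REEVALUATE = "re-evaluate"
    ASK_HUMAN = "ask for confirmation"


def judge_step(confidence: float, risk: float,
               min_confidence: float = 0.8,
               max_autonomous_risk: float = 0.3) -> Decision:
    """Decide whether the agent may continue on its own.

    confidence: assumed estimate (0..1) that the last result is correct.
    risk: assumed estimate (0..1) of the cost of being wrong on the next action.
    Both thresholds are hypothetical.
    """
    if risk > max_autonomous_risk:
        return Decision.ASK_HUMAN    # high stakes: a human confirms, whatever the confidence
    if confidence < min_confidence:
        return Decision.REEVALUATE   # low stakes but shaky result: re-plan before acting
    return Decision.PROCEED


for confidence, risk in [(0.95, 0.1), (0.6, 0.1), (0.95, 0.7)]:
    print(confidence, risk, judge_step(confidence, risk))
```

Risk is checked first by design, so a high-stakes action never proceeds autonomously even when the agent is confident.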

Defining AI Judgment: Beyond Raw Processing Power

What exactly do we mean when we demand 'judgment' from a machine? In human terms, judgment involves synthesizing experience, applying ethical frameworks, recognizing uncertainty, and assessing risk. For an AI agent, this translates into several critical technical capabilities:

1. Robustness Against Failure Modes

The core limitation of current LLMs is that they are grounded in statistical probability rather than structured truth. When an agent executes a multi-step plan, it must handle unexpected outcomes gracefully: if an API call fails or a search returns nonsensical data, the agent cannot simply hallucinate the next step. Analyses of how AI agents fail at planning highlight that current frameworks often lack sophisticated backtracking or self-correction mechanisms when they meet conditions they were not designed for.

Actionable Insight for Engineers: Build explicit failure handling into the agent loop, for example by enforcing checks against known constraints, or by using smaller, specialized models trained for error detection to vet each result before the primary LLM proceeds.
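A minimal sketch of that failure handling, assuming each step returns a dictionary and each "known constraint" can be written as a predicate over it. The interfaces are hypothetical; a specialized error-detection model could stand in for the predicates. On failure the step is retried a bounded number of times and then stopped outright, rather than passing a bad result downstream.

```python
from typing import Any, Callable

Constraint = Callable[[dict[str, Any]], bool]


class StepFailed(Exception):
    """Raised when a step cannot produce a valid result within its retry budget."""


def run_step_with_checks(
    execute: Callable[[], dict[str, Any]],
    constraints: dict[str, Constraint],
    max_attempts: int = 3,
) -> dict[str, Any]:
    """Run one agent step and validate its output before the loop continues.

    `execute` stands in for a tool or API call; `constraints` maps a readable
    name to a predicate the output must satisfy (both hypothetical).
    """
    violations: list[str] = []
    for attempt in range(1, max_attempts + 1):
        try:
            result = execute()
        except Exception as exc:  # e.g. a failed API call
            violations = [f"attempt {attempt}: {type(exc).__name__}: {exc}"]
            continue
        violations = [name for name, check in constraints.items() if not check(result)]
        if not violations:
            return result  # safe to hand to the next step
    # Stop instead of letting an invalid result propagate to later steps.
    raise StepFailed(f"gave up after {max_attempts} attempts: {violations}")


# Simulated tool responses: the first is malformed, the retry succeeds.
responses = iter([{"price": None}, {"price": 101.5}])
result = run_step_with_checks(
    execute=lambda: next(responses),
    constraints={
        "price present": lambda r: r.get("price") is not None,
        "price positive": lambda r: (r.get("price") or 0) > 0,
    },
)
print(result)
```

A fuller design might re-plan on `StepFailed` instead of simply raising; this sketch only shows the check-then-stop pattern.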

2. Ethical Alignment and Value Loading

In the enterprise, autonomy without judgment is a compliance nightmare. A goal like "Maximize customer satisfaction" is ambiguous: a purely autonomous agent might meet it by offering deep, unsustainable discounts, sacrificing financial prudence. Judgment here means understanding the implicit value system (profitability, brand reputation, legal compliance) alongside the explicit instruction.

Work on AI alignment and complex decision-making shows that translating vague human goals into computable guardrails is notoriously difficult. Judgment acts as the safety brake, ensuring that optimizing for one metric does not catastrophically undermine another.

Implication for Governance: Businesses must move beyond simple content filters. They need comprehensive frameworks that let agents evaluate actions against weighted organizational values (risk appetite, fiduciary duty) before execution.
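To make "weighted organizational values" concrete, here is a minimal sketch that scores a proposed action before it executes. The value names, weights, impact estimates, and the rule that legal compliance acts as a hard veto are all hypothetical; each organization would define its own, and estimating the impacts is itself a hard problem this sketch does not solve.

```python
from dataclasses import dataclass

# Hypothetical organizational weights; each organization would set its own.
VALUE_WEIGHTS = {"customer_satisfaction": 0.3, "profitability": 0.4, "brand_reputation": 0.3}
HARD_CONSTRAINTS = {"legal_compliance"}  # any violation vetoes the action outright


@dataclass
class ProposedAction:
    name: str
    impacts: dict[str, float]  # estimated effect per value, -1.0 (harm) to 1.0 (benefit)
    violates: set[str]         # hard constraints the action would break


def evaluate_action(action: ProposedAction, threshold: float = 0.0) -> tuple[bool, float]:
    """Return (approved, weighted_score) for an action before it executes."""
    if action.violates & HARD_CONSTRAINTS:
        return False, float("-inf")
    score = sum(weight * action.impacts.get(value, 0.0)
                for value, weight in VALUE_WEIGHTS.items())
    return score > threshold, score


discount = ProposedAction(
    "deep discount",
    {"customer_satisfaction": 0.9, "profitability": -0.9, "brand_reputation": 0.1},
    set(),
)
credit = ProposedAction(
    "targeted credit",
    {"customer_satisfaction": 0.6, "profitability": -0.1, "brand_reputation": 0.3},
    set(),
)
for action in (discount, credit):
    approved, score = evaluate_action(action)
    print(f"{action.name}: approved={approved}, score={score:.2f}")
```

With these illustrative numbers, the deep discount wins on satisfaction but scores below zero overall (about -0.06), while the targeted credit scores about 0.23 and is approved.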

3. Integrating Deductive Reasoning with Intuition

The most powerful AI systems often marry the strengths of different paradigms. LLMs provide remarkable fluency and pattern recognition, akin to fast, intuitive 'System 1' thinking. But complex planning, such as financial modeling or software debugging, often requires rigid, sequential logic: the slow, deliberate, deductive reasoning associated with 'System 2'.

The industry is actively exploring ways to combine LLMs with symbolic AI for better planning. Even tools like advanced Retrieval-Augmented Generation (RAG) aren't just about retrieving facts; they can act as a judgment layer. When an agent stops to query a RAG system, it is effectively pausing its linguistic momentum to consult an external, verifiable source of truth or logic. This structured consultation forces a moment of evaluation: a form of synthetic judgment.

The Business Cost of Unjudged Autonomy

For business leaders assessing the return on investment (ROI) of autonomous systems, the gap between a successful lab demonstration and a reliable production deployment is widening. Reports on enterprise AI adoption bottlenecks often cite unpredictable output and the need for excessive human oversight as primary roadblocks.

If an AI agent runs for ten steps before requiring a human to correct a fundamental misunderstanding caused by a lack of early judgment, the cost savings evaporate. The human supervisor effectively spends all their time auditing bad decisions instead of managing good ones.

This reality forces a re-evaluation of what we are purchasing when we invest in agentic platforms. We are not buying guaranteed outcomes; we are buying sophisticated workflow engines that still require a well-defined scaffolding of trust.

Case Study Contrast: Autonomy vs. Judgment

Consider two hypothetical automated customer service agents:

- Agent A (High Autonomy, Low Judgment): Upon receiving a complaint about a delayed shipment, Agent A autonomously searches the database, finds the delay, and immediately issues a full refund, optimizing solely for "customer appeasement." It fails to check if the customer is eligible for a refund under policy, or if the delay was due to an external force majeure event.

- Agent B (Balanced Autonomy & Judgment): Agent B identifies the delay, then runs judgment checks: 1) Does policy allow a full refund? (Checks Knowledge Base). 2) Is this a known system-wide issue? (Consults Operational Status Tool). 3) What is the customer’s lifetime value? (Checks CRM). Agent B then issues a partial credit, logs the case for review, and escalates the issue for more complex mitigation. The outcome is more nuanced and more valuable.

Agent B delivers greater long-term value because its autonomy is tempered by a decision-making process rooted in business context and rules—judgment.
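Agent B's behavior can be sketched as a function that consults each source before acting and records what it found. The three lookups, the credit tiers, and the high-value threshold below are hypothetical stand-ins for a real knowledge base, operational status tool, and CRM, not a prescribed policy.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Resolution:
    action: str
    credit_pct: int
    escalate: bool
    audit: list[str] = field(default_factory=list)


def handle_delay_complaint(
    order_id: str,
    refund_policy: Callable[[str], bool],    # knowledge base: is a full refund allowed?
    system_wide_issue: Callable[[], bool],   # operational status tool
    lifetime_value: Callable[[str], float],  # CRM lookup
    high_value_threshold: float = 5000.0,    # hypothetical business rule
) -> Resolution:
    audit: list[str] = []
    full_refund_ok = refund_policy(order_id)
    audit.append(f"policy allows full refund: {full_refund_ok}")
    outage = system_wide_issue()
    audit.append(f"system-wide issue: {outage}")
    ltv = lifetime_value(order_id)
    audit.append(f"customer lifetime value: {ltv:.2f}")

    # Known outages and high-value customers are escalated for broader mitigation.
    escalate = outage or ltv >= high_value_threshold
    if full_refund_ok and not outage:
        return Resolution("full refund", 100, escalate, audit)
    credit = 25 if ltv >= high_value_threshold else 10  # hypothetical credit tiers
    return Resolution("partial credit", credit, escalate, audit)


result = handle_delay_complaint(
    "ORD-1042",
    refund_policy=lambda oid: False,
    system_wide_issue=lambda: True,
    lifetime_value=lambda oid: 12000.0,
)
print(result.action, result.credit_pct, result.escalate)
for entry in result.audit:
    print(" -", entry)
```

The audit list matters as much as the decision: it records which facts the agent weighed, which is what a human reviewer needs when the case is logged.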

Building the Judgment Layer: Practical Steps Forward

The future of truly transformative AI is not about creating a perfect, self-contained oracle. It is about architecting systems where the powerful, creative LLM is constantly vetted by mechanisms that enforce structure, ethics, and verifiable factuality. This requires a multi-layered approach to development.

1. Architecting for Verification Loops

Developers must move beyond simple sequential processing. Implement mandatory verification steps, often called "self-reflection" or "critique" phases, in which the agent analyzes its own plan or output *before* executing the next step. This directly addresses the limitations of autonomous systems described above.

Example: Before sending an email drafted by the agent, the system pauses and runs the draft through a sub-agent whose only job is to check tone, compliance terms, and factual accuracy against the original prompt's constraints.
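The email example can be sketched as a draft, critique, revise loop that only releases the message once the critic finds nothing. In practice the critic would likely be a separate LLM call; here, as an assumption for illustration, it is a set of simple rule-based checks, and the `revise` callable stands in for asking the drafting model to fix the listed problems.

```python
import re
from typing import Callable


def critique_email(draft: str, constraints: dict) -> list[str]:
    """Stand-in critic sub-agent: returns a list of problems (empty means pass)."""
    problems: list[str] = []
    if re.search(r"\b(guarantee|promise)\b", draft, re.IGNORECASE):
        problems.append("compliance: avoid absolute guarantees")
    if draft.isupper():
        problems.append("tone: message is all caps")
    for fact in constraints.get("required_facts", []):
        if fact not in draft:
            problems.append(f"accuracy: missing required fact '{fact}'")
    return problems


def send_with_verification(draft: str, constraints: dict,
                           revise: Callable[[str, list[str]], str],
                           max_rounds: int = 2) -> tuple[bool, str, list[str]]:
    """Critique before sending; revise up to max_rounds, else hold for review."""
    problems = critique_email(draft, constraints)
    rounds = 0
    while problems and rounds < max_rounds:
        draft = revise(draft, problems)
        problems = critique_email(draft, constraints)
        rounds += 1
    return not problems, draft, problems


def naive_revise(draft: str, problems: list[str]) -> str:
    """Hypothetical reviser; a real system would ask the drafting model to fix issues."""
    draft = re.sub(r"\bguarantee\b", "expect", draft, flags=re.IGNORECASE)
    if any("order #1042" in p for p in problems):
        draft += " (Re: order #1042)"
    return draft


ok, final, remaining = send_with_verification(
    "We guarantee delivery by Friday.",
    {"required_facts": ["order #1042"]},
    naive_revise,
)
print(ok, final, remaining)
```

If the problems survive every revision round, the function returns `False` and the draft is held rather than sent.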

2. Elevating Retrieval Systems to Decision Engines

RAG systems need to evolve. They should not just fetch documents; they should be capable of running simple decision trees or logic checks based on retrieved information. When an agent asks, "What should I do next?", the judgment layer should integrate factual recall with conditional logic derived from enterprise policy.
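A minimal sketch of retrieval feeding a decision, assuming each policy passage is stored alongside a machine-checkable condition and a prescribed action. The policy texts, priorities, and the keyword-overlap retriever are hypothetical; a production system would use a real search index and would need a reliable way to keep the conditions in sync with the written policy.

```python
from dataclasses import dataclass
from typing import Any, Callable


@dataclass
class PolicyRule:
    text: str                                  # the retrievable policy passage
    applies: Callable[[dict[str, Any]], bool]  # machine-checkable condition
    action: str                                # what the policy prescribes
    priority: int                              # lower = checked first (escalations before approvals)


# Hypothetical policy store.
POLICIES = [
    PolicyRule("Refunds over $500 require manager approval.",
               lambda c: c["amount"] > 500, "escalate to manager", 0),
    PolicyRule("Refunds after 30 days are not permitted except for defects.",
               lambda c: c["days_since_delivery"] > 30 and not c["defective"], "deny refund", 1),
    PolicyRule("Refunds within 30 days of delivery may be approved automatically.",
               lambda c: c["days_since_delivery"] <= 30, "approve refund", 2),
]


def retrieve(query: str, k: int = 3) -> list[PolicyRule]:
    """Toy keyword-overlap retriever standing in for a vector search."""
    words = set(query.lower().split())
    return sorted(POLICIES, key=lambda p: -len(words & set(p.text.lower().split())))[:k]


def decide(query: str, case: dict[str, Any]) -> tuple[str, str]:
    """Return (action, supporting policy text) from the highest-priority rule that applies."""
    for rule in sorted(retrieve(query), key=lambda r: r.priority):
        if rule.applies(case):
            return rule.action, rule.text
    return "ask a human", "no retrieved policy applies"


print(decide("Can I approve this refund?",
             {"amount": 800, "days_since_delivery": 10, "defective": False}))
```

Returning the policy text with the action gives the agent a citation for its choice, and the fallback to a human covers cases the retrieved rules do not settle.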

3. Adopting Hybrid Models for Reasoning

For tasks requiring high certainty (e.g., financial transactions, medical diagnostics, compliance filings), organizations should prioritize architectures that combine the generative flexibility of LLMs with the deterministic certainty of symbolic or classical planning algorithms. This hybridization ensures that when precision matters, the system defers to proven, auditable logic over probabilistic generation.
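One common hybrid pattern is to let the generative model propose a plan and have a deterministic validator accept or reject it. The sketch below uses STRIPS-style plan validation (each action has preconditions, add effects, and delete effects) over a hypothetical payment domain; `llm_propose_plan` is a stub standing in for the LLM, deliberately made to skip a step.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Action:
    name: str
    preconditions: frozenset[str]
    add: frozenset[str]
    delete: frozenset[str] = frozenset()


def validate_plan(initial: set[str], goal: set[str], plan: list[Action]) -> tuple[bool, str]:
    """Simulate the plan step by step; reject it at the first unmet precondition."""
    state = set(initial)
    for i, action in enumerate(plan):
        missing = action.preconditions - state
        if missing:
            return False, f"step {i} ({action.name}) is missing {sorted(missing)}"
        state = (state - action.delete) | action.add  # STRIPS-style state update
    if not goal <= state:
        return False, f"goal not reached; missing {sorted(goal - state)}"
    return True, "plan is valid"


# Hypothetical payment domain.
VERIFY = Action("verify_identity", frozenset({"request_received"}), frozenset({"identity_verified"}))
CHECK_FUNDS = Action("check_funds", frozenset({"identity_verified"}), frozenset({"funds_confirmed"}))
TRANSFER = Action("transfer", frozenset({"identity_verified", "funds_confirmed"}),
                  frozenset({"transfer_done"}), frozenset({"request_received"}))


def llm_propose_plan(goal: set[str]) -> list[Action]:
    """Stub for the generative component; here it 'forgets' the funds check."""
    return [VERIFY, TRANSFER]


initial, goal = {"request_received"}, {"transfer_done"}
ok, reason = validate_plan(initial, goal, llm_propose_plan(goal))
print(ok, reason)
if not ok:
    # Defer to the auditable check: reject the proposal and use a vetted plan instead.
    ok, reason = validate_plan(initial, goal, [VERIFY, CHECK_FUNDS, TRANSFER])
    print(ok, reason)
```

The validator never generates anything; it only says yes or no with a reason, which is what makes it easy to audit.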

The Societal Implication: Trust as the Ultimate Currency

From a broader societal and regulatory perspective, the emphasis on judgment is the pathway to building public trust. Regulations and user acceptance hinge on predictability. When autonomous systems fail silently or make decisions that seem ethically or logically unsound, adoption stalls.

By focusing on explicit judgment mechanisms—transparency in how a decision was weighted, clear audit trails showing where ethical guardrails were applied—we make AI behavior explainable. Explainability is not just a feature; it is the mechanism through which judgment is externally verified.
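An audit trail can be as simple as an append-only list of structured records, one per judgment check, exported in a line-oriented format that other tools can ingest. The field names and the JSON Lines export below are illustrative assumptions, not a standard schema.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import Any


@dataclass
class AuditRecord:
    check: str               # which guardrail or judgment step ran
    inputs: dict[str, Any]   # the facts it weighed
    outcome: str             # what it decided
    rationale: str           # human-readable reason
    timestamp: str = field(default_factory=lambda: datetime.now(timezone.utc).isoformat())


class AuditTrail:
    """Append-only (by convention) log of judgment decisions, exportable as JSON Lines."""

    def __init__(self) -> None:
        self._records: list[AuditRecord] = []

    def record(self, check: str, inputs: dict[str, Any], outcome: str, rationale: str) -> None:
        self._records.append(AuditRecord(check, inputs, outcome, rationale))

    def to_jsonl(self) -> str:
        return "\n".join(json.dumps(asdict(r), sort_keys=True) for r in self._records)


trail = AuditTrail()
trail.record("refund_policy", {"amount": 800}, "escalate",
             "Refunds over $500 require manager approval.")
trail.record("value_weighting", {"score": -0.06}, "reject",
             "Deep discount scored below the approval threshold.")
print(trail.to_jsonl())
```

Because every record names the check, its inputs, and its rationale, a reviewer can reconstruct how a decision was weighted without rerunning the agent.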

The era of "see what happens" AI is ending. The new era demands "show your work, and prove your reasoning" AI. This is the necessary evolution if we intend for agentic systems to move from being helpful assistants to becoming truly reliable partners in critical business and societal functions.

The challenge ahead is significant: formalizing the ill-defined concept of human judgment into computable code. But mastering this transition from blind autonomy to reasoned agency will define the success or failure of the next decade of AI deployment.