The Great Model Purge: Why Specialized AI Tools Are on the Chopping Block for Unified Systems

The whisper circulating in developer forums suggests a seismic shift: a once-celebrated, specialized tool—hypothetically, "ChatGPT’s Best AI Writer"—is slated for phase-out. While the date cited is futuristic (February 2026), the underlying dynamics are happening right now, in 2024 and 2025. This isn't a sign of AI failure; it’s a flashing signal of its hyper-acceleration. When a successful, focused product is retired, it means the foundational technology has leaped forward, rendering the specialized solution obsolete.

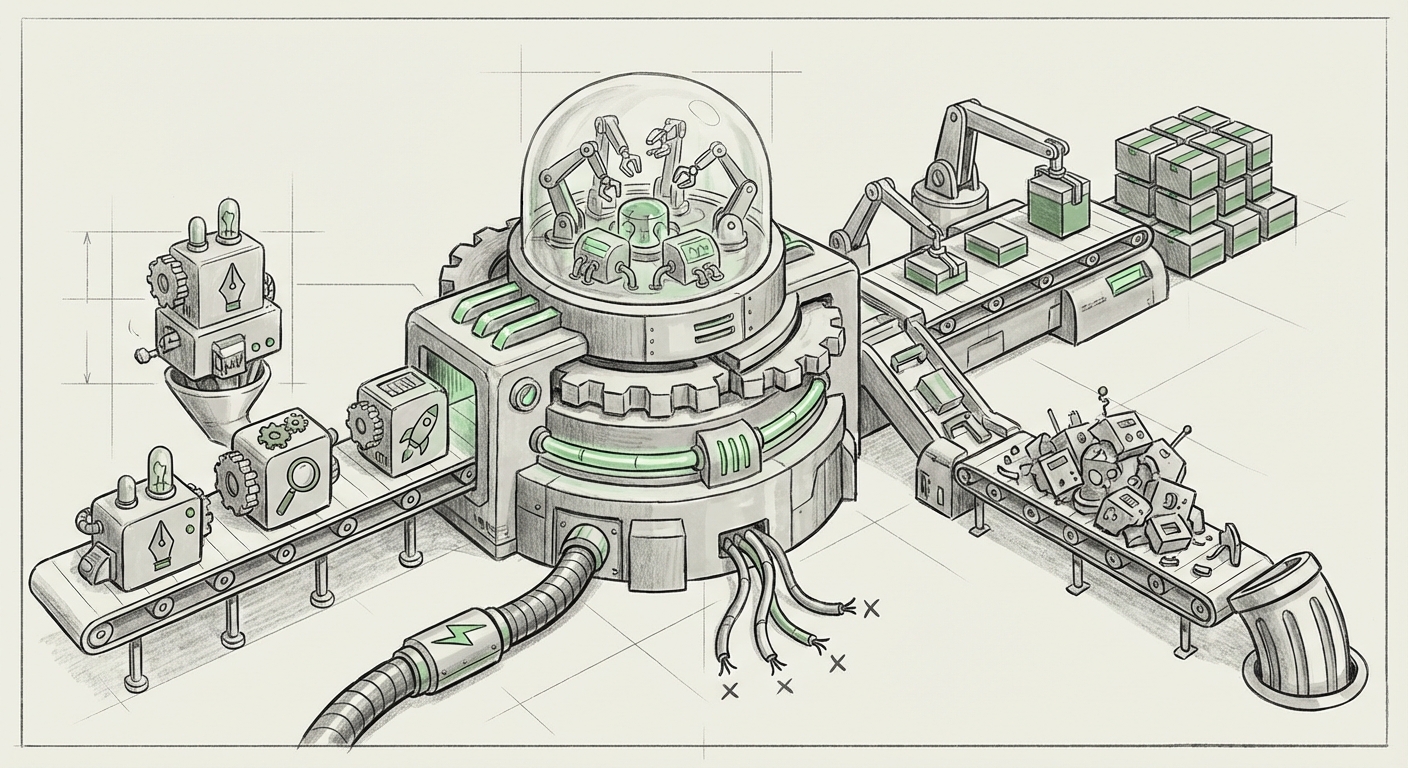

As an AI technology analyst, I view this scenario not as a product failure, but as evidence of four convergent trends reshaping the entire generative AI landscape. We are moving away from a market of numerous, narrow tools toward powerful, unified platforms, driven by architectural breakthroughs and ruthless economic efficiency.

Trend 1: Model Lifecycle Management and the Cost of Maintenance

The first principle of platform longevity is efficiency. Companies like OpenAI do not maintain dozens of slightly different models forever. If a model dubbed the "Best AI Writer" was an early fine-tune or a customized GPT-3.5 iteration, it would likely require its own hosting, monitoring, and updating, all of which add operational drag.

The technical community understands this lifecycle. We look closely at official communications regarding model retirement. When older models are pulled, it forces adoption of newer, usually superior, versions. This mirrors the history of software development; think of how Microsoft deprecated old versions of Office tools.

- Technical Implication: Maintaining legacy code—or in this case, legacy model weights—is expensive. If the new, flagship model (e.g., GPT-5) can perform specialized writing tasks better *and* cheaper as part of a unified system, the specialized model is surplus to requirements.

- Corroboration: Developers track official announcements, such as those detailing the OpenAI API model deprecation schedule. These policies establish the precedent that specialized endpoints are temporary fixtures, not permanent ones. For an enterprise user, this means building on the newest architecture is the only path to long-term stability.
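One way to act on a deprecation schedule is to stop hard-coding model names and resolve them through a small registry instead. The following is a minimal sketch under stated assumptions: the model names (`writer-v1`, `flagship-v2`), the retirement date, and the `resolve_model` helper are all hypothetical and do not reflect any provider's actual API or schedule.

```python
from dataclasses import dataclass
from datetime import date
from typing import Dict, Optional


@dataclass(frozen=True)
class ModelEntry:
    name: str
    retires_on: Optional[date] = None   # None: no retirement announced
    replacement: Optional[str] = None   # model to fall back to after retirement


# Hypothetical registry; names and dates are illustrative only.
REGISTRY: Dict[str, ModelEntry] = {
    "writer-v1": ModelEntry("writer-v1", date(2026, 2, 1), "flagship-v2"),
    "flagship-v2": ModelEntry("flagship-v2"),
}


def resolve_model(name: str, today: date,
                  registry: Dict[str, ModelEntry] = REGISTRY) -> str:
    """Follow replacement links until a model that is still live is found."""
    seen = set()
    while True:
        if name in seen:
            raise ValueError(f"replacement cycle detected at {name!r}")
        seen.add(name)
        entry = registry[name]  # raises KeyError for unknown models
        if entry.retires_on is None or today < entry.retires_on:
            return name
        if entry.replacement is None:
            raise LookupError(f"{name!r} is retired and has no replacement")
        name = entry.replacement
```

Calling `resolve_model("writer-v1", date(2025, 6, 1))` still returns the specialized model, while the same call dated after the retirement date returns the flagship. Keeping the mapping in one place means a retirement becomes a configuration change rather than a code hunt.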

Trend 2: The Consolidation Play: From Toolkits to Operating Systems

Why download three separate apps when one powerful operating system can do everything? This is the central question driving the consolidation of generative AI platforms. Initially, the market was flooded with tools: one for code, one for writing summaries, one for generating images. This fragmented approach met an immediate need but is unsustainable.

The next evolution sees foundational providers embedding these specialized skills directly into the core large language model (LLM) or the primary interface.

Imagine a high school student: they used to need a calculator app, a dictionary app, and a thesaurus app. Now, their single smartphone operating system handles all those functions instantly when requested. Similarly, the new frontier models are becoming the 'AI Operating System.' The "writer" function is simply one excellent capability within that OS, not a separate entity.

- Business Implication: This trend dictates that businesses should prioritize integration over niche subscriptions. When assessing AI adoption, the key question is no longer "What does this tool do well?" but "How seamlessly does this tool integrate with our primary workflow hub?" Analysis discussing the future of AI platforms often concludes that unification wins for the user experience and overall efficiency.

Trend 3: Architectural Leaps: The Efficiency of Mixture-of-Experts (MoE)

Behind the scenes, the technology powering these platforms is fundamentally changing. The rumored or actual adoption of Mixture-of-Experts (MoE) architectures is perhaps the single greatest technical driver for retiring specialized models.

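Think of an MoE system as a massive library staffed by many specialized librarians (the 'Experts'). When a request comes in, say, "Write a persuasive sales email in the style of Shakespeare," the central routing system identifies that this task requires the 'Persuasion Expert' and the 'Historical Style Expert.' It activates only those two experts and ignores the rest of the library, so only a fraction of the model’s parameters do work for any given request. The analogy is a simplification: in practice the router is learned, it typically picks experts token by token inside each MoE layer, and the experts rarely map onto tidy, human-named skills.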

A specialized, standalone "Writer Model" is like having a small library dedicated *only* to writing, which sits empty most of the time. An MoE model can handle that same writing task by activating a subset of its subnetworks, potentially faster and more accurately than the old specialized model, while also handling coding, vision, and reasoning.

- Technical Value: For technical architects, this confirms that the race is won by generalized models that can dynamically allocate resources. Research into Mixture of Experts architecture shows why this efficiency means older, monolithic fine-tunes become costly overhead.
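The routing idea can be illustrated with a toy top-k gate. This is a simplified sketch, not a description of any production model: the gate scores are passed in directly (a real router computes them from each token with learned weights), and the four "experts" are plain functions chosen for illustration.

```python
import math
from typing import Callable, List, Sequence, Tuple


def softmax(scores: Sequence[float]) -> List[float]:
    """Numerically stable softmax over a list of scores."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def route_top_k(gate_scores: Sequence[float], k: int = 2) -> List[Tuple[int, float]]:
    """Pick the k highest-probability experts and renormalize their weights."""
    probs = softmax(gate_scores)
    top = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    norm = sum(probs[i] for i in top)
    return [(i, probs[i] / norm) for i in top]


def moe_forward(x: float, experts: Sequence[Callable[[float], float]],
                gate_scores: Sequence[float], k: int = 2) -> float:
    """Run only the selected experts and mix their outputs by gate weight."""
    return sum(w * experts[i](x) for i, w in route_top_k(gate_scores, k))


# Toy experts (illustrative only); the other experts are never evaluated.
EXPERTS = [lambda x: x + 1, lambda x: x * 2, lambda x: x ** 2, lambda x: -x]
```

With gate scores `[0.1, 2.0, 2.0, -1.0]`, only experts 1 and 2 run, each with weight 0.5. The efficiency argument in this section rests on exactly that property: compute scales with `k`, not with the total number of experts.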

Trend 4: The Rise of Agentic Workflows: From Generation to Execution

Perhaps the most profound shift is the move from *generation* to *agency*. A 'writer tool' creates output (a document, an email draft). An AI *agent* performs actions, manages projects, and corrects its own errors across multiple steps.

If the older tool was simply good at writing a blog post, the new system is an agent that can:

- Research trending topics (using a search tool).

- Outline three potential angles (reasoning).

- Draft the content using the *best internal writing module* (generation).

- Format the output for CMS integration (execution).

- Flag the result for human review.

The act of writing becomes one tiny, automated step within a massive, valuable workflow. If your business relies on an AI that only writes, you are already lagging behind those using AI that *manages* the writing process from start to finish.

- Future Implication: Businesses must stop measuring AI success by the quality of a single output and start measuring it by the complexity of the *workflow* the AI can manage autonomously. Discussions on AI agents vs generative text tools highlight that agents embody the next generation of productivity gains.
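The five agent steps listed in this section can be sketched as a simple pipeline in which writing is just one stage. Everything here is a hypothetical stand-in: each function fakes its work with string formatting, where a real agent would call a search tool, a language model, and a CMS API.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class Job:
    topic_hint: str
    topics: List[str] = field(default_factory=list)
    angles: List[str] = field(default_factory=list)
    draft: str = ""
    cms_payload: Dict[str, str] = field(default_factory=dict)
    needs_review: bool = False
    log: List[str] = field(default_factory=list)  # names of completed steps


def research(job: Job) -> None:
    # Stand-in for a search tool call.
    job.topics = [f"{job.topic_hint} trends"]


def outline(job: Job) -> None:
    # Stand-in for a reasoning step that proposes three angles.
    job.angles = [f"{job.topics[0]}: angle {n}" for n in (1, 2, 3)]


def write_draft(job: Job) -> None:
    # Stand-in for the generation step (the old "writer tool").
    job.draft = f"Draft about {job.angles[0]}"


def format_for_cms(job: Job) -> None:
    # Stand-in for execution: shape the output for a CMS.
    job.cms_payload = {"title": job.angles[0], "body": job.draft}


def flag_for_review(job: Job) -> None:
    job.needs_review = True


PIPELINE: List[Callable[[Job], None]] = [
    research, outline, write_draft, format_for_cms, flag_for_review,
]


def run(job: Job, steps: List[Callable[[Job], None]] = PIPELINE) -> Job:
    """Execute each step in order and record it in the job log."""
    for step in steps:
        step(job)
        job.log.append(step.__name__)
    return job
```

Running `run(Job("AI writing"))` produces a job whose log lists all five steps, with `write_draft` as only one entry among them. A production agent would also add error handling and retries between steps, which this sketch omits.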

The Shifting Economics: Who Gets Paid in the Platform War?

All these technological shifts culminate in a brutal re-evaluation of the market economics. Specialized AI tools built by third parties often function as "API wrappers"—they add a nice interface layer onto OpenAI’s engine and charge a premium subscription.

When the engine provider (OpenAI) significantly upgrades its core product (e.g., releasing a free, ultra-high-quality writing feature within the standard ChatGPT subscription), the value proposition of the wrapper collapses.

For a founder relying on this model, the calculation becomes grim: either foundational model costs are rising unpredictably or, more commonly, the perceived value of simply repackaging output is dropping to zero.

- Venture Capital View: Investors are fleeing business models that rely purely on user interface abstraction. The capital is now flowing to true infrastructural innovation or deep, proprietary data integration. Articles covering the viability of API wrapper startups argue that the barrier to entry is too low to defend, and the risk of being made redundant by the platform owner is too great.

Actionable Insights for a Post-Specialization World

If the phase-out of a specialized "best writer" model is inevitable, what should decision-makers do today?

1. Audit Your AI Dependencies for Platform Risk (For Business Leaders)

Identify any critical business process relying on a specialized, third-party AI tool that primarily offers content generation. Determine if that functionality is already being absorbed by your core platform (Microsoft 365 Copilot, Google Workspace, or OpenAI’s premium tier). If the value is easily replicated, plan the migration to the core platform now to avoid disruption in 2026.
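Such an audit can start as a simple inventory with a few yes/no attributes per tool. The sketch below is one possible way to encode the criteria from the paragraph above; the field names, the three risk levels, and the example tools are assumptions made for illustration, not an established scoring method.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class AIDependency:
    name: str
    third_party: bool               # supplied by a vendor other than the core platform
    generation_only: bool           # mainly produces content, no workflow logic
    covered_by_core_platform: bool  # the core suite already offers an equivalent
    business_critical: bool


def platform_risk(dep: AIDependency) -> str:
    """Return 'high', 'medium', or 'low' using simple illustrative rules."""
    exposed = dep.third_party and dep.generation_only
    if exposed and dep.covered_by_core_platform:
        return "high"
    if exposed:
        return "medium"
    return "low"


def migration_shortlist(deps: List[AIDependency]) -> List[str]:
    """Critical tools whose function the core platform already replicates."""
    return [d.name for d in deps
            if d.business_critical and platform_risk(d) == "high"]


# Hypothetical inventory; tool names are made up.
INVENTORY = [
    AIDependency("blog-writer-addon", True, True, True, True),
    AIDependency("legal-clause-checker", True, False, False, True),
    AIDependency("slogan-generator", True, True, False, False),
]
```

For this inventory, `migration_shortlist(INVENTORY)` returns only `blog-writer-addon`, the one critical tool whose function the core platform already covers.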

2. Prioritize Agentic Integration Over Feature Quality (For Product Managers)

Do not chase the absolute best single output quality; chase the best end-to-end process automation. The new "best writer" will be the one that requires the fewest human prompts to complete a full task, not the one that produces the most sophisticated prose.

3. Focus on Fine-Tuning Proprietary Knowledge (For Developers)

If you must specialize, do not specialize the *skill* (writing); specialize the *knowledge*. The future value lies in fine-tuning models on proprietary corporate data, niche industry terminology, or unique brand voices. The underlying architecture will be provided by the giants, but the specialized, irreplaceable knowledge must come from you.
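As a rough illustration of specializing the knowledge rather than the skill, the sketch below turns a proprietary glossary into training records serialized as JSON Lines. The `prompt`/`completion` record shape, the prompt wording, and the helper names are hypothetical; each provider defines its own fine-tuning data format, so check the relevant documentation before reusing this layout.

```python
import json
from typing import Dict, List


def build_examples(glossary: Dict[str, str],
                   brand_voice_note: str = "") -> List[Dict[str, str]]:
    """Create one training record per glossary term (hypothetical schema)."""
    examples = []
    for term, definition in sorted(glossary.items()):
        examples.append({
            "prompt": f"Explain the term '{term}' for a new employee.",
            "completion": f"{definition} {brand_voice_note}".strip(),
        })
    return examples


def to_jsonl(examples: List[Dict[str, str]]) -> str:
    """Serialize records as JSON Lines: one JSON object per line."""
    return "\n".join(json.dumps(e, ensure_ascii=False) for e in examples)
```

The design point is that the glossary and voice notes are the asset: the same source data can be re-exported in whatever format the next foundation model requires.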

The retirement of specialized tools marks a maturity milestone for generative AI. It signals that the underlying foundation models are powerful enough to handle niche tasks internally. The market is clearing the decks, pruning the redundant foliage, so that the next generation of massive, unified, and agentic AI systems can take center stage.