The Agentic Revolution: OpenAI's Codex App Signals the Shift from Co-Pilots to Autonomous Workflows

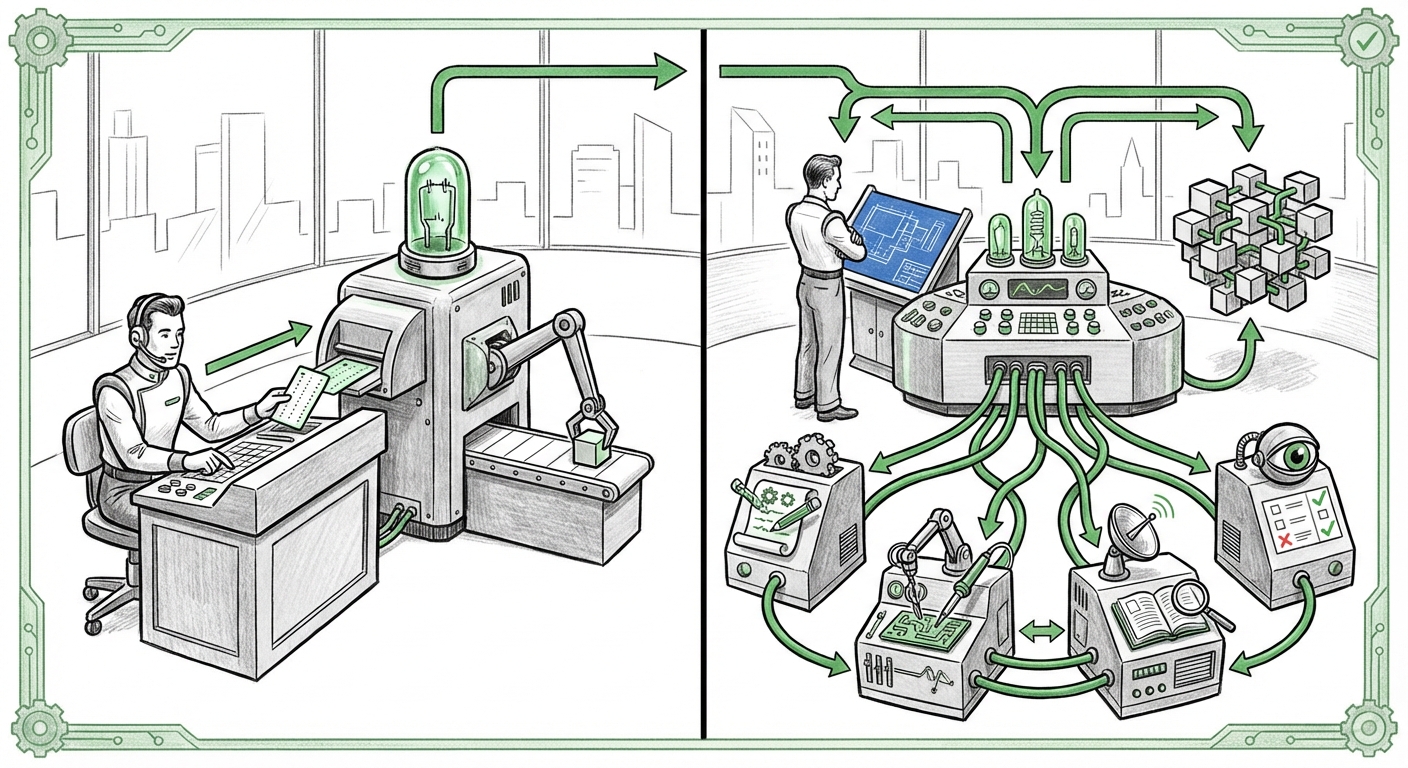

The landscape of Artificial Intelligence is shifting beneath our feet faster than ever before. For the past few years, the conversation has been dominated by powerful Large Language Models (LLMs) acting as brilliant yet passive assistants: the classic "Co-pilot." However, OpenAI's recent launch of a dedicated Codex app for macOS, designed to manage multiple AI agents, is a clear declaration that the era of passive assistance is rapidly giving way to active autonomy.

This move is far more significant than just another software release. It represents the formalization of Agentic AI—systems capable of breaking down complex goals, delegating tasks, executing steps, and self-correcting failures without constant human prompting. To understand the full weight of this development, we must examine the underlying trends driving this infrastructure shift.

The Fundamental Shift: From Prompt to Plan

To truly grasp the significance of the Codex agent manager, let’s use an analogy. Think of the standard GPT interface as a very skilled chef who can follow any recipe you give them, step-by-step. If you ask them to "Bake a cake," they need precise instructions for mixing, oven temperature, and timing.

An AI Agent, however, is like a Restaurant Manager. If you tell the manager, "Open a new branch of the restaurant in the city by next month," they independently determine the needs (find a location, hire staff, design the menu), create sub-tasks, assign those tasks (perhaps to other AI agents), monitor progress, and report back only when the overall goal is achieved. This capability to plan, iterate, and execute is the essence of Agentic AI.
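The manager's plan, delegate, monitor, and report cycle can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not how the Codex app works internally: `ManagerAgent`, `Task`, and the toy planner and worker functions are hypothetical stand-ins for model calls, and "self-correction" is reduced to a simple bounded retry.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Task:
    description: str
    done: bool = False
    attempts: int = 0


@dataclass
class ManagerAgent:
    # Hypothetical stand-in for an LLM call that decomposes a goal into sub-tasks.
    planner: Callable[[str], List[str]]
    # Hypothetical stand-in for a worker agent; returns True if the sub-task succeeded.
    worker: Callable[[Task], bool]
    max_attempts: int = 3
    log: List[str] = field(default_factory=list)

    def run(self, goal: str) -> bool:
        """Plan, execute, retry failures, and report once at the end."""
        tasks = [Task(d) for d in self.planner(goal)]
        for task in tasks:
            # Self-correction: retry a failed sub-task up to max_attempts times.
            while not task.done and task.attempts < self.max_attempts:
                task.attempts += 1
                task.done = self.worker(task)
                outcome = "ok" if task.done else "failed"
                self.log.append(f"{task.description}: attempt {task.attempts} -> {outcome}")
        # Report back only after every sub-task has been attempted.
        return all(t.done for t in tasks)


if __name__ == "__main__":
    failures_left = {"hire staff": 1}  # simulate one transient failure

    def toy_planner(goal: str) -> List[str]:
        return ["find location", "hire staff", "design menu"]

    def toy_worker(task: Task) -> bool:
        if failures_left.get(task.description, 0) > 0:
            failures_left[task.description] -= 1
            return False
        return True

    manager = ManagerAgent(toy_planner, toy_worker)
    print(manager.run("Open a new branch by next month"))  # True
    print("\n".join(manager.log))
```

The human only sees the final boolean and, if they want it, the log; the intermediate failure on "hire staff" is absorbed by the retry loop.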

A look at current industry discussion of agentic AI trends in software development suggests this is now one of the sector's primary areas of focus. Companies are realizing that pure conversational power hits a ceiling when tasks require sequential logic, external tool use, and memory retention across multiple steps. The Codex app is OpenAI's infrastructure for deploying and managing these nascent autonomous teams.

Why Multiple Agents Matter

A single powerful LLM is often a generalist. Real-world complexity, however, requires specialization. Managing *multiple* agents means deploying a specialized workforce:

- The Planner Agent: Breaks down the user's goal into steps.

- The Coder Agent: Writes and tests specific blocks of code.

- The Researcher Agent: Scours documentation or the web for necessary context.

- The Debugger Agent: Reviews the output from the Coder Agent and flags errors.
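The hand-offs between these four roles can be sketched as a small pipeline. This is a hypothetical illustration, not a Codex API: each role is a plain Python function standing in for a specialized model call, and the debugger's review rule is a deliberately trivial toy so the feedback loop is easy to follow.

```python
from typing import List, Optional

# Each role is a plain function standing in for a specialized model call.
# The role names mirror the list above; the behavior is a toy illustration.


def planner(goal: str) -> List[str]:
    # Break the user's goal into ordered steps (hard-coded for the sketch).
    return [f"implement {goal}", f"add tests for {goal}"]


def researcher(step: str) -> str:
    # Pretend to look up documentation relevant to the step.
    return f"notes on '{step}'"


def coder(step: str, context: str, feedback: Optional[str]) -> str:
    # Produce a draft; if the debugger left feedback, mark the draft as revised.
    draft = f"code for {step} (using {context})"
    return f"{draft} [revised: {feedback}]" if feedback else draft


def debugger(draft: str) -> Optional[str]:
    # Toy review rule: reject any draft that has not been revised yet.
    return None if "[revised:" in draft else "missing error handling"


def run_team(goal: str, max_rounds: int = 3) -> List[str]:
    accepted: List[str] = []
    for step in planner(goal):
        context = researcher(step)  # shared context handed to the coder
        feedback: Optional[str] = None
        for _ in range(max_rounds):
            draft = coder(step, context, feedback)
            feedback = debugger(draft)
            if feedback is None:
                accepted.append(draft)
                break
        else:
            # The inner loop ran out of rounds without a clean review.
            raise RuntimeError(f"step {step!r} not accepted after {max_rounds} rounds")
    return accepted


if __name__ == "__main__":
    for line in run_team("login form"):
        print(line)
```

The design point is that the coder never sees the raw goal: it only receives a planned step plus the researcher's context, and it loops with the debugger until the review passes or the round budget runs out.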

The macOS Codex app appears to be the control panel—the operating system—that ensures these specialized AIs communicate effectively, share context, and work toward a unified objective, democratizing access to sophisticated, multi-step automation.

Hardware Meets Autonomy: The Significance of macOS Execution

One of the most telling details about this launch is its presentation as a dedicated macOS application. This may point toward a critical technical bottleneck in agentic systems: latency and data governance. Complex agentic workflows involve many back-and-forth model calls (or "thoughts"). If every single step requires a round trip to a remote cloud server, the delays and costs compound quickly, and the process can become slow and expensive.
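The round-trip argument can be made concrete with back-of-envelope arithmetic. The Python sketch assumes a strictly sequential chain of model calls; the step count and millisecond figures are hypothetical placeholders, not measurements of Codex or any OpenAI service. It isolates only the network share of the wait: local execution wins overall only if local inference is not much slower than the cloud model, which this sketch does not assume either way.

```python
def sequential_latency_s(steps: int, rtt_ms: float, inference_ms: float) -> float:
    """Wall-clock seconds for a chain of model calls that must run one after another."""
    # Each step pays one network round trip plus one inference before the next can start.
    return steps * (rtt_ms + inference_ms) / 1000.0


# Hypothetical figures chosen for illustration; they are not measurements.
STEPS = 200           # model calls in one agent workflow
RTT_MS = 150.0        # network round trip to a remote API
INFERENCE_MS = 800.0  # time the model spends generating each response

total_s = sequential_latency_s(STEPS, RTT_MS, INFERENCE_MS)
network_s = sequential_latency_s(STEPS, RTT_MS, 0.0)  # round-trip overhead alone

if __name__ == "__main__":
    print(f"total: {total_s:.0f} s, of which network round trips: {network_s:.0f} s")
    # total: 190 s, of which network round trips: 30 s
```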

Technical discussions about running multiple LLM agents locally on macOS show a strong appetite among developers for keeping these orchestrations on their own machines.

The Power of Local Processing

For the developer audience, this local capability unlocks several advantages:

- Speed: Leveraging Apple Silicon (M-series chips) for low-latency local inference can shorten the iterative thinking loop of an agent team by removing network round trips from each step.

- Privacy and IP Protection: When agents are handling proprietary codebases or sensitive internal documents, keeping the entire workflow—planning, execution, and tool use—off external cloud servers is a massive security advantage.

- Cost Control: Developers can run test agents or handle smaller tasks using their local hardware, saving on API usage fees associated with constant cloud calls.
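The cost-control point is simple linear arithmetic, sketched in Python. Every number is a placeholder assumption (call volume, tokens per call, and the per-million-token price are not OpenAI's actual pricing), and the local side deliberately ignores hardware and electricity, counting only avoided API spend.

```python
def api_cost_usd(calls: int, tokens_per_call: int, usd_per_million_tokens: float) -> float:
    """API spend for a batch of model calls, assuming a flat per-token price."""
    return calls * tokens_per_call * usd_per_million_tokens / 1_000_000


# Placeholder numbers for illustration only; substitute your provider's actual pricing.
CALLS_PER_DAY = 10_000
TOKENS_PER_CALL = 4_000
USD_PER_MILLION = 2.0
LOCAL_TEST_CALLS = 6_000  # calls from test agents moved onto local hardware

all_cloud = api_cost_usd(CALLS_PER_DAY, TOKENS_PER_CALL, USD_PER_MILLION)
hybrid = api_cost_usd(CALLS_PER_DAY - LOCAL_TEST_CALLS, TOKENS_PER_CALL, USD_PER_MILLION)

if __name__ == "__main__":
    print(f"all cloud: ${all_cloud:.2f}/day, with local test runs: ${hybrid:.2f}/day")
    # all cloud: $80.00/day, with local test runs: $32.00/day
```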

By building this directly into the desktop environment, OpenAI is signaling that agent orchestration is moving from a backend cloud service to a fundamental part of the developer workstation, deeply embedding AI into the daily tools of creators.

The Competitive Gauntlet: Agents as the New Moat

OpenAI’s aggressive push into agent management is forcing competitors to accelerate their own roadmaps. The battleground has moved past "which model is smartest" to "which platform can best manage complex, autonomous processes."

A look at the competitive landscape, including how Google's Gemini and OpenAI each approach agent architecture, shows that major players are keenly aware that infrastructure for agents will define the next cloud war.

Differentiation Through Orchestration

While Google, Anthropic, and others boast powerful base models, controlling the framework through which developers build and deploy multi-agent workflows is where true long-term platform lock-in occurs. If the Codex app becomes the most intuitive way to coordinate specialized coding agents, developers will build their entire automation strategy around that ecosystem.

For enterprise strategists and investors, this means watching not just the performance benchmarks (like MMLU scores), but the usability and feature parity of the agent orchestration layers offered by each major provider. The ability to seamlessly manage resource allocation, tool access, and failure recovery across a suite of specialized AIs will become the primary metric for judging cloud AI competitiveness.
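Two of those orchestration concerns, resource allocation and failure recovery, can be illustrated with Python's standard `asyncio` library. This is a generic sketch, not any provider's orchestration layer: `flaky_agent` is a hypothetical agent that fails a set number of times, the semaphore caps how many agents run at once, and failures are retried with a short exponential back-off.

```python
import asyncio
from typing import Awaitable, Callable, List

AgentCall = Callable[[], Awaitable[str]]


async def run_with_retries(call: AgentCall, slots: asyncio.Semaphore, retries: int = 2) -> str:
    # Resource allocation: wait for a free slot before starting this agent.
    # The slot is held across retries, so a retrying agent keeps its place.
    async with slots:
        for attempt in range(retries + 1):
            try:
                return await call()
            except RuntimeError:
                # Failure recovery: give up after the last retry, else back off and retry.
                if attempt == retries:
                    raise
                await asyncio.sleep(0.01 * 2 ** attempt)
    # Only reachable if retries is negative, because the loop never runs.
    raise ValueError("retries must be non-negative")


async def run_agents(calls: List[AgentCall], max_parallel: int = 2) -> list:
    slots = asyncio.Semaphore(max_parallel)
    # return_exceptions=True collects failures as results instead of raising the
    # first one, so the caller sees every agent's outcome.
    return await asyncio.gather(*(run_with_retries(c, slots) for c in calls), return_exceptions=True)


def flaky_agent(name: str, failures: int) -> AgentCall:
    # Hypothetical agent that fails `failures` times before succeeding.
    remaining = failures

    async def call() -> str:
        nonlocal remaining
        await asyncio.sleep(0)
        if remaining > 0:
            remaining -= 1
            raise RuntimeError(f"{name} failed")
        return f"{name} done"

    return call


if __name__ == "__main__":
    agents = [flaky_agent("planner", 0), flaky_agent("coder", 1), flaky_agent("debugger", 5)]
    print(asyncio.run(run_agents(agents)))
    # ['planner done', 'coder done', RuntimeError('debugger failed')]
```

The coder recovers on its second attempt; the debugger exhausts its retries and surfaces as an exception object, which an orchestration layer could then escalate to a human.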

Implications for Work: The Evolving Software Development Lifecycle

The name "Codex" recalls OpenAI's earlier Codex model, which powered the first generation of AI coding tools such as GitHub Copilot. But managing *parallel agents* transforms the developer's role entirely. We are transitioning from AI as a suggestion box to AI as a fully functional, though supervised, development team.

Looking at the likely impact of AI agents on the software development lifecycle, this change appears genuinely disruptive:

From Writing Code to Verifying Orchestration

For Software Engineering Managers and Lead Developers, the job description is evolving. Instead of spending much of their time writing boilerplate code, debugging syntax errors, or manually checking documentation, developers will spend more time:

- Defining High-Level Goals: Translating business needs into precise, multi-step agent objectives.

- Interrogating Agent Reports: Reviewing the logs and decisions made by the agent team to ensure alignment with architectural standards.

- Building Agent Tools: Developing and integrating custom functions (tools) that the agents can reliably use to interact with proprietary databases or legacy systems.
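A minimal sketch of what "building agent tools" can mean in practice: a registry that exposes a custom function to an agent and validates every request before it reaches a backend system. The request shape (`{"tool": ..., "args": {...}}`), `ToolRegistry`, `lookup_customer`, and `LEGACY_CUSTOMERS` are all hypothetical; real providers define their own tool-calling formats.

```python
import json
from dataclasses import dataclass
from typing import Any, Callable, Dict


@dataclass
class Tool:
    name: str
    description: str         # shown to the model so it knows when to use the tool
    params: Dict[str, type]  # parameter name -> expected Python type
    fn: Callable[..., Any]


class ToolRegistry:
    def __init__(self) -> None:
        self._tools: Dict[str, Tool] = {}

    def register(self, tool: Tool) -> None:
        self._tools[tool.name] = tool

    def call(self, request_json: str) -> Any:
        # Assumed request shape: {"tool": "<name>", "args": {...}}
        request = json.loads(request_json)
        name = request.get("tool")
        tool = self._tools.get(name)
        if tool is None:
            # Agents can only reach systems that were explicitly registered.
            raise KeyError(f"unknown tool: {name}")
        args = request.get("args", {})
        if set(args) != set(tool.params):
            raise ValueError(f"{tool.name} expects {sorted(tool.params)}, got {sorted(args)}")
        for key, expected in tool.params.items():
            if not isinstance(args[key], expected):
                raise TypeError(f"{key} must be {expected.__name__}")
        return tool.fn(**args)


# Hypothetical in-memory stand-in for a proprietary customer database.
LEGACY_CUSTOMERS = {42: "Ada Lovelace"}

registry = ToolRegistry()
registry.register(
    Tool(
        name="lookup_customer",
        description="Return a customer's name given a numeric customer id.",
        params={"customer_id": int},
        fn=lambda customer_id: LEGACY_CUSTOMERS.get(customer_id, "not found"),
    )
)

if __name__ == "__main__":
    print(registry.call('{"tool": "lookup_customer", "args": {"customer_id": 42}}'))
    # Ada Lovelace
```

Validating names and argument types at the boundary is what makes a tool "reliable" from the agent's side: a malformed request fails loudly instead of reaching the legacy system.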

The productivity gains could be dramatic rather than incremental. A complex feature that once took a junior developer a week might be prototyped by an agent team in an afternoon, leaving the human engineer to focus on critical system design, security auditing, and complex innovation.

Societal and Practical Considerations for Adoption

As AI moves into autonomous execution mode, the stakes get higher, requiring a more mature approach from both business users and society.

Actionable Insight 1: Prioritize Observability Over Output

When using conversational AI, if the answer is wrong, you just ask again. When an agent executes a sequence of steps—perhaps modifying a configuration file, updating a database, or deploying code—a single misstep can cause widespread disruption. Businesses must invest immediately in robust AI Observability and Auditing Tools. The Codex app’s ability to manage parallel runs is a good start, but enterprise adoption requires clear, human-readable logs tracing every decision, tool invocation, and self-correction the agent took.
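A human-readable trace of "every decision, tool invocation, and self-correction" can start as simply as an append-only event list with a JSON Lines export. This is a sketch under assumptions: `AuditTrail`, the three event kinds, and the sample events are illustrative and not a feature of the Codex app; a production system would write to durable, tamper-evident storage.

```python
import json
import time
from dataclasses import asdict, dataclass, field
from typing import List

EVENT_KINDS = {"decision", "tool_call", "self_correction"}


@dataclass
class TraceEvent:
    run_id: str
    step: int
    kind: str
    detail: str
    timestamp: float = field(default_factory=time.time)


class AuditTrail:
    """Append-only record of what an agent decided, invoked, and corrected."""

    def __init__(self, run_id: str) -> None:
        self.run_id = run_id
        self.events: List[TraceEvent] = []

    def record(self, kind: str, detail: str) -> None:
        if kind not in EVENT_KINDS:
            raise ValueError(f"unknown event kind: {kind}")
        self.events.append(TraceEvent(self.run_id, len(self.events) + 1, kind, detail))

    def to_jsonl(self) -> str:
        # One JSON object per line, easy to ship to a log store and query later.
        return "\n".join(json.dumps(asdict(e)) for e in self.events)

    def human_readable(self) -> str:
        # Compact view for a reviewer scanning what the agent actually did.
        return "\n".join(f"[{e.step}] {e.kind}: {e.detail}" for e in self.events)


if __name__ == "__main__":
    trail = AuditTrail(run_id="deploy-demo")
    trail.record("decision", "raise request timeout in config/app.yaml to 30s")
    trail.record("tool_call", "write_file('config/app.yaml')")
    trail.record("self_correction", "YAML failed validation; reverted file and retried")
    print(trail.human_readable())
```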

Actionable Insight 2: The Rise of the Prompt Engineer Specialist

The skill of writing a simple, effective prompt for a single query is different from the skill required to define an entire agent workflow. We need experts capable of defining goal states, structuring agent communication protocols, and guarding against specific failure modes. Companies must start training personnel specifically in Agentic Workflow Design.
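What "structuring agent communication protocols" and handling failure modes might look like at the smallest scale: a typed message envelope plus a channel that rejects malformed or runaway traffic. The rules here (known agents only, a fixed set of intents, a hop limit against delegation loops, and duplicate detection for stuck agents) are illustrative assumptions, and `AgentMessage`, `Channel`, and `ProtocolError` are hypothetical names.

```python
from dataclasses import dataclass
from typing import List, Set, Tuple

ALLOWED_INTENTS = {"request", "result", "error"}


@dataclass(frozen=True)
class AgentMessage:
    sender: str
    recipient: str
    intent: str   # one of ALLOWED_INTENTS
    body: str
    hop: int = 0  # how many times this thread has already been delegated onward


class ProtocolError(Exception):
    pass


class Channel:
    """Checks every inter-agent message against a few illustrative protocol rules."""

    def __init__(self, agents: Set[str], max_hops: int = 5) -> None:
        self.agents = agents
        self.max_hops = max_hops
        self._seen: Set[Tuple[str, str, str]] = set()
        self.delivered: List[AgentMessage] = []

    def send(self, msg: AgentMessage) -> None:
        if msg.sender not in self.agents or msg.recipient not in self.agents:
            raise ProtocolError(f"unknown agent in {msg.sender} -> {msg.recipient}")
        if msg.intent not in ALLOWED_INTENTS:
            raise ProtocolError(f"unsupported intent: {msg.intent}")
        if msg.hop > self.max_hops:
            # Failure mode: agents delegating the same work back and forth forever.
            raise ProtocolError("hop limit exceeded; possible delegation loop")
        key = (msg.sender, msg.recipient, msg.body)
        if key in self._seen:
            # Failure mode: an agent stuck repeating the identical message.
            raise ProtocolError("duplicate message; agent may be stuck")
        self._seen.add(key)
        self.delivered.append(msg)


if __name__ == "__main__":
    channel = Channel({"planner", "coder", "debugger"}, max_hops=3)
    channel.send(AgentMessage("planner", "coder", "request", "write the parser"))
    try:
        channel.send(AgentMessage("planner", "coder", "request", "write the parser"))
    except ProtocolError as err:
        print(f"rejected: {err}")
```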

Actionable Insight 3: Navigating the Local/Cloud Spectrum

The macOS app suggests a hybrid future. Compute-intensive or high-volume work will likely remain in the cloud ecosystem for scalability. However, rapid prototyping, personal coding tasks, and high-security data handling will likely migrate to local or on-premises solutions built on models capable of running locally. CTOs need strategies for managing the security and context synchronization between these two operational spheres.
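A hybrid setup ultimately needs an explicit placement policy. The Python sketch encodes one possible policy following the split above: high-security work stays local, large or high-volume work goes to the cloud, and small prototyping tasks stay local. `Job`, `route`, and the 50,000-token threshold are hypothetical choices for illustration, not recommendations.

```python
from dataclasses import dataclass
from enum import Enum


class Target(Enum):
    LOCAL = "local"
    CLOUD = "cloud"


@dataclass
class Job:
    name: str
    sensitive: bool        # touches proprietary code or regulated data
    estimated_tokens: int  # rough size of the work


def route(job: Job, local_token_budget: int = 50_000) -> Target:
    """Illustrative placement policy for a hybrid local/cloud agent setup."""
    # High-security data handling stays on the workstation, whatever its size.
    if job.sensitive:
        return Target.LOCAL
    # Large or high-volume work goes to the cloud for scalability.
    if job.estimated_tokens > local_token_budget:
        return Target.CLOUD
    # Small prototyping and personal tasks stay local to avoid API spend.
    return Target.LOCAL


if __name__ == "__main__":
    for job in [
        Job("refactor auth module", sensitive=True, estimated_tokens=200_000),
        Job("generate bulk test fixtures", sensitive=False, estimated_tokens=500_000),
        Job("prototype CLI script", sensitive=False, estimated_tokens=2_000),
    ]:
        print(f"{job.name}: {route(job).value}")
```

The first job shows exactly the tension CTOs have to resolve: a sensitive job that may be too large for local hardware. This sketch simply keeps it local; a real policy would need an explicit rule for that case, such as dedicated on-premises capacity.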

Conclusion: The Orchestration Layer is the New Frontier

OpenAI’s Codex app for macOS is not merely a convenience; it is a foundational piece of infrastructure for the next era of computing. It validates the market trajectory toward Agentic AI, acknowledging that true automation requires coordination, planning, and specialization.

The future isn't just about smarter chatbots; it's about intelligent, coordinated software systems that operate with a degree of autonomy previously reserved for highly skilled human teams. The ability to manage these agents locally on powerful consumer hardware such as Apple Silicon Macs further democratizes this power, placing sophisticated automation capabilities directly onto the desks of developers and knowledge workers worldwide. The transition from being the pilot to merely being the flight supervisor is underway, and the architecture supporting that supervision is now being formalized.