The Great AI Unplug: Why Firefox’s Centralized AI Off-Switch Signals a User Uprising

The integration of Generative Artificial Intelligence (AI) into everyday software is happening at breakneck speed. From summarizing emails to drafting reports directly within our productivity suites, AI is being woven into the very fabric of digital life. However, this rapid deployment has uncovered a critical fault line in the tech landscape: the tension between forced convenience and user autonomy. The recent announcement that Firefox users will soon be able to block *all* generative AI features in one centralized place is not just a niche browser update; it is a profound declaration about the future of digital control.

This move by Mozilla’s Firefox signals a shift—a clear acknowledgment that not all users want, or trust, AI running silently in the background of their digital tasks. To understand the implications of this "AI Off-Switch," we must look beyond the immediate headline and examine the broader trends driving this friction in the technology world.

The Unstoppable Tide: AI Integration in the Digital Ecosystem

For the last two years, the narrative has centered on *adoption* and *feature parity*. Competitors are rushing to embed Large Language Models (LLMs) into every possible interface. We are witnessing the start of a new "browser war," where features like AI-powered summarization, contextual help, and code completion are becoming expected standard offerings, not optional add-ons.

If we look at the competitive landscape, established players are heavily invested in making AI indispensable. For example, Microsoft has deeply integrated Copilot into the Edge browser, and Google is rapidly deploying Gemini features across its suite. The implicit business model here is that these features enhance engagement and, by extension, generate value, often by routing user data (even anonymized context) through massive cloud infrastructure.

This aggressive integration makes Firefox's counter-move stand out. When a company provides a simple, centralized toggle to disable these powerful tools—as opposed to burying the settings deep within menus or requiring manual extension removal—it directly challenges the assumption that AI integration must be universal and mandatory. It suggests that for a significant segment of the user base, less integration means a *better* experience.

Why Centralized Control Matters

Imagine every application on your computer having a small, smart helper running alongside it. Now imagine needing to turn off every single helper individually. That is the current, fragmented reality for users wary of AI. Firefox’s centralized control (Version 148+) offers a powerful principle: if a feature fundamentally changes how data is processed or shared, the user must have a simple, accessible master switch. This moves customization from being a technical chore to a fundamental user right.

The Core Conflict: Privacy vs. Performance

The desire to flip that AI off-switch is rooted largely in privacy concerns. When a browser uses a web page’s content to fuel a cloud-backed AI prompt, even one as benign as "summarize this article," that content is typically sent off the user’s machine to be processed by a third-party LLM provider (such as OpenAI or Google).

Coverage of AI-assisted browsing consistently highlights user anxiety over Personally Identifiable Information (PII) being inadvertently captured, stored, or used for model training. For many, the risk of sending sensitive financial, medical, or proprietary work details to a remote server for real-time summarization simply outweighs the benefit of a three-sentence summary.

For businesses, this presents a significant risk. An employee using an AI-enhanced browser tool to summarize a confidential internal report might unknowingly transmit that sensitive data beyond secure corporate walls. The ease of integration masks the data leakage pathways being opened.

The Trust Deficit in Data Handling

When tech giants push integrated AI, they often present simplified privacy notices that few read. Firefox is effectively saying: "We see the trust deficit. We will make the secure choice the *easy* choice." This is a direct appeal to users who value **digital sovereignty**: the right to control their digital footprint and the mechanisms processing their information. Switching off AI puts the real-time browsing session back under the user’s control.

Implications for Future AI Development and Governance

Firefox’s strategy is a market signal that will force the entire industry to reckon with the concept of user consent in the AI era. This is not just about web browsing; it sets a precedent for operating systems, word processors, and communication platforms.

1. The Rise of "Opt-In by Default" for Sensitive Features

If a major player proves that a successful product can launch with AI features disabled by default (or easily disabled), it puts pressure on others whose models rely on maximizing data ingestion. We may see a regulatory push, inspired by these market examples, demanding that complex, data-intensive features like generative AI be strictly opt-in, not opt-out.

2. The Customization Renaissance

The tension between integrated features and user customization options speaks to a deeper desire among power users. Firefox has long championed its extension ecosystem. The ability to toggle an entire category of features allows users to build their own preferred stack—whether that stack is AI-rich or completely lean. This signals a future where software layers are increasingly modular. Instead of monolithic applications, users demand layered control, allowing them to choose the *degree* of AI involvement they wish to tolerate.

3. The Emergence of Privacy-Focused AI Stacks

If users fear cloud-based AI processing, the solution will be twofold: better controls (like Firefox’s toggle) and the development of **on-device AI**. Businesses and developers must increasingly focus on delivering powerful, locally processed AI capabilities, so that user data never leaves the user's hardware. This trade-off, in which local processing is often less powerful but far more private, will define the next competitive frontier.
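One way to picture a local-first policy is as a small routing decision. The sketch below is illustrative only: `Route` and `choose_route` are hypothetical names, and it assumes the product can tell whether a local model is available and whether the user has explicitly consented to cloud processing.

```python
from enum import Enum


class Route(Enum):
    LOCAL = "local"        # processed on the user's hardware
    CLOUD = "cloud"        # sent to a remote provider
    DECLINED = "declined"  # feature unavailable; nothing is processed


def choose_route(local_model_available: bool, cloud_consent: bool) -> Route:
    """Prefer on-device processing; use the cloud only with explicit consent."""
    if local_model_available:
        return Route.LOCAL
    if cloud_consent:
        return Route.CLOUD
    # No local model and no consent: decline rather than send data out.
    return Route.DECLINED
```

The design choice worth noting is the final branch: without a local model or consent, the request is declined instead of silently falling back to a remote service.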

Practical Implications for Businesses and Developers

For software vendors, content creators, and enterprise IT departments, the Firefox development demands a reassessment of their AI rollout strategy.

For Software Developers and Product Teams:

Actionable Insight: Audit Your "Always-On" Features. Every developer integrating an LLM feature must immediately audit whether that feature is mission-critical or merely "delightful." If it is not mission-critical, it should be easily decoupled or switched off via a clear interface. Assume that a segment of your users will *always* choose the off-switch, and ensure your core product functions seamlessly without the AI layer.

Furthermore, developers must prepare for granular control. Today, many products offer only an all-or-nothing toggle. Tomorrow, users may demand the ability to disable AI summarization but keep AI grammar checks. Robust, modular design is key.
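As a rough illustration of that modular design, here is a minimal sketch of a settings model that combines a master switch with per-feature flags. The class, function, and feature names are all hypothetical and do not reflect how Firefox or any other product implements its controls.

```python
from dataclasses import dataclass, field


@dataclass
class AIFeatureControls:
    """Hypothetical settings model: one master switch plus per-feature flags."""

    # Master switch: when True, every AI feature is off, including future ones.
    block_all: bool = False
    # Per-feature opt-ins. A feature missing from this map is treated as off.
    features: dict[str, bool] = field(default_factory=dict)

    def is_enabled(self, name: str) -> bool:
        if self.block_all:
            return False
        return self.features.get(name, False)


def available_tools(controls: AIFeatureControls) -> list[str]:
    """Core tools are always present; AI tools are optional additions."""
    tools = ["reader_view", "find_in_page"]  # never depend on the AI layer
    for name in ("summarization", "grammar_check"):
        if controls.is_enabled(name):
            tools.append(name)
    return tools
```

With `features={"summarization": False, "grammar_check": True}`, a user keeps grammar checks without summarization. Setting `block_all = True` removes both while the core tools keep working.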

For Business Strategy and IT Security:

Actionable Insight: Define Clear AI Data Boundaries. Enterprises must treat browser-integrated AI as a potential data exfiltration vector. IT security policies must explicitly dictate which AI services are sanctioned and which are banned. If the default browser setting allows AI to send data externally, companies must mandate the use of browsers (or browser configurations) that restrict this behavior, or adopt specialized, secure browser environments.
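A policy of sanctioned and banned services can be expressed as a simple host allowlist. The sketch below is a hedged illustration, not a real enforcement mechanism: the host names are placeholders, `classify_request` is a hypothetical helper, and an actual deployment would enforce this at a proxy or through managed browser configuration.

```python
from urllib.parse import urlparse

# Placeholder hosts for illustration only; not real service endpoints.
SANCTIONED_AI_HOSTS = {"ai.internal.example.com"}
BANNED_AI_HOSTS = {"api.public-llm.example.net"}


def classify_request(url: str) -> str:
    """Return 'allow', 'block', or 'not_ai' for an outbound request URL."""
    host = (urlparse(url).hostname or "").lower()
    if host in SANCTIONED_AI_HOSTS:
        return "allow"
    if host in BANNED_AI_HOSTS:
        return "block"
    # Hosts not on either list are outside this policy's scope.
    return "not_ai"
```

Keeping the two lists explicit makes the boundary auditable: security teams can review exactly which AI services are sanctioned and which are banned.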

The "digital sovereignty" movement implies that users and employees will vote with their feet (or their browser choice). If a competitor offers a demonstrably more private digital environment, talent and users will migrate.

The Future: Coexistence, Not Conquest

The tension highlighted by Firefox's decision is unlikely to dissipate. AI will continue to become more capable, and therefore more pervasive. However, the market is establishing a necessary boundary condition. The future of successful AI integration will not be about forcing adoption, but about proving utility within an environment of explicit user consent.

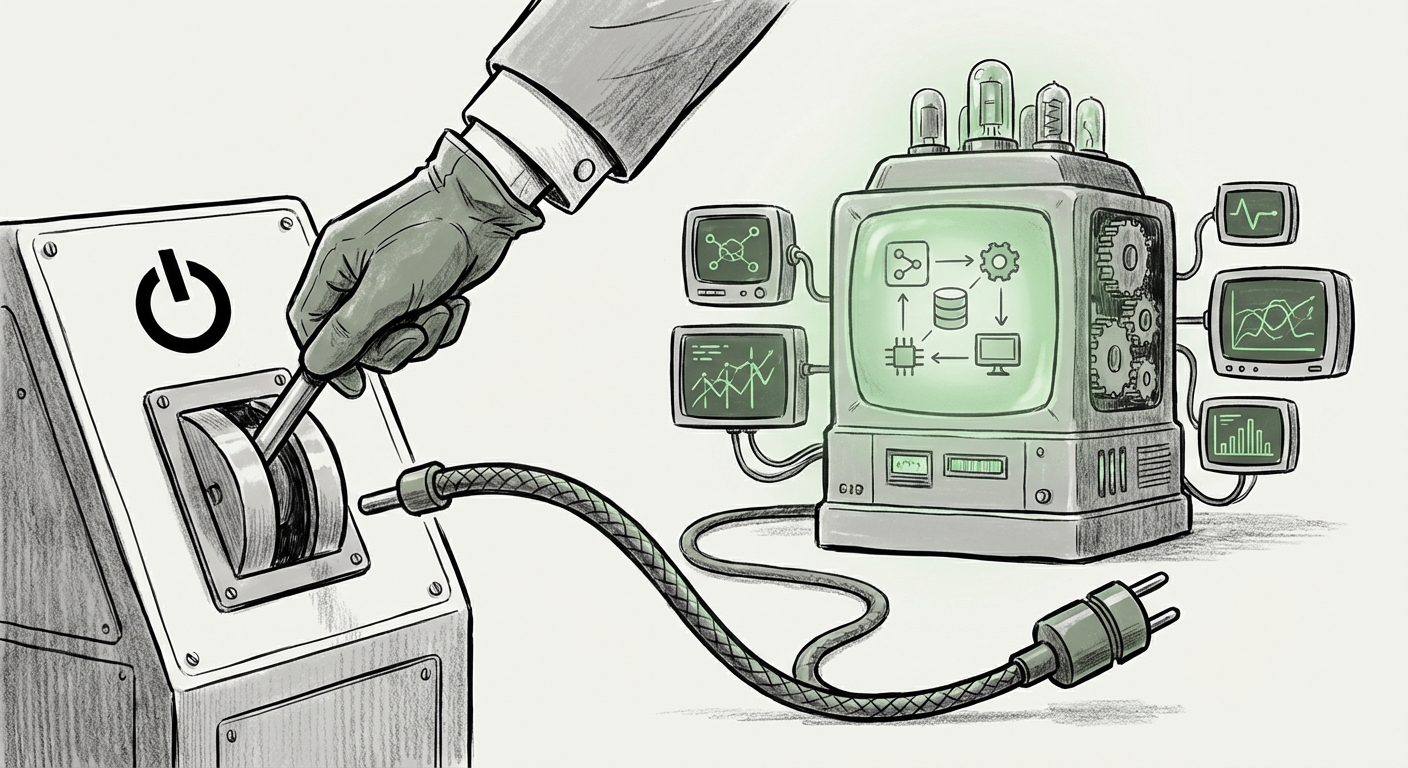

What Firefox is demonstrating is that the power dynamic is beginning to rebalance. Users are tired of being passive participants in technological experiments conducted by large corporations. They want tools that serve them, not tools that observe them. The centralized AI control is the technological manifestation of this demand for user agency.

This move forces the industry to mature its approach to AI deployment. We are moving away from the "move fast and break things" era toward a more considered, consent-based architecture where the power to *unplug* is just as important as the power to *connect*.