The Great Reckoning: How the Raid on X Signals the End of Unfettered Data for AI Development

The recent raid by French prosecutors on the Paris offices of X (formerly Twitter), stemming from allegations related to data handling and child protection, is far more than a localized legal skirmish. For those of us tracking the trajectory of artificial intelligence, this event represents a seismic shift. It signals that the era of unchecked data ingestion, the very lifeblood of modern generative AI, is rapidly closing.

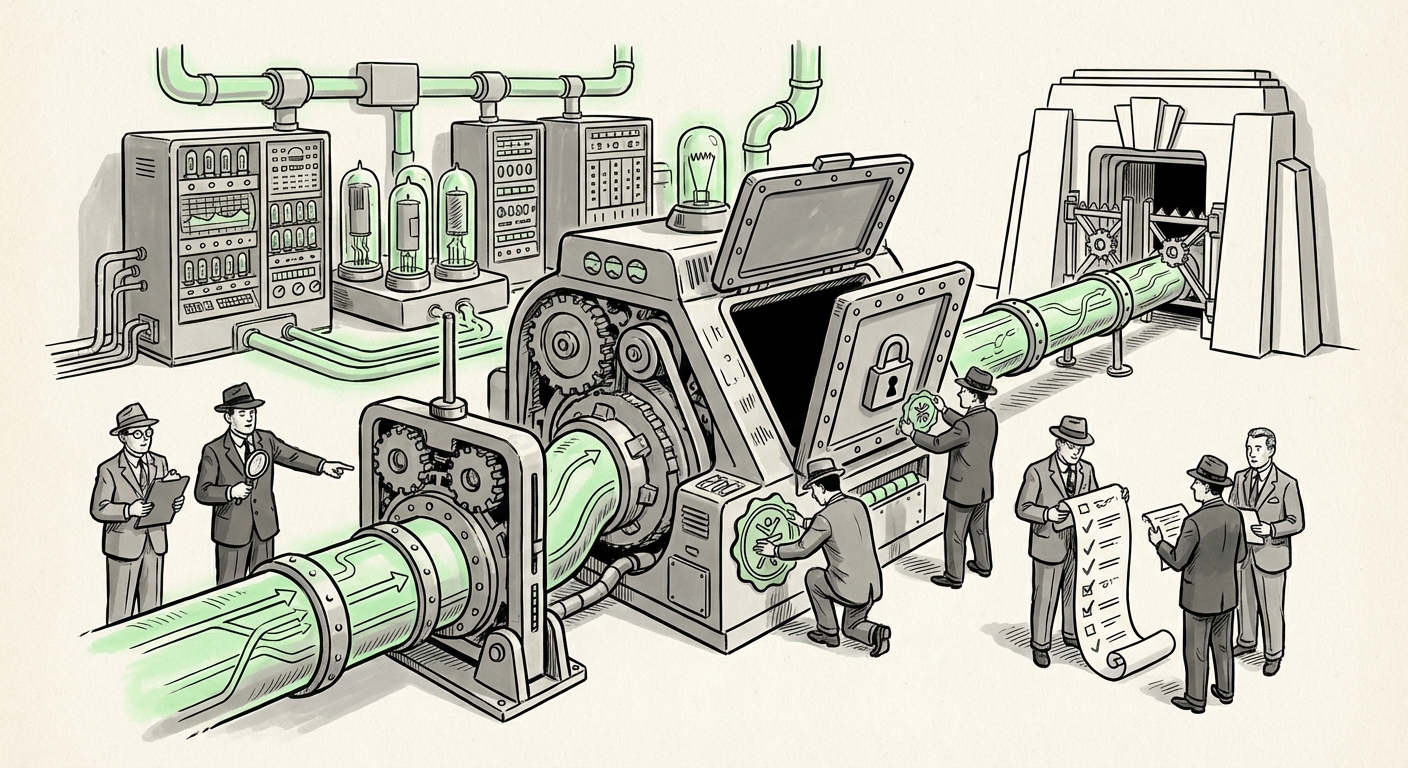

Large social media platforms like X are not just communication tools; they are the world's largest, richest repositories of human behavior, language, and interaction. This data fuels the massive Large Language Models (LLMs) and sophisticated recommendation engines that define our current AI landscape. When law enforcement descends upon these digital fortresses, it sends a clear message: Data governance is no longer negotiable, and the technological infrastructure powering AI must answer to national laws.

The Convergence of Legal Pressure and AI Dependence

To understand the gravity of this moment, we must overlay the immediate legal challenge onto the broader technological reality. AI models, especially sophisticated ones, are inherently data-hungry. They learn to communicate, reason, and even "see" by analyzing petabytes of text, images, and interactions scraped from the open (and sometimes less-than-open) web, often aggregated via platforms like X.

The French investigation is a flashpoint where these two forces meet:

- The Demand for Data Access: Prosecutors require access to specific data logs related to user behavior and content moderation decisions to investigate potential criminal activity.

- The Platform's Defense: X must balance compliance with local judicial orders against its established data privacy policies, ownership structures, and commitments to user data protection (often dictated by US laws or its own corporate stance).

This balancing act is now the defining challenge for any company training models destined for global markets. We are moving from a philosophical debate about AI ethics to a concrete, enforceable mandate about where data resides and who can inspect it.

The Shadow of the DSA: A New Regulatory Superpower

The most significant context surrounding this raid is the European Union's **Digital Services Act (DSA)**. This sweeping regulation fundamentally rewrites the rulebook for digital intermediaries operating within the EU. Platforms designated as Very Large Online Platforms (VLOPs), meaning those with more than 45 million average monthly active users in the EU, such as X, face stringent new obligations designed to mitigate systemic risks to fundamental rights, including safety and data protection.

For AI analysts, the DSA is crucial because it demands algorithmic transparency and verifiable risk assessment. If a platform's AI moderation system fails to catch child sexual abuse material (CSAM) or allows widespread data abuses, the regulator is not limited to issuing a fine; it can demand radical changes to the platform's core systems.
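To put the fine side in proportion: the DSA caps fines at 6% of a provider's total worldwide annual turnover and periodic penalty payments at 5% of average daily worldwide turnover. The sketch below turns those caps into numbers. The turnover figure is hypothetical, and dividing annual turnover by 365 to get a daily average is a simplifying assumption.

```python
# Upper bounds on DSA penalties. The percentages follow the DSA's caps; the
# 365-day average and the turnover figure in the example are assumptions.

def max_dsa_fine(annual_worldwide_turnover: float) -> float:
    """Ceiling on a single DSA fine: 6% of total worldwide annual turnover."""
    return 0.06 * annual_worldwide_turnover


def max_daily_penalty(annual_worldwide_turnover: float, days_in_year: int = 365) -> float:
    """Ceiling on a periodic penalty payment per day: 5% of average daily turnover."""
    average_daily_turnover = annual_worldwide_turnover / days_in_year
    return 0.05 * average_daily_turnover


if __name__ == "__main__":
    turnover = 3_000_000_000.0  # hypothetical: EUR 3 billion annual turnover
    print(f"Fine ceiling:          EUR {max_dsa_fine(turnover):,.0f}")
    print(f"Daily penalty ceiling: EUR {max_daily_penalty(turnover):,.0f}")
```

For the hypothetical EUR 3 billion turnover, this gives a fine ceiling of EUR 180,000,000 and a daily penalty ceiling of roughly EUR 410,959.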

Much of the discussion around DSA compliance centers on how platforms must prove they have adequately addressed these risks. The French raid adds a criminal dimension. It is a national judicial action, separate from the European Commission's own DSA enforcement, but it shows that European authorities are prepared to use judicial means where automated systems have allegedly failed to keep illegal content off a platform. This forces AI teams to shift focus from sheer model capability to auditable safety and compliance.

(For deeper context on how these rules apply: coverage of DSA compliance challenges for social media shows that the regulatory burden is already forcing tangible engineering changes at the largest platforms operating in Europe.)

The Achilles' Heel of AI: Moderation Failure

The specific nature of the allegations—child abuse—cuts to the core weakness of automated moderation. While AI excels at identifying known patterns (like basic spam or obvious copyright infringement), identifying nuanced, evolving, or deliberately obscured harmful content remains incredibly difficult.

Current AI systems often rely on training data that has already been cleaned or labeled. When they encounter genuinely novel forms of illicit content, these models frequently miss it, and the case falls to human review. When human review is slow, understaffed, or overwhelmed, as critics often allege at large platforms, the gaps become criminal entry points.
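The split between known and novel material can be sketched as a triage function. Known items are matched against a list of hashes of previously identified files. Anything else gets a classifier score, and uncertain scores go to a human. This is a minimal sketch under stated assumptions: the hash list, the two thresholds, and the `triage` function are hypothetical, and SHA-256 stands in for the perceptual hashing (such as PhotoDNA) that production systems typically use.

```python
import hashlib
from dataclasses import dataclass

# Hypothetical list of hashes of previously identified illegal files. SHA-256 is
# used only to keep the sketch self-contained; real systems use perceptual hashes.
KNOWN_BAD_HASHES: set[str] = set()

REVIEW_THRESHOLD = 0.5   # hypothetical score at which a human must look
BLOCK_THRESHOLD = 0.95   # hypothetical score high enough to block pending review


@dataclass
class Decision:
    action: str  # "block", "block_and_review", "human_review", or "allow"
    reason: str


def triage(content: bytes, classifier_score: float) -> Decision:
    """Route an upload: known material is blocked, uncertain material goes to people."""
    digest = hashlib.sha256(content).hexdigest()
    if digest in KNOWN_BAD_HASHES:
        # Known pattern: exact match against the hash list.
        return Decision("block", f"hash match {digest[:12]}")
    if classifier_score >= BLOCK_THRESHOLD:
        return Decision("block_and_review", f"classifier score {classifier_score:.2f}")
    if classifier_score >= REVIEW_THRESHOLD:
        # Novel or ambiguous content: the model is unsure, so a human decides.
        return Decision("human_review", f"classifier score {classifier_score:.2f}")
    return Decision("allow", f"classifier score {classifier_score:.2f}")


if __name__ == "__main__":
    KNOWN_BAD_HASHES.add(hashlib.sha256(b"previously identified file").hexdigest())
    print(triage(b"previously identified file", 0.1).action)  # block
    print(triage(b"never seen before", 0.7).action)           # human_review
```

The design point is that the human-review branch is the safety net for everything the hash list cannot know about; if that queue is understaffed, the middle band of scores is exactly where harmful material waits.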

This is the technical reality behind the headlines. Prosecutors are not just investigating platform *policy*; they are investigating the efficacy of the technology deployed. This means that future AI development must embed verifiable safety nets. It’s no longer enough to have a powerful LLM; one must have a robust, legally defensible system for detecting and preventing the dissemination of illegal material ingested or hosted on the platform.

(Further reading on this challenge: investigations into AI-driven content moderation failures in child safety often describe how adversarial attacks or novel encoding tricks let harmful material slip past automated defenses, showing that the technology is not yet mature enough for full autonomy in high-stakes areas.)
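Why small changes defeat exact matching can be shown in a few lines. A cryptographic hash changes completely when a single pixel changes, while a perceptual hash such as the simple average hash (aHash) barely moves. The 8x8 synthetic image and the distance threshold below are assumptions for illustration; real attacks go further and craft perturbations that push perceptual hashes past their thresholds too.

```python
import hashlib


def to_bytes(image: list[list[int]]) -> bytes:
    """Flatten an 8-bit grayscale image (rows of 0-255 values) into raw bytes."""
    return bytes(p for row in image for p in row)


def average_hash(image: list[list[int]]) -> int:
    """aHash: one bit per pixel, set when the pixel is brighter than the image mean.

    Assumes the image has already been downscaled to 8x8, giving a 64-bit hash.
    """
    flat = [p for row in image for p in row]
    mean = sum(flat) / len(flat)
    bits = 0
    for p in flat:
        bits = (bits << 1) | (1 if p > mean else 0)
    return bits


def hamming(a: int, b: int) -> int:
    """Number of differing bits between two hashes."""
    return bin(a ^ b).count("1")


def is_near_duplicate(a: int, b: int, max_distance: int = 5) -> bool:
    # max_distance is a hypothetical tuning threshold.
    return hamming(a, b) <= max_distance


# Synthetic 8x8 gradient (values 0..252) and a copy with one pixel nudged.
original = [[(r * 8 + c) * 4 for c in range(8)] for r in range(8)]
tweaked = [row[:] for row in original]
tweaked[0][0] += 3

if __name__ == "__main__":
    same_digest = (hashlib.sha256(to_bytes(original)).digest()
                   == hashlib.sha256(to_bytes(tweaked)).digest())
    print("SHA-256 match:", same_digest)
    print("aHash distance:", hamming(average_hash(original), average_hash(tweaked)))
```

Here the SHA-256 digests differ, while the aHash distance is 0, so the tweaked copy is still caught as a near duplicate by the perceptual check but missed by the exact one.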

The Sovereignty Imperative: Where Your Data Lives Matters

Perhaps the most profound long-term implication for AI infrastructure is the rise of **data sovereignty**. The global, borderless infrastructure that Silicon Valley built is being challenged by national interests demanding control over citizen data.

When French prosecutors raid a local office, they are asserting French jurisdiction over data concerning French citizens. This friction—between the cloud-native design of platforms and the territorial demands of nation-states—is accelerating.

For AI businesses, this means that a model trained on data ostensibly "available globally" may face severe legal jeopardy if that data originated from a jurisdiction that requires local residency or specific access protocols. We are seeing a fragmentation of the global data layer:

- Local LLMs: Governments may mandate that certain sensitive data (especially national security, health, or child safety data) be processed and stored exclusively within national borders.

- Compliance Overhead: AI development teams must now design complex federated learning frameworks or use advanced privacy-preserving techniques to train models without physically moving sensitive user data across jurisdictions.
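The core idea of federated learning is that each jurisdiction trains on its own data and shares only model parameters. A minimal sketch of the Federated Averaging (FedAvg) server step follows, assuming a deliberately tiny "model" (a single mean estimate) and hypothetical regional datasets.

```python
# Toy FedAvg round. The "model" is a single number (a mean estimate), and the
# regional datasets are hypothetical.

def local_update(samples: list[float]) -> tuple[float, int]:
    """Train locally: the least-squares fit of a constant is the sample mean."""
    return sum(samples) / len(samples), len(samples)


def federated_average(updates: list[tuple[float, int]]) -> float:
    """FedAvg server step: average parameters weighted by each client's sample count."""
    total = sum(n for _, n in updates)
    return sum(w * n for w, n in updates) / total


regional_data = {  # raw samples stay in their region; only (weight, count) is shared
    "FR": [2.0, 4.0, 6.0],
    "DE": [1.0, 3.0],
    "US": [10.0],
}

if __name__ == "__main__":
    updates = [local_update(xs) for xs in regional_data.values()]
    print("federated estimate:", federated_average(updates))
    all_samples = [x for xs in regional_data.values() for x in xs]
    print("centralized estimate:", sum(all_samples) / len(all_samples))
```

For this toy model, one round reproduces the centralized answer exactly (26/6 in both cases). With real neural networks, FedAvg runs many rounds of local training and only approximates centralized training, and shared parameters can still leak information, which is why techniques such as secure aggregation or differential privacy are often layered on top.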

This trend directly impedes the efficiency gains that massive, centralized cloud training typically offers. It forces the industry to embrace a more nuanced, geographically segmented approach to data stewardship.
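A geographically segmented pipeline needs, at minimum, a rule that decides where each record may live and whether it may move. The sketch below assumes a hypothetical policy table keyed by user country and data category; the categories, region names, and rules are illustrative, not a statement of any country's actual law.

```python
from dataclasses import dataclass

# Hypothetical residency policy: (user country, data category) -> required region.
# These entries are illustrative assumptions, not real legal requirements.
RESIDENCY_RULES: dict[tuple[str, str], str] = {
    ("FR", "child_safety"): "eu-west-paris",
    ("FR", "health"): "eu-west-paris",
    ("DE", "health"): "eu-central-frankfurt",
}
DEFAULT_REGION = "global-central"  # hypothetical region for unrestricted data


@dataclass(frozen=True)
class Record:
    user_country: str
    category: str
    payload: bytes


def storage_region(record: Record) -> str:
    """Where a record must be stored under the policy table."""
    return RESIDENCY_RULES.get((record.user_country, record.category), DEFAULT_REGION)


def can_transfer(record: Record, destination_region: str) -> bool:
    """A record pinned by a residency rule may only be moved within its assigned region."""
    pinned = RESIDENCY_RULES.get((record.user_country, record.category))
    return pinned is None or pinned == destination_region


if __name__ == "__main__":
    report = Record("FR", "child_safety", b"...")
    print(storage_region(report))                  # eu-west-paris
    print(can_transfer(report, "global-central"))  # False
```

Checking `can_transfer` before every cross-region copy, including copies into a training dataset, is what makes the segmentation enforceable rather than aspirational; it is also where the efficiency cost of centralized training shows up.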

(To track this geopolitical shift: analysis of how data sovereignty laws affect global tech infrastructure illustrates a worldwide trend in which countries use regulation to keep their citizens' digital presence within their legal reach.)

From Immunity to Accountability: The Global Liability Shift

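In the US, Section 230 of the Communications Decency Act has long shielded platforms from most liability for content posted by users. Much of the rest of the developed world, especially Europe, never granted that kind of blanket immunity and is now tightening the conditions further. Under the EU's hosting exemption, carried over from the e-Commerce Directive into the DSA, a platform is protected only until it gains actual knowledge of illegal content and fails to remove it expeditiously, and the DSA layers proactive due-diligence duties on top. Regulators increasingly view platforms as active distributors, not passive hosts.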

If the French enforcement action succeeds, it will further cement this shift away from platform immunity, reinforcing the obligations the DSA already imposes. If X is found to have failed in its duty to moderate known illegal content effectively, the resulting penalties could set precedents for every future platform.

What does this mean for the future AI builder?

- Inherent Liability: Any tool that ingests user content—whether it’s a consumer chatbot, a social listening tool, or a new recommendation engine—must now bake in preemptive legal defense mechanisms.

- Transparency as Currency: The ability to prove *how* an AI made a decision (e.g., "Why did our system fail to flag this image?") becomes as important as the decision itself.
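One way to make "Why did our system fail to flag this image?" answerable is to store, for every automated decision, the inputs that produced it: model version, score, and threshold. A minimal sketch; the `DecisionRecord` fields, the `decide` and `explain` helpers, and the model version string are all hypothetical.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class DecisionRecord:
    content_id: str
    model_version: str   # which model produced the score
    score: float         # model output for this item
    threshold: float     # threshold in force at decision time
    action: str          # "flag" or "allow"
    decided_at: str      # UTC timestamp, ISO 8601


def decide(content_id: str, score: float, threshold: float, model_version: str) -> DecisionRecord:
    """Make the decision and capture everything needed to explain it later."""
    action = "flag" if score >= threshold else "allow"
    return DecisionRecord(content_id, model_version, score, threshold, action,
                          datetime.now(timezone.utc).isoformat())


def explain(record: DecisionRecord) -> str:
    """Human-readable reason, reconstructed only from the stored record."""
    relation = ">=" if record.action == "flag" else "<"
    return (f"{record.content_id}: {record.action} because score {record.score:.2f} "
            f"{relation} threshold {record.threshold:.2f} (model {record.model_version})")


if __name__ == "__main__":
    rec = decide("img-123", 0.41, 0.60, "moderation-model-v7")
    print(explain(rec))
    print(json.dumps(asdict(rec)))  # what would be persisted for later audit
```

The first line printed is `img-123: allow because score 0.41 < threshold 0.60 (model moderation-model-v7)`. Recording the threshold alongside the score matters because thresholds get tuned over time, and an auditor needs the value that was in force when the decision was made.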

This is the global move toward platform accountability, in which the legal shields that protected social media for two decades are finally being tested under the intense scrutiny of real-world regulatory and criminal enforcement.

(Examining the contrast: legal commentary on alternatives to Section 230 for platform liability over illegal content highlights the growing legal risk premium of operating internationally, outside the US legal sphere.)

Practical Implications: Engineering for Accountability

This convergence of regulatory pressure and technological capability means that businesses involved in deploying AI systems must adapt immediately. The focus shifts from speed to security and compliance.

For AI Developers and Engineers:

You must integrate "Safety by Design." This is not just an ethical footnote; it is an engineering requirement. Systems must be built with mandatory audit trails for moderation decisions, verifiable data provenance logs, and fail-safes that trigger mandatory human review for high-risk content areas (like child safety or disinformation).
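An audit trail is only useful to a regulator if it is hard to alter quietly. A common pattern is a hash chain, where each entry stores the hash of the previous one, so editing any past entry breaks verification. This is a sketch under assumptions: the event fields are hypothetical, and in practice the latest hash would also be anchored outside the system (for example, shared with an auditor), since anyone able to rewrite the whole chain could recompute every hash.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _entry_hash(prev_hash: str, event: dict) -> str:
    # Canonical JSON (sorted keys) so the same content always hashes the same way.
    body = json.dumps({"prev": prev_hash, "event": event}, sort_keys=True)
    return hashlib.sha256(body.encode("utf-8")).hexdigest()


def append_entry(log: list[dict], event: dict) -> None:
    """Append a moderation event, chaining it to the previous entry's hash."""
    event = json.loads(json.dumps(event))  # deep copy so later caller edits can't alias
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append({"prev": prev_hash, "event": event, "hash": _entry_hash(prev_hash, event)})


def verify(log: list[dict]) -> bool:
    """Recompute the chain; any edited, reordered, or removed entry makes this False."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev"] != prev_hash or entry["hash"] != _entry_hash(prev_hash, entry["event"]):
            return False
        prev_hash = entry["hash"]
    return True


if __name__ == "__main__":
    log: list[dict] = []
    append_entry(log, {"content_id": "img-1", "action": "human_review"})
    append_entry(log, {"content_id": "img-1", "action": "block", "reviewer": "r-42"})
    print(verify(log))                      # True
    log[0]["event"]["action"] = "allow"     # quietly rewrite history
    print(verify(log))                      # False
```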

For Business Leaders and Investors:

The risk profile of investing in large, user-generated content platforms has fundamentally changed. Due diligence must now heavily weigh jurisdictional risk. A platform that operates primarily in the EU must engineer its data infrastructure to be instantly auditable by local regulators, even if that means sacrificing some operational simplicity.

For Society:

While these regulatory crackdowns cause immediate friction for tech giants, the long-term benefit should be a safer digital public square. If successful, these enforcement actions push AI governance toward systems that are demonstrably better at protecting vulnerable populations, aligning technological capability with societal values.

Conclusion: The Dawn of Accountable Algorithms

The raid on X in Paris is a powerful symbol. It represents the moment the global digital commons pushed back against the platforms that aggregate its data. AI development, currently predicated on near-limitless access to public interaction, now faces jurisdictional roadblocks, accountability mandates, and verifiable safety thresholds.

The future of AI will not be defined solely by the next breakthrough in neural network architecture, but by the robustness and auditability of the data pipelines that feed those architectures. Compliance is the new frontier of innovation. In the coming decade, the most valuable AI companies will be those that successfully build powerful models within the strict, localized boundaries set by global regulators.