Musk's Cosmic Gambit: Why Merging xAI into SpaceX Signals the Future of Integrated AI and Space Exploration

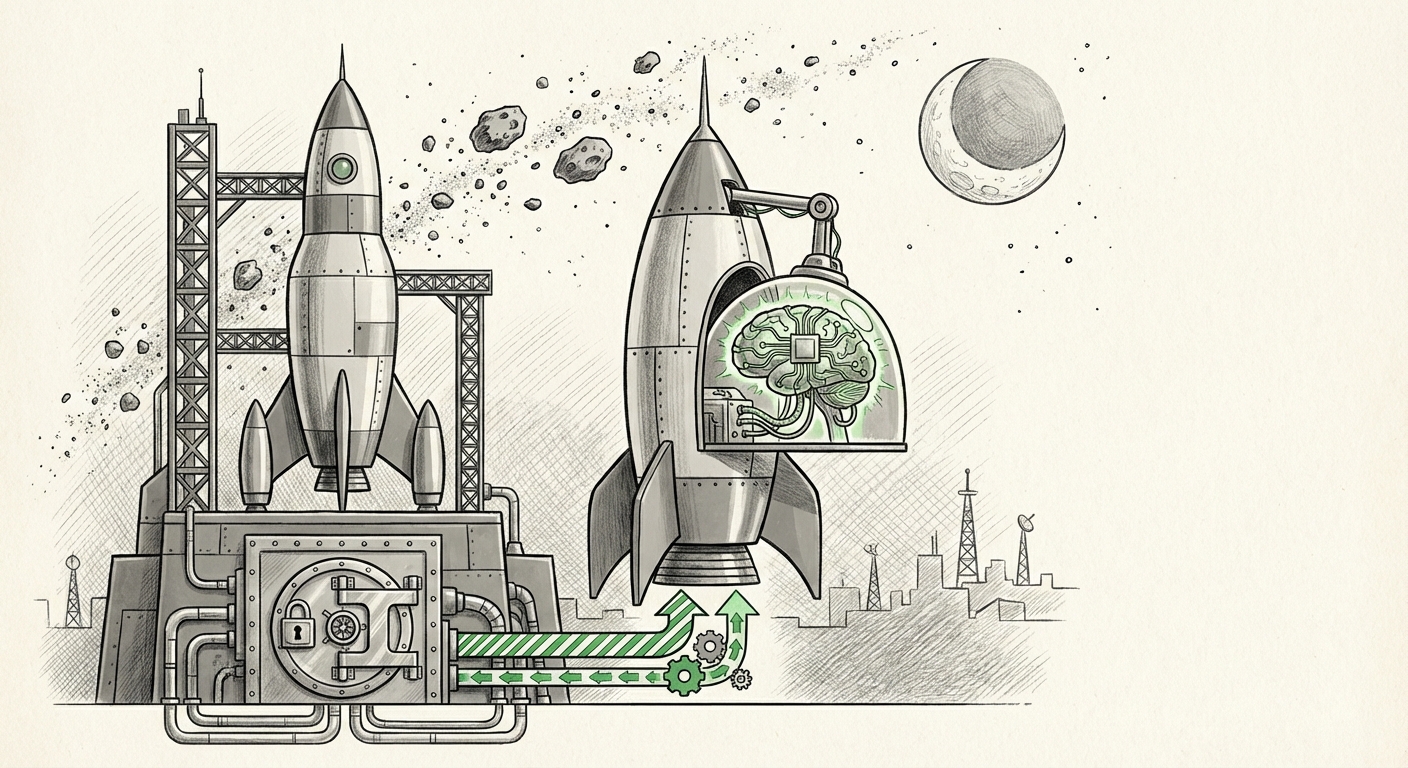

The technology world has been buzzing over Elon Musk’s latest structural maneuver: absorbing the money-losing AI venture, xAI, directly into the aerospace giant, SpaceX. On the surface, this looks like a drastic measure to shield an expensive, nascent AI project from immediate public scrutiny ahead of a potential SpaceX IPO. However, when analyzed through the lens of Musk’s interconnected technological empire, this merger suggests a profound thesis about the future: Artificial General Intelligence (AGI) must be developed and deployed in extreme, data-scarce environments like space to achieve true robustness.

This move is more than just financial engineering; it is a declaration about the next frontier of AI development. By intertwining the high-risk, high-reward world of foundational AI models (like xAI’s Grok) with the high-capital, high-stakes reality of space travel and satellite operations (SpaceX), Musk is building a feedback loop unlike any other currently operating in Silicon Valley.

The Dual Drivers: Finance Meets Physics

To understand this merger, we must peel back two layers: the economic necessity and the technical vision.

1. The Economic Realities: De-Risking for the IPO

xAI, like its contemporaries, requires enormous capital expenditure, driven primarily by demand for specialized computing power: the GPUs needed to train very large language models. Keeping pace with well-funded rivals like OpenAI demands multi-billion dollar investments in training compute before the business generates meaningful revenue. For a pre-revenue entity, this presents a constant existential threat.

By merging xAI into SpaceX, Musk achieves several immediate financial benefits, the kind typically sought when a pre-IPO startup is folded into an established company:

- Capital Pooling: xAI instantly gains access to SpaceX’s deep financial reserves, stability derived from existing revenue streams (like Starlink subscriptions and launch contracts), and the ability to absorb operational losses without jeopardizing the core mission.

- Infrastructure Sharing: While AI compute clusters are specialized, integrating xAI allows for unified procurement strategies, shared real estate costs, and collaborative hiring for highly specialized engineering talent.

- IPO Clean-Up: When SpaceX eventually pursues a mega-IPO, a unified entity presents a clearer, albeit more complex, growth narrative to investors, potentially hiding short-term AI development losses within the overall robust valuation of the space segment. This structuring mirrors previous maneuvers seen in the planning phases for Starlink’s potential separation.

For the financial audience, this is textbook risk management, leveraging a proven, cash-generating asset to nurture a future, world-changing, but currently unprofitable one.

2. The Technical Imperative: Scaling AI in the Void

Musk’s stated rationale—that "AI can only scale properly in space"—is the more visionary, and perhaps more compelling, aspect. This claim is rooted in the fundamental challenges of creating robust, generalized intelligence.

On Earth, AI operates in a relatively predictable, data-rich environment. Space, however, is the ultimate testbed for autonomy. Consider the challenges AI faces in autonomous space missions and deep-space computing:

- Latency and Autonomy: A one-way signal to Mars takes roughly 3 to 22 minutes, depending on where the two planets are in their orbits. Any complex operation (a navigation correction, a decision during a landing sequence, or a failure response) cannot wait for confirmation from Earth. This necessitates AI that can reason, adapt, and correct course on its own, in real time.

- Data Scarcity and Diversity: Spacecraft operate in harsh and varied conditions, including vacuum, radiation exposure, and tight power budgets. An AI trained exclusively on Earth-based simulations is likely to fail under real extraterrestrial stress. Integrating xAI’s development with Starlink’s massive orbital network and future deep-space missions forces the AI to learn from radically different data sets.

- Embodied Intelligence: If the goal is AGI, it needs to interact with the physical world across many domains. SpaceX provides the perfect platform: launching vehicles, managing thousands of satellites, and eventually, operating robotic systems on other planets. This integration moves AI out of the purely digital realm and into embodied, mission-critical action.
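To put the latency point in numbers, here is a minimal sketch of the light-time arithmetic. The two Earth-Mars distances are approximate, rounded figures chosen for illustration, and the function names are hypothetical.

```python
# Illustrative one-way light-time calculation for Earth-Mars signals.
# The distances below are approximate, rounded values (assumptions).

SPEED_OF_LIGHT_KM_S = 299_792.458  # exact by definition of the metre

MARS_CLOSEST_KM = 54.6e6    # roughly the closest approach
MARS_FARTHEST_KM = 401.0e6  # roughly the greatest separation


def one_way_delay_minutes(distance_km: float) -> float:
    """Return the one-way signal delay in minutes for a given distance."""
    return distance_km / SPEED_OF_LIGHT_KM_S / 60.0


def round_trip_delay_minutes(distance_km: float) -> float:
    """Return the delay for a command plus its confirmation, in minutes."""
    return 2.0 * one_way_delay_minutes(distance_km)


if __name__ == "__main__":
    cases = [("closest", MARS_CLOSEST_KM), ("farthest", MARS_FARTHEST_KM)]
    for label, km in cases:
        print(f"{label}: one-way {one_way_delay_minutes(km):.1f} min, "
              f"round trip {round_trip_delay_minutes(km):.1f} min")
```

Under these assumed distances, the one-way delay works out to roughly 3 minutes at closest approach and roughly 22 minutes at the greatest separation. A command-and-confirm cycle takes twice that, which is far too slow for a landing sequence.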

In simple terms: An AI that successfully manages a complex, self-healing satellite constellation during solar flares and autonomously lands a Starship on Mars is inherently more intelligent and reliable than one whose primary function is writing marketing copy or answering simple queries.

What This Means for the Future of AI Development

This merger signals a critical divergence in the AI landscape, moving beyond the centralized, cloud-based LLM race.

The Rise of Mission-Critical AI

The immediate future will see a bifurcation. On one side, you have generalized, broad-application LLMs optimized for consumer interaction and data analysis. On the other, catalyzed by this merger, will be Mission-Critical AI (MCAI). MCAI is not about conversational fluency; it is about verifiable, safe, and extremely high-stakes decision-making under duress.

For businesses, this means that the next wave of AI investment won't just be in model size, but in deployment context. Companies involved in infrastructure, logistics, defense, and energy will increasingly seek to anchor their specialized AI development within their core operational entities, rather than treating AI as a separate software service. The financial models supporting these endeavors will need to align, often requiring absorption into stable operating companies, much like xAI into SpaceX.

The Nexus of Compute and Control

The compute requirements for training Grok are staggering. The compute required to run a Martian colony’s life support system, by contrast, is unforgiving: it leaves no margin for failure. This merger suggests Musk is creating a pipeline where the vast compute resources secured for generalized AI training can be redirected or repurposed for ultra-efficient, low-latency deployment in space systems. It closes the loop between theory and application.

This synergy might lead to breakthroughs in Federated Learning in Space, where thousands of Starlink satellites become distributed nodes, constantly updating and refining the core xAI model based on local orbital conditions, all while feeding crucial real-world environmental data back to the central intelligence.
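For readers unfamiliar with the idea, federated learning can be illustrated with a toy version of federated averaging (FedAvg). The sketch below is a generic illustration only, not a description of any actual xAI or SpaceX system. The nodes, their data, the tiny linear model, and the names `local_update` and `federated_average` are all hypothetical.

```python
# Minimal federated averaging (FedAvg) sketch in pure Python.
# Every name and data value here is hypothetical and for illustration only.

from typing import List, Sequence, Tuple


def local_update(weights: Sequence[float],
                 samples: Sequence[Tuple[float, float]],
                 lr: float = 0.1) -> List[float]:
    """Take one gradient step on y = w0 + w1 * x using only local samples."""
    w0, w1 = weights
    n = len(samples)
    # Gradients of the mean squared error with respect to w0 and w1.
    g0 = sum(2 * (w0 + w1 * x - y) for x, y in samples) / n
    g1 = sum(2 * (w0 + w1 * x - y) * x for x, y in samples) / n
    return [w0 - lr * g0, w1 - lr * g1]


def federated_average(updates: Sequence[Sequence[float]],
                      sample_counts: Sequence[int]) -> List[float]:
    """Combine node weights, weighting each node by its sample count."""
    total = sum(sample_counts)
    dim = len(updates[0])
    return [sum(u[i] * c for u, c in zip(updates, sample_counts)) / total
            for i in range(dim)]


if __name__ == "__main__":
    # Three hypothetical nodes, each holding private samples of y = 1 + 2x.
    node_data = [
        [(0.0, 1.0), (1.0, 3.0)],
        [(2.0, 5.0)],
        [(-1.0, -1.0), (0.5, 2.0), (1.5, 4.0)],
    ]
    global_weights = [0.0, 0.0]
    for _ in range(200):
        # Raw samples never leave a node; only updated weights are shared.
        updates = [local_update(global_weights, d) for d in node_data]
        counts = [len(d) for d in node_data]
        global_weights = federated_average(updates, counts)
    print(global_weights)  # approaches [1.0, 2.0]
```

The design point is that each node shares only model weights, never its raw observations, which matters when downlink bandwidth is scarce. A real constellation would also have to handle dropped nodes, stale updates, and far larger models, none of which this sketch attempts.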

Practical Implications for Businesses and Society

The consolidation of AI under the SpaceX umbrella has wide-ranging implications that stretch far beyond the IPO filing.

For Business Strategists and Investors

The key takeaway is the value of vertical integration in the AI era. Companies that own both the data source (e.g., a proprietary fleet of drones, a unique manufacturing line) and the intelligence layer (the custom AI model) will possess a competitive moat that is very hard to replicate. Analysts tracking Musk’s structural history, including the earlier planning around Starlink’s structure, will note a pattern: **Isolate the high-growth, high-cost ventures under the umbrella of the most valuable asset until the moment of maximum leverage.**

Actionable Insight: Businesses should evaluate whether their AI strategy is siloed (a separate software cost) or integrated (a fundamental part of their core operations). True competitive advantage will favor integration, even if it means absorbing short-term R&D costs into established P&Ls.

For Society: The Ethics of Autonomous Expansion

When AI is directly responsible for maintaining human life support systems millions of miles from Earth, ethical concerns surrounding safety, bias, and alignment become hyper-critical. The merging of these entities concentrates immense power—economic, technological, and existential—into a single organizational structure, albeit one already under intense public scrutiny.

This accelerates the timeline for establishing regulatory frameworks for AGI. If space exploration becomes intrinsically tied to proprietary, generalized AI systems, governance over these systems—who audits them, who owns the decisions they make—becomes a geopolitical issue, not just a commercial one.

The Road Ahead: From Earth-Bound Models to Cosmic Cognition

The xAI/SpaceX merger is not just about optimizing shareholder value; it’s about optimizing intelligence for the hardest environments humanity intends to inhabit. It suggests that the training data for true AGI won't come from scraping the internet; it will come from managing the logistics of building a multi-planetary civilization.

We are moving toward an era where AI development is dictated not just by which lab has the most money, but by which organization has the most challenging, real-world operational environment to test and refine its creations. By placing the bleeding edge of computational learning directly into the operational heart of its space endeavor, Musk is betting that survival in the void is the ultimate stress test for creating intelligence capable of navigating any future challenge.