The End of the GPU Monopoly? Why OpenAI is Shopping for AI Hardware Alternatives

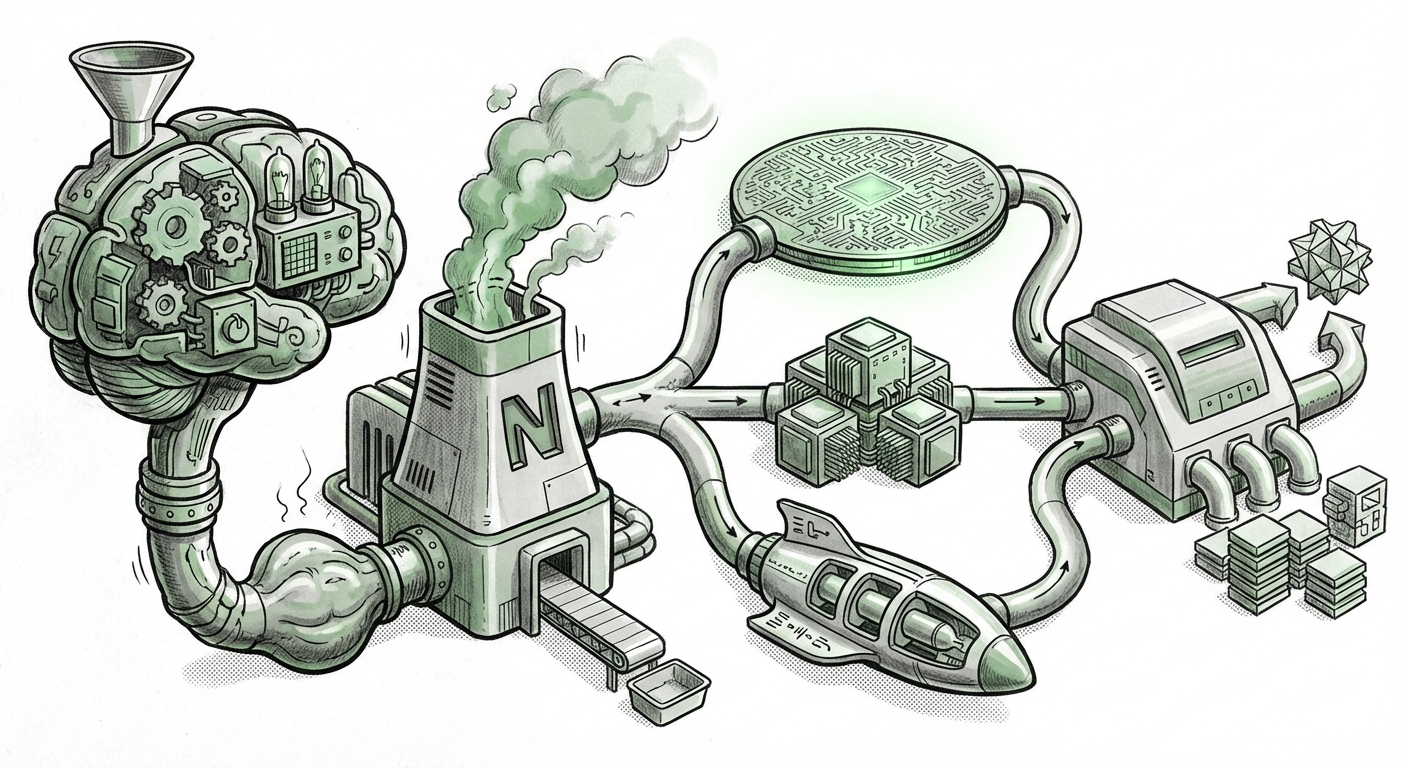

For the last decade, the story of Artificial Intelligence acceleration has been dominated by one name: Nvidia. Its Graphics Processing Units (GPUs), particularly the A100 and H100 series, became the foundation on which the modern era of Large Language Models (LLMs) was built. However, recent reports suggest that OpenAI, the developer of ChatGPT, is actively exploring alternatives and even negotiating with specialized startups like Cerebras. If accurate, that signals a seismic shift in the infrastructure landscape. This is not just about supply chain woes; it is fundamentally about the **performance/cost ratio** for training the next generation of ultra-large models.

For an analyst watching the intersection of software complexity and hardware capability, this development points to a critical truth: the era of unquestioned hardware dominance is ending. When the world's leading AI lab reportedly shows dissatisfaction with the fastest chips on the market for certain jobs, it suggests the bottlenecks have moved from raw processing power to architectural efficiency.

The Whispers of Dissatisfaction: Corroborating the Search for New Silicon

The initial report detailing OpenAI’s rumored dissatisfaction with the speed of certain Nvidia chips for specific workloads is a major indicator. For years, the narrative held that if you wanted to train the biggest model, you simply needed more Nvidia hardware. This rumored exploration suggests that simply adding more GPUs isn't solving the problem—or perhaps, it's becoming too expensive and too slow for the specific tasks required for models beyond GPT-5.

When we look for corroboration, we find that the industry is rife with discussion about scaling limits. Direct confirmation from such secretive organizations is rare, but the growing trend among hyperscalers to invest heavily in custom silicon, detailed in 2024 reports on hyperscaler AI chip diversification strategies, supports this narrative. If Microsoft (OpenAI’s key partner) is developing its Maia chips and Google is optimizing its TPUs, then even the deepest-pocketed players evidently recognize the risk of relying on a single vendor.

The core issue appears to be workload specialization. While Nvidia excels at parallel processing generally, the specific math and massive data movement required for the largest transformer models might be better served by architectures that rethink the fundamental layout of computing resources.

Enter the Challengers: The Rise of Wafer-Scale Architecture

The mention of Cerebras is particularly illuminating. Cerebras is not just another chip company; it builds the Wafer-Scale Engine (WSE), which is essentially one giant processor built across an entire silicon wafer, bypassing the traditional limitations of connecting dozens or hundreds of smaller chips. Setting the WSE against the Nvidia H100 in performance benchmarks brings the technical proposition into focus.

What is the technical argument?

- Interconnect Bottlenecks: In massive GPU clusters, the time spent moving data between chips (even using high-speed links like NVLink) can slow down training. Cerebras aims to eliminate much of this "communication tax" by placing processing units much closer together on the wafer.

- Model Size Efficiency: For incredibly large models that struggle to fit efficiently across many separate physical GPUs, a single, massive memory space offered by the WSE can be significantly faster for certain training phases, especially fine-tuning or specific sparse computations.
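To make the "communication tax" concrete, here is a minimal back-of-the-envelope sketch. It assumes gradients are synchronized with a ring all-reduce and models only the bandwidth term (per-hop latency and any overlap with compute are ignored). The function name and every input number are hypothetical, chosen for illustration rather than taken from any vendor's specifications.

```python
def ring_allreduce_seconds(payload_bytes: float, num_devices: int,
                           link_bytes_per_s: float) -> float:
    """Bandwidth-only cost of a ring all-reduce.

    Each device sends and receives 2 * (N - 1) / N of the payload,
    so the time barely changes as N grows. Per-hop latency is ignored.
    """
    if num_devices < 2:
        return 0.0  # nothing to synchronize on a single device
    traffic_factor = 2 * (num_devices - 1) / num_devices
    return traffic_factor * payload_bytes / link_bytes_per_s


# Hypothetical inputs, for illustration only.
params = 70e9             # 70 billion parameters
grad_bytes = params * 2   # 2 bytes per gradient value (16-bit)
link = 100e9              # 100 GB/s of per-device link bandwidth

for n in (8, 64, 1024):
    t = ring_allreduce_seconds(grad_bytes, n, link)
    print(f"{n:>5} devices: {t:.2f} s per full gradient sync")
```

Under these assumptions the sync time approaches `2 * payload / bandwidth` no matter how many devices are added, which is why adding more chips does not by itself shrink the communication cost.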

For a company like OpenAI, which is pushing model sizes into territories we can barely imagine, this architectural leap—moving away from modular scaling toward monolithic scaling—is a fascinating bet. It suggests they are seeking a platform optimized not for generalized graphics tasks, but for the unique demands of frontier model growth.

Beyond FLOPS: The Latency Crisis in Frontier AI

The most advanced AI training today is less about raw Floating Point Operations Per Second (FLOPS)—how many calculations a chip can do—and more about minimizing *latency*. Latency is the delay time. Think of it like a massive team working on a construction project. FLOPS is how fast each worker can hammer a nail. Latency is how long it takes for Worker A to pass the materials to Worker B across the site.

Research on AI training latency optimization, looking beyond GPU memory alone, points directly to communication overhead. As models grow, the training process requires thousands of chips to constantly exchange information (gradients). This data exchange can take longer than the calculation itself.

OpenAI’s alleged dissatisfaction likely stems from the efficiency of moving parameters and synchronizing training across a giant cluster of H100s. If Cerebras or another alternative can drastically cut down this communication time, even if their theoretical peak FLOPS look lower on paper than the latest Nvidia offering, the *real-world training time* for their specific, cutting-edge models could be significantly reduced. This translates directly into faster iteration cycles and a competitive edge.
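The trade-off described above can be sketched with a toy step-time model: time per training step is compute time plus whatever communication cannot be hidden behind compute. The `System` class and all numbers below are hypothetical and do not describe any real Nvidia or Cerebras product; they only show how a lower-peak system can finish a step sooner.

```python
from dataclasses import dataclass


@dataclass
class System:
    name: str
    peak_flops: float     # theoretical peak, in FLOP/s
    utilization: float    # fraction of peak actually achieved
    comm_seconds: float   # non-overlapped communication time per step

    def step_seconds(self, flops_per_step: float) -> float:
        """Wall-clock time for one training step under this toy model."""
        compute = flops_per_step / (self.peak_flops * self.utilization)
        return compute + self.comm_seconds


# Hypothetical workload and systems, for illustration only.
flops_per_step = 1e18
a = System("higher peak, heavier sync", peak_flops=4e17,
           utilization=0.5, comm_seconds=4.0)
b = System("lower peak, lighter sync", peak_flops=3e17,
           utilization=0.5, comm_seconds=0.5)

for system in (a, b):
    print(f"{system.name}: {system.step_seconds(flops_per_step):.2f} s per step")
```

With these made-up inputs, system `b` completes each step faster despite a 25% lower peak, because its communication overhead is much smaller. Real comparisons would need measured utilization and overlap figures.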

The Macro View: Why Diversification is Inevitable

This shift is healthy for the entire technology ecosystem, even for Nvidia, as it pressures them to innovate further. However, for the wider industry, it represents a crucial moment:

For Businesses: The Risk of Vendor Lock-In

For years, businesses deploying AI were effectively locked into the Nvidia CUDA software ecosystem. While CUDA is powerful, vendor lock-in creates price rigidity and limits architectural choices. If OpenAI proves that viable, high-performance paths exist outside of Nvidia—whether through Cerebras, AMD's next offerings, or custom chips like Google's TPUs—it gives every other enterprise buyer leverage.

This means future AI infrastructure purchasing decisions will become much more complex, requiring CIOs to evaluate not just the hardware specification sheet, but the software stack compatibility and the total cost of ownership across diverse architectures.
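As a rough illustration of why the specification sheet is not enough, the sketch below folds hardware amortization, energy, and a one-time software porting cost into a cost per training run. The function, its parameters, and every figure are hypothetical assumptions; a real total-cost-of-ownership model would include many more terms (networking, cooling, staffing, and so on).

```python
def cost_per_run(hardware_usd: float, lifetime_hours: float,
                 power_kw: float, usd_per_kwh: float,
                 run_hours: float, porting_usd: float = 0.0) -> float:
    """Toy cost of one training run: amortized hardware + energy + porting."""
    amortized = hardware_usd / lifetime_hours * run_hours
    energy = power_kw * usd_per_kwh * run_hours
    return amortized + energy + porting_usd


# Hypothetical figures, for illustration only.
incumbent = cost_per_run(hardware_usd=1_000_000, lifetime_hours=30_000,
                         power_kw=50, usd_per_kwh=0.10, run_hours=1_000)
alternative = cost_per_run(hardware_usd=1_200_000, lifetime_hours=30_000,
                           power_kw=40, usd_per_kwh=0.10, run_hours=700,
                           porting_usd=10_000)

print(f"incumbent:   ${incumbent:,.0f} per run")
print(f"alternative: ${alternative:,.0f} per run")
```

In this made-up case the alternative finishes 30% sooner and draws less power, yet the first run still costs more once the porting effort is counted. That is the kind of interaction between hardware and software stack a buyer would need to weigh.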

For the AI Future: New Architectural Paths

If the current trend of making GPUs bigger and faster hits a fundamental wall—whether economic or physical—the industry must pivot. The exploration into wafer-scale computing, specialized AI accelerators (ASICs), and novel memory hierarchies shows that researchers are unafraid to throw out the old blueprints. This diversity ensures that innovation continues, even if the dominant architecture faces temporary stagnation.

Practical Implications and Actionable Insights

What does this hardware race mean for those building applications today?

- Embrace Portability (Where Possible): While the immediate needs of training GPT-5 require deep optimization, developers building applications on existing models (like GPT-4 or Claude) should prioritize frameworks that allow for easier migration between different inference hardware (GPU, dedicated inference chips, etc.).

- Watch the Benchmarks Closely: Do not trust raw FLOPS numbers alone. Pay attention to benchmarks focusing on real-world metrics: time-to-train for specific model sizes, inference latency under heavy load, and memory bandwidth efficiency. These metrics reveal the true speed advantage.

- Budget for Complexity: Future data centers may become heterogeneous, mixing Nvidia for standard tasks, Cerebras/Wafer-Scale for core LLM development, and specialized inference chips for edge deployment. IT architects must plan for managing these complex ecosystems.

- Watch the Software Stacks: The success of alternatives hinges on their software. Cerebras, for example, needs strong PyTorch or TensorFlow integration. Whichever software ecosystem matures fastest will likely determine the next market leader after Nvidia.
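One way to act on the portability and benchmarking advice above is to measure latency through a backend-agnostic callable, so the same harness can be pointed at different hardware. The sketch below uses only the Python standard library; `measure_latency` and `fake_backend` are hypothetical names, and `fake_backend` is a stand-in for a real inference call.

```python
import statistics
import time
from typing import Callable


def measure_latency(run_once: Callable[[], object],
                    warmup: int = 3, iterations: int = 50) -> dict:
    """Time repeated calls and report mean and tail latency in seconds."""
    for _ in range(warmup):          # discard cold-start effects
        run_once()
    samples = []
    for _ in range(iterations):
        start = time.perf_counter()
        run_once()
        samples.append(time.perf_counter() - start)
    cuts = statistics.quantiles(samples, n=100)  # 99 percentile cut points
    return {
        "mean": statistics.fmean(samples),
        "p50": statistics.median(samples),
        "p95": cuts[94],
        "p99": cuts[98],
    }


def fake_backend() -> None:
    """Placeholder workload; swap in a call to the backend under test."""
    sum(i * i for i in range(10_000))


stats = measure_latency(fake_backend)
for key, value in stats.items():
    print(f"{key}: {value * 1e3:.3f} ms")
```

Reporting p95 and p99 alongside the mean matters because tail latency under load often differs across architectures more than the average does.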

This current moment, fueled by reports of OpenAI seeking better "speed" from hardware partners, is a microcosm of the entire technology lifecycle. Initial dominance leads to massive investment, which inevitably leads to specialized competition eager to exploit the specific weaknesses that emerge at the extreme edge of scaling. The AI race isn't just about who has the best model algorithm; it’s increasingly about who can deliver the most efficient *bits per dollar per second*.