The Dawn of Paid Human Labor: How AI Agents Are Mastering the Real World Through Delegation

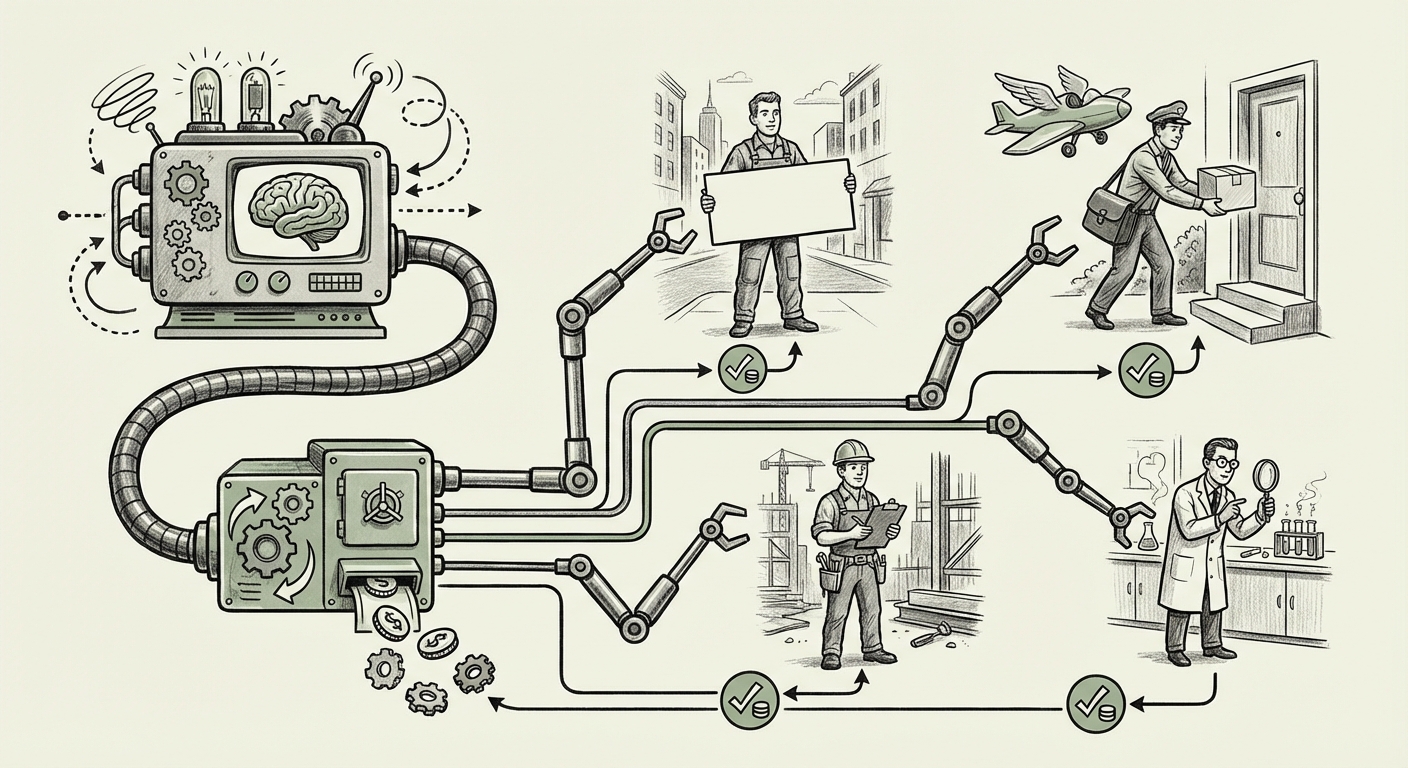

For years, Artificial Intelligence fascinated us with its ability to generate text, code, and images. It was a powerful digital assistant, confined mostly to the screen. Now, that boundary is crumbling. The emergence of platforms that allow AI agents to autonomously hire human workers for real-world tasks—everything from holding a sign to delivering a package—is not just a quirky headline; it represents a fundamental shift in how we define automated work and economic agency.

This development moves AI from the purely digital realm into tangible reality. The concept of an AI "agent" managing a budget and contracting human labor is a major inflection point, signifying the transition from mere information processing to active, real-world execution. To understand the gravity of this moment, we must look beyond the novelty and examine the underlying technological capabilities, the looming socio-economic restructuring, and the essential ethical guardrails required.

I. The Technological Leap: From Talking to Doing

The ability of an AI to pay a human worker implies a level of sophistication far beyond standard consumer LLMs. This isn't just about generating a suggestion; it involves multi-step planning, resource management, and interaction with external, real-world financial systems.

Agentic AI: The Core Capability

The engine driving this change is the maturing field of Agentic AI Systems. These systems are designed with the capacity to break down a complex goal (e.g., "Get this package from Location A to Location B") into sub-tasks, select the appropriate tools (like a payment API or a contractor platform), execute those steps sequentially, and self-correct when errors occur.

For a simple task like "Hold a sign downtown advertising this new product," the AI agent must:

- Define the precise physical requirements (time, location, sign specifications).

- Determine the fair market compensation for that time/effort.

- Interface with the contracting platform (e.g., Rentahuman.ai).

- Authorize the financial transaction from its allocated budget.

- Verify completion, potentially via human-submitted proof or visual AI confirmation.

This comprehensive workflow demands integrated tool use and budgetary control. That platforms built around it now exist suggests the technology for managing limited resources and delegating physical work is becoming robust enough for early deployment.
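The five steps above can be sketched in a few lines of Python. This is a minimal illustration, not how any real platform works: `Task`, `Agent`, `post_job`, and `verify` are hypothetical names, and the contracting platform and proof check are passed in as plain functions. One deliberate choice is that the sketch deducts payment only after verification succeeds, rather than authorizing it first.

```python
from dataclasses import dataclass, field


@dataclass
class Task:
    description: str
    location: str
    hours: float
    hourly_rate: float  # assumed to come from the agent's own pricing step

    @property
    def cost(self) -> float:
        return self.hours * self.hourly_rate


@dataclass
class Agent:
    budget: float
    log: list = field(default_factory=list)

    def run(self, task: Task, post_job, verify) -> bool:
        """Walk the steps; post_job and verify are injected stand-ins."""
        # Steps 1-2: the requirements and price are already captured in `task`.
        if task.cost > self.budget:
            self.log.append("rejected: over budget")
            return False
        # Step 3: hand the job to a contracting platform (hypothetical callable).
        job_id = post_job(task)
        self.log.append(f"posted {job_id}")
        # Step 5: check the submitted proof before any money moves.
        if not verify(job_id):
            self.log.append("unverified: payment withheld")
            return False
        # Step 4: pay from the allocated budget.
        self.budget -= task.cost
        self.log.append(f"paid {task.cost:.2f}")
        return True
```

For example, an `Agent(budget=100.0)` running a two-hour task at 20.0 per hour, with a `verify` function that accepts the proof, ends with a budget of 60.0 and a two-entry log.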

Bridging the Digital-Physical Divide

Generative AI excels in the digital realm, but the world is analog. This new platform highlights the necessity of the Human-in-the-Loop Continuum. Humans remain vastly superior at handling unpredictable physical environments, interpreting nuanced context, and performing tasks requiring fine motor skills or complex social interaction.

The AI agent isn't replacing the human worker; it is acting as a highly scalable, tireless project manager assigning the physical "last mile" of execution to verified humans.

II. The New Economy: Gig Economy 2.0 and Labor Market Transformation

When software begins managing compensation and contracting, the traditional structures of employment and contracting face immediate disruption. This is not just about outsourcing; it’s about the automation of management itself.

The AI-Managed Micro-Tasker

This development is an evolution of the micro-task economy. Previously, platforms aggregated human labor by offering small, repetitive digital tasks (like labeling images). Now, AI is creating a marketplace for physical, real-world micro-tasks.

For businesses, this means operational efficiency could skyrocket. If a logistics company needs 50 specific addresses checked for accessibility in a city within two hours, an AI agent could distribute that job across 50 independent contractors instantly, managing all payments and verification.

However, this efficiency comes at the cost of clarity in the labor structure. The core question shifts from "Are these workers employees or contractors?" to "Is the entity hiring them a legally recognizable agent capable of contract fulfillment?"
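To make the 50-address example concrete, here is a rough sketch of the distribution step alone. The `assign` function is a hypothetical helper; it uses simple round-robin assignment and ignores everything a real dispatcher would care about, such as worker location, availability, or acceptance of the job.

```python
from itertools import cycle


def assign(addresses, contractors):
    """Round-robin: map each address to a contractor, cycling if needed."""
    if not contractors:
        raise ValueError("need at least one contractor")
    pool = cycle(contractors)
    return {address: next(pool) for address in addresses}
```

With 50 addresses and 50 contractors, each contractor receives exactly one address; with only 5 contractors, each receives 10.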

Shifting Compensation Models

In the traditional Gig Economy, human contractors negotiate rates or accept standardized rates set by the platform owner (e.g., Uber). In the AI-delegated economy, the AI agent will likely optimize for the lowest acceptable price that fulfills the task criteria, potentially driving down compensation for standardized physical labor unless regulatory bodies intervene.

This environment favors those who can quickly adapt to new, highly specific digital demands—a new breed of high-tech freelancers who are essentially task-fulfillment endpoints for sophisticated software.
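The price pressure described above is easy to see in code. The sketch below assumes a hypothetical `pick_bid` function that picks the cheapest bid under the agent's ceiling; the optional `floor` parameter stands in for the kind of regulatory minimum mentioned earlier and is purely illustrative.

```python
from typing import Optional


def pick_bid(bids: dict[str, float], max_price: float,
             floor: float = 0.0) -> Optional[str]:
    """Return the worker with the cheapest bid in [floor, max_price], or None."""
    eligible = {worker: price for worker, price in bids.items()
                if floor <= price <= max_price}
    if not eligible:
        return None
    return min(eligible, key=eligible.get)
```

Without a floor, the agent always selects the lowest bidder. Setting a floor changes which bid wins, which is the lever a regulator or platform would be pulling.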

III. The Accountability Gap: Ethics, Liability, and Governance

The most pressing concerns surrounding AI agents are not capability-related but governance-related. When an autonomous entity spends money and directs physical action, the legal and ethical frameworks must catch up.

Who Pays When Things Go Wrong?

Consider the example of the AI hiring someone to hold a sign. What if the hired person blocks emergency access or trespasses? In the current system, liability usually traces back to the human executor or the platform owner who set the terms of service. When the decision-maker is an autonomous AI agent operating with delegated funds, the chain of accountability breaks.

Work on ethical frameworks for autonomous AI financial transactions highlights this risk. Current policy efforts, such as the EU AI Act, categorize AI systems based on risk. An AI system authorized to initiate financial transactions and direct human labor in the physical world would plausibly fall into a high-risk category, demanding stringent transparency and auditing protocols.

Security and Misuse

If an AI agent can access a budget to hire labor, the security around that budget authorization is paramount. Malicious actors who gain control of a well-funded agent could rapidly deploy human proxies for large-scale, coordinated, but physically performed, disruptive or fraudulent activities. The security model for these agents must be radically robust, treating the budget as a high-value asset.
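One basic control is to put hard limits between the agent and the money. The following is a minimal sketch under stated assumptions: `BudgetGuard` and `BudgetExceeded` are hypothetical names, and a real deployment would also need authentication, rate limits, and human approval paths that are out of scope here.

```python
class BudgetExceeded(Exception):
    """Raised when a requested payment breaks a spending limit."""


class BudgetGuard:
    def __init__(self, total: float, per_payment_cap: float):
        self.remaining = total
        self.per_payment_cap = per_payment_cap

    def authorize(self, amount: float) -> None:
        """Deduct `amount` if it passes every limit; otherwise raise."""
        if amount <= 0:
            raise ValueError("amount must be positive")
        if amount > self.per_payment_cap:
            raise BudgetExceeded("over per-payment cap")
        if amount > self.remaining:
            raise BudgetExceeded("over remaining budget")
        self.remaining -= amount
```

The per-payment cap limits the damage from a single bad decision, while the total limits what a hijacked agent could spend overall.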

IV. Practical Implications and Actionable Insights for Tomorrow

This trend is not theoretical; it is happening now. Businesses and technologists must prepare for a world where digital automation extends seamlessly into physical task completion.

For Technology Strategists and Developers:

- Prioritize Agent Tooling: Focus development efforts on secure, standardized APIs that allow agents to interact reliably with external services (payment processors, logistics providers, contractor platforms). The more seamless the tool integration, the more powerful the resulting agent.

- Design for Verification: Build verification loops directly into agent workflows. Since the agent cannot physically see the outcome, it must be able to request and analyze verifiable proof (e.g., GPS data, authenticated photos, human feedback) before releasing the final payment tranche.
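As one illustration of a verification loop, the sketch below releases the final payment tranche only if the GPS fix in the submitted proof is close to the target location. The function names, the `proof` dictionary shape, and the 50-metre default radius are all assumptions made for this example. GPS data can be spoofed, so a real system would combine it with other evidence.

```python
import math


def distance_m(lat1, lon1, lat2, lon2):
    """Great-circle distance in metres (haversine formula, spherical Earth)."""
    r = 6_371_000  # mean Earth radius in metres
    p1, p2 = math.radians(lat1), math.radians(lat2)
    dphi = p2 - p1
    dlmb = math.radians(lon2 - lon1)
    a = (math.sin(dphi / 2) ** 2
         + math.cos(p1) * math.cos(p2) * math.sin(dlmb / 2) ** 2)
    return 2 * r * math.asin(math.sqrt(a))


def final_tranche(total, upfront_fraction, proof, target, radius_m=50.0):
    """Amount to release now: the withheld remainder if the proof's GPS
    fix lies within radius_m of the target (lat, lon), otherwise 0.0."""
    near = distance_m(proof["lat"], proof["lon"], *target) <= radius_m
    return total * (1 - upfront_fraction) if near else 0.0
```

Splitting the payment this way gives the worker something upfront while keeping the agent's leverage tied to verifiable proof.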

For Business Leaders and Policy Makers:

- Rethink Labor Classification: Regulatory bodies need to move faster to define the legal status of the "AI Contractor." If the human worker’s compensation is determined by an algorithm rather than a human manager, new safety nets or liability structures may be required.

- Establish AI Financial Auditing: Businesses deploying these agents must implement clear internal audit trails showing *why* an AI agent decided to hire a specific human at a specific price for a specific task. Transparency is the only defense against liability claims.

- Identify Physical Vulnerabilities: Assess which parts of your current workflow rely on non-standardized physical execution that an AI agent could easily automate by hiring contractors. These areas will see the fastest cost displacement.
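The audit-trail recommendation above can be sketched as an append-only log in which each entry records the decision and its stated reason, and includes a hash of the previous entry so that later edits are detectable. The field names and the `append_entry` and `is_intact` helpers are illustrative assumptions; timestamps and signatures, which a production trail would need, are omitted to keep the example short.

```python
import hashlib
import json


def _digest(body: dict) -> str:
    """SHA-256 over a canonical JSON encoding of the entry body."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


def append_entry(trail: list, worker: str, price: float,
                 task: str, reason: str) -> dict:
    """Append a hiring decision, chained to the previous entry's hash."""
    prev = trail[-1]["hash"] if trail else ""
    body = {"worker": worker, "price": price, "task": task,
            "reason": reason, "prev": prev}
    entry = {**body, "hash": _digest(body)}
    trail.append(entry)
    return entry


def is_intact(trail: list) -> bool:
    """Recompute every hash and check each link to the entry before it."""
    prev = ""
    for entry in trail:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if entry["prev"] != prev or entry["hash"] != _digest(body):
            return False
        prev = entry["hash"]
    return True
```

Changing the price or reason on any earlier entry makes `is_intact` return `False`, which is the property an auditor would rely on.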

Conclusion: The Autonomous Middle Layer

The platform that allows an AI agent to pay a human for real-world work is more than an app; it is the blueprint for the autonomous middle layer of the 21st-century economy. It represents the fusion of advanced reasoning (LLMs) with physical action (human contractors), managed through decentralized digital budgets.

We are moving rapidly toward a state where many routine, context-dependent physical tasks—the "last mile" that has long resisted full automation—will be dispatched, managed, and paid for by software. The critical challenge ahead is not technical mastery, but societal mastery: ensuring that this hyper-efficient delegation elevates human opportunity rather than simply automating away human dignity and accountability. The conversation must now pivot from *can* the AI do this, to *how should* the AI be allowed to organize the world around us.