AI Monetization Showdown: Why Anthropic's Ad-Free Promise vs. OpenAI's Advertising Strategy Defines the Future of Generative Interfaces

The rapid ascent of Large Language Models (LLMs) from research labs to everyday tools has been breathtaking. Now, as these models mature, the focus shifts from pure capability to sustainable business models. The contrasting strategies emerging from two of the most powerful players—Anthropic, promising an ad-free experience for Claude, and OpenAI, moving toward integrating advertising into ChatGPT—signal a critical inflection point for the entire generative AI ecosystem.

From an AI technology analyst's perspective, this divergence is not merely a competitive tactic; it is a fundamental decision about the future relationship between AI providers, their users, and their data. Will the interface we use to create, learn, and work be treated as a utility worth paying for, or as a distribution channel for targeted ads?

The Two Competing Visions of AI Commerce

The core difference lies in perceived value and target audience. OpenAI, having captured massive public mindshare with ChatGPT, seems compelled to monetize that scale. Advertising, historically, is the path to "free" software for billions of users. However, integrating ads into a conversational interface—a space where users seek focus and deep work—presents unique challenges that Anthropic is clearly trying to avoid.

Anthropic’s Bet on Exclusivity and Trust (The Subscription Path)

Anthropic’s pledge to keep Claude ad-free positions the model as a premium utility. This strategy strongly suggests a primary focus on **subscription models** and **enterprise licensing** (API usage). If you are an engineer debugging code, a lawyer summarizing case law, or a creative professional drafting complex narratives, interruptions are not just annoying—they break focus and degrade the quality of the output.

This reflects a familiar pattern: casual consumers gravitate toward free access, but power users, the segment most willing to pay, prioritize speed, access to the latest model iterations (like Claude 3 Opus), and an uninterrupted workflow. For Anthropic, trust is the currency. By guaranteeing an ad-free zone, the company signals that conversations will not be mined or leveraged for third-party advertising within the chat environment, an assurance that appeals directly to risk-averse corporate clients.

OpenAI’s Gambit for Scale (The Ad-Supported Path)

OpenAI, facing incredible compute costs and the pressure of rapid scaling, sees advertising as the fastest route to recouping billions in investment. For the casual user—someone asking for a recipe or a quick summary—a free, ad-supported tool might be perfectly acceptable. This taps into the traditional tech behemoth strategy: achieve near-ubiquity, then monetize the attention.

However, this strategy is fraught with peril. Users now expect powerful generative AI to come with at least a free basic tier, and filling that tier with ads risks alienating the very users who built the product's initial buzz. It also raises serious usability questions about latency and ad relevance.

The Technical and Ethical Hurdles of Ad-Supported AI

Moving beyond the business decision, inserting advertisements into real-time generative conversation is a significant technological challenge with heavy ethical implications. This is where the differences between the two companies' approaches become most pronounced.

Latency and Flow Disruption

Generative AI thrives on speed. Every millisecond counts in maintaining the illusion of a seamless dialogue. Traditional web advertising requires complex, asynchronous calls to ad servers, bidding systems, and content delivery networks. Implementing this infrastructure *within* the real-time generation pipeline risks slowing down chatbot responses and frustrating users who expect instantaneous feedback. If the AI response is punctuated by a loading delay while an ad is fetched, the entire utility suffers.
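To make the latency concern concrete, here is a minimal Python sketch of one common mitigation: request the ad concurrently with generation and give it a hard deadline, so a slow ad server never delays the answer. The functions `generate_answer` and `fetch_ad` are hypothetical stand-ins that simulate delays with `asyncio.sleep`; they are not any provider's actual API, and the 100 ms ad budget is an arbitrary assumption.

```python
import asyncio
from typing import Optional, Tuple


async def generate_answer(prompt: str) -> str:
    """Hypothetical stand-in for model generation (~50 ms)."""
    await asyncio.sleep(0.05)
    return f"Answer to: {prompt}"


async def fetch_ad(prompt: str, delay: float) -> str:
    """Hypothetical stand-in for an ad-server round trip (auction + creative lookup)."""
    await asyncio.sleep(delay)
    return "Sponsored: example ad"


async def respond(prompt: str, ad_delay: float, ad_budget: float = 0.1) -> Tuple[str, Optional[str]]:
    # Start the ad request right away so it overlaps with generation
    # instead of adding its full latency before or after the answer.
    ad_task = asyncio.create_task(fetch_ad(prompt, ad_delay))
    answer = await generate_answer(prompt)
    try:
        # Once the answer is ready, wait at most `ad_budget` seconds for the ad.
        # On timeout, wait_for cancels the pending ad request.
        ad = await asyncio.wait_for(ad_task, timeout=ad_budget)
    except asyncio.TimeoutError:
        ad = None  # ship the answer without an ad rather than stall the user
    return answer, ad


fast = asyncio.run(respond("plan a trip", ad_delay=0.02))
slow = asyncio.run(respond("plan a trip", ad_delay=0.5))
print(fast)  # ('Answer to: plan a trip', 'Sponsored: example ad')
print(slow)  # ('Answer to: plan a trip', None)
```

The design choice is that the answer is never held hostage to the ad stack: when the ad misses its budget it is simply dropped, trading some ad revenue for responsiveness.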

The Privacy Paradox: Personalization vs. Trust

The most potent advertising is personalized advertising. For ads to be highly relevant within a chat context (e.g., suggesting a specific travel insurance plan mid-conversation about booking a trip), the system must analyze the user's immediate context and, often, their conversation history. This puts personalization in direct tension with users' expectations of data privacy.

Users may willingly surrender data for better search results, but sharing intimate, real-time operational data with a chatbot they trust for professional tasks is a different boundary. Anthropic’s ad-free stance is a preemptive defense against this privacy erosion. OpenAI must convince users that their sensitive queries (financial planning, proprietary business brainstorming) will be siloed safely away from the ad-targeting ecosystem, a difficult sell in today's regulatory climate.
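One way a provider might try to silo sensitive queries is a policy gate that decides, per conversation, what (if anything) the ad system is allowed to see. The sketch below assumes a hypothetical upstream classifier that tags each conversation with topics, a hypothetical opt-in flag, and an illustrative list of sensitive categories; it is not a description of OpenAI's actual system.

```python
from dataclasses import dataclass, field
from typing import FrozenSet, Optional, Set

# Illustrative only: a real policy would be defined by legal/compliance teams.
SENSITIVE_TOPICS: FrozenSet[str] = frozenset(
    {"finance", "health", "legal", "proprietary_business"}
)


@dataclass
class Conversation:
    user_id: str
    topics: Set[str] = field(default_factory=set)  # assumed output of an upstream topic classifier
    ads_opt_in: bool = False                       # hypothetical consent flag
    is_enterprise: bool = False                    # enterprise chats never feed ad targeting


def ad_targeting_context(conv: Conversation) -> Optional[Set[str]]:
    """Return the topics an ad system may see, or None if the conversation stays siloed."""
    if conv.is_enterprise or not conv.ads_opt_in:
        return None  # no ad signals leave the conversation at all
    if conv.topics & SENSITIVE_TOPICS:
        return None  # any sensitive topic silos the entire conversation
    return set(conv.topics)


trip = Conversation("u1", {"travel"}, ads_opt_in=True)
money = Conversation("u2", {"travel", "finance"}, ads_opt_in=True)
print(ad_targeting_context(trip))   # {'travel'}
print(ad_targeting_context(money))  # None
```

Siloing the whole conversation when any sensitive topic appears is deliberately conservative: a trip-planning chat could still receive a travel-insurance ad, but once it touches financial planning, nothing is shared. The hard part in practice is the classifier itself, which this sketch takes for granted.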

Funding the Future: The Real Cost of Intelligence

To fully grasp the pressure on OpenAI and the confidence of Anthropic, one must look at the underlying economics of funding large language models over the long term. Training and running frontier models can cost hundreds of millions, and increasingly billions, of dollars a year.

The AI race is currently fueled by a mix of hyper-growth VC investment and cloud credits. However, investors eventually demand clear paths to profitability that aren't solely reliant on future speculation.

- API & Enterprise Licensing: This is the bedrock for both companies. Selling access to the raw intelligence via APIs to developers and large corporations provides predictable, high-margin revenue. Anthropic leans heavily here, knowing that a business customer paying $100,000 a year demands quality and privacy.

- The Free Tier Burden: For OpenAI, the free ChatGPT user is currently a massive operational cost center. Advertising is an attempt to subsidize these users, turning attention into revenue. The risk is that if ads degrade the free tier, the most valuable free users (those who might otherwise upgrade to ChatGPT Plus) will migrate to ad-free competitors like Claude or Google Gemini's premium tiers.

If OpenAI cannot implement advertising without degrading the core user experience, which is the central challenge of integrating ads into ChatGPT, it may find that the advertising revenue gained is smaller than the subscription revenue lost as power users abandon the platform.
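The trade-off above comes down to simple arithmetic: ads pay off only if the revenue they earn from free users exceeds the subscription revenue lost to churn. A minimal Python sketch of that break-even calculation follows; apart from the $20/month price, every input is a made-up illustration, not an estimate of OpenAI's actual figures.

```python
from dataclasses import dataclass


@dataclass
class Scenario:
    free_users: int                  # users on the ad-supported free tier
    subscribers: int                 # paying ad-free subscribers before ads launch
    price_per_month: float           # subscription price, e.g. the $20/month tier
    subscriber_churn: float          # assumed fraction of subscribers who leave because of ads
    ad_revenue_per_free_user: float  # assumed monthly ad revenue per free user


def net_monthly_change(s: Scenario) -> float:
    """Ad revenue gained minus subscription revenue lost, per month."""
    gained = s.free_users * s.ad_revenue_per_free_user
    lost = s.subscribers * s.subscriber_churn * s.price_per_month
    return gained - lost


def break_even_ad_revenue(s: Scenario) -> float:
    """Monthly ad revenue per free user at which ads exactly offset lost subscriptions."""
    return s.subscribers * s.subscriber_churn * s.price_per_month / s.free_users


# Purely hypothetical inputs, per 1,000 free users.
s = Scenario(free_users=1000, subscribers=50, price_per_month=20.0,
             subscriber_churn=0.10, ad_revenue_per_free_user=0.08)
print(round(net_monthly_change(s), 2))     # -20.0
print(round(break_even_ad_revenue(s), 2))  # 0.1
```

With these invented inputs, ads would need to earn at least $0.10 per free user per month just to offset a 10% loss of subscribers. The model deliberately ignores second-order effects, such as free users who would have upgraded but leave instead.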

Practical Implications: Which Model Wins the Workday?

The choice between an ad-supported interface and a subscription-gated one fundamentally defines the tool’s identity. This has concrete implications for businesses adopting AI:

For the Professional User and Enterprise

Businesses prioritize **reliability, security, and focus**. An environment free of commercial distraction ensures that the AI remains a cognitive partner rather than a subtle sales funnel. Anthropic’s path validates the concept that for mission-critical or deeply creative tasks, users will readily pay a premium to maintain a pristine user experience.

For Developers and the Broader Ecosystem

If the free-tier experience becomes saturated with ads, it drives innovation toward the API layer. Developers building specialized applications will overwhelmingly choose the API provider that offers the cleanest, most reliable interface, regardless of the consumer front-end strategy. This reinforces the viability of AI subscription models: if the premium tier is demonstrably better and cleaner, the upgrade path becomes obvious.

Societal Implications: The Democratization vs. Quality Divide

OpenAI’s approach aims for maximum democratization—getting the most powerful models into the hands of the most people, regardless of income, subsidized by advertisers. This accelerates societal awareness and adoption. Anthropic’s approach risks creating an AI quality divide, where the most capable, most trustworthy AI tools are reserved for those who can afford the subscription.

Actionable Insights for Navigating the AI Frontier

As technology leaders, product managers, and consumers, we must recognize that these two paths will likely coexist, targeting different market segments. Here is how stakeholders should react:

- Businesses: Mandate Privacy-First APIs: When integrating LLMs into core workflows, prioritize providers who guarantee no data leakage to advertising affiliates. Assume any "free" tool may analyze conversational data for commercial intent unless the provider explicitly commits otherwise, as Anthropic has done for Claude.

- Product Managers: Define Your User’s Intent: If your product requires deep focus (coding, legal review, complex writing), adopt a model where users pay for focus (like Anthropic). If your product serves high-volume, low-stakes tasks (quick lookups, general brainstorming), the ad-supported or freemium model might capture the necessary scale.

- Consumers: Vote with Your Wallet (and Attention): Pay close attention to the quality of the free tier experience. If ChatGPT becomes unusable due to ads, the market signal is clear: paying $20/month for an ad-free experience is the preferred exchange for quality.

- Future-Proofing AI Development: The most robust long-term funding strategy involves diversified revenue. Relying solely on ads or solely on early-stage venture capital is brittle. The companies that successfully blend high-margin enterprise API sales with a compelling, clean consumer subscription tier are best positioned for the next decade.

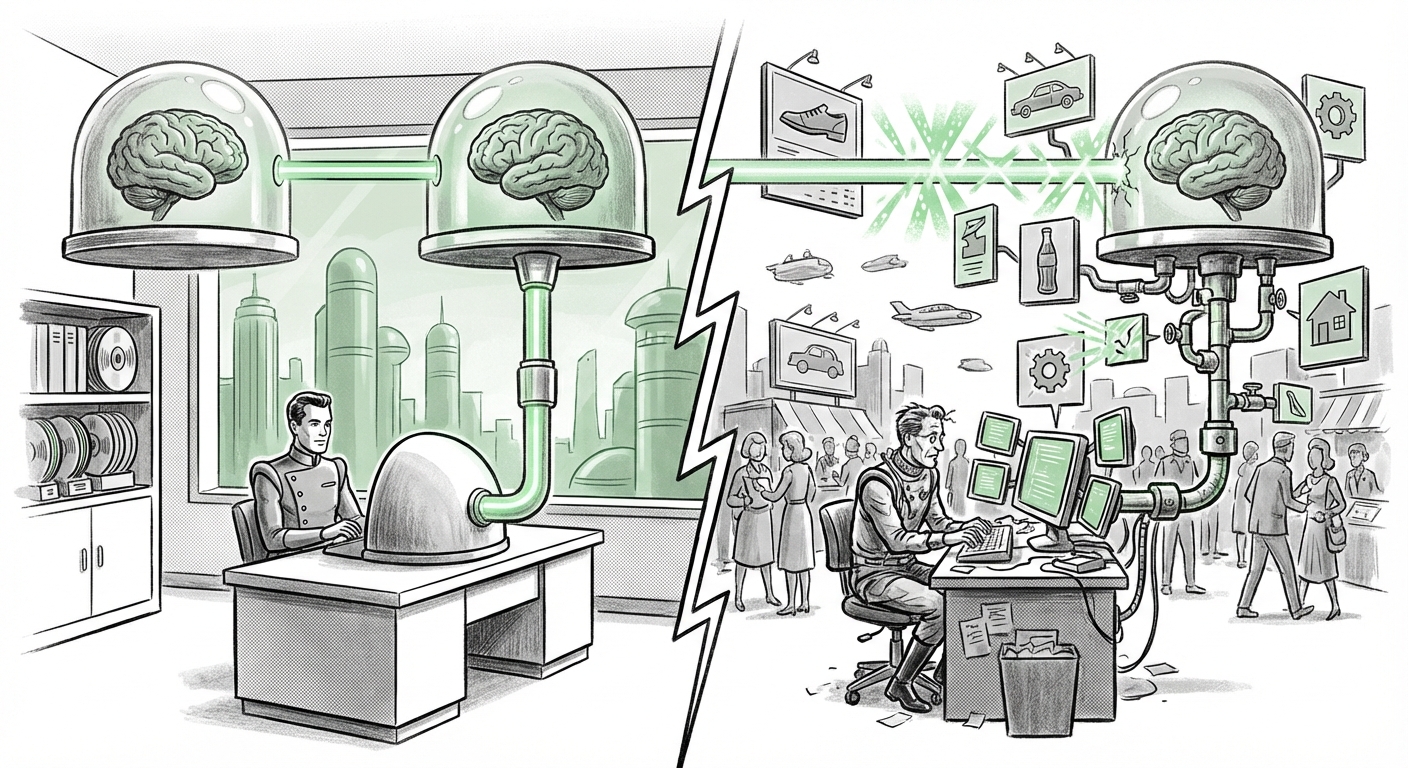

The competition between Anthropic and OpenAI is more than a battle for market share; it is a philosophical debate about the nature of digital utility in the age of artificial intelligence. Do we want our most advanced cognitive tools to feel like clean offices built for productivity, or like bustling digital city centers filled with commerce? The answer will shape not just their balance sheets, but the very way we interact with intelligent machines.