The Bio-AI Revolution: How LLMs Are Cracking Biology's Data Bottleneck and Reshaping Science

The world of Artificial Intelligence is rapidly moving past general consumer applications and diving headfirst into the most complex challenges facing humanity: the mysteries of biology. A recent announcement involving **Anthropic partnering with leading research institutes to tackle biology's data bottleneck** is not just corporate news; it is a critical signal marking the next major phase of AI deployment.

As an AI analyst, I see this development as a clear indicator that the future of powerful AI lies in deep specialization. We are shifting from general-purpose models to highly focused scientific agents built to conquer specific, data-intensive fields. Biology, with its staggering complexity and oceans of unstructured data, is the perfect proving ground for this next generation of technology.

The Core Problem: Biology’s Data Bottleneck

To understand why Anthropic’s move is significant, we must first understand the "data bottleneck." Imagine trying to read every book ever written about human disease, every lab result, every genetic sequence, and every protein interaction—all simultaneously, and then asking the computer to find a completely new way to cure something. That is the task facing biologists.

Biology generates vast amounts of data—genomics, proteomics, imaging, and clinical trials. However, this data is often:

- Disorganized: Stored in different formats across different labs.

- Complex: Interpreting a single protein structure can take months of specialized human effort.

- Scarce: For rare diseases or specific drug interactions, enough data may not exist in one place to train a reliable model.
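To make the "disorganized" problem concrete, here is a minimal sketch of what harmonizing two labs' records into one schema might look like. The lab formats, field names (`gene_symbol`, `log2_expr`, `Gene`, `raw_expr`), and the `Measurement` type are all hypothetical, invented purely for illustration; real pipelines deal with far messier inputs.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Measurement:
    """A common schema: upper-case gene symbol, expression on a log2 scale."""
    gene: str
    expression: float


def from_lab_a(row: dict) -> Measurement:
    # Hypothetical Lab A export: lower-case symbols, log2 values stored as strings.
    return Measurement(
        gene=row["gene_symbol"].strip().upper(),
        expression=float(row["log2_expr"]),
    )


def from_lab_b(row: dict) -> Measurement:
    # Hypothetical Lab B export: padded symbols, values on a linear scale.
    return Measurement(
        gene=row["Gene"].strip().upper(),
        expression=math.log2(row["raw_expr"]),
    )


def harmonize(lab_a_rows: list, lab_b_rows: list) -> list:
    """Convert both labs' rows into the shared Measurement schema."""
    return [from_lab_a(r) for r in lab_a_rows] + [from_lab_b(r) for r in lab_b_rows]


records = harmonize(
    [{"gene_symbol": "tp53", "log2_expr": "3.0"}],
    [{"Gene": "TP53 ", "raw_expr": 8.0}],
)
# Both rows describe the same measurement once units and naming agree.
print(records[0] == records[1])
```

Only after this kind of normalization do records from different labs become comparable, which is part of why the bottleneck is as much about plumbing as about modeling.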

This is where Large Language Models (LLMs), adapted for scientific reasoning, step in. They can ingest, synthesize, and find patterns across this chaos far faster than traditional computational methods. The push is part of a wider trend: major industry players and venture capital firms are heavily backing platforms that use AI foundation models to accelerate early-stage drug discovery.

The Evolution: From LLMs to Scientific AI Agents

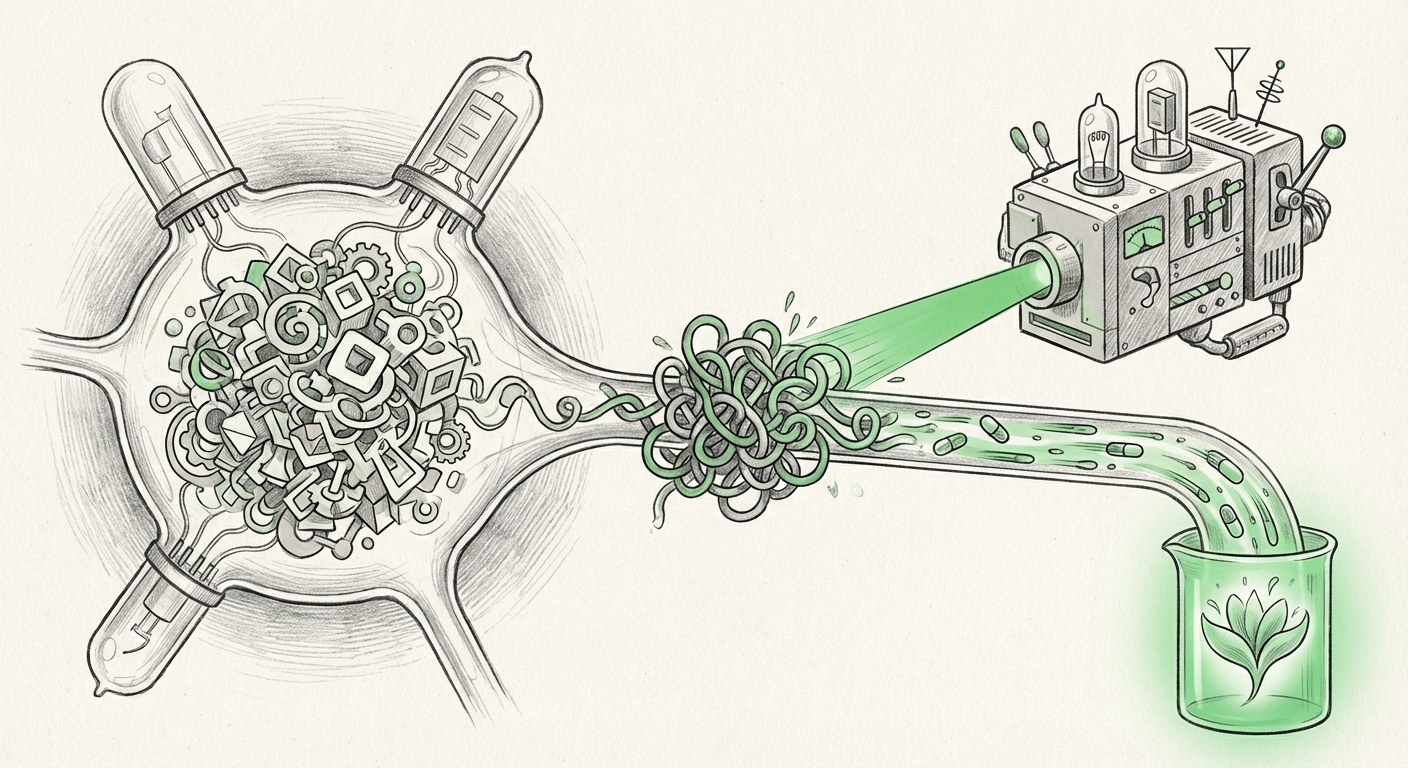

The key phrase accompanying this partnership is the development of **AI Agents**. This distinction is crucial for both technical and business audiences. A simple LLM can summarize a paper; a true AI Agent can formulate a testable hypothesis, design the parameters for the experiment, query simulations, interpret the resulting data, and suggest the next optimal step.

This moves AI from being a helpful research assistant to being an active, autonomous collaborator in the research process. Work on domain-specific LLMs for scientific reasoning suggests that this functionality requires more than general intelligence; it demands specialized reasoning layers fine-tuned on experimental constraints and scientific laws.
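The hypothesize, test, interpret, and iterate loop described above can be sketched in a few lines. This is a toy illustration only, not how any real system (Anthropic's or otherwise) is built: `propose` stands in for a model that formulates the next hypothesis, and `simulate` stands in for a simulator or lab assay. Both functions, and the one-dimensional "hypothesis" they operate on, are assumptions made for the example.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Result:
    hypothesis: float  # toy stand-in for an experimental parameter
    score: float       # toy stand-in for a measured outcome


def run_agent_loop(
    propose: Callable[[List[Result]], float],
    simulate: Callable[[float], float],
    max_steps: int = 5,
) -> Result:
    """Repeat: formulate a hypothesis, test it, record the result."""
    history: List[Result] = []
    for _ in range(max_steps):
        hypothesis = propose(history)              # formulate a testable hypothesis
        score = simulate(hypothesis)               # query the simulation
        history.append(Result(hypothesis, score))  # keep the evidence for the next step
    return max(history, key=lambda r: r.score)     # report the best result seen


def toy_propose(history: List[Result]) -> float:
    # Hypothetical strategy: start at 0, then step up from the best result so far.
    if not history:
        return 0.0
    best = max(history, key=lambda r: r.score)
    return best.hypothesis + 1.0


def toy_simulate(x: float) -> float:
    # Hypothetical response curve with its optimum at x = 3.
    return -((x - 3.0) ** 2)


best = run_agent_loop(toy_propose, toy_simulate)
print(best.hypothesis)
```

The point of the sketch is the shape of the loop: each proposal depends on the accumulated history of results, which is what separates an agent from a model that answers a single prompt.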

Why Anthropic’s Entry Matters

Anthropic, known for developing frontier models like Claude with an emphasis on safety and robust reasoning, entering this space means two things:

- Focus on Trustworthiness: Scientific applications require high accuracy. Anthropic’s core philosophy suggests they are aiming to build agents that can not only generate plausible ideas but also *explain their reasoning* clearly, which is vital when human health is on the line.

- The Agentic Workflow: By targeting the bottleneck, they are signaling that the next wave of productivity gains in R&D will come from automating the iterative loop of scientific discovery itself. This means reducing the time from target identification to pre-clinical candidate selection, potentially shaving years off development timelines.

The Competitive Landscape and Commercial Imperative

Anthropic is not acting in a vacuum. The success of models like DeepMind’s AlphaFold in predicting protein structures proves the immense value locked within biology. The entire ecosystem—from academic labs to pharmaceutical giants—is vying for control over the best biological data and the most powerful proprietary models.

Analysis of venture capital funding in AI biology platforms shows explosive growth. Investors are betting heavily that the companies that successfully build the AI "operating system" for biological research will capture immense value. For Anthropic, partnering directly with established research institutes provides immediate, high-quality data streams and access to domain experts, a much faster path to a market-ready Bio-AI solution than training on public data alone.

For businesses, this signals a shift: **AI integration is no longer optional in R&D; it is a prerequisite for remaining competitive.** Companies not yet developing or licensing specialized Bio-AI tools risk being relegated to slower, legacy research methods.

Practical Implications: From Code to Cures

What does this convergence mean for the day-to-day reality of science and business?

For Researchers: A New Era of Automation

Researchers will spend less time managing spreadsheets and more time on complex, creative problem-solving. AI agents will handle the tedious work of data integration and initial hypothesis filtering. Think of an AI agent suggesting a novel drug modification based on subtle patterns it found between thousands of unrelated genetic studies—a connection a human might never spot.

For Pharma & Biotech: Decoupling Time from Money

Bringing a new drug to market often costs billions of dollars and takes over a decade. If AI agents can reduce the failure rate in early discovery, by better predicting toxicity or efficacy before costly lab work begins, the economic payoff is staggering. This is precisely the gap that AI foundation models for drug discovery aim to close.
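A back-of-the-envelope calculation shows why better early filtering matters so much. The sketch below computes the expected spend per successful candidate across a sequence of stages. Every number in it is made up for illustration (arbitrary cost units, invented pass rates) and is not an estimate of real drug development costs.

```python
from typing import List, Tuple


def expected_cost_per_success(stages: List[Tuple[float, float]]) -> float:
    """stages: (cost, pass_probability) pairs in pipeline order.

    Returns expected total spend per candidate that clears every stage.
    """
    reach = 1.0           # probability a candidate reaches the current stage
    expected_spend = 0.0  # expected spend per candidate entering the pipeline
    for cost, pass_probability in stages:
        expected_spend += reach * cost
        reach *= pass_probability
    return expected_spend / reach


# Hypothetical baseline: a cheap early screen, then an expensive late stage.
baseline = expected_cost_per_success([(10.0, 0.5), (100.0, 0.25)])

# Hypothetical AI-assisted screen: stricter early on, so fewer doomed
# candidates ever reach the expensive stage.
improved = expected_cost_per_success([(10.0, 0.25), (100.0, 0.5)])

print(baseline, improved)
```

In this toy setup the overall success rate is identical in both scenarios; the saving comes entirely from failing candidates earlier, while they are still cheap.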

For Society: Accelerating Solutions

Perhaps the most profound implication is the potential speed-up in solving major health crises. Whether it’s developing tailored cancer therapies or responding rapidly to novel pandemics, solving the data bottleneck means accelerating the entire pipeline of scientific innovation.

Navigating the New Frontier: Governance and Access

With great power comes complex governance. As AI models delve into highly sensitive biological data, questions of ownership, privacy, and equitable access become paramount. Partnerships between private AI firms and public research institutions must navigate murky waters regarding intellectual property.

Discussions of data-sharing governance for AI in biological research highlight the need for clear frameworks. If an AI agent discovers a breakthrough based on public data it was fine-tuned on, who owns that insight? Establishing transparent, ethical guidelines now is essential to ensure that these powerful tools serve broad societal benefit, rather than becoming exclusively locked behind proprietary walls.

Actionable Insights for Leaders

To capitalize on the momentum generated by developments like Anthropic's partnership, leaders must act strategically:

- Audit Your Data Strategy: Treat your proprietary biological data not just as records, but as the necessary training fuel for future AI advantage. Is it clean, accessible, and standardized for machine consumption?

- Invest in Agentic Skills: Move beyond hiring data scientists focused only on predictive modeling. Seek out teams skilled in reinforcement learning, prompt engineering for complex tasks, and integrating AI outputs directly into laboratory automation systems.

- Form Strategic Alliances: Just as Anthropic partnered with institutes, organizations should seek symbiotic relationships. Tech companies need biological domain expertise; research institutions need world-class AI infrastructure. Collaboration shortens the time-to-value dramatically.

- Prioritize Explainability (XAI): When an AI suggests a novel chemical compound or targets a new gene pathway, the human scientist must understand *why*. Demand high levels of transparency and explainability from any AI system deployed in critical R&D pathways.

The convergence of frontier AI and the life sciences is no longer a distant prediction—it is the central business strategy of the coming decade. The success of specialized AI agents in dismantling biology's most stubborn data barriers will redefine what is scientifically possible.