Cerebras $23B Valuation: The AI Hardware War Shifts Beyond Nvidia

The recent news that AI chip startup Cerebras Systems secured over one billion dollars in funding, catapulting its valuation to an eye-watering $23 billion, is far more than just a successful financing round. It is a resounding confirmation of a major strategic pivot in the world of artificial intelligence: the urgent need to diversify the compute landscape. This massive influx of capital, catalyzed in large part by a multi-billion-dollar deal with OpenAI, suggests that the industry's heavy dependence on GPUs is becoming a bottleneck for the next generation of AI models.

For years, the AI gold rush has been defined by the sellers of picks and shovels: the makers of the computational tools needed to train massive models. Unsurprisingly, Nvidia has been the undisputed king of this domain. However, as models grow exponentially in size, demanding unprecedented amounts of memory, speed, and power, the market is beginning to value specialization over generality. This article examines what Cerebras’s success means for the future of AI infrastructure, the strategic plays being made by OpenAI, and the broader implications for technology adoption.

The Context: Why $23 Billion Matters in the AI Chip Race

To understand the significance of Cerebras achieving a $23 billion valuation, we must first anchor it against the current reality. Until very recently, the AI hardware market has been a near-monopoly. Nvidia's Graphics Processing Units (GPUs), particularly the Hopper-generation H100 and H200 and their Blackwell successors, are the lingua franca of deep learning. They are powerful, flexible, and backed by a vast software ecosystem built around CUDA.

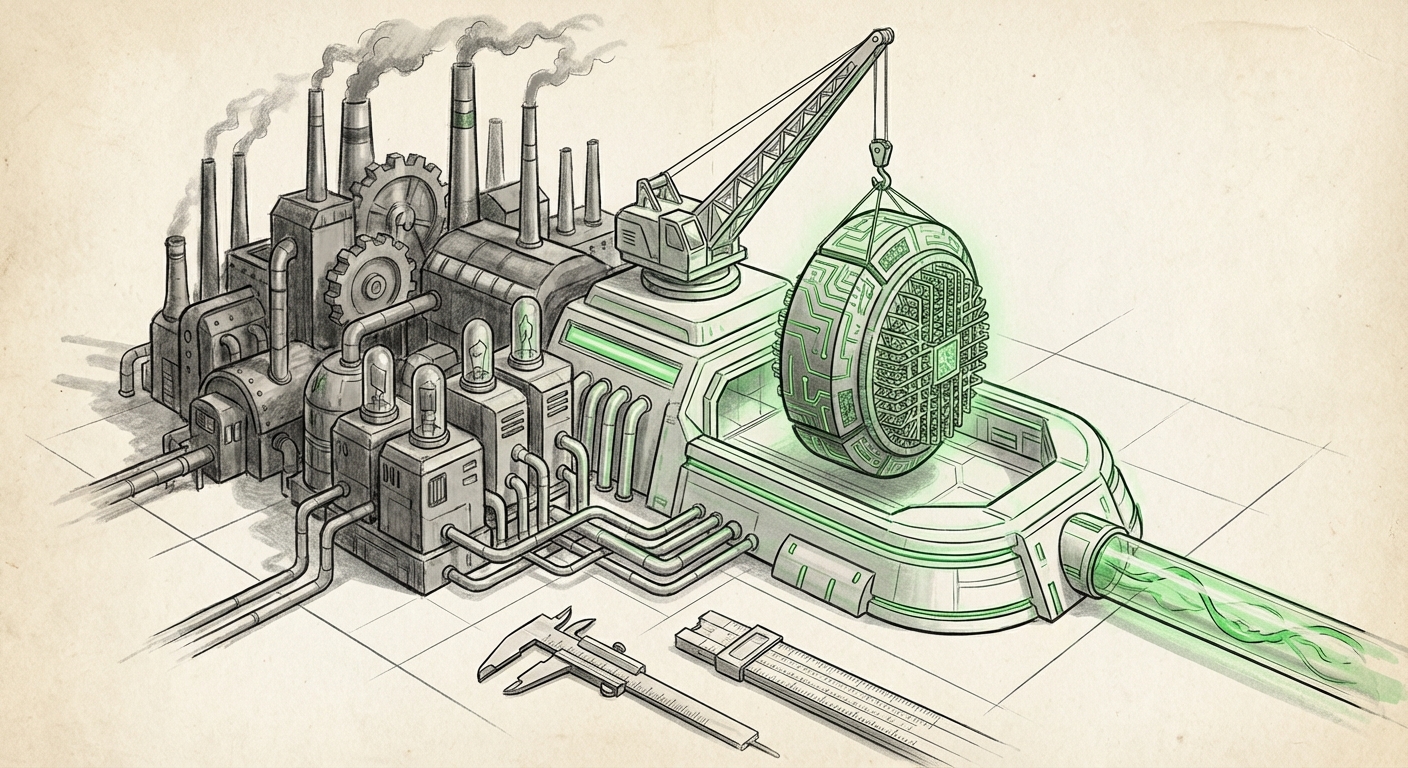

However, scaling the next wave of foundation models, which will be far larger and more complex than today’s frontier systems, runs into physical and economic bottlenecks with traditional GPU clusters. Imagine trying to build the world's largest skyscraper using only standard-sized building blocks; eventually, you need a specialized, massive piece to make the next leap. Cerebras offers that specialized piece. Its Wafer-Scale Engine (WSE) is literally a single chip made from nearly an entire silicon wafer, so compute and memory sit side by side on one piece of silicon, giving it exceptional on-chip memory bandwidth and interconnect.

The **AI Compute Specialization Thesis** holds that while GPUs will remain crucial for much of AI work, the most demanding jobs, such as training frontier models or serving them at very low latency, increasingly call for purpose-built silicon. Cerebras's valuation suggests that sophisticated investors and leading AI developers agree: hardware that keeps memory and communication on a single piece of silicon, rather than spreading them across thousands of networked chips, may be the key to unlocking the next tier of AI capability.

The Competitive Landscape: Beyond the GPU Giants

Cerebras is not operating in a vacuum. The massive capital flowing into specialized AI hardware signals a frantic race to create credible alternatives to Nvidia. This diversification is vital for resilience and innovation.

When we look at the wider field, we see a marketplace segmenting by task:

- Inference Specialists (e.g., Groq): Focused intensely on speed when delivering answers (inference) from an already-trained model, using a specialized design that Groq calls an LPU (Language Processing Unit).

- Domain-Specific ASICs: Chips optimized for specific algorithms or data types, often designed by or for hyperscalers, such as Amazon's Trainium and Inferentia or Google's TPU.

- Wafer-Scale Innovation (Cerebras): Targeting the most demanding workloads, from large-scale training to, increasingly, very fast inference, by eliminating the communication bottlenecks that arise between thousands of separate chips.

The implication for infrastructure engineers is clear: the days of "one chip fits all" AI development are numbered. Businesses must now select hardware not just based on cost, but on the precise nature of their AI workload—training, fine-tuning, or deployment.

This competitive energy is exactly what investors are betting on. The total addressable market for AI silicon is widely expected to be so vast that even a small share of the training market could represent billions in revenue. Cerebras’s success lends weight to the high-risk, high-reward strategy of betting on radical architectural shifts.

The OpenAI Factor: Strategic Hedging and Compute Sovereignty

The most electrifying element of this story is the confirmed partnership with OpenAI. This single deal provides Cerebras with the ultimate seal of approval.

For those tracking the **OpenAI infrastructure strategy**, this move is particularly telling. OpenAI, long anchored to Microsoft’s Azure cloud (which is heavily provisioned with Nvidia hardware), might once have been expected to rely on that established pipeline alone. Its commitment to Cerebras points to three strategic needs:

- Workload Specificity: OpenAI may need the WSE's density and low-latency on-chip communication for its most demanding workloads, whether serving models at very high speed or training its next, colossal models, where the interconnects in current GPU clusters add latency it cannot afford.

- Supply Chain De-risking: Relying entirely on one supply chain, even a friendly one like Microsoft’s Nvidia-based Azure capacity, creates a single point of failure for a leading AI lab. Contracts with alternative hardware providers help protect continuity amid potential shortages or shifting partner priorities.

- Compute Sovereignty: By partnering with an independent hardware provider, OpenAI gains negotiating leverage and a route to cutting-edge compute capacity that might not be immediately available through standard cloud offerings.

For the average reader, think of it like an airline that relies heavily on Boeing for its main fleet but buys a few massive Airbus planes for ultra-long-haul, specialized routes. They are hedging their bets against unforeseen problems with one supplier while securing superior performance for specific, critical missions. OpenAI’s adoption of Cerebras is a strong indicator that the "next frontier" of AI demands hardware optimization that the current mainstream tools cannot deliver efficiently.

Future Implications: Reshaping the AI Ecosystem

The Cerebras valuation ripples outward, fundamentally changing how businesses must approach AI implementation.

1. Accelerated Commoditization of Mid-Range Compute

When the largest players secure massive contracts for bespoke, cutting-edge hardware, it puts pressure on mid-tier hardware providers. If Cerebras and others absorb much of the *frontier* work, existing GPU solutions could increasingly become the "commodity" compute layer. For standard enterprise tasks (fine-tuning existing large models, running smaller language models, or routine inference), the cost of GPU time could then drop significantly as supply normalizes or better inference chips emerge.

2. The Rise of the AI Infrastructure Architect

In the past, an infrastructure team might decide: "We need 1,000 GPUs." Now, the decision tree is far more complex: "Do we need 1,000 H100s, 50 TPUs, a dedicated Groq cluster for real-time response, or a Cerebras cluster for three months of foundational training?"

This complexity elevates the importance of the AI Infrastructure Architect—a role that blends semiconductor knowledge, cloud economics, and algorithm optimization. Businesses that fail to invest in this expertise risk paying exorbitant fees for the wrong type of compute power.

3. Democratization via Specialization (The Paradox)

While the *creation* of frontier AI models becomes hyper-concentrated among those who can afford specialized hardware like Cerebras, the *utilization* of AI might become more democratized. If specialized hardware dramatically lowers the cost or time required to produce a highly capable model (such as a successor to today’s frontier systems), the resulting model weights can then be distributed, fine-tuned, and run on cheaper, generalized hardware later on.

The race is on to build the fastest foundation. Once the foundation is poured, the subsequent structures built on top of it can be less resource-intensive.

Actionable Insights for Businesses Today

The lesson from Cerebras’s $23 billion valuation isn't just about investing in chips; it’s about preparing your organization for a hardware-agnostic future.

For Executives and Strategists:

Diversify Your Compute Horizon: Do not treat AI compute as a single line item dominated by one vendor. Start assessing your two-year roadmap now. If your goals involve building genuinely novel, trillion-parameter models, begin investigating access to alternative hardware such as Cerebras systems, AMD Instinct GPUs, or custom ASICs. Cloud contracts focused solely on current-generation GPUs could lock you into inefficient compute for next-generation problems.

For Engineers and Developers:

Embrace Portability and Abstraction: While learning to optimize for CUDA is still valuable, actively explore frameworks and software layers that make it easier to migrate between hardware architectures (e.g., OpenXLA or PyTorch's device abstraction). The best code of tomorrow will run efficiently regardless of whether the underlying silicon is a GPU, an LPU, or a WSE.
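The portability advice boils down to one pattern: model code calls only a small backend interface, and the concrete backend is chosen at runtime with a safe fallback. Here is a minimal Python sketch of that pattern, assuming nothing beyond the standard library. Every name in it (`Backend`, `ReferenceCPUBackend`, `BACKENDS`, `select_backend`, `linear_layer`, and the `"wse"`/`"lpu"`/`"gpu"` keys) is hypothetical and illustrative; it is not the API of PyTorch, OpenXLA, or any vendor SDK.

```python
from typing import Callable, Dict, List, Protocol

Matrix = List[List[float]]


class Backend(Protocol):
    """Hypothetical interface: the only operations model code may call."""

    name: str

    def matmul(self, a: Matrix, b: Matrix) -> Matrix: ...


class ReferenceCPUBackend:
    """Plain-Python fallback standing in for any real accelerator backend."""

    name = "cpu-reference"

    def matmul(self, a: Matrix, b: Matrix) -> Matrix:
        if len(a[0]) != len(b):
            raise ValueError("inner dimensions do not match")
        # Transpose b once so each output cell is a dot product of a row and a column.
        b_cols = list(zip(*b))
        return [[sum(x * y for x, y in zip(row, col)) for col in b_cols] for row in a]


# Hypothetical registry: vendor-specific backends (GPU, LPU, WSE) would register here.
BACKENDS: Dict[str, Callable[[], Backend]] = {"cpu": ReferenceCPUBackend}


def select_backend(preferred: List[str]) -> Backend:
    """Return the first registered backend in preference order, else the CPU fallback."""
    for name in preferred:
        factory = BACKENDS.get(name)
        if factory is not None:
            return factory()
    return ReferenceCPUBackend()


def linear_layer(backend: Backend, x: Matrix, w: Matrix) -> Matrix:
    """Model code written only against the Backend interface, never a specific chip."""
    return backend.matmul(x, w)


# None of the preferred accelerators is registered, so this falls back to the CPU backend.
backend = select_backend(["wse", "lpu", "gpu"])
print(backend.name)  # prints: cpu-reference
print(linear_layer(backend, [[1.0, 2.0]], [[3.0], [4.0]]))  # prints: [[11.0]]
```

The design choice is that `linear_layer` never names a chip, so adding support for new silicon means registering one more backend rather than rewriting model code. Real frameworks apply the same idea at much larger scale; PyTorch, for example, lets model code target a `torch.device` chosen at runtime instead of hard-coding `cuda`.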

For Investors:

Follow the Compute Contracts: The Cerebras deal sets a new benchmark. Look for corroborating evidence of deep partnerships between major AI labs (Anthropic, Mistral, Google DeepMind) and emerging hardware vendors. These strategic contracts, more than simple cloud spending reports, are the clearest indicators of where the real performance bottlenecks lie, and therefore where the next valuation surges are likely to occur.

The foundation of AI is shifting beneath our feet. The massive valuation of Cerebras, cemented by the trust of OpenAI, signals that the era of dependence on a single kind of hardware is ending. The future of AI will be defined by heterogeneity: a vibrant ecosystem where specialized tools are deployed precisely where they offer the greatest advantage. This is not merely competition; it may be the evolution needed to sustain the exponential growth curve of artificial intelligence itself.