The Context Revolution: How Kimi 2.5's Massive Memory Changes the LLM Future

The world of Large Language Models (LLMs) is often defined by a single metric: parameter count. In recent months, however, the narrative has shifted toward another, arguably more practical measure of capability: context length. When "The Sequence AI of the Week" recently deconstructed Kimi 2.5 by Moonshot AI, it wasn't just detailing a new Chinese LLM; it was illuminating a genuine inflection point in how AI handles information.

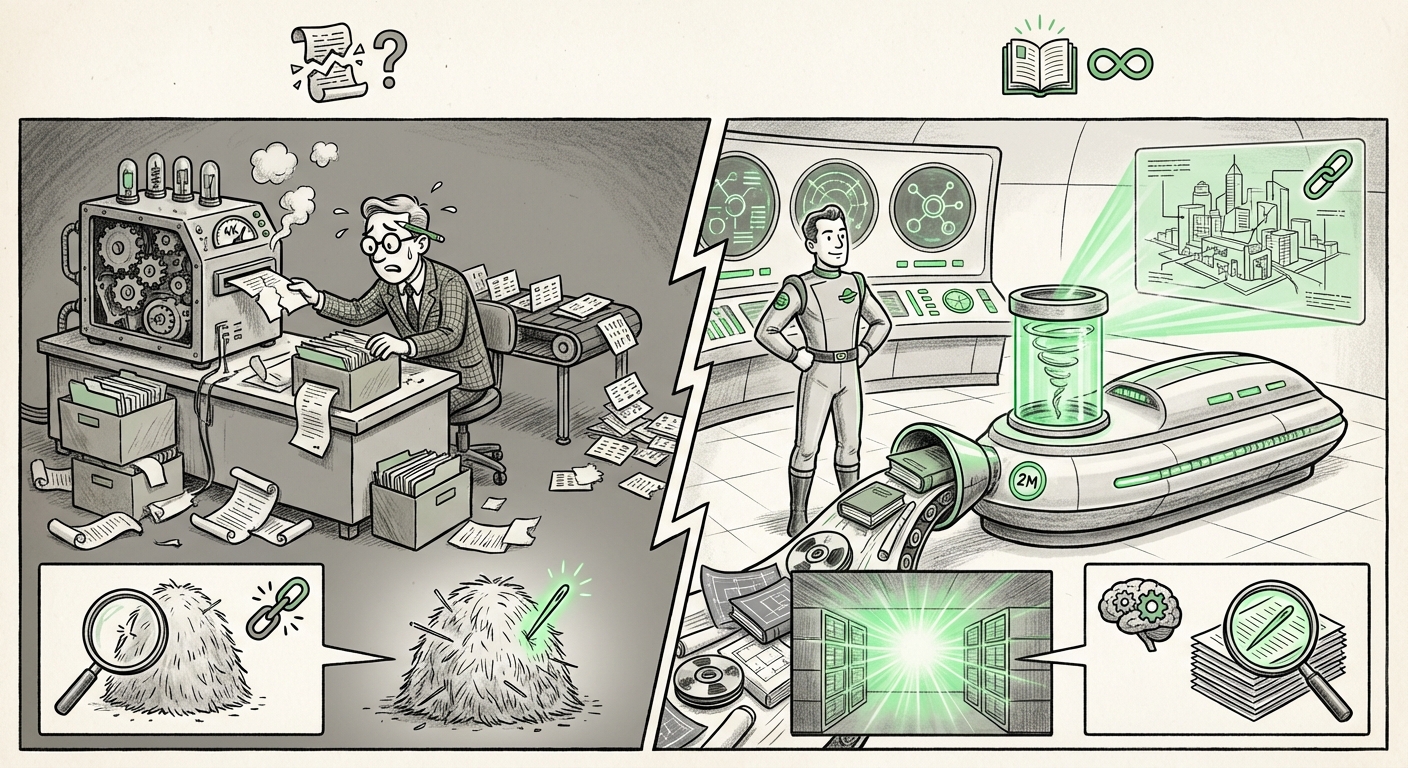

Kimi 2.5's headline feature, a context window reportedly stretching up to 2 million tokens, forces us to look past benchmark scores and consider the fundamental restructuring of AI interaction. A 2 million token context is akin to giving an AI the ability to read and hold in view roughly fifteen to twenty full-length novels at once (assuming about 0.75 English words per token and 80,000 to 100,000 words per novel). This article synthesizes the key technical achievements, compares this leap against global and regional rivals, and maps out the implications for the future of enterprise technology.

I. The Technical Barrier Shattered: Beyond Quadratic Complexity

To grasp the magnitude of Kimi 2.5’s achievement, we must understand the fundamental bottleneck in the Transformer architecture that powers nearly all modern LLMs. This bottleneck is the attention mechanism. Imagine checking every single word in a massive document against every other word to understand context: the number of comparisons grows with the square of the text length. For every doubling of the text length, the computational cost of attention doesn't just double; it quadruples.
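The quadratic growth described above can be made concrete with a little arithmetic. The following is a minimal sketch, assuming standard full self-attention and 2 bytes per score (fp16); the function names are illustrative, and the numbers describe a naive implementation, not how Kimi 2.5 or any production system actually stores attention scores.

```python
def attention_score_entries(seq_len: int) -> int:
    """Pairwise query-key scores for one attention head in one layer."""
    return seq_len * seq_len


def score_matrix_bytes(seq_len: int, bytes_per_score: int = 2) -> int:
    """Memory needed to materialize the full score matrix (fp16 by assumption)."""
    return attention_score_entries(seq_len) * bytes_per_score


for n in (4_000, 8_000, 128_000, 2_000_000):
    entries = attention_score_entries(n)
    gib = score_matrix_bytes(n) / 2**30
    print(f"{n:>9,} tokens -> {entries:.2e} scores, {gib:,.1f} GiB per head per layer")
```

At 2 million tokens, a naively materialized score matrix would hold 4 trillion entries, on the order of 8 TB per head per layer, which is why simply adding GPUs does not solve the problem.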

This is why, historically, context windows were capped at 4K, 8K, or perhaps 128K tokens. Pushing beyond this required architectural innovation, not just bigger clusters of GPUs. Kimi 2.5’s success suggests that Moonshot AI has either implemented highly optimized versions of known efficient attention methods or developed novel approaches to manage this scaling challenge.

Contextualizing the Technical Leap

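Researchers worldwide have been grappling with this scaling limitation. Methods like FlashAttention and emerging concepts such as Ring Attention are critical milestones. FlashAttention computes exact attention without materializing the full score matrix, which sharply reduces memory traffic, while Ring Attention spreads a long sequence across multiple devices. Neither removes the quadratic compute itself, but both make far longer sequences practical. Kimi 2.5’s reported performance suggests that these techniques, or similar proprietary optimizations, have matured enough to support enterprise-scale context handling.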
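To illustrate the kind of trick these methods rely on, here is a minimal pure-Python sketch of streaming ("online") softmax attention for a single query: keys and values are processed block by block, so the full list of scores is never held at once. This is the general idea that FlashAttention-style kernels build on, not FlashAttention's actual GPU implementation, and nothing here is claimed to be Moonshot AI's method. The function names and `block_size` parameter are illustrative.

```python
import math
from typing import List, Sequence

Vector = Sequence[float]


def _score(q: Vector, k: Vector) -> float:
    """Scaled dot product between one query and one key."""
    return sum(a * b for a, b in zip(q, k)) / math.sqrt(len(q))


def naive_attention(q: Vector, keys: List[Vector], values: List[Vector]) -> List[float]:
    """Reference version: computes every score before normalizing."""
    scores = [_score(q, k) for k in keys]
    top = max(scores)
    weights = [math.exp(s - top) for s in scores]
    total = sum(weights)
    return [sum(w * v[d] for w, v in zip(weights, values)) / total
            for d in range(len(values[0]))]


def streaming_attention(q: Vector, keys: List[Vector], values: List[Vector],
                        block_size: int = 2) -> List[float]:
    """Same result, but only one block of scores exists at any time."""
    running_max = -math.inf
    running_sum = 0.0
    acc = [0.0] * len(values[0])
    for start in range(0, len(keys), block_size):
        scores = [_score(q, k) for k in keys[start:start + block_size]]
        new_max = max(running_max, max(scores))
        # Rescale what was accumulated under the old maximum.
        rescale = math.exp(running_max - new_max)
        running_sum *= rescale
        acc = [a * rescale for a in acc]
        for s, v in zip(scores, values[start:start + block_size]):
            w = math.exp(s - new_max)
            running_sum += w
            acc = [a + w * x for a, x in zip(acc, v)]
        running_max = new_max
    return [a / running_sum for a in acc]


q = [0.5, -1.0]
keys = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [-1.0, 2.0], [0.3, 0.3]]
values = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0], [7.0, 8.0], [9.0, 10.0]]
print(naive_attention(q, keys, values))
print(streaming_attention(q, keys, values))
```

The two functions agree up to floating-point rounding. The streaming version still does the same amount of arithmetic; what it saves is memory, which is the distinction between reducing cost and removing the quadratic term.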

What this means for Engineers: The era of feeding an LLM context piecewise, or relying heavily on complex Retrieval-Augmented Generation (RAG) systems just to hold a conversation, may be waning. We are moving toward "native memory" models where the model processes the entire dataset directly, which can improve accuracy in complex reasoning tasks over large documents because no relevant passage is lost to a retrieval miss.

II. The Regional Battlefield: Kimi 2.5 in the Chinese AI Ecosystem

Kimi 2.5 is a pivotal release not just for its technology, but for its origin. The Chinese AI landscape is fiercely competitive, populated by well-funded giants like Baidu (with ERNIE) and Alibaba (with Qwen). Analyzing Kimi 2.5 requires positioning it within this arena.

Benchmarking Against Domestic Powerhouses

When comparing Moonshot AI against its domestic rivals, the focus shifts from raw parameter counts to specialized performance. While general benchmarks like C-Eval or CMMLU measure broad understanding, Kimi’s long-context capability offers a specialized advantage. If Kimi can efficiently process the entirety of a company’s internal documentation or a year’s worth of complex regulatory filings, it carves out a niche where brute-force parameter scaling is less important than memory capacity.

For Investors and Strategists: This highlights a potential divergence in AI strategy. While global leaders often chase the largest generalized models, players like Moonshot AI are demonstrating that solving specific, difficult scaling problems can yield immediate, defensible market advantages. The competitive focus is increasingly shifting from "who has the smartest model?" to "who has the most usefully large model?"

III. The Global Context Race: Benchmarking Against Western Titans

Kimi 2.5 is not operating in a vacuum. The global pursuit of long context is perhaps the most visible feature battle between OpenAI, Google, and Anthropic. To truly assess Kimi’s significance, we must hold it up against models like Anthropic’s Claude 3 Opus or Google’s Gemini iterations, which have also marketed massive context windows.

Context Fidelity: More Than Just Size

A model might claim 1 million tokens, but its true utility rests on context fidelity: its ability to retrieve the correct, obscure piece of information hidden deep within that massive window. "Needle-in-a-haystack" tests on long-context models often reveal a "lost in the middle" effect, where accuracy drops off significantly for data placed in the middle of the input sequence.

If Kimi 2.5 demonstrates consistent, high-fidelity retrieval across its massive context window, rivaling or exceeding the performance of top Western models in structured tasks, it signals that the technological gap is closing rapidly, if not inverting, in this specific domain. This is the critical data point that would anchor Kimi 2.5 as a global contender, not just a regional success story.

IV. Practical Disruption: What 2 Million Tokens Unlocks for Business

The shift from thousands of tokens to millions transforms LLMs from sophisticated chat partners into true information processors. The practical implications span nearly every knowledge-based industry.

The End of Incremental RAG?

Currently, businesses build complex RAG systems: databases stuffed with company data that a retrieval step queries *before* the LLM answers. This process is necessary because older models couldn't read the whole database at once. With a 2M-token context, the architecture changes. Why query a separate database when you can feed the model the relevant 50 PDFs, 10 design specs, and 5 recent meeting transcripts all at once?

- Legal and Compliance: Instead of reviewing summaries, an AI can ingest every clause of a massive international contract, cross-reference it against all historical regulatory changes, and flag conflicts—all in one prompt.

- Software Engineering: Developers can feed an entire, complex codebase (tens of thousands of lines) into the model to debug deeply nested errors, suggest refactoring across modules, or integrate new features coherently.

- Scientific Research: Researchers can input 10 years of journal abstracts on a niche topic to synthesize a literature review instantly, catching subtle connections missed by traditional search algorithms.
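Before "feeding everything at once" in any of the scenarios above, it helps to check whether the material actually fits. The following is a rough sketch under stated assumptions: the 2,000,000-token limit is the figure reported in this article, the 4-characters-per-token ratio is a crude heuristic for English text rather than a real tokenizer, and the function and file names are hypothetical.

```python
import math
from typing import Dict, Tuple

CONTEXT_WINDOW_TOKENS = 2_000_000  # reported figure, treat as an assumption
CHARS_PER_TOKEN = 4                # crude heuristic, not a real tokenizer


def estimate_tokens(text: str) -> int:
    """Approximate token count from character length."""
    return math.ceil(len(text) / CHARS_PER_TOKEN)


def fits_in_context(documents: Dict[str, str],
                    reserved_for_answer: int = 8_000) -> Tuple[bool, int]:
    """Return (fits, estimated_total_tokens) for a set of documents."""
    total = sum(estimate_tokens(body) for body in documents.values())
    budget = CONTEXT_WINDOW_TOKENS - reserved_for_answer
    return total <= budget, total


# Stand-in documents; real code would read file contents instead.
docs = {
    "design_spec.md": "x" * 400_000,
    "meeting_notes.txt": "y" * 120_000,
}
ok, total = fits_in_context(docs)
print(f"estimated tokens: {total:,} -> fits: {ok}")
```

For real decisions, the estimate should come from the tokenizer of the model being used, since token counts vary widely across languages and content types such as source code.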

For Application Developers: This is a mandate to rethink information architecture. Future successful enterprise applications will be those that utilize native context depth rather than relying on external, often imperfect, retrieval augmentation.

V. The Road Ahead: Actionable Insights for Navigating the Context Era

The developments highlighted by Kimi 2.5 are not a niche feature; they represent the next generation of enterprise AI utility. Here are actionable steps for leaders and technologists to prepare for this new reality.

1. Re-evaluate Data Preparation Workflows

If your current LLM strategy is heavily reliant on optimizing vector databases and chunking strategies for RAG, understand that this overhead may soon become obsolete for certain use cases. Focus R&D on structuring data for direct ingestion (e.g., optimizing documentation formatting for bulk upload) rather than optimized retrieval indexing.

2. Prioritize Context Fidelity Testing

When evaluating any new long-context model—Kimi, Claude, or others—do not accept the token count at face value. Design rigorous "needle-in-a-haystack" tests tailored to your most critical business documents. Test retrieval accuracy at the beginning, middle, and end of the window to confirm true utility.
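A test of this kind can be sketched in a few lines. The harness below is a minimal illustration, not a standard benchmark: `ask_model` is a hypothetical callable that you would replace with a call to whichever model you are evaluating, and `toy_model` is a deliberately flawed stand-in that only "sees" the first and last 300 characters, so that the harness has something to catch.

```python
from typing import Callable, Dict, List, Sequence


def build_haystack(filler: List[str], needle: str, depth: float) -> str:
    """Insert the needle at a relative depth: 0.0 is the start, 1.0 the end."""
    position = round(depth * len(filler))
    return " ".join(filler[:position] + [needle] + filler[position:])


def run_needle_test(ask_model: Callable[[str], str], filler: List[str],
                    needle: str, question: str, expected: str,
                    depths: Sequence[float] = (0.0, 0.5, 1.0)) -> Dict[float, bool]:
    """Record, for each depth, whether the expected answer appears in the reply."""
    results = {}
    for depth in depths:
        prompt = build_haystack(filler, needle, depth) + "\n\nQuestion: " + question
        answer = ask_model(prompt)
        results[depth] = expected.lower() in answer.lower()
    return results


def toy_model(prompt: str) -> str:
    """Stand-in model (hypothetical) that ignores the middle of its input."""
    visible = prompt[:300] + prompt[-300:]
    return "The code is 7431." if "7431" in visible else "I don't know."


filler = ["The quarterly report was filed on time."] * 200
results = run_needle_test(
    toy_model,
    filler,
    needle="The vault access code is 7431.",
    question="What is the vault access code?",
    expected="7431",
)
print(results)
```

With the toy model, the needle is found at the start and end but missed in the middle. In practice you would use your own documents as filler, vary the depths more finely, and repeat at several total lengths up to the advertised limit.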

3. Watch the Chinese Ecosystem Closely

The rapid, competitive innovation cycle seen in China suggests that agility in solving core technical hurdles (like context scaling) yields immediate results. Monitoring Moonshot AI and its rivals provides a leading indicator for where global LLM capabilities will be in 12 to 18 months, particularly in efficiency and cost-effectiveness.

4. The Architectural Convergence

We are seeing a convergence where the world’s best models, regardless of geography, are solving the same core engineering problems using advanced attention mechanisms. The differentiator is rapidly moving from "can it hold the data?" to "how fast and how cheaply can it process it?" Cost modeling for inference at massive context lengths will become the next major competitive battleground.
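A first-pass cost model for this comparison is simple arithmetic. The sketch below assumes plain per-token pricing, and the prices are placeholders chosen for illustration, not any vendor's actual rates. The 8,000-token "retrieved" prompt is likewise an assumed figure for a RAG-style request.

```python
import math


def prompt_cost_usd(input_tokens: int, output_tokens: int,
                    usd_per_million_input: float,
                    usd_per_million_output: float) -> float:
    """Cost of one request under simple per-token pricing."""
    return ((input_tokens / 1_000_000) * usd_per_million_input
            + (output_tokens / 1_000_000) * usd_per_million_output)


# Placeholder prices, NOT real vendor rates.
PRICE_IN = 1.00   # USD per million input tokens (assumption)
PRICE_OUT = 3.00  # USD per million output tokens (assumption)

full_context = prompt_cost_usd(2_000_000, 1_000, PRICE_IN, PRICE_OUT)
retrieved = prompt_cost_usd(8_000, 1_000, PRICE_IN, PRICE_OUT)

print(f"full 2M-token prompt: ${full_context:.3f} per request")
print(f"8K retrieved prompt:  ${retrieved:.3f} per request")
print(f"ratio: {full_context / retrieved:.0f}x")
```

Under these assumed prices, every question asked against a full window costs a couple of hundred times more than the retrieved version, before accounting for latency. Features such as prompt caching, where offered, change this picture and would need to be modeled separately.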

Kimi 2.5 is more than a software release; it is a proof point that the scaling limitations we once accepted in LLMs are yielding to focused engineering breakthroughs. The future of AI belongs to those models that can remember everything relevant to the task at hand, and Moonshot AI has firmly planted its flag at the forefront of that revolution.