Nvidia's $20B OpenAI Bet: The Hidden Calculus of AI Dominance and Compute Power

The technology world is abuzz with reports suggesting that Nvidia, the undisputed champion of AI processing chips, is set to invest a staggering $20 billion in OpenAI's latest funding round. While the headline figures and the subsequent downplaying of executive tensions grab immediate attention, the true significance of this transaction extends far beyond finance. This is not just a capital infusion; it is a deeply strategic move that solidifies the interdependence between the leading architect of AI hardware and one of the most advanced developers of AI software.

As an AI technology analyst, I see this as a critical juncture where software ambition meets silicon reality. For the business executive, the CTO, and the everyday user, understanding this partnership illuminates where the next bottlenecks, and breakthroughs, in artificial intelligence are likely to occur.

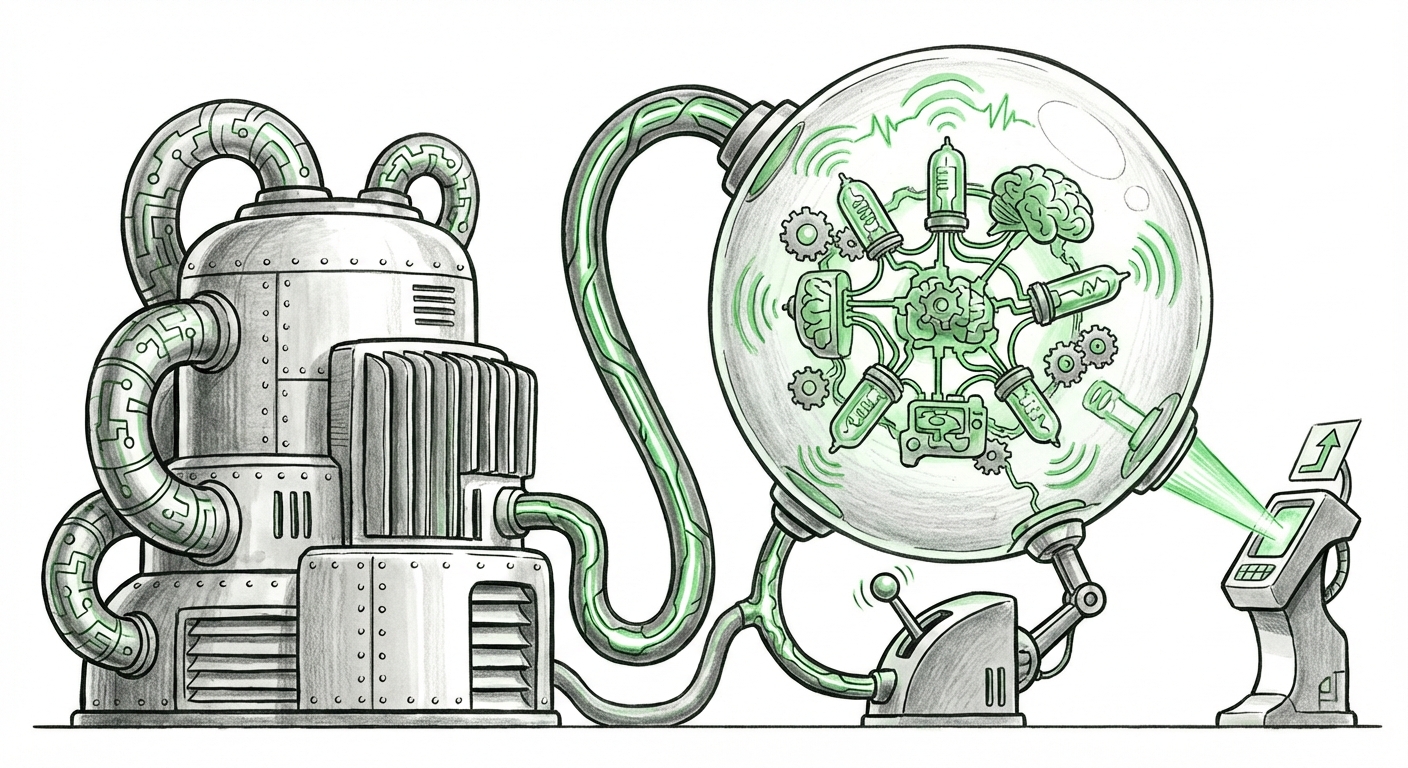

The Symbiosis of Silicon and Superintelligence

To understand the $20 billion figure, we must first appreciate the scale of modern AI training. Training a model like GPT-4 reportedly required thousands of the most powerful GPUs (Graphics Processing Units) running constantly for months. The next generation of frontier models, often discussed in terms of "trillion-parameter scale," will require compute power previously reserved for national labs.
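To make "thousands of GPUs for months" concrete, the back-of-the-envelope sketch below uses a widely used rule of thumb from the scaling-law literature: training a dense transformer costs roughly 6 × N × D floating-point operations, where N is the parameter count and D the number of training tokens. Every input is a hypothetical assumption chosen for illustration (parameter count, token count, sustained per-GPU throughput, cluster size), not a reported figure for GPT-4 or any OpenAI model.

```python
SECONDS_PER_DAY = 86_400


def training_flops(params: float, tokens: float) -> float:
    """Approximate training compute for a dense transformer: ~6 * N * D FLOPs."""
    return 6.0 * params * tokens


def training_days(params: float, tokens: float,
                  sustained_flops_per_gpu: float, num_gpus: int) -> float:
    """Wall-clock days, assuming work divides perfectly across num_gpus.

    sustained_flops_per_gpu is the *achieved* throughput (peak FLOP/s times
    utilization); it is an assumption the caller must supply.
    """
    total = training_flops(params, tokens)
    gpu_seconds = total / sustained_flops_per_gpu
    return gpu_seconds / num_gpus / SECONDS_PER_DAY


if __name__ == "__main__":
    # Hypothetical inputs, for illustration only.
    params = 1e12    # a "trillion-parameter" dense model
    tokens = 2e13    # 20 trillion training tokens
    per_gpu = 4e14   # 400 TFLOP/s sustained per GPU (assumed)
    for gpus in (10_000, 20_000, 50_000):
        days = training_days(params, tokens, per_gpu, gpus)
        print(f"{gpus:>6} GPUs -> {days:,.0f} days")
```

Under these assumptions, a 20,000-GPU cluster would need roughly six months of continuous training. Real runs take longer, because the formula ignores restarts, failed experiments, and communication overhead between GPUs.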

Nvidia's current chips, such as the H100 and the anticipated Blackwell series, are what keep this AI engine running. Without them, OpenAI would hit a hard computational wall. This investment, therefore, serves multiple purposes for both companies:

- Securing Future Supply: It would likely give OpenAI priority access to future, highly constrained chip releases.

- Validation of Strategy: It publicly validates Nvidia’s market position as the essential infrastructure provider for all serious AI players.

- Downstream Revenue Certainty: It effectively pre-commits a massive future customer to purchasing hundreds of millions (if not billions) of dollars' worth of hardware over the next few years.

Corroborating analysis suggests that demand for these chips vastly outstrips supply. Market reports detailing the backlog for Nvidia's AI accelerators (Source 1 focus) underscore that access, not just cost, is the defining scarcity in building state-of-the-art AI. Nvidia's investment acts as a high-level reservation system for the future of AI computation.

The Complex Web of Partnerships: Microsoft, Azure, and Nvidia

OpenAI's relationship with Microsoft is foundational; Azure is its primary cloud infrastructure. So where does Nvidia fit into this established cloud dynamic? This is where the strategic calculus becomes delicate.

Microsoft is heavily invested in OpenAI and is also a gigantic purchaser of Nvidia hardware for its Azure data centers. Nvidia's direct investment may signal a desire to maintain a direct line to the software frontier, potentially bypassing the cloud-provider layer or at least reducing its dependence on it. While reports often smooth over executive tensions, the reality is that control over compute access is a constant source of negotiation.

By investing directly, Nvidia gains a seat at the table in discussions of OpenAI's long-term compute roadmap. This helps ensure that, as OpenAI designs future training regimes, Nvidia's hardware architecture, which is optimized for its CUDA software ecosystem, remains the default choice. This dynamic is crucial for venture capital and corporate strategy teams (Source 2 focus), as it reveals that the AI race isn't just about algorithms; it's about who controls the pipes connecting the algorithms to the power.

The Cost of Ambition: Training the Next Generation

The reported $20 billion figure, said to be less than earlier rumors, still signals that OpenAI's compute needs are astronomical. Industry estimates for training the next generational leap in large language models (LLMs) often reach into the tens of billions of dollars for hardware alone (Source 3 focus). The reported investment is therefore likely just one component of a much larger, multi-stage capital expenditure plan required to move past GPT-4 and toward systems capable of more generalized reasoning.

For a business audience, this means that the entry barrier for competing at the absolute frontier of AI (what we might call "AGI research") is no longer solely about having brilliant researchers; it is now overwhelmingly about securing the capital to fund massive, multi-year hardware procurement cycles.

Implications for Businesses: Actionable Insights Now

If you are a CTO or business leader looking to leverage AI, Nvidia’s strategy provides critical guidance:

- Prioritize Your Compute Strategy: If your AI goals require training large, novel foundation models, you must secure long-term supply contracts with cloud providers or directly with Nvidia/OEMs *now*. Waiting means competing for remaining inventory against giants like OpenAI and Google.

- Master Inference, Not Just Training: While training is expensive, running these models (inference) at scale is the long-term cost center. Companies should aggressively explore smaller, highly specialized models or leverage techniques like quantization and distillation to run powerful models efficiently on less expensive hardware.

- Watch the Ecosystem Wars: Nvidia's dominance relies heavily on its proprietary CUDA software platform. While CUDA is the industry standard today, technological stagnation or a sudden pivot by a major player could shift the landscape.
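Quantization, one of the inference-cost techniques named in the list above, is simple enough to illustrate directly. The sketch below shows symmetric per-tensor int8 quantization in plain Python: each 32-bit float weight becomes an 8-bit integer plus one shared scale factor, cutting weight storage roughly fourfold at the cost of a small rounding error. It is a toy under stated assumptions (a flat list of hypothetical weights, a single scale per tensor); production toolchains add per-channel scales, calibration data, and optimized hardware kernels.

```python
import struct


def quantize_int8(weights: list[float]) -> tuple[list[int], float]:
    """Symmetric per-tensor int8 quantization: w ~= q * scale, q in [-127, 127]."""
    max_abs = max((abs(w) for w in weights), default=0.0)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0  # avoid divide-by-zero on all-zero input
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize_int8(q: list[int], scale: float) -> list[float]:
    """Recover approximate float weights from int8 codes and the shared scale."""
    return [v * scale for v in q]


if __name__ == "__main__":
    weights = [0.82, -1.37, 0.05, 2.54, -0.61, 1.12]  # hypothetical weights
    q, scale = quantize_int8(weights)
    restored = dequantize_int8(q, scale)

    # Pack both representations to compare their actual byte sizes.
    fp32_bytes = len(struct.pack(f"<{len(weights)}f", *weights))
    int8_bytes = len(struct.pack(f"<{len(q)}b", *q))
    max_err = max(abs(a - b) for a, b in zip(weights, restored))

    print(f"int8 codes: {q}")
    print(f"fp32: {fp32_bytes} B, int8: {int8_bytes} B (+ one scale)")
    print(f"max reconstruction error: {max_err:.4f} (bound: scale/2 = {scale / 2:.4f})")
```

Because every code is rounded to the nearest multiple of `scale`, no reconstructed weight is off by more than half a step; the trade-off a team tunes in practice is how much of that error a given model can tolerate.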

The Competitive Check: Alternative Silicon Matters

Nvidia's confidence, evidenced by this investment, is currently justified by its technological lead. However, a healthy AI ecosystem requires competition. If Nvidia maintains an insurmountable monopoly on the fastest chips, innovation outside its ecosystem could stall for lack of accessible, high-performance alternatives.

Therefore, it is vital to monitor rivals such as Google, with its Tensor Processing Units (TPUs), and emerging threats from AMD and others (Source 4 focus). These alternatives offer different trade-offs in performance, cost, and programmability. Should one of these competitors deliver a chip that performs comparably to the H100/Blackwell series at a significantly lower total cost of ownership (TCO), the leverage would shift dramatically.
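The TCO argument can be made concrete with a simple model. The sketch below amortizes purchase price, electricity, and a flat yearly operations cost over a service life, then divides by relative throughput to get cost per unit of delivered performance. All prices, power draws, and performance ratios are hypothetical placeholders, not vendor figures, and the `Accelerator` type and helper functions are illustrative names, not any real library's API.

```python
from dataclasses import dataclass

HOURS_PER_YEAR = 365 * 24


@dataclass(frozen=True)
class Accelerator:
    name: str
    unit_price_usd: float    # purchase price per chip (hypothetical)
    power_kw: float          # average draw under load (hypothetical)
    relative_perf: float     # throughput relative to a baseline chip = 1.0
    ops_usd_per_year: float  # hosting, cooling, support, etc. (hypothetical)


def total_cost_of_ownership(acc: Accelerator, years: float, usd_per_kwh: float) -> float:
    """Purchase price + energy + operations over the service life."""
    energy_usd = acc.power_kw * HOURS_PER_YEAR * years * usd_per_kwh
    return acc.unit_price_usd + energy_usd + acc.ops_usd_per_year * years


def tco_per_unit_perf(acc: Accelerator, years: float, usd_per_kwh: float) -> float:
    """TCO normalized by relative throughput: lower is better."""
    return total_cost_of_ownership(acc, years, usd_per_kwh) / acc.relative_perf


if __name__ == "__main__":
    baseline = Accelerator("incumbent GPU", 30_000, 1.0, 1.0, 2_000)
    rival = Accelerator("rival accelerator", 18_000, 0.9, 0.85, 2_000)
    for acc in (baseline, rival):
        cost = tco_per_unit_perf(acc, years=4, usd_per_kwh=0.10)
        print(f"{acc.name}: ${cost:,.0f} per unit of baseline performance")
```

With these illustrative inputs, the cheaper rival wins on cost per unit of performance despite being slower; modest changes to its price or performance ratio can erase that edge, which is why TCO rather than peak speed is the number to watch.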

For now, the investment confirms that OpenAI needs Nvidia GPUs for its immediate roadmap, and Nvidia needs OpenAI’s success to justify its staggering valuation and ongoing R&D into next-generation accelerators.

The Future Trajectory: From Vertical Integration to Compute Monopolies

Above all, this financial maneuver points to the increasing vertical integration of the AI stack.

In the early days of deep learning, researchers could train decent models on consumer-grade GPUs. Today, the gap between the "frontier" (OpenAI, Google DeepMind) and the rest of the field is widening because of compute access. When the foundational compute layer (Nvidia) invests directly in the application layer (OpenAI), it creates a near-insurmountable moat. This consolidation of capital, talent, and hardware means the leading AI capabilities will be developed within a very small number of organizations.

This has profound societal implications. Whoever controls the most advanced AI models will possess unprecedented economic and informational power, and Nvidia is ensuring it remains indispensable to that process. The phrase "proceed one step at a time," attributed to the executives, suggests careful, deliberate scaling, but that scaling requires committed capital that only giants like Nvidia can reliably provide.

We are moving toward an era where AI progress depends less on algorithmic novelty and more on the ability to fund and deploy compute resources at industrial, national scale. The $20 billion investment is not just a purchase order; it is a strategic declaration of partnership at the very peak of the AI hierarchy.