The Digital Dust-Up: Why Evidence Destruction in the xAI vs. OpenAI Feud Threatens AI Transparency

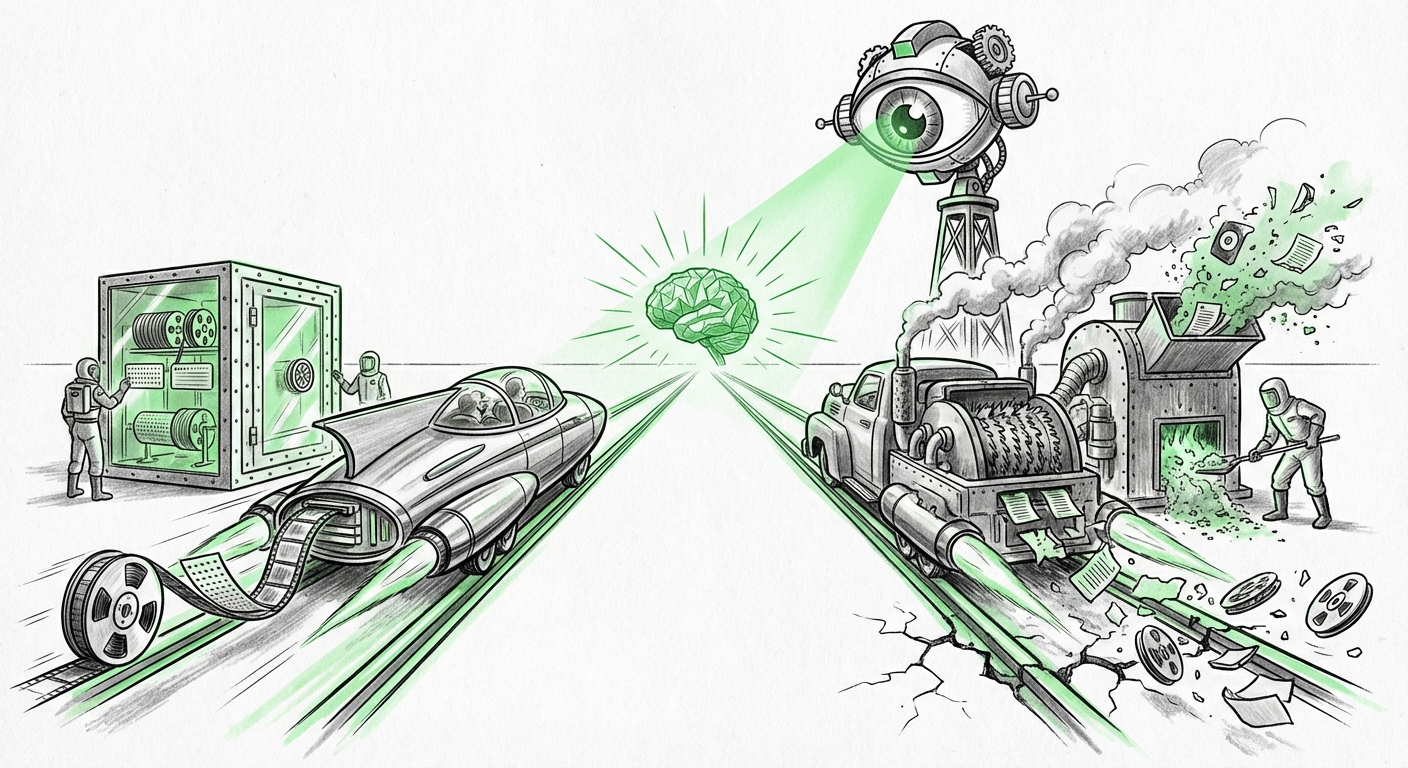

The race to Artificial General Intelligence (AGI) is not just being fought in server farms and research labs; it is increasingly being fought in courtrooms. The latest escalation in the public feud between OpenAI and Elon Musk’s xAI—specifically, OpenAI’s accusation that xAI has engaged in the "systematic and intentional destruction" of evidence—is more than just corporate drama. It is a critical stress test for the transparency, accountability, and legal oversight required for the next generation of AI.

For analysts, investors, and regulators, this situation demands an immediate deep dive. We must look beyond the sensational headlines to understand what this procedural fight signals about the future of proprietary model development and the standards of legal discovery in this rapidly evolving technological landscape.

The Legal Crucible: When Data Vanishes in High-Stakes Tech Litigation

In any major lawsuit, especially those involving intellectual property, trade secrets, or antitrust claims, the "discovery" phase is paramount. Discovery is the formal process where parties exchange evidence—emails, documents, code repositories, and, crucially for AI, training logs and model architecture details. OpenAI is essentially claiming that xAI, under Musk’s leadership, has attempted to erase the digital trail related to their early work and interactions.

To assess this, one must examine the historical legal precedent. We are dealing with claims that evoke past battles against tech giants where the sheer volume of digital information (the "data deluge") was sometimes weaponized to obscure facts. However, accusing a party of *systematic destruction* is far more severe than alleging a mere failure to preserve everything. In legal terms it is an allegation of spoliation, and it implies intent to hide wrongdoing.

What this means for technology companies: Whether or not the allegations against xAI are proven, the accusation alone raises the compliance stakes. Any company developing large foundation models needs data preservation protocols that take effect the moment litigation is reasonably foreseeable, not when a complaint is served. Failure to do so, as explored in analyses of past tech discovery disputes, can lead to severe sanctions, including adverse jury instructions or even default judgments. For startups, litigation risk management now includes pre-emptive retention policies and litigation holds that cover training logs, model checkpoints, and internal messaging.

For further context on how courts handle digital evidence disputes, the primary sources to watch are the motions OpenAI has filed, which set out the specific evidence it says was destroyed, along with legal-press coverage of how the court responds.

The Philosophical Chasm: AGI, Openness, and Competitive Advantage

This legal entanglement is a direct consequence of the ideological rupture between Elon Musk and Sam Altman/OpenAI. Musk co-founded OpenAI with the stated goal of developing AGI for the benefit of humanity, often advocating for an open or non-profit structure. He has publicly argued that OpenAI’s current trajectory—heavily backed by Microsoft and focused on proprietary, closed models—is a betrayal of that foundational mission.

The lawsuit is rooted in Musk’s claim that OpenAI breached its founding contract by prioritizing commercial gain over open science. The evidence OpenAI seeks is likely aimed at showing that xAI benefited from proprietary OpenAI knowledge or technology that was shared under the initial, more open premise. If xAI did destroy evidence, the natural inference is that it was trying to shield material bearing on whether that transfer of knowledge took place.

What this means for the future of AI development: This conflict illuminates the central tension defining the next decade of AI: Open vs. Closed.

- The Closed Camp (e.g., OpenAI, Google DeepMind): Argues that the immense compute and data required for frontier models necessitate secrecy to protect massive investments and maintain a lead against bad actors.

- The Open Camp (e.g., Meta’s Llama releases, Musk’s stated goals): Argues that safety and equitable access require transparency so that the global community can scrutinize models for bias, security flaws, and alignment issues.

When disputes move from academic debate to litigation centered on destroyed evidence, it pushes the entire industry toward greater scrutiny of *intent*. The perception that secretive development is being protected by legal maneuvers erodes public trust—a commodity essential for widespread AI adoption.

The philosophical rift is well documented in technology reporting that covered Musk’s departure from the OpenAI board in 2018, contrasting his early advocacy for open AGI with the company's current structure.

The Regulatory Tightrope: Demanding Transparency in the Black Box Era

Perhaps the most significant implication of this case lies in its impact on nascent AI regulation globally. Policymakers are scrambling to establish guardrails for technologies that learn and evolve rapidly. Regulators, from the European Union with its AI Act to US agencies like the FTC, are struggling with one core problem: how to audit a complex, proprietary "black box" model.

If a company like xAI can be credibly accused of deleting core development data, it suggests that relying solely on voluntary compliance or self-reporting from AI labs is insufficient. The current fight over discovery in the OpenAI case will inform future regulatory mandates:

- Mandatory Audit Trails: Regulators may be compelled to require standardized, immutable logging of all major training runs, data provenance, and architectural pivots—data that OpenAI is likely seeking from xAI.

- Increased Litigation Risk for IP Theft: As trained models become the most valuable assets their builders own, the temptation to illegally leverage competitor data will rise. Courts will need clearer rules on what constitutes digital trade secret theft in the context of iterative model training.
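The "immutable logging" idea in the first bullet above can be illustrated with a hash chain, in which every log entry commits to the one before it. The following is a minimal sketch under stated assumptions, not a description of any lab's or regulator's actual system: the function names (`append_entry`, `verify_chain`) and the event fields are hypothetical, and a real deployment would also need write-once storage and an externally held copy of the latest hash.

```python
import hashlib
import json

# Placeholder "previous hash" for the first entry in the chain.
GENESIS = "0" * 64


def _digest(prev_hash: str, payload: dict) -> str:
    """Hash the previous entry's hash together with a canonical JSON payload."""
    body = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()


def append_entry(log: list, payload: dict) -> dict:
    """Append an event (hypothetical schema) that commits to the prior entry."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    entry = {
        "payload": payload,
        "prev_hash": prev_hash,
        "hash": _digest(prev_hash, payload),
    }
    log.append(entry)
    return entry


def verify_chain(log: list) -> bool:
    """Recompute every hash; an edited or removed interior entry breaks the chain."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev_hash"] != prev_hash:
            return False
        if entry["hash"] != _digest(prev_hash, entry["payload"]):
            return False
        prev_hash = entry["hash"]
    return True


if __name__ == "__main__":
    log = []
    append_entry(log, {"event": "training_run_started", "run_id": "run-001"})
    append_entry(log, {"event": "dataset_changed", "dataset": "web-crawl-v2"})
    print(verify_chain(log))
```

A chain like this makes quiet edits and interior deletions detectable, but it does not by itself reveal that the most recent entries were cut off the end. That is why an auditor would also need the latest hash stored somewhere the logging party cannot rewrite.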

This issue transcends the Musk/OpenAI rivalry. It touches upon the very real possibility that future foundation models (the engines behind most advanced AI applications) could be built on stolen or improperly sourced data, yet provide no external mechanism for verification. If companies can successfully destroy evidence proving improper data sourcing, the incentive structure for ethical data acquisition collapses.

The ongoing debate around the EU AI Act highlights this regulatory challenge, specifically concerning the transparency and risk assessment obligations placed on providers of "general-purpose AI models" (GPAI models).

Unpacking the Underlying Data Question: What Was xAI Potentially Hiding?

To understand the urgency behind OpenAI's accusation, we must consider the context of xAI’s product, Grok. Grok is trained on massive datasets, heavily leveraging the real-time information flow from X (formerly Twitter). The core question in this litigation is: Did xAI use proprietary OpenAI materials (such as early GPT-4 training methodology or specific dataset structures) while developing Grok, particularly in its early stages?

If xAI is destroying evidence, it suggests the hidden material could reveal:

- Direct transfer of OpenAI confidential data.

- Evidence of using X data in ways that violate prior agreements or copyright, especially concerning data that may have been co-mingled or filtered through OpenAI systems during the parties' collaboration period.

For technology analysts, this means paying close attention to any investigative reports concerning xAI’s data sourcing. Any smoking gun suggesting that xAI rapidly accelerated its capabilities by appropriating OpenAI secrets would go a long way toward justifying OpenAI’s aggressive legal posture.

Implications and Actionable Insights for Stakeholders

This ongoing legal showdown serves as a stark warning for the entire AI ecosystem. The future of AGI development depends heavily on establishing trust, and trust requires auditable processes.

For Business Leaders and Investors: Risk Mitigation

Actionable Insight: Assume Future Scrutiny. Treat all proprietary data related to model training and architecture as if it will be subpoenaed tomorrow. Implement rigorous version control and access logs for every key development milestone. Furthermore, make sure your legal counsel has expertise not just in software IP, but specifically in the emerging field of digital evidence preservation for machine learning artifacts.

For Technologists and Developers: Documentation as Defense

Actionable Insight: Prioritize Provenance. Every training run, every dataset modification, and every architectural change must be immutably logged. If you are training models using public data, you must be able to show exactly what went into each run, so that a claim that you trained on proprietary competitor output can be checked against records rather than recollection. This level of documentation moves from 'good practice' to 'essential operational security.'
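One way to make provenance concrete is to record a content fingerprint of every dataset alongside the configuration of each training run. The sketch below is illustrative only and rests on stated assumptions: the names `build_manifest` and `changed_datasets`, the manifest fields, and the in-memory byte strings standing in for dataset files are all hypothetical, and real pipelines would hash files in chunks and store manifests in tamper-evident storage.

```python
import hashlib
import json


def sha256_hex(data: bytes) -> str:
    """Content fingerprint: any change to the bytes yields a different digest."""
    return hashlib.sha256(data).hexdigest()


def build_manifest(run_id: str, datasets: dict, config: dict) -> dict:
    """Record what went into a training run (hypothetical manifest schema).

    `datasets` maps a dataset name to its raw bytes; in practice these would
    be files on disk, hashed in chunks.
    """
    manifest = {
        "run_id": run_id,
        "datasets": {name: sha256_hex(blob) for name, blob in sorted(datasets.items())},
        "config": config,
    }
    # The manifest ID commits to everything above, so the record itself
    # cannot be altered without the ID changing.
    canonical = json.dumps(manifest, sort_keys=True, separators=(",", ":"))
    manifest["manifest_id"] = sha256_hex(canonical.encode("utf-8"))
    return manifest


def changed_datasets(old: dict, new: dict) -> list:
    """List the dataset names whose fingerprints differ between two manifests."""
    names = set(old["datasets"]) | set(new["datasets"])
    return sorted(n for n in names if old["datasets"].get(n) != new["datasets"].get(n))


if __name__ == "__main__":
    first = build_manifest("run-001", {"web": b"crawl v1", "code": b"repos v1"}, {"lr": 0.001})
    second = build_manifest("run-002", {"web": b"crawl v2", "code": b"repos v1"}, {"lr": 0.001})
    print(changed_datasets(first, second))
```

A manifest like this does not prove where data came from, only that a given run used exactly these bytes. It is the record that lets a later dispute about sourcing be settled by comparing fingerprints instead of testimony.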

For Policymakers: Closing the Loopholes

Actionable Insight: Mandate Digital Forensics Readiness. Legislation must move beyond merely regulating the *output* of AI models to regulating the *process*. This includes establishing clear, internationally recognized standards for evidence preservation specific to large-scale neural networks, ensuring that the promise of AGI safety is not undermined by the ability to erase the history of its creation.

Conclusion: The High Cost of Secrecy

The accusations leveled by OpenAI against xAI are a loud signal that the period of relatively informal development and competitive maneuvering in the AGI race is ending. The stakes are too high, and the potential competitive advantage too vast, for data oversight to be treated as an afterthought.

If the courts uphold OpenAI’s claims regarding systematic evidence destruction, it will set a powerful precedent: In the age of AGI, accountability cannot be deleted. The long-term success of this transformative technology relies not just on its intelligence, but on the integrity and transparency of the organizations building it. This legal battle is shaping the ground rules for how that integrity will be enforced.