The Small Model Revolution: Why 3 Billion Parameters Is the New Hundred-Billion Standard

For years, the story of Artificial Intelligence was one of relentless scaling. If you wanted better performance, you needed more parameters—hundreds of billions, or even trillions. The race was defined by sheer computational might. However, a recent announcement from Alibaba is forcing a critical reassessment of this paradigm: their new coding model, Qwen3-Coder-Next, delivers performance comparable to significantly larger models while running on a mere 3 billion active parameters.
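The phrase "active parameters" comes from sparse mixture-of-experts (MoE) designs. In these designs, a router sends each token to only a few expert sub-networks, so only a fraction of the stored weights do work on any given token. The sketch below is a minimal illustration using assumed toy sizes (8 experts, 2 active per token). The constants and helper names are hypothetical and do not describe Qwen3-Coder-Next's actual configuration.

```python
import math
import random

# Toy sizes chosen for illustration only (hypothetical, not any real model's config).
NUM_EXPERTS = 8
TOP_K = 2
PARAMS_PER_EXPERT = 1_000_000


def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def route(router_logits, k=TOP_K):
    """Pick the k highest-scoring experts and renormalize their gate weights."""
    ranked = sorted(range(len(router_logits)), key=lambda i: router_logits[i], reverse=True)
    top = ranked[:k]
    gates = softmax([router_logits[i] for i in top])
    return list(zip(top, gates))


def parameter_counts(num_experts=NUM_EXPERTS, k=TOP_K, per_expert=PARAMS_PER_EXPERT):
    """All experts are stored ("total"), but only k run for a given token ("active")."""
    return {"total": num_experts * per_expert, "active": k * per_expert}


if __name__ == "__main__":
    random.seed(0)
    logits = [random.gauss(0.0, 1.0) for _ in range(NUM_EXPERTS)]  # stand-in router scores
    print(route(logits))       # two (expert_index, gate_weight) pairs
    print(parameter_counts())  # {'total': 8000000, 'active': 2000000}
```

Per-token compute scales with the active count, while memory must hold the total. Real MoE layers also include attention and other shared weights that every token uses, which this toy omits.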

This isn't just an incremental update; it’s a powerful signal that the industry is entering the era of the "Small but Mighty" (SBM) model. As an AI technology analyst, I see this development as the most significant trend reshaping enterprise AI deployment, cost structures, and the very nature of where intelligence resides.

The Shift: From Brute Force to Engineering Finesse

The traditional view, rooted in early scaling laws, held that capability tracks model size closely. GPT-3 had 175B parameters, and matching its reasoning was assumed to require a model of comparable scale. Qwen3-Coder-Next challenges this assumption. It suggests that specialized architectures and better training methodologies can unlock substantial capability in a compact footprint.
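For reference, the early scaling laws referenced here are usually written as a power law. Kaplan et al. (2020) fit language-model test loss against non-embedding parameter count N roughly as follows, with N_c a fitted constant:

```latex
L(N) \approx \left(\frac{N_c}{N}\right)^{\alpha_N}, \qquad \alpha_N \approx 0.076
```

Because loss keeps falling as N grows, this fit encouraged scaling parameter count. However, it says nothing about data quality or specialization, since it was fit on a single, fixed data distribution.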

Corroborating the Efficiency Wave

Alibaba’s achievement doesn’t exist in a vacuum. It is the culmination of several concurrent industry efforts focused on parameter efficiency. To understand the magnitude of this shift, we must look at the broader trends supporting the SBM movement:

- SLMs vs. LLMs Benchmarks: Researchers are actively tracking how Small Language Models (SLMs) compare with their massive counterparts. Some models under 10 billion parameters now post competitive scores on benchmarks like HumanEval (functional correctness of generated code, checked by unit tests) and MMLU (multiple-choice questions across 57 subjects). Results like these suggest that data quality and training focus can offset size deficits. This benchmarking is vital for CTOs deciding where to invest development resources.

- The Code Specialization Trend: The focus on coding models highlights the viability of highly efficient, domain-specific tools. Just as specialist tools outperform generalists in manufacturing, small code models show that knowledge retrieval and structured generation benefit greatly from targeted training and fine-tuning, even when parameters are limited. Microsoft's Phi series echoes this by emphasizing carefully curated, "textbook-quality" training data over sheer parameter count.
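HumanEval scores are usually reported as pass@k: the probability that at least one of k sampled completions passes a problem's unit tests. Below is a minimal Python sketch of the unbiased estimator described in the HumanEval paper (Chen et al., 2021). The function names are illustrative, and a real harness would also need sandboxed test execution, which is omitted here.

```python
from math import comb


def pass_at_k(n: int, c: int, k: int) -> float:
    """Unbiased pass@k estimate for one problem.

    n: samples generated, c: samples that passed the unit tests, k: budget (k <= n).
    """
    if n - c < k:
        # Every size-k subset of the samples contains at least one correct one.
        return 1.0
    # 1 - P(all k drawn samples are incorrect)
    return 1.0 - comb(n - c, k) / comb(n, k)


def benchmark_score(results, k: int) -> float:
    """Mean pass@k over problems; results is a list of (n, c) pairs."""
    return sum(pass_at_k(n, c, k) for n, c in results) / len(results)
```

For example, with 10 samples per problem of which 3 pass, `pass_at_k(10, 3, 1)` gives about 0.3. Larger k rewards models that solve a problem at least occasionally.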

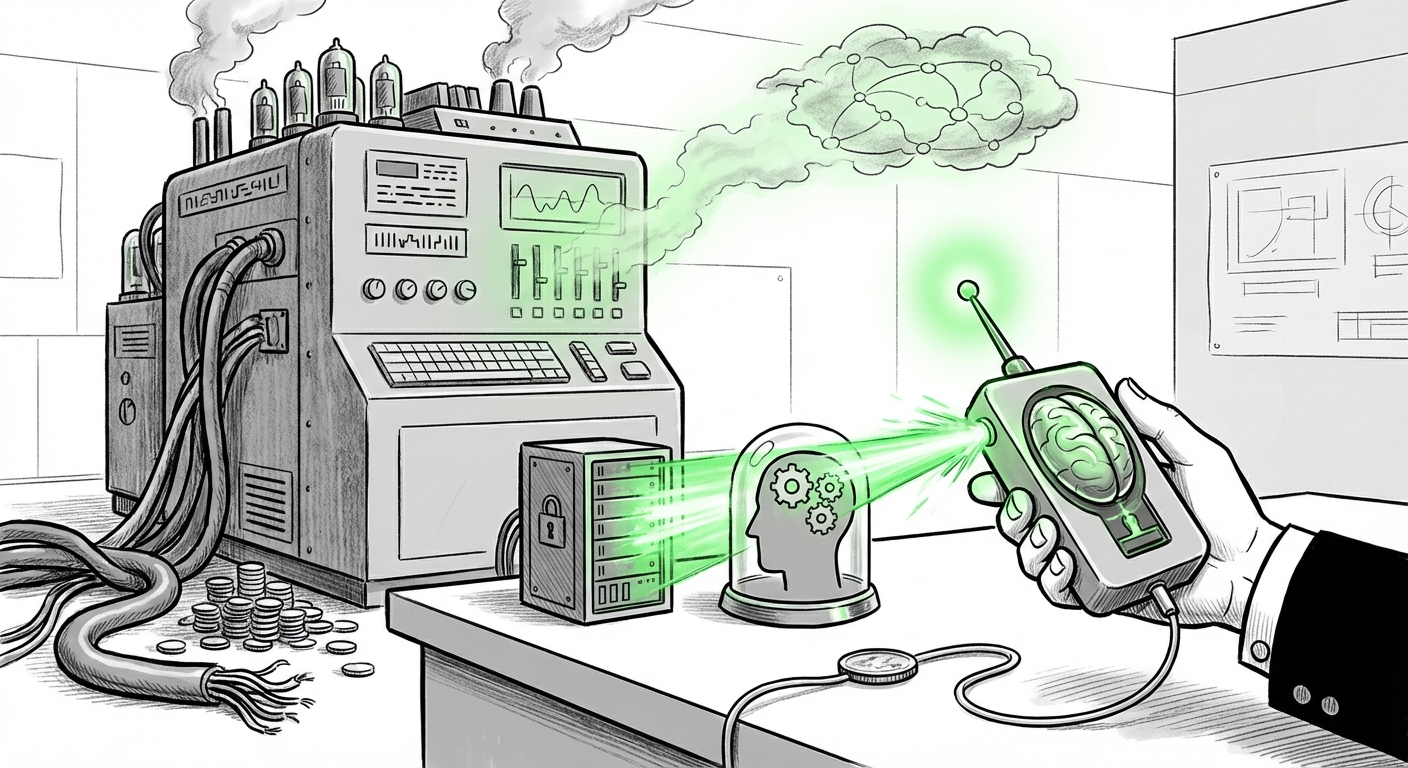

The techniques that typically enable this kind of efficiency include:

- Advanced quantization: storing weights at lower numeric precision, such as 8 or 4 bits instead of 16.
- Structured sparsity: removing weights in regular patterns that hardware can skip efficiently.
- Meticulous curriculum learning: ordering training data from simpler to harder examples.
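Two of these techniques can be made concrete in a short pure-Python sketch: symmetric per-tensor int8 quantization, and 2:4 structured sparsity. The 2:4 scheme keeps the two largest-magnitude weights in every group of four, a pattern NVIDIA's Ampere-generation sparse tensor cores can accelerate. This is a toy illustration on plain lists. It is not how any particular model, Qwen3-Coder-Next included, was actually compressed.

```python
def quantize_int8(weights):
    """Symmetric per-tensor int8 quantization: w is approximated by scale * q, q in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q, scale):
    """Map int8 codes back to approximate float weights."""
    return [v * scale for v in q]


def prune_2_of_4(weights):
    """2:4 structured sparsity: zero the two smallest-magnitude weights in each group of 4."""
    if len(weights) % 4:
        raise ValueError("length must be a multiple of 4")
    out = []
    for i in range(0, len(weights), 4):
        group = weights[i:i + 4]
        keep = sorted(range(4), key=lambda j: abs(group[j]), reverse=True)[:2]
        out.extend(w if j in keep else 0.0 for j, w in enumerate(group))
    return out
```

Quantization shrinks every weight from 16 or 32 bits to 8. The rounding error per weight is at most half the scale. The 2:4 pattern halves the nonzero weights in a layout regular enough for hardware to exploit.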

The End of the Cloud Monopoly: Edge and On-Device AI

Perhaps the most immediate practical implication of a model that activates only 3 billion parameters per token is its impact on deployment strategy. Running a dense 100B+ parameter model requires substantial, centralized GPU clusters in the cloud, a high-cost, high-latency affair.
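A rough way to compare deployment footprints is to multiply parameter count by bytes per parameter. The helper below is a back-of-the-envelope sketch that counts weights only, excluding KV cache, activations, and runtime overhead. One assumption is worth stating loudly. In a mixture-of-experts model, every expert's weights generally have to be held in memory (or offloaded). Memory therefore tracks the total parameter count, while the 3B active figure governs per-token compute.

```python
def weight_memory_gb(num_params: float, bits_per_param: int) -> float:
    """Memory for the weights alone, in GB (1 GB = 1e9 bytes).

    Excludes KV cache, activations, and framework overhead, so real usage is higher.
    """
    return num_params * bits_per_param / 8 / 1e9


if __name__ == "__main__":
    for label, params in [("100B dense", 100e9), ("3B", 3e9)]:
        for bits in (16, 8, 4):
            print(f"{label} @ {bits}-bit: {weight_memory_gb(params, bits):.1f} GB")
```

At 16-bit precision this gives 200 GB for a 100B model versus 6 GB for 3B parameters. 4-bit quantization cuts the latter to 1.5 GB.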

Practical Implications: Lowering the Barrier to Entry

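Cutting per-token compute to 3B active parameters radically changes the economic and security equation. The full set of weights must still fit in memory, however, so hardware requirements depend on the total parameter count. This is precisely why reports on on-device AI deployment are gaining traction: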

- Cost Reduction: For businesses, moving inference from premium cloud GPU time to less expensive in-house servers (or even powerful consumer hardware) can translate into substantial operational savings.

- Latency and Speed: For developers using code assistants, every millisecond counts. A local model avoids the network round trip entirely. The result is a more responsive, integrated experience than cloud APIs can offer, since they add network overhead to every request.

- Data Sovereignty and Privacy: Running powerful code generation locally means sensitive proprietary code never needs to leave the corporate firewall. This removes a major compliance hurdle for regulated industries such as finance and healthcare.
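Local responsiveness also depends on generation speed. Single-stream decoding is often limited by memory bandwidth, because each new token requires reading the weights it uses. A common rule of thumb is therefore that tokens per second is at most bandwidth divided by bytes read per token. The sketch below applies that rule. The 100 GB/s bandwidth is a hypothetical placeholder, and the result is an upper bound that ignores KV-cache reads and compute limits. In a mixture-of-experts model, only the active weights are read per token, which is where a small active count pays off.

```python
def max_decode_tokens_per_s(bandwidth_gb_s: float, params_read_per_token: float,
                            bits_per_param: int) -> float:
    """Bandwidth-bound upper limit on single-stream decoding speed (tokens/s)."""
    bytes_per_token = params_read_per_token * bits_per_param / 8
    return bandwidth_gb_s * 1e9 / bytes_per_token


if __name__ == "__main__":
    bandwidth = 100.0  # hypothetical memory bandwidth in GB/s
    print(max_decode_tokens_per_s(bandwidth, 3e9, 4))    # ~66.7 tokens/s
    print(max_decode_tokens_per_s(bandwidth, 100e9, 4))  # ~2 tokens/s
```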

In essence, Qwen3-Coder-Next makes advanced AI infrastructure accessible to smaller enterprises and individual developers who could never afford the compute required for the largest frontier models.

Revisiting the Fundamentals: Scaling Laws Under Scrutiny

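For years, researchers followed scaling models that favored size. The success of SBMs forces a second look at the theoretical underpinnings, including the Chinchilla scaling laws (Hoffmann et al., 2022). Those laws showed that many large models were undertrained, and that for a fixed compute budget, parameter count and training tokens should grow together.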
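The Chinchilla result is often summarized with two approximations. The first is training compute C ≈ 6ND FLOPs for a dense transformer with N parameters trained on D tokens. The second is a compute-optimal ratio of roughly 20 training tokens per parameter. The sketch below encodes those rules of thumb; the constant is an approximation, not an exact law.

```python
import math

TOKENS_PER_PARAM = 20  # Chinchilla rule of thumb (approximate)


def training_flops(n_params: float, n_tokens: float) -> float:
    """Common approximation for dense transformers: C is about 6 * N * D."""
    return 6 * n_params * n_tokens


def compute_optimal(flops_budget: float, tokens_per_param: float = TOKENS_PER_PARAM):
    """Solve C = 6 * N * D with D = r * N, giving N = sqrt(C / (6 * r))."""
    n_params = math.sqrt(flops_budget / (6 * tokens_per_param))
    return n_params, tokens_per_param * n_params


if __name__ == "__main__":
    budget = training_flops(3e9, 60e9)  # compute to train 3B params on 60B tokens
    print(compute_optimal(budget))      # approximately (3e9, 6e10)
```

By this rule, a 3B-parameter model would be "compute-optimal" at about 60B training tokens. Many recent small models are deliberately trained on far more tokens than that. They spend extra training compute to get a model that is cheaper to serve, which is one way the efficiency trend departs from the original Chinchilla framing.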

The Data-Centric Counter-Argument

If parameter count matters less than the field long assumed, the focus must shift to the fuel: data quality and data mixture. Qwen3-Coder-Next's strength at coding suggests its training data was carefully curated around high-quality, functional code examples. The alternative, simply flooding the model with general internet text, is unlikely to yield that result.

This implies a key strategic shift for the next generation of AI builders: the competitive advantage is moving from hardware procurement to excellence in data curation. Whoever can identify, clean, and structure the highest-quality training sets for niche tasks will win, regardless of parameter count.
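To illustrate what "data curation" can mean in practice for code, the sketch below filters a hypothetical list of Python snippets in three steps:

1. Drop fragments that are too short.
2. Drop anything that fails to parse.
3. Remove exact duplicates after whitespace normalization.

This is an assumed, simplified pipeline for illustration, not a description of how Alibaba built Qwen3-Coder-Next's training data. Production pipelines often add near-duplicate detection, license filtering, and quality scoring.

```python
import ast
import hashlib
import textwrap


def normalize(source: str) -> str:
    """Dedent, strip trailing whitespace, and drop blank lines so trivial variants match."""
    lines = textwrap.dedent(source).splitlines()
    return "\n".join(line.rstrip() for line in lines if line.strip())


def curate_python_samples(samples, min_lines: int = 3):
    """Keep unique, syntactically valid Python snippets with at least min_lines lines."""
    seen = set()
    kept = []
    for src in samples:
        norm = normalize(src)
        if len(norm.splitlines()) < min_lines:
            continue  # too short to teach much structure
        try:
            ast.parse(norm)
        except (SyntaxError, ValueError):
            continue  # drop code that does not parse (ValueError covers null bytes on older Pythons)
        digest = hashlib.sha256(norm.encode("utf-8")).hexdigest()
        if digest in seen:
            continue  # exact duplicate after normalization
        seen.add(digest)
        kept.append(src)
    return kept
```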

Future Outlook: Actionable Insights for Technology Leaders

What does this signal for the next 18 to 24 months in the AI landscape?

For the Enterprise Architect: Embrace Hybridity

Do not assume you need the largest cloud model for every task. Adopt a tiered approach. Use massive LLMs for open-ended research and broad creativity, but deploy highly optimized SBMs (such as Qwen3-Coder-Next for code) for core, high-volume tasks like internal code completion, document summarization, or customer service routing. This "best-tool-for-the-job" mindset will define efficient AI operations.
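The tiered approach can be as simple as a routing rule in front of two model endpoints. The sketch below uses hypothetical tier names and a hypothetical task taxonomy. Its rules restate this section's guidance: routine, high-volume, or sensitive work stays on the small local model, and open-ended work goes to the large cloud model.

```python
from dataclasses import dataclass

# Hypothetical tier identifiers; substitute the models you actually deploy.
LOCAL_SMALL = "local-small-model"
CLOUD_LARGE = "cloud-frontier-model"

# Hypothetical task labels treated as routine, high-volume work.
HIGH_VOLUME_TASKS = {"code_completion", "summarization", "ticket_routing"}


@dataclass
class Request:
    task: str
    prompt: str
    contains_proprietary_code: bool = False


def choose_tier(req: Request) -> str:
    """Return which model tier should serve this request."""
    if req.contains_proprietary_code:
        return LOCAL_SMALL  # keep sensitive code inside the firewall
    if req.task in HIGH_VOLUME_TASKS:
        return LOCAL_SMALL  # cheap, low-latency, sufficient for routine work
    return CLOUD_LARGE      # open-ended research or creative work
```

In practice, the task label might come from the calling application or a lightweight classifier. The design point is that the default path is the cheap one, and the large model is an explicit escalation.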

For the Developer: Local First Mentality

Expect to see integrated development environments (IDEs) and enterprise tools that default to running optimized, local models. If you are currently waiting on cloud API responses for basic coding suggestions, avoidable network latency may be slowing you down. Investigate frameworks that support efficient local inference.
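Ollama is one example of a local-inference framework. It exposes an HTTP API on localhost (port 11434 by default), and its `/api/generate` endpoint accepts a JSON body with `model`, `prompt`, and `stream` fields. The sketch below calls it using only the Python standard library. It assumes an Ollama server is running and that a coding model has been pulled. `MODEL_TAG` is a placeholder to replace with the tag you actually installed.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434/api/generate"  # Ollama's default local endpoint
MODEL_TAG = "your-local-coder-model"  # hypothetical placeholder, not a real published tag


def build_request(prompt: str, model: str = MODEL_TAG) -> urllib.request.Request:
    """Build a non-streaming generate request for the local server."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def complete(prompt: str, timeout: float = 60.0) -> str:
    """Send one request and return the generated text from the 'response' field."""
    with urllib.request.urlopen(build_request(prompt), timeout=timeout) as resp:
        return json.loads(resp.read().decode("utf-8"))["response"]
```

With the server running, `complete("Write a Python function that reverses a string.")` returns the generated text, and the prompt never leaves the machine.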

For the Researcher: Rethinking Efficiency Metrics

The industry standard must evolve beyond simple FLOPs and parameter counts. Future research should heavily weight metrics like Performance-per-Watt and Performance-per-Dollar-of-Inference. The goal is maximizing utility while minimizing the energy footprint.
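Once throughput is measured, both metrics reduce to simple arithmetic. The helpers below are a sketch. The hourly cost, throughput, and power figures should come from your own measurements; the numbers in the example are hypothetical.

```python
def cost_per_million_tokens(hourly_cost_usd: float, tokens_per_second: float) -> float:
    """Dollars per one million generated tokens at a sustained throughput."""
    tokens_per_hour = tokens_per_second * 3600
    return hourly_cost_usd / tokens_per_hour * 1_000_000


def tokens_per_joule(tokens_per_second: float, average_power_watts: float) -> float:
    """Energy efficiency: 1 W = 1 J/s, so (tokens/s) / (J/s) = tokens per joule."""
    return tokens_per_second / average_power_watts


if __name__ == "__main__":
    # Hypothetical: a $3.60/hour server sustaining 1,000 tokens/s at 250 W.
    print(cost_per_million_tokens(3.60, 1000))  # approximately 1.0 dollar per million tokens
    print(tokens_per_joule(1000, 250))          # 4.0 tokens per joule
```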

Conclusion: Intelligence is Becoming Invisible (and Powerful)

Alibaba’s Qwen3-Coder-Next is more than just a good coding model; it is a bellwether announcing the maturity of efficient AI architectures. We are moving past the "wow factor" of massive scale and into the pragmatic reality of deployment.

When an AI can deliver expert-level performance without requiring a data center to run it, AI stops being a novelty and starts becoming ubiquitous infrastructure. This trend toward small, mighty, and local models is the key to unlocking pervasive, secure, and cost-effective intelligence across every industry.