The Million-Token Revolution: How Claude Opus 4.6 is Redefining AI Context and Enterprise Capability

The landscape of artificial intelligence is shaped by incremental, and occasionally explosive, leaps in capability. The recent announcement of **Claude Opus 4.6** with a staggering **one million token context window** is one of the explosive ones. For the layperson, a "token" is a word or a fragment of a word that the AI reads. As a rule of thumb, one million tokens corresponds to roughly 750,000 English words, about the length of several long novels or a large stack of technical manuals. This is not an incremental upgrade; it represents a fundamental shift in what Large Language Models (LLMs) can handle in a single request.

From an analyst's perspective, this development signals the end of the era in which context limits dictated the scope of AI work. We are moving from models that are excellent conversationalists to models that can genuinely *read, cross-reference, and synthesize* vast internal knowledge bases in a single pass, without an external retrieval layer. This piece synthesizes the current developments, analyzes the competitive pressures driving this race, and explores the implications for future AI architecture and business strategy.

The Context Arms Race: Why Length Matters More Than Ever

The primary utility of any LLM lies in its ability to understand *context*. If you ask a model to summarize a document, the model must hold the entire document in its working memory (its context window) to perform the task accurately. Earlier generations of models were limited to windows in the tens of thousands to low hundreds of thousands of tokens (32k and 128k were common sizes), so larger documents had to be chopped into pieces, which fragmented the model's understanding.

Anthropic’s move to 1M tokens is a direct challenge to the industry. This threshold allows a single prompt to ingest data equivalent to:

- Most of the complete works of Shakespeare.

- Several hundred pages of highly detailed engineering specifications.

- The entirety of a mid-sized company's policy and compliance documentation.
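To make these comparisons concrete, the sketch below converts a token budget into approximate word and page counts. It assumes the common rule of thumb of about 0.75 English words per token and about 500 words per dense page; both ratios are assumptions that vary with the tokenizer, the language, and the document layout.

```python
# Rough conversions between tokens, words, and pages.
# ASSUMPTIONS: ~0.75 English words per token and ~500 words per dense page.
# Real ratios depend on the tokenizer, the language, and the document layout.
WORDS_PER_TOKEN = 0.75
WORDS_PER_PAGE = 500


def tokens_to_words(tokens: int) -> int:
    """Approximate number of English words that fit in `tokens` tokens."""
    return round(tokens * WORDS_PER_TOKEN)


def tokens_to_pages(tokens: int) -> int:
    """Approximate number of dense pages that fit in `tokens` tokens."""
    return round(tokens_to_words(tokens) / WORDS_PER_PAGE)


if __name__ == "__main__":
    for window in (32_000, 128_000, 1_000_000):
        words = tokens_to_words(window)
        pages = tokens_to_pages(window)
        print(f"{window:>9,} tokens ~ {words:>7,} words ~ {pages:>5,} pages")
```

Under these assumptions a 1M-token window holds about 750,000 words, or roughly 1,500 dense pages, so "several hundred pages" of specifications fits with room to spare.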

This race for context length is not arbitrary; it is driven by the increasing complexity of enterprise data. Businesses don't just need summaries; they need AI that can act as a junior associate capable of understanding the entire history of a legal case or the full architecture of legacy software.

The Competitive Mirror

Any analysis must also look at the competitive environment. This development is happening against a backdrop of intense rivalry. Google's Gemini 1.5 Pro has already offered a one million token context window, and Google has marketed that capacity as a key differentiator.

When a leading player like Anthropic (a company known for its safety focus and strong enterprise partnerships) launches a flagship model at this threshold, it solidifies the **one million token window as the new expected floor** for top-tier models. Analysts and investors will now gauge competitors such as OpenAI (GPT series) on whether they can meet or exceed this capability, and at what cost in latency and price. It also suggests that the frontier of LLM development now rests on scaling memory, not just on improving base reasoning.

Technical Validation: Can the AI Really Read All That?

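The engineering required to handle a context window this large is immense. Moving from 100,000 to 1,000,000 tokens does not just require more memory. In a standard transformer, the cost of computing attention scores grows with the square of the input length, the key-value cache held during generation grows linearly with it, and, most critically, retrieval quality can degrade as the input grows.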
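A back-of-the-envelope sketch shows the shape of that scaling. The model dimensions below (layers, key/value heads, head size, bytes per value) are hypothetical placeholders and do not describe Claude, whose architecture is not public; only the growth rates matter here.

```python
# Back-of-the-envelope scaling for long contexts.
# ASSUMPTION: all model dimensions below are hypothetical placeholders;
# they do not describe Claude or any specific production model.
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelDims:
    layers: int = 80          # hypothetical number of transformer layers
    kv_heads: int = 8         # hypothetical number of key/value heads
    head_dim: int = 128       # hypothetical dimension per head
    bytes_per_value: int = 2  # 16-bit (fp16/bf16) storage


def kv_cache_bytes(seq_len: int, dims: ModelDims) -> int:
    """Memory for cached keys and values (factor 2 = one K and one V tensor)."""
    return 2 * dims.layers * dims.kv_heads * dims.head_dim * seq_len * dims.bytes_per_value


def attention_cost_ratio(long_len: int, short_len: int) -> float:
    """Relative cost of full self-attention scores, which scale with n^2."""
    return (long_len / short_len) ** 2


if __name__ == "__main__":
    dims = ModelDims()
    for n in (100_000, 1_000_000):
        gib = kv_cache_bytes(n, dims) / 2**30
        print(f"{n:>9,} tokens: KV cache ~ {gib:,.1f} GiB per sequence")
    ratio = attention_cost_ratio(1_000_000, 100_000)
    print(f"Attention score cost ratio (1M vs 100k): {ratio:.0f}x")
```

A tenfold longer input means ten times the cache and, for full attention, a hundred times the attention-score computation. Techniques such as grouped-query attention (modeled here by having fewer key/value heads) and cache quantization shrink the constants, but not the growth rates.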

A major technical concern addressed by the press release is information retrieval reliability. In AI research, this is often tested using the "needle in a haystack" scenario. Imagine hiding a single, crucial fact deep within a million tokens of irrelevant text. Can the AI reliably pull that one fact out?

If Claude Opus 4.6 can reliably locate information at the beginning, middle, and end of such a massive input, Anthropic has substantially mitigated the "lost in the middle" problem, in which earlier long-context models recalled facts near the start or end of their input more reliably than facts buried in the middle. For engineers and data scientists, this is the technical proof point: it moves the capability from theoretical possibility to practical reliability and validates the architectural improvements behind the larger window. Single-fact retrieval is a narrower test than reasoning across many documents, however, so passing it is necessary rather than sufficient.
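The needle-in-a-haystack test can be sketched as a small harness that hides one fact at different depths and checks whether the answer comes back. The `ask_model` callable is a hypothetical hook you would wire to a real LLM API; the filler text, needle, and question are illustrative assumptions.

```python
# A minimal "needle in a haystack" harness.
# ASSUMPTIONS: `ask_model` is a hypothetical callable you would wire to a real
# LLM API; the filler text, needle, and question are illustrative only.
from typing import Callable, Dict, List

FILLER = "The quarterly report discusses routine operational matters. "
NEEDLE = "The secret project codename is BLUE HERON. "
QUESTION = "What is the secret project codename?"
ANSWER = "BLUE HERON"


def build_haystack(n_sentences: int, depth: float) -> str:
    """Place NEEDLE at a fractional depth (0.0 = start, 1.0 = end) of the filler."""
    if not 0.0 <= depth <= 1.0:
        raise ValueError("depth must be between 0.0 and 1.0")
    sentences: List[str] = [FILLER] * n_sentences
    sentences.insert(round(depth * n_sentences), NEEDLE)
    return "".join(sentences)


def sweep_depths(
    ask_model: Callable[[str], str], n_sentences: int, depths: List[float]
) -> Dict[float, bool]:
    """Return, for each depth, whether the model's answer contains the needle."""
    results: Dict[float, bool] = {}
    for depth in depths:
        prompt = build_haystack(n_sentences, depth) + "\n\n" + QUESTION
        results[depth] = ANSWER.lower() in ask_model(prompt).lower()
    return results


if __name__ == "__main__":
    # Stand-in "model" that simply searches the prompt, to show the harness runs.
    def fake_model(prompt: str) -> str:
        return ANSWER if ANSWER in prompt else "I don't know."

    print(sweep_depths(fake_model, n_sentences=1_000, depths=[0.0, 0.25, 0.5, 0.75, 1.0]))
```

A real evaluation would also vary the total input length and use needles the model cannot guess from its training data; a dip in accuracy at middle depths is the "lost in the middle" signature.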

Future Implications: Reshaping AI Architecture

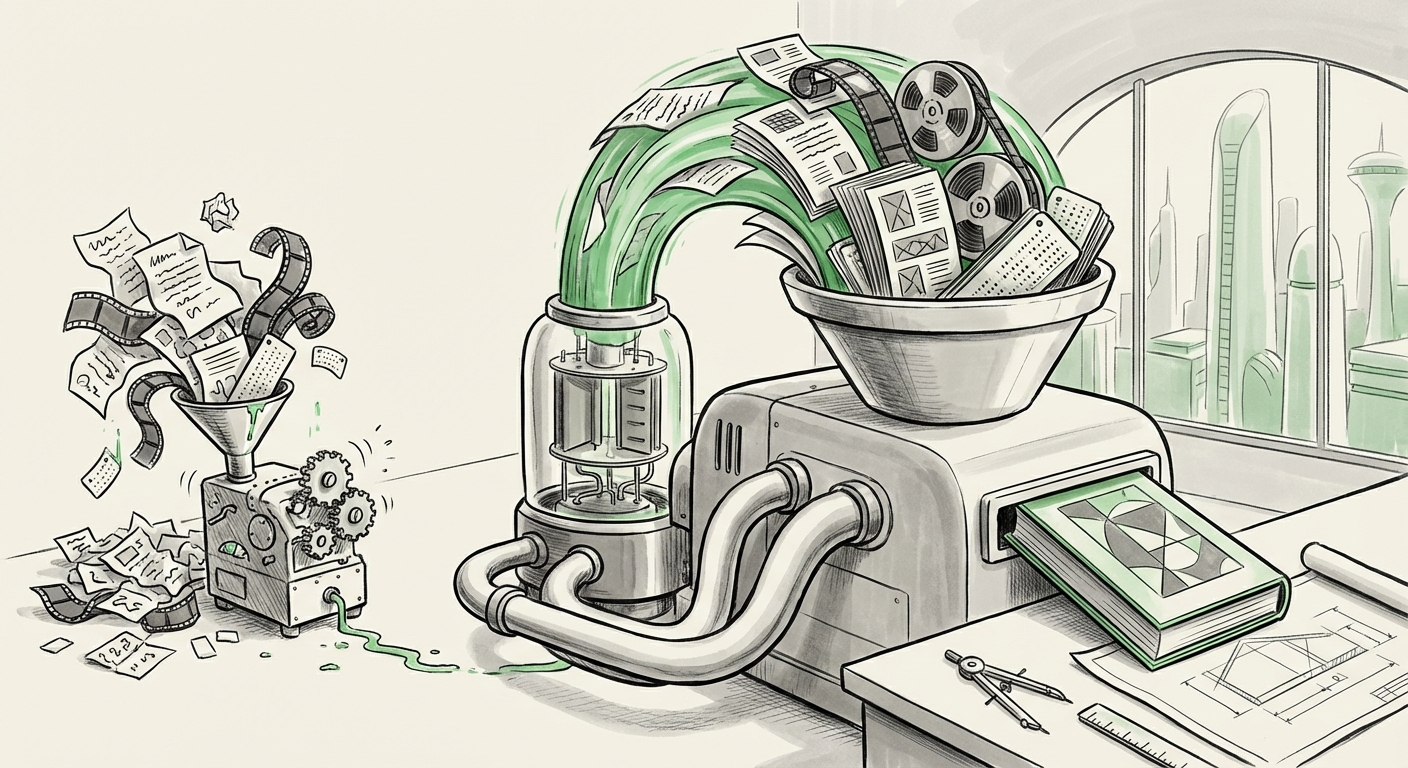

Perhaps the most radical implication of this 1M token window is its potential impact on **Retrieval Augmented Generation (RAG)** systems. RAG has been the industry standard for grounding LLMs in proprietary data. A typical RAG system works like this:

- User asks a question.

- The system searches an external database (a vector database) for the most relevant small chunks of company data.

- These chunks are inserted into the LLM's small context window along with the question.

- The LLM answers based on the provided context.
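The four steps above can be sketched in a few lines. To stay self-contained, this sketch swaps a real embedding model and vector database for bag-of-words vectors and brute-force cosine similarity; the policy snippets and the question are made up for illustration.

```python
# Toy Retrieval Augmented Generation (RAG) retrieval step.
# ASSUMPTION: bag-of-words counts stand in for a learned embedding model, and a
# Python list stands in for a vector database. Only the flow is representative.
import math
import re
from collections import Counter
from typing import List

CHUNKS = [
    "Employees may carry over up to five vacation days into the next year.",
    "Expense reports must be filed within 30 days of the purchase date.",
    "Remote work requires written approval from a direct manager.",
]


def embed(text: str) -> Counter:
    """Bag-of-words 'embedding': lowercase word counts."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[w] * b[w] for w in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(question: str, chunks: List[str], k: int = 1) -> List[str]:
    """Step 2: rank stored chunks by similarity to the question, keep the top k."""
    q = embed(question)
    return sorted(chunks, key=lambda c: cosine(q, embed(c)), reverse=True)[:k]


def build_prompt(question: str, context: List[str]) -> str:
    """Step 3: insert the retrieved chunks into the prompt alongside the question."""
    joined = "\n".join(f"- {c}" for c in context)
    return f"Answer using only this context:\n{joined}\n\nQuestion: {question}"


if __name__ == "__main__":
    question = "How many vacation days can I carry over?"
    print(build_prompt(question, retrieve(question, CHUNKS)))
    # Step 4 would send this prompt to the LLM.
```

Every production RAG stack replaces each toy piece here (embedding model, index, chunking, ranking), which is the orchestration overhead the next section weighs against long-context prompting.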

With 1M tokens, complex organizations can potentially **upload massive portions—or even all—of their knowledge bases directly into the prompt.**

The Decline of the Middle Layer?

If an entire corporate policy manual, thousands of lines of code, or years of quarterly financial reports can fit into one prompt, the need for sophisticated, costly, and latency-inducing vector database orchestration decreases significantly for certain tasks. AI architects must now weigh:

- The Old Way: Complex RAG pipeline running constantly, managing updates, chunking, and embeddings.

- The New Way: A single, large, context-heavy prompt sent directly to the model.

This simplification in the stack could drastically lower the barrier to entry for advanced enterprise AI application development, allowing smaller teams to tackle problems previously requiring extensive MLOps teams dedicated to vector management.
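One way to frame the architect's choice is a simple routing rule: if the relevant corpus fits in the window with room left for instructions and the answer, send it whole; otherwise fall back to retrieval. The four-characters-per-token heuristic, the 1,000,000-token window, and the reserve size below are assumptions; a production system would count tokens with the provider's own tokenizer.

```python
# Hypothetical routing rule: "stuff" the whole corpus or fall back to RAG.
# ASSUMPTIONS: ~4 characters per token (a rough English heuristic), a
# 1,000,000-token window, and a fixed reserve for the question and answer.
from typing import List

CHARS_PER_TOKEN = 4
CONTEXT_WINDOW = 1_000_000
RESERVED_TOKENS = 50_000  # room for instructions, the question, and the reply


def estimate_tokens(text: str) -> int:
    """Crude token estimate; swap in a real tokenizer for production use."""
    return -(-len(text) // CHARS_PER_TOKEN)  # ceiling division


def choose_strategy(documents: List[str]) -> str:
    """Return 'long_context' if everything fits, else 'rag'."""
    total = sum(estimate_tokens(d) for d in documents)
    return "long_context" if total <= CONTEXT_WINDOW - RESERVED_TOKENS else "rag"


if __name__ == "__main__":
    policy_manual = "x" * 2_000_000  # ~500k tokens under the heuristic
    full_archive = "x" * 8_000_000   # ~2M tokens under the heuristic
    print(choose_strategy([policy_manual]))  # long_context
    print(choose_strategy([full_archive]))   # rag
```

Freshness, access control, and per-query cost also affect the decision, so a fits-in-the-window check is a starting point rather than the whole policy.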

Practical Applications: Where This Feature Drives Immediate ROI

For business leaders, the abstract size of "one million tokens" translates into tangible competitive advantages across several highly regulated and data-heavy sectors.

1. Legal and Compliance Deep Dives

Legal professionals often spend weeks manually cross-referencing clauses across dozens of long contracts or discovery documents. With 1M tokens, an AI can ingest an entire merger agreement, all associated regulatory filings, and prior correspondence, then be asked a nuanced question like: "Based on Clause 4.B in the 2022 NDA and the subsequent amendments filed last quarter, is our current operational scope compliant with the environmental impact stipulations?"
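A prompt for this kind of cross-document question might wrap each source in labeled, indexed delimiters so the model can tell documents apart and cite them, with the question placed after the documents. Anthropic's long-context prompting guidance suggests a similar XML-style tagging structure, but the tag names, document titles, and contents here are illustrative assumptions.

```python
# Illustrative multi-document prompt builder for cross-referencing questions.
# ASSUMPTIONS: tag names, document titles, and contents are hypothetical.
from typing import List, Tuple
from xml.sax.saxutils import escape


def build_multidoc_prompt(documents: List[Tuple[str, str]], question: str) -> str:
    """Wrap each (title, text) pair in indexed tags, then append the question."""
    parts = ["<documents>"]
    for i, (title, text) in enumerate(documents, start=1):
        # escape() keeps stray '<', '>', '&' in the source text from breaking the tags
        parts.append(
            f'<document index="{i}">\n'
            f"<source>{escape(title)}</source>\n"
            f"<document_content>\n{escape(text)}\n</document_content>\n"
            f"</document>"
        )
    parts.append("</documents>")
    parts.append(
        "Cite the document index and clause for every claim.\n"
        f"Question: {question}"
    )
    return "\n".join(parts)


if __name__ == "__main__":
    docs = [
        ("2022 NDA", "Clause 4.B: (full contract text would go here)"),
        ("Amendment filing", "(full filing text would go here)"),
    ]
    print(build_multidoc_prompt(docs, "Is our current operational scope compliant?"))
```

Asking for document and clause citations gives the reviewing lawyer a direct path back to the source text for every conclusion.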

2. Software Engineering and Codebase Auditing

For software developers, feeding an entire legacy codebase (perhaps 50,000 lines of code spanning multiple modules) into the context window lets the model consider system-wide dependencies in a single pass. This can transform bug tracing, security auditing, and feature integration, tasks that previously required developers to jump constantly between dozens of files.
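Feeding a codebase into a single prompt usually means flattening it into one text blob while keeping file paths visible, so the model can refer to specific files. This sketch walks a directory, labels each file with its relative path, and reports a rough token estimate; the extension list, the header format, and the four-characters-per-token heuristic are assumptions.

```python
# Flatten a source tree into one prompt-ready string with per-file path headers.
# ASSUMPTIONS: which extensions count as source, the header format,
# and ~4 characters per token.
from pathlib import Path
from typing import Iterable, Tuple

SOURCE_SUFFIXES = {".py", ".java", ".c", ".h", ".go", ".ts"}
CHARS_PER_TOKEN = 4


def flatten_codebase(root: str, suffixes: Iterable[str] = SOURCE_SUFFIXES) -> Tuple[str, int]:
    """Return (combined text, estimated token count) for all matching files."""
    root_path = Path(root)
    wanted = set(suffixes)
    sections = []
    for path in sorted(root_path.rglob("*")):
        if path.is_file() and path.suffix in wanted:
            rel = path.relative_to(root_path).as_posix()
            body = path.read_text(encoding="utf-8", errors="replace")
            sections.append(f"=== FILE: {rel} ===\n{body}")
    combined = "\n\n".join(sections)
    return combined, len(combined) // CHARS_PER_TOKEN


if __name__ == "__main__":
    import sys

    if len(sys.argv) > 1:
        text, tokens = flatten_codebase(sys.argv[1])
        print(f"~{tokens:,} tokens across {text.count('=== FILE: '):,} files")
```

At an assumed ten or so tokens per line, a 50,000-line codebase lands in the hundreds of thousands of tokens: inside a 1M window, but large enough that per-request cost is worth measuring.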

3. Financial Analysis and Due Diligence

Imagine M&A due diligence. Instead of reading thousands of pages of audited statements, shareholder communications, and internal financial forecasts, an analyst can feed all source documents to Opus 4.6 and ask for a synthesis of risk factors pertaining only to supply chain vulnerabilities mentioned in documents published before Q4 2023. This capability could compress weeks or months of first-pass reading into minutes, leaving the analyst's time for verification and judgment.
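Constraints such as "published before Q4 2023" can be enforced in code before the documents reach the model, rather than left entirely to the prompt. A minimal sketch, assuming each document carries a hypothetical `published` date field and that Q4 2023 means calendar Q4, beginning on 1 October 2023 (a company's fiscal quarters may differ):

```python
# Pre-filter documents by metadata before building a long-context prompt.
# ASSUMPTIONS: the Document fields are hypothetical, and "before Q4 2023"
# is interpreted as "published before 2023-10-01" (calendar quarters).
from dataclasses import dataclass
from datetime import date
from typing import List


@dataclass
class Document:
    title: str
    published: date
    text: str


Q4_2023_START = date(2023, 10, 1)


def published_before(docs: List[Document], cutoff: date) -> List[Document]:
    """Keep only documents published strictly before the cutoff date."""
    return [d for d in docs if d.published < cutoff]


if __name__ == "__main__":
    corpus = [
        Document("Q2 quarterly filing", date(2023, 8, 4), "(document text)"),
        Document("Supplier audit memo", date(2023, 9, 29), "(document text)"),
        Document("Annual report", date(2024, 2, 15), "(document text)"),
    ]
    for doc in published_before(corpus, Q4_2023_START):
        print(doc.published.isoformat(), doc.title)
```

The model still performs the synthesis, but the date boundary no longer depends on it reading every publication date correctly.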

Navigating the New Reality: Actionable Insights for Businesses

This technology demands a strategic response from organizations looking to lead with AI. Here are actionable insights:

1. Re-Evaluate Your Knowledge Management Strategy

If your existing RAG system is primarily designed to retrieve small, discrete data chunks, you may need to adapt. Start experimenting with **context stuffing**. Can you consolidate related documents into larger, coherent packets suitable for direct ingestion by models like Opus 4.6? Understand the trade-off between the overhead of RAG and the potential cost of long-context prompting.
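The cost side of that trade-off is simple arithmetic. This sketch compares monthly input cost for a RAG prompt that sends a few retrieved chunks against a long-context prompt that sends the whole corpus on every query. The price per million input tokens and all token counts are placeholder assumptions, not Anthropic's published pricing, and features such as prompt caching can change the picture.

```python
# Per-query input-token cost: retrieval (RAG) versus whole-corpus prompting.
# ASSUMPTIONS: every number below is a hypothetical placeholder.
PRICE_PER_MILLION_INPUT_TOKENS = 5.00  # hypothetical USD price


def input_cost(tokens: int, price_per_million: float = PRICE_PER_MILLION_INPUT_TOKENS) -> float:
    """Cost in USD of sending `tokens` input tokens once."""
    return tokens / 1_000_000 * price_per_million


def monthly_cost(tokens_per_query: int, queries_per_month: int) -> float:
    """Input cost in USD for a month of identical-sized queries."""
    return input_cost(tokens_per_query) * queries_per_month


if __name__ == "__main__":
    rag_tokens = 8_000             # question plus a handful of retrieved chunks
    long_context_tokens = 800_000  # the whole knowledge base in every request
    queries = 10_000
    print(f"RAG:          ${monthly_cost(rag_tokens, queries):,.2f} per month")
    print(f"Long context: ${monthly_cost(long_context_tokens, queries):,.2f} per month")
```

Under these placeholder numbers the long-context approach sends 100 times more input tokens per query; that multiplier is what has to be weighed against the engineering cost of running a retrieval pipeline.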

2. Prioritize Data Hygiene Over Database Complexity

When the context window is this large, the quality of the input data becomes paramount. Garbage in, garbage out applies with special force when a million tokens go in. Companies must invest heavily in cleaning, structuring, and tagging their document repositories. The AI can now read everything; make sure everything it reads is accurate.
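As one small illustration of hygiene, the sketch below normalizes case and whitespace and drops exact duplicates by content hash before documents are packed into a prompt, so the model is not fed several copies of the same policy. The normalization rules are assumptions, and near-duplicates (such as lightly edited versions) would need additional tooling.

```python
# Drop exact duplicates (after light normalization) before ingestion.
# ASSUMPTION: "duplicate" means identical text once case and whitespace are
# normalized; near-duplicates (e.g. edited versions) are not caught.
import hashlib
import re
from typing import List


def normalize(text: str) -> str:
    """Lowercase and collapse runs of whitespace into single spaces."""
    return re.sub(r"\s+", " ", text).strip().lower()


def dedupe(documents: List[str]) -> List[str]:
    """Keep the first occurrence of each normalized document, preserving order."""
    seen = set()
    unique = []
    for doc in documents:
        digest = hashlib.sha256(normalize(doc).encode("utf-8")).hexdigest()
        if digest not in seen:
            seen.add(digest)
            unique.append(doc)
    return unique


if __name__ == "__main__":
    docs = [
        "Travel Policy: Economy class only.",
        "travel policy:   economy class only.",
        "Expense Policy: Receipts required.",
    ]
    print(dedupe(docs))
```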

3. Focus on "Deep Inference" Tasks

Stop using powerful models for simple tasks like rewriting emails. Focus your resources on problems that were previously intractable due to data volume—complex cross-document reasoning, systemic risk analysis, and comprehensive codebase review. These are the areas where the ROI on 1M tokens is immediate and measurable.

The Road Ahead: Beyond the Million Mark

The release of Claude Opus 4.6 is more than a feature announcement; it's an inflection point. It shifts the bottleneck from *memory* to *reasoning*. While we celebrate the ability to ingest vast libraries, the next major frontier will be ensuring that the model’s internal reasoning capabilities scale proportionally to the size of the context it processes.

We are moving toward a future where the model doesn't just hold information; it *lives* inside your enterprise data. This requires trust, transparency, and continued rigorous testing, especially of the retrieval accuracy Anthropic claims to have achieved. The context war is heating up, and the ultimate winner will be the enterprise that can most effectively harness these super-sized brains to solve its most complex, data-intensive challenges.