The Million-Token Moment: How Claude Opus 4.6 Redefines AI Context and Enterprise Capability

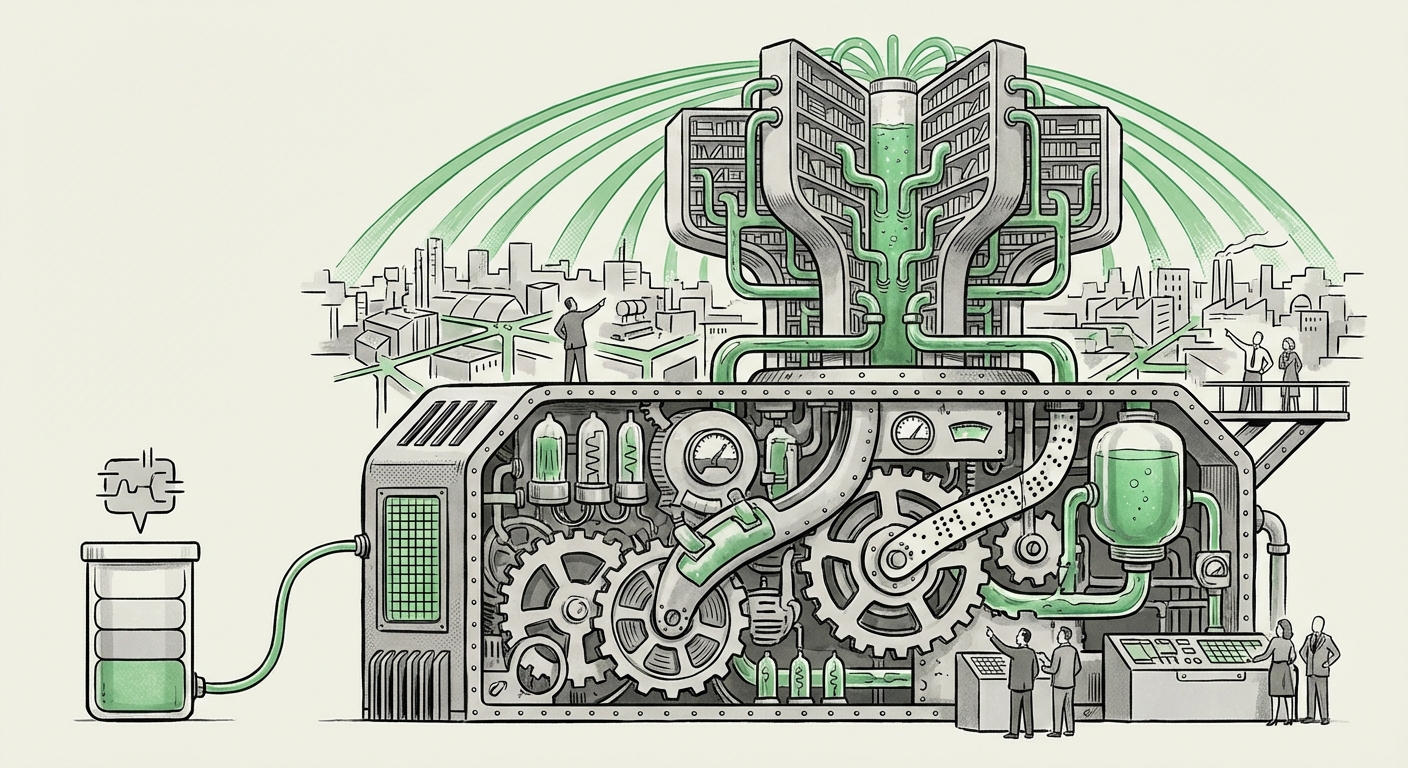

The landscape of Artificial Intelligence is often defined by incremental improvements, but occasionally a development arrives that fundamentally rewrites the rules of engagement. The release of Anthropic’s Claude Opus 4.6, featuring a one-million-token context window, is precisely such a moment.

For the general public, a "token" might sound abstract, but to understand the significance of this release, we need to establish a baseline. A token is roughly equivalent to 3-4 characters or about three-quarters of a word in English. A one-million-token context window means Claude Opus 4.6 can ingest, process, and reason over the equivalent of several large novels—or dozens of complex technical manuals—simultaneously. This is not just a larger memory buffer; it is a qualitative shift in what AI can achieve in complex, real-world tasks.
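To make that scale concrete, here is a rough back-of-the-envelope conversion. It uses only the rules of thumb stated above; the 100,000-word novel length is an illustrative assumption, and real tokenizers vary by language and content.

```python
# Rules of thumb from the text; actual tokenizers will differ.
WORDS_PER_TOKEN = 0.75          # "about three-quarters of a word"
CHARS_PER_TOKEN_RANGE = (3, 4)  # "3-4 characters"
WORDS_PER_NOVEL = 100_000       # assumption: a long novel


def tokens_to_words(tokens: int) -> int:
    """Approximate English word count for a given number of tokens."""
    return round(tokens * WORDS_PER_TOKEN)


def tokens_to_chars(tokens: int) -> tuple[int, int]:
    """Approximate (low, high) character count for a given number of tokens."""
    low, high = CHARS_PER_TOKEN_RANGE
    return tokens * low, tokens * high


context_tokens = 1_000_000
words = tokens_to_words(context_tokens)
low_chars, high_chars = tokens_to_chars(context_tokens)
novels = words / WORDS_PER_NOVEL

print(f"{context_tokens:,} tokens is roughly {words:,} words")
print(f"or {low_chars:,} to {high_chars:,} characters")
print(f"or about {novels:.1f} novels of {WORDS_PER_NOVEL:,} words each")
```

Under these assumptions, a full window holds on the order of 750,000 words, which is where the "several large novels" comparison comes from.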

The Context War: Establishing the New Benchmark

The race for superior context length has become the defining battleground for flagship Large Language Models (LLMs) in 2024. This isn't about raw intelligence scores alone; it’s about utility. A brilliant model that forgets the beginning of your 500-page legal brief is less useful than a slightly less brilliant model that can read the entire brief and synthesize conclusions across all sections.

Anthropic’s introduction of Opus 4.6 directly challenges existing leaders in the contest over context length. Competitors, most notably Google's Gemini 1.5 Pro, have also pushed into the million-token space. This parity suggests that the industry has settled on long context as the next critical feature. The focus now shifts from *whether* a model can handle a million tokens to *how accurately* it handles them.

Anthropic’s specific claim—that Opus 4.6 locates relevant information more reliably—is key. It suggests their engineering focus was not just on brute capacity, but on maintaining high precision across the entire window. This technical rigor is what separates a novel feature from a production-ready tool.

The Critical Test: Recall and Accuracy

To gauge the impact, we look toward independent verification. In "needle in a haystack" tests, a single fact is planted at varying depths in a long document and the model is asked to retrieve it. Analysts look for evidence that the model avoids the "lost in the middle" problem, where information placed in the center of a vast document is ignored. If Opus 4.6 demonstrates near-perfect recall across its entire context, that would be strong evidence that Anthropic has cleared a major architectural hurdle.
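A needle-in-a-haystack check can be sketched in a few lines. The harness below is a minimal illustration, not a standard benchmark implementation: `ask_model` is a hypothetical callable that you would replace with a real model call, and `lossy_stub_model` is a toy stand-in that deliberately ignores the middle of the prompt so the failure mode is visible.

```python
from typing import Callable

FILLER = "The quick brown fox jumps over the lazy dog."
MARKER = "The secret passphrase is "


def build_haystack(needle: str, n_sentences: int, depth: float) -> str:
    """Insert `needle` at relative position `depth` (0.0 = start, 1.0 = end)."""
    sentences = [FILLER] * n_sentences
    sentences.insert(round(depth * n_sentences), needle)
    return " ".join(sentences)


def recall_by_depth(
    ask_model: Callable[[str], str],
    secret: str,
    depths: list[float],
    n_sentences: int = 1000,
) -> dict[float, bool]:
    """Return, for each depth, whether the model's answer contains the secret."""
    needle = f"{MARKER}{secret}."
    question = "What is the secret passphrase?"
    results = {}
    for depth in depths:
        prompt = build_haystack(needle, n_sentences, depth) + "\n\n" + question
        results[depth] = secret in ask_model(prompt)
    return results


def lossy_stub_model(prompt: str) -> str:
    """Toy stand-in (not a real model): sees only the first and last 20% of the prompt."""
    cut = len(prompt) // 5
    visible = prompt[:cut] + prompt[-cut:]
    idx = visible.find(MARKER)
    if idx == -1:
        return "I don't know."
    return visible[idx + len(MARKER):].split(".")[0]


results = recall_by_depth(lossy_stub_model, "mango-42", [0.0, 0.25, 0.5, 0.75, 1.0])
for depth, found in results.items():
    print(f"depth {depth:.2f}: {'recalled' if found else 'missed'}")
```

With the toy stub, only the needles at the very start and very end are recalled. A model with reliable long-context recall should pass at every depth, and at haystack sizes approaching the full window.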

For AI developers and researchers, this accuracy metric is the new headline performance indicator. It signals a maturing architecture, capable of deep document understanding that moves beyond simple summarization to genuine, cross-referenced analysis.

Practical Revolution: Applications Where Context Matters Most

The primary value proposition of massive context is the ability to feed an AI system an entire operational universe for a specific task. This capability immediately impacts sectors drowning in proprietary, unstructured data.

Deep Dive into Enterprise Utility

Consider the impact on legal tech. A lawyer can now upload an entire discovery cache, multiple years of case law citations, and complex contracts, then ask the AI to find subtle connections or inconsistencies across thousands of pages in a single prompt. This could cut review time from weeks to hours and reduce the risk of human oversight.

Similarly, in finance and regulatory compliance, Opus 4.6 can process comprehensive annual reports (10-Ks), complex regulatory frameworks (like Basel III documentation), and internal auditing procedures simultaneously. The implication for CTOs is clear: AI can now handle tasks that previously required dedicated teams of highly paid analysts simply to *read* the source material.

This moves AI from being a helpful writing assistant to becoming a truly indispensable research and compliance partner.

The Engineering Reality: Cost, Speed, and Scalability

While the user experience focuses on the output, the true measure of a breakthrough is its deployability. A one-million-token context window is computationally expensive. The attention mechanism, the core technology that allows LLMs to weigh the importance of different tokens, scales poorly with context length: in its standard form, every token attends to every other token, so the cost grows as $O(n^2)$, where $n$ is the token count.
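The quadratic growth is easy to underestimate, so a small calculation helps. This sketch counts the entries in the $n \times n$ attention score matrix for a single head in a single layer of standard attention; the 8k and 128k comparison points are illustrative choices, not figures from any vendor.

```python
def attention_score_entries(n_tokens: int) -> int:
    """Entries in the n x n score matrix for one head in one layer."""
    return n_tokens * n_tokens


def relative_cost(n_tokens: int, baseline_tokens: int) -> float:
    """How many times more score entries than the baseline context length."""
    return attention_score_entries(n_tokens) / attention_score_entries(baseline_tokens)


BASELINE = 8_000  # illustrative baseline context length

for n in (8_000, 128_000, 1_000_000):
    entries = attention_score_entries(n)
    print(f"{n:>9,} tokens: {entries:.2e} entries, {relative_cost(n, BASELINE):,.0f}x baseline")
```

Going from 8,000 to 1,000,000 tokens multiplies the context length by 125 but the pairwise work by 15,625, which is why naive attention is not viable at this scale.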

This brings us to the latency and computational cost of million-token LLMs. For a feature to be truly adopted, it must be fast enough for near real-time interaction and affordable enough for sustained business operations. This necessity drives innovation in underlying infrastructure and algorithms:

- Hardware Optimization: Models must be heavily optimized for High Bandwidth Memory (HBM) to rapidly access the massive Key-Value (KV) cache required to store the context history.

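- Algorithmic Innovation: Techniques like block-sparse attention reduce the quadratic cost by skipping most token pairs, while Ring Attention spreads a long sequence across many devices so that no single accelerator has to hold it all. Together, such techniques turn an operationally impossible task into a merely very difficult one.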
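To see why the KV cache puts pressure on memory, consider a rough size estimate. The model dimensions below are purely hypothetical (Anthropic has not published the architecture of Opus 4.6); they are chosen only to resemble a large transformer with grouped-query attention and 16-bit cache values.

```python
def kv_cache_bytes(
    n_tokens: int,
    n_layers: int,
    n_kv_heads: int,
    head_dim: int,
    bytes_per_value: int,
) -> int:
    """Size of the KV cache for one sequence: a key and a value vector
    per token, per layer, per KV head."""
    keys_and_values = 2
    return keys_and_values * n_layers * n_kv_heads * head_dim * n_tokens * bytes_per_value


# Hypothetical configuration, for illustration only.
N_LAYERS = 80
N_KV_HEADS = 8
HEAD_DIM = 128
BYTES_PER_VALUE = 2  # 16-bit values

for n in (8_000, 128_000, 1_000_000):
    size = kv_cache_bytes(n, N_LAYERS, N_KV_HEADS, HEAD_DIM, BYTES_PER_VALUE)
    print(f"{n:>9,} tokens: {size / 1e9:,.1f} GB of KV cache")
```

Under these assumed dimensions, a single million-token sequence needs roughly 328 GB of cache, more than any single current accelerator holds, which is why both memory bandwidth and multi-device techniques matter.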

If Anthropic has found ways to manage the inference cost of this scale efficiently—perhaps through highly optimized custom hardware usage or novel caching strategies—it represents a significant engineering victory that will trickle down across the entire AI ecosystem. For decision-makers, the cost-per-query matters more than the token count itself.

The Road Ahead: Agents and Persistent Memory

Looking beyond current document analysis, the one-million-token window is foundational for realizing the next major paradigm shift: sophisticated, autonomous AI agents.

Current LLMs operate largely within a "session memory." If a task requires planning across hundreds of steps, involving reading manuals, executing external tools, reviewing the results, and iterating based on those results, previous models struggled to maintain the full history and context of the entire mission plan. The context window essentially served as short-term working memory.

With Opus 4.6, we are moving toward persistent operational memory. A window this large offers a clear path to agents that maintain comprehensive state awareness:

- Goal Definition: Ingesting the entire project requirement document (100k tokens).

- Execution History: Storing all previous steps, code written, and API calls made (300k tokens).

- External Reference Material: Keeping key technical specifications and error logs open for review (600k tokens).
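The allocation above can be treated as an explicit token budget. The sketch below is one hypothetical way an agent framework might track it; the class and category names are illustrative and do not come from any particular library.

```python
from dataclasses import dataclass, field


@dataclass
class ContextBudget:
    """Tracks how many tokens of a fixed context window are allocated."""
    limit: int = 1_000_000
    allocations: dict[str, int] = field(default_factory=dict)

    def used(self) -> int:
        return sum(self.allocations.values())

    def remaining(self) -> int:
        return self.limit - self.used()

    def allocate(self, name: str, tokens: int) -> None:
        """Reserve tokens for a category, refusing to exceed the window."""
        if tokens > self.remaining():
            raise ValueError(
                f"cannot allocate {tokens:,} tokens to {name!r}: "
                f"only {self.remaining():,} remaining"
            )
        self.allocations[name] = self.allocations.get(name, 0) + tokens


budget = ContextBudget()
budget.allocate("goal_definition", 100_000)
budget.allocate("execution_history", 300_000)
budget.allocate("reference_material", 600_000)

print(f"used: {budget.used():,} of {budget.limit:,}")
print(f"remaining: {budget.remaining():,}")
```

These three figures add up to the entire window, so a practical deployment would likely shrink one of them to leave room for the model's own output.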

This persistence means fewer hallucinations based on forgotten instructions and the ability to handle long-running, multi-faceted projects—a true move toward systems that manage complex workflows autonomously rather than just responding to simple prompts.

Actionable Insights for Business and Technology Leaders

The arrival of models capable of processing a million tokens simultaneously is not merely an academic achievement; it demands a strategic response from businesses that rely on data processing.

For CTOs and Engineering Leaders:

1. Re-evaluate Data Ingestion Pipelines: Reconsider whether chunking and embedding strategies are still necessary for proprietary knowledge bases. If your core documentation fits within a million tokens, you can now pass it to the LLM directly for reasoning. This is simpler, can be more accurate, and bypasses the retrieval limitations of vector databases, though each query then carries the full cost of a long context.

2. Prioritize Recall Benchmarking: When testing any new long-context model (from Anthropic or competitors), make the "needle in a haystack" test a core part of your evaluation rubric. High token count without high recall accuracy is wasted computational budget.

3. Investigate Agent Frameworks: Begin experimenting with how these context capacities can sustain complex, multi-step agent loops. Identify internal processes that currently fail due to context loss and map them to the new capabilities.
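For the first recommendation, a quick feasibility check is whether the knowledge base fits in the window at all. The sketch below uses the rough four-characters-per-token heuristic rather than a real tokenizer, and the 50,000-token output reserve is an arbitrary assumption; treat the result as a first-pass estimate only.

```python
CONTEXT_LIMIT = 1_000_000
CHARS_PER_TOKEN = 4            # rough heuristic, not a real tokenizer
RESERVED_FOR_OUTPUT = 50_000   # assumption: headroom for the model's answer


def estimated_tokens(documents: list[str]) -> int:
    """Rough token estimate from character counts, rounding each document up."""
    return sum(-(-len(doc) // CHARS_PER_TOKEN) for doc in documents)


def choose_strategy(
    documents: list[str],
    limit: int = CONTEXT_LIMIT,
    reserved: int = RESERVED_FOR_OUTPUT,
) -> str:
    """Return 'full-context' if the corpus fits in the window, else 'retrieval'."""
    if estimated_tokens(documents) <= limit - reserved:
        return "full-context"
    return "retrieval"


small_corpus = ["Policy manual text. " * 1_000, "Runbook text. " * 2_000]
large_corpus = ["x" * 4_000_000]

print(choose_strategy(small_corpus))
print(choose_strategy(large_corpus))
```

A corpus that lands near the limit is a candidate for a hybrid approach, where retrieval narrows the material and the long window holds everything that survives the filter.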

For Enterprise Decision Makers:

1. Identify High-Volume, Low-Tolerance Tasks: Target areas where large document analysis carries high risk—regulatory auditing, contract negotiation, scientific literature review. These areas promise the fastest ROI from long-context AI.

2. Foster Cross-Functional Literacy: Ensure that legal, finance, and R&D departments understand that their massive document repositories are now immediately actionable by AI. Bridge the gap between data scientists and domain experts.

Conclusion: The Era of Deep Comprehension

Claude Opus 4.6’s million-token context window signifies the end of the "short-term memory" era for flagship LLMs. By offering massive, reliable context, Anthropic has provided a tool capable of true deep comprehension over vast bodies of specialized information.

This development escalates the competition, pressures infrastructure providers to innovate on efficiency, and, most importantly, opens the door to a new generation of business applications that were previously confined to science fiction. The AI systems we deploy today will soon be expected not just to chat, but to read, understand, and reason across our entire organizational memory, making the ability to handle immense context the new fundamental prerequisite for advanced artificial intelligence.