The Global AI Gauntlet: How the EU AI Act Reshapes Innovation, Risk, and the Future of Tech Deployment

The development of artificial intelligence is no longer a purely technological race; it has become a geopolitical and legal contest. At the center of this contest is the European Union’s AI Act, the world’s first comprehensive legal framework for how AI systems are built, deployed, and overseen. For businesses leveraging modern tech, from small startups to massive multinational corporations, this Act is not just an EU concern; it is fast becoming the *de facto* global standard-setter.

Understanding the AI Act requires moving beyond the headlines about 'banning' certain tech. We must analyze the practical compliance roadmaps, the shifting tectonic plates of global regulation, and the severe technical implications for the industry’s most powerful tools: General Purpose AI (GPAI) models.

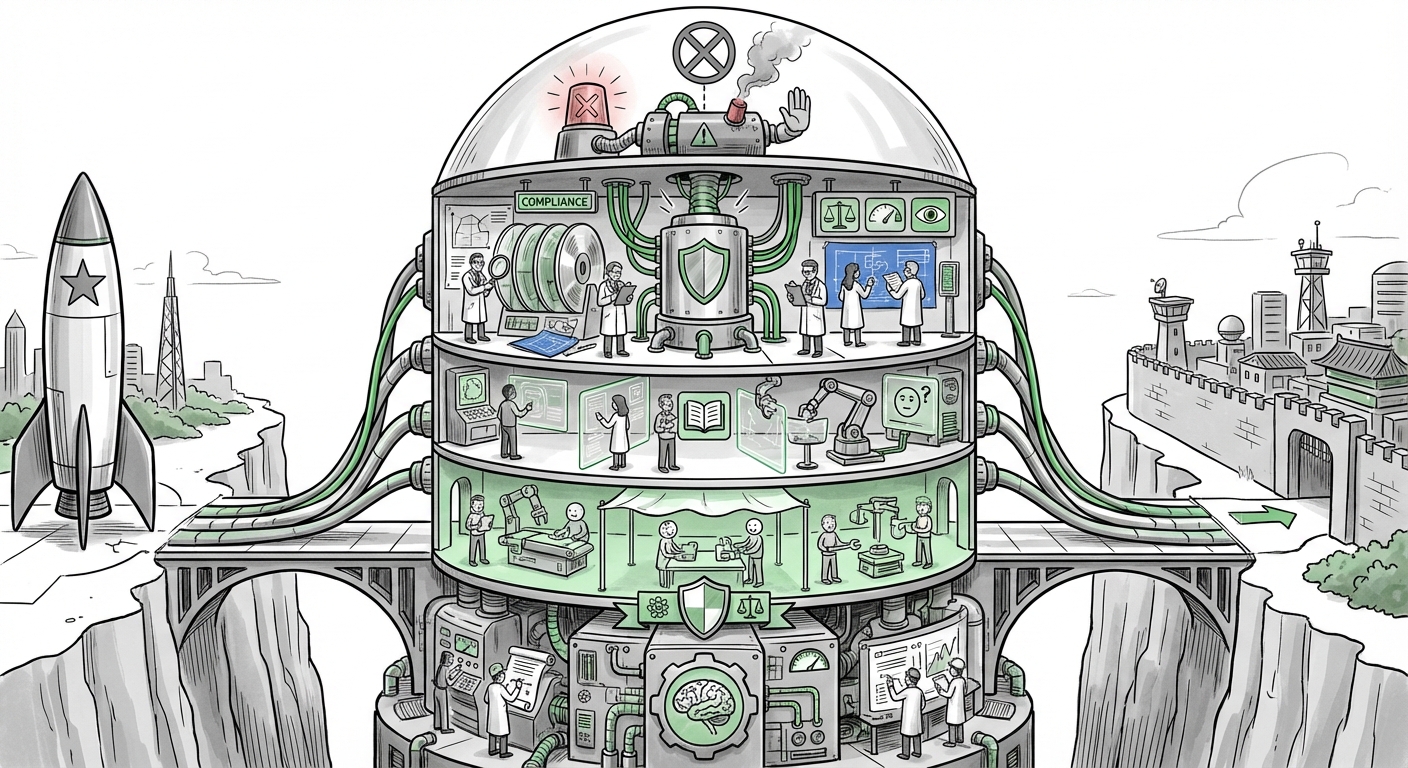

The Regulatory Tiers: From Banned to Business-as-Usual

The core innovation of the EU AI Act is its risk-based approach, segmenting AI applications into four key tiers. This tiered structure dictates the level of scrutiny, transparency, and human oversight required.

- Unacceptable Risk (Banned): These are systems that pose a clear threat to fundamental rights, such as social scoring or manipulative subliminal techniques. For most innovative tech providers, this tier is a hard stop.

- High-Risk: This is the crucial operational category, covering AI used in critical areas like employment screening, medical devices, critical infrastructure management, and law enforcement. These systems face stringent requirements: data quality mandates, robust documentation, human oversight, and conformity assessments before hitting the market.

- Limited Risk: This captures systems requiring transparency so users know they are interacting with AI (e.g., chatbots or deepfakes). This is primarily a compliance and labeling issue.

- Minimal Risk: The vast majority of common AI applications, such as spam filters or video game mechanics, fall here. They face no mandatory obligations under the Act, though providers are encouraged to follow voluntary codes of conduct.
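
One way to picture the four tiers is as a lookup from risk level to headline obligations. The sketch below is an illustrative simplification, not a legal classification tool: `RiskTier`, `OBLIGATIONS`, `obligations_for`, and `may_deploy` are hypothetical names, and the obligation strings only paraphrase the list above.

```python
from enum import Enum


class RiskTier(Enum):
    UNACCEPTABLE = "unacceptable"
    HIGH = "high"
    LIMITED = "limited"
    MINIMAL = "minimal"


# Headline obligations per tier, paraphrased from the list above.
# Hypothetical summary for illustration; the Act's text is authoritative.
OBLIGATIONS = {
    RiskTier.UNACCEPTABLE: ["prohibited: may not be placed on the EU market"],
    RiskTier.HIGH: [
        "data quality controls",
        "technical documentation",
        "human oversight",
        "conformity assessment before market entry",
    ],
    RiskTier.LIMITED: ["disclose to users that they are interacting with AI"],
    RiskTier.MINIMAL: [],  # voluntary codes of conduct only
}


def obligations_for(tier: RiskTier) -> list[str]:
    """Return a copy of the headline obligations for a tier."""
    return list(OBLIGATIONS[tier])


def may_deploy(tier: RiskTier) -> bool:
    """Only the unacceptable tier is an outright ban."""
    return tier is not RiskTier.UNACCEPTABLE


if __name__ == "__main__":
    for tier in RiskTier:
        print(tier.value, may_deploy(tier), obligations_for(tier))
```

In practice, deciding which tier a system belongs to is the hard part and requires legal review; a table like this only helps once that decision has been made.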

What this means for the future: The AI Act solidifies the principle that AI must be trustworthy by design. It forces developers to bake ethics, data integrity, and documentation directly into the development lifecycle, moving compliance from an afterthought to an essential, upfront engineering task.

The Developer's Dilemma: Turning Law into Code

For any company selling AI into Europe, the theoretical framework must translate into practical engineering sprints. The challenge lies in the phased implementation. As noted in analyses focusing on practical implementation (e.g., roadmaps published by major consulting firms), the timelines are aggressive, forcing immediate re-evaluation of existing systems designated as "high-risk."

Actionable Insight for CTOs: Compliance for high-risk systems means establishing verifiable data provenance (where did the training data come from?), maintaining comprehensive technical documentation accessible for years, and ensuring mechanisms for human review are fail-safe. For Small and Medium Enterprises (SMEs), this burden can be disproportionate, demanding accessible compliance tools or regulatory sandboxes to prevent innovation from stalling due to bureaucratic overhead.
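
As a rough illustration of what "verifiable data provenance" could look like in code, the sketch below logs where a dataset came from and stores a content hash so a later audit can confirm the data has not changed. The `ProvenanceRecord` schema and the helper names are assumptions made for this example; the Act does not prescribe a particular format.

```python
import hashlib
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class ProvenanceRecord:
    """One entry in a training-data provenance log (hypothetical schema)."""
    dataset_name: str
    source: str         # where the data came from, e.g. a vendor or internal system
    license_terms: str  # terms under which the data may be used
    sha256: str         # content hash, so later audits can detect changes
    recorded_at: str    # ISO 8601 timestamp in UTC


def fingerprint(data: bytes) -> str:
    """Return the SHA-256 hex digest of the raw dataset bytes."""
    return hashlib.sha256(data).hexdigest()


def record_dataset(name: str, source: str, license_terms: str, data: bytes) -> ProvenanceRecord:
    """Create a provenance entry for a dataset at the time it is ingested."""
    return ProvenanceRecord(
        dataset_name=name,
        source=source,
        license_terms=license_terms,
        sha256=fingerprint(data),
        recorded_at=datetime.now(timezone.utc).isoformat(),
    )


def is_unchanged(record: ProvenanceRecord, data: bytes) -> bool:
    """Check that the data still matches what was logged."""
    return record.sha256 == fingerprint(data)


if __name__ == "__main__":
    rec = record_dataset("screening-set", "internal HR export", "proprietary", b"id,label\n1,0\n")
    print(json.dumps(asdict(rec), indent=2))
```

Writing such records to append-only storage at ingestion time is one way to keep the documentation trail intact for the years an auditor may ask for it.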

The Brussels Effect vs. Innovation Hubs: A Trilateral AI Future

The EU AI Act’s impact extends far beyond Brussels. It is the leading edge of a global regulatory divergence, primarily contrasting with the approaches taken by the United States and China.

The US approach, often described as sector-specific and innovation-friendly, relies heavily on existing regulatory bodies (like the FTC), executive orders, and voluntary guidance to steer development. This fosters rapid iteration but lacks the uniformity of the EU framework. As the Brookings Institution often highlights in its comparative analyses, the US prioritizes maximizing competitive advantage, whereas the EU prioritizes safeguarding citizen rights.

Meanwhile, China employs a model centered on content control and state-mandated alignment, focusing on algorithmic recommendations and deep synthesis outputs to ensure ideological conformity. Analyses comparing the three frameworks note this divergence in focus.

Future Implication: Fragmentation and the "Brussels Effect." Companies operating globally cannot afford to build three different AI models. Faced with the strictest rules globally, many developers will adopt the EU's high standards across their entire product line to ensure universal access. This phenomenon—where regional regulation becomes the *de facto* global standard—is the "Brussels Effect" in action, potentially slowing down deployment in the short term but ensuring a baseline level of trust globally.

The Foundation Model Crucible: New Rules for the Titans

Perhaps the most significant evolution in the final AI Act text concerns General Purpose AI (GPAI) models—the large language models (LLMs) and massive multimodal systems powering modern generative AI. These models are inherently powerful and pervasive, so the regulation needed to evolve past traditional, narrow applications.

The Act singles out GPAI providers, especially those whose models are deemed to pose a "systemic risk" due to their size, capability, or widespread deployment, and imposes significant new obligations on them:

- Systemic Risk Assessment: Providers must conduct model evaluations, stress-test against potential misuse, and report serious incidents to the European AI Office.

- Technical Documentation: They must provide detailed descriptions of training methods, energy consumption, and data handling, a massive undertaking for models trained on petabytes of data.

- Transparency: Providers must demonstrate adherence to copyright law regarding training data, and synthetic content must carry a watermark or label.

These obligations directly affect ML engineers. The focus shifts from purely maximizing performance metrics (like accuracy) to also optimizing for compliance metrics (like data source transparency and adversarial robustness). Technical analysis from major cloud providers indicates that significant redesigns in MLOps pipelines are necessary to track the metadata required for EU compliance.
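
A minimal sketch of how a pipeline might enforce that tracking: a release gate that refuses to promote a model whose run metadata lacks documentation fields. The field names in `REQUIRED_FIELDS` and both helper functions are hypothetical, chosen to mirror the obligations listed above rather than any official schema.

```python
# Hypothetical documentation fields, loosely mirroring the obligations above.
REQUIRED_FIELDS = (
    "training_method",
    "energy_kwh",
    "data_sources",
    "evaluation_results",
)


def missing_fields(run_metadata: dict) -> list[str]:
    """Return required fields that are absent or empty in the run metadata."""
    empty_values = (None, "", [], {})
    return [name for name in REQUIRED_FIELDS if run_metadata.get(name) in empty_values]


def release_gate(run_metadata: dict) -> None:
    """Raise ValueError if the run is missing documentation; otherwise do nothing."""
    missing = missing_fields(run_metadata)
    if missing:
        raise ValueError("documentation incomplete, missing: " + ", ".join(missing))


if __name__ == "__main__":
    run = {
        "training_method": "supervised fine-tuning",
        "energy_kwh": 1250.0,
        "data_sources": ["licensed-corpus-a"],
        "evaluation_results": {},  # empty, so the gate should block this run
    }
    try:
        release_gate(run)
    except ValueError as err:
        print(err)
```

The design choice here is to fail the pipeline early, at promotion time, instead of discovering documentation gaps during an audit.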

When AI Fails: The New Era of Legal Accountability

Regulation inherently alters risk. If an AI system malfunctions—a self-driving car crashes, or a diagnostic tool misses a tumor—who pays? The EU AI Act, coupled with related discussions on AI product liability, is attempting to eliminate the legal grey zone surrounding autonomous decisions.

Before regulation, proving fault was murky. Was it the data provider, the model developer, or the end-user deployer? Legal experts focusing on AI liability frameworks suggest the Act clearly delineates roles, making it easier to hold the appropriate entity accountable. Providers must now actively manage the risk of their models being used outside their intended parameters.

Future Trajectory for Insurance: This increased clarity is driving a parallel industry: AI Insurance. Underwriters can now better price the risk of high-risk deployments because the regulatory standards define the expected level of diligence. If a company deploys a system that fails to meet the documented safety standards of the Act, proving negligence becomes far easier, raising insurance premiums or even leading to uninsurability for non-compliant solutions.

Practical Implications: Building Trust into the Stack

For businesses today, the message is clear: **Trust is the new currency.** AI systems that are opaque, poorly documented, or built on questionable data will be economically unviable in major markets.

For Developers: Embrace Explainable AI (XAI) not as a niche tool, but as a core function. If you cannot explain *why* a high-risk decision was made to an auditor, you cannot deploy it.
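
To make "explain *why*" concrete, here is a deliberately simple sketch for a linear scoring model, where each feature's contribution is just its weight times its value. The function name, feature names, and weights are invented for illustration; non-linear models would need dedicated attribution methods that this example does not cover.

```python
def explain_linear_decision(weights, features, bias=0.0, threshold=0.0):
    """Break a linear score into per-feature contributions, largest first."""
    # Contribution of each feature is weight * value (valid only for linear models).
    contributions = {name: weights[name] * value for name, value in features.items()}
    score = bias + sum(contributions.values())
    ranked = sorted(contributions.items(), key=lambda item: abs(item[1]), reverse=True)
    return {"score": score, "approved": score >= threshold, "ranked": ranked}


if __name__ == "__main__":
    # Hypothetical screening model with two features.
    weights = {"years_experience": 0.5, "employment_gaps": -1.0}
    applicant = {"years_experience": 4, "employment_gaps": 1}
    result = explain_linear_decision(weights, applicant)
    print("score:", result["score"], "approved:", result["approved"])
    for name, contribution in result["ranked"]:
        print(f"  {name}: {contribution:+.2f}")
```

An auditor reading this output can see which inputs drove the decision and in which direction, which is the kind of trace that opaque systems cannot provide.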

For Business Leaders: Implement dedicated AI Governance Boards. These boards must bridge the gap between the legal department (understanding the Act’s requirements), the engineering team (implementing the technical fixes), and the executive suite (managing financial and reputational exposure).

For Society: The long-term implication is a slowing, but perhaps safer, technological ascent. We trade breakneck, unregulated speed for predictable, rights-respecting deployment. This regulatory friction is designed to ensure that AI development serves human well-being rather than simply maximizing speed or profit at any cost.

Conclusion: From Compliance Checkbox to Competitive Advantage

The EU AI Act is more than just a set of rules; it is a strategic blueprint for the next decade of technological development. It demands that we stop treating AI systems as magical black boxes and start treating them as sophisticated, governed products subject to strict quality control.

While the initial implementation will involve significant friction—particularly concerning the technical documentation load for GPAI models and the cost of compliance for SMEs—those who adapt fastest will gain a significant competitive edge. Proactive compliance today is risk mitigation tomorrow. The businesses that master these new standards of transparency, data integrity, and liability management will not merely survive the global AI gauntlet; they will define the next generation of trustworthy technology.