The Global AI Reckoning: Decoding the EU AI Act's Impact on Innovation, Compliance, and Future Tech

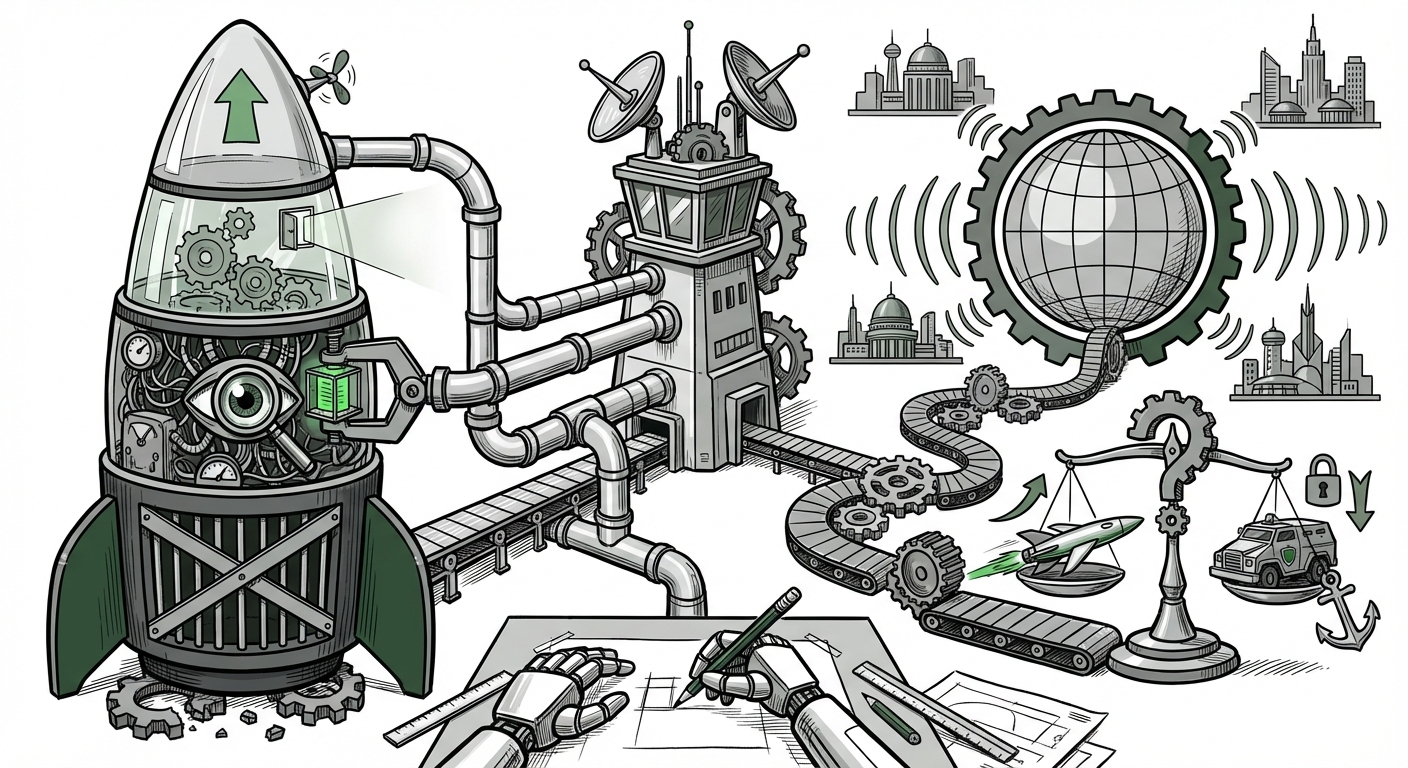

The European Union’s Artificial Intelligence Act (AI Act) is not merely a regional piece of legislation; it is a foundational text reshaping the global trajectory of artificial intelligence. By adopting a comprehensive, risk-based regulatory framework, the EU has fired the starting gun on what many are calling the age of Trustworthy AI. For businesses that build, deploy, or even simply use AI tools, this Act signifies a profound shift from a "move fast and break things" ethos to one rooted in accountability and fundamental rights.

As technology analysts, we need to look beyond the headlines, and beyond the initial focus on simple business risk, to understand the deep, structural changes this legislation introduces. To gain a full picture, we must examine its geopolitical ripple effects, the hard reality of technical compliance, its grip on the newest frontier (Generative AI), and the ultimate trade-off between safety and speed.

The "Brussels Effect": Geopolitics and the Global Competitive Landscape

The EU has historically wielded immense soft power through regulation—a phenomenon dubbed the "Brussels Effect." Whether with GDPR reshaping data privacy worldwide or safety standards influencing global manufacturing, companies often find it easier to adopt the strictest standard globally rather than maintain multiple compliance regimes.

The AI Act is positioned to be the ultimate test of this effect. It categorizes AI applications based on risk: unacceptable risk (banned), high-risk (subject to strict oversight), limited risk (transparency required), and minimal risk (no new obligations). This tiered system immediately forces global tech giants to evaluate their product roadmaps through a European lens.

This is where the international reaction becomes critical, and the US response shows a clear divergence. The US has favored a sector-specific, innovation-first approach, often relying on executive orders and existing regulatory bodies rather than sweeping, horizontal legislation like the EU’s. This creates potential friction:

- Compliance Costs: For a US company selling software across the Atlantic, integrating EU compliance means higher development costs, potentially delaying market entry elsewhere until standards align.

- The Trust Advantage: Conversely, companies that master EU compliance early may gain a competitive edge globally, marketing their products as "Certified Trustworthy" to risk-averse consumers and governments worldwide.

In essence, the AI Act dictates that if you want access to one of the world’s largest single markets, you must align with its ethical and safety guardrails. This is AI governance as a geopolitical tool.

The Technical Tightrope: Practical Compliance for High-Risk Systems

For Chief Technology Officers (CTOs) and Chief Risk Officers, the true challenge lies not in reading the law but in implementing it. For the systems the Act classifies as "high-risk," practical compliance is a significant technical hurdle.

What makes a system high-risk? If an AI determines whether someone gets a loan, is hired for a job, or receives necessary public services, it falls under this heavy scrutiny. Compliance demands are intense, extending far beyond merely documenting the code:

- Data Governance: Providers must show that their training, validation, and test data are relevant, sufficiently representative, and examined for biases that could lead to discriminatory outcomes. In practice, this requires systematic auditing of massive datasets.

- Traceability and Logging: High-risk systems must automatically record events while they operate, so that an output can be traced back to the inputs and conditions that produced it. In practice this calls for robust, tamper-evident logging infrastructure, which is a massive undertaking for legacy systems.

- Human Oversight: The Act requires that these systems be designed to allow meaningful human intervention and override. The system cannot be a true "black box."
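The traceability requirement above can be illustrated with a hash-chained decision log. This is a minimal sketch rather than a compliance-grade implementation: the Act does not prescribe a log format, and the `DecisionLog` class, its record fields, and the choice of SHA-256 chaining are all assumptions made for illustration.

```python
import hashlib
import json
from dataclasses import dataclass, field
from typing import Optional

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: dict) -> str:
    """Hash a record body deterministically (keys sorted before serializing)."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


@dataclass
class DecisionLog:
    """Hypothetical append-only log: each entry commits to the one before it."""
    entries: list = field(default_factory=list)

    def append(self, inputs: dict, output: str, reviewer: Optional[str] = None) -> dict:
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS_HASH
        body = {
            "inputs": inputs,        # what the model saw
            "output": output,        # what the model decided
            "reviewer": reviewer,    # human who confirmed or overrode, if any
            "prev_hash": prev_hash,  # link to the previous entry
        }
        entry = {**body, "hash": _digest(body)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every hash; any edited or reordered entry breaks the chain."""
        prev_hash = GENESIS_HASH
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev_hash"] != prev_hash or _digest(body) != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True


log = DecisionLog()
log.append({"income": 52000, "term_months": 36}, "approve")
log.append({"income": 18000, "term_months": 60}, "refer", reviewer="analyst-7")
print(log.verify())
```

Chaining makes tampering detectable, not impossible. A production system would also need to anchor the latest hash somewhere the application cannot rewrite.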

This pushes technical teams to adopt practices akin to those in high-stakes engineering fields like aerospace. The focus shifts from optimization to verifiability. Auditors and compliance teams are now asking for Model Cards and Datasheets for Datasets as standard artifacts, treating AI components with the same rigor as critical hardware.

The New Frontier: Taming General Purpose AI (GPAI) and Foundation Models

The technology moved incredibly fast, and the EU AI Act had to sprint to catch up with the rise of powerful Large Language Models (LLMs) and multimodal systems. Understanding the Act's transparency requirements for General Purpose AI (GPAI) models is essential for any entity building or deploying models like GPT-4 or comparable open-source alternatives.

The Act recognizes that a foundation model, which can be adapted for countless uses, can pose systemic risk through its capabilities alone, independent of any single application. The regulation splits GPAI obligations into two tiers:

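- All GPAI Providers: Must meet baseline transparency obligations, including technical documentation of the model's architecture, capabilities, and limitations, and the information downstream providers need to integrate the model responsibly.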

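- Systemic GPAI Providers: If a model is powerful enough, it faces enhanced obligations. The main yardstick is the computational resources used for training: the Act presumes systemic risk when cumulative training compute exceeds 10^25 floating-point operations. The enhanced obligations include conducting model evaluations, stress-testing for systemic risks, and ensuring cybersecurity resilience.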
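To give a feel for the compute criterion, here is a rough sketch. The threshold is the Act's presumption of systemic risk above 10^25 floating-point operations of cumulative training compute. The estimate of roughly 6 operations per parameter per training token is a common rule of thumb for dense transformer models and is an assumption here, not something the Act specifies. The function names are hypothetical.

```python
# Presumption threshold for systemic risk: cumulative training compute in FLOPs.
SYSTEMIC_RISK_FLOP_THRESHOLD = 1e25


def estimate_training_flops(n_parameters: float, n_training_tokens: float) -> float:
    """Rule-of-thumb estimate (assumption): ~6 FLOPs per parameter per token."""
    return 6.0 * n_parameters * n_training_tokens


def presumed_systemic_risk(training_flops: float) -> bool:
    """True when the compute estimate is strictly above the presumption threshold."""
    return training_flops > SYSTEMIC_RISK_FLOP_THRESHOLD


# Two hypothetical models, sizes chosen only for illustration.
small = estimate_training_flops(n_parameters=7e9, n_training_tokens=2e12)
large = estimate_training_flops(n_parameters=1e12, n_training_tokens=1e13)

for label, flops in (("small", small), ("large", large)):
    print(f"{label}: {flops:.2e} FLOPs, presumed systemic risk: {presumed_systemic_risk(flops)}")
```

An estimate like this is only a first screen. A provider near the threshold would need actual compute accounting, and compute is not the only route by which a model can be treated as systemic.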

For product managers developing applications atop these models, this means accountability still flows down. While the model creator handles systemic risk mitigation, the deployer must ensure their specific use-case remains within the boundaries defined by the model provider and does not inadvertently create a prohibited application (like social scoring).

Innovation vs. Safety: The Great Trade-Off Debate

The most spirited debate surrounding the AI Act centers on whether this massive regulatory apparatus will crush innovation within the EU bloc. The tension between AI innovation and ethical alignment frames the core challenge for the coming decade.

Skeptics argue that the compliance costs are prohibitive, especially for SMEs and startups attempting to innovate in high-risk areas. If conformity assessments become the bottleneck, smaller European players might lag behind US or Asian competitors operating in less regulated spaces.

However, the counterargument emphasizes the long-term value proposition: Trustworthy AI is sustainable AI. By embedding ethics and safety standards upfront, the EU hopes to cultivate an AI ecosystem that is inherently more resilient to public backlash and future regulatory crises. This approach aims to create market differentiation based on verified safety, rather than just speed.

The future of AI development in Europe may look different: less focus on rapidly deploying bleeding-edge, untested models, and more focus on robust, explainable, and narrowly tailored solutions where the legal and ethical risks are clearly understood and managed.

Actionable Insights for Navigating the New Reality

The EU AI Act is transitioning from a future threat to an immediate operational mandate. Here are concrete steps businesses must take now, regardless of their primary geographic location, if they interact with the European market or any organization sensitive to global regulatory trends:

1. Establish an AI Governance Council (AGC)

This cross-functional team must include legal, compliance, engineering, and risk management. Its first task is mapping every current and planned AI system against the four risk categories of the Act. You must know which of your tools are "high-risk" today.
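A first-pass version of that mapping exercise might look like the sketch below. It is a triage aid only: the tag names, the `TAG_TO_TIER` table, and the `triage` function are hypothetical, and real classification depends on the Act's text and legal review, not on keyword tags.

```python
from dataclasses import dataclass
from enum import Enum


class RiskTier(Enum):
    UNACCEPTABLE = "unacceptable"  # banned
    HIGH = "high"                  # strict oversight
    LIMITED = "limited"            # transparency required
    MINIMAL = "minimal"            # no new obligations


# Hypothetical tag-to-tier table, loosely based on the examples in this article.
TAG_TO_TIER = {
    "social_scoring": RiskTier.UNACCEPTABLE,
    "credit_decision": RiskTier.HIGH,
    "hiring": RiskTier.HIGH,
    "public_services_eligibility": RiskTier.HIGH,
    "chatbot": RiskTier.LIMITED,
}

# Most severe tier first, so a system inherits its worst-case tag.
SEVERITY_ORDER = (RiskTier.UNACCEPTABLE, RiskTier.HIGH, RiskTier.LIMITED, RiskTier.MINIMAL)


@dataclass(frozen=True)
class AISystem:
    name: str
    tags: tuple


def triage(system: AISystem) -> RiskTier:
    """Return the most severe tier implied by any of the system's tags."""
    tiers = {TAG_TO_TIER.get(tag, RiskTier.MINIMAL) for tag in system.tags}
    for tier in SEVERITY_ORDER:
        if tier in tiers:
            return tier
    return RiskTier.MINIMAL  # no tags at all


inventory = [
    AISystem("resume-screener", ("hiring", "chatbot")),
    AISystem("support-assistant", ("chatbot",)),
    AISystem("spam-filter", ("email",)),
]
for system in inventory:
    print(system.name, "->", triage(system).value)
```

Note that unknown tags fall through to the minimal tier in this sketch. A governance council would more likely want unrecognized systems flagged for manual review.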

2. Prioritize Documentation Maturity

Treat documentation not as a chore, but as intellectual property insurance. Invest heavily in tools that automate the generation of technical documentation required for high-risk systems, focusing on data lineage, model testing reports, and human intervention protocols.
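As a hedged illustration of what automating documentation can mean at the smallest scale, the sketch below keeps the three items named above (data lineage, test reports, human intervention protocol) in one structured record, reports gaps, and renders a plain-text summary. The `SystemRecord` fields and function names are hypothetical and do not reflect the Act's actual documentation template.

```python
from dataclasses import dataclass, field


@dataclass
class SystemRecord:
    """Hypothetical single source of truth for one AI system's documentation."""
    name: str
    intended_purpose: str = ""
    data_sources: list = field(default_factory=list)   # data lineage
    test_reports: list = field(default_factory=list)   # model testing reports
    human_override_procedure: str = ""                 # human intervention protocol


REQUIRED_FIELDS = (
    "intended_purpose",
    "data_sources",
    "test_reports",
    "human_override_procedure",
)


def missing_fields(record: SystemRecord) -> list:
    """List required fields that are still empty, in a stable order."""
    return [name for name in REQUIRED_FIELDS if not getattr(record, name)]


def render(record: SystemRecord) -> str:
    """Render the record as plain text, marking empty sections explicitly."""
    def bullets(items: list) -> list:
        return [f"  - {item}" for item in items] or ["  (none recorded)"]

    lines = [
        f"System: {record.name}",
        f"Intended purpose: {record.intended_purpose or '(missing)'}",
        "Data lineage:",
        *bullets(record.data_sources),
        "Test reports:",
        *bullets(record.test_reports),
        f"Human override: {record.human_override_procedure or '(missing)'}",
    ]
    return "\n".join(lines)


record = SystemRecord(name="loan-scorer", intended_purpose="Rank loan applications for review")
record.data_sources.append("applications table, 2021 to 2023 snapshot")
print(render(record))
print("Still missing:", missing_fields(record))
```

Generating the document from a record like this, and failing a release check when `missing_fields` is non-empty, keeps the paperwork from drifting away from the system it describes.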

3. Rethink Generative AI Procurement

If you are using APIs from major GPAI providers, demand transparency regarding their compliance efforts for systemic risks. Furthermore, mandate internal guardrails (filters, usage policies, and validation steps) to ensure your application of that GPAI remains low-risk or, where the deployment is high-risk, that you knowingly take on the corresponding obligations.
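The three guardrail types mentioned above (filters, usage policies, validation steps) can be sketched as a thin wrapper around whatever model client you use. Everything here is an assumption for illustration: `guarded_call`, `PolicyViolation`, and the blocked-topic list are hypothetical, and the model is passed in as a plain callable so that no real provider API is implied.

```python
from typing import Callable

# Hypothetical usage policy: prompts mentioning these topics are refused outright.
BLOCKED_TOPICS = ("social scoring",)


class PolicyViolation(Exception):
    """Raised when a prompt falls outside the internal usage policy."""


def guarded_call(
    prompt: str,
    model: Callable[[str], str],
    max_output_chars: int = 2000,
) -> str:
    # 1. Input filter: enforce the usage policy before the model is called.
    lowered = prompt.lower()
    for topic in BLOCKED_TOPICS:
        if topic in lowered:
            raise PolicyViolation(f"prompt touches blocked topic: {topic!r}")

    # 2. Call the underlying model (any callable taking and returning a string).
    response = model(prompt)

    # 3. Output validation: reject empty answers, cap the length.
    if not response.strip():
        raise ValueError("model returned an empty response")
    return response[:max_output_chars]


def fake_model(prompt: str) -> str:
    """Stand-in for a real GPAI API client."""
    return f"Summary of: {prompt}"


print(guarded_call("Summarize this supplier contract", fake_model))
```

Substring matching is a deliberately crude filter. The point of the sketch is the structure, with policy checks before the call and validation after it, not the strength of any single check.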

4. Look Globally, Act Locally (on Risk)

Even if your operations are outside the EU, anticipate that clients, partners, and investors will increasingly demand adherence to baseline principles found in the Act. Adopting the strictest common standard for data ethics and bias mitigation is no longer optional; it is a prerequisite for dealing with modern enterprises.

Conclusion: The Dawn of Accountable AI

The EU AI Act represents a watershed moment. It formalizes the understanding that AI, particularly powerful AI, is a societal infrastructure, not just a consumer gadget. The legislation forces a necessary maturation process upon the technology sector. It is a complex, demanding framework that will undoubtedly cause initial friction, particularly for startups focused purely on speed.

However, this moment is fundamentally about building systems that serve humanity responsibly. The future of AI will not belong solely to the fastest builders, but to the most trustworthy ones. By establishing clear boundaries and mandatory accountability structures, the EU is actively defining the conditions under which the next generation of groundbreaking, yet safe, artificial intelligence will emerge and thrive globally.