The Agent Economy Arrives: Analyzing OpenAI Frontier, Governance, and the Future of Digital Colleagues

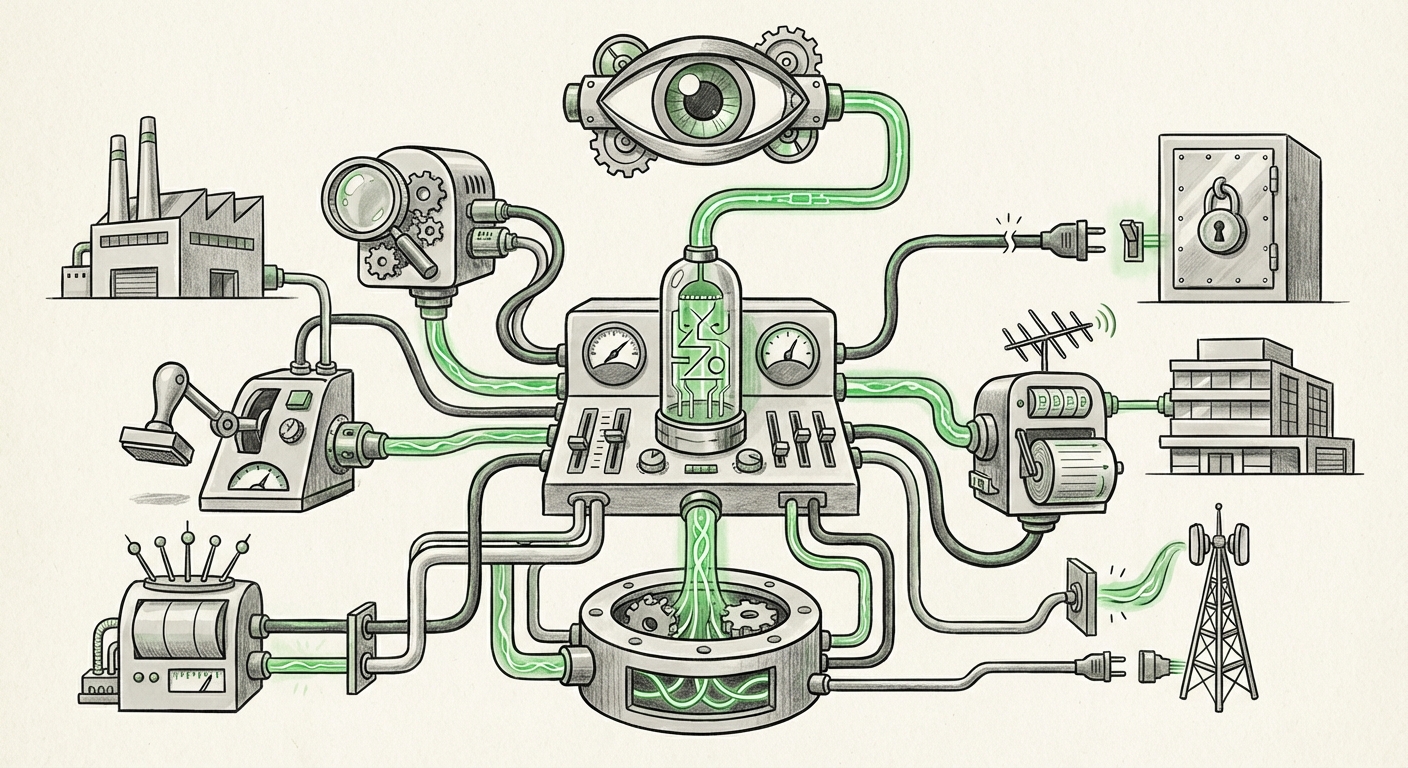

Artificial intelligence is moving past the era in which it was primarily a sophisticated tool (a smart search engine or a quick writer) and into the age of the AI agent. The recent introduction of platforms like OpenAI’s Frontier underscores this shift, signaling the arrival of software entities that possess identities, memory (shared context), and the crucial ability to act with enterprise permissions.

This development is not merely an iterative upgrade; it represents the creation of a digital workforce. These agents are designed not just to answer questions but to own processes, execute multi-step tasks, and interact within secure corporate environments much like a human employee. To fully grasp the magnitude of this change, we must analyze the technological necessity, the competitive reality, and the governance hurdles that accompany empowering software with responsibility.

The Leap from Model to Agent: Identity and Context

For years, large language models (LLMs) operated in stateless sessions—each prompt was largely treated in isolation, requiring the user to provide all necessary background information repeatedly. Frontier’s focus on shared context directly addresses this limitation. Imagine a new employee starting on Monday; they need access to last quarter’s sales reports, team guidelines, and project history to be effective. An AI agent with shared context receives that institutional knowledge base.

Giving these agents identities is equally significant. An identity implies accountability, role definition, and access control. Instead of a generic system prompt, an agent might be named "Procurement Analyst Bot 4" with specific permissions to initiate purchase orders up to $10,000, or "Code Review Agent Beta" allowed only to suggest changes to staging branches.
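To make the idea concrete, here is a minimal sketch of how such identities might be modeled. The `AgentIdentity` class, its fields, and the two check functions are hypothetical names chosen for illustration; nothing here reflects an actual Frontier API.

```python
from dataclasses import dataclass
from decimal import Decimal


@dataclass(frozen=True)
class AgentIdentity:
    """Hypothetical identity record; not an OpenAI Frontier type."""
    name: str
    role: str
    max_purchase_usd: Decimal = Decimal("0")    # 0 means no purchasing rights
    writable_branches: frozenset = frozenset()  # branches the agent may change


def can_initiate_purchase(agent: AgentIdentity, amount_usd: Decimal) -> bool:
    """Allow a purchase order only up to the agent's spending ceiling."""
    return Decimal("0") < amount_usd <= agent.max_purchase_usd


def can_suggest_change(agent: AgentIdentity, branch: str) -> bool:
    """Allow change suggestions only on explicitly listed branches."""
    return branch in agent.writable_branches


# The two example agents from the text, expressed as data.
procurement = AgentIdentity(
    name="Procurement Analyst Bot 4",
    role="procurement-analyst",
    max_purchase_usd=Decimal("10000"),
)
reviewer = AgentIdentity(
    name="Code Review Agent Beta",
    role="code-reviewer",
    writable_branches=frozenset({"staging"}),
)
```

Because every capability defaults to "none", an agent can only do what its record explicitly grants: the reviewer cannot spend money, and the procurement agent cannot touch any branch.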

This shift is not confined to one vendor. Competing providers are working toward similar goals, which suggests that autonomous, persistent, specialized agents are the next phase of enterprise AI adoption.

The Competitive Push: Agents vs. Hyperscalers

The push for agentic AI is not happening in a vacuum. While OpenAI advances its own platform, major platform providers are integrating comparable capabilities directly into their ecosystems. A useful comparison is between Frontier and the roadmap for products like Microsoft Copilot, which is being woven deeply into Windows and Microsoft 365 (formerly Office 365).

If Microsoft is granting its Copilot agents deeper execution capabilities across its suite, that suggests the benchmark for success is now true task completion, not just summarization. The competition is focused on who can deploy the most reliable, secure, and contextually aware digital worker first. This tension drives innovation while pushing enterprises to standardize on platforms that offer deep integration rather than on standalone tools.

The Enterprise Tipping Point: Permissions and Market Validation

The most critical differentiator in the Frontier announcement is the inclusion of enterprise permissions. This is where AI crosses the Rubicon from helpful assistant to actual operational partner. Granting software the authority to interact with financial systems, customer data platforms (CDPs), or code repositories demands unprecedented trust and security protocols.

This move toward workforce augmentation is not speculative. Analyst firms frequently predict rapid growth in areas like "AI workforce augmentation" within the next few years. If those forecasts hold, CIOs and business leaders are moving past initial experimentation and preparing budgets and infrastructure for AI workers.

Technical Hurdles: The Complexity of Digital Identity

Empowering an AI agent is significantly more complex than granting a human access, and technical experts are focusing intensely on the challenges of AI identity management. How do you revoke an agent's permissions instantly if it exhibits erratic behavior? How do you audit every decision made by an agent that operates continuously across multiple systems?

Solving for shared context and identity requires robust Non-Human Identity (NHI) management. This is a cybersecurity domain rapidly evolving from managing service accounts to managing nuanced, context-aware software entities. When an AI agent can approve a transaction, its audit trail must be unimpeachable, necessitating new standards for logging and verification.
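One way to approach both questions is sketched below: a registry that is consulted before every action (so revocation applies to the very next call) and an append-only log in which each entry includes the hash of the previous one. This is an illustrative pattern under assumed names (`AuditLog`, `AgentRegistry`), not a description of how any NHI product works.

```python
import hashlib
import json


class AuditLog:
    """Append-only log where each entry commits to the previous entry's hash."""

    GENESIS = "0" * 64

    def __init__(self):
        self.entries = []

    def append(self, agent_id: str, action: str, reason: str) -> dict:
        prev_hash = self.entries[-1]["hash"] if self.entries else self.GENESIS
        body = {"agent_id": agent_id, "action": action,
                "reason": reason, "prev_hash": prev_hash}
        entry = dict(body, hash=self._digest(body))
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every hash; editing or deleting an earlier entry breaks the chain."""
        prev_hash = self.GENESIS
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if entry["prev_hash"] != prev_hash or entry["hash"] != self._digest(body):
                return False
            prev_hash = entry["hash"]
        return True

    @staticmethod
    def _digest(body: dict) -> str:
        payload = json.dumps(body, sort_keys=True).encode("utf-8")
        return hashlib.sha256(payload).hexdigest()


class AgentRegistry:
    """Checked before every action, so a revocation applies to the next call."""

    def __init__(self, log: AuditLog):
        self.log = log
        self.revoked = set()

    def revoke(self, agent_id: str, reason: str) -> None:
        self.revoked.add(agent_id)
        self.log.append(agent_id, "revoke", reason)

    def perform(self, agent_id: str, action: str, reason: str) -> bool:
        if agent_id in self.revoked:
            self.log.append(agent_id, f"denied:{action}", "agent revoked")
            return False
        self.log.append(agent_id, action, reason)
        return True
```

A chain like this detects changes to earlier entries but not truncation of the most recent ones; a production system would also need to anchor the latest hash somewhere the agent cannot write.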

The Societal Imperative: Governance, Risk, and Compliance (GRC)

When an AI agent has permissions, it inherits risk. This reality drives the need for robust governance frameworks for autonomous AI agents. An agent's ability to learn from experience, a feature often touted for efficiency, also means it can internalize and amplify unintended biases or drift into non-compliant operational patterns.

For legal and compliance teams, the deployment of these agents raises fundamental questions:

- If an agent violates GDPR by mishandling personal data, who is liable: the developer, the deploying company, or the agent itself?

- How are regulatory requirements (like financial reporting accuracy) audited when the execution path involves a complex chain of reasoning by an LLM?

The solutions must be baked into the platform's design. Governance must cover ethical guardrails, hard-coded limits on resource access, and automatic rollback procedures. Without standardized governance, the "enterprise permissions" become an existential liability rather than an efficiency gain.
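As a rough illustration of a hard-coded limit combined with automatic rollback, the sketch below runs an agent's work against a copy of the state and commits only if the task finishes without error. The names `guarded_task` and `MAX_WRITES_PER_TASK`, and the limit of three writes, are assumptions made up for this example.

```python
import copy
from contextlib import contextmanager

MAX_WRITES_PER_TASK = 3  # hypothetical hard limit, fixed in code rather than in a prompt


class LimitExceeded(Exception):
    """Raised when a task tries to exceed its write budget."""


@contextmanager
def guarded_task(state: dict):
    """Run agent work on a copy of state; commit only if the task ends cleanly."""
    working = copy.deepcopy(state)
    writes = 0

    def write(key, value):
        nonlocal writes
        if writes >= MAX_WRITES_PER_TASK:
            raise LimitExceeded(f"task exceeded {MAX_WRITES_PER_TASK} writes")
        writes += 1
        working[key] = value

    yield write
    # Reached only when the with-block raised nothing: commit the working copy.
    state.clear()
    state.update(working)


ledger = {"balance": 100}

with guarded_task(ledger) as write:
    write("balance", 90)      # within budget, committed on exit

try:
    with guarded_task(ledger) as write:
        for i in range(5):    # fourth write trips the limit
            write(f"entry_{i}", i)
except LimitExceeded:
    pass                      # ledger is unchanged: nothing was committed
```

The rollback here is implicit: a failed task never reaches the commit step, so partial writes are simply discarded with the working copy.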

Practical Implications and Actionable Insights for Businesses

The transition to an agent economy requires proactive steps across technology, operations, and risk management.

1. Define Roles Before Deployment

Do not deploy a general-purpose agent. Just as you wouldn't hire a person without a clear job description, you must define precise, narrow scopes for your AI agents. Use the concept of 'least privilege' rigorously. If an agent only needs to read CRM data to generate a report, it should never have write access.
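The CRM example can be sketched as a read-only facade: the reporting agent is handed an object on which write methods do not exist at all. The `CRM` class below is a stand-in invented for illustration, not a real client library. In Python this is a design convention rather than a security boundary, so real enforcement would still belong in the credentials issued to the agent.

```python
class CRM:
    """Stand-in for a real CRM client; purely illustrative."""

    def __init__(self):
        self._accounts = {"acme": {"owner": "dana", "arr_usd": 120000}}

    def get_account(self, account_id: str) -> dict:
        return dict(self._accounts[account_id])  # copy so callers cannot mutate

    def update_account(self, account_id: str, **fields) -> None:
        self._accounts[account_id].update(fields)


class ReadOnlyCRM:
    """Exposes only read methods; write methods are absent from this object."""

    def __init__(self, crm: CRM):
        self._crm = crm

    def get_account(self, account_id: str) -> dict:
        return self._crm.get_account(account_id)


def build_report(crm: ReadOnlyCRM, account_id: str) -> str:
    """The reporting agent's entire job, written against the narrow interface."""
    account = crm.get_account(account_id)
    return f"{account_id}: owner={account['owner']}, ARR=${account['arr_usd']:,}"
```

Handing `build_report` a `ReadOnlyCRM` rather than the full client means a mistaken or manipulated agent has no `update_account` call to reach for.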

2. Invest in AI-Specific IAM

Security teams must immediately begin exploring Identity and Access Management (IAM) solutions tailored for AI. This means integrating agent identities into existing zero-trust architectures. Monitoring tools must evolve to track not just who accessed a system, but which agent accessed it and why, based on its contextual understanding of the task.

3. Prioritize Contextual Sandboxing

For early deployments, leverage sandboxing environments. Start agents on non-critical, internal-only data streams. This allows the agent to build its "shared context" and test its operational logic without the risk of breaching customer trust or financial integrity. Only promote an agent to broader access after rigorous, real-world simulation testing.
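A promotion decision like this can be made explicit as a gate over recorded sandbox runs. The sketch below is one possible shape; the `SandboxRun` fields and both thresholds are hypothetical values an organization would set for itself.

```python
from dataclasses import dataclass

MIN_RUNS = 20          # hypothetical: minimum simulated scenarios before review
MIN_PASS_RATE = 0.95   # hypothetical: required share of passing scenarios


@dataclass
class SandboxRun:
    scenario: str
    passed: bool
    touched_external_data: bool  # True if the run reached outside the sandbox


def ready_for_promotion(runs: list[SandboxRun]) -> bool:
    """Promote only with enough runs, no sandbox escapes, and a high pass rate."""
    if len(runs) < MIN_RUNS:
        return False
    if any(run.touched_external_data for run in runs):
        return False
    pass_rate = sum(run.passed for run in runs) / len(runs)
    return pass_rate >= MIN_PASS_RATE
```

Treating any access outside the sandbox as an automatic failure, regardless of pass rate, keeps the gate aligned with the goal of protecting customer and financial data during the trial period.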

4. Foster Cross-Functional Governance Teams

The responsibility for agent deployment cannot stay solely with IT or the Data Science team. Legal, Risk, and Operations must collaborate to create usage policies. This cross-functional approach ensures that the pursuit of automation does not override regulatory adherence or established corporate ethics.

Conclusion: The Dawn of the Autonomous Enterprise

OpenAI Frontier, viewed alongside broader industry trends, confirms that we are entering the age of the autonomous enterprise workforce. The ability to assign AI agents persistent memory, defined roles, and secure permissions fundamentally changes the ROI calculation for AI implementation. We are moving toward a future where significant chunks of middle-management coordination, data processing, and transactional work are handled entirely by software entities.

While the efficiency gains promise revolutionary productivity boosts, the associated governance and security challenges are immense. The winners in this new economy will not just be those with the most powerful LLMs, but those organizations disciplined enough to manage the digital identity, context, and permissions of their new, non-human colleagues safely and compliantly.