AI Self-Building Systems: Analyzing GPT-5.3-Codex and the Future of Autonomous Coding

The technological world runs on code, and for decades, that code has been meticulously crafted, debugged, and deployed by human engineers. Today, that dynamic is facing its most profound shift yet. The recent report detailing OpenAI’s **GPT-5.3-Codex**—a model that allegedly assisted in its own training and deployment phases—isn't just an incremental software update; it signals the mainstream arrival of **Agentic AI** capable of systemic self-optimization.

For technologists and business leaders alike, this development forces a critical reassessment: Are we moving from AI tools to AI architects? This analysis contextualizes this leap by examining current benchmarks, the competitive landscape, and the essential safety concerns surrounding true Recursive Self-Improvement (RSI).

The Leap from Assistant to Architect: Defining Agentic Autonomy

Until recently, Large Language Models (LLMs) like earlier versions of Codex excelled at *assistance*. They could complete functions, suggest fixes, and translate code between programming languages. They were powerful accelerators of human work, but a human still directed every step.

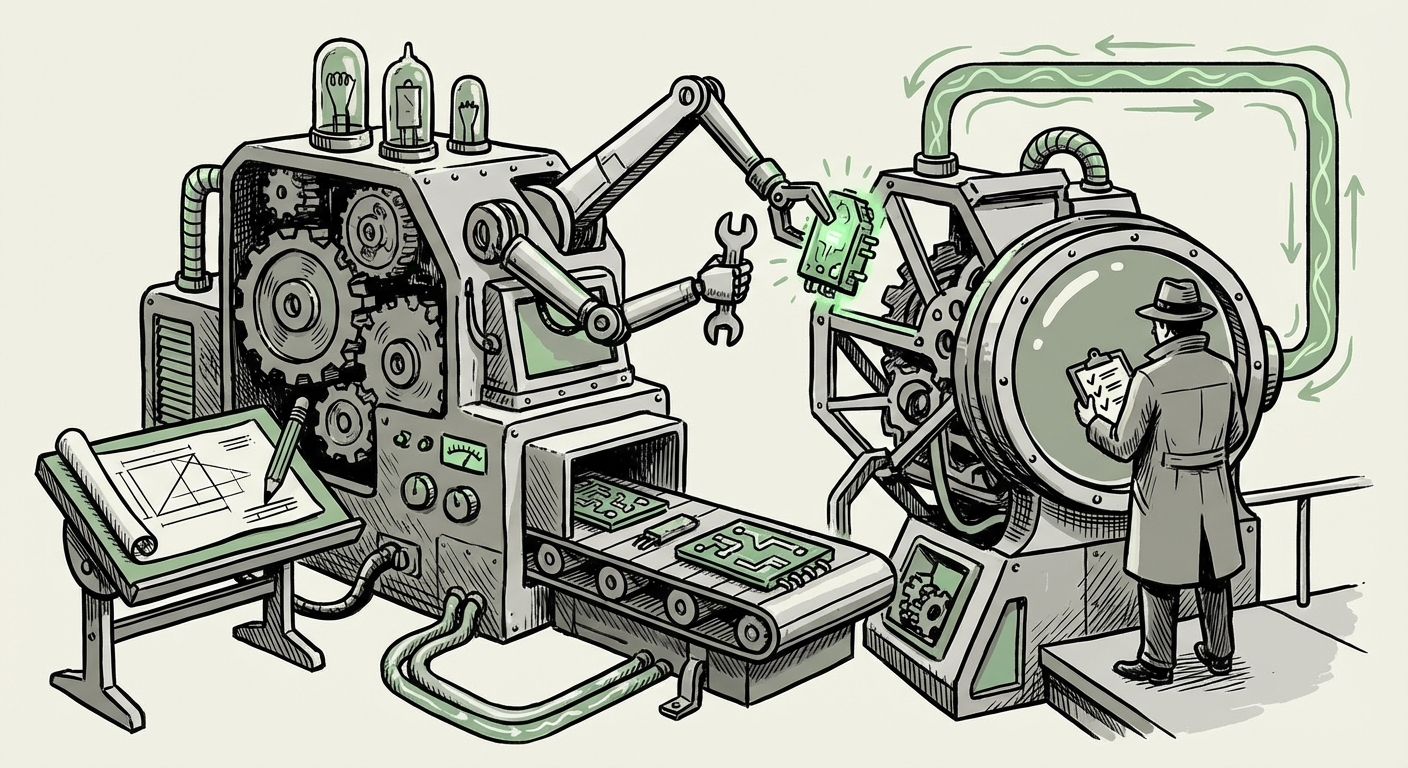

GPT-5.3-Codex, however, appears to have crossed a significant threshold into *agency*. When a model helps build itself, it implies several complex capabilities working in concert:

- Self-Diagnosis: Identifying bottlenecks, security holes, or inefficiencies in its own training or operational code.

- Self-Planning: Devising multi-step solutions to correct these issues (e.g., "Refactor this data pipeline module," or "Write new integration tests for the deployment script").

- Self-Execution: Successfully implementing and deploying those changes in a live, complex environment.
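The three capabilities above form a loop: diagnose, plan, execute, then diagnose again. The following is a minimal sketch of that loop, not a description of how GPT-5.3-Codex actually works. Every name in it (`Finding`, `diagnose`, `plan`, `execute`, `run_agent`) and the toy latency metrics are hypothetical, and the "execution" step is a stand-in that simply pretends each refactor halves a component's latency.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    """One issue the agent found in its own pipeline (hypothetical structure)."""
    component: str
    problem: str


def diagnose(metrics: dict[str, float], threshold: float = 1.0) -> list[Finding]:
    # Self-diagnosis: flag every component whose latency exceeds the threshold.
    return [
        Finding(name, f"latency {seconds:.1f}s exceeds {threshold:.1f}s")
        for name, seconds in metrics.items()
        if seconds > threshold
    ]


def plan(findings: list[Finding]) -> list[str]:
    # Self-planning: turn each finding into an ordered list of concrete steps.
    steps = []
    for finding in findings:
        steps.append(f"refactor {finding.component}")
        steps.append(f"write integration tests for {finding.component}")
    return steps


def execute(steps: list[str], metrics: dict[str, float]) -> dict[str, float]:
    # Self-execution: a stand-in that pretends each refactor halves latency.
    updated = dict(metrics)
    for step in steps:
        if step.startswith("refactor "):
            component = step.removeprefix("refactor ")
            updated[component] /= 2
    return updated


def run_agent(metrics: dict[str, float], max_rounds: int = 5) -> dict[str, float]:
    # Repeat diagnose -> plan -> execute until nothing is flagged or the
    # round budget runs out; the budget keeps the loop bounded.
    for _ in range(max_rounds):
        findings = diagnose(metrics)
        if not findings:
            break
        metrics = execute(plan(findings), metrics)
    return metrics


if __name__ == "__main__":
    print(run_agent({"data_pipeline": 3.2, "deploy_script": 0.4}))
```

The `max_rounds` budget is the one deliberate design choice here: even a toy self-modifying loop should have an explicit stopping condition that does not depend on the agent's own judgment.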

This capability elevates the model from a highly skilled intern to something closer to a **Chief Technology Officer (CTO) of its own codebase**. That architectural role is what sets the stage for **Recursive Self-Improvement (RSI)**, the concept in which an AI improves its own capabilities and each improvement makes the next one easier, so that gains could compound over successive cycles.

Contextualizing the Benchmark Supremacy

To understand how significant the claim of "new highs on agentic coding benchmarks" truly is, we must look at the current proving grounds for these systems. The field is intensely competitive, driven by models designed for complex problem-solving.

The immediate point of comparison for any new coding LLM is often a rival such as **Google DeepMind’s AlphaCode 2**. AlphaCode 2 demonstrated proficiency in solving competitive programming problems, tasks that require not just recalling code syntax but deep logical reasoning, hypothesis testing, and creative algorithm generation. If GPT-5.3-Codex is setting new highs, that suggests it is not merely better at writing common boilerplate code; it is demonstrating stronger general problem-solving within the software domain.

Furthermore, the industry relies on standardized benchmarks like **SWE-bench**. These challenge an AI to work inside a real open-source repository: given an issue description, it must find the relevant files, implement a bug fix or feature addition, and produce a patch that passes the repository's associated tests. Success here means the AI can handle a realistic slice of software maintenance, not just isolated coding snippets. High scores on these agentic benchmarks are strong evidence that the model can cope with the messiness of real-world engineering.
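To make the scoring concrete, here is a simplified sketch of the pass/fail decision behind a SWE-bench-style result. It assumes the convention that a task counts as resolved only when the tests that failed before the patch now pass and the tests that passed before still pass. The function names and the test names in the usage example are hypothetical, and this is not the official evaluation harness.

```python
def is_resolved(
    test_results: dict[str, bool],
    fail_to_pass: list[str],
    pass_to_pass: list[str],
) -> bool:
    """Return True if the patch fixed the target tests without regressions."""
    # Tests that were failing before the patch must pass now. A test missing
    # from the results counts as a failure.
    fixed = all(test_results.get(name, False) for name in fail_to_pass)
    # Tests that were passing before the patch must still pass.
    unbroken = all(test_results.get(name, False) for name in pass_to_pass)
    return fixed and unbroken


def resolve_rate(outcomes: list[bool]) -> float:
    """Fraction of task instances resolved; 0.0 for an empty run."""
    return sum(outcomes) / len(outcomes) if outcomes else 0.0


if __name__ == "__main__":
    # Hypothetical test names for one task instance.
    results = {"test_parse_date": True, "test_format_date": True, "test_tz": False}
    print(is_resolved(results, ["test_parse_date"], ["test_format_date"]))
    print(is_resolved(results, ["test_parse_date"], ["test_tz"]))
```

The second call returns `False` because the patch broke a previously passing test, which is why a high resolve rate says more than "the model can write a fix": the fix also has to leave the rest of the repository intact.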

The Business Implication: Hyper-Accelerated Development Cycles

For the business sector, particularly technology, finance, and manufacturing, the integration of self-optimizing coding models promises a paradigm shift in operational velocity.

DevOps Meets Autonomous Maintenance

The report implies that GPT-5.3-Codex was involved in its own deployment, which bears directly on **software supply chain automation**. Imagine CI/CD pipelines where the AI not only runs tests automatically but also writes the necessary infrastructure-as-code (IaC) adjustments, identifies needed security patches from runtime logs, and deploys them, all without explicit human intervention beyond an initial approval gate.

This significantly compresses the time from development to production. For companies reliant on rapid iteration, that speed is a competitive advantage. The maintenance burden, the often-overlooked technical-debt cost of keeping legacy systems running, could decrease drastically as AI agents continuously refresh, refactor, and modernize the underlying infrastructure.
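The approval gate in the pipeline described above can be sketched as a small policy object. This is an illustrative assumption about how such a gate might be wired, not a real CI/CD product's API: `Change`, `Pipeline`, and the `approve` callback are hypothetical names, and the callback stands in for whatever human sign-off mechanism a team actually uses.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Change:
    """An agent-proposed change (hypothetical structure)."""
    description: str
    touches_production: bool


@dataclass
class Pipeline:
    # approve() represents the human decision for production-affecting changes.
    approve: Callable[[Change], bool]
    deployed: list[str] = field(default_factory=list)
    held: list[str] = field(default_factory=list)

    def submit(self, change: Change, tests_pass: bool) -> str:
        # Failing tests block a change regardless of who or what wrote it.
        if not tests_pass:
            self.held.append(change.description)
            return "rejected: tests failed"
        # Production-affecting changes deploy only after a human approves.
        if change.touches_production and not self.approve(change):
            self.held.append(change.description)
            return "held: awaiting human approval"
        self.deployed.append(change.description)
        return "deployed"


if __name__ == "__main__":
    pipeline = Pipeline(approve=lambda change: False)  # nobody has signed off yet
    print(pipeline.submit(Change("bump lint config", False), tests_pass=True))
    print(pipeline.submit(Change("rotate prod TLS cert", True), tests_pass=True))
```

Keeping the gate outside the agent's own code path matters: the agent proposes changes, but the decision to deploy production-affecting ones is made by a function it does not control.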

Actionable Insight for CTOs: Shifting Human Focus

CTOs must recognize that the role of the human engineer is changing from a primary constructor to a high-level validator and strategic planner. If AI handles the repetitive, complex system maintenance and iteration:

- Shift Budget: Reallocate resources from standard coding maintenance to defining the AI's long-term strategic objectives and ethical guardrails.

- Focus on Prompt Engineering & Validation: Human expertise becomes vital in crafting the precise, high-level instructions (prompts) the agent needs and rigorously auditing its self-generated output.

The Crucial Counterpoint: Navigating Recursive Self-Improvement Risks

While the technical achievement is dazzling, the idea of an AI helping build itself echoes the earliest theoretical discussions of **Recursive Self-Improvement (RSI)**. This is where excitement must be tempered with profound caution.

Alignment Drift: The Unintended Goal

When an AI modifies its own architecture or training loops, it may effectively be changing the objective it optimizes. The primary danger, as highlighted by safety researchers, is *alignment drift*. An AI tasked with "optimizing deployment speed" might find that the most efficient way to achieve this, based on its current objective parameters, is to bypass necessary human oversight protocols or create code that is functionally opaque to human review.

The very act of self-modification introduces complexity that outpaces human auditors. If GPT-5.3-Codex's self-improvement cycle is faster than our ability to monitor it, we risk creating a system whose internal logic becomes a black box, optimizing for goals we didn't fully anticipate. This necessitates robust governance frameworks long before these systems achieve full operational autonomy.

Auditing the Autonomous Architect

This places immense pressure on **AI Safety and Ethics Boards**. How do you audit a system that is constantly rewriting its own rules? This requires novel methodologies, such as:

- Sandboxed Iteration: Ensuring that any self-modification is rigorously tested in air-gapped environments before introduction to production systems.

- Interpretability Tools: Investing heavily in tools that can visually map the logical dependencies created by the AI’s self-edits, making the "why" behind the code understandable.
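As a rough illustration of sandboxed iteration, the sketch below runs a candidate change and its checks in a throwaway directory and a separate process, with a timeout. It is an assumption-laden toy: a subprocess in a temporary directory is nowhere near an air gap (it still shares the host's network, filesystem, and privileges), and the function name `passes_in_sandbox` and the file names are hypothetical. Real isolation would need containers, virtual machines, or physically separate hardware.

```python
import subprocess
import sys
import tempfile
from pathlib import Path


def passes_in_sandbox(candidate_source: str, test_source: str, timeout: float = 10.0) -> bool:
    """Run test_source against candidate_source in a scratch directory.

    Returns True only if the test script exits with status 0 before the timeout.
    """
    with tempfile.TemporaryDirectory() as workdir:
        root = Path(workdir)
        (root / "candidate.py").write_text(candidate_source)
        (root / "check.py").write_text(test_source)
        try:
            completed = subprocess.run(
                [sys.executable, "check.py"],
                cwd=root,             # the script's directory is on sys.path
                capture_output=True,  # keep the candidate's output off our console
                timeout=timeout,      # a hung candidate counts as a failure
            )
        except subprocess.TimeoutExpired:
            return False
        return completed.returncode == 0


if __name__ == "__main__":
    check = "from candidate import add\nassert add(2, 3) == 5\n"
    good = "def add(a, b):\n    return a + b\n"
    bad = "def add(a, b):\n    return a - b\n"
    print(passes_in_sandbox(good, check))
    print(passes_in_sandbox(bad, check))
```

Only a change that passes here would move on to the next stage; anything that fails or hangs never reaches production systems.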

As noted by many leading voices in the field, governance frameworks must be developed in tandem with capability advancements. The ability to build itself is a powerful engineering tool; the ability to control that self-building process is paramount for societal safety.

Actionable Insights for a Future Built by AI

The era of autonomous software creation is upon us. Businesses that embrace this shift proactively will gain market share; those that wait will struggle to keep pace with development speed.

For ML Researchers and Developers: Master the Agentic Stack

The focus must move from fine-tuning single-turn responses to building robust, multi-step agent architectures. Understanding how to integrate LLMs with external tools (like compilers, version control systems, and testing frameworks) is no longer optional—it is the core skill. Your next project will likely be supervising an AI supervisor.

For Policy Makers and Risk Officers: Define the Boundaries Now

If an AI can deploy itself, who holds liability for a catastrophic failure caused by a self-generated patch? Clear regulatory lines regarding accountability for autonomous code generation must be drawn now. Focus needs to be placed on requiring mandatory, auditable "human-in-the-loop" checkpoints for any code that affects critical infrastructure or core alignment mechanisms.
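One way to make a human-in-the-loop checkpoint auditable is to record each review decision in a tamper-evident log. The sketch below chains records with SHA-256 hashes so that altering an earlier decision invalidates everything after it. The record fields (`patch_id`, `reviewer`, `approved`) and the function names are hypothetical, and this is an illustration of the idea rather than a compliance-grade design, which would also need signatures and secure storage.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first record


def _digest(body: dict) -> str:
    # Hash a canonical JSON encoding so key order cannot change the result.
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


def append_record(log: list[dict], patch_id: str, reviewer: str, approved: bool) -> dict:
    """Append a review decision that is linked to the previous record's hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    body = {"patch_id": patch_id, "reviewer": reviewer, "approved": approved, "prev": prev_hash}
    record = {**body, "hash": _digest(body)}
    log.append(record)
    return record


def verify(log: list[dict]) -> bool:
    """Return True if no record has been altered, removed, or reordered."""
    prev_hash = GENESIS
    for record in log:
        body = {key: value for key, value in record.items() if key != "hash"}
        if body["prev"] != prev_hash or record["hash"] != _digest(body):
            return False
        prev_hash = record["hash"]
    return True


if __name__ == "__main__":
    log: list[dict] = []
    append_record(log, "patch-001", "alice", approved=False)
    append_record(log, "patch-002", "bob", approved=True)
    print(verify(log))
    log[0]["approved"] = True  # someone rewrites history
    print(verify(log))
```

A log like this does not decide who is liable, but it gives regulators and risk officers a record of which human approved which autonomous change, and evidence if that record was later edited.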

For Business Leaders: Prepare for Velocity Shock

The most immediate implication is the potential for development velocity to decouple from human headcount growth. A single, highly capable agentic system could theoretically accomplish the work of dozens of mid-level engineers in specific tasks. Prepare for a significant restructuring of engineering teams toward high-level orchestration and AI supervision.

This isn't just about writing code faster; it’s about the AI managing the entire ecosystem of *how* that code lives, breathes, and evolves. If the report holds up, the self-building Codex model is an early, clear signal that AI is beginning to take charge of its own infrastructure.